A Comprehensive Guide to Benchmarking Monte Carlo Methods for Reliable Parameter Estimation in Biomedical Research


Abstract

This article provides a systematic guide for researchers and drug development professionals on benchmarking Monte Carlo methods for parameter estimation. It covers foundational principles and the role of Monte Carlo simulations in addressing uncertainty within complex biological models, such as pharmacokinetic-pharmacodynamic systems. Methodologically, it details the implementation of key algorithms, including Adaptive Metropolis, Parallel Tempering, and modern Sequential Monte Carlo techniques for global optimization. The guide addresses common challenges like non-identifiability, model drift, and computational bottlenecks, offering troubleshooting and optimization strategies. Finally, it establishes a rigorous framework for the comparative validation of sampling methods, emphasizing the use of standardized benchmark problems and performance metrics to select robust algorithms for predictive modeling and clinical decision support.

Understanding the 'Why': Core Principles of Monte Carlo for Parameter Estimation in Complex Systems

In biomedical research, computational models have become indispensable for predicting biological function, designing treatments, and understanding complex physiological systems. However, the predictive power of these models is inherently constrained by parametric uncertainty—the variability in model parameters representing physical properties, material coefficients, and physiological effects that are rarely known with precision [1]. This variability stems from genuine biological differences between individuals, measurement limitations, and natural stochasticity in biological processes.

The challenge is two-fold: first, to accurately estimate these uncertain parameters from often noisy and limited experimental data; and second, to rigorously quantify how this parameter uncertainty propagates to uncertainty in model predictions that inform clinical or research decisions. This dual challenge sits at the heart of model-informed drug development, personalized medicine, and reliable clinical prediction tools [2]. Failure to adequately account for parametric uncertainty can lead to overconfident predictions, failed clinical trials, and suboptimal therapeutic strategies.

This article provides a structured comparison of contemporary approaches to parameter estimation and uncertainty quantification. Monte Carlo methods, which rely on repeated random sampling, are fundamental to many uncertainty quantification (UQ) frameworks but require careful benchmarking to balance computational cost against statistical accuracy [3]. The following guides compare the performance, underlying experimental protocols, and practical implementation of leading software tools and methodological paradigms for tackling uncertainty and parameter estimation in biomedical models.

Comparison Guide I: Software Suites for Uncertainty Quantification

The following table compares the core capabilities of prominent open-source and commercial software toolboxes designed for forward uncertainty quantification, highlighting their applicability to biomedical simulations.

Table 1: Capability Comparison of Uncertainty Quantification Software Suites [1]

| Feature / Software | UncertainSCI | UncertainPy | ChaosPy | SimNIBS | UQLab | DAKOTA |

|---|---|---|---|---|---|---|

| Open-source | Yes | Yes | Yes | Yes | No | Yes |

| 1st/2nd Order Statistics | Yes | Yes | Yes | No | Yes | Yes |

| Sensitivity Analysis | Yes | Yes | Yes | No | Yes | Yes |

| Medians & Quantiles | Yes | No | No | No | Yes | No |

| General Scalar Distributions | Yes | Yes | Yes | No | Yes | Yes |

| Flexible Polynomial Spaces | Yes | No | No | No | No | No |

| Tensor-product Sampling | Yes | No | Yes | No | Yes | Yes |

| Weighted Max-volume Sampling | Yes | No | No | No | No | No |

| Mean Best-approximation Guarantees | Yes | No | No | No | No | No |

Note: This is a selective comparison based on a survey of tools; a comprehensive list includes additional packages such as PyApprox, Sparse Grids Matlab, UQTk, MUQ, and Tasmanian [1].

UncertainSCI is a Python-based, open-source library that addresses a gap in general-purpose UQ tools for biomedicine [1]. Its non-intrusive pipeline allows users to wrap existing simulation code. It employs modern polynomial chaos (PC) expansion techniques, building an efficient emulator (a surrogate model) from strategically sampled model evaluations. A key innovation is its use of weighted Fekete points for near-optimal sampling, which provides formal mean best-approximation guarantees not commonly found in other toolboxes [1]. It has been experimentally validated in cardiac (modeling bioelectric potentials) and neural (electric brain stimulation) applications, demonstrating efficient computation of output statistics and parameter sensitivities.

Commercial Platforms (e.g., Certara Suite): In contrast to research-focused open-source tools, integrated commercial suites like those offered by Certara are engineered for the drug development pipeline [2]. These are not singular UQ tools but ecosystems combining physiologically-based pharmacokinetic (PBPK) modeling (Simcyp Simulator), pharmacometric analysis (Phoenix), quantitative systems pharmacology (QSP), and clinical trial simulation. Their validation is heavily regulatory; for instance, the Simcyp Simulator has received a qualification opinion from the European Medicines Agency (EMA) for use in certain regulatory submissions, a testament to its rigorous validation against clinical data [2]. Performance is measured by successful drug development outcomes and regulatory endorsement rather than algorithmic benchmarks.

Domain-Specific Tools (e.g., for Medical Imaging): Challenges like the Quantification of Uncertainties in Biomedical Image Quantification (QUBIQ) benchmark focus on quantifying uncertainty in segmentation tasks, where the "ground truth" is defined by variability among multiple human expert annotators [4]. The top-performing methods in this benchmark consistently utilized ensemble techniques, such as model ensembles or Monte Carlo dropout, to capture predictive uncertainty. Experimental protocols involve training on multi-rater annotated datasets spanning different modalities (MRI, CT) and organs, with performance evaluated via metrics that measure how well the algorithm's uncertainty maps align with inter-rater disagreement regions [4].

Comparison Guide II: Methodological Paradigms for Parameter Estimation

This guide compares the accuracy of different statistical parameter estimation methods as applied to a critical clinical problem: predicting Normal Tissue Complication Probability (NTCP) after radiotherapy.

Table 2: Performance Comparison of Parameter Estimation Methods for NTCP Models [5]

| Dataset (Purpose) | Parameter Estimation Method | Area Under Curve (AUC) | Coefficient of Determination (R²) |

|---|---|---|---|

| Data-A (Training) | Bayesian Estimation (BE) | 0.938 | 0.953 |

| Data-A (Training) | Least Squares Estimation (LSE) | 0.942 | 0.986 |

| Data-A (Training) | Maximum Likelihood Estimation (MLE) | 0.940 | 0.843 |

| Data-B (External Validation) | Bayesian Estimation (BE) | 0.744 | 0.958 |

| Data-B (External Validation) | Least Squares Estimation (LSE) | 0.743 | 0.697 |

| Data-B (External Validation) | Maximum Likelihood Estimation (MLE) | 0.745 | 0.857 |

| Data-C (Internal Validation) | Bayesian Estimation (BE) | 0.867 | 0.915 |

| Data-C (Internal Validation) | Least Squares Estimation (LSE) | 0.862 | 0.916 |

| Data-C (Internal Validation) | Maximum Likelihood Estimation (MLE) | 0.865 | 0.896 |

Note: The study calibrated five different NTCP models (e.g., Lyman, Poisson, Logit) using data from 612 nasopharyngeal carcinoma patients to predict temporal lobe injury. The Poisson model coupled with Bayesian Estimation consistently showed robust performance across training and validation sets [5].

Methodological Protocols and Analysis

Bayesian Estimation (BE): The top-performing method in the NTCP study, BE, incorporates prior knowledge or beliefs about parameters (as a prior distribution) and updates this with observed data to produce a posterior distribution [5]. This provides not just a point estimate but a full probability distribution for each parameter, inherently quantifying estimation uncertainty. The experimental protocol involved defining likelihood functions for the NTCP models and using computational methods (likely Markov Chain Monte Carlo) to sample from the posterior. Its strength, as shown in Table 2, is its robustness and generalizability, maintaining high R² values on the external validation set where LSE performance dropped significantly [5].

Data-Driven & Machine Learning Approaches: A novel paradigm uses machine learning to invert complex models. For example, a deep learning approach to multi-fiber parameter estimation in diffusion MRI decomposes the high-dimensional inverse problem into smaller subproblems solved by specialized neural networks [6]. The protocol involves training these networks on a vast corpus of synthetic data generated from the forward biophysical model. Once trained, inference is instantaneous and includes uncertainty quantification for each parameter. Experiments on Human Connectome Project data showed it could reliably estimate intracellular volume fraction while correctly identifying high uncertainty in extracellular diffusivity parameters under typical acquisition schemes [6].

Neutrosophic Logic Approaches: For problems with deep epistemic uncertainty (involving indeterminacy or conflicting information), traditional probability may be insufficient. Neutrosophic logic, which generalizes fuzzy logic by incorporating independent truth, falsity, and indeterminacy components, offers an alternative framework [7]. A proposed Neutro-Genetic Hidden Markov Model (NG-HMM) applies this to genomic analysis, assigning neutrosophic values to states and transitions. The experimental protocol involves modifying the HMM inference algorithms to handle neutrosophic, rather than purely probabilistic, calculations. This is a nascent approach promising for personalized medicine where genetic data is often ambiguous, though extensive clinical validation is still future work [7].

Visualizing Workflows and Relationships

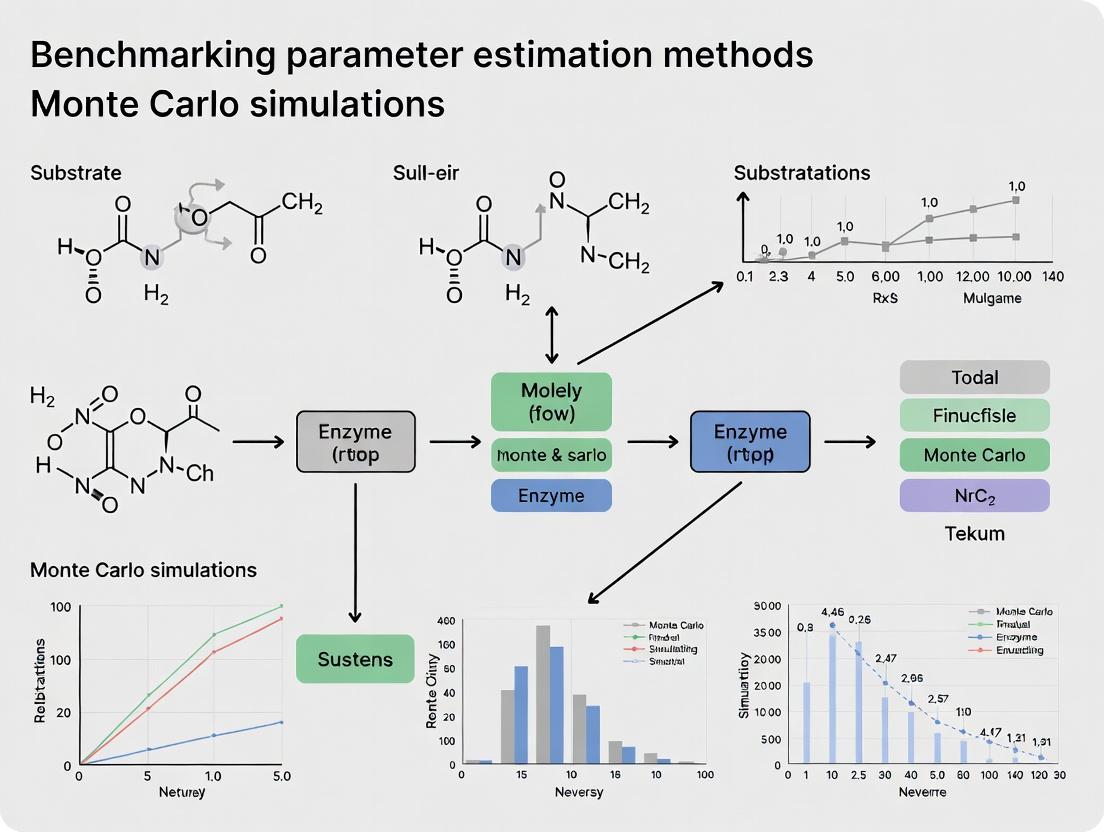

Workflow for Data-Driven Model Selection & Parameter Estimation

Diagram 1: A two-phase pipeline for selecting a mathematical model from a pattern image and estimating its parameters [8].

Benchmarking Framework for NTCP Model Estimation Methods

Diagram 2: The experimental framework for comparing parameter estimation methods across multiple NTCP models using separate training and validation datasets [5].

Uncertainty Quantification Pipeline with Polynomial Chaos

Diagram 3: A non-intrusive uncertainty quantification pipeline using polynomial chaos expansion to create a fast surrogate model for statistical analysis [1].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Software, Data, and Analytical Reagents for Parameter Estimation & UQ Research

| Tool / Reagent Category | Specific Example(s) | Primary Function in Research |

|---|---|---|

| UQ & Probabilistic Programming Frameworks | UncertainSCI [1], PyMC, Stan | Provide foundational algorithms (MCMC, PC expansion) for building models with inherent uncertainty quantification. |

| Biomedical Simulation Environments | Simcyp PBPK Simulator [2], NEURON, OpenCOR | Offer validated, domain-specific forward models (e.g., for pharmacokinetics, electrophysiology) essential for generating in silico data. |

| Clinical & Experimental Datasets | QUBIQ Multi-rater Segmentation Data [4], HCP Diffusion MRI [6], NTCP Patient Data [5] | Serve as the empirical ground truth for calibrating models and benchmarking estimation algorithms. |

| Parameter Estimation Engines | Bayesian Estimation (BE) [5], NGBoost [8], Custom DNN Inverters [6] | Core algorithms that perform the inverse problem of finding parameters that best explain observed data. |

| Benchmarking & Validation Suites | QUBIQ Challenge Framework [4], Custom Monte Carlo Benchmarks [3] | Provide standardized protocols, metrics, and data for objectively comparing the performance of different methods. |

| High-Performance Computing (HPC) Resources | Cloud Clusters, GPU Arrays | Enable the computationally intensive tasks of large-scale simulation, ensemble training for ML, and sampling for MCMC methods. |

Within the rigorous discipline of benchmarking parameter estimation methods, Monte Carlo simulation has evolved from a specialized computational tool into a foundational paradigm for quantifying uncertainty and comparing algorithmic performance. This primer establishes that the core value of Monte Carlo methods lies in their unique capacity to transform deterministic problems—such as fitting a model to data—into probabilistic frameworks where performance can be statistically evaluated through random sampling [9]. For researchers, scientists, and drug development professionals, this transformation is not merely computational but epistemological, enabling the comparison of estimation techniques under controlled, reproducible conditions that mirror the stochastic nature of real-world biological systems [10] [11].

The central thesis of modern Monte Carlo research in this domain is that robust benchmarking must move beyond point estimates to characterize the full posterior distribution of parameters, accounting for noise, sparse data, and model misspecification [11]. This guide provides a comparative analysis of leading Monte Carlo-based estimation methodologies, supported by experimental data, to inform the selection and validation of parameter estimation strategies in complex fields like systems biology and pharmacokinetics.

Theoretical Foundation: From Deterministic to Probabilistic

The Monte Carlo method inverts traditional analytical approaches. Instead of solving a deterministic equation directly, it identifies a probabilistic analog and uses random sampling to approximate the solution [9]. The method follows a canonical workflow: define a domain of possible inputs, generate inputs from a probability distribution over that domain, perform a deterministic computation, and aggregate the results [9].
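The four-step workflow can be illustrated with a textbook example that is not taken from the cited sources: estimating \(\pi\) from uniform points in the unit square. This is a minimal sketch; the function name `estimate_pi` and the sample sizes are illustrative choices.

```python
import math
import random


def estimate_pi(n_samples: int, seed: int = 0) -> float:
    """Estimate pi from uniform random points in the unit square."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_samples):
        # Steps 1-2: the input domain is the unit square; draw one point uniformly.
        x, y = rng.random(), rng.random()
        # Step 3: deterministic computation (does the point fall in the quarter circle?).
        if x * x + y * y <= 1.0:
            inside += 1
    # Step 4: aggregate. The hit fraction approximates pi / 4.
    return 4.0 * inside / n_samples


for n in (100, 10_000, 100_000):
    est = estimate_pi(n)
    print(f"N={n:>7d}  estimate={est:.5f}  abs error={abs(est - math.pi):.5f}")
```

The error shrinks roughly in proportion to \(1/\sqrt{N}\), which is why large sample sizes or more efficient sampling schemes are needed for tight estimates.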

For parameter estimation, this often involves a Bayesian framework where unknown parameters are treated as random variables. The goal is to compute the posterior distribution \(p(\theta \mid y)\), the probability of parameters \(\theta\) given observed data \(y\). This is proportional to the likelihood \(p(y \mid \theta)\) multiplied by the prior \(p(\theta)\). For all but the simplest models, this posterior is analytically intractable, necessitating Monte Carlo methods to generate samples from it [11].
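As a minimal sketch of sampling from a posterior that is known only up to a constant, the following random-walk Metropolis sampler targets a deliberately simple model: Gaussian data with known noise and a Gaussian prior on the mean. The model, the function names, and the tuning values are assumptions chosen for illustration; the conjugate closed-form posterior mean is included only so the sampler can be checked.

```python
import math
import random


def log_posterior(theta, data, sigma=1.0, prior_mean=0.0, prior_sd=10.0):
    """Unnormalised log posterior: Gaussian log-likelihood plus Gaussian log-prior."""
    log_lik = -0.5 * sum((y - theta) ** 2 for y in data) / sigma**2
    log_prior = -0.5 * ((theta - prior_mean) / prior_sd) ** 2
    return log_lik + log_prior


def metropolis(data, n_iter=20_000, step=0.5, seed=0):
    """Random-walk Metropolis sampler for a scalar parameter."""
    rng = random.Random(seed)
    theta = 0.0
    current = log_posterior(theta, data)
    chain = []
    for _ in range(n_iter):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, data)
        # Accept with probability min(1, posterior ratio); 1 - U avoids log(0).
        if math.log(1.0 - rng.random()) < candidate - current:
            theta, current = proposal, candidate
        chain.append(theta)
    return chain


def analytic_posterior_mean(data, sigma=1.0, prior_mean=0.0, prior_sd=10.0):
    """Closed-form posterior mean for this conjugate model, used as a check."""
    precision = 1.0 / prior_sd**2 + len(data) / sigma**2
    return (prior_mean / prior_sd**2 + sum(data) / sigma**2) / precision


data_rng = random.Random(42)
data = [data_rng.gauss(2.0, 1.0) for _ in range(50)]  # synthetic data, true mean 2.0
samples = metropolis(data)[5_000:]                    # discard burn-in
print("MCMC posterior mean:    ", sum(samples) / len(samples))
print("Analytic posterior mean:", analytic_posterior_mean(data))
```

Only the ratio of posterior densities enters the acceptance step, so the intractable normalising constant never has to be computed.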

The historical shift was significant: earlier statistical simulations were used to test deterministic problems that were already understood, whereas modern Monte Carlo solves deterministic problems by recasting them probabilistically [10]. This shift is foundational for benchmarking, as it allows researchers to pose "what-if" scenarios: given a known "true" parameter set and a defined data-generating process, how effectively can a given estimation method recover those parameters from noisy, limited observations?

Comparative Benchmark of Monte Carlo Estimation Methods

A critical application is estimating unknown parameters in stochastic models of genetic networks, which is directly relevant to drug target identification and synthetic biology [11]. A benchmark study compared three state-of-the-art Bayesian inference methods for this task using a stochastic version of a synthetic multicellular clock model (a coupled repressilator system) [11].

Experimental Protocol & Model System

The experiment used a stochastic differential equation (SDE) model of a genetic network, introducing dynamical noise and assuming partial, noisy observations [11]. The model consisted of two modified repressilators (genetic oscillators) coupled by a diffusive signaling molecule. The system's 14-dimensional state (including mRNA and protein concentrations) was partially observed through only 2 dimensions, with data sparse in time, creating a data-poor inference scenario [11]. The objective was to compute the posterior distribution of a subset of model parameters (e.g., transcription rates, degradation ratios) conditional on these sparse observations.

Table 1: Comparative Performance of Bayesian Monte Carlo Methods for Parameter Estimation [11]

| Method | Algorithm Class | Key Mechanism | Relative Estimation Error (Low Cost) | Relative Estimation Error (High Cost) | Computational Efficiency |

|---|---|---|---|---|---|

| Particle Metropolis-Hastings (PMH) | Markov Chain Monte Carlo (MCMC) | Uses particle filter to approximate likelihood; produces correlated parameter chain. | Low (Best in class) | Medium | Moderate; suffers from chain correlation. |

| Nonlinear Population Monte Carlo (NPMC) | Importance Sampling (IS) | Uses non-linearly transformed importance weights to reduce variance. | Low | Lowest | High with sufficient samples; more efficient at high budget. |

| Approximate Bayesian Computation SMC (ABC-SMC) | Likelihood-Free Inference | Compares simulated data to observed data via distance metric; no likelihood calculation. | Highest | High | Low; requires massive simulation for accuracy. |

Results Interpretation

The study concluded that while all three methods could solve the inference problem, NPMC and PMH achieved significantly lower estimation errors than ABC-SMC for equivalent computational cost [11]. Under limited computational budgets, PMH and NPMC performed similarly, with a slight edge for PMH in fully stochastic scenarios. As the computational budget increased, NPMC outperformed PMH, showcasing its superior efficiency in refining estimates [11]. ABC-SMC, while advantageous when likelihoods are incalculable, was less efficient for this problem where likelihood approximations were feasible.
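The likelihood-free idea behind ABC can be sketched with plain ABC rejection, which is simpler than the ABC-SMC variant benchmarked in [11]. The toy Gaussian model, the uniform prior, the sample-mean summary statistic, and the tolerance `eps` are all assumptions made for illustration and are unrelated to the repressilator model.

```python
import random
import statistics


def simulate(theta, n, rng):
    """Toy forward model (an assumption): n Gaussian observations with mean theta."""
    return [rng.gauss(theta, 1.0) for _ in range(n)]


def abc_rejection(observed, n_draws=20_000, eps=0.1, seed=0):
    """Keep prior draws whose simulated summary lies within eps of the observed one."""
    rng = random.Random(seed)
    obs_summary = statistics.fmean(observed)
    accepted = []
    for _ in range(n_draws):
        theta = rng.uniform(-5.0, 5.0)             # draw a candidate from the prior
        sim = simulate(theta, len(observed), rng)  # simulate; no likelihood evaluation
        if abs(statistics.fmean(sim) - obs_summary) < eps:
            accepted.append(theta)
    return accepted


observed = simulate(1.5, 30, random.Random(1))  # synthetic "observed" data
posterior_draws = abc_rejection(observed)
print("accepted draws:", len(posterior_draws))
print("ABC posterior mean:", statistics.fmean(posterior_draws))
```

Most simulations are discarded, which illustrates why likelihood-free methods tend to need many more model evaluations than methods that can use even an approximate likelihood.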

Sampling Methodologies & Efficiency

The core of any Monte Carlo simulation is the sampling engine. Crude Monte Carlo draws independent samples from a pseudo-random number generator (e.g., Mersenne Twister) [10]. More advanced sampling schemes improve statistical efficiency, meaning a lower estimation error for a given sample size.

Table 2: Comparison of Advanced Sampling Techniques

| Technique | Description | Advantage | Convergence Rate | Primary Use Case |

|---|---|---|---|---|

| Crude Monte Carlo | Simple pseudo-random sampling from distributions. | Simple, parallelizable. | \(O(1/\sqrt{N})\) | General-purpose, baseline. |

| Latin Hypercube Sampling (LHS) | Stratifies each input distribution into equiprobable intervals; samples once per interval [12] [13]. | Reduces variance, better coverage of input space. | Faster than crude MC for smooth outputs. | Default in many tools (e.g., @RISK, Analytica); good for low-to-moderate dimensions [12]. |

| Sobol Sequences | A quasi-Monte Carlo method using low-discrepancy, deterministic sequences [12]. | Fills multi-dimensional space more uniformly than random samples. | \(O(1/N)\), up to logarithmic factors, for moderate dimensions (roughly fewer than 15). | High-efficiency integration, sensitivity analysis. |

| Importance Sampling | Oversamples from an "importance" distribution, then weights results to correct the resulting bias [12]. | Dramatically reduces variance for estimating rare events. | Varies; can be vastly superior for tail events. | Risk analysis of extreme outcomes (e.g., system failure). |
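The difference between crude Monte Carlo and Latin Hypercube Sampling can be sketched in a few lines. The test function `model` is a hypothetical smooth, additive function chosen because stratification helps most in that setting; the sample sizes are likewise illustrative.

```python
import random
import statistics


def crude_mc(n, dim, rng):
    """n independent uniform points in the unit hypercube."""
    return [[rng.random() for _ in range(dim)] for _ in range(n)]


def latin_hypercube(n, dim, rng):
    """One point per equiprobable stratum in every dimension."""
    columns = []
    for _ in range(dim):
        # One uniform draw inside each of the n strata [k/n, (k+1)/n).
        col = [(k + rng.random()) / n for k in range(n)]
        rng.shuffle(col)  # random pairing of strata across dimensions
        columns.append(col)
    return [list(point) for point in zip(*columns)]


def model(x):
    """Hypothetical smooth test function; its exact mean is 1/3 + 1."""
    return x[0] ** 2 + 2.0 * x[1]


def estimator_spread(sampler, n=100, dim=2, reps=200, seed=0):
    """Standard deviation of the mean estimate across repeated experiments."""
    rng = random.Random(seed)
    estimates = [
        statistics.fmean([model(x) for x in sampler(n, dim, rng)])
        for _ in range(reps)
    ]
    return statistics.stdev(estimates)


print("crude MC spread:", estimator_spread(crude_mc))
print("LHS spread:     ", estimator_spread(latin_hypercube))
```

For functions with strong interactions between inputs the gain is smaller, so the comparison should be repeated on a function that resembles the model of interest.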

Diagram: Logical flow comparing different Monte Carlo sampling methodologies, from input distributions to output results.

The Scientist's Toolkit: Software and Reagents for Monte Carlo Research

Research Reagent Solutions (In Silico)

For in silico benchmarking experiments, the "reagents" are software tools, libraries, and numerical standards.

Table 3: Essential Software Tools for Monte Carlo Simulation & Benchmarking

| Tool / Reagent | Type | Primary Function | Key Feature for Benchmarking | Typical Application |

|---|---|---|---|---|

| @RISK | Excel Add-in [12] | Integrates Monte Carlo simulation directly into spreadsheet models. | RiskOptimizer for optimization under uncertainty. | Financial modeling, project risk. |

| Analytica | Stand-alone [12] | Visual modeling platform using influence diagrams. | Intelligent Arrays for multi-dimensional modeling; built-in LHS, Sobol. | Complex systems modeling, policy analysis. |

| R Programming Language | Statistical Environment [14] | Flexible, open-source platform for statistical computing and simulation design. | Packages like mcmc, rstan, EasyABC for custom algorithm implementation. | Methodological research, custom benchmark studies. |

| GoldSim | Stand-alone [12] | Dynamic simulation platform for complex systems. | Strong handling of time-dependent processes and stochastic events. | Engineering, environmental, and ecological systems. |

| SIPmath Standard | Data Format Standard [12] | JSON-based standard for storing and exchanging random samples (Stochastic Information Packets). | Ensures reproducibility and auditability of simulation inputs. | Sharing and auditing risk models across organizations. |

Experimental Protocol for a Benchmarking Study

Drawing from best practices [14], a robust benchmarking study for parameter estimation methods should follow this workflow:

- Define the Data-Generating Process (DGP): Specify the true model (e.g., the stochastic repressilator SDEs [11]) and fix the "true" parameter values \(\theta^*\).

- Specify Experimental Conditions: Systematically vary factors like sample size \(N\), noise level \(\sigma\), and observation frequency.

- Generate Replicated Datasets: For each experimental condition, use the DGP to simulate \(R\) (e.g., 1000) independent datasets.

- Apply Estimation Methods: Run each candidate estimation algorithm (e.g., PMH, NPMC, ABC-SMC) on each replicated dataset.

- Compute Performance Metrics: Calculate metrics for each method/replicate, such as:

  - Bias: \(\frac{1}{R}\sum_{r=1}^{R} (\hat{\theta}_r - \theta^*)\)

  - Root Mean Square Error (RMSE): \(\sqrt{\frac{1}{R}\sum_{r=1}^{R} (\hat{\theta}_r - \theta^*)^2}\)

  - Coverage Probability: Proportion of replications where the true \(\theta^*\) falls within the 95% credible interval.

- Analyze and Compare: Use summary tables and visualizations to compare metric distributions across methods and conditions.
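The workflow above can be sketched end to end on a deliberately simple problem. Everything specific here is an assumption for illustration: the Gaussian data-generating process, the two candidate estimators (sample mean and sample median), and the interval formulas, which rely on the noise level being known. With a flat prior and known noise, the mean-based interval coincides with the 95% credible interval; the median-based interval uses the large-sample standard error.

```python
import math
import random
import statistics

TRUE_THETA = 2.0  # theta*, fixed by the data-generating process
SIGMA = 1.0       # noise level, assumed known


def generate_dataset(n, rng):
    """Data-generating process: n noisy observations of TRUE_THETA."""
    return [rng.gauss(TRUE_THETA, SIGMA) for _ in range(n)]


def mean_estimator(data):
    """Point estimate and 95% interval based on the sample mean."""
    est = statistics.fmean(data)
    half = 1.96 * SIGMA / math.sqrt(len(data))
    return est, est - half, est + half


def median_estimator(data):
    """Point estimate and approximate 95% interval based on the sample median."""
    est = statistics.median(data)
    half = 1.96 * math.sqrt(math.pi / 2) * SIGMA / math.sqrt(len(data))
    return est, est - half, est + half


def benchmark(estimator, n=20, reps=1000, seed=0):
    """Bias, RMSE, and coverage of one estimator over replicated datasets."""
    rng = random.Random(seed)  # same seed gives every method the same datasets
    errors, covered = [], 0
    for _ in range(reps):
        est, lo, hi = estimator(generate_dataset(n, rng))
        errors.append(est - TRUE_THETA)
        covered += lo <= TRUE_THETA <= hi
    return {
        "bias": statistics.fmean(errors),
        "rmse": math.sqrt(statistics.fmean([e * e for e in errors])),
        "coverage": covered / reps,
    }


for name, estimator in (("mean", mean_estimator), ("median", median_estimator)):
    print(name, benchmark(estimator))
```

Reusing the same seed for every method means all estimators see identical replicated datasets, which removes one source of noise from the comparison.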

Diagram: Generic workflow for benchmarking parameter estimation methods using Monte Carlo simulation.

Case Study: Parameter Estimation in a Stochastic Genetic Network

To illustrate the principles, we detail the experimental setup from the comparative study of Bayesian methods [11].

Biological System and Model

The model is a stochastic coupled repressilator, a synthetic genetic clock. Two identical repressilator cells are coupled through a fast-diffusing autoinducer (AI) molecule. Each cell's dynamics are described by SDEs for mRNA and protein concentrations of three genes (tetR, cI, lacI), with Wiener noise representing intrinsic stochasticity [11].

The key unknown parameters for estimation included the dimensionless transcription rate \(\alpha\), the maximum induced transcription rate \(\kappa\), and mRNA-protein lifetime ratios \(\beta_a, \beta_b, \beta_c\).

Diagram: The stochastic coupled repressilator system, a genetic network used for benchmarking parameter estimation methods [11].

Observations: Simulated data consisted of noisy, sparse measurements of only two protein concentrations over time [11].

Benchmarked Methods: PMH, NPMC, and ABC-SMC, as described in the comparative benchmark above.

Key Finding: The study demonstrated that in a data-poor, noisy environment, sophisticated Monte Carlo methods (NPMC, PMH) that approximate the true likelihood significantly outperform likelihood-free approaches (ABC-SMC) in accuracy per unit computational cost [11]. This provides a clear, data-driven guideline for method selection in similar biological inference problems.

Monte Carlo simulation is the indispensable engine for modern benchmarking of parameter estimation methods. It transforms the deterministic question of "which method is better?" into a probabilistic one that can be answered with statistical confidence: "with what probability does Method A outperform Method B under a defined set of conditions?"

For researchers and drug development professionals selecting an estimation strategy, evidence-based guidance emerges:

- For high-dimensional, stochastic models where approximate likelihoods can be calculated, NPMC and PMH algorithms are superior, with NPMC excelling when computational resources are ample [11].

- When model complexity precludes likelihood calculation, ABC-SMC remains viable but requires greater computational investment for comparable accuracy [11].

- Software selection should be driven by model complexity: Excel add-ins suffice for well-defined, spreadsheet-based models, while stand-alone visual platforms like Analytica or GoldSim are better suited for complex, dynamic systems [12].

- Sampling efficiency matters. For most applied work, Latin Hypercube Sampling provides a reliable boost in convergence and should be preferred over crude Monte Carlo [12] [13].

Ultimately, the power of Monte Carlo lies in its ability to rigorously stress-test estimation methods against the uncertainty they are designed to handle, providing a critical empirical foundation for scientific inference and decision-making.

In quantitative research across fields from drug development to quantum physics, the estimation of unknown parameters from noisy observational data is a fundamental challenge. Monte Carlo methods have emerged as a powerful, flexible toolkit for tackling this inverse problem, especially where analytical solutions are intractable [15]. These stochastic simulation techniques, which include Markov Chain Monte Carlo (MCMC) and Importance Sampling algorithms, allow researchers to approximate complex posterior distributions and obtain robust parameter estimates [15].

However, the very flexibility of the Monte Carlo paradigm presents a critical challenge: with a multitude of available algorithms and implementations, how can researchers select the most appropriate, reliable, and efficient method for their specific problem? This is where systematic benchmarking becomes indispensable. Benchmarking provides an objective framework for comparing the performance of different Monte Carlo methods against standardized metrics and under controlled conditions that mirror real-world research challenges, such as low signal-to-noise ratios, parameter non-identifiability, and multi-modal posteriors [16] [17].

This guide provides a comparative analysis of prominent Monte Carlo methods for parameter estimation. We focus on objective performance comparisons supported by experimental data, detail key experimental protocols, and provide essential resources to inform methodological selection, thereby enhancing the rigor and reliability of computational research in scientific and industrial applications.

Comparative Analysis of Monte Carlo Methods

The choice of Monte Carlo method significantly impacts the accuracy, computational cost, and feasibility of parameter estimation. The following tables compare key families of algorithms and their documented performance in specific applications.

Table 1: Comparison of Monte Carlo Algorithm Families for Parameter Estimation

| Algorithm Family | Core Mechanism | Key Advantages | Major Limitations | Typical Applications |

|---|---|---|---|---|

| Markov Chain Monte Carlo (MCMC) | Constructs an ergodic Markov chain whose stationary distribution is the target posterior [15]. | Handles complex, high-dimensional posteriors; provides full uncertainty quantification. | Convergence can be slow; difficult to diagnose; sensitive to tuning [17]. | Estimating parameters in dynamical systems biology [17], statistical signal processing [15]. |

| Importance Sampling (IS) | Draws samples from a simpler proposal distribution and weights them to approximate the target [15]. | Can be more efficient than MCMC if a good proposal is found; naturally parallelizable. | Performance collapses with poor proposal choice; "curse of dimensionality" for weight variance. | Localization in sensor networks, Bayesian inference in signal processing [15]. |

| Perturbation Monte Carlo (pMC) | Re-uses photon path information from a single forward simulation to compute Jacobians for multiple detectors [18]. | Highly efficient for many source-detector pairs; directly computes sensitivity. | Requires storing full photon history; accuracy depends on reference simulation. | Time-domain fluorescence molecular tomography (FMT) [18]. |

| Adjoint Monte Carlo (aMC) | Combines a forward simulation from the source and an adjoint (backward) simulation from the detector [18]. | Efficient for few source-detector pairs; based on rigorous reciprocity theorem. | Requires double simulation per pair; can suffer from high variance at boundaries [18]. | Tomographic reconstruction with point sources/detectors [18]. |
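The sensitivity of importance sampling to the proposal can be made concrete with a self-normalised importance sampler. This is a minimal sketch on an assumed toy target (an unnormalised standard normal); the two proposals and the weight-based effective sample size \((\sum w)^2 / \sum w^2\) are illustrative choices, not taken from [15].

```python
import math
import random


def log_target(x):
    """Unnormalised log density of the toy target, a standard normal."""
    return -0.5 * x * x


def log_normal_pdf(x, mean, sd):
    """Log density of the Gaussian proposal N(mean, sd^2)."""
    return -0.5 * ((x - mean) / sd) ** 2 - math.log(sd) - 0.5 * math.log(2 * math.pi)


def snis(proposal_mean, proposal_sd, n=5_000, seed=0):
    """Self-normalised importance sampling estimate of E[x^2] and the weight ESS."""
    rng = random.Random(seed)
    xs = [rng.gauss(proposal_mean, proposal_sd) for _ in range(n)]
    log_w = [log_target(x) - log_normal_pdf(x, proposal_mean, proposal_sd) for x in xs]
    shift = max(log_w)  # subtract the maximum for numerical stability
    w = [math.exp(lw - shift) for lw in log_w]
    total = sum(w)
    estimate = sum(wi * x * x for wi, x in zip(w, xs)) / total
    ess = total**2 / sum(wi * wi for wi in w)
    return estimate, ess


print("wide, centred proposal N(0, 2^2):", snis(0.0, 2.0))
print("shifted proposal N(4, 1):        ", snis(4.0, 1.0))
```

With the shifted proposal, a handful of samples carry almost all of the weight, so the effective sample size collapses even though the nominal sample size is unchanged.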

A critical insight from comprehensive benchmarking is that no single algorithm dominates all others. Performance is highly problem-dependent. For example, in dynamical systems biology, a benchmarking study of MCMC methods on problems featuring multistability, oscillations, and chaotic regimes found that multi-chain algorithms (e.g., Parallel Tempering) generally outperformed single-chain methods (e.g., Adaptive Metropolis) in exploring complex posterior landscapes [17]. The study also highlighted that effective sample size—a common quality measure—can be misleading unless the exploration quality of the chains is first verified [17].
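The advantage of multi-chain methods on multi-modal posteriors can be illustrated on a toy problem. The sketch below compares a single random-walk Metropolis chain with a basic parallel tempering sampler on an assumed bimodal target with well-separated modes. The target, the temperature ladder, and the step sizes are illustrative assumptions, not the configurations benchmarked in [17].

```python
import math
import random


def log_target(x, mode=6.0):
    """Log density (up to a constant) of an equal mixture of N(-mode, 1) and N(+mode, 1)."""
    a = -0.5 * (x - mode) ** 2
    b = -0.5 * (x + mode) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))


def random_walk(n_iter=20_000, step=1.0, seed=0):
    """Single-chain random-walk Metropolis, started in the left mode."""
    rng = random.Random(seed)
    x, chain = -6.0, []
    for _ in range(n_iter):
        y = x + rng.gauss(0.0, step)
        if math.log(1.0 - rng.random()) < log_target(y) - log_target(x):
            x = y
        chain.append(x)
    return chain


def parallel_tempering(n_iter=20_000, temps=(1, 2, 4, 8, 16, 32), step=1.0, seed=0):
    """Basic parallel tempering; returns the samples of the T = 1 chain."""
    rng = random.Random(seed)
    xs = [-6.0] * len(temps)
    cold_chain = []
    for _ in range(n_iter):
        # Random-walk update of every chain, each targeting p(x)^(1/T).
        for i, t in enumerate(temps):
            y = xs[i] + rng.gauss(0.0, step * math.sqrt(t))
            if math.log(1.0 - rng.random()) < (log_target(y) - log_target(xs[i])) / t:
                xs[i] = y
        # Propose a state swap between each pair of adjacent temperatures.
        for i in range(len(temps) - 1):
            delta = (1 / temps[i] - 1 / temps[i + 1]) * (
                log_target(xs[i + 1]) - log_target(xs[i])
            )
            if math.log(1.0 - rng.random()) < delta:
                xs[i], xs[i + 1] = xs[i + 1], xs[i]
        cold_chain.append(xs[0])
    return cold_chain


for name, chain in (("random walk", random_walk()), ("parallel tempering", parallel_tempering())):
    frac_right = sum(x > 0 for x in chain) / len(chain)
    print(f"{name}: fraction of samples in the right-hand mode = {frac_right:.3f}")
```

The single chain can look well-mixed by within-mode diagnostics while never leaving its starting mode, which is why exploration quality needs to be checked before metrics such as effective sample size are trusted.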

In biomedical imaging, the computational efficiency of Monte Carlo methods for fluorescence tomography was directly compared. The mid-way Monte Carlo (mMC) method was found to be computationally prohibitive for time-domain applications [18]. The choice between the more viable pMC and aMC methods depends on the experimental setup: pMC is advantageous when using early time-gates and a large number of detectors, while aMC is the method of choice for a small number of source-detector pairs [18].

Table 2: Benchmark Performance in Selected Applications

| Application Field | Benchmarked Methods | Key Performance Metric | Finding | Source |

|---|---|---|---|---|

| Optical Quantum System Characterization | Median estimator vs. Monte Carlo Method (MCM) | Accuracy & Precision of Linewidth Estimate | In low-signal regimes, the median is precise but inaccurate. MCM restores reliable estimates from undersampled data [16]. | [16] |

| Fluorescence Molecular Tomography (Time-Domain) | pMC vs. aMC vs. mMC | Computational Time for Jacobian Calculation | mMC is computationally prohibitive. pMC is faster for many detectors/early gates; aMC is faster for few source-detector pairs [18]. | [18] |

| Dynamical Systems Biology (ODE Models) | Single-Chain MCMC (AM, DRAM) vs. Multi-Chain MCMC (PT, PHS) | Effective Sample Size & Exploration of Multi-Modal Posterior | Multi-chain methods (PT, PHS) consistently outperform single-chain methods for complex, multi-modal posteriors [17]. | [17] |

Experimental Protocols for Benchmarking

A robust benchmarking study requires a standardized experimental protocol to ensure fair and informative comparisons. The following outlines two key protocols from the literature.

Protocol 1: Benchmarking MCMC for Dynamical Systems

This protocol, designed for parameter estimation in systems biology, uses Ordinary Differential Equation (ODE) models to generate synthetic data [17].

- Problem Definition: Select an ODE model (\dot{x} = f(x,t,\eta)) with parameters (\eta) and observables (y = h(x,t,\eta)).

- Data Simulation: Generate noise-corrupted experimental data (\mathcal{D} = \{(t_k, \tilde{y}_k)\}). Typically, additive Gaussian noise is assumed: (\tilde{y}_{ik} = y_i(t_k) + \epsilon_{ik}, \epsilon_{ik} \sim \mathcal{N}(0,\sigma_i^2)) [17].

- Posterior Formulation: Define the parameter vector (\theta = (\eta, \sigma)). Compute the likelihood (p(\mathcal{D}|\theta)) and combine with a prior (p(\theta)) to form the posterior (p(\theta|\mathcal{D})) using Bayes' theorem [17].

- Algorithm Configuration: Initialize each sampling algorithm (e.g., Adaptive Metropolis, Parallel Tempering). Use multiple, independent chains with varied initializations.

- Sampling Execution: Run each algorithm to generate a chain of parameter samples from the posterior. Ensure runs are sufficiently long to meet convergence criteria.

- Performance Analysis: Use a semi-automated pipeline to analyze results. Key metrics include:

- Effective Sample Size (ESS): Estimates how many independent samples the autocorrelated chain is worth.

- Convergence Diagnostics: e.g., Gelman-Rubin statistic.

- Computational Cost: CPU time per independent sample.

- Accuracy: Distance between true parameters and posterior mean/median [17].
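
The Problem Definition, Data Simulation, and Posterior Formulation steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration, assuming a one-parameter exponential decay model in place of a real systems biology ODE. The model, the function names (`simulate_decay`, `make_data`, `log_posterior`), the uniform prior bounds, and all numerical values are assumptions made for the example and are not taken from [17].

```python
import math
import random

def simulate_decay(k, x0=10.0, t_end=5.0, n_obs=11, substeps=50):
    """Integrate dx/dt = -k*x with classical RK4; return (times, states) at n_obs points."""
    f = lambda x: -k * x
    dt_obs = t_end / (n_obs - 1)
    h = dt_obs / substeps
    times, states, x = [0.0], [x0], x0
    for i in range(1, n_obs):
        for _ in range(substeps):
            k1 = f(x)
            k2 = f(x + 0.5 * h * k1)
            k3 = f(x + 0.5 * h * k2)
            k4 = f(x + h * k3)
            x += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6.0
        times.append(i * dt_obs)
        states.append(x)
    return times, states

def make_data(k_true, sigma_true, rng):
    """Synthetic observations: model output plus additive Gaussian noise."""
    times, y = simulate_decay(k_true)
    return times, [yi + rng.gauss(0.0, sigma_true) for yi in y]

def log_posterior(theta, data):
    """Unnormalised log posterior for theta = (k, sigma) under assumed uniform priors."""
    k, sigma = theta
    if not (0.0 < k < 5.0 and 0.0 < sigma < 5.0):   # hypothetical prior bounds
        return -math.inf
    _, y_model = simulate_decay(k)
    _, y_obs = data
    n = len(y_obs)
    sse = sum((yo - ym) ** 2 for yo, ym in zip(y_obs, y_model))
    return -n * math.log(sigma) - 0.5 * n * math.log(2 * math.pi) - sse / (2 * sigma ** 2)

rng = random.Random(0)
data = make_data(k_true=0.8, sigma_true=0.3, rng=rng)
lp_true = log_posterior((0.8, 0.3), data)   # evaluated at the data-generating parameters
lp_far = log_posterior((3.0, 0.3), data)    # evaluated far from them
```

Any sampler in the comparison then only needs `log_posterior` as its target, which is what makes a like-for-like benchmark possible. Because the noise level is part of the parameter vector, the `-n * log(sigma)` term has to be kept in the log-likelihood.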

Protocol 2: Monte Carlo Parameter Reconstruction in Quantum Optics

This protocol evaluates methods for estimating the linewidth of a quantum emitter (e.g., a nitrogen-vacancy center in diamond) from noisy photoluminescence excitation (PLE) spectroscopy data [16].

- Data Acquisition: Perform a PLE scan by sweeping a laser across the emitter's resonance frequency and recording photon counts. Deliberately enter a low signal-to-noise regime using a variable neutral density filter [16].

- Conventional Analysis Fit: Fit each individual PLE scan with a Voigt profile to extract a "single-scan" linewidth (Full Width at Half Maximum - FWHM). Observe the failure mode: fits yield either illusory narrow linewidths with high precision or overestimated linewidths with large uncertainty due to shot noise [16].

- Monte Carlo Simulation:

- Modeling: Simulate a single PLE scan. Sample the total number of detection events (n) from a normal distribution (\mathcal{N}(\bar{n}, \sigma)). Distribute these events across frequency according to a Cauchy (Lorentzian) distribution (P(\omega, \gamma) = \gamma/[\pi(\omega^2 + \gamma^2)]) with linewidth (\gamma) [16].

- Noise Addition: Add background noise events sampled from a Poisson distribution.

- Fitting & Repetition: Fit the simulated spectrum with a Voigt profile and record the FWHM. Repeat this process thousands of times to build a histogram of simulated linewidths.

- Parameter Estimation: Compare the experimental linewidth distribution (from many scans) to the simulated histograms. Use a (\chi^2)-test to find the simulation parameters ((\gamma, \bar{n})) that best fit the experimental data: (S(\gamma,\bar{n}) = \sum_i [O_i - E_i(\gamma,\bar{n})]^2 / E_i(\gamma,\bar{n})), where (O_i) and (E_i) are the observed and simulated bin counts, respectively [16].

- Validation: Compare the (\gamma) estimated by the Monte Carlo method to the lifetime-limited linewidth and to estimates from high-signal data. The method reliably recovers the true linewidth even from severely undersampled data where the median estimator fails [16].
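
The simulate, histogram, and compare loop of this protocol can be sketched as follows. This is a simplified illustration rather than the procedure of [16]: the Voigt-profile fit is replaced by a much cruder width estimate (the interquartile range of the detected frequencies, which equals the FWHM for a pure Lorentzian), only (\gamma) is scanned while the mean count is held fixed, and every numerical value and function name is an assumption chosen for the example.

```python
import math
import random

def simulate_scan_width(gamma, n_mean, n_sd, bg_rate, window, rng):
    """One simulated PLE scan -> crude width estimate (stand-in for a Voigt fit)."""
    n_signal = max(0, round(rng.gauss(n_mean, n_sd)))
    # Inverse-CDF draws from a Cauchy (Lorentzian) with half-width gamma.
    events = [gamma * math.tan(math.pi * (rng.random() - 0.5)) for _ in range(n_signal)]
    events = [w for w in events if abs(w) <= window]      # keep events inside the scan range
    # Poisson background count via Knuth's method, spread uniformly over the window.
    n_bg, p, limit = 0, rng.random(), math.exp(-bg_rate)
    while p > limit:
        n_bg += 1
        p *= rng.random()
    events += [rng.uniform(-window, window) for _ in range(n_bg)]
    if len(events) < 4:
        return None                                       # too few counts for a width
    events.sort()
    return events[(3 * len(events)) // 4] - events[len(events) // 4]

def histogram(values, edges):
    """Count values falling in each half-open bin [edges[i], edges[i+1])."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    return counts

def chi_square(observed, expected):
    """S = sum_i (O_i - E_i)^2 / E_i, skipping bins with E_i = 0."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)

def width_histogram(gamma, n_scans, edges, rng, n_mean=20, n_sd=4, bg_rate=2.0, window=50.0):
    widths = (simulate_scan_width(gamma, n_mean, n_sd, bg_rate, window, rng) for _ in range(n_scans))
    return histogram([w for w in widths if w is not None], edges)

rng = random.Random(1)
edges = [2.0 * i for i in range(26)]                      # 25 bins covering widths 0..50
observed = width_histogram(5.0, 500, edges, rng)          # stand-in for the experimental scans
grid = [2.0, 3.5, 5.0, 6.5, 8.0]                          # candidate linewidths (hypothetical)
scores = {}
for g in grid:
    sim = width_histogram(g, 2000, edges, rng)
    scale = sum(observed) / sum(sim)                      # match total counts before comparing
    scores[g] = chi_square(observed, [c * scale for c in sim])
best_gamma = min(scores, key=scores.get)
```

In a real analysis `observed` would come from measured scans, and the grid would cover both (\gamma) and (\bar{n}).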

Diagram: Monte Carlo Benchmarking Workflow

Visualizing Methodological Relationships

Understanding the conceptual and practical relationships between different Monte Carlo approaches is crucial for informed selection. The following diagram synthesizes the pathways for several key methods discussed.

Diagram: Monte Carlo Method Relationships and Performance

The Scientist's Toolkit: Essential Research Reagents & Solutions

Implementing and benchmarking Monte Carlo methods requires both conceptual tools and practical software resources. The following table details key "research reagent solutions" for this domain.

Table 3: Essential Toolkit for Monte Carlo Parameter Estimation Research

| Category | Item/Resource | Function & Purpose | Example/Note |

|---|---|---|---|

| Benchmark Problems | Dynamical Systems Collection [17] | Provides standardized testbeds with known features (bifurcations, chaos, multistability) to fairly compare algorithm performance on realistic challenges. | ODE models from systems biology. Essential for evaluating exploration of multi-modal posteriors. |

| Quantum Emitter Platform | Nitrogen-Vacancy (NV) Center in Diamond [16] | A stable solid-state quantum system used as a testbed for developing parameter estimation methods under extreme low-signal conditions. | Used to benchmark median estimator vs. Monte Carlo method for linewidth reconstruction [16]. |

| Simulation & Sampling Software | DRAM Toolbox [17] | MATLAB toolbox providing implementations of single-chain MCMC methods (Delayed Rejection Adaptive Metropolis). | A standard starting point for Bayesian parameter estimation in dynamical systems. |

| Simulation & Sampling Software | Custom Multi-Chain MCMC Code [17] | Implementations of advanced samplers like Parallel Tempering (PT) and Parallel Hierarchical Sampling (PHS). | Required for tackling complex posteriors where single-chain methods fail; often not in standard toolboxes [17]. |

| Forward Simulation Engine | Monte Carlo Photon Transport Code [18] | Software to simulate the stochastic propagation of photons in scattering media (e.g., tissue). | The "gold standard" forward model for optical tomography; forms the basis for pMC, aMC, and mMC methods [18]. |

| Analysis & Diagnostic Framework | Semi-Automatic Benchmarking Pipeline [17] | Custom software pipeline to process thousands of MCMC runs, compute metrics (ESS, convergence), and compare results objectively. | Critical for rigorous, large-scale benchmarking studies to avoid subjective analysis. |

Drug development is a process of profound attrition, characterized by significant uncertainty in predicting human efficacy, safety, and ultimate commercial success from early-stage data [19]. Only approximately 15% of lead compounds approaching preclinical candidate selection advance into clinical trials, and merely 10% of those progress to become approved medicines [19]. This high failure rate underscores the critical need for robust, quantitative decision-making tools that can objectively compare alternatives, optimize resource allocation, and de-risk development pathways.

Within this context, Monte Carlo (MC) simulation methods have emerged as powerful tools for benchmarking parameter estimation and navigating uncertainty [20] [21]. As a class of computational algorithms relying on repeated random sampling, MC methods transform uncertainties in input variables—such as pharmacokinetic parameters, clinical event rates, or trial recruitment timelines—into probability distributions for outcomes of interest [22]. This approach allows researchers and portfolio managers to move beyond deterministic, single-point forecasts and instead quantify risk, model complex dependencies, and evaluate the probability of success (PoS) under varying scenarios [23] [21].

This comparison guide evaluates key applications of quantitative, simulation-based methodologies across the drug development continuum, with a focus on benchmarking their performance against traditional approaches. It objectively compares tools and frameworks—from preclinical candidate selection metrics like the Probability of Pharmacological Success (PoPS) to clinical trial simulation for power analysis—by presenting supporting experimental data, detailed protocols, and standardized metrics for productivity assessment [24] [19].

Comparative Analysis of Parameter Estimation and Simulation Methods

The following tables provide a quantitative and qualitative comparison of key methodologies, highlighting how simulation-based approaches benchmark against traditional decision-making frameworks.

Table 1: Comparison of Preclinical Development Performance Metrics

| Metric | Definition & Calculation | Industry Benchmark (Typical Range) | Monte Carlo Simulation Enhancement |

|---|---|---|---|

| Preclinical Success Rate [24] | (No. of Candidates Entering Phase I / No. of Candidates in Preclinical Research) x 100 | 15-20% [24] [19] | Models variability in attrition points (e.g., toxicology, PK) to predict a distribution of possible success rates rather than a fixed average. |

| Cost per Candidate [24] | Preclinical Research Spending / Number of Candidates Entering Phase I | $50-60 million (based on sample data) [24] | Simulates cost drivers and project timelines under uncertainty, providing a probabilistic range of cost outcomes and identifying key financial risks. |

| Time to Preclinical Advancement [24] | Total Preclinical Duration (months) / Number of Candidates Entering Phase I | 24-36 months [25] | Incorporates random delays (e.g., in synthesis, study start) and resource dependencies to forecast timeline distributions and optimize scheduling. |

| Portfolio Output (Candidates/Year) [23] | Number of preclinical candidates selected per year per given resource pool. | Dependent on portfolio size and team composition [23]. | Models scientist allocation, project priority, and milestone transition probabilities to identify optimal team sizing and maximize output [23]. |

Table 2: Benchmarking of Candidate Selection and Clinical Power Methodologies

| Methodology | Primary Application | Traditional/Deterministic Approach | Simulation-Based (Monte Carlo) Approach | Key Comparative Advantage |

|---|---|---|---|---|

| Candidate Selection | Choosing the optimal lead compound for clinical development. | Relies on ranking compounds by discrete, point-estimate parameters (e.g., IC50, AUC). Subjective weighting of factors. | Probability of Pharmacological Success (PoPS): Integrates uncertainties in PK, PD, and disease biology to estimate the probability a compound achieves target pharmacology [19]. | Quantifies overall strength and risk in a single, comparable probability term, accounting for multidimensional uncertainty. |

| Dose Optimization | Identifying effective and safe dose regimens, especially for combination therapies. | Checkerboard assays or fixed-ratio designs; analysis of variance on limited replicates. | Regression Modeling Enabled by Monte Carlo (ReMEMC): Uses sample variation to generate probability distributions for regression coefficients, optimizing combinations amidst noise [26]. | Superior robustness against experimental noise; identifies optimal combinations with fewer experimental rounds (e.g., 3-drug COVID-19 combo in 2 rounds) [26]. |

| Clinical Trial Power Analysis | Determining sample size required to detect a treatment effect. | Based on closed-form equations assuming fixed, known parameters for effect size, variance, and dropout rate. | Clinical Trial Simulation (CTS): Models the full trial process, including patient recruitment variability, protocol deviations, and multiple endpoints, to estimate the distribution of possible trial outcomes and power. | Captures the impact of operational and statistical complexities on power, leading to more robust and realistic sample size choices. |

| Portfolio & Go/No-Go Decisions | Prioritizing projects and making stage-gate decisions. | Discounted cash flow (DCF) with single-point estimates for cost, timeline, and probability of success. | Integrated Portfolio Simulation: Dynamically connects technical and commercial models, simulating interdependencies and triggering recovery plans for risks [21]. | Captures the full value chain from research to launch, quantifies the value of dependencies, and allows for proactive risk mitigation planning [21]. |
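
The Clinical Trial Power Analysis row above contrasts closed-form power with simulated power. A minimal sketch of the simulation side is given below, assuming a two-arm trial with a normally distributed endpoint, an uncertain true effect size, and an uncertain dropout rate. All distributions, default values, and function names are hypothetical choices for illustration, and a simple two-sided z-test stands in for whatever analysis a real protocol would specify.

```python
import math
import random
import statistics

def simulate_trial(n_per_arm, effect, sd, dropout, rng):
    """Simulate one two-arm trial with random dropout; True if the z-test is significant."""
    def arm(mean):
        # Each enrolled patient is kept only if a uniform draw exceeds the dropout rate.
        return [rng.gauss(mean, sd) for _ in range(n_per_arm) if rng.random() >= dropout]
    control, treated = arm(0.0), arm(effect)
    if len(control) < 2 or len(treated) < 2:
        return False
    se = math.sqrt(statistics.variance(control) / len(control)
                   + statistics.variance(treated) / len(treated))
    z = (statistics.mean(treated) - statistics.mean(control)) / se
    return abs(z) > 1.959964                       # two-sided test at alpha = 0.05

def simulated_power(n_per_arm, n_trials=2000, seed=0,
                    effect_mean=0.5, effect_sd=0.1, sd=1.0, dropout_max=0.2):
    """Fraction of simulated trials that reach significance."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        effect = rng.gauss(effect_mean, effect_sd)   # uncertain true effect (assumed prior)
        dropout = rng.uniform(0.0, dropout_max)      # uncertain dropout rate (assumed prior)
        hits += simulate_trial(n_per_arm, effect, sd, dropout, rng)
    return hits / n_trials

power_small = simulated_power(20)
power_large = simulated_power(100)
```

Because the effect size and dropout rate are drawn afresh for every simulated trial, the result averages power over the stated uncertainty instead of conditioning on a single assumed value, which is the distinction the table draws.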

Detailed Experimental Protocols for Key Applications

Protocol 1: Estimating Probability of Pharmacological Success (PoPS) for Candidate Selection

- Objective: To quantitatively compare two lead compounds and select the preclinical candidate with the highest probability of achieving sufficient target engagement in the patient population while preserving necessary peripheral activity [19].

- Background: Used for a rare brain disease target expressed both centrally and peripherally. Success requires normalizing central activity while preserving a minimum level of peripheral activity [19].

- Materials: PK/PD parameters for Compounds A & B (CL/F, kp,uu, IC50), in vitro potency data, in vivo animal PK data, literature data on target activity elevation (Fe) in patient populations.

- Software: R with mlxR package (Simulx function) or equivalent PK/PD simulation software [19].

- Procedure:

- Model Construction: Develop a population PK/PD model for each compound. Use simple Emax models for central (Eq. 1) and peripheral (Eq. 2) inhibition [19].

- Parameter Definition: Assign population geometric means and distributions. Key parameters include oral clearance (CL/F), brain-plasma free-drug partition coefficient (kp,uu), and in vitro IC50. Incorporate between-subject variability (e.g., log-normal distribution with 30% CV) [19].

- Uncertainty Specification: Define prior distributions for critical uncertain parameters: uniform distribution for population mean Fe (1.5-3.0 fold) and for kp,uu ranges (e.g., 0.45–0.75 for Compound A) [19].

- Simulation Execution: For each compound, simulate 1000 virtual trials. Each trial consists of 1000 virtual patients. For each patient, calculate central and peripheral inhibition at steady-state for a range of doses [19].

- Success Categorization: Assign each patient in each trial to a category using pre-defined pharmacologic success criteria, e.g., "Group A" (success: sufficient central inhibition AND sufficient peripheral preservation). In the example, a trial counted as successful when ≥80% of patients fell in Group A and <5% fell in a risk group [19].

- PoPS Calculation: The PoPS is the proportion of the 1000 simulated trials that meet the overall success criteria. The candidate with the higher PoPS is selected for advancement [19].

- Data Analysis: Compare dose-PoPS curves for each compound. The optimal dose is that which maximizes PoPS. Conduct sensitivity analysis on success criteria and uncertain parameters [19].
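
The nested structure of this procedure (trial-level uncertainty on the outside, patient-level variability on the inside) can be sketched in Python. This is a simplified illustration and not the mlxR/Simulx model of [19]. The Emax forms, the steady-state concentration formula, the clearance value, the activity thresholds, and the reduced numbers of trials and patients are all assumptions. Only the uniform ranges for Fe and kp,uu, the 30% CV, and the 80%/5% trial criteria come from the protocol text.

```python
import math
import random

def pops(dose, ic50, kpuu_range, n_trials=100, n_patients=200, seed=0):
    """Fraction of simulated trials meeting the (hypothetical) success criteria at one dose."""
    rng = random.Random(seed)
    omega = math.sqrt(math.log(1.0 + 0.30 ** 2))     # log-normal SD equivalent to 30% CV
    successes = 0
    for _ in range(n_trials):
        # Trial-level uncertainty: uniform priors on population-mean Fe and on kp,uu.
        fe_mean = rng.uniform(1.5, 3.0)
        kpuu = rng.uniform(*kpuu_range)
        n_group_a = n_risk = 0
        for _ in range(n_patients):
            # Patient-level between-subject variability (assumed log-normal).
            cl = 10.0 * math.exp(rng.gauss(0.0, omega))   # CL/F, hypothetical geometric mean
            fe = fe_mean * math.exp(rng.gauss(0.0, omega))
            c_plasma = dose / (cl * 24.0)                 # average steady-state concentration
            c_brain = kpuu * c_plasma
            inh_central = c_brain / (c_brain + ic50)      # simple Emax model with Emax = 1
            inh_periph = c_plasma / (c_plasma + ic50)
            central_ok = fe * (1.0 - inh_central) <= 1.2  # assumed "normalised" threshold
            periph_activity = 1.0 - inh_periph
            if central_ok and periph_activity >= 0.2:     # assumed preservation threshold
                n_group_a += 1
            elif periph_activity < 0.1:                   # assumed risk-group definition
                n_risk += 1
        if n_group_a >= 0.8 * n_patients and n_risk < 0.05 * n_patients:
            successes += 1
    return successes / n_trials

doses = [0, 100, 200, 400, 800]
curve_a = {d: pops(d, ic50=0.5, kpuu_range=(0.45, 0.75)) for d in doses}
best_dose = max(curve_a, key=curve_a.get)
```

Running the same function with a second compound's `ic50` and `kpuu_range` gives the second dose-PoPS curve for the comparison described in the Data Analysis step.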

Protocol 2: Monte Carlo Simulation for Preclinical Portfolio Productivity Analysis

- Objective: To model the flow of virtual drug discovery projects through a milestone system to determine the optimal allocation of chemists and biologists for maximizing preclinical candidate output [23].

- Background: Addresses the question of optimal human resource distribution across a portfolio of early-stage discovery projects (hit-to-lead, lead optimization) [23].

- Materials: Historical or assumed milestone transition probabilities (e.g., screening to hit-to-lead), target cycle times for each stage, definitions of project types (biology-driven, chemistry-driven, follow-on), total number of available FTEs by discipline [23].

- Software: Custom algorithm as described in [23], or general-purpose simulation software (e.g., R, Python, AnyLogic).

- Procedure:

- Initialize Portfolio: Create a set of virtual projects, each assigned a type and a starting milestone (e.g., exploratory screening).

- Define Project Parameters: For each project type and milestone, specify the target number of chemists (TC) and biologists (TB), the baseline cycle time (CT), and the probability of success (POS) for transitioning to the next milestone [23].

- Resource Allocation Logic: Staff projects based on priority. A random number (r, 0≤r<1) assigned at milestone entry determines priority and maximum staffing (Eq. 2). Cycle times are adjusted based on actual staffing vs. target staffing using a linear correction function (Eq. 1) [23].

- Project Progression: At each milestone decision point, generate a new random number. If it is less than the stage POS, the project advances and is re-staffed according to the rules for the next stage. If it fails, the project is terminated, and its scientists are released [23].

- Simulation Execution: Run the simulation for a defined period (e.g., 5-10 years) with a fixed total pool of scientists. Track the number of projects entering preclinical development (candidate selection) each year.

- Output Analysis: The primary output is the number of preclinical candidates produced per year. The simulation is repeated with varying total numbers of scientists to identify the point where additional resources no longer increase annual output, indicating the optimum team size for the given portfolio [23].
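
A heavily simplified sketch of this milestone simulation is shown below. It keeps the core loop (staff a project, wait out the cycle time, draw a random number against the stage POS, then advance or terminate) but omits the priority rule and the distinction between chemists and biologists. The stage table, the linear cycle-time correction, and the function name are illustrative assumptions and are not Eq. 1 or Eq. 2 of [23].

```python
import random

# (stage name, target staff, baseline cycle time in months, probability of success)
# All values are illustrative assumptions.
STAGES = [
    ("exploratory screening", 4, 6, 0.5),
    ("hit-to-lead", 8, 9, 0.6),
    ("lead optimization", 12, 12, 0.5),
]

def simulate_portfolio(total_staff, years=10, seed=0):
    """Average number of preclinical candidates per year for a fixed pool of scientists."""
    rng = random.Random(seed)
    free = total_staff
    active = []            # each project is [stage index, assigned staff, remaining months]
    candidates = 0

    def start_stage(stage_idx):
        nonlocal free
        _, target, cycle_time, _ = STAGES[stage_idx]
        staff = min(target, free)
        free -= staff
        # Assumed linear correction: an understaffed stage takes proportionally longer.
        return [stage_idx, staff, cycle_time * target / staff]

    for _month in range(years * 12):
        while free > 0:                        # launch new projects with any idle scientists
            active.append(start_stage(0))
        survivors = []
        for stage_idx, staff, remaining in active:
            remaining -= 1
            if remaining > 0:
                survivors.append([stage_idx, staff, remaining])
                continue
            free += staff                      # milestone reached: release the team
            if rng.random() < STAGES[stage_idx][3]:
                if stage_idx + 1 == len(STAGES):
                    candidates += 1            # cleared the last stage: preclinical candidate
                else:
                    survivors.append(start_stage(stage_idx + 1))
            # On failure the project is terminated and its scientists stay released.
        active = survivors
    return candidates / years

output = {n: simulate_portfolio(n) for n in (10, 20, 40, 80)}
```

Scanning `output` across pool sizes mirrors the Output Analysis step. With a single seed the curve is noisy, so a real study would average many replicate runs per pool size before looking for a plateau.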

Visualization of Key Workflows and Methodologies

Diagram 1: Integrated Drug Development Workflow with Monte Carlo Simulation Integration Points. MC simulations inform and optimize decisions across the pipeline.

Diagram 2: Preclinical Candidate Selection Workflow Using the Probability of Pharmacological Success (PoPS) Method.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Translational Biomarker and Model Development

| Research Reagent / Model System | Primary Function in Drug Development | Key Application in Monte Carlo/Quantitative Frameworks |

|---|---|---|

| Patient-Derived Organoids (PDOs) | 3D in vitro models that replicate human tissue biology for efficacy testing and biomarker discovery [27]. | Provide high-content data on patient-specific drug response variability, which can inform the parameter distributions (e.g., IC50 variability) used in PoPS and other simulation models. |

| Patient-Derived Xenografts (PDX) | In vivo tumor models created from patient tissues for validating oncology drug candidates and resistance mechanisms [27]. | Generate translational PK/PD and efficacy data that bridge in vitro findings and human predictions, reducing uncertainty in simulation model parameters. |

| Genetically Engineered Mouse Models (GEMMs) | Immune-competent animal models for studying tumor progression, immune interactions, and biomarker response [27]. | Used in preclinical efficacy studies to establish proof-of-concept and quantify dose-response relationships, which are critical inputs for pharmacodynamic models. |

| Humanized Mouse Models | Mice engineered with human immune system components for immunotherapy biomarker discovery and efficacy testing [27]. | Essential for generating PK/PD and safety data for biologics and immunotherapies, informing species translation factors in PK models. |

| Microfluidic Organ-on-a-Chip | Dynamic platforms that mimic human physiological conditions for drug screening and toxicity testing [27]. | Generate human-relevant ADME and toxicity data early in discovery, helping to parameterize systems pharmacology models and improve translational accuracy. |

| Liquid Biopsy Assays (e.g., ctDNA) | Non-invasive tools for cancer detection, monitoring treatment response, and measuring minimal residual disease (MRD) [27]. | Provide dynamic, patient-specific biomarker data that can be used as surrogate endpoints in clinical trial simulations, enriching models for patient heterogeneity and early efficacy signals. |

| Validated Bioanalytical Assays | GLP-compliant methods for quantifying drug concentrations (PK) and pharmacodynamic biomarkers in biological matrices [25]. | Generate the high-quality, reproducible data necessary for building and validating the mathematical models that underpin all quantitative simulation approaches. |

From Theory to Practice: Implementing and Applying Key Monte Carlo Estimation Algorithms

In computational biology and drug development, estimating unknown model parameters from noisy experimental data is a fundamental challenge. Markov Chain Monte Carlo (MCMC) methods have become indispensable for this task, providing a framework for sampling from posterior distributions to quantify parameter and prediction uncertainties [17] [28]. Selecting the appropriate sampler is critical, as performance varies dramatically with problem features like multimodality, parameter correlations, and chaotic dynamics [17] [15]. This guide provides a comparative benchmark of four prominent MCMC algorithms—Adaptive Metropolis (AM), Delayed Rejection Adaptive Metropolis (DRAM), Metropolis Adjusted Langevin Algorithm (MALA), and Parallel Tempering (PT)—within the context of a broader thesis on Monte Carlo method evaluation. The analysis is based on a comprehensive benchmarking study [17], offering objective performance data and practical protocols to inform method selection for complex, real-world problems in systems biology and pharmacokinetics.

Comparative Performance Analysis

A large-scale benchmarking study [17] evaluated these MCMC methods across diverse dynamical systems common in biological modeling. The study considered challenging features such as bifurcations, periodic orbits, multistability, and chaotic regimes, which give rise to complex posterior distributions with multiple modes and heavy tails. Performance was measured primarily by the effective sample size per computational hour (ESS/hour), which balances statistical efficiency with runtime cost. The following tables summarize key findings.

Table: Performance Summary Across Benchmark Problems

| Algorithm | Class | Key Mechanism | Typical Acceptance Rate | Relative ESS/Hour (Median) | Best Suited For |

|---|---|---|---|---|---|

| Adaptive Metropolis (AM) | Single-Chain | Adapts proposal covariance based on chain history. | ~25% [17] | 1.0 (Baseline) | Moderately complex, uni-modal posteriors. |

| DRAM | Single-Chain | AM plus a second-stage (delayed rejection) proposal whenever the first proposal is rejected. | ~35% [17] | 1.2 - 1.5 | Problems with moderate correlations and non-identifiabilities. |

| MALA | Single-Chain | Uses gradient information for informed proposals. | ~55% [17] | Highly Variable (0.1 - 10+) | High-dimensional, smooth, unimodal log-posteriors. |

| Parallel Tempering (PT) | Multi-Chain | Runs chains on a ladder of temperatures; swaps states between chains. | Variable (swap rate is key) | 5 - 50 [17] | Multimodal, rugged, or complex parameter landscapes. |
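
To make the Adaptive Metropolis row concrete, the sketch below shows the adaptation mechanism in one dimension, where the proposal covariance reduces to a single variance tracked from the chain history. It is a minimal illustration and not the implementation benchmarked in [17]. The Gaussian toy target, the starting values, and the function name are assumptions. The scaling 2.4²/d follows the commonly used Adaptive Metropolis recommendation.

```python
import math
import random

def adaptive_metropolis(log_target, x0, n_iter=20000, adapt_start=1000, seed=0):
    """1-D Adaptive Metropolis: the proposal variance follows the running chain variance."""
    rng = random.Random(seed)
    scale = 2.4 ** 2              # s_d = 2.4^2 / d with d = 1
    eps = 1e-6                    # small regularisation keeps the variance positive
    x, lp = x0, log_target(x0)
    mean, m2 = x0, 0.0            # Welford running mean and sum of squared deviations
    prop_var = 1.0                # fixed proposal variance before adaptation starts
    chain, accepted = [], 0
    for i in range(1, n_iter + 1):
        y = x + rng.gauss(0.0, math.sqrt(prop_var))
        lp_y = log_target(y)
        if math.log(1.0 - rng.random()) < lp_y - lp:   # Metropolis accept/reject
            x, lp = y, lp_y
            accepted += 1
        chain.append(x)
        delta = x - mean                               # update running moments (x0 counted)
        mean += delta / (i + 1)
        m2 += delta * (x - mean)
        if i >= adapt_start:
            prop_var = scale * (m2 / i) + eps          # m2 / i is the sample variance
    return chain, accepted / n_iter

def log_gauss(x):
    """Toy target: N(3, 2^2), up to an additive constant."""
    return -0.5 * ((x - 3.0) / 2.0) ** 2

chain, acc_rate = adaptive_metropolis(log_gauss, x0=0.0)
```

In higher dimensions the running variance becomes a covariance matrix, which is what lets the sampler align its proposals with correlated parameters.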

Table: Detailed Quantitative Benchmark Results (Select Problems) [17]

| Benchmark Problem (Feature) | AM | DRAM | MALA | Parallel Tempering | Notes |

|---|---|---|---|---|---|

| FitzHugh-Nagumo (Oscillations) | 142 ESS/hour | 189 ESS/hour | 605 ESS/hour | 1,250 ESS/hour | PT and gradient-based MALA perform best. |

| Genetic Toggle Switch (Bistability) | 55 ESS/hour | 72 ESS/hour | Failed to converge | 420 ESS/hour | MALA stuck in one mode; PT explores both. |

| Lorenz (Chaotic System) | <10 ESS/hour | <10 ESS/hour | <5 ESS/hour | 85 ESS/hour | Single-chain methods mix poorly. |

| Protein Signaling (Non-Identifiable) | 105 ESS/hour | 310 ESS/hour | 90 ESS/hour | 280 ESS/hour | DRAM's delayed rejection handles flat directions well. |

The data reveal a clear pattern: multi-chain Parallel Tempering outperforms the single-chain methods on most of the complex problems [17], with the non-identifiable protein signaling model as the exception, where DRAM is slightly ahead (310 vs. 280 ESS/hour). While DRAM offers a reliable improvement over basic AM, MALA's performance is highly conditional on the availability of accurate gradients and the absence of multimodality. The overarching conclusion from the benchmarking is that for the challenging problems typical in systems pharmacology and quantitative biology, the investment in multi-chain algorithms like Parallel Tempering is warranted.

Detailed Experimental Protocols

The following protocols are synthesized from the methodologies used in the comprehensive benchmarking study [17], providing a template for reproducible evaluation of MCMC algorithms.

2.1 Benchmark Problem Suite

The evaluation used a curated suite of ordinary differential equation (ODE) models representing common dynamical features:

- Models: FitzHugh-Nagumo (oscillations), Genetic Toggle Switch (bistability), Lorenz '96 (chaos), and models of gene regulation and signaling pathways with practical non-identifiabilities [17].

- Data Simulation: For each model, synthetic observational data y(t) was generated by numerically solving the ODEs at the true parameters η_true and adding independent Gaussian noise: ỹ(t) = y(t) + ϵ, where ϵ ~ N(0, σ²) [17].

- Posterior Formulation: The likelihood was p(D|θ) ∝ exp(-∑(ỹᵢ - yᵢ(t))² / (2σᵢ²)). Priors p(θ) were chosen as weakly informative uniforms or broad normals. The target was the posterior p(θ|D) ∝ p(D|θ)p(θ) [17].

2.2 Algorithm Configuration & Computational Implementation

- Common Settings: All samplers ran for a fixed budget of 50,000 iterations post-adaptation/burn-in. Convergence was assessed using the potential scale reduction factor (R-hat < 1.1) and trace plot inspection [17] [29].

- Algorithm-Specific Tuning:

- AM/DRAM: The initial proposal covariance was a scaled identity matrix. Adaptation began after 1,000 iterations [17] [30].

- MALA: Step-size parameter tuned to achieve an acceptance rate near 55%. Gradients were computed via adjoint sensitivity analysis or automatic differentiation [17].

- Parallel Tempering: Configured with 5 chains. Temperatures were geometrically spaced (T_max = 10⁴). State swaps between adjacent chains were proposed every 10 iterations [17] [31].

- Software & Hardware: Implementations were based on generic MATLAB code [17], with DRAM utilizing its official toolbox [30]. Runs were performed on high-performance computing clusters, with total benchmarking consuming approximately 300,000 CPU hours [17].
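
The Parallel Tempering configuration described above (5 chains, geometric temperature spacing up to T_max = 10⁴, swaps between adjacent chains every 10 iterations) can be sketched as follows. This is a minimal Python illustration and not the MATLAB code used in [17]. The bimodal toy target, the per-chain proposal widths, the starting point, and the function names are assumptions.

```python
import math
import random

def geometric_ladder(n_chains, t_max):
    """Temperatures T_j = t_max ** (j / (n_chains - 1)), running from 1 up to t_max."""
    return [t_max ** (j / (n_chains - 1)) for j in range(n_chains)]

def log_target(x):
    """Bimodal toy posterior: equal mixture of N(-4, 0.5^2) and N(4, 0.5^2), unnormalised."""
    a = -0.5 * ((x + 4.0) / 0.5) ** 2
    b = -0.5 * ((x - 4.0) / 0.5) ** 2
    m = max(a, b)
    return m + math.log(math.exp(a - m) + math.exp(b - m))

def parallel_tempering(n_iter=20000, n_chains=5, t_max=1e4, swap_every=10, seed=0):
    rng = random.Random(seed)
    temps = geometric_ladder(n_chains, t_max)
    xs = [-4.0] * n_chains                      # all chains start in the left mode
    lps = [log_target(x) for x in xs]
    cold, tried, done = [], 0, 0
    for it in range(1, n_iter + 1):
        # Within-chain random-walk Metropolis on the tempered target p(x)^(1/T).
        for j, t in enumerate(temps):
            y = xs[j] + rng.gauss(0.0, math.sqrt(t))      # assumed step size per chain
            lp_y = log_target(y)
            if math.log(1.0 - rng.random()) < (lp_y - lps[j]) / t:
                xs[j], lps[j] = y, lp_y
        # Propose swaps between adjacent temperatures at fixed intervals.
        if it % swap_every == 0:
            for j in range(n_chains - 1):
                tried += 1
                log_alpha = (1.0 / temps[j] - 1.0 / temps[j + 1]) * (lps[j + 1] - lps[j])
                if math.log(1.0 - rng.random()) < log_alpha:
                    xs[j], xs[j + 1] = xs[j + 1], xs[j]
                    lps[j], lps[j + 1] = lps[j + 1], lps[j]
                    done += 1
        cold.append(xs[0])                      # only the T = 1 chain samples the posterior
    return cold, done / tried

ladder = geometric_ladder(5, 1e4)
cold, swap_rate = parallel_tempering()
```

Only the coldest chain is kept for inference. The hotter chains exist to carry states across the barrier between modes, which is why the swap rate matters more than the within-chain acceptance rate.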

2.3 Performance Metrics & Evaluation Pipeline

A semi-automatic analysis pipeline was developed to ensure fair comparison [17]:

- Run Samplers: Execute multiple independent chains for each algorithm-problem pair.

- Compute Diagnostics: Calculate ESS, ESS/hour, chain autocorrelation, and acceptance/swap rates.

- Assess Exploration: Visually inspect marginal and pairwise posterior plots to ensure all modes were found, a critical step before trusting ESS values [17].

- Rank Performance: Aggregate metrics across problems to rank algorithm robustness and efficiency.
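
The Compute Diagnostics step can be illustrated with plain-Python versions of the two central quantities, the potential scale reduction factor (R-hat) and the effective sample size. This is a sketch under stated assumptions and not the pipeline of [17]. It uses the basic (non-split) Gelman-Rubin formula, truncates the autocorrelation sum at the first non-positive lag, and substitutes a synthetic AR(1) series for real sampler output. The runtime used for ESS/hour is a made-up number.

```python
import math
import random
import statistics

def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one scalar parameter."""
    n = len(chains[0])
    means = [statistics.mean(c) for c in chains]
    w = statistics.mean(statistics.variance(c) for c in chains)   # within-chain variance
    b = n * statistics.variance(means)                            # between-chain variance
    var_hat = (n - 1) / n * w + b / n
    return math.sqrt(var_hat / w)

def effective_sample_size(chain, max_lag=200):
    """ESS = n / (1 + 2 * sum of autocorrelations), truncated at the first non-positive lag."""
    n = len(chain)
    mu = statistics.mean(chain)
    centred = [x - mu for x in chain]
    c0 = sum(v * v for v in centred) / n
    tau = 1.0
    for lag in range(1, min(max_lag, n - 1) + 1):
        rho = sum(centred[i] * centred[i + lag] for i in range(n - lag)) / (n * c0)
        if rho <= 0.0:
            break
        tau += 2.0 * rho
    return n / tau

def ar1_chain(n, phi, rng):
    """Toy autocorrelated series x_t = phi * x_{t-1} + noise, standing in for MCMC output."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out

rng = random.Random(0)
chains = [ar1_chain(5000, 0.9, rng) for _ in range(4)]
r_hat = gelman_rubin(chains)
ess = effective_sample_size(chains[0])
ess_per_hour = ess / (12.0 / 3600.0)      # hypothetical wall-clock time of 12 s for this chain
```

Both numbers describe only the regions the chains actually visited, so a sampler stuck in one mode can still report a healthy ESS. That is the reason for the separate exploration check in the list above.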

Selecting and implementing MCMC algorithms requires both theoretical understanding and practical software tools. The following table lists key resources for researchers.

Table: Key Research Reagent Solutions for MCMC Implementation

| Tool / Resource | Type | Primary Function | Relevance to Featured Methods |

|---|---|---|---|

| DRAM Toolbox for MATLAB [30] | Software Library | Provides well-tested implementations of the DRAM algorithm and simpler Metropolis variants. | The primary resource for implementing AM and DRAM. Ideal for getting started with adaptive MCMC in systems biology [17]. |

| MCMCStat for MATLAB [30] | Software Library | A general toolbox for Metropolis-Hastings MCMC, supporting user-defined likelihoods and priors. | Useful for custom implementation and benchmarking of single-chain methods, including prototype adaptations. |

| NPL MCMC Software (MCMCMH, NLLSMH) [32] | Reference Software | Demonstrates robust, well-commented Metropolis-Hastings implementations for metrology and non-linear models. | Excellent as educational and reference code for understanding precise, production-grade MCMC implementation details. |

| Benchmark Problem Collection [17] | Dataset & Code | Provides the ODE models, synthetic data, and posterior definitions used in the comparative study. | Essential for controlled benchmarking of new algorithms against standard methods (AM, DRAM, MALA, PT) on realistic problems. |

| Generalized Parallel Tempering (GPT) Theory [31] | Algorithmic Framework | Presents advanced PT variants with state-dependent swapping for improved efficiency on inverse problems. | Points to the next-generation development of multi-chain methods beyond standard PT for extremely challenging posteriors. |

| Neural Transport Accelerated PT [33] | Emerging Method | Uses neural samplers (e.g., normalizing flows) to create better-informed inter-chain proposals for PT. | Represents the cutting-edge integration of deep learning and MCMC to tackle high-dimensional, complex target distributions. |

Logical Workflows and Method Selection

The relationship between algorithm properties, problem characteristics, and selection logic is visualized in the following diagrams.

Diagram: MCMC Algorithm Relationships and Applications


Diagram: MCMC Method Selection Workflow


Methodological Foundations and Comparative Framework

In the context of benchmarking parameter estimation methods, Monte Carlo (MC) techniques provide the foundational framework for probabilistic inference where analytical solutions are intractable. While Markov Chain Monte Carlo (MCMC) has long been the workhorse for Bayesian computation, Sequential Monte Carlo (SMC) and Optimization Monte Carlo (OMC) represent advanced paradigms designed to address its limitations, particularly in complex, high-dimensional, or multimodal problems prevalent in systems biology and drug development [15].

Sequential Monte Carlo (SMC), also known as particle filtering, operates by propagating a population of weighted samples (particles) through a sequence of intermediary distributions that gradually converge to the target posterior [34]. Its core steps—reweighting, resampling, and moving—allow it to handle complex posterior landscapes adaptively. A key innovation is Persistent Sampling (PS), an extension that retains particles from previous iterations to form a growing, weighted ensemble. This mitigates particle impoverishment and mode collapse, leading to more accurate posterior approximations and lower-variance marginal likelihood estimates, which are critical for robust model comparison in research [34].
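
The reweight, resample, and move cycle can be sketched for a temperature-annealed SMC sampler as follows. This is a minimal illustration of standard SMC only. It does not implement Persistent Sampling, and the conjugate Gaussian toy problem, the linear annealing schedule, the multinomial resampling, and the single random-walk move per stage are all assumptions chosen so that the marginal likelihood can be checked analytically.

```python
import math
import random
import statistics

PRIOR_SD, NOISE_SD, Y_OBS = 3.0, 1.0, 2.0       # toy problem: x ~ N(0, 3^2), y | x ~ N(x, 1)

def log_prior(x):
    return -0.5 * (x / PRIOR_SD) ** 2 - math.log(PRIOR_SD * math.sqrt(2 * math.pi))

def log_like(x):
    return -0.5 * ((Y_OBS - x) / NOISE_SD) ** 2 - math.log(NOISE_SD * math.sqrt(2 * math.pi))

def smc_sampler(n_particles=2000, n_temps=20, seed=0):
    """Annealed SMC from prior (beta = 0) to posterior (beta = 1); returns particles, log Z."""
    rng = random.Random(seed)
    betas = [t / n_temps for t in range(n_temps + 1)]
    particles = [rng.gauss(0.0, PRIOR_SD) for _ in range(n_particles)]
    log_z = 0.0
    for b_prev, b in zip(betas, betas[1:]):
        # Reweight: incremental weight is the likelihood raised to the temperature step.
        log_w = [(b - b_prev) * log_like(x) for x in particles]
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]
        log_z += m + math.log(sum(w) / n_particles)   # running marginal likelihood estimate
        # Resample: multinomial resampling equalises the weights.
        particles = rng.choices(particles, weights=w, k=n_particles)
        # Move: one random-walk Metropolis step targeting prior * likelihood^b.
        spread = statistics.pstdev(particles)
        moved = []
        for x in particles:
            y = x + rng.gauss(0.0, spread)
            log_ratio = log_prior(y) + b * log_like(y) - log_prior(x) - b * log_like(x)
            moved.append(y if math.log(1.0 - rng.random()) < log_ratio else x)
        particles = moved
    return particles, log_z

particles, log_z = smc_sampler()
# Analytic reference for this conjugate model: y ~ N(0, PRIOR_SD^2 + NOISE_SD^2).
total_var = PRIOR_SD ** 2 + NOISE_SD ** 2
log_z_exact = -0.5 * Y_OBS ** 2 / total_var - 0.5 * math.log(2 * math.pi * total_var)
```

The reweight and move loops are independent across particles, which is the source of the parallel scaling attributed to SMC. The resampling step is the only point that requires communication between particles.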

Optimization Monte Carlo (OMC) represents a distinct class of methods that hybridize random sampling with optimization principles. These techniques, which include frameworks like Posterior Exploration SMC (PE-SMC), transform a non-negative objective function into a probability density [35]. They then use sequences of distributions, often controlled by an annealing schedule, to steer a population of samples toward global optima. This makes OMC particularly suited for problems like maximum likelihood estimation or finding global minima in multimodal landscapes, common in pharmacokinetic and pharmacodynamic modeling [35].

The following diagram illustrates the logical relationship and core workflow differences between MCMC, SMC, and OMC within the Monte Carlo family.

Diagram: Methodological comparison of MCMC, SMC, and OMC workflows.

Key Differentiators from MCMC: Benchmarking studies reveal that multi-chain MCMC methods generally outperform single-chain approaches but still face challenges with parallelization and exploration of complex posteriors [17]. In contrast, SMC's inherent parallel structure allows efficient use of modern multi-core and distributed computing architectures [36]. OMC methods excel in global optimization tasks, often outperforming simulated annealing and particle swarm optimization in multimodal settings [35].

Performance Benchmarking: Experimental Data and Results

A rigorous comparison within a benchmarking thesis requires quantitative data on computational efficiency, estimation accuracy, and robustness. The following tables consolidate experimental findings from recent studies.

Table 1: Computational Performance and Efficiency

| Method | Key Variant | Parallelization Efficiency | Typical Use Case | Relative Speed (vs. baseline MCMC) | Critical Performance Threshold | Source |
|---|---|---|---|---|---|---|
| SMC | Standard SMC Sampler | High (embarrassingly parallel) | Bayesian model calibration | Comparable or slower on single core | Outperforms MCMC when model runtime > ~20 ms on multi-core systems | [36] |
| SMC | Persistent Sampling (PS) | High | Complex, multimodal posteriors | Lower computational cost for same accuracy | Reduces marginal likelihood error significantly vs. standard SMC | [34] |
| SMC | With approx. optimal L-kernel | High | General Bayesian inference | N/A (variance reduction focus) | Reduces estimate variance by up to 99%; reduces resampling needs by 65-70% | [37] |
| OMC | PE-SMC (Posterior Exploration) | High | Global optimization, multimodal functions | Outperforms simulated annealing, particle swarm | Effective in dimensions d ≥ 10 | [35] |
| MCMC | Parallel Tempering (multi-chain) | Low to Moderate | Multimodal distributions | Benchmark baseline | Generally outperforms single-chain MCMC | [17] |

Table 2: Accuracy and Robustness in Parameter Estimation

| Benchmark Problem / Domain | Method | Performance Metric | Result | Interpretation | Source |
|---|---|---|---|---|---|
| Complex Distributions | SMC with Persistent Sampling | Squared Bias (posterior moments) | Consistently lower than standard methods | More accurate posterior approximation | [34] |
| DFT+U for UO₂ (Material Science) | SMC vs. OMC | Ground State Energy Difference | SMC GS 0.0022 Ry/(f.u.) above OMC GS | Methods search different subspaces; neither alone finds global minimum | [38] |
| Dynamical Systems (ODE models) | Multi-chain MCMC (e.g., PT, DRAM) | Exploration Quality & Effective Sample Size | Superior to single-chain MCMC | Better handling of multimodality and non-identifiability | [17] |
| General Bayesian Inference | SMC with optimal L-kernel | Variance of Estimates | Up to 99% reduction | Dramatically improved statistical efficiency | [37] |
| Ecological Model Calibration | SMC vs. state-of-the-art MCMC | Calibration accuracy & uncertainty | Comparable posterior estimates | SMC achieves similar accuracy with better parallel scaling | [36] |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear basis for the benchmark data, this section outlines the core experimental methodologies from key cited studies.

Protocol 1: Benchmarking SMC (Persistent Sampling) vs. Standard SMC/MCMC [34]

- Objective: Compare accuracy in posterior moment estimation and marginal likelihood (Z) computation.

- Algorithm Setup:

- Standard SMC: Uses a fixed number of particles (N). Sequence of distributions created via temperature annealing (Eq. 2 in source).

- Persistent Sampling (PS): Extends SMC by retaining all particles from previous iterations. Employs multiple importance sampling from the mixture of all past distributions for resampling.

- Performance Metrics: Squared bias of posterior mean estimates, mean squared error of log(Z), and computational cost.

- Outcome: PS demonstrated lower squared bias and significantly reduced Z error at a lower computational cost by avoiding particle impoverishment.
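
For orientation, the standard SMC baseline in this protocol can be sketched as a temperature-annealed sampler that also accumulates an estimate of log(Z). The sketch below is an assumption-laden toy, not the implementation from [34] and not Persistent Sampling: it uses a hypothetical one-dimensional Gaussian prior and likelihood (chosen because Z is then known in closed form), a linear temperature ladder, multinomial resampling at every step, and a random-walk Metropolis move. All function names and default settings are illustrative.

```python
import math
import random

def log_prior(x, sd=3.0):
    # Hypothetical Gaussian prior N(0, sd^2).
    return -0.5 * (x / sd) ** 2 - math.log(sd * math.sqrt(2.0 * math.pi))

def log_like(x, y=1.0, sd=1.0):
    # Hypothetical Gaussian likelihood for a single observation y.
    return -0.5 * ((y - x) / sd) ** 2 - math.log(sd * math.sqrt(2.0 * math.pi))

def smc_tempering(n_particles=2000, n_steps=20, n_moves=3, step=1.0, seed=0):
    rng = random.Random(seed)
    particles = [rng.gauss(0.0, 3.0) for _ in range(n_particles)]  # prior draws
    betas = [t / n_steps for t in range(n_steps + 1)]              # 0 -> 1
    log_z = 0.0
    for b_prev, b in zip(betas[:-1], betas[1:]):
        # Incremental importance weights: likelihood^(b - b_prev).
        log_w = [(b - b_prev) * log_like(x) for x in particles]
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]
        # Particles are equally weighted after resampling, so the mean
        # incremental weight estimates the ratio Z_b / Z_b_prev.
        log_z += m + math.log(sum(w) / n_particles)
        # Multinomial resampling.
        particles = rng.choices(particles, weights=w, k=n_particles)
        # Random-walk Metropolis moves targeting prior * likelihood^b.
        for _ in range(n_moves):
            for i, x in enumerate(particles):
                prop = x + rng.gauss(0.0, step)
                log_acc = (log_prior(prop) + b * log_like(prop)
                           - log_prior(x) - b * log_like(x))
                if rng.random() < math.exp(min(0.0, log_acc)):
                    particles[i] = prop
    return particles, log_z

particles, log_z = smc_tempering()
post_mean = sum(particles) / len(particles)
# Closed form for this toy problem: y ~ N(0, 3^2 + 1^2) marginally.
exact_log_z = -0.5 * math.log(2.0 * math.pi * 10.0) - 0.5 * 1.0 / 10.0
print(f"log Z estimate = {log_z:.3f}, exact = {exact_log_z:.3f}")
print(f"posterior mean estimate = {post_mean:.3f}, exact = 0.900")
```

Because the toy problem has a known answer, the squared bias of the posterior mean and the error in log(Z) can be computed directly, which mirrors the metrics listed in the protocol.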

Protocol 2: Comparing SMC and OMC for Energy Minimization in DFT+U [38]

- Objective: Determine the ground-state and meta-stable states of UO₂ crystal.

- Method Comparison:

- OMC: Traditionally used in DFT+U, manipulates occupation matrices of Uranium atoms.

- SMC: Proposed alternative, uses only oxygen electronic spin-polarization degrees of freedom.

- Procedure: Both methods were applied to sample the multi-minima energy landscape. The resulting geometries, energies, and electronic structures were compared against each other and experimental data.

- Outcome: Both methods found similar low-energy states, but the precise ground states differed, indicating complementary search subspaces. A hybrid search was recommended.

Protocol 3: Efficiency Gain via Optimal L-Kernels in SMC [37]

- Objective: Minimize the variance of SMC estimates by optimizing the L-kernel, a user-chosen conditional probability density that enters the SMC weight update.

- Derivation: The theoretically optimal L-kernel was derived. Approximation schemes (e.g., Gaussian mixture approximation of the joint proposal distribution) were developed for practical implementation.

- Testing: The "approximately optimal" L-kernel was tested on uni-modal, bi-modal, and high-dimensional target distributions.

- Performance Measure: Variance of estimated posterior means and number of required resampling steps.

- Outcome: The method achieved variance reductions up to 99% and reduced resampling frequency by 65-70%, demonstrating a major efficiency improvement.

The Scientist's Toolkit: Research Reagent Solutions

Implementing and benchmarking these methods requires specific software tools and algorithmic components. The following table details essential "research reagents" for this field.

Table 3: Essential Research Reagents for SMC/OMC Implementation

| Item Name / Concept | Type | Primary Function | Relevance to SMC/OMC | Example Source / Implementation |
|---|---|---|---|---|
| Persistent Sampling (PS) Algorithm | Algorithmic Extension | Retains particles from all SMC iterations to form a mixture distribution, reducing variance. | Addresses core SMC limitations (particle impoverishment, high variance). | Key innovation from Karamanis & Seljak (2024) [34]. |
| L-Kernel | Algorithmic Parameter | A conditional probability density in the SMC weight update. Controls sampling efficiency. | Optimizing it is a major pathway to increase SMC efficiency (variance reduction). | Derivation and approximation methods in Green et al. [37]. |
| Temperature Annealing Schedule | Algorithmic Scheme | Defines the sequence of intermediary distributions from prior to posterior. | Crucial for both SMC and OMC performance; can be adaptive. | Used in SMC (Eq. 2 [34]) and OMC/PE-SMC frameworks [35]. |
| BayesianTools R Package | Software Library | Provides implementations of various MCMC and SMC samplers for Bayesian inference. | Enables practical benchmarking and application on ecological models. | Used for method comparison in Hartig et al. [36]. |
| Github: SMCapproxoptL | Code Repository | Python code for SMC samplers with approximately optimal L-kernels. | Provides a tested implementation for the variance reduction technique. | Associated with Green et al. (2021) [37]. |
| Parallel Tempering (PT) | Benchmark Algorithm | A multi-chain MCMC method that swaps states between chains at different "temperatures". | Standard benchmark for handling multimodal posteriors; baseline for SMC/OMC comparison. | Included in comprehensive MCMC benchmarking [17]. |

Within the thesis of benchmarking parameter estimation methods, SMC and OMC emerge as powerful complements and alternatives to MCMC. The choice between them depends on the problem's specific contours and the available computational resources.

Recommendations for Selection:

- Choose SMC when working with moderately to very complex posteriors, especially when parallel computing resources (multi-core CPUs, clusters) are available. On multi-core systems, its inherent parallelizability allows it to outperform MCMC once a single model evaluation takes longer than roughly 20 ms [36]. For critical inference tasks requiring highly accurate marginal likelihoods for model selection, SMC extensions like Persistent Sampling are strongly recommended [34].

- Choose OMC frameworks when the primary goal is global optimization—finding the best-fit parameters—in a multimodal, high-dimensional landscape where traditional optimizers fail. This is pertinent in problems like molecular docking or kinetic parameter fitting where identifying the global minimum is paramount [35].

- Use Multi-chain MCMC as a robust, well-understood benchmark. It remains a strong choice for complex, multimodal problems when parallelization is limited and its convergence can be carefully monitored [17].

Future Directions for Benchmarking: The evidence suggests that hybrid approaches may yield the greatest gains. Future benchmarking work should focus on integrated strategies, such as using OMC for rapid identification of promising modes, followed by SMC for full Bayesian uncertainty quantification within and across those modes. Furthermore, as demonstrated in materials science, neither SMC nor OMC alone may find the true global optimum; systematic benchmarking should guide the development of protocols that combine their complementary strengths [38].

In computational biology and drug development, mechanistic mathematical models are essential for predicting system dynamics, from intracellular signaling to whole-organism pharmacokinetics. The parameters of these models are typically unknown and must be estimated from experimental data [39]. Markov Chain Monte Carlo (MCMC) methods have become a cornerstone for this task, providing a framework for Bayesian parameter estimation and a rigorous analysis of parameter uncertainty [17].

However, selecting, tuning, and validating MCMC algorithms for a specific biological problem remains a significant challenge. Performance is highly dependent on problem features such as multi-modal posteriors, parameter correlations, and structural non-identifiabilities [17]. The scarcity of standardized, realistic test problems hinders objective comparison and optimization of these methods. This creates a critical need for synthetic benchmarking frameworks that can generate controlled, reproducible, and biologically plausible test datasets.

Synthetic datasets offer a "sandbox" environment where the ground truth is known. By simulating data from a model with predefined parameters, researchers can objectively assess an algorithm's accuracy, precision, and efficiency in recovering those parameters [40]. This is especially valuable for evaluating performance on challenging features like bifurcations, oscillations, and multi-stability, which are common in biological systems but difficult to rigorously test with often noisy and limited real-world data alone [17].

This guide compares contemporary approaches for creating synthetic benchmarks, details experimental protocols for their use, and provides a toolkit for researchers to implement these strategies in the context of Monte Carlo parameter estimation for drug development and systems biology.

Comparison of Synthetic Dataset Generation Strategies

The design of a synthetic benchmark involves strategic choices that influence the type and difficulty of the resulting parameter estimation challenge. The table below compares two dominant paradigms: one focused on generating synthetic observational data from a known mechanistic model, and another focused on simulating abstract data structures that mimic complex real-world data, such as clinical spectroscopy signals [40] [17].

Table 1: Comparison of Strategies for Generating Synthetic Benchmarking Datasets

| Feature | Mechanistic Model-Based Simulation [17] | Feature-Based Spectral Simulation [40] |
|---|---|---|
| Core Approach | Solves a known ODE model with a true parameter vector (θ*) and initial states to simulate time-course observational data (y). | Generates artificial spectra (e.g., IR/Raman) using probabilistic models (e.g., Lorentzian bands) without reference to specific chemical structures. |
| Key Tunable Parameters | True parameter values (θ*), initial conditions, measurement timepoints, noise model (type & magnitude), observables. | Number, position, and amplitude of discriminant/non-discriminant spectral peaks; type and level of instrumental noise; sample size. |
| Realism & Control | High biological/mechanistic realism; direct control over dynamical features (e.g., bistability, oscillations). | High phenomenological realism for spectral data; precise control over signal-to-noise and feature overlap. |
| Primary Benchmarking Use | Evaluating parameter identifiability, estimation accuracy, and uncertainty quantification for dynamical models. | Benchmarking machine learning classification algorithms and feature selection methods (e.g., oPLS-DA, VIP scores). |
| Typical Validation | Recovery of θ* and prediction intervals by MCMC/optimization algorithms. | Classification performance (sensitivity, specificity) on held-out synthetic data or transfer to real clinical spectra [40]. |
| Advantages | Tests the full inverse problem pipeline; results are directly interpretable for modelers. | Rapid generation of large, complex datasets; ideal for stress-testing data analysis algorithms under controlled conditions. |

Experimental Protocols for Benchmarking Parameter Estimation Methods

To ensure fair and reproducible comparisons between different Monte Carlo sampling algorithms, a standardized experimental protocol is essential. The following methodology, synthesized from comprehensive benchmarking studies, provides a robust framework [17].

Protocol: Benchmarking MCMC Samplers on Dynamical Systems

1. Problem Definition & Synthetic Data Generation:

- Select or develop a mechanistic ordinary differential equation (ODE) model, dx/dt = f(x, t, θ), with defined observables, y = h(x, t, θ) [17].

- Choose a "true" parameter vector θ* and initial conditions x₀.

- Numerically integrate the model to generate noise-free time-course data at specified time points.

- Add independent, normally distributed measurement noise to generate the synthetic dataset D: ỹ(t_k) = y(t_k) + ε_k, where ε_k ~ N(0, σ²) [17].

- This creates a known ground truth (θ*, x₀) against which algorithms are evaluated.
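
The data-generation step can be sketched as follows. This is a minimal example under stated assumptions rather than the setup used in [17]: the two-state first-order absorption model, the values in `theta_true`, the initial state `x0`, the sampling times, and the noise level `sigma` are all hypothetical, and a hand-written fixed-step Runge-Kutta integrator stands in for a production ODE solver.

```python
import math
import random

def rhs(x, t, theta):
    """f(x, t, theta) for a hypothetical two-state absorption model."""
    ka, ke, _volume = theta
    gut, central = x
    return (-ka * gut, ka * gut - ke * central)

def observable(x, t, theta):
    """h(x, t, theta): concentration in the central compartment."""
    return x[1] / theta[2]

def rk4_step(x, t, h, theta):
    """One classical fourth-order Runge-Kutta step of size h."""
    def shifted(k, scale):
        return tuple(xi + scale * ki for xi, ki in zip(x, k))
    k1 = rhs(x, t, theta)
    k2 = rhs(shifted(k1, 0.5 * h), t + 0.5 * h, theta)
    k3 = rhs(shifted(k2, 0.5 * h), t + 0.5 * h, theta)
    k4 = rhs(shifted(k3, h), t + h, theta)
    return tuple(xi + h / 6.0 * (a + 2.0 * b + 2.0 * c + d)
                 for xi, a, b, c, d in zip(x, k1, k2, k3, k4))

def simulate(theta, x0, times, max_step=0.01):
    """Integrate from t = 0 and return noise-free observables at `times`."""
    x, t, y = tuple(x0), 0.0, []
    for t_obs in times:
        n_sub = max(1, math.ceil((t_obs - t) / max_step))
        h = (t_obs - t) / n_sub
        for _ in range(n_sub):
            x = rk4_step(x, t, h, theta)
            t += h
        t = t_obs  # reset to the exact observation time
        y.append(observable(x, t, theta))
    return y

theta_true = (1.2, 0.15, 10.0)   # assumed ground truth: ka, ke, volume
x0 = (100.0, 0.0)                # assumed initial amounts: gut, central
times = [0.5, 1.0, 2.0, 4.0, 8.0, 12.0, 24.0]
sigma = 0.2                      # assumed measurement noise SD

rng = random.Random(42)          # fixed seed so the benchmark is reproducible
y_clean = simulate(theta_true, x0, times)
y_noisy = [y + rng.gauss(0.0, sigma) for y in y_clean]

for t_k, yc, yn in zip(times, y_clean, y_noisy):
    print(f"t = {t_k:5.1f}  y = {yc:7.3f}  y_noisy = {yn:7.3f}")
```

Fixing the random seed and storing `theta_true` alongside the dataset keeps the ground truth recoverable when different samplers are later compared on the same data.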

2. Sampling Algorithm Configuration:

- Define the parameter prior distribution, p(θ), often uniform or weakly informative within biologically plausible bounds.

- Configure a suite of MCMC sampling algorithms for testing. A comprehensive comparison should include:

- Single-chain methods: Adaptive Metropolis (AM), Delayed Rejection Adaptive Metropolis (DRAM).

- Multi-chain methods: Parallel Tempering (PT), Parallel Hierarchical Sampling (PHS).

- Consider initialization schemes: random draws from the prior or from a point found via multi-start local optimization [17].

3. Execution & Computational Setup:

- Run each MCMC algorithm multiple times (e.g., 100 runs) from different initializations to account for variability.

- For multi-chain methods, standardize the number of chains and temperature ladder settings.

- All runs must use an identical computational budget (e.g., total number of model evaluations or iterations).
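
One way to enforce an identical budget is to count model evaluations inside the target function and stop each run when the count reaches the limit. The harness below is a hedged sketch, not the benchmarking code from [17]: the toy log-posterior, the plain random-walk Metropolis sampler, the helper `CountingTarget`, and the values of `BUDGET` and `N_RUNS` (kept small here for speed) are all illustrative assumptions.

```python
import math
import random

class CountingTarget:
    """Wraps a log-density and counts how often it is evaluated."""
    def __init__(self, log_density):
        self.log_density = log_density
        self.n_evals = 0

    def __call__(self, theta):
        self.n_evals += 1
        return self.log_density(theta)

def log_post(theta):
    # Hypothetical toy posterior: uniform prior on [-10, 10] times a
    # Gaussian likelihood centred at 1 (unnormalised).
    if abs(theta) > 10.0:
        return -math.inf
    return -0.5 * (theta - 1.0) ** 2

def metropolis(target, theta0, budget, step, rng):
    """Random-walk Metropolis that stops after `budget` evaluations."""
    theta, lp = theta0, target(theta0)
    chain = [theta]
    while target.n_evals < budget:
        prop = theta + rng.gauss(0.0, step)
        lp_prop = target(prop)
        if rng.random() < math.exp(min(0.0, lp_prop - lp)):
            theta, lp = prop, lp_prop
        chain.append(theta)
    return chain

BUDGET = 5000   # identical evaluation budget for every run
N_RUNS = 10     # the protocol suggests more runs, e.g. 100

results = []
for run in range(N_RUNS):
    rng = random.Random(1000 + run)      # distinct, reproducible seeds
    theta0 = rng.uniform(-10.0, 10.0)    # initialisation drawn from the prior
    target = CountingTarget(log_post)
    chain = metropolis(target, theta0, BUDGET, step=2.4, rng=rng)
    kept = chain[len(chain) // 2:]       # discard the first half as burn-in
    results.append((target.n_evals, sum(kept) / len(kept)))

for run, (n_evals, mean) in enumerate(results):
    print(f"run {run}: evaluations = {n_evals}, posterior mean = {mean:.3f}")
```

Counting evaluations rather than iterations keeps the comparison fair for samplers that call the model more than once per iteration, such as delayed-rejection or multi-chain schemes.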

4. Performance Evaluation & Metrics: