Assessing the Reliability of Reported Enzyme Kinetic Parameters: A Framework for Accurate Research and Drug Development

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on evaluating the reliability of reported enzyme kinetic parameters (e.g., Km, kcat, Vmax). It explores the foundational importance of these parameters in systems modeling and enzyme engineering, reviews methodological approaches from classical assays to modern AI-based prediction tools, addresses common troubleshooting and data optimization challenges, and discusses validation and comparative analysis techniques. The goal is to equip the target audience with a practical framework to critically assess data quality, mitigate errors, and enhance the accuracy of kinetic parameters used in biomedical research, metabolic engineering, and therapeutic development [1] [2] [4].

Understanding Enzyme Kinetic Parameters: Core Concepts and Reliability Challenges

Comparative Analysis of Core Kinetic Parameters

The quantitative characterization of enzyme activity relies on three fundamental parameters: the Michaelis constant (Kₘ), the maximum velocity (Vₘₐₓ), and the turnover number (kcat). Together, they define an enzyme's apparent affinity for its substrate and its catalytic power, providing essential metrics for comparing enzyme performance, engineering biocatalysts, and understanding metabolic regulation [1] [2].

Table 1: Definition, Interpretation, and Comparative Significance of Core Kinetic Parameters

| Parameter | Mathematical & Operational Definition | Biological & Functional Interpretation | Comparative Insight |
|---|---|---|---|
| Kₘ (Michaelis Constant) | The substrate concentration ([S]) at which the reaction velocity (v) is half of Vₘₐₓ [1] [3]. Defined as (k₋₁ + k₂)/k₁, where k₁ and k₋₁ are the rate constants for ES complex formation and dissociation, and k₂ is the catalytic rate constant [1]. | Inverse measure of apparent substrate affinity. A lower Kₘ value indicates that the enzyme requires a lower substrate concentration to reach half-maximal velocity, suggesting tighter binding or more efficient complex formation [2] [3]. It is often assumed, though not universally true, that the substrate with the lowest Kₘ is an enzyme's natural substrate [3]. | Enables direct comparison of an enzyme's apparent affinity for different substrates, or of different enzymes' affinities for the same substrate. Useful for identifying the preferred substrate in a pathway. |
| Vₘₐₓ (Maximum Velocity) | The maximum reaction rate achieved when the enzyme is fully saturated with substrate (i.e., all active sites are occupied) [2]; the plateau of the hyperbolic curve in a Michaelis-Menten plot [2]. | Measure of catalytic capacity. Represents the upper limit of the reaction rate for the amount of enzyme present under a given set of conditions (pH, temperature). Because it is directly proportional to the total concentration of active enzyme, Vₘₐₓ = kcat[Eₜₒₜₐₗ] [1], it is not an intrinsic property of the enzyme. | Used to compare the total throughput of enzymes or enzyme variants under saturating conditions at equal enzyme concentration. A higher Vₘₐₓ indicates a greater product output per unit time when substrate is non-limiting. |
| kcat (Turnover Number) | The number of substrate molecules converted to product per active site per unit time when the enzyme is fully saturated [4]. Calculated as kcat = Vₘₐₓ / [Eₜₒₜₐₗ] [5]. | Intrinsic catalytic rate constant. Reflects the rate-limiting step(s) of the catalytic cycle after the ES complex forms, which may be the chemical step or a later step such as product release. A higher kcat indicates a faster catalytic cycle [4]. | Allows comparison of the inherent catalytic power of enzyme active sites, independent of enzyme concentration. Essential for evaluating the success of enzyme engineering efforts. |
| kcat/Kₘ (Specificity Constant) | The ratio of the turnover number to the Michaelis constant [4]. | Overall measure of catalytic efficiency. Combines affinity (Kₘ) and catalysis (kcat) into a single second-order rate constant that describes the enzyme's performance at low substrate concentrations ([S] ≪ Kₘ), which are often physiologically relevant [4] [2]. A higher kcat/Kₘ indicates a more efficient enzyme [4]. | The most important comparative metric for evaluating an enzyme's effectiveness for a given substrate. It is the preferred parameter for comparing the efficiency of different enzymes or mutant variants, as it reflects performance under non-saturating conditions [4]. |
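Because kcat and kcat/Kₘ are derived quantities, unit handling is a frequent source of error when they are computed from fitted Vₘₐₓ and Kₘ. The sketch below assumes Vₘₐₓ in µM/min, the active-site concentration in µM, and Kₘ in µM; the function names and input values are hypothetical.

```python
# Minimal sketch: derive kcat and kcat/Km from fitted Vmax, Km and the
# active-enzyme concentration. All input values are hypothetical.

def turnover_number(vmax_uM_per_min: float, enzyme_uM: float) -> float:
    """kcat in s^-1 from Vmax (uM/min) and active-site concentration (uM)."""
    if enzyme_uM <= 0:
        raise ValueError("active enzyme concentration must be positive")
    return (vmax_uM_per_min / enzyme_uM) / 60.0  # min^-1 -> s^-1


def specificity_constant(kcat_per_s: float, km_uM: float) -> float:
    """kcat/Km in M^-1 s^-1 (Km converted from uM to M)."""
    return kcat_per_s / (km_uM * 1e-6)


kcat = turnover_number(vmax_uM_per_min=12.0, enzyme_uM=0.05)  # 240 min^-1 = 4 s^-1
eff = specificity_constant(kcat, km_uM=25.0)                  # 4 / 25e-6 M
print(f"kcat = {kcat:.2f} s^-1, kcat/Km = {eff:.2e} M^-1 s^-1")
```

Since [Eₜₒₜₐₗ] sits in the denominator, a 2-fold error in the enzyme concentration produces a 2-fold error in both kcat and kcat/Kₘ, even when the curve fit itself is perfect.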

Biological Significance and Practical Applications

These parameters are not abstract numbers but have direct physiological and industrial implications. For instance, in steroid hormone biosynthesis, human 21-hydroxylase (P450c21) exhibits a lower Kₘ for 17α-hydroxyprogesterone (1.2 µM) than for progesterone (2.8 µM), indicating a higher affinity and likely a role as a preferred physiological substrate, which is critical for understanding congenital adrenal hyperplasia [6]. Similarly, the selenoenzyme deiodinase type 1 (D1) has a Kₘ for thyroxine (T4) that is about 1000-fold higher than that of deiodinase type 2 (D2), explaining why D2 is responsible for intracellular T3 production under normal conditions, while D1 becomes a major source of plasma T3 in thyrotoxicosis [6].

In drug transport, kinetic analysis revealed a Kₘ of 71.5 nM for the efflux of propranolol by P-glycoprotein (P-gp) in conjunctival cells, confirming a high-affinity interaction that significantly restricts drug absorption [6]. These examples underscore that reliability in determining Kₘ, Vₘₐₓ, and kcat is foundational for predicting in vivo enzyme behavior, diagnosing metabolic diseases, and designing drugs or inhibitors.
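To see why Kₘ accuracy matters for in vivo predictions, the Michaelis-Menten equation can propagate a Kₘ error into the predicted fractional rate v/Vₘₐₓ = [S]/(Kₘ + [S]). A minimal sketch; the substrate concentration and Kₘ values are hypothetical and not taken from the studies cited above:

```python
# Sketch: how an error in Km propagates to the predicted fractional
# saturation v/Vmax = [S] / (Km + [S]) at a fixed substrate concentration.
# The substrate concentration and Km values are hypothetical.

def fractional_saturation(s: float, km: float) -> float:
    """Michaelis-Menten v/Vmax for substrate concentration s and Km (same units)."""
    return s / (km + s)


s_cell = 2.0    # hypothetical intracellular [S], uM
km_true = 2.0   # hypothetical "true" Km, uM
true_frac = fractional_saturation(s_cell, km_true)

for km_reported in (0.5, 2.0, 8.0):  # 4-fold under- and over-estimates
    frac = fractional_saturation(s_cell, km_reported)
    print(f"Km = {km_reported:4.1f} uM -> v/Vmax = {frac:.2f} "
          f"({frac / true_frac:.1f}x the true value)")
```

At [S] ≈ Kₘ, a 4-fold error in Kₘ shifts the predicted rate between 0.4x and 1.6x of the true value; well below Kₘ the prediction scales almost inversely with Kₘ, while well above Kₘ it becomes insensitive.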

Methodological Comparison: Experimental vs. Computational Determination

The reliability of kinetic parameters is inextricably linked to the methodology used to derive them. Researchers must choose between traditional experimental characterization and emerging computational prediction, each with distinct workflows, strengths, and sources of error.

Table 2: Comparison of Methodological Pathways for Kinetic Parameter Determination

| Aspect | Traditional Experimental Characterization | Computational Prediction & AI Extraction |
|---|---|---|
| Core Principle | Direct measurement of reaction velocity under controlled in vitro conditions, followed by curve-fitting to the Michaelis-Menten equation [7] [5]. | 1. Prediction: Using machine learning models trained on existing kinetic data to forecast parameters for novel enzyme-substrate pairs [8]. 2. Extraction: Using natural language processing (NLP) to mine published literature for hidden ("dark matter") kinetic data [9]. |
| Primary Workflow | 1. Protein expression & purification [5]. 2. Assay development (e.g., colorimetric, fluoride probe) [7] [5]. 3. Initial rate measurement across a [S] range [7]. 4. Non-linear regression to fit v vs. [S] data [7]. | For Prediction (e.g., DLERKm model): Encode enzyme sequence, substrate/product SMILES strings, and reaction fingerprints → process through deep neural network → predict Kₘ value [8]. For Extraction (e.g., EnzyExtract): Process full-text publications with OCR & NLP → identify and validate kinetic parameters → map data to structured databases [9]. |
| Key Advantages | • Provides direct, empirical evidence. • Can control for specific conditions (pH, temperature, cofactors). • Yields a full kinetic profile (curve). • Considered the "gold standard" for validation. | Prediction: Extremely fast, low-cost, scales to thousands of predictions, guides experimental design [8]. Extraction: Unlocks vast amounts of legacy data from literature, creates large-scale, structured datasets for model training [9]. |
| Key Limitations & Reliability Concerns | • Time-consuming and resource-intensive [8]. • Assay artifacts (e.g., non-linear product detection, enzyme instability) [5]. • Errors in enzyme concentration determination propagate to kcat. • Results are condition-specific and may not translate to in vivo. | Prediction: Model accuracy depends on training data quality and diversity; poor generalizability to novel enzyme classes [8]. Extraction: Susceptible to OCR errors, misinterpretation of context (e.g., units, conditions), and incomplete reporting in source literature [9]. |
| Ideal Use Case | Definitive characterization of a specific enzyme under relevant conditions; validation of engineered enzyme variants; rigorous mechanistic studies [7] [5]. | High-throughput screening of enzyme libraries in silico; meta-analysis of kinetic trends across enzyme families; filling knowledge gaps where experimentation is impractical [9] [8]. |

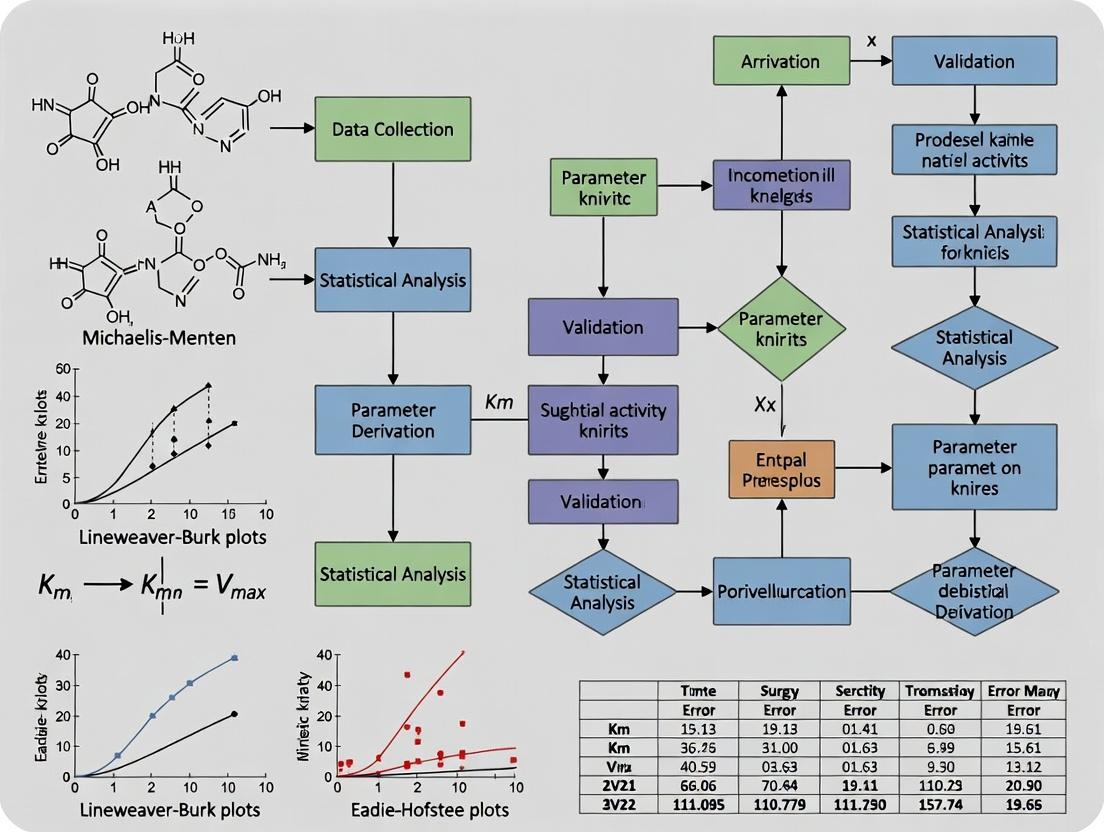

Figure 1: Comparative Workflows for Deriving Enzyme Kinetic Parameters. The traditional experimental pathway (top) is empirical and condition-specific, while the computational pathway (bottom) leverages data-driven models for prediction or literature mining for extraction [7] [9] [8].

Critical Experimental Protocols for Reliable Data

The reliability of experimentally determined parameters hinges on meticulous protocol design. A standard Michaelis-Menten kinetics experiment involves several critical stages [7] [5]:

- Enzyme Preparation: Obtain purified enzyme at a known, accurately determined concentration of active enzyme. Impurities or an inaccurate concentration measurement directly invalidate the kcat calculation.

- Assay Development: Choose a detection method that is specific for the product and whose signal is linear in product concentration over the working range. For example, a colorimetric assay using 4-nitrophenol (pNP) release monitored at 405 nm is common for glycosidases [7]. Orthogonal validation with a direct method, such as a fluoride ion-selective electrode for dehalogenases, is recommended [5].

- Determining the Linear Range: Conduct preliminary tests to identify the time window where product formation is linear with time and the enzyme concentration range where initial velocity (v₀) is proportional to [E]. This ensures measurements reflect true initial rates [5].

- Data Collection: Measure v₀ at 6-8 or more substrate concentrations spanning values below and above the expected Kₘ. Perform each condition in replicate (e.g., triplicate) [7].

- Data Fitting and Analysis: Plot v₀ versus [S] and fit the data by non-linear regression to the Michaelis-Menten equation to obtain Kₘ and Vₘₐₓ directly. Avoid using linearized plots (e.g., Lineweaver-Burk) for parameter estimation, as they distort the error distribution [7]. Calculate kcat = Vₘₐₓ / [Eₜₒₜₐₗ].
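The fitting step can be sketched with SciPy's `curve_fit`, which performs non-linear least squares directly on v₀ vs. [S]. This is a minimal example on simulated data (true Vₘₐₓ = 12 µM/min, Kₘ = 25 µM, 5% noise, and an enzyme concentration of 0.05 µM are all assumptions), not a prescribed analysis pipeline:

```python
import numpy as np
from scipy.optimize import curve_fit


def michaelis_menten(s, vmax, km):
    """Initial velocity v0 as a function of substrate concentration s."""
    return vmax * s / (km + s)


# Hypothetical triplicate initial rates (uM/min) at eight substrate
# concentrations (uM) spanning roughly 0.1x to 13x the expected Km.
s = np.repeat([2.0, 5.0, 10.0, 20.0, 40.0, 80.0, 160.0, 320.0], 3)
rng = np.random.default_rng(0)
v0 = michaelis_menten(s, 12.0, 25.0) * (1 + 0.05 * rng.standard_normal(s.size))

# Non-linear least squares on the untransformed data. p0 gives rough starting
# guesses (max observed rate, median [S]); bounds keep both parameters positive.
popt, pcov = curve_fit(
    michaelis_menten, s, v0,
    p0=[v0.max(), np.median(s)],
    bounds=(0, np.inf),
)
vmax_fit, km_fit = popt
vmax_se, km_se = np.sqrt(np.diag(pcov))  # asymptotic standard errors

enzyme_uM = 0.05  # hypothetical active-site concentration
kcat_per_min = vmax_fit / enzyme_uM
print(f"Vmax = {vmax_fit:.2f} +/- {vmax_se:.2f} uM/min")
print(f"Km   = {km_fit:.1f} +/- {km_se:.1f} uM")
print(f"kcat = {kcat_per_min:.0f} min^-1")
```

Reporting the standard errors alongside Kₘ and Vₘₐₓ, together with the enzyme concentration used for kcat, makes the values checkable. Note that the covariance matrix does not capture any error in the enzyme concentration itself, which kcat inherits directly.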

A critical reliability pitfall is attempting to determine Kₘ from measurements at a single substrate concentration, such as a single progress curve (product vs. time). As illustrated in educational resources, while Vₘₐₓ can sometimes be approximated from the rate at a single saturating substrate concentration, Kₘ cannot be determined without data from multiple substrate concentrations [10].
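Because the Michaelis-Menten equation has two unknowns, a single rate at one substrate concentration is consistent with infinitely many (Kₘ, Vₘₐₓ) pairs. The sketch below, using hypothetical values, makes this non-identifiability explicit:

```python
# Sketch: a single rate measured at one substrate concentration cannot
# separate Km from Vmax. Every (Km, Vmax) pair below reproduces the same
# observed rate exactly. The observed values are hypothetical.

s_obs = 10.0   # uM, the only substrate concentration tested
v_obs = 3.0    # uM/min, the rate measured there

for km in (1.0, 10.0, 100.0):
    # Solve v = Vmax * S / (Km + S) for Vmax given this candidate Km.
    vmax = v_obs * (km + s_obs) / s_obs
    v_pred = vmax * s_obs / (km + s_obs)
    print(f"Km = {km:6.1f} uM, Vmax = {vmax:6.2f} uM/min -> v = {v_pred:.2f}")
```

Only additional substrate concentrations, spread below and above Kₘ, break this degeneracy.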

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Enzyme Kinetic Assays

| Reagent/Material | Typical Source/Example | Primary Function in Kinetic Assays |
|---|---|---|
| Purified Enzyme | Heterologous expression (e.g., E. coli) and purification via affinity chromatography [5]. | The catalyst of interest. Must be purified to homogeneity, and its concentration must be accurately determined (via A₂₈₀ or assay) for kcat calculation. |
| Synthetic Substrate | Commercial suppliers (e.g., Sigma-Aldrich, Fisher Scientific) [7]. Often coupled to a chromophore such as p-nitrophenol (pNP). | The molecule upon which the enzyme acts. pNP-coupled substrates allow direct spectrophotometric detection of product formation [7]. |
| Specialized Assay Buffer | e.g., 10X GlycoBuffer (500 mM sodium acetate, 50 mM CaCl₂, pH 5.5) [7] or Tris buffer [5]. | Maintains optimal pH and ionic strength for enzyme activity. May contain essential cofactors (e.g., Ca²⁺) or stabilizers such as BSA [7]. |
| Detection Reagent/Probe | • Chromogenic: p-nitrophenol (detected at 405 nm) [7]. • Potentiometric: fluoride ion-selective electrode/probe [5]. • pH indicator: phenol red for proton-release assays [5]. | Enables quantitative measurement of product formation or substrate depletion over time. The choice dictates assay sensitivity and specificity. |
| Standard for Calibration | e.g., pNP standard for colorimetric assays; fluoride ion standards for ISE calibration [7] [5]. | Essential for converting raw signal (absorbance, voltage) into molar product concentration via a standard curve. |
| High-Throughput Platform | 96-well or 384-well microplate reader [7]. | Allows simultaneous kinetic measurement of many reactions (different [S], replicates, controls), improving throughput and data consistency. |
| Data Analysis Software | GraphPad Prism, SigmaPlot, or custom scripts (Python/R) [5]. | Performs non-linear regression fitting of v₀ vs. [S] data to the Michaelis-Menten equation, generating Kₘ, Vₘₐₓ, and associated confidence intervals. |
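The standard-curve conversion listed under "Standard for Calibration" can be sketched with a linear fit in NumPy. The absorbance values are hypothetical, and the sketch assumes the signal is linear in pNP concentration over the standard range, so readings outside that range are rejected rather than extrapolated:

```python
import numpy as np

# Hypothetical pNP standard series: known concentrations (uM) and the
# blank-corrected absorbance at 405 nm read in the same plate and buffer.
std_conc = np.array([0.0, 10.0, 20.0, 40.0, 80.0])
std_a405 = np.array([0.002, 0.091, 0.183, 0.362, 0.728])

# Ordinary least-squares line: A405 = slope * concentration + intercept.
slope, intercept = np.polyfit(std_conc, std_a405, deg=1)


def absorbance_to_uM(a405):
    """Convert sample absorbance to product concentration via the standard curve."""
    a = np.asarray(a405, dtype=float)
    if np.any(a > std_a405.max()) or np.any(a < std_a405.min()):
        raise ValueError("reading outside the calibrated range; dilute or extend standards")
    return (a - intercept) / slope


samples = absorbance_to_uM([0.050, 0.250, 0.500])
print(np.round(samples, 1))
```

Running the standards in the same buffer and plate as the reactions matters here, because pNP absorbance at 405 nm depends on the assay conditions.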

Reliability Assessment in a Modern Data Landscape

Reliability assessment must now contend with two parallel data streams: empirical results and computational predictions. Well-controlled experimentation remains the gold standard, but its scope is limited. The emerging paradigm is a synergistic loop:

- Computational models (like DLERKm for Kₘ prediction [8]) are trained on curated high-quality experimental data from databases like BRENDA and SABIO-RK.

- Literature mining tools (like EnzyExtract [9]) expand these training sets by automatically extracting the "dark matter" of enzymology: kinetic parameters reported in published papers but absent from structured databases (over 200,000 entries in the case of EnzyExtract, roughly 90,000 of them new relative to BRENDA [9]).

- Improved models then make more reliable predictions to guide targeted experiments.

- New experimental results feed back into databases, closing the loop.

The major reliability challenge for computational data is traceability and context. An AI-predicted Kₘ value is useless without an estimate of confidence, and a literature-mined value is unreliable if the original experimental conditions (pH, temperature) are not captured [9]. Therefore, the future of reliable kinetic parameter research lies in standardized reporting (e.g., using EnzymeML), robust model benchmarking, and the integration of both empirical and computational evidence to build a more complete and trustworthy understanding of enzyme function.

The quantitative parameters describing enzyme catalysis—the turnover number (kcat), the Michaelis constant (Km), and the catalytic efficiency (kcat/Km)—form the foundational language of biochemistry. Their reliability is not merely an academic concern but a pivotal determinant of success across biotechnology. In metabolic modeling, inaccurate kinetic parameters compromise the predictive power of genome-scale models, leading to erroneous flux predictions and failed strain-engineering strategies [11] [12]. For drug discovery, unreliable parameters for targets or off-target enzymes can mislead the assessment of compound potency and specificity, wasting resources and increasing developmental risks [13]. In enzyme engineering, the iterative cycle of design, prediction, and testing hinges on the accuracy of baseline kinetic data and the models built upon them; unreliable data leads to plateaus in performance and inefficient campaigns [14] [15].

A core thesis emerging from contemporary research is that reliability is a multifaceted challenge. It encompasses the accuracy and generalizability of predictive computational models, the completeness and veracity of foundational datasets, and the context-specific application of parameters within systems-level frameworks [14] [9] [16]. This guide provides a comparative analysis of modern solutions addressing these reliability challenges, detailing their methodologies, performance, and practical applications.

Comparison of Modern Reliability Assessment and Enhancement Approaches

The following table compares three recent approaches that target different facets of the reliability problem: a deep learning model for accurate parameter prediction, a large-language-model pipeline for expanding and curating reliable data, and a computational framework for reliable metabolic state comparison.

Table 4: Comparison of Modern Approaches for Enhancing Reliability in Enzyme Kinetics and Metabolic Analysis

| Approach / Tool | Core Purpose & Design | Key Inputs | Validation & Performance | Primary Advantages | Key Limitations |
|---|---|---|---|---|---|
| CataPro (Deep Learning Model) [14] | Predicts kcat, Km, and kcat/Km with enhanced accuracy and generalization. Uses ProtT5 protein embeddings and molecular fingerprints. | Enzyme amino acid sequence; substrate structure (SMILES). | Unbiased 10-fold cross-validation (clustered by sequence similarity). Outperformed baseline models (DLKcat, TurNuP). Experimental validation: identified the SsCSO enzyme (19.53-fold activity increase). | Mitigates data leakage and overfitting. Demonstrated utility in real-world enzyme discovery and engineering. Integrates state-of-the-art protein language models. | Performance limited by coverage of training data. May struggle with entirely novel enzyme folds or substrate classes. |
| EnzyExtract (LLM Data Pipeline) [9] | Automates extraction and structuring of enzyme kinetic data from literature to illuminate "dark data." Uses fine-tuned GPT-4o-mini and OCR. | Full-text scientific publications (PDF/XML). | Extracted 218,095 entries from 137,892 papers; 89,544 entries were new relative to BRENDA. Retraining existing kcat predictors (e.g., DLKcat) with its database (EnzyExtractDB) improved model performance (RMSE, MAE, R²). | Dramatically expands available, structured data. High accuracy benchmarked against manual curation. Directly enhances predictive models by providing more training data. | Confidence levels of entries vary (High/Medium/Low). Requires sequence and substrate mapping in post-processing. |
| ComMet (Metabolic State Comparison) [16] | Compares metabolic states/phenotypes in large genome-scale models (GEMs) without assuming an objective function. Uses flux space sampling and PCA. | Genome-scale metabolic model (GEM); condition-specific constraints (e.g., uptake rates). | Applied to a human adipocyte model to distinguish metabolic states with/without branched-chain amino acid uptake. Identified differentially active modules (e.g., TCA cycle). | Objective-function independent, crucial for complex human cell analysis. Identifies functional metabolic differences, not just flux values. Scalable to large models. | Computationally intensive for very high-dimensional sampling. Interpretation of PCA-based modules requires biochemical expertise. |

Detailed Experimental Protocols for Key Studies

This protocol outlines the creation of an unbiased benchmark and a robust deep learning model for kinetic parameter prediction.

Unbiased Dataset Construction:

- Data Collection: Compile initial datasets for kcat and Km by aggregating entries from the BRENDA and SABIO-RK databases.

- Data Curation: Extract entries where both kcat and Km are available to create a kcat/Km dataset. Map all entries to standardized enzyme sequences (UniProt) and substrate structures (PubChem CID, canonical SMILES).

- Sequence Clustering: Use CD-HIT to cluster enzyme sequences within each dataset at a 40% sequence identity threshold. Because folds are later assigned by whole clusters, this keeps close homologs from appearing in both training and test sets.

- Dataset Partitioning: Divide the resulting sequence clusters into ten distinct folds to create a ten-fold cross-validation dataset where each fold represents phylogenetically distinct enzyme groups.
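The key property of the partitioning step is that whole clusters, not individual sequences, are assigned to folds. A minimal sketch, assuming cluster labels have already been parsed from CD-HIT output (parsing is not shown); the greedy size-balancing rule is an illustrative choice, not necessarily the one used for CataPro:

```python
from collections import Counter


def assign_cluster_folds(cluster_ids, n_folds=10):
    """Assign whole sequence clusters to folds so that no cluster spans two folds.

    cluster_ids: one cluster label per enzyme entry. Largest clusters are
    placed first, each into the currently smallest fold, to keep fold sizes
    balanced. Returns the fold index of every entry, in input order.
    """
    sizes = Counter(cluster_ids)
    fold_sizes = [0] * n_folds
    cluster_to_fold = {}
    for cluster, size in sorted(sizes.items(), key=lambda kv: (-kv[1], str(kv[0]))):
        fold = min(range(n_folds), key=lambda f: fold_sizes[f])
        cluster_to_fold[cluster] = fold
        fold_sizes[fold] += size
    return [cluster_to_fold[c] for c in cluster_ids]


# Hypothetical example: 8 entries in 5 clusters, split into 3 folds.
clusters = ["c1", "c1", "c1", "c2", "c2", "c3", "c4", "c5"]
folds = assign_cluster_folds(clusters, n_folds=3)
print(folds)
```

Splitting entries at random instead would let near-identical sequences land on both sides of a split and inflate apparent accuracy.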

Model Architecture (CataPro):

- Enzyme Representation: Encode enzyme amino acid sequences into a 1024-dimensional feature vector using the pre-trained protein language model ProtT5-XL-UniRef50.

- Substrate Representation: Encode substrate SMILES strings using a combined feature vector of MolT5 embeddings (768-dim) and MACCS keys fingerprints (167-dim).

- Network Design: Concatenate the enzyme and substrate vectors. Process through a multi-layer neural network consisting of:

  - Three fully connected (dense) layers with ReLU activation and dropout for regularization.

  - A final linear output layer to predict the log10-transformed value of the target kinetic parameter (kcat, Km, or kcat/Km).

- Training: Train the model using the Adam optimizer and Mean Squared Error (MSE) loss function on the prepared unbiased folds.
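The architecture described above can be sketched as a NumPy forward pass to make the tensor shapes concrete: 1024 + 768 + 167 = 1959 input features, three ReLU dense layers with dropout, and one linear output in log10 units. The hidden-layer widths, the initialisation, and the random input features are hypothetical; Adam training would normally be done in a deep learning framework and is not shown:

```python
import numpy as np

rng = np.random.default_rng(0)

ENZ_DIM, MOLT5_DIM, MACCS_DIM = 1024, 768, 167  # feature sizes from the protocol
HIDDEN = (512, 256, 64)                         # hypothetical layer widths


def init_layer(n_in, n_out):
    """He-style random initialisation for one dense layer (weights, biases)."""
    return rng.normal(0.0, np.sqrt(2.0 / n_in), (n_in, n_out)), np.zeros(n_out)


dims = (ENZ_DIM + MOLT5_DIM + MACCS_DIM, *HIDDEN, 1)
layers = [init_layer(a, b) for a, b in zip(dims[:-1], dims[1:])]


def forward(enz, molt5, maccs, dropout=0.0, train=False):
    """Concatenate features, apply three ReLU dense layers, then a linear output."""
    x = np.concatenate([enz, molt5, maccs], axis=1)
    for w, b in layers[:-1]:
        x = np.maximum(x @ w + b, 0.0)                # ReLU
        if train and dropout > 0:
            mask = rng.random(x.shape) >= dropout     # inverted dropout
            x = x * mask / (1.0 - dropout)
    w, b = layers[-1]
    return (x @ w + b).ravel()                        # predicted log10(parameter)


def mse(pred_log10, target_log10):
    """Mean squared error in log10 space, the training loss named above."""
    return float(np.mean((pred_log10 - target_log10) ** 2))


# Hypothetical batch of 4 enzyme-substrate pairs with random features.
batch = 4
pred = forward(rng.normal(size=(batch, ENZ_DIM)),
               rng.normal(size=(batch, MOLT5_DIM)),
               rng.integers(0, 2, size=(batch, MACCS_DIM)).astype(float))
print(pred.shape, mse(pred, np.log10([1.0, 10.0, 50.0, 200.0])))
```

Predicting log10 values keeps parameters that span many orders of magnitude on a comparable scale, so the MSE loss is not dominated by the largest values.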

Experimental Validation (Case Study):

- Enzyme Mining: Use CataPro to screen for potential enzymes catalyzing the conversion of 4-vinylguaiacol to vanillin. Select a high-scoring candidate (SsCSO from Sphingobium sp.).

- Wet-Lab Assay: Express, purify, and kinetically characterize the candidate enzyme. Compare its activity (e.g., specific activity or kcat/Km) to a known reference enzyme (CSO2).

- Iterative Engineering: Use CataPro to predict the effects of point mutations on SsCSO activity. Design, construct, and test mutant libraries. A top mutant demonstrated a 3.34-fold increase in activity over the wild-type SsCSO.

This protocol describes an automated pipeline for mining published literature to build a comprehensive kinetic database.

Literature Acquisition and Parsing:

- Assemble a corpus of full-text scientific publications (PDF and XML) using APIs from publishers (Elsevier, Wiley) and open-access repositories (OpenAlex). Search using keywords related to enzyme kinetics (e.g., "kcat," "Michaelis-Menten").

- Parse XML files using BeautifulSoup. For PDFs, use PyMuPDF for text extraction and a fine-tuned ResNet-18 model for specialized unit recognition in figures/tables.

- Process tables within PDFs using the TableTransformer deep learning model to correctly identify and structure tabular data before converting to Markdown.

LLM-Powered Information Extraction:

- Segment processed text into relevant passages (e.g., methods, results sections).

- Use a fine-tuned GPT-4o-mini large language model (LLM) to extract entities and relationships from each passage. The LLM is prompted to identify and link:

  - Enzyme: Name, organism, source, mutations.

  - Substrate: Name, concentration.

  - Kinetic Parameters: kcat and Km values with units.

  - Assay Conditions: pH, temperature, buffer.

- The LLM outputs structured JSON records for each identified data point.
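Misread units are a major source of extraction error, so a post-processing step that parses each JSON record and converts values to canonical units is a natural safeguard. A minimal sketch; the record layout, field names, and accepted unit strings are hypothetical and are not EnzyExtract's actual schema:

```python
import json

# Conversion factors to canonical units (s^-1 for kcat, mM for Km).
# Field names and unit strings are hypothetical, not EnzyExtract's schema.
KCAT_TO_PER_S = {"s^-1": 1.0, "min^-1": 1.0 / 60.0, "h^-1": 1.0 / 3600.0}
KM_TO_MM = {"M": 1e3, "mM": 1.0, "uM": 1e-3, "nM": 1e-6}


def normalize_record(raw: str) -> dict:
    """Parse one extracted JSON record and convert kcat/Km to canonical units.

    Raises KeyError for unrecognised units instead of guessing, so that
    ambiguous entries can be routed to manual review.
    """
    rec = json.loads(raw)
    out = {"enzyme": rec["enzyme"]["name"], "organism": rec["enzyme"].get("organism")}
    kin = rec["kinetics"]
    if "kcat" in kin:
        out["kcat_per_s"] = kin["kcat"]["value"] * KCAT_TO_PER_S[kin["kcat"]["unit"]]
    if "km" in kin:
        out["km_mM"] = kin["km"]["value"] * KM_TO_MM[kin["km"]["unit"]]
    conditions = rec.get("conditions", {})
    out["pH"] = conditions.get("pH")
    out["temperature_C"] = conditions.get("temperature_C")
    return out


example = """{"enzyme": {"name": "example hydrolase", "organism": "E. coli"},
  "kinetics": {"kcat": {"value": 120, "unit": "min^-1"},
               "km": {"value": 250, "unit": "uM"}},
  "conditions": {"pH": 7.5, "temperature_C": 30}}"""
print(normalize_record(example))
```

Rejecting unknown units rather than guessing sends ambiguous entries to review instead of silently storing values that are off by orders of magnitude.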

Data Curation and Database Construction:

- Entity Mapping: Map extracted enzyme names to standardized UniProt IDs and protein sequences. Map substrate names to PubChem CIDs and SMILES.

- Confidence Scoring: Assign confidence levels (High/Medium/Low) to each entry based on the clarity of extraction and success of entity mapping.

- Database Creation: Compile high-confidence, sequence-mapped entries into a structured SQL database (EnzyExtractDB). The published database contains 92,286 high-confidence entries, significantly expanding upon manually curated resources.
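Confidence scoring and storage can be sketched with Python's built-in `sqlite3` module. The scoring rule, table layout, and placeholder identifiers below are hypothetical illustrations, not the criteria or schema used to build EnzyExtractDB:

```python
import sqlite3


def confidence(entry: dict) -> str:
    """Illustrative confidence rule (hypothetical, not the published criteria).

    High:   numeric value with a unit, enzyme and substrate both mapped.
    Medium: numeric value with a unit, only one of the two mappings succeeded.
    Low:    anything else.
    """
    has_value = entry.get("value") is not None and bool(entry.get("unit"))
    mapped = sum(bool(entry.get(k)) for k in ("uniprot_id", "pubchem_cid"))
    if has_value and mapped == 2:
        return "High"
    if has_value and mapped == 1:
        return "Medium"
    return "Low"


entries = [  # hypothetical extracted entries; identifiers are placeholders
    {"uniprot_id": "ACC_PLACEHOLDER_1", "pubchem_cid": "CID_PLACEHOLDER_1",
     "param": "km", "value": 0.25, "unit": "mM"},
    {"uniprot_id": None, "pubchem_cid": "CID_PLACEHOLDER_2",
     "param": "kcat", "value": 2.0, "unit": "s^-1"},
    {"uniprot_id": None, "pubchem_cid": None, "param": "km", "value": None, "unit": ""},
]

con = sqlite3.connect(":memory:")
con.execute("""CREATE TABLE kinetics (
    uniprot_id TEXT, pubchem_cid TEXT, param TEXT,
    value REAL, unit TEXT, confidence TEXT)""")
con.executemany(
    "INSERT INTO kinetics VALUES (?, ?, ?, ?, ?, ?)",
    [(e["uniprot_id"], e["pubchem_cid"], e["param"], e["value"], e["unit"], confidence(e))
     for e in entries],
)
high = con.execute(
    "SELECT param, value, unit FROM kinetics WHERE confidence = 'High'"
).fetchall()
print(high)
```

Storing every entry with its confidence label, rather than discarding lower-confidence rows, lets downstream users choose their own trade-off between coverage and reliability.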

This protocol details a hybrid computational method for identifying gene knockouts to optimize metabolite production.

Metabolic Model and Algorithm Setup:

- Obtain a genome-scale metabolic model (GEM) for the target organism (e.g., E. coli) in a stoichiometric matrix (S) format.

- Formulate the optimization problem: Identify a set of reaction (gene) knockouts that maximize the production flux of a target metabolite (e.g., succinate) while maintaining a minimum biomass flux.

- Hybridize a metaheuristic algorithm (Particle Swarm Optimization - PSO) with the metabolic modeling algorithm Minimization of Metabolic Adjustment (MOMA). MOMA predicts the sub-optimal flux distribution in the knockout mutant by minimizing the Euclidean distance from the wild-type flux distribution.
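The MOMA step can be illustrated on a toy network. Full MOMA is a quadratic program with flux bounds; the sketch below assumes a simplified version with equality constraints only (steady state plus knockouts), in which case the flux vector closest to the wild type is an orthogonal projection computed with NumPy's pseudo-inverse. The five-reaction network and wild-type fluxes are hypothetical:

```python
import numpy as np

# Toy network (hypothetical): R1 uptake -> A; R2 A -> B; R3 A -> C;
# R4 B -> product (export); R5 C -> biomass. Rows are metabolites A, B, C.
S = np.array([
    [1, -1, -1,  0,  0],
    [0,  1,  0, -1,  0],
    [0,  0,  1,  0, -1],
], dtype=float)
w = np.array([10.0, 4.0, 6.0, 4.0, 6.0])  # wild-type fluxes; S @ w = 0


def moma_equality_only(S, w, knockouts):
    """Flux vector closest to w (Euclidean) with S v = 0 and v_k = 0 for knockouts.

    Simplification: flux bounds (irreversibility, uptake limits) are ignored,
    so the quadratic program reduces to an orthogonal projection of w onto the
    null space of the stacked constraint matrix. Full MOMA needs a QP solver.
    """
    K = np.zeros((len(knockouts), S.shape[1]))
    K[np.arange(len(knockouts)), knockouts] = 1.0
    A = np.vstack([S, K])
    return w - np.linalg.pinv(A) @ (A @ w)


v = moma_equality_only(S, w, knockouts=[2])  # knock out R3 (index 2)
print(np.round(v, 6))
```

Knocking out R3 reroutes all flux through R4 (v = [6, 6, 0, 6, 0]) but also drives the biomass reaction R5 to zero, which illustrates why the optimization formulated above requires a minimum biomass flux.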

PSOMOMA Iterative Optimization:

- Initialization: Generate a swarm of particles, where each particle's position vector represents a candidate set of reaction knockouts (e.g., a binary vector indicating whether each reaction is knocked out).

- Fitness Evaluation: For each particle (knockout set):

  - Apply the knockouts to the model by constraining the corresponding reaction fluxes to zero.

  - Use MOMA (formulated as a quadratic program) to calculate the steady-state flux distribution of the mutant.

  - The fitness score is the calculated production flux of the target metabolite from the MOMA solution.

- Swarm Update: Update each particle's velocity and position based on its own best-known solution (personal best) and the swarm's best-known solution (global best). This stochastically explores the combinatorial knockout space.

- Termination: Repeat the fitness evaluation and swarm update for a predefined number of iterations or until convergence. The global best position indicates the predicted optimal knockout strategy.
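The swarm loop can be sketched with the sigmoid-based binary PSO variant. This is a sketch under assumptions: the fitness function below is a hypothetical placeholder for "apply knockouts, solve MOMA, return target flux", and the inertia and acceleration coefficients are common textbook defaults rather than values from the study:

```python
import numpy as np


def binary_pso(fitness, n_reactions, n_particles=20, n_iter=50,
               w=0.7, c1=1.5, c2=1.5, v_max=4.0, seed=0):
    """Binary PSO (sigmoid variant) maximising fitness(x).

    Each particle position x is a 0/1 vector: 1 = reaction knocked out.
    Returns the global best position and its fitness.
    """
    rng = np.random.default_rng(seed)
    x = rng.integers(0, 2, size=(n_particles, n_reactions))
    vel = rng.uniform(-1, 1, size=(n_particles, n_reactions))
    pbest = x.copy()
    pbest_fit = np.array([fitness(p) for p in x], dtype=float)
    gbest = pbest[pbest_fit.argmax()].copy()
    for _ in range(n_iter):
        r1, r2 = rng.random(vel.shape), rng.random(vel.shape)
        # Pull each particle toward its personal best and the global best.
        vel = w * vel + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        vel = np.clip(vel, -v_max, v_max)
        # Sigmoid of the velocity = probability that each bit is set to 1.
        x = (rng.random(vel.shape) < 1.0 / (1.0 + np.exp(-vel))).astype(int)
        fit = np.array([fitness(p) for p in x], dtype=float)
        improved = fit > pbest_fit
        pbest[improved], pbest_fit[improved] = x[improved], fit[improved]
        gbest = pbest[pbest_fit.argmax()].copy()
    return gbest, pbest_fit.max()


def toy_fitness(knockouts):
    """Hypothetical stand-in for MOMA: reaction 0 is essential, reactions
    1 and 4 help production, every other knockout costs a little."""
    if knockouts[0]:
        return 0.0
    others = knockouts.sum() - knockouts[1] - knockouts[4]
    return 1.0 + 2.0 * knockouts[1] + 1.5 * knockouts[4] - 0.5 * others


best, best_fit = binary_pso(toy_fitness, n_reactions=8)
print("knock out reactions:", np.flatnonzero(best), "fitness:", best_fit)
```

Because each fitness call in the real workflow solves a MOMA problem, the swarm size and iteration count largely determine the computational cost.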

Validation: The in silico predicted optimal knockout strain is constructed in vivo using genetic engineering techniques (e.g., CRISPR). The mutant strain is cultured under defined conditions, and the actual production yield of the target metabolite is measured via analytics (e.g., HPLC) and compared to the model prediction.

Visualizations of Workflows and Model Architectures

Figure: The Pathway from Data to Reliable Application.

The Scientist's Toolkit: Key Research Resources

Table 5: Essential Resources for Reliable Enzyme Kinetics and Metabolic Analysis Research

| Resource Name | Type | Primary Function & Application | Key Benefit for Reliability |
|---|---|---|---|
| CataPro [14] | Deep Learning Model | Predicts enzyme kinetic parameters (kcat, Km, kcat/Km) from sequence and substrate structure. Used for virtual enzyme screening and guiding engineering. | Built and tested on unbiased datasets to limit data leakage and overfitting, enhancing generalizability and trust in predictions. |
| EnzyExtractDB [9] | Curated Kinetic Database | A large-scale database of enzyme kinetic data extracted from literature. Used as training data for models or as a reference for experimentalists. | Illuminates "dark data," expanding the coverage and diversity of available kinetic measurements. |
| ComMet [16] | Computational Framework | Compares metabolic states (e.g., healthy vs. disease) using genome-scale models without a predefined objective function. | Removes a major assumption (the objective function) from metabolic analysis, leading to more biologically plausible and reliable comparisons. |
| BRENDA / SABIO-RK [14] [9] | Curated Databases | Widely used reference repositories for enzyme functional data, including kinetic parameters. | Provide essential, high-quality ground-truth data for validation, model training, and experimental design. |
| PSOMOMA / OptKnock [11] | Metabolic Optimization Algorithm | Identifies genetic interventions (e.g., knockouts) to optimize metabolite production in silico. | Uses stoichiometric constraints to prioritize knockout strategies before costly strain construction; predictions still require experimental validation. |
| ProtT5 / ESM [14] | Protein Language Model | Converts amino acid sequences into informative numerical feature vectors (embeddings). | Provides a robust, general-purpose representation of enzyme sequences that captures evolutionary and functional information, improving model input reliability. |
| Directed Evolution Platforms (e.g., CodeEvolver) [15] [17] | Experimental Workflow | High-throughput systems for generating and screening mutant enzyme libraries. | Generate large, high-quality datasets linking sequence to function, which are critical for training and validating the next generation of predictive models. |

The accurate reporting and curation of enzyme kinetic parameters—such as the Michaelis constant (Km), turnover number (kcat), and catalytic efficiency (kcat/Km)—form the empirical foundation for understanding biological systems [18]. These parameters are essential for deterministic systems modeling, drug discovery, metabolic engineering, and biocatalyst design. However, the reliability of these parameters in published literature and databases is often compromised by incomplete reporting of experimental conditions, inconsistent methodologies, and a lack of standardized data formats [18] [19]. This comparison guide objectively evaluates four primary sources of enzyme kinetic data—primary literature, the BRENDA database, the SABIO-RK database, and the STRENDA Initiative—within the critical context of reliability assessment for research and industrial applications.

The landscape of enzyme kinetic data sources varies significantly in curation method, data comprehensiveness, and intrinsic reliability. The following table provides a structured, high-level comparison of the four primary sources.

Table 6: Core Characteristics of Primary Enzyme Kinetic Data Sources

| Feature | Primary Literature | BRENDA (BRaunschweig ENzyme DAtabase) | SABIO-RK (System for the Analysis of Biochemical Pathways - Reaction Kinetics) | STRENDA (STandards for Reporting ENzymology DAta) DB |
|---|---|---|---|---|
| Primary Data Source | Direct publication of original research. | Manual curation of the literature, supplemented by automated text mining (e.g., the KENDA pipeline) [20]. | Manual extraction and curation from literature [21]. | Direct submission by researchers during manuscript preparation [19]. |
| Core Focus | Novel findings, specific enzymes, or methodologies. | Comprehensive enzyme information, including kinetic parameters, nomenclature, and functional data [18]. | Biochemical reactions and their kinetic properties, with an emphasis on supporting computational modeling [21] [22]. | Standardized reporting and validation of enzyme kinetics data to ensure completeness and reproducibility [19]. |
| Key Strength | Source of new, original data. | Extensive coverage of enzymes and parameters from a vast body of literature [18] [20]. | High data quality and rich context, including kinetic rate laws, formulas, and detailed experimental conditions [21]. | Promotes data reliability and completeness by enforcing reporting guidelines before publication [18] [19]. |
| Inherent Reliability Challenge | Highly variable; often omits essential metadata (pH, temperature, buffer) needed for reproducibility and comparison [18] [19]. | Text-mined entries carry a risk of erroneous extraction from poorly reported literature [20]; the quality of all entries depends on the source literature. | Manual process limits data volume and coverage compared to automated systems [21]. | Voluntary adoption; data coverage is limited to submissions from authors and participating journals [20]. |
| Primary User Interface | Scientific journals. | Web-based search interface. | Web-based search interface and RESTful web services for integration into modeling tools [21]. | Web-based submission tool and public query database [19]. |

The following diagram illustrates the logical relationships and data flow between these sources and the broader research ecosystem.

Diagram 1: Data Flow and Relationships Between Kinetic Data Sources and Users. SRN: STRENDA Registry Number.

In-Depth Source Profiles and Reliability Metrics

Primary Scientific Literature

The primary literature is the origin of all experimental kinetic data. Its reliability is the foundational variable upon which all secondary databases depend. Common pitfalls that severely compromise reliability include the use of non-physiological assay conditions (e.g., wrong pH, temperature, or buffer systems), failure to report essential metadata like enzyme purity and source, and a lack of initial rate verification [18]. The absence of this information makes it impossible to validate, compare, or correctly integrate parameters into models.

BRENDA Database

BRENDA is the most comprehensive enzyme resource. Alongside its manually curated core, it includes kinetic data extracted by the KENDA (Kinetic ENzyme DAta) automated text-mining pipeline, which scans the scientific literature [20]. Text mining broadens coverage but introduces reliability concerns: automated extraction struggles with the unstructured and inconsistent reporting common in manuscripts, leading to potential annotation errors or the loss of critical contextual metadata [20]. Manual curation cannot fully vet every automatically mined entry. BRENDA's strength is its breadth, but users must critically evaluate individual entries for contextual completeness.

SABIO-RK Database

SABIO-RK prioritizes quality and contextual depth for systems biology modeling. It relies on manual curation by biological experts who extract and structure data from publications, ensuring a high degree of accuracy and completeness [21] [22]. As of 2017, it contained data from over 5,600 publications, comprising about 57,000 database entries across 934 organisms, with a focus on metabolic reactions [21]. Each entry is richly annotated with links to external databases (UniProt, ChEBI, KEGG) and includes critical information like kinetic rate laws, formulas, and detailed experimental conditions [21]. Its primary limitation is scale, as the manual process cannot match the volume of automated systems.

STRENDA DB Initiative

STRENDA DB addresses reliability at the source. It is a community-driven submission and validation system that implements the STRENDA Guidelines [19]. Authors input their kinetic data during manuscript preparation; the system automatically checks for completeness and formal correctness against the guidelines (e.g., mandatory pH, temperature, enzyme source) [19]. Compliant datasets receive a persistent STRENDA Registry Number (SRN) and DOI, which can be referenced in the publication [19]. This process ensures that the data meet minimum reporting standards for reproducibility before peer review begins. Its effectiveness grows as more journals mandate its use.

Detailed Experimental Protocols for Data Handling and Curation

The SABIO-RK manual curation workflow proceeds as follows:

- Literature Selection: Publications are identified via keyword searches (e.g., in PubMed) or through specific collaboration projects and user requests.

- Expert Data Extraction: A biological expert reads the full publication.

- Structured Data Entry: The curator enters relevant information into a web-based curation interface. Data is semi-automatically checked for consistency.

- Contextual Annotation: The curator adds extensive annotations using controlled vocabularies and links to external databases (e.g., UniProt ID for the enzyme, ChEBI ID for compounds, NCBI Taxonomy for organism).

- Quality Control & Storage: The curated data for a single reaction under specific conditions is stored as a unique database entry. Data from models or labs can also be uploaded directly in SBML format for processing.

The following protocol, used to create a structure-kinetics dataset from BRENDA, exemplifies the extensive processing required to make database-derived information useful for structural analysis.

- Data Curation: Raw Km and kcat values for enzyme-substrate pairs are extracted from BRENDA. Redundant entries are resolved by computing geometric means after manual verification.

- Entity Annotation: Enzyme sequences are mapped to UniProtKB IDs to find associated protein structures (PDB IDs). Substrate IUPAC names are converted to isomeric SMILES strings using tools like OPSIN and PubChemPy.

- Structure Mapping & Modeling: Available PDB structures are classified (e.g., with substrate bound, apo form). For enzymes without a structure, homology modeling is performed. Substrate 3D structures are generated from SMILES.

- Complex Assembly & Refinement: Substrates are docked into enzyme active sites. The protonation states of enzyme residues are adjusted based on the experimental pH reported in BRENDA.

- Validation & Packaging: The final dataset of enzyme-substrate complex structures with associated kinetic parameters is compiled for downstream analysis.
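The redundancy-resolution step in Data Curation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used to build the dataset: entries are assumed to be already manually verified, keyed by hypothetical enzyme and substrate identifiers (`ENZ_A`, `ENZ_B`), and all values for one pair are assumed to share a unit. A geometric mean (the exponential of the mean log value) is a natural summary here because Km and kcat values often span orders of magnitude, and it is less dominated by the largest value than an arithmetic mean.

```python
import math
from collections import defaultdict


def collapse_redundant(entries):
    """Collapse repeated (enzyme, substrate) measurements into one geometric-mean value.

    `entries` is an iterable of (enzyme_id, substrate_id, value) tuples. All values
    for a given pair are assumed to share a unit (e.g. Km in mM) and be strictly positive.
    """
    groups = defaultdict(list)
    for enzyme_id, substrate_id, value in entries:
        if value <= 0:
            raise ValueError(f"non-positive value for {enzyme_id}/{substrate_id}: {value}")
        groups[(enzyme_id, substrate_id)].append(value)
    # Geometric mean = exp(mean of natural logs).
    return {
        pair: math.exp(sum(math.log(v) for v in values) / len(values))
        for pair, values in groups.items()
    }


# Hypothetical, already manually verified Km entries (mM) for two enzyme-substrate pairs.
records = [
    ("ENZ_A", "glucose", 0.1),
    ("ENZ_A", "glucose", 1.0),
    ("ENZ_A", "glucose", 10.0),
    ("ENZ_B", "ATP", 0.5),
]
summary = collapse_redundant(records)
for (enzyme_id, substrate_id), km in summary.items():
    print(f"{enzyme_id} / {substrate_id}: Km = {km:.3g} mM")
```

For the three `ENZ_A` values the arithmetic mean would be about 3.7 mM, whereas the geometric mean is 1 mM, the middle of the logarithmic range.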

Diagram 2: Workflow for Constructing a Structure-Kinetics Dataset from BRENDA.

Submission of kinetic data to STRENDA DB follows these steps:

- Researcher Registration: The user creates an account in the STRENDA DB system.

- Manuscript & Experiment Definition: The user creates a "Manuscript" entry and defines one or more "Experiments" within it, each detailing a specific enzyme/protein studied.

- Guideline-Driven Data Entry: For each Experiment, the user enters data into structured fields that enforce the STRENDA Guidelines. Mandatory fields include:

  - Enzyme Source & Identity: Organism, recombinant source, purity, modifications.

  - Assay Conditions: Temperature, pH, buffer, pressure.

  - Substrate/Effector Details: Identity, concentrations.

  - Kinetic Results: Parameter values (Km, kcat, etc.) with associated errors and the model used for fitting.

- Automated Validation: The system checks all compulsory fields for completeness and formal correctness (e.g., valid pH range).

- Identifier Assignment: Upon successful validation, the system issues a unique STRENDA Registry Number (SRN) and a DOI for the dataset, which the researcher cites in their manuscript.
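To make the automated validation step concrete, the following Python sketch checks a record for missing mandatory information, an out-of-range pH, and kinetic parameters reported without an error estimate. The field names (`organism`, `temperature_c`, `parameters`, and so on) and the record layout are hypothetical simplifications of the STRENDA categories listed above; they are not the STRENDA DB schema or API, and the real system applies many more checks.

```python
from typing import Any

# Hypothetical field names loosely following the STRENDA categories above;
# this is NOT the STRENDA DB schema.
REQUIRED_FIELDS = {
    "organism", "recombinant_source", "purity",        # enzyme source & identity
    "temperature_c", "ph", "buffer",                    # assay conditions
    "substrate", "substrate_concentrations",            # substrate details
    "parameters", "fitting_model",                      # kinetic results
}


def validate_experiment(record: dict[str, Any]) -> list[str]:
    """Return a list of problems found; an empty list means the record passes."""
    problems = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(record))]
    ph = record.get("ph")
    if ph is not None and not 0.0 <= ph <= 14.0:
        problems.append(f"pH out of range: {ph}")
    # Each reported kinetic parameter should carry an uncertainty estimate.
    for name, entry in record.get("parameters", {}).items():
        if entry.get("error") is None:
            problems.append(f"no error reported for parameter {name}")
    return problems


record = {
    "organism": "Escherichia coli",
    "recombinant_source": "hypothetical expression host",
    "purity": ">95% (SDS-PAGE)",
    "temperature_c": 25.0,
    "ph": 7.5,
    "buffer": "50 mM HEPES",
    "substrate": "hypothetical substrate X",
    "substrate_concentrations": [0.05, 0.1, 0.2, 0.5, 1.0],
    "parameters": {
        "Km": {"value": 0.2, "unit": "mM", "error": 0.02},
        "kcat": {"value": 15.0, "unit": "s^-1", "error": None},
    },
    "fitting_model": "Michaelis-Menten",
}
print(validate_experiment(record))  # ['no error reported for parameter kcat']
```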

Table 2: Key Resources for Reliable Enzyme Kinetics Research

| Tool / Resource | Primary Function | Role in Reliability Assessment |
|---|---|---|
| STRENDA Guidelines | A checklist of minimum information required for reporting enzymology data [19]. | Provides the gold standard for evaluating data completeness in any source (literature or database). |
| Enzyme Commission (EC) Number | A numerical classification system for enzymes based on the chemical reaction they catalyze [18]. | Critical for unambiguous enzyme identification, preventing errors from synonymous or similar enzyme names [18]. |
| UniProtKB Identifier | A unique accession number for a protein sequence entry in the UniProt Knowledgebase. | Enables precise mapping of kinetic data to a specific protein sequence and its known features, facilitating cross-database queries [21] [20]. |
| SBML (Systems Biology Markup Language) | A standard computational format for representing biochemical reaction networks [21]. | Allows curated kinetic data (e.g., from SABIO-RK) to be imported directly into modeling and simulation software without manual re-entry, preserving context [21]. |
| PubChem CID / ChEBI ID | Unique identifiers for chemical compounds. | Ensures precise and unambiguous identification of substrates, products, and effectors, which is often a major source of ambiguity in literature reports. |
| Primary Literature Reference (PMID/DOI) | The direct link to the original research article. | Essential for traceability. Any database entry should provide this to allow users to consult the original context and methodology [21] [18]. |

The reliability of reported enzyme kinetic parameters forms the cornerstone of research in biochemistry, drug discovery, and molecular diagnostics. This reliability is critically undermined by a triad of interconnected challenges: inconsistent experimental assay conditions, pervasive missing or inadequate metadata, and fundamental issues in database curation [23] [24] [25]. Inconsistent conditions lead to irreproducible and conflicting kinetic data, as vividly illustrated in the CRISPR-Cas field where reported turnover rates for the same enzyme vary by orders of magnitude [26]. Missing metadata strips experimental data of the essential context needed for validation and reuse, a systemic problem evident in major repositories like ClinicalTrials.gov [27]. Finally, inadequate curation at the database level allows these poor-quality data to persist, proliferate, and mislead subsequent analyses [24] [28]. This guide objectively compares methodologies and tools designed to address these challenges, providing a framework for researchers to assess and improve the robustness of their kinetic parameter data within the broader thesis of scientific reliability assessment.

Comparative Analysis of Critical Challenges and Solutions

This section provides a structured comparison of the three core challenges, detailing their manifestations, consequences, and the available strategies for mitigation. The following tables synthesize key findings from the surveyed literature to offer a clear, actionable overview.

Table 1: Challenge Comparison: Inconsistent Assay Conditions

| Aspect | Problem Manifestation | Documented Consequence | Recommended Mitigation Strategy |
|---|---|---|---|
| Environmental Control | Poor control of temperature, pH, and ionic strength [29]. | A 1°C change can alter activity by 4-8%; variable pH affects enzyme charge and substrate binding [29]. | Use automated analyzers with precise temperature control and pH probes [29]. |
| Methodology & Throughput | Use of manual spectrophotometry vs. variable microplate assays [29]. | Manual methods introduce human error; microplates suffer from edge effects and path length variability [29]. | Employ discrete analyzers using disposable cuvettes to eliminate edge effects and ensure consistent path length [29]. |
| Experimental Design | Use of "one-factor-at-a-time" (OFAT) optimization [30]. | Inefficient, misses factor interactions, can take >12 weeks for assay optimization [30]. | Adopt Design of Experiments (DoE) approaches (e.g., fractional factorial design) to model interactions and find optima faster [30]. |
| Data Validation | Publication of kinetically inconsistent data without basic validation [26]. | Gross errors, including violation of conservation laws; impossible turnover numbers reported [26]. | Apply self-consistency checks (e.g., Ratios R1-R3) [26] and report full progress curves with calibrations [26]. |

Table 2: Challenge Comparison: Missing and Inadequate Metadata

| Metadata Field | Documented Issue & Rate | Impact on Reusability & Analysis | Source of Evidence |
|---|---|---|---|
| Contact Information | Frequently missing or underspecified [27]. | Hinders collaboration, clarification, and data provenance tracking. | Analysis of ClinicalTrials.gov [27]. |
| Outcome Measures | Frequently missing or underspecified [27]. | Prevents assessment of selective reporting bias in systematic reviews and meta-analyses. | Analysis of ClinicalTrials.gov [27]. |
| Condition & Intervention | ~50% of conditions are not denoted by standardized MeSH terms [27]. | Impedes accurate search, data linkage, and interoperability across systems. | Analysis of ClinicalTrials.gov [27]. |
| Eligibility Criteria | Stored as semi-structured free text rather than a structured element [27]. | Cannot be computationally queried for patient matching to trials or automated meta-analysis. | Analysis of ClinicalTrials.gov [27]. |
| General Completeness | Required fields are often not filled, despite automated validation in systems like the PRS [27]. | Limits the utility of the entire record for secondary research and regulatory oversight. | Analysis of ClinicalTrials.gov [27]. |

Table 3: Challenge Comparison: Database Curation Issues

| Curation Phase | Common Deficiencies | Risks & Consequences | Best Practices & Frameworks |
|---|---|---|---|
| Collection & Assessment | Lack of upfront governance; inconsistent formats and sources [25]. | Data silos, incomplete datasets, and integration headaches downstream [24]. | Define governance policies and ethical/legal collection protocols at the project start [24] [25]. |
| Cleaning & Transformation | Ad hoc cleaning; lack of standardization and terminology harmonization [25]. | Inaccurate analytics, inability to combine datasets, and loss of data value. | Implement automated validation tools and align terms to controlled vocabularies/ontologies [24] [25]. |
| Storage, Preservation & Management | Inadequate metadata management; lack of data lineage tracking [24] [25]. | Data becomes inaccessible, uninterpretable, or non-compliant over time. | Use standardized metadata schemas (e.g., Dublin Core, HL7 FHIR) and data lineage tools [24] [28]. |
| Quality Framework | No systematic framework for assessing data quality throughout lifecycle [28]. | Unreliable data leads to poor research decisions and limits secondary analysis. | Adopt comprehensive guidelines like DAQCORD, which defines quality factors (completeness, correctness, etc.) [28]. |

Detailed Experimental Protocols

3.1 Protocol for Validating Self-Consistency of Enzyme Kinetic Data

This protocol, derived from checks proposed for CRISPR-Cas kinetics [26], provides a minimum validation step for any reported Michaelis-Menten parameters.

- Objective: To perform three back-of-the-envelope checks on published or newly generated progress curve data to identify violations of basic conservation laws and kinetic principles.

- Principle: The calculations verify that the reported velocity and timescales are physically plausible given the reported initial concentrations of enzyme and substrate.

- Materials: Published article or dataset containing the initial reporter/substrate concentration ($[S]_0$), the initial activated enzyme concentration ($[E]_0$), the reported reaction velocity ($v$), the Michaelis constant ($K_M$), the turnover number ($k_{cat}$), and a progress curve (signal vs. time).

- Procedure:

  - Extract or calculate the maximum reaction velocity, $v_{max} = k_{cat}[E]_0$.

  - From the progress curve, estimate the timescale of the initial linear phase, $\tau_{linear}$.

  - Calculate the following three ratios as defined in the source [26]:

    - R1: $R_1 = v\,\tau_{linear}/[S]_0$. Acceptance criterion: $R_1 < 1$. This ensures that the number of molecules consumed in the linear phase does not exceed the total available.

    - R2: $R_2 = v/v_{max}$. Acceptance criterion: $R_2 < 1$. This ensures that the measured velocity does not exceed the theoretical maximum.

    - R3: $R_3 = \tau_{linear}/([S]_0/v)$. Acceptance criterion: $R_3$ is on the order of 1 or less. This checks that the linear-phase duration is consistent with the total reaction timescale.

- Interpretation: Failure of any of these checks indicates a high probability of error in the experimental measurements, data analysis, or reported parameters. The data should be re-evaluated before use in comparative analyses or models.
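The three ratios translate directly into code. The Python sketch below assumes that all concentrations share one unit, velocities are in that unit per second, k_cat is in s⁻¹, and τ_linear is in seconds. `check_self_consistency` and its `r3_limit` argument are hypothetical names, with `r3_limit=1.0` standing in for the qualitative criterion "on the order of 1 or less". As the ratios are written in this protocol, R3 simplifies algebraically to the same expression as R1, so the sketch simply computes both as stated.

```python
from dataclasses import dataclass


@dataclass
class ConsistencyReport:
    r1: float
    r2: float
    r3: float
    passed: bool


def check_self_consistency(s0, e0, v, kcat, tau_linear, r3_limit=1.0):
    """Compute the R1-R3 back-of-the-envelope checks for one reported assay.

    Assumed units: s0 and e0 in the same concentration unit, v in that unit per
    second, kcat in s^-1, tau_linear in seconds.
    """
    vmax = kcat * e0                 # theoretical maximum velocity, v_max = k_cat [E]0
    r1 = v * tau_linear / s0         # substrate consumed in the linear phase / substrate available
    r2 = v / vmax                    # measured velocity / theoretical maximum
    r3 = tau_linear / (s0 / v)       # linear-phase duration / depletion timescale [S]0 / v
    passed = r1 < 1.0 and r2 < 1.0 and r3 <= r3_limit
    return ConsistencyReport(r1, r2, r3, passed)


# Hypothetical report: 50 nM reporter, 10 pM (0.01 nM) active enzyme, kcat = 0.5 s^-1,
# and a claimed velocity of 0.02 nM/s sustained linearly for 600 s.
report = check_self_consistency(s0=50.0, e0=0.01, v=0.02, kcat=0.5, tau_linear=600.0)
print(report)
```

In this hypothetical example R1 is comfortably below 1, but the claimed velocity is four times the maximum that the stated enzyme concentration and k_cat allow, so R2 flags the data as physically implausible.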

3.2 Protocol for Rapid Enzyme Assay Optimization Using Design of Experiments (DoE)

This protocol outlines a DoE approach to efficiently optimize assay conditions, contrasting with the traditional one-factor-at-a-time method [30].

- Objective: To identify significant factors affecting enzyme activity and determine optimal assay conditions in a time-efficient manner.

- Principle: A fractional factorial design screens multiple factors simultaneously, followed by response surface methodology (e.g., Central Composite Design) to model interactions and locate optima.

- Materials: Target enzyme, substrates, buffer components, plate reader or discrete analyzer capable of kinetic measurements [29].

- Procedure (Summary):

- Factor Selection: Identify key variables (e.g., pH, buffer concentration, ionic strength, [enzyme], [substrate], temperature, cofactors).

- Screening Design: Execute a fractional factorial design (e.g., Resolution IV) to test all selected factors at high and low levels in a minimal number of experiments. Measure response (e.g., initial velocity, signal-to-noise).

- Statistical Analysis: Use ANOVA to identify factors with statistically significant effects on the response.

- Optimization Design: For the significant factors (typically 2-4), perform a Response Surface Methodology design (e.g., Central Composite Design) to explore curvature and interaction effects.

- Modeling & Prediction: Fit a quadratic model to the data and use it to predict the combination of factor levels that yields the optimal response (e.g., maximum activity, stability).

- Verification: Experimentally confirm the predicted optimal conditions.

- Advantage: This structured approach can reduce optimization time from over 12 weeks (OFAT) to a few days while providing a robust model of the assay system [30].
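The screening step can be illustrated with a standard resolution IV design for four factors: the 2^(4-1) half fraction with generator D = ABC (defining relation I = ABCD). The Python sketch below builds the eight coded runs and estimates each main effect as the difference between the mean responses at the high and low levels. The factor names and the response values are hypothetical, and a full analysis would continue with ANOVA as described above.

```python
import itertools

import numpy as np


def fractional_factorial_2_4_1():
    """Coded 2^(4-1) design: full factorial in A, B, C, with generator D = ABC.

    Defining relation I = ABCD (resolution IV). Levels are -1 (low) and +1 (high).
    """
    runs = [(a, b, c, a * b * c) for a, b, c in itertools.product((-1, 1), repeat=3)]
    return np.array(runs)


def main_effects(design, response):
    """Main effect of each factor: mean response at +1 minus mean response at -1."""
    response = np.asarray(response, dtype=float)
    return np.array([
        response[design[:, j] == 1].mean() - response[design[:, j] == -1].mean()
        for j in range(design.shape[1])
    ])


# Hypothetical factors and a hypothetical, noise-free response (e.g. initial velocity).
factors = ["pH", "buffer_mM", "Mg_mM", "enzyme_nM"]
design = fractional_factorial_2_4_1()
response = 10.0 + 1.5 * design[:, 0] + 0.5 * design[:, 1]
effects = main_effects(design, response)
for name, effect in zip(factors, effects):
    print(f"{name:>10}: {effect:+.2f}")
```

Because the design columns are mutually orthogonal, each main effect is estimated independently of the others; the price of the half fraction is that two-factor interactions are aliased in pairs (AB with CD, AC with BD, AD with BC).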


Data Curation Lifecycle and Metadata Management

Workflow for Validating Enzyme Kinetic Data Self-Consistency

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents, Tools, and Materials

| Item | Category | Primary Function in Context |
|---|---|---|
| Discrete Automated Analyzer (e.g., Gallery Plus) [29] | Instrumentation | Provides superior temperature control (25-60°C), uses disposable cuvettes to eliminate microplate edge effects and path length issues, enabling reliable kinetic measurements [29]. |
| Fluorophore-Quencher Reporter Probes (ssDNA/ssRNA) [26] | Biochemical Reagent | Used as the trans-cleavage substrate for CRISPR-Cas (Cas12, Cas13) diagnostic assays. Cleavage separates the fluorophore from the quencher, generating a fluorescent signal proportional to activity [26]. |
| Validated CRISPR-Cas Enzyme (Cas12a, Cas13b, etc.) [26] | Enzyme | The core biocatalyst for CRISPR-based detection. Specificity is programmed by guide RNA. Kinetic performance (k_cat, K_M) fundamentally limits assay sensitivity and speed [26]. |
| Protocol Registration System (PRS) [27] | Software/System | The web-based data entry system for ClinicalTrials.gov. It enforces some data type rules but lacks strict ontology requirements for key fields, contributing to metadata quality issues [27]. |
| Biomedical Ontologies (MeSH, SNOMED CT, etc.) [27] | Standard | Controlled vocabularies that provide unique identifiers for concepts (e.g., diseases, drugs). Their mandated use in metadata fields is essential for making data findable and interoperable (FAIR) [27]. |
| Data Curation & Lineage Tools (e.g., Atlan, Collibra, IBM InfoSphere) [24] | Software/Platform | Facilitate metadata management, automated data quality checks, and tracking of data origin and transformations (lineage), which are critical for curation, reproducibility, and compliance [24]. |
| Statistical Software with MI/MMRM (e.g., SAS, R) [31] | Software | Enables advanced handling of missing data in experimental and clinical datasets using robust methods like Multiple Imputation (MI) and Mixed Models for Repeated Measures (MMRM), reducing bias [31]. |

The comparative analysis presented here underscores that the challenges of inconsistent assay conditions, missing metadata, and poor database curation are not isolated issues but interconnected facets of a systemic data quality crisis in enzyme kinetics and related fields. Addressing them requires a multi-pronged strategy: adopting robust experimental design and validation protocols, enforcing the use of standardized metadata from the point of data generation, and implementing rigorous, framework-driven curation throughout the data lifecycle. By integrating the tools and best practices compared in this guide—from DoE and self-consistency checks to ontology-driven metadata and the DAQCORD framework—researchers, database curators, and drug development professionals can significantly enhance the reliability, reproducibility, and ultimate value of enzyme kinetic data. This fosters a more solid foundation for scientific discovery, diagnostic development, and therapeutic innovation.

Methodologies for Reliability Assessment: From Experimental Best Practices to AI Predictions

The accurate determination of enzyme kinetic parameters (kcat, Km) is a cornerstone of biochemistry, with direct implications for understanding metabolic pathways, diagnosing diseases, and developing new therapeutics and biocatalysts [32]. Within the context of a broader thesis on the reliability assessment of reported kinetic parameters, a critical examination of foundational experimental methodologies is required. For decades, the measurement of initial rates under steady-state conditions has been the gold standard taught in textbooks and implemented in laboratories [33]. This method, which analyzes the linear portion of a reaction progress curve where substrate depletion is minimal (typically <10%), aims to simplify the complex differential equations governing enzyme kinetics.

However, this approach presents significant practical and theoretical challenges to reliability. Measuring a true initial rate often requires rapid, continuous monitoring techniques and can be highly sensitive to subjective judgments in determining linear regions, especially for reactions with rapid curvature [33] [34]. Furthermore, the requirement for multiple experiments at varying substrate concentrations to construct a Michaelis-Menten plot is resource-intensive. In contrast, progress curve analysis offers a powerful alternative by extracting kinetic parameters from a single time-course experiment that monitors product formation or substrate depletion until the reaction approaches completion [35] [33]. This method utilizes the integrated form of the rate equation, thereby containing more information about the reaction's kinetic properties. Recent methodological comparisons indicate that progress curve analysis, particularly with modern numerical tools, can provide robust parameter estimates with lower experimental effort, challenging the dogma that initial rate measurement is an absolute necessity [35] [33]. This guide objectively compares these two paradigms, providing researchers with the data and protocols needed to assess their suitability for ensuring the reliability of kinetic parameters in diverse applications.

Core Methodological Comparison: Initial Rate vs. Progress Curve Analysis

Initial Rate Measurements

- Core Principle: Measures the velocity of the enzymatic reaction (v = d[P]/dt) at time zero, under conditions where substrate concentration ([S]) is essentially constant and product inhibition is negligible.

- Experimental Requirement: Requires a separate reaction for each data point on the Michaelis-Menten plot. The reaction is typically stopped or monitored for only a short initial period (e.g., 30-60 seconds) where progress is linear.

- Data Fitting: The initial velocities (v) measured at different initial substrate concentrations ([S]₀) are fitted directly to the Michaelis-Menten equation (v = Vmax[S]₀ / (Km + [S]₀)) or its linear transformations (e.g., Lineweaver-Burk).

- Key Challenge: Determining the linear range can be subjective. For reactions with inherent curvature, like protein hydrolysis, defining a reliable initial rate may be impossible using simple linear regression [34].

Progress Curve Analysis

- Core Principle: Utilizes the entire time course of a single reaction, from [S]₀ to near-complete depletion. The integrated Michaelis-Menten equation (t = [P]/Vmax + (Km/Vmax) * ln([S]₀/([S]₀-[P]))) directly relates product concentration [P] at time t to Vmax and Km [33].

- Experimental Requirement: One continuous or densely sampled discontinuous assay per enzyme condition. The reaction is monitored over a longer period, often for multiple half-lives.

- Data Fitting: The [P] vs. t data is fitted to the integrated equation using non-linear regression. Advanced numerical approaches, such as direct integration of differential equations or spline interpolation of data, are also employed to handle more complex mechanisms [35].

- Key Advantage: More data points per experiment and inherent averaging over the reaction time course can lead to more robust parameter estimation, especially for low-activity enzymes or scarce substrates.

Table 1: Comparison of Initial Rate and Progress Curve Methodologies for Reliability Assessment

| Aspect | Initial Rate Measurement | Progress Curve Analysis | Implication for Reliability |
|---|---|---|---|
| Experimental Throughput | Lower (multiple assays per Km, Vmax) | Higher (single assay per Km, Vmax) | Progress curves reduce time/cost, enabling more replicates [35]. |
| Substrate/Enzyme Consumption | High | Low | Crucial for expensive or scarce materials; improves feasibility of robust testing. |
| Handling of Assay Artifacts | Susceptible to errors in judging linear phase; may miss lag/burst phases. | Reveals time-dependent artifacts (e.g., enzyme inactivation, product inhibition). | Progress curves provide inherent quality control of kinetic assumptions [33]. |
| Error in Parameter Estimation | Prone to systematic error if linear phase is misjudged, especially near Km. | Systematic error can arise from neglecting factors like product inhibition. | Modern numerical fitting of progress curves shows lower dependence on initial parameter guesses, enhancing robustness [35]. |
| Case Study Insight | Deemed unsuitable for proteolytic reactions due to immediate curvature [34]. | Non-linear fitting of progress curves enabled precise protease activity quantification and fair comparison [34]. | Demonstrates necessity of method matching to reaction chemistry. |

Experimental Protocols for Key Assays

Protocol A: Initial Rate Determination for a Standard Hydrolase

This protocol is suitable for reactions where a clear linear progress phase can be established.

- Reaction Setup: Prepare a master mix of buffer and enzyme. In a microplate or cuvette, initiate separate reactions by adding substrate at a minimum of 8 concentrations spanning 0.2–5.0 x Km.

- Rapid Monitoring: Immediately start monitoring the signal (e.g., absorbance, fluorescence) using a continuous-read capable instrument. The total measurement time should not exceed 1-2% of the time estimated for complete substrate conversion.

- Linear Regression: For each [S]₀, plot the signal vs. time for the first 10-20 data points (typically 30-60 seconds). Perform a linear regression; the slope is the initial velocity (v).

- Parameter Estimation: Plot v against [S]₀. Fit the data to the Michaelis-Menten equation using non-linear regression software to obtain Km and Vmax.
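Steps 3 and 4 of Protocol A can be sketched with NumPy and SciPy, assuming the signal has already been converted to product concentration. The data below are synthetic, generated from hypothetical "true" parameters with added read noise, and the helper names are illustrative. `np.polyfit` supplies the straight-line slope for each substrate concentration, and `scipy.optimize.curve_fit` performs the nonlinear Michaelis-Menten fit, whose covariance matrix gives approximate standard errors.

```python
import numpy as np
from scipy.optimize import curve_fit


def michaelis_menten(s, vmax, km):
    """v = Vmax [S] / (Km + [S])."""
    return vmax * s / (km + s)


def initial_velocity(t, product, n_points=15):
    """Slope of an ordinary least-squares line through the first n_points of a progress curve."""
    slope, _intercept = np.polyfit(t[:n_points], product[:n_points], 1)
    return slope


def fit_michaelis_menten(s0, v, p0=None):
    """Nonlinear least-squares estimates of (Vmax, Km) and their approximate standard errors."""
    s0 = np.asarray(s0, dtype=float)
    v = np.asarray(v, dtype=float)
    if p0 is None:
        p0 = (v.max(), float(np.median(s0)))  # crude starting guesses
    popt, pcov = curve_fit(michaelis_menten, s0, v, p0=p0)
    return popt, np.sqrt(np.diag(pcov))


# Synthetic example with hypothetical "true" parameters (Vmax in uM/s, Km in uM).
rng = np.random.default_rng(0)
vmax_true, km_true = 0.012, 80.0
s0 = np.linspace(0.2, 5.0, 8) * km_true   # 8 concentrations spanning 0.2-5 x Km
t = np.arange(0.0, 60.0, 4.0)             # 15 reads over ~60 s
v_obs = []
for s in s0:
    # Idealized linear product accumulation (<1% of substrate converted) plus read noise.
    product = michaelis_menten(s, vmax_true, km_true) * t + rng.normal(0.0, 0.001, t.size)
    v_obs.append(initial_velocity(t, product))

(vmax_fit, km_fit), (vmax_se, km_se) = fit_michaelis_menten(s0, v_obs)
print(f"Vmax = {vmax_fit:.4f} +/- {vmax_se:.4f} uM/s, Km = {km_fit:.1f} +/- {km_se:.1f} uM")
```

Fitting the untransformed velocities directly, as step 4 describes, avoids the distortion of the error structure that linear transformations such as Lineweaver-Burk introduce.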

Protocol B: Progress Curve Analysis via Integrated Rate Equation

This general protocol extracts parameters from a single time-course [33].

- Reaction Setup: Prepare a reaction mixture with a single, well-defined initial substrate concentration ([S]₀). It is optimal to choose an [S]₀ value close to the expected Km.

- Continuous Monitoring: Initiate the reaction and record the product concentration ([P]) or a proportional signal at frequent intervals until the reaction reaches at least 70-80% completion. Ensure enzyme stability over this period (verify via Selwyn's test).

- Data Transformation: Convert the raw signal to [P] values using a calibration curve. Calculate the corresponding remaining substrate [S] = [S]₀ – [P].

- Non-Linear Fitting: Input the data pairs (t, [P]) into data analysis software. Fit the data directly to the integrated Michaelis-Menten equation, t = [P]/Vmax + (Km/Vmax)*ln([S]₀/([S]₀-[P])). The fitting algorithm will iteratively solve for the best-fit values of Km and Vmax.
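The integrated equation in Protocol B can be rearranged into an explicit expression for [P] using the Lambert W function, since it implies [S](t) = Km·W(([S]₀/Km)·exp(([S]₀ - Vmax·t)/Km)). The Python sketch below uses this closed form with `scipy.special.lambertw` and `scipy.optimize.curve_fit`. Note that it is a swapped-in formulation: it fits [P] as a function of t rather than t as a function of [P], which treats the measured quantity as the noisy variable. It assumes irreversible kinetics with no product inhibition and a stable enzyme; the data are synthetic and the function names are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit
from scipy.special import lambertw


def integrated_mm_product(t, vmax, km, s0):
    """[P](t) for irreversible Michaelis-Menten kinetics (no inhibition, stable enzyme).

    Solves t = [P]/Vmax + (Km/Vmax) ln([S]0/([S]0 - [P])) for [P] via
    [S](t) = Km * W(([S]0/Km) exp(([S]0 - Vmax t)/Km)), W = principal-branch Lambert W.
    """
    t = np.asarray(t, dtype=float)
    arg = (s0 / km) * np.exp((s0 - vmax * t) / km)
    return s0 - km * lambertw(arg).real


def fit_progress_curve(t, product, s0, p0):
    """Least-squares estimates (and standard errors) of Vmax and Km from one progress curve."""
    def model(t_, vmax, km):
        return integrated_mm_product(t_, vmax, km, s0)

    popt, pcov = curve_fit(model, t, product, p0=p0, bounds=([1e-9, 1e-9], [np.inf, np.inf]))
    return popt, np.sqrt(np.diag(pcov))


# Hypothetical single progress curve: [S]0 = 50 uM (close to Km), followed to >80% conversion.
rng = np.random.default_rng(1)
s0, vmax_true, km_true = 50.0, 0.5, 40.0
t = np.linspace(0.0, 240.0, 121)
product = integrated_mm_product(t, vmax_true, km_true, s0) + rng.normal(0.0, 0.05, t.size)
(vmax_fit, km_fit), (vmax_se, km_se) = fit_progress_curve(t, product, s0, p0=(0.2, 20.0))
print(f"Vmax = {vmax_fit:.3f} +/- {vmax_se:.3f} uM/s, Km = {km_fit:.1f} +/- {km_se:.1f} uM")
```

Fitting t as a function of [P], exactly as written in step 4, is also possible with the same tools; the two formulations differ mainly in which variable is assumed to carry the measurement error.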

Protocol C: Numerical Progress Curve Analysis with Spline Interpolation (Advanced)

For complex systems or noisy data, a numerical approach offers robustness [35].

- Data Collection: Follow steps 1-3 of Protocol B to obtain the [P] vs. t dataset.

- Spline Fitting: Instead of using the analytical integrated equation, fit a smoothing cubic spline function to the [P] vs. t data. This creates a continuous, differentiable mathematical representation of the progress curve.

- Rate Calculation: Differentiate the spline function numerically to obtain an estimate of the instantaneous reaction rate (v = d[P]/dt) at each time point.

- Substrate Association: For each time point, calculate the corresponding substrate concentration ([S] = [S]₀ – [P]).

- Parameter Regression: Perform a non-linear regression by fitting the (v, [S]) data pairs to the standard Michaelis-Menten equation (v = Vmax*[S] / (Km + [S])). This method decouples the integration and regression steps and has been shown to be less sensitive to initial parameter estimates [35].
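The spline route of Protocol C can be sketched with `scipy.interpolate.UnivariateSpline`, whose `derivative()` method returns the derivative spline, followed by an ordinary Michaelis-Menten fit of rate against remaining substrate. This is an illustration under stated assumptions rather than the implementation from [35]. The example uses a noise-free synthetic curve and an interpolating spline (s = 0). `noise_sd` is a hypothetical argument that switches to a weighted smoothing spline with SciPy's default smoothing factor, which the SciPy documentation describes as a good choice when the weights are inverse standard deviations. `trim` discards end points, where spline derivatives tend to be least reliable.

```python
import numpy as np
from scipy.interpolate import UnivariateSpline
from scipy.optimize import curve_fit
from scipy.special import lambertw


def spline_rates(t, product, noise_sd=None):
    """Return v = d[P]/dt at each time point from a cubic spline of [P](t).

    noise_sd=None -> interpolating spline (s=0); otherwise weights 1/noise_sd
    with SciPy's default smoothing factor (s = number of points).
    """
    if noise_sd is None:
        spline = UnivariateSpline(t, product, k=3, s=0)
    else:
        spline = UnivariateSpline(t, product, w=np.full(len(t), 1.0 / noise_sd), k=3)
    return spline.derivative()(t)


def mm_from_progress_curve(t, product, s0, p0, noise_sd=None, trim=5):
    """Fit v = Vmax[S]/(Km + [S]) to spline-derived rates, with [S] = [S]0 - [P]."""
    v = spline_rates(t, product, noise_sd)
    s = s0 - product
    keep = slice(trim, len(t) - trim)  # drop points near both ends of the curve

    def mm(s_, vmax, km):
        return vmax * s_ / (km + s_)

    popt, _pcov = curve_fit(mm, s[keep], v[keep], p0=p0)
    return popt


# Noise-free synthetic curve from the closed-form integrated equation (hypothetical values).
s0, vmax_true, km_true = 50.0, 0.5, 40.0
t = np.linspace(0.0, 240.0, 121)
product = s0 - km_true * lambertw((s0 / km_true) * np.exp((s0 - vmax_true * t) / km_true)).real
vmax_fit, km_fit = mm_from_progress_curve(t, product, s0, p0=(0.2, 20.0))
print(f"Vmax = {vmax_fit:.3f} uM/s, Km = {km_fit:.1f} uM")
```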

Visualization of Methodological Workflows

Decision and Workflow for Kinetic Parameter Estimation

Progress Curve Analysis: Two Computational Pathways

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Robust Kinetic Assays

| Reagent/Material | Function in Assay | Key Considerations for Reliability |
|---|---|---|
| High-Purity, Characterized Enzyme | The catalyst of interest; concentration must be known accurately. | Source (recombinant/purified), specific activity, and verification of absence of inhibitors or contaminating activities are critical. |
| Defined Substrate(s) | The molecule(s) transformed by the enzyme. | Purity is paramount. For spectrophotometric assays, the extinction coefficient (ε) must be accurately known. Solubility limitations can constrain usable [S]₀. |
| Universal Detection Reagents (e.g., Transcreener) | Fluorescent probes that detect common reaction products (e.g., ADP, GDP) [36]. | Enable homogeneous, mix-and-read assays across many enzyme classes (kinases, GTPases). Reduce assay development time and variability [36]. |
| pH & Ionic Strength Buffer | Maintains constant, physiologically relevant reaction conditions. | Must not interact with enzyme or substrates. Buffer capacity should be sufficient to handle proton production/consumption. |
| Cofactors / Metal Ions (Mg²⁺, ATP, NADH) | Essential activators or cosubstrates for many enzymes. | Required concentration must be determined and maintained in excess where applicable. Purity is critical to avoid inhibition. |
| Positive & Negative Control Inhibitors | Compounds with known mechanism (e.g., competitive inhibitor) and potency. | Essential for validating assay performance, calculating Z'-factor for HTS, and ensuring the system responds as expected [36]. |
| Case Study Material: Salmon Frame Proteins [34] | A complex, natural substrate mixture for protease assays. | Represents a physiologically relevant but heterogeneous substrate. Highlights the need for robust progress curve methods when classic initial rates fail [34]. |
| Reference Kinetics Dataset (e.g., from BRENDA/EnzyExtractDB) | Benchmark values (Km, kcat) for well-studied enzymes under specific conditions. | Serves as a critical external control for method validation. Automated extraction tools like EnzyExtract are expanding these reference datasets [9]. |

Application in Drug Discovery: From Screening to Mechanistic Insight

Robust enzyme assays are the engine of small-molecule drug discovery [36]. The choice between initial rate and progress curve methods depends on the stage of the pipeline.

- High-Throughput Screening (HTS): Speed and simplicity are prioritized. Initial rate measurements in homogeneous, fluorescent, or luminescent formats (e.g., detecting ADP production) dominate 384- or 1536-well primary screens [36]. Robustness is quantified by the Z' factor (≥0.7 is excellent).

- Hit Validation & Mechanistic Studies: Reliability and depth of information become paramount. Progress curve analysis is invaluable here. A single time-course experiment can not only confirm activity but also reveal time-dependent inhibition (e.g., slow-binding inhibitors), irreversible inactivation, or the onset of product inhibition—mechanisms that initial rate screens might miss [33]. This directly informs the drug's mechanism of action (MOA).
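The Z' factor used to quantify HTS robustness is computed from replicate control wells as Z' = 1 - 3(σ₊ + σ₋)/|μ₊ - μ₋|, where μ and σ are the mean and standard deviation of the maximum-signal (+) and minimum-signal (-) controls. A minimal Python sketch using only the standard library follows; the control readings are hypothetical, and the sample (n - 1) standard deviation is assumed.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z' = 1 - 3 (sd_pos + sd_neg) / |mean_pos - mean_neg|, using sample standard deviations."""
    mean_pos = statistics.fmean(positive_controls)
    mean_neg = statistics.fmean(negative_controls)
    sd_pos = statistics.stdev(positive_controls)
    sd_neg = statistics.stdev(negative_controls)
    separation = abs(mean_pos - mean_neg)
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (sd_pos + sd_neg) / separation


# Hypothetical plate-reader signals from maximum-signal and minimum-signal control wells.
positive = [100.0, 102.0, 98.0, 100.0]
negative = [10.0, 11.0, 9.0, 10.0]
print(f"Z' = {z_prime(positive, negative):.3f}")
```

Because Z' depends on both the separation of the control means and their scatter, it rewards assays with a wide signal window and tight replicates, which is why it is computed on every screening plate rather than once per assay.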

Table 3: Common Enzyme Assay Formats and Their Fit for Purpose in Reliability Assessment [36]

| Assay Format | Readout | Best for Initial Rate (IR) or Progress Curve (PC)? | Advantages for Reliable Kinetics | Disadvantages/Limitations |
|---|---|---|---|---|
| Fluorescence (FP, TR-FRET) | Fluorescence polarization or resonance energy transfer. | Primarily IR for HTS; PC possible with continuous read. | High sensitivity, homogeneous (mix-and-read), adaptable to many targets. | Potential compound interference (fluorescence/quenching). |
| Luminescence | Light emission (e.g., luciferase-coupled). | Primarily IR (endpoint). | Extremely sensitive, broad dynamic range. | Coupled enzymes add complexity; susceptible to luciferase inhibitors. |
| Absorbance (Colorimetric) | Change in optical density (OD). | Both IR and PC, if continuous read is available. | Simple, inexpensive, robust. | Lower sensitivity, can be hampered by colored compounds. |
| Label-Free (ITC, SPR) | Heat change or mass binding. | PC by nature (monitors binding/process over time). | No labeling, provides direct thermodynamic/affinity data. | Low throughput, high material consumption, specialized equipment. |

Integration of Enzyme Assays in the Drug Discovery Pipeline

Emerging Integration with Computational and Data Science

The reliability of experimental kinetics is increasingly intertwined with computational approaches. Two synergies are key:

- Data Curation for Model Training: Robust experimental parameters are the essential training data for machine learning (ML) models like CataPro [32], RealKcat [37], and EITLEM-Kinetics [38]. Automated extraction tools like EnzyExtract, which uses LLMs to mine kinetic data from literature, are creating larger, more diverse datasets (e.g., EnzyExtractDB with >218,000 entries) [9]. The quality of these datasets directly impacts model generalizability.

- In Silico Prediction for Experimental Design: These trained models can predict kinetic parameters for enzyme variants or new substrates, guiding researchers toward the most promising candidates for experimental testing. This creates a virtuous cycle: reliable experiments improve models, which then make research more efficient [32] [37].
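Dataset quality also depends on how training and test data are split. The "unbiased, clustered datasets" mentioned for CataPro in Table 4 refer to the general idea of keeping sequence-similar enzymes out of both sets at once, so that test accuracy is not inflated by near-duplicates. The sketch below illustrates only that generic idea. It assumes cluster IDs were precomputed, for example with a sequence clustering tool such as CD-HIT or MMseqs2. The entry IDs and cluster labels are hypothetical, and this is not presented as any cited model's actual procedure.

```python
import random


def cluster_split(entries, test_fraction=0.2, seed=0):
    """Split (entry_id, cluster_id) pairs so that no cluster spans both sets."""
    clusters = sorted({cluster for _, cluster in entries})
    rng = random.Random(seed)          # fixed seed for a reproducible split
    rng.shuffle(clusters)
    n_test = max(1, round(test_fraction * len(clusters)))
    test_clusters = set(clusters[:n_test])
    train = [e for e, c in entries if c not in test_clusters]
    test = [e for e, c in entries if c in test_clusters]
    return train, test


# Hypothetical kinetic entries tagged with precomputed sequence-cluster IDs.
entries = [
    ("kcat-001", "clu-A"), ("kcat-002", "clu-A"), ("kcat-003", "clu-B"),
    ("kcat-004", "clu-C"), ("kcat-005", "clu-C"), ("kcat-006", "clu-D"),
    ("kcat-007", "clu-E"), ("kcat-008", "clu-E"), ("kcat-009", "clu-E"),
    ("kcat-010", "clu-F"),
]
train, test = cluster_split(entries, test_fraction=0.3)
print("train:", train)
print("test:", test)
```

Splitting by cluster rather than by entry usually lowers reported accuracy, but the lower number is a more honest estimate of performance on genuinely new enzymes.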

Table 4: Comparison of Advanced Computational Tools for Kinetic Parameter Prediction

| Model (Year) | Core Approach | Key Input Features | Reported Performance / Advantage | Role in Reliability Assessment |
|---|---|---|---|---|
| CataPro (2025) [32] | Deep learning neural network. | Enzyme: ProtT5 embeddings. Substrate: MolT5 + fingerprints. | Enhanced accuracy & generalization on unbiased, clustered datasets. | Provides reliable in silico benchmarks and pre-screens enzyme variants. |
| RealKcat (2025 Preprint) [37] | Gradient-boosted trees (classification by order of magnitude). | Enzyme: ESM-2 embeddings. Substrate: ChemBERTa embeddings. | >85% test accuracy; sensitive to catalytic residue mutations. | Curated KinHub-27k dataset addresses inconsistencies in public data. |
| EITLEM-Kinetics (2024) [38] | Ensemble iterative transfer learning. | Enzyme sequence & substrate data. | Accurate for mutants with <40% sequence similarity to training set. | Predicts multi-mutation effects, aiding the design of reliable variant assays. |
| EnzyExtract (2025) [9] | LLM-powered data extraction pipeline. | Full-text scientific literature (PDF/XML). | Extracted 218k+ kinetic entries, expanding known datasets significantly. | Addresses "dark matter" of enzymology, creating larger validation datasets. |
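Table 4 describes RealKcat as classifying kcat by order of magnitude rather than regressing it directly. The sketch below shows what such a class label and an accuracy metric could look like. The floor(log10) binning and all kcat values are hypothetical, and they are not RealKcat's published scheme.

```python
import math


def magnitude_class(kcat_per_s):
    """Order-of-magnitude class of a kcat value: floor(log10(kcat in s^-1))."""
    if kcat_per_s <= 0:
        raise ValueError("kcat must be positive")
    return math.floor(math.log10(kcat_per_s))


def class_accuracy(measured, predicted):
    """Fraction of pairs whose prediction falls in the same decade as the measurement."""
    hits = sum(
        magnitude_class(m) == magnitude_class(p) for m, p in zip(measured, predicted)
    )
    return hits / len(measured)


# Hypothetical measured and predicted kcat values (s^-1).
measured = [0.5, 3.0, 12.0, 150.0, 2000.0]
predicted = [0.8, 7.0, 9.0, 300.0, 1500.0]

print([magnitude_class(k) for k in measured])
print(f"accuracy = {class_accuracy(measured, predicted):.2f}")
```

The example exposes a caveat of decade bins. A prediction of 9 s^-1 for a measured 12 s^-1 counts as a miss even though it is only 25% low, while 0.8 versus 0.5 counts as a hit. Reporting a continuous log10 error alongside class accuracy gives a fuller picture.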

The pursuit of reliable enzyme kinetic parameters does not mandate allegiance to a single historical method. Initial rate measurement remains a powerful, high-throughput tool, especially for primary screening where conditions can be tightly controlled to minimize its inherent limitations [36]. However, progress curve analysis emerges as a robust, information-rich alternative that can yield accurate parameters with greater efficiency and provide built-in quality checks for kinetic behavior [35] [33]. Its application in challenging systems, such as protease activity quantification, demonstrates its practical superiority where initial rates fail [34].
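One textbook form of progress curve analysis fits the integrated Michaelis-Menten equation, Vmax·t = [P] + Km·ln([S]0/([S]0 - [P])), to a single time course. The sketch assumes an irreversible single-substrate reaction with no product inhibition or enzyme inactivation. It generates a noise-free curve from hypothetical parameters. It then recovers Km and Vmax from the linear form ln([S]0/[S])/t = Vmax/Km - (1/Km)·([P]/t), where the slope is -1/Km and the intercept is Vmax/Km. This illustrates the principle and is not necessarily the fitting scheme used in the cited studies.

```python
import math


def time_to_reach(p, s0, km, vmax):
    """Integrated Michaelis-Menten equation: time at which product equals p (0 <= p < s0)."""
    return (p + km * math.log(s0 / (s0 - p))) / vmax


def fit_integrated_mm(times, products, s0):
    """Estimate Km and Vmax from one progress curve by ordinary least squares.

    Regresses y = ln(S0/S)/t on x = P/t, for which slope = -1/Km and
    intercept = Vmax/Km.
    """
    xs, ys = [], []
    for t, p in zip(times, products):
        if t <= 0:
            continue  # the transform is undefined at t = 0
        xs.append(p / t)
        ys.append(math.log(s0 / (s0 - p)) / t)
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    km = -1.0 / slope
    vmax = intercept * km
    return km, vmax


# Hypothetical "true" parameters, used only to generate a noise-free curve.
S0, KM_TRUE, VMAX_TRUE = 1.0, 0.25, 0.05     # mM, mM, mM/min
products = [0.1 * i for i in range(1, 10)]   # 10% to 90% conversion
times = [time_to_reach(p, S0, KM_TRUE, VMAX_TRUE) for p in products]

km_fit, vmax_fit = fit_integrated_mm(times, products, S0)
print(f"Km = {km_fit:.4f} mM, Vmax = {vmax_fit:.4f} mM/min")
```

Real data are noisy, and a linear transform distorts their error structure. A nonlinear least-squares fit of the integrated equation is therefore usually preferable. Either way, systematic residuals across the curve are the built-in warning that the simple model (for example, no product inhibition) does not hold.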

The future of reliability assessment in enzyme kinetics is unquestionably interdisciplinary. Experimental rigor must be coupled with computational transparency (detailed reporting of fitting procedures and confidence intervals) and data curation excellence. The integration of automated data extraction [9] and predictive ML models [32] [37] [38] will not replace careful experimentation but will instead elevate it, guiding researchers toward more informative assays and providing a broader context for evaluating their results. Ultimately, adopting a methodologically pluralistic approach—selecting the assay paradigm best suited to the enzyme system and research question—will be the most robust strategy for advancing our understanding of enzyme function and accelerating discovery.

When assessing the reliability of reported enzyme kinetic parameters, selecting appropriate data sources is foundational. Curated databases such as BRENDA and SABIO-RK serve as primary repositories. However, they differ fundamentally in scope, structure, and the contextual depth of their data, which directly affects their utility for reliable systems biology modeling and drug discovery [21] [18]. BRENDA offers unparalleled breadth of enzyme-centric data, whereas SABIO-RK provides deeper, reaction-oriented context, including kinetic rate laws and experimental conditions [21] [39]. This guide compares their content and capabilities using reported statistics. It then situates them within a modern workflow that includes emerging machine learning frameworks and standardized reporting initiatives such as STRENDA, which are essential for improving parameter reliability [18] [40] [20].

Database Comparison: Core Characteristics & Performance

The selection between BRENDA and SABIO-RK hinges on the specific research question. The following tables break down their quantitative content, data models, and access capabilities.

Table 1: Quantitative Content and Coverage (Representative Statistics)

| Feature | BRENDA (as referenced in comparative studies) | SABIO-RK (reported data) | Implication for Reliability Assessment |
|---|---|---|---|
| Primary Focus | Enzyme-centric information and kinetic constants [21]. | Biochemical reactions and their kinetic properties [21] [39]. | BRENDA is optimal for enzyme-specific queries; SABIO-RK is better for pathway/modeling contexts. |
| Data Curation | Mix of manual curation and automated text mining (KENDA) [20]. | Manually curated by biological experts from literature [21] [39]. | Manual curation (SABIO-RK) may offer higher accuracy for complex data; automated mining (BRENDA) enables scale. |
| Key Kinetic Parameters | Contains kinetic constants (Km, kcat, Ki) [40]. | Contains kinetic parameters, plus associated kinetic rate laws and formulas [21]. | SABIO-RK provides directly model-ready mathematical relationships, reducing interpretation error. |
| Organism Coverage | Very broad (comprehensive enzyme database) [20]. | ~934 organisms (as of 2017), focused on eukaryotes and bacteria [21]. | BRENDA may have wider species coverage; SABIO-RK content is shaped by past projects/user requests [21]. |
| Content Volume (Entries) | Cited as containing ~87,000 kcat, 176,000 Km, and 46,000 Ki entries (2022 release) [40]. | ~57,000 database entries from >5,600 publications (2017) [21]. | BRENDA's larger raw volume offers more data points, but requires rigorous filtering for consistency. |
| Experimental Context | Provides assay conditions (pH, temp, etc.) [18]. | Explicitly stores detailed environmental conditions and experimental setups [21] [39]. | SABIO-RK's structured experimental data is critical for assessing parameter fitness for specific conditions [18]. |
| Mutant Data | Includes mutant enzyme data. | ~25% of entries are for specific mutant enzyme variants [21]. | Both are valuable for enzyme engineering studies, allowing wild-type/mutant comparisons. |

Table 2: Data Model, Access, and Integration

| Feature | BRENDA | SABIO-RK | Implication for Reliability Assessment |
|---|---|---|---|
| Data Model | Enzyme-centric. Each entry centers on an enzyme and its properties [21]. | Reaction-centric. Each entry describes a single reaction under specific conditions [21] [41]. | SABIO-RK's model aligns directly with the needs of kinetic modelers building reaction networks. |
| Search Interface | Standard database search with parameter statistics visualization [41]. | Advanced search with free text, filters, and interactive visual search (heat maps, parallel coordinates) [21] [41]. | SABIO-RK's visual tools help identify clusters, outliers, and parameter distributions, aiding reliability checks [41]. |
| Data Export Formats | Standard database formats. | SBML, BioPAX, Matlab, spreadsheet formats [21]. | Direct export to modeling formats (SBML) from SABIO-RK reduces manual transcription errors. |
| API/Integration | Web services available. | REST-ful web services; integrated into systems biology tools (COPASI, CellDesigner, etc.) [21]. | Programmatic access (SABIO-RK) facilitates reproducible workflows and integration into modeling pipelines. |
| External Links | Links to multiple resources. | Extensive links to UniProt, KEGG, ChEBI, GO, PubMed, etc. [21]. | Both enable cross-validation with authoritative sources, a key step in reliability assessment. |

Experimental Protocols for Reliability Assessment

Assessing the reliability of parameters sourced from these databases requires systematic validation. The following protocols are synthesized from best practices and recent research.

Protocol 1: Cross-Database Validation and Outlier Detection

This protocol is designed to identify and reconcile discrepancies between database entries for the same nominal parameter.

- Parameter Selection: Identify a target enzyme (via EC number) and kinetic parameter (e.g., Km for a primary substrate).

- Parallel Query: Execute identical queries in both BRENDA and SABIO-RK. Extract all reported values, ensuring you capture associated metadata: organism, tissue, pH, temperature, and publication source [21] [20].

- Data Structuring: Compile values into a table with columns for: Parameter Value, Organism, Experimental Conditions (pH, Temp), Publication PMID, and Source Database.

- Statistical & Contextual Analysis:

  - Calculate basic statistics (mean, median, standard deviation, geometric mean for log-normal distributions) [20].

  - Use SABIO-RK's visual search tools (e.g., scatter plots, parallel coordinates) to visualize the distribution and cluster data points by experimental conditions [41].

  - Flag outliers (e.g., values more than 3 standard deviations from the mean of the log-transformed values) [20].

- Source Investigation: For outlier values and a representative sample of consensus values, retrieve the original publications. Check for adherence to STRENDA (STandards for Reporting ENzymology DAta) guidelines, such as clear initial rate conditions and full assay descriptions [18].

- Curation of a "Gold Standard" Set: Based on the above, select values obtained under conditions closest to your target physiological or experimental context, prioritizing studies with complete methodological reporting.
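The structuring, statistics, outlier, and selection steps of Protocol 1 can be sketched in plain Python. Everything in the sketch is hypothetical: the `KmEntry` record type, the Km values, the placeholder reference IDs, and the pH/temperature weighting used to rank entries by closeness to the target conditions.

```python
import math
import statistics
from dataclasses import dataclass


@dataclass
class KmEntry:
    """One row of the cross-database comparison table (hypothetical schema)."""
    value_mM: float
    organism: str
    ph: float
    temp_c: float
    ref: str      # publication identifier, e.g. a PMID
    source: str   # "BRENDA" or "SABIO-RK"


def summarize(entries):
    """Basic statistics; the geometric mean suits log-normally distributed constants."""
    values = [e.value_mM for e in entries]
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "sd": statistics.stdev(values),
        "geometric_mean": statistics.geometric_mean(values),
    }


def flag_log_outliers(entries, n_sd=3.0):
    """Entries more than n_sd sample SDs from the mean of log10(value)."""
    logs = [math.log10(e.value_mM) for e in entries]
    mu = statistics.mean(logs)
    sd = statistics.stdev(logs)
    return [e for e, x in zip(entries, logs) if abs(x - mu) > n_sd * sd]


def closest_to_conditions(entries, target_ph, target_temp_c, k=3):
    """Rank entries by distance to target conditions (1 pH unit ~ 10 degC, arbitrary)."""
    def distance(e):
        return math.hypot(e.ph - target_ph, (e.temp_c - target_temp_c) / 10.0)
    return sorted(entries, key=distance)[:k]


# Hypothetical Km values (mM) for one enzyme-substrate pair; IDs are placeholders.
consensus = [0.08, 0.09, 0.10, 0.11, 0.12, 0.10, 0.09,
             0.11, 0.10, 0.12, 0.08, 0.10, 0.11, 0.09]
entries = [
    KmEntry(v, "Escherichia coli", 7.0 + 0.5 * (i % 3), 25.0 if i % 2 else 37.0,
            f"ref-{i}", "BRENDA" if i % 2 else "SABIO-RK")
    for i, v in enumerate(consensus)
]
entries.append(KmEntry(10.0, "Escherichia coli", 5.0, 37.0, "ref-99", "BRENDA"))

stats = summarize(entries)
outliers = flag_log_outliers(entries)
kept = [e for e in entries if e not in outliers]
gold = closest_to_conditions(kept, target_ph=7.5, target_temp_c=37.0)

print(stats)
print("flagged:", [e.ref for e in outliers])
print("closest to pH 7.5 / 37 degC:", [e.ref for e in gold])
```

The 3-SD rule has a sample-size limitation. When the SD is computed from the same n values, no single point can lie more than (n - 1)/√n sample SDs from the mean. For n ≤ 10 that bound is below 3, so the rule cannot flag anything. For small sets, median-based criteria or direct inspection of the source papers are more informative.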

Protocol 2: Benchmarking Database-Derived Parameters in a Kinetic Model

This experimental protocol tests the functional reliability of database-sourced parameters in a practical modeling context.

- Pathway Definition: Select a small, well-defined metabolic pathway (e.g., a segment of glycolysis).

- Parameter Acquisition: Build a parameter set using either:

  - BRENDA-Sourced: Collect Km and kcat values for each enzyme, applying filters for the correct organism and tissue.

  - SABIO-RK-Sourced: Collect reactions, specifying the need for kinetic rate laws. Export the model scaffold in SBML format [21].

- Model Construction: Construct an ODE-based kinetic model using software like COPASI or PySCeS.

- Experimental Benchmarking: If possible, perform a simple wet-lab experiment (e.g., monitoring substrate depletion or product formation in a cell lysate) to generate a time-course dataset for the pathway.

- Model Simulation & Comparison: Simulate the model under the same initial conditions as the experiment. Compare the simulation output (metabolite dynamics) to the experimental data.

- Sensitivity Analysis: Perform parameter sensitivity analysis to identify which kinetic parameters have the greatest influence on model output. Parameters that are both highly influential and poorly constrained (for example, those with high coefficients of variation across database entries) are where reliability is most critical [18].

- Iterative Refinement: Manually adjust the most sensitive parameters within their plausible ranges (based on database distributions) to improve the model fit. The need for significant adjustment indicates potential reliability issues in the database-derived value.
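The model-construction and sensitivity steps of Protocol 2 can be prototyped before moving to COPASI or PySCeS. The sketch below is a minimal pure-Python stand-in. It uses a hypothetical two-step pathway S → I → P with Michaelis-Menten rate laws, fixed-step RK4 integration, and hypothetical parameter values. It estimates normalized sensitivity coefficients, d ln[P](t_end)/d ln(parameter), by central finite differences with a ±1% perturbation.

```python
# Hypothetical parameter set (mM and mM/min); in Protocol 2 these would come
# from BRENDA or SABIO-RK.
PARAMS = {"vmax1": 0.10, "km1": 0.50, "vmax2": 0.05, "km2": 0.20}


def rates(state, p):
    """Michaelis-Menten rate laws for the hypothetical pathway S -> I -> P."""
    s, i, _ = state
    v1 = p["vmax1"] * s / (p["km1"] + s)
    v2 = p["vmax2"] * i / (p["km2"] + i)
    return (-v1, v1 - v2, v2)


def simulate(p, s0=1.0, t_end=30.0, dt=0.01):
    """Integrate the ODEs with a fixed-step classical Runge-Kutta (RK4) scheme."""
    state = (s0, 0.0, 0.0)
    times, traj = [0.0], [state]
    for step in range(round(t_end / dt)):
        k1 = rates(state, p)
        k2 = rates(tuple(x + 0.5 * dt * d for x, d in zip(state, k1)), p)
        k3 = rates(tuple(x + 0.5 * dt * d for x, d in zip(state, k2)), p)
        k4 = rates(tuple(x + dt * d for x, d in zip(state, k3)), p)
        state = tuple(
            x + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for x, a, b, c, d in zip(state, k1, k2, k3, k4)
        )
        times.append((step + 1) * dt)
        traj.append(state)
    return times, traj


def final_product(p):
    """Product concentration at the end of the simulation."""
    return simulate(p)[1][-1][2]


def normalized_sensitivities(p, rel_step=0.01):
    """d ln(P_final) / d ln(parameter), estimated by central finite differences."""
    base = final_product(p)
    result = {}
    for name in p:
        up, down = dict(p), dict(p)
        up[name] *= 1.0 + rel_step
        down[name] *= 1.0 - rel_step
        result[name] = (final_product(up) - final_product(down)) / (2.0 * rel_step * base)
    return result


times, traj = simulate(PARAMS)
sens = normalized_sensitivities(PARAMS)
for name, value in sorted(sens.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>6}: {value:+.3f}")
```

Ranking by absolute coefficient shows which database values deserve the closest scrutiny. A wet-lab time course (the experimental benchmarking step) would add a residual comparison between `traj` and the measured metabolite concentrations. Those comparisons are not shown here.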

Visual Workflow for Reliability Assessment

The following diagrams map the logical process of assessing parameter reliability using databases and complementary tools.

Diagram Title: Workflow for Assessing Database Kinetic Parameter Reliability

Diagram Title: Ecosystem for Kinetic Data Reliability & Applications

This table details key resources, both physical and digital, essential for experimental and computational work in enzyme kinetics and reliability assessment.

Table 3: Research Reagent Solutions & Essential Resources

| Item / Resource | Function / Purpose in Reliability Assessment | Key Considerations & Examples |
|---|---|---|
| STRENDA Guidelines & Database | Defines the minimum information required for reporting enzymology data to ensure reproducibility and assessability [18] [20]. | A critical checklist when reviewing source literature. Journals increasingly require STRENDA compliance. |
| SKiD (Structure-oriented Kinetics Dataset) | Integrates kinetic parameters (kcat, Km) with 3D structural data of enzyme-substrate complexes [20]. | Allows correlation of kinetic values with structural features, adding a layer of validation beyond numerical value. |
| Machine Learning Frameworks (CatPred, UniKP, GELKcat) | Predict kinetic parameters (kcat, Km, Ki) for uncharacterized enzymes; some frameworks also provide uncertainty estimates [40] [42] [43]. | Not a replacement for experimental data, but useful for validation (e.g., flagging predictions far from experimental values) and filling gaps with quantified uncertainty [40]. |
| GotEnzymes2 Database | Provides millions of predicted enzyme kinetic and thermal parameters (kcat, Km, optimal T) using benchmarked ML models [44]. | Offers broad coverage for initial screening or hypothesis generation. Users must be aware it is a prediction resource, not a repository of experimental measurements. |
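The validation use listed for machine learning frameworks, flagging predictions far from experimental values, can be sketched as a comparison on the log10 scale. The tenfold threshold, the 2-SD interval, the representation of predictive uncertainty as a log10 mean and SD, and all values are hypothetical. They do not describe the output format of any specific framework.

```python
import math


def log10_fold_error(predicted, measured):
    """Absolute difference, in orders of magnitude, between two positive values."""
    return abs(math.log10(predicted) - math.log10(measured))


def flag_discrepancies(records, max_log_error=1.0):
    """IDs whose prediction and measurement differ by more than max_log_error decades."""
    return [rid for rid, pred, meas in records if log10_fold_error(pred, meas) > max_log_error]


def outside_interval(measured, pred_log10_mean, pred_log10_sd, k=2.0):
    """True if a measurement lies outside mean +/- k*sd of a log10-scale prediction."""
    return abs(math.log10(measured) - pred_log10_mean) > k * pred_log10_sd


# Hypothetical (entry ID, predicted kcat, measured kcat) triples, in s^-1.
records = [
    ("entry-A", 12.0, 10.0),
    ("entry-B", 0.4, 55.0),
    ("entry-C", 300.0, 80.0),
]
print("flagged:", flag_discrepancies(records))
print(outside_interval(55.0, pred_log10_mean=math.log10(0.4), pred_log10_sd=0.5))
```

A flagged entry is not automatically wrong. Either the measurement or the prediction may be at fault, so flagged pairs are candidates for the source-investigation step of Protocol 1 rather than for deletion.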