Bayesian Optimization in Protein Engineering: A Data-Driven Guide for Accelerating Therapeutic Discovery

Abstract

This comprehensive guide explores Bayesian optimization (BO) as a transformative framework for protein engineering. It begins by establishing the core statistical principles of BO and its necessity in navigating high-dimensional, resource-intensive protein fitness landscapes. The article details the practical workflow, from selecting acquisition functions and surrogate models to integrating wet-lab experiments in automated platforms. We address common experimental pitfalls, computational bottlenecks, and strategies for model optimization. Finally, we validate BO's performance by comparing it to traditional methods (e.g., Directed Evolution, Random Search) and highlighting recent breakthroughs in therapeutic protein design. Tailored for researchers and drug development professionals, this article provides the methodological insights and troubleshooting guidance needed to implement BO for efficient protein optimization.

Why Bayesian Optimization? Mastering the Fundamentals for Protein Fitness Landscapes

Technical Support Center: Troubleshooting Bayesian Optimization in Protein Engineering

FAQs & Troubleshooting Guides

Q1: During an initial Bayesian optimization (BO) campaign for enzyme activity, my first 5 experimental batches showed no improvement over wild-type. Should I abort the campaign? A1: Not necessarily. This is a common scenario. BO requires an initial phase of exploration.

- Diagnosis: The acquisition function (e.g., Expected Improvement) may be prioritizing exploration of the vast sequence space. The model's uncertainty is still high.

- Solution: First, verify your experimental assays for consistency. Ensure your model uses a sensible kernel (e.g., Matern 5/2 for protein landscapes). Do not adjust the model hyperparameters prematurely. Continue for at least 10-15 iterations. If no improvement is seen by then, revisit your feature representation (e.g., from one-hot encoding to physicochemical embeddings).

Q2: My BO model's predictions and the actual experimental results diverge significantly after several rounds. What could be wrong? A2: This indicates model breakdown, often due to the "search space shift" problem.

- Diagnosis: The model was trained on data from a region of sequence space that is no longer representative of the current promising regions. The assumed smoothness of the landscape is violated.

- Solution:

- Retrain from Scratch: Discard old data from poor-performing regions and retrain the Gaussian Process (GP) model only on data from the last few promising batches.

- Incorporate Ensemble Methods: Switch to using a Random Forest or Gradient Boosting Machine as the surrogate model, which can handle more complex, non-stationary landscapes.

- Adjust the Kernel: Implement a spectral mixture kernel or combine multiple kernels to capture different scales of variation.

Q3: The computational cost of training the Gaussian Process model is becoming prohibitive as my experimental dataset grows beyond 500 variants. What are my options? A3: This is a key scalability challenge.

- Diagnosis: Standard GP regression scales cubically, O(n³), with the number of data points n.

- Solution: Implement scalable approximate GP methods.

- Sparse Variational GPs: Use inducing points to approximate the full dataset.

- Deep Kernel Learning: Combine neural networks for feature extraction with a GP layer, improving scalability and representation power.

- Switch to Bayesian Neural Networks: While potentially less data-efficient, they scale better for very large n.

Key Experimental Protocols

Protocol 1: Setting Up a Baseline BO Campaign for Protein Thermostability (Tm) Objective: To find protein variants with increased melting temperature (Tm) using a library of 10^5 possible single and double mutants.

- Feature Encoding: Encode each variant using a one-hot encoding of amino acid identities at variable positions. Consider adding predicted structural features (e.g., solvent accessibility, secondary structure) as additional dimensions.

- Initial Design: Use a space-filling design (e.g., Sobol sequence) or a diverse subset from existing data to select the first 20-30 variants for experimental characterization.

- Surrogate Model: Initialize a Gaussian Process model with a Matern 5/2 kernel and automatic relevance determination (ARD).

- Acquisition Function: Use Expected Improvement (EI) with a small jitter parameter (ξ=0.01) to balance exploration/exploitation.

- Batch Selection: For each cycle, select the next batch of 5-8 variants using a parallel acquisition strategy (e.g., q-EI, or Thompson Sampling).

- Experimental Loop: Express, purify, and measure Tm via DSF (Differential Scanning Fluorimetry) for selected variants. Append results to the training dataset and re-train the GP model. Iterate for 15-20 cycles.
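The steps of Protocol 1 can be sketched end to end as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the candidate pool, the three variable positions, and `measure_tm` (a synthetic stand-in for the DSF measurement) are hypothetical, the initial design is random rather than Sobol-based, and the batch is chosen by a greedy top-5 EI ranking instead of a true q-EI strategy.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern

rng = np.random.default_rng(0)
AAS = "ACDEFGHIKLMNPQRSTVWY"
N_POS = 3  # hypothetical number of variable positions


def one_hot(variant):
    """Encode a variant string as a flat 20-per-position one-hot vector."""
    x = np.zeros((len(variant), len(AAS)))
    for pos, aa in enumerate(variant):
        x[pos, AAS.index(aa)] = 1.0
    return x.ravel()


def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected Improvement for a maximization problem."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm.cdf(z) + sigma * norm.pdf(z)


def measure_tm(x):
    """Synthetic stand-in for a DSF measurement (NOT real data)."""
    weights = np.linspace(-1.0, 1.0, x.size)
    return 50.0 + 5.0 * float(x @ weights) + rng.normal(0.0, 0.2)


# Candidate pool: a random sample standing in for the real mutant library.
pool = sorted({"".join(rng.choice(list(AAS), N_POS)) for _ in range(300)})
X_pool = np.array([one_hot(v) for v in pool])

# Initial design: 20 variants (random here; a space-filling design is preferable).
tested = [int(i) for i in rng.choice(len(pool), size=20, replace=False)]
y = [measure_tm(X_pool[i]) for i in tested]

for cycle in range(5):  # the protocol suggests 15-20 cycles
    # One length scale per input dimension gives ARD behaviour.
    kernel = Matern(nu=2.5, length_scale=np.ones(X_pool.shape[1]))
    gp = GaussianProcessRegressor(kernel=kernel, alpha=1e-2, normalize_y=True)
    gp.fit(X_pool[tested], np.array(y))

    seen = set(tested)
    untested = [i for i in range(len(pool)) if i not in seen]
    mu, sigma = gp.predict(X_pool[untested], return_std=True)
    ei = expected_improvement(mu, sigma, best=max(y))

    batch = [untested[j] for j in np.argsort(ei)[::-1][:5]]  # greedy top-5
    tested.extend(batch)
    y.extend(measure_tm(X_pool[i]) for i in batch)

best_variant = pool[tested[int(np.argmax(y))]]
```

In a real campaign, `measure_tm` would be replaced by the wet-lab result for each selected variant, and the loop would pause between cycles while the batch is expressed and assayed.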

Protocol 2: Handling Noisy High-Throughput Screening Data for Binding Affinity (KD) Objective: Optimize protein-protein binding affinity using a noisy yeast display or phage display screening output (e.g., sequencing counts).

- Data Preprocessing: Normalize sequence counts per batch. Transform counts into log-enrichment scores relative to a reference. Model the score variance as a function of mean to estimate uncertainty for each variant.

- Probabilistic Model: Use a Heteroskedastic GP model, which learns input-dependent noise levels. This prevents the optimizer from being misled by high-variance measurements.

- Acquisition: Use the Noisy Expected Improvement acquisition function, which explicitly accounts for measurement noise in the experimental data.

- Validation: Periodically (every 3-4 cycles) validate top predictions using a low-throughput, high-accuracy method (e.g., SPR or BLI).
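The preprocessing step can be illustrated with a short sketch. It assumes pre- and post-selection read counts per variant, a pseudocount of 0.5, and a Poisson-based delta-method approximation for the variance (roughly 1/count on the natural-log scale); this count-based approximation is a substitute for fitting an empirical mean-variance curve, and it treats the reference variant as noise-free. The function name and example counts are hypothetical.

```python
import numpy as np


def log_enrichment(pre_counts, post_counts, ref_idx, pseudocount=0.5):
    """Return log2 enrichment relative to a reference variant and an
    approximate per-variant variance (reference treated as noise-free)."""
    pre = np.asarray(pre_counts, dtype=float) + pseudocount
    post = np.asarray(post_counts, dtype=float) + pseudocount

    # Normalize counts to frequencies within each sample, then take the log ratio.
    ratio = np.log2(post / post.sum()) - np.log2(pre / pre.sum())
    score = ratio - ratio[ref_idx]

    # Delta-method variance under Poisson counting noise, converted to log2 units.
    variance = (1.0 / pre + 1.0 / post) / np.log(2) ** 2
    return score, variance


# Hypothetical counts: reference, an enriched variant, a depleted low-count variant.
score, variance = log_enrichment([1000, 500, 20], [1000, 1500, 5], ref_idx=0)
```

The per-variant `variance` can then be supplied to a heteroskedastic model as the known noise level for each observation; low-count variants receive larger variances and therefore less influence on the fit.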

Table 1: Comparison of Surrogate Models for Protein Engineering BO

| Model | Typical Data Size (n) | Computational Scaling | Handles Non-Stationary Data? | Best For |

|---|---|---|---|---|

| Gaussian Process (GP) | < 500 | O(n³) | Poor (without custom kernel) | Data-efficient search, uncertainty quantification |

| Sparse Variational GP | 500 - 10,000 | O(m²n), where m ≪ n is the number of inducing points | Moderate | Medium-scale campaigns |

| Random Forest | > 100 | O(n features * n log n) | Excellent | Complex, rugged landscapes, parallel batches |

| Bayesian Neural Network | > 1,000 | O(n parameters) | Good | Very large datasets, integration w/ deep learning |

Table 2: Common Acquisition Functions and Their Parameters

| Function | Key Parameter(s) | Effect of Increasing Parameter | Recommended Use Case |

|---|---|---|---|

| Expected Improvement (EI) | ξ (jitter) | More exploration | General-purpose, balanced search |

| Upper Confidence Bound (UCB) | β (balance weight) | More exploration | Theoretical convergence guarantees |

| Probability of Improvement (PI) | ξ (trade-off) | More exploration | Pure exploitation (not recommended alone) |

| Thompson Sampling | (None) | N/A | Parallel batch selection, simple implementation |
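For reference, the three parameterized functions in Table 2 are commonly written as below for a maximization problem. The notation is an assumption, since the table does not define it: μ(x) and σ(x) are the surrogate's posterior mean and standard deviation, f* is the best value observed so far, and Φ and φ are the standard normal CDF and PDF. Some libraries weight σ(x) by √β rather than β, so check the convention of the tool you use.

```latex
\begin{aligned}
z(x) &= \frac{\mu(x) - f^{*} - \xi}{\sigma(x)} \\
\mathrm{EI}(x) &= \bigl(\mu(x) - f^{*} - \xi\bigr)\,\Phi\bigl(z(x)\bigr) + \sigma(x)\,\varphi\bigl(z(x)\bigr) \\
\mathrm{PI}(x) &= \Phi\bigl(z(x)\bigr) \\
\mathrm{UCB}(x) &= \mu(x) + \beta\,\sigma(x)
\end{aligned}
```

In each case, a larger ξ or β reduces the weight of the predicted mean relative to the uncertainty, which is why the table lists "more exploration" as the effect of increasing the parameter.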

Visualizations

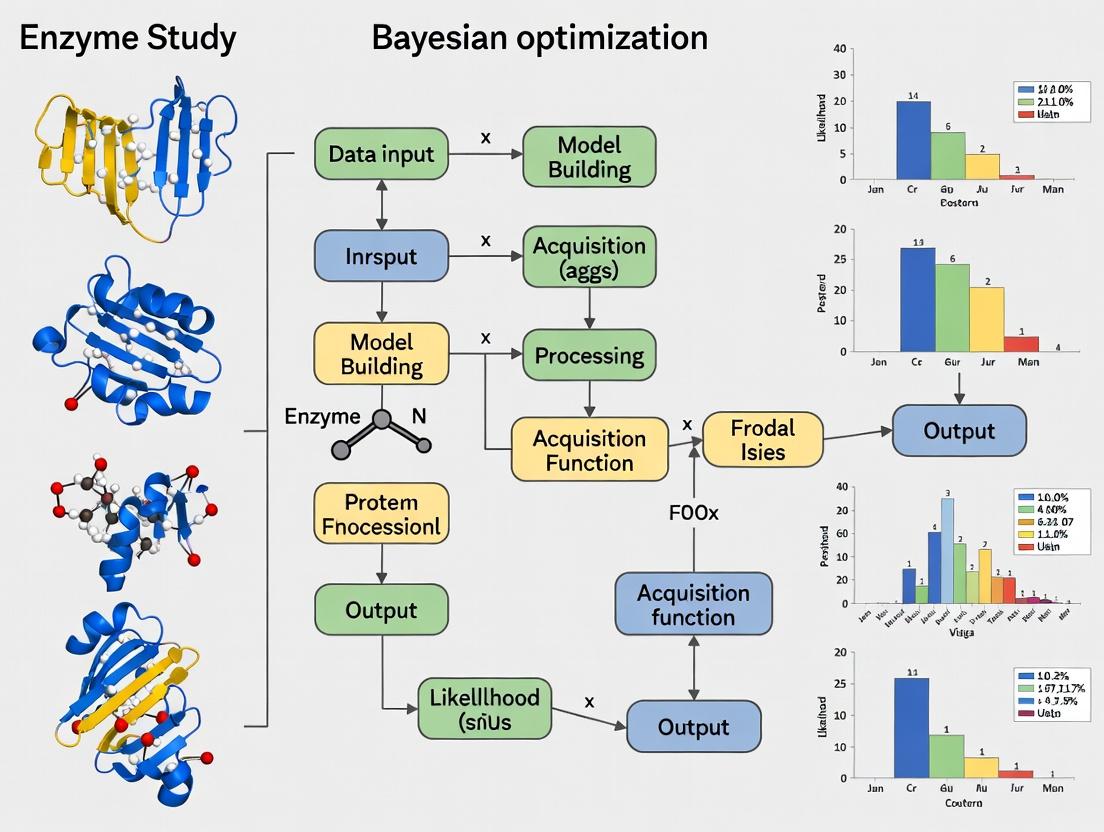

BO Workflow for Protein Engineering

Bayesian Optimization Core Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in BO-Driven Protein Engineering |

|---|---|

| Directed Evolution Library (e.g., NNK degenerate primers) | Creates the initial diverse sequence space for the first BO batch. Essential for exploration. |

| High-Throughput Expression System (e.g., 96-well microplate cultures) | Enables parallel production of the batch of protein variants proposed by the BO algorithm. |

| Rapid Purification Kit (e.g., His-tag plates/beads) | Facilitates fast, parallel purification of multiple variants for functional assays. |

| Stability Assay Reagents (e.g., SYPRO Orange for DSF) | Provides the quantitative fitness metric (Tm) for training the surrogate model on stability. |

| Binding Affinity Reagents (e.g., Biotinylated ligand for SPR/BLI) | Provides the quantitative fitness metric (KD) for optimizing protein-protein interactions. |

| Next-Generation Sequencing (NGS) Kit | Critical for analyzing pooled screening outputs (e.g., from phage display), generating data for noisy, high-throughput BO campaigns. |

| Positive/Negative Control Plasmids | Essential for normalizing experimental batch effects and ensuring data quality for robust model training. |

This technical support center provides guidance for researchers applying Bayesian optimization (BO) in protein engineering. Our troubleshooting guides and FAQs address common pitfalls in constructing probabilistic models, defining acquisition functions, and executing sequential experimental loops, all within the context of optimizing protein properties like stability, binding affinity, or enzymatic activity.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My Gaussian Process (GP) model fails to converge or produces "ill-conditioned matrix" errors during fitting. What should I do? A: This is often caused by numerical instability: near-duplicate (highly correlated) data points, or a length-scale that is very large relative to the spacing of the data, make rows of the kernel matrix almost identical.

- Action 1: Add a WhiteKernel. Explicitly add a `WhiteKernel` (e.g., `WhiteKernel(noise_level=1e-3)`) to your composite kernel to model experimental noise and improve matrix conditioning.

- Action 2: Increase `alpha` in the regressor. Use the `alpha` parameter (e.g., in scikit-learn's `GaussianProcessRegressor`) to add a diagonal value to the kernel matrix during fitting, acting as a regularization term.

- Action 3: Normalize your input data. Ensure all your protein sequence features (e.g., descriptors, embeddings) and target values (e.g., fluorescence, affinity score) are standardized to zero mean and unit variance.
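To see why a diagonal term helps, the following sketch builds a kernel matrix containing a near-duplicate point and compares its condition number before and after adding a small diagonal value. The RBF kernel, the random features, and the value 1e-3 are illustrative assumptions.

```python
import numpy as np


def rbf_kernel(X, length_scale=1.0):
    """Squared-exponential kernel matrix for the rows of X."""
    sq_dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


rng = np.random.default_rng(0)
X = rng.normal(size=(30, 4))
X[1] = X[0] + 1e-9  # near-duplicate variant, e.g. a repeated measurement

K = rbf_kernel(X)
cond_raw = np.linalg.cond(K)                              # extremely large
cond_reg = np.linalg.cond(K + 1e-3 * np.eye(len(X)))      # bounded by the added diagonal
```

The added diagonal plays the same role as `alpha` or a `WhiteKernel` term: it lifts the smallest eigenvalue away from zero so the Cholesky factorization used during GP fitting remains stable.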

Q2: The acquisition function (e.g., Expected Improvement) suggests samples very close to existing ones, failing to explore new regions. How can I encourage more exploration? A: This indicates over-exploitation. Adjust the balance between exploration and exploitation.

- Action 1: Tune the acquisition function's exploration parameter. Increase `xi` in Expected Improvement (EI), or `kappa` in Upper Confidence Bound (UCB), to weight unexplored regions more heavily.

- Action 2: Use a different acquisition function. Switch to an explicitly explorative function like UCB with a high `kappa` parameter for early iterations.

- Action 3: Add a minimum distance constraint. Programmatically reject candidate points within a specified Euclidean distance of previous experiments to enforce diversity in your protein sequence space.
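A minimum distance constraint can be sketched as a simple filter over candidate feature vectors. The function name, the toy coordinates, and the threshold are hypothetical.

```python
import numpy as np


def filter_by_min_distance(candidates, tested, min_dist):
    """Return indices of candidates at least `min_dist` (Euclidean)
    away from every previously tested point."""
    candidates = np.atleast_2d(np.asarray(candidates, dtype=float))
    tested = np.atleast_2d(np.asarray(tested, dtype=float))
    dists = np.linalg.norm(candidates[:, None, :] - tested[None, :, :], axis=-1)
    return np.flatnonzero(dists.min(axis=1) >= min_dist)


tested = [[0.0, 0.0], [1.0, 1.0]]
candidates = [[0.05, 0.0], [0.5, 0.5], [1.0, 1.2], [2.0, 2.0]]
keep = filter_by_min_distance(candidates, tested, min_dist=0.3)
```

For one-hot encoded sequences, the squared Euclidean distance equals twice the number of differing positions, so a threshold can be chosen directly in terms of a minimum number of mutations.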

Q3: My sequential optimization loop appears stuck in a local optimum of the protein fitness landscape. How can I escape it? A: Local optima are common in rugged protein fitness landscapes.

- Action 1: Introduce random exploration steps. Implement a mixed strategy where, with a small probability (e.g., 5%), the next experiment is chosen completely at random from the design space, not by the acquisition function.

- Action 2: Use a multi-start optimization for the acquisition function. When maximizing the acquisition function to propose the next point, run the optimizer from multiple random starting positions to find a global, not just local, maximum of the acquisition landscape.

- Action 3: Periodically re-initialize or adapt the kernel. If the model is too confident in a region, consider restarting the GP with a different kernel length-scale or using a periodic transformation if you suspect multimodality.
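The mixed strategy in Action 1 amounts to an epsilon-greedy rule over a discrete candidate list. A minimal sketch, assuming acquisition values have already been computed for each candidate (the function name and values are hypothetical):

```python
import numpy as np


def choose_next(acq_values, rng, epsilon=0.05):
    """Pick the acquisition maximizer, except with probability `epsilon`
    pick a uniformly random candidate instead."""
    if rng.random() < epsilon:
        return int(rng.integers(len(acq_values))), "random"
    return int(np.argmax(acq_values)), "acquisition"


rng = np.random.default_rng(1)
acq = np.array([0.1, 0.7, 0.3])
index, source = choose_next(acq, rng, epsilon=0.05)
```

Recording the `source` label alongside each experiment makes it possible to check later whether the random picks contributed to escaping the plateau.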

Q4: How do I effectively incorporate known protein biophysical constraints (e.g., structural viability) into the Bayesian optimization loop? A: Constraints must be integrated into the proposal mechanism.

- Action 1: Use a constrained acquisition function. Formulate the constraint as a second GP classifier or regressor and use a constrained acquisition method like Constrained Expected Improvement.

- Action 2: Pre-filter candidate proposals. Generate a batch of candidate points from the acquisition function, then filter out those predicted (by a separate rule-based or machine learning model) to violate key constraints (e.g., unstable folding) before selecting the final experiment.

- Action 3: Encode constraints directly into the input space. Design your feature representation (e.g., using latent space coordinates from a generative model trained on stable proteins) so that the search space inherently respects the constraints.
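One common form of Constrained Expected Improvement multiplies EI by the probability that the constraint is satisfied. The sketch below assumes independent Gaussian posteriors for fitness and for a constraint quantity (for example, a predicted folding score that must exceed a threshold); all numbers are illustrative.

```python
import numpy as np
from scipy.stats import norm


def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected Improvement for a maximization problem."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm.cdf(z) + sigma * norm.pdf(z)


def constrained_ei(mu_f, sigma_f, best_feasible, mu_c, sigma_c, threshold):
    """EI weighted by P(constraint value >= threshold)."""
    sigma_c = np.maximum(sigma_c, 1e-12)
    p_feasible = 1.0 - norm.cdf((threshold - mu_c) / sigma_c)
    return expected_improvement(mu_f, sigma_f, best_feasible) * p_feasible


# Two candidates with identical fitness posteriors but different feasibility.
mu_f, sigma_f = np.array([1.2, 1.2]), np.array([0.3, 0.3])
mu_c, sigma_c = np.array([0.9, 0.2]), np.array([0.1, 0.1])
cei = constrained_ei(mu_f, sigma_f, 1.0, mu_c, sigma_c, threshold=0.5)
```

The second candidate is strongly down-weighted because the constraint model predicts it is unlikely to be viable, even though its predicted fitness is the same.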

Experimental Protocol: A Standard Bayesian Optimization Cycle for Protein Engineering

Objective: To iteratively optimize a target protein property (e.g., thermostability expressed as Tm) over a defined sequence or mutant space.

Protocol Steps:

- Initial Design of Experiment (DoE):

- Select 10-20 initial protein variants using a space-filling design (e.g., Latin Hypercube Sampling) over your parameterized sequence space.

- Express, purify, and assay these variants to obtain initial fitness data.

- Model Initialization:

- Standardize input features (e.g., one-hot encoded mutations, physicochemical descriptors) and target values.

- Define a GP model with a composite kernel (e.g., `Matern() + WhiteKernel()`).

- Fit the GP to the initial data.

- Sequential Loop (iterate until the budget is exhausted):

- a. Surrogate Model Update: Re-fit the GP model using all data collected to date.

- b. Acquisition Optimization: Maximize the Expected Improvement (EI) acquisition function over the entire sequence space.

- c. Candidate Selection: The point maximizing EI is selected as the next protein variant to test.

- d. Wet-Lab Experimentation: Conduct the experiment (cloning, expression, purification, assay) for the chosen variant.

- e. Data Augmentation: Append the new `(variant, measured_fitness)` pair to the dataset.

- Validation:

- After the loop, characterize the top-performing identified variants with biological replicates.

- Validate model predictions on a held-out test set of variants.

Bayesian Optimization Workflow for Protein Engineering

Diagram Title: Bayesian Optimization Loop for Protein Design

Research Reagent Solutions Toolkit

| Item | Function in Bayesian Optimization for Protein Engineering |

|---|---|

| Plasmid Library (e.g., Site-saturation Mutagenesis) | Provides the foundational genetic diversity for the initial Design of Experiments (DoE) and subsequent variant testing. |

| High-Throughput Expression System (e.g., E. coli, yeast, cell-free) | Enables parallel production of dozens to hundreds of protein variants for initial screening and iterative testing. |

| Thermofluor Dye (e.g., SYPRO Orange) | Allows rapid, high-throughput measurement of protein thermostability (Tm) as a key fitness parameter for optimization. |

| Microplate Reader (Fluorescence-capable) | Essential for running and reading high-throughput assays (e.g., thermal shift, enzymatic activity, binding). |

| Gaussian Process Software (e.g., scikit-learn, GPyTorch, BoTorch) | Provides the computational backbone for building the surrogate model and calculating acquisition functions. |

| Automated Liquid Handling System | Critical for minimizing manual error and enabling reproducibility in preparing assays and variant samples. |

Comparison of Common Acquisition Functions

| Acquisition Function | Key Parameter(s) | Best For | Risk of Stagnation |

|---|---|---|---|

| Expected Improvement (EI) | xi (exploration weight) | General-purpose optimization; balanced search. | Medium (can exploit if xi is low) |

| Upper Confidence Bound (UCB) | kappa (balance parameter) | Explicit exploration; theoretical guarantees. | Low (with high kappa) |

| Probability of Improvement (PI) | xi (trade-off) | Simple, quick convergence to any improvement. | High (very greedy) |

| Thompson Sampling | Random draws from posterior | Natural trade-off; good for batch/parallel settings. | Low |

Typical Model Hyperparameters and Ranges

| Hyperparameter | Description | Typical Value/Range (Initial) |

|---|---|---|

| Kernel Length Scale | Determines smoothness of GP. | 1.0 (after data normalization) |

| Kernel Variance | Output scale of GP. | 1.0 (after target normalization) |

| Alpha / Noise Level | Homoscedastic noise variance. | 1e-3 to 1e-5 |

| EI xi | Exploration-exploitation balance. | 0.01 (more exploitation) to 0.1 (more exploration) |

| UCB kappa | Controls exploration. | 2.0 - 5.0 (higher = more exploration) |

Troubleshooting Guides & FAQs

FAQ 1: Why does my optimization loop fail to improve after the first few iterations?

Answer: This is often due to an over-exploitative acquisition function or an inaccurate surrogate model. The Expected Improvement (EI) function may become too greedy, while a Gaussian Process (GP) model with an incorrectly specified kernel (e.g., length-scale) cannot generalize. First, visualize the surrogate's mean and uncertainty across the search space. If uncertainty is negligible outside data points, the model is over-confident. Switch to an Upper Confidence Bound (UCB) with a higher beta parameter (e.g., increase from 2 to 5) to force exploration. Alternatively, re-fit the GP using a Matérn kernel (e.g., Matérn 5/2) instead of the common Radial Basis Function (RBF), as it handles non-smooth functions better. Ensure your data is normalized (zero mean, unit variance) before model training.

FAQ 2: How do I handle high-dimensional protein sequence spaces where performance plateaus?

Answer: Standard BO degrades in high-dimensional spaces (roughly >20 dimensions) because the surrogate model becomes unreliable when data are sparse relative to the volume of the space. Employ one or more dimensionality reduction strategies:

- Embeddings: Use learned representations (e.g., from ESM-2 protein language model) as inputs instead of one-hot encoding.

- Additive GP Models: Decompose the high-dimensional function into a sum of lower-dimensional functions.

- Trust Region BO: Limit searches to a local region of the high-dimensional space and adaptively move it.

Experimental Protocol for Embedding Integration:

- Generate Embeddings: For your initial sequence library, compute sequence embeddings using a pre-trained model (e.g., ESM-2 `esm2_t30_150M_UR50D`).

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to reduce embedding dimensions to 10-50.

- Initialize BO: Use PCA-reduced vectors as the input `X` for the GP surrogate model.

- Optimize: Run the standard BO loop.

- Decode: For suggested points in PCA space, find the nearest neighbor in the original embedding library to obtain a candidate sequence.
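The reduction and decoding steps can be sketched with NumPy alone. The embeddings here are random placeholders standing in for per-sequence ESM-2 vectors (the 640-dimensional size is an assumption about the chosen model's output), and `proposal` is a hypothetical point returned by the acquisition optimizer.

```python
import numpy as np

rng = np.random.default_rng(0)
# Placeholder for mean-pooled embeddings of a 200-sequence library.
embeddings = rng.normal(size=(200, 640))


def fit_pca(X, n_components):
    """Return the feature mean and the top principal axes of X."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components]


def nearest_library_index(point, Z_library):
    """Index of the library member closest to `point` in PCA space."""
    return int(np.argmin(np.linalg.norm(Z_library - point, axis=1)))


mean, components = fit_pca(embeddings, n_components=20)
Z = (embeddings - mean) @ components.T   # reduced inputs X for the GP

proposal = Z[17] + 1e-3                  # hypothetical suggested point
idx = nearest_library_index(proposal, Z) # decode back to a library sequence
```

Because decoding snaps each proposal to an existing library member, the optimizer can only recommend sequences that are already in the embedded library; proposing genuinely new sequences would require a generative decoder, which is outside this sketch.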

FAQ 3: My experimental measurement is noisy. How do I prevent the BO loop from overfitting to noise?

Answer: A GP accounts for measurement noise through its noise term (the alpha parameter or a WhiteKernel component). If this term is set too small, the model interpolates the noisy measurements and overfits.

Protocol: Configuring a GP for Noisy Protein Expression Data:

- Define Kernel: Use `ConstantKernel() * Matern(nu=2.5) + WhiteKernel()`.

- Set Bounds: Constrain the `WhiteKernel`'s `noise_level` parameter based on your assay's known coefficient of variation (CV). For example, if CV is ~10%, set bounds `[1e-4, 0.1]`.

- Optimize Hyperparameters: Fit the GP by maximizing the log-marginal likelihood, allowing the `noise_level` to be optimized within bounds.

- Acquisition Function: Use the Noisy Expected Improvement (NEI), which integrates over the posterior uncertainty, rather than standard EI.
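The kernel configuration above can be written with scikit-learn as follows. The training data are synthetic placeholders, and the initial noise level of 1e-2 is an assumption; only the kernel structure and the bounds come from the protocol.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel

rng = np.random.default_rng(0)
X = rng.uniform(-2.0, 2.0, size=(40, 2))                       # placeholder features
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + rng.normal(0, 0.1, 40)   # placeholder noisy readout
y = (y - y.mean()) / y.std()                                   # standardize targets

kernel = (
    ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5)
    + WhiteKernel(noise_level=1e-2, noise_level_bounds=(1e-4, 0.1))
)
gp = GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=5, random_state=0)
gp.fit(X, y)  # hyperparameters are fitted by maximizing the log-marginal likelihood

# The fitted kernel is a Sum; its second term is the WhiteKernel.
fitted_noise = gp.kernel_.k2.noise_level
```

Inspecting `fitted_noise` after each round is a quick sanity check: a value pinned at either bound suggests the bounds do not match the assay's actual noise.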

Key Performance Metrics & Parameters

| Issue | Key Parameter | Typical Value Range | Recommended Adjustment |

|---|---|---|---|

| Over-exploitation | UCB beta | 0.01 - 10 | Increase to 5-10 for more exploration. |

| Model Inaccuracy | GP Kernel | RBF, Matérn, etc. | Use Matérn 5/2 for physical landscapes. |

| High Dimensionality | Input Dimension | >20 | Use embeddings + PCA to reduce to <50. |

| Experimental Noise | GP alpha / WhiteKernel | 1e-6 - 0.1 | Set based on assay CV; use WhiteKernel. |

| Slow Computation | Training Data Size | >500 points | Use sparse GP (SVGP) or Bayesian NN surrogate. |

The Bayesian Optimization Workflow

Research Reagent Solutions Toolkit

| Item | Function in Protein Engineering BO |

|---|---|

| Pre-trained Protein Language Model (e.g., ESM-2) | Generates continuous vector representations (embeddings) of protein sequences, reducing dimensionality for the surrogate model. |

| Gaussian Process Library (e.g., GPyTorch, scikit-learn) | Provides flexible, scalable models to build the probabilistic surrogate that predicts fitness from sequence. |

| Acquisition Function Library (e.g., BoTorch, Ax Platform) | Implements and optimizes functions like EI, UCB, and NEI to balance exploration and exploitation. |

| High-Throughput Cloning System (e.g., Golden Gate) | Enables rapid assembly of candidate DNA variants for experimental testing. |

| Microplate Fluorescence/Absorbance Reader | Measures protein expression or activity in a high-throughput format to generate fitness labels for the BO loop. |

| Automated Liquid Handler | Automates experimental steps (transformation, culture, assay) to increase throughput and reduce manual noise. |

Surrogate Model Selection Logic

Technical Support Center: Troubleshooting & FAQs

FAQs for Bayesian Optimization in Protein Engineering

Q1: Why is my Bayesian optimization model failing to converge or improve protein fitness after several iterations?

A: This is often due to an inadequate acquisition function or kernel choice for your specific landscape. For noisy, high-throughput screening (HTS) data, consider switching from the standard Expected Improvement (EI) to the Noisy Expected Improvement (NEI). Ensure your kernel (e.g., Matérn 5/2) hyperparameters are optimized via marginal likelihood maximization, not left at defaults. Check the table below for guidance.

Q2: How do I handle excessive experimental noise that is overwhelming the optimization signal?

A: Implement explicit noise modeling. Use a Gaussian Process (GP) with a dedicated noise parameter (e.g., `alpha` in scikit-learn's `GaussianProcessRegressor(alpha=...)`). Start by quantifying your baseline noise variance from replicate controls and use it as the initial noise level. For batch parallelization, use a noisy acquisition function like q-Noisy Expected Improvement (qNEI), which accounts for both noise and parallel candidates.

Q3: My parallel batch suggestions appear highly correlated and do not explore the sequence space effectively. How can I fix this?

A: You are likely using a naive parallelization strategy. Implement a batch diversity mechanism. Use local penalization or the Kriging Believer algorithm to force exploration. For q candidates, the optimization should solve a multi-point acquisition problem. The diagram "Parallel Batch Selection Workflow" outlines this logic.
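A Kriging Believer batch can be sketched as a loop that "believes" the model's own prediction for each selected candidate before choosing the next one. The sketch below uses UCB as the per-step acquisition and synthetic data; the function name, `kappa`, and `alpha` values are assumptions.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def kriging_believer_batch(X_train, y_train, X_cand, q, kappa=2.0):
    """Select q distinct candidates; after each pick, append the GP mean
    prediction as a pseudo-observation so uncertainty collapses there."""
    X_aug = np.array(X_train, dtype=float)
    y_aug = np.array(y_train, dtype=float)
    chosen = []
    for _ in range(q):
        gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-4,
                                      normalize_y=True)
        gp.fit(X_aug, y_aug)
        mu, sd = gp.predict(X_cand, return_std=True)
        score = mu + kappa * sd
        if chosen:
            score[chosen] = -np.inf          # never pick the same candidate twice
        j = int(np.argmax(score))
        chosen.append(j)
        X_aug = np.vstack([X_aug, X_cand[j]])
        y_aug = np.append(y_aug, mu[j])      # the "believed" outcome
    return chosen


rng = np.random.default_rng(0)
X_train = rng.uniform(0, 1, size=(12, 3))
y_train = -np.sum((X_train - 0.6) ** 2, axis=1)   # synthetic fitness
X_cand = rng.uniform(0, 1, size=(200, 3))
batch = kriging_believer_batch(X_train, y_train, X_cand, q=4)
```

Because the pseudo-observation removes most of the uncertainty around each pick, subsequent picks are pushed toward other regions, which reduces the correlation within the batch.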

Q4: What are the best practices for defining the initial design for a new protein engineering campaign?

A: Never use a purely random design. For a sequence space of dimension d, use a space-filling design like Latin Hypercube Sampling (LHS) or Sobol sequences. The initial sample size n should be at least 4d to 6d for a reasonable initial GP model. See the protocol below.
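A Sobol-based initial design over a small combinatorial space can be sketched with `scipy.stats.qmc`. The mapping from the unit hypercube to amino-acid identities by equal-width binning is an assumption made for illustration, as are the four variable positions.

```python
import numpy as np
from scipy.stats import qmc

AAS = "ACDEFGHIKLMNPQRSTVWY"
n_positions = 4                      # d: hypothetical number of variable positions

sampler = qmc.Sobol(d=n_positions, scramble=True, seed=0)
unit = sampler.random_base2(m=4)     # 2**4 = 16 points, i.e. n = 4d

# Bin each coordinate in [0, 1) into one of 20 amino acids.
idx = np.minimum((unit * len(AAS)).astype(int), len(AAS) - 1)
variants = ["".join(AAS[j] for j in row) for row in idx]
```

Sobol sequences keep their balance properties best when the sample size is a power of two, which is why `random_base2` is used here; duplicates in `variants` are possible after binning and should be removed before ordering constructs.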

Troubleshooting Guides

Issue: High Variance in Model Predictions Symptoms: The GP surrogate model's uncertainty bounds are excessively wide across the design space, making the acquisition function uninformative. Solution:

- Re-evaluate your kernel choice. The Matérn kernel is generally preferred over the Radial Basis Function (RBF) for biological landscapes.

- Check for input scaling. Ensure all sequence features (e.g., physicochemical descriptors, one-hot encodings) are standardized to zero mean and unit variance.

- Verify the likelihood optimization. Increase the `n_restarts_optimizer` parameter (e.g., to 10) to avoid poor local optima of the marginal likelihood.

Issue: Optimization Stuck in a Local Optimum Symptoms: Rapid initial improvement plateaus at a suboptimal fitness level. Solution:

- Increase the exploration weight. Temporarily increase the κ parameter in Upper Confidence Bound (UCB) or adjust the xi parameter in EI.

- Inject diversity. Add a small percentage of random samples to your next batch (e.g., 1 out of 4 candidates).

- Consider a trust region approach. Implement TuRBO (Trust Region Bayesian Optimization), which dynamically adjusts the search bounds based on progress.

Table 1: Comparison of Acquisition Functions for Noisy HTS Data

| Acquisition Function | Sample Efficiency (Typical Iterations to Hit) | Noise Robustness | Parallelization (q > 1) Support | Best Use Case |

|---|---|---|---|---|

| Expected Improvement (EI) | High | Low | No | Low-noise, sequential optimization |

| Noisy EI (NEI) | High | High | No | Noisy, sequential screening |

| Upper Confidence Bound (UCB) | Medium | Medium | No | Exploration-focused campaigns |

| q-Noisy EI (qNEI) | High | High | Yes | Noisy, high-throughput parallel screening |

| q-Probability of Improvement (qPI) | Low-Medium | Low | Yes | Pure exploitation in batches |

Table 2: Impact of Initial Design Size on Convergence

| Initial Design Size (n) | Convergence Iterations (Mean ± SD) | Probability of Success (>90% Optimum) | Recommended For |

|---|---|---|---|

| n = 2d | 45 ± 12 | 65% | Very low-throughput assays |

| n = 4d | 28 ± 8 | 89% | Standard protein engineering |

| n = 6d | 22 ± 7 | 95% | High-dimensional landscapes (d>20) |

| Random 10d | 35 ± 15 | 70% | (Baseline for comparison) |

Experimental Protocols

Protocol 1: Establishing a Bayesian Optimization Loop for Directed Evolution Objective: To efficiently navigate a protein sequence-function landscape using noisy HTS data.

- Sequence Encoding: Encode protein variants using a relevant feature set (e.g., one-hot encoding, ESM-2 embeddings, or physicochemical descriptors).

- Initial Design: Generate n = 4 x [feature dimension] initial variants using a Sobol sequence for maximal space-filling.

- High-Throughput Assay: Measure fitness (e.g., fluorescence, binding, activity) of the initial batch. Include at least 3 replicate controls per plate to estimate experimental noise (α).

- Model Training: Train a Gaussian Process Regressor (Matérn 5/2 kernel) on the collected data. Set the `alpha` parameter to the measured variance from replicates. Optimize kernel hyperparameters.

- Candidate Selection: Using the trained GP, optimize the q-Noisy Expected Improvement (qNEI) acquisition function (q = 4-8) to select the next batch of variants.

- Iteration: Return to Step 3 (High-Throughput Assay). Loop for a predefined budget (e.g., 10-15 rounds) or until convergence (no improvement in rolling average for 3 rounds).

Protocol 2: Quantifying and Integrating Experimental Noise

- Replicate Experiment: In every assay plate, include 3-5 identical control variants (wild-type and a medium-fitness variant).

- Noise Calculation: After each round, calculate the coefficient of variation (CV = standard deviation / mean) for each control. The plate-wide median CV is your empirical noise estimate (σₙ).

- Model Integration: Set the GP's noise prior (`alpha`) to σₙ², expressed in the same units as the targets the GP is trained on. For adaptive integration, use a WhiteKernel component within the GP kernel, initializing it at σₙ² but allowing it to be re-optimized.
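The noise calculation can be sketched as follows. The control readouts are hypothetical, and using CV² directly as the noise variance assumes the GP targets are log-transformed or expressed relative to their mean, so that the CV approximates the noise standard deviation; with other scalings, convert σₙ to the target's units first.

```python
import numpy as np

# Hypothetical replicate readouts for two control variants on one plate.
controls = {
    "wild_type": [1.00, 1.08, 0.95, 1.02],
    "medium_fitness": [2.10, 1.90, 2.05, 2.20],
}


def median_cv(control_readouts):
    """Median coefficient of variation across control variants."""
    cvs = [np.std(v, ddof=1) / np.mean(v) for v in control_readouts.values()]
    return float(np.median(cvs))


sigma_n = median_cv(controls)
alpha = sigma_n**2   # noise variance passed to the GP (see assumption above)
```

Tracking `sigma_n` per round also gives an early warning of assay drift: a sudden increase suggests a plate-level problem rather than a modeling problem.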

Visualizations

Title: Bayesian Optimization Loop for Protein Engineering

Title: Parallel Batch Selection with Diversity

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian Optimization-Driven Screening

| Item / Reagent | Function in the Workflow | Example/Note |

|---|---|---|

| Sobol Sequence Generator | Creates optimal space-filling initial designs to maximize information gain. | Use sobol_seq (Python) or randtoolbox (R). Critical for sample efficiency. |

| Gaussian Process Library | Core engine for building the surrogate model of the sequence-fitness landscape. | scikit-learn (GaussianProcessRegressor), GPyTorch, or BoTorch. BoTorch is built for parallelization. |

| Acquisition Optimizer | Solves the high-dimensional problem of selecting the next best variant(s). | BoTorch for qNEI. For simple EI, scipy.optimize is sufficient. |

| Plate Reader / HTS Imager | Generates the high-throughput fitness data, the primary source of observational noise. | Calibrate regularly. Use same instrument settings throughout a campaign. |

| Noise Control Variants | Provides an empirical estimate of experimental noise for robust GP modeling. | Clone 3-5 reference variants into every assay plate. |

| Sequence Feature Encoder | Transforms protein sequences into numerical vectors for the GP. | One-hot, AAIndex (physicochemical), or deep learning embeddings (ESM-2). |

FAQs & Troubleshooting Guide

Q1: In a Bayesian optimization campaign for a therapeutic antibody, my model seems stuck on a local optimum, favoring variants with high stability but mediocre affinity. What can I do? A: This is a classic multi-objective trade-off issue. Your acquisition function is likely not properly balanced. Implement a Pareto-frontier aware acquisition function like Expected Hypervolume Improvement (EHVI). This explicitly searches for candidates that improve the trade-off between your objectives (e.g., stability and affinity).

Q2: My high-throughput activity assay data is noisy, leading to poor BO model performance. How should I handle this?

A: You must account for heteroscedastic noise. Instead of a standard Gaussian Process (GP), use a GP model that explicitly models input-dependent noise. Provide the model with assay replicate data if possible. Also, consider adjusting the acquisition function to be less greedy; using a higher xi parameter in Expected Improvement can promote exploration in noisy regions.

Q3: How do I effectively define the bounds of my protein sequence search space for BO? A: Use expert knowledge and preliminary data. Start with a curated library based on known homologs or conserved residues. Encode sequences using a relevant featurization (e.g., physicochemical properties, one-hot encoding, or embeddings from a protein language model). The bounds should be defined in this feature space. Begin with a broader space and iteratively refine based on initial BO rounds.

Q4: When optimizing for both expression yield (stability) and catalytic activity, how do I weight these objectives before I know the ideal trade-off? A: Avoid fixed weighting. Instead, perform multi-objective Bayesian optimization (MOBO) to map the Pareto front—the set of non-dominated optimal trade-offs. Present the resulting Pareto front to project stakeholders for informed decision-making. This data-driven approach reveals the feasible trade-offs without pre-commitment to arbitrary weights.

Experimental Protocols

Protocol 1: Multi-Objective Bayesian Optimization for Enzyme Engineering

This protocol details a MOBO cycle to balance thermostability (Tm) and specific activity.

- Initial Library Design: Construct a diverse library of 96 variants via site-saturation mutagenesis at 3-5 pre-identified flexible regions.

- High-Throughput Screening:

- Activity: Perform a microplate-based kinetic assay using a fluorescent or colorimetric substrate. Report initial velocity (nM/s/µg).

- Stability: Use a thermal shift assay (SYPRO Orange) in a real-time PCR machine to determine melting temperature (Tm, °C).

- Model Training: Use a GP with a Matérn kernel for each objective. Train on normalized log-transformed data from the completed rounds.

- Acquisition & Selection: Calculate the Expected Hypervolume Improvement (EHVI) over the current Pareto front. Select the top 24 candidates suggested by EHVI for the next round.

- Iteration: Repeat steps 2-4 for 4-5 rounds. Express and characterize top Pareto-optimal variants in triplicate for validation.
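EHVI is the expectation, under the GP posterior, of the hypervolume improvement a candidate would produce. The deterministic quantity underneath it can be sketched for two maximized objectives as below. This computes the plain hypervolume improvement for a single hypothetical outcome, not the expectation; the front values, candidate, and reference point are illustrative.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded below by `ref`
    (both objectives maximized)."""
    pts = sorted((tuple(p) for p in points), key=lambda p: (-p[0], -p[1]))
    hv, best_y = 0.0, ref[1]
    for x, y in pts:
        # Only points that extend the front in the second objective add area.
        if x > ref[0] and y > best_y:
            hv += (x - ref[0]) * (y - best_y)
            best_y = y
    return hv


def hypervolume_improvement(candidate, front, ref):
    """Gain in dominated area if `candidate` were added to `front`."""
    return hypervolume_2d(list(front) + [candidate], ref) - hypervolume_2d(front, ref)


# (Tm in °C, specific activity) for a hypothetical current front.
front = [(58.7, 85.0), (56.5, 165.0), (52.1, 180.0)]
ref = (40.0, 50.0)
hv = hypervolume_2d(front, ref)
hvi = hypervolume_improvement((57.0, 170.0), front, ref)
```

In practice the objectives should be normalized before computing hypervolume, since otherwise the objective with the larger numeric range dominates the area.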

Protocol 2: Noisy Affinity Measurement for Antibody Optimization

Protocol for generating reliable KD data for a BO campaign targeting antibody affinity maturation.

- Expression: Express antibody Fv variants in a mammalian transient system (e.g., HEK293).

- Biosensor Assay: Use biolayer interferometry (BLI). Load antigen onto anti-His biosensors. Dip sensors into a dilution series of each antibody variant (e.g., 200, 100, 50, 25 nM).

- Data Collection: Record association and dissociation steps. Perform reference well subtraction.

- Noise Handling: For each variant, run two independent dilution series on separate sensors. Fit a 1:1 binding model to each curve to obtain two independent KD estimates.

- Input for BO: Report the mean of log(KD) as the target value and the standard deviation as the measurement noise parameter for the GP model, enabling robust modeling of assay uncertainty.
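The final step can be sketched as follows. This is a minimal illustration that assumes KD values are reported in nM and that "log" means log10 of the molar value; the function name and the replicate values are hypothetical.

```python
import math
import statistics


def summarize_kd(kd_replicates_nM):
    """Return (mean, sample standard deviation) of log10(KD in M) across replicates."""
    logs = [math.log10(kd * 1e-9) for kd in kd_replicates_nM]
    mean_log = statistics.mean(logs)
    # With a single replicate no spread can be estimated.
    sd_log = statistics.stdev(logs) if len(logs) > 1 else float("nan")
    return mean_log, sd_log


# Two independent fits for one variant (hypothetical values, in nM)
mean_log, sd_log = summarize_kd([12.0, 18.0])
print(round(mean_log, 3), round(sd_log, 3))
```

With only two replicates the standard deviation is itself a rough estimate, so it may be worth pooling noise estimates across variants before passing them to the GP.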

Data Presentation

Table 1: Comparison of Acquisition Functions for Multi-Objective Protein Optimization

| Acquisition Function | Key Principle | Best For | Computational Cost | Risk of Local Optima |

|---|---|---|---|---|

| Expected Improvement (EI) | Maximizes predicted improvement on a scalarized objective. | Single objective or pre-defined weighted sum. | Low | High in MO problems |

| ParEGO | Randomly scalarizes objectives each iteration to guide exploration. | Moderate number of objectives (2-4). | Moderate | Moderate |

| EHVI | Directly measures volume improvement in objective space. | Precisely mapping the Pareto front (2-3 objectives). | High (scales with objectives) | Low |

| qNParEGO | Batch version of ParEGO for parallel candidate selection. | When screening a batch of variants per round. | Moderate-High | Moderate |

Table 2: Example Pareto Front Results from a MOBO Campaign for a Lipase

| Variant | Thermostability (Tm °C) | Specific Activity (U/mg) | Dominance Status |

|---|---|---|---|

| WT | 45.2 | 100 | Dominated |

| P3A | 58.7 | 85 | Pareto Optimal (Best Stability) |

| F10S | 52.1 | 180 | Pareto Optimal (Best Activity) |

| D5G | 56.5 | 165 | Pareto Optimal (Balanced) |

| K2R | 47.8 | 110 | Dominated |
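The dominance status column in Table 2 can be reproduced with a short non-dominated filter. This is a minimal sketch that assumes both objectives are maximized; the function names are illustrative, not from any particular library.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points):
    """Return the names of variants that no other variant dominates."""
    return [
        name
        for name, obj in points.items()
        if not any(dominates(other, obj) for other_name, other in points.items() if other_name != name)
    ]


# (Tm in °C, specific activity in U/mg) copied from Table 2
variants = {
    "WT": (45.2, 100),
    "P3A": (58.7, 85),
    "F10S": (52.1, 180),
    "D5G": (56.5, 165),
    "K2R": (47.8, 110),
}
front = pareto_front(variants)
print(front)
```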

Visualizations

Title: Bayesian Optimization Cycle for Protein Design

Title: Pareto Front Defining Optimal Trade-offs

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Optimization Workflow |

|---|---|

| Gaussian Process Software (BoTorch, GPyTorch) | Provides flexible models for MOBO, handling noise and complex objective spaces. |

| Sypro Orange Dye | Fluorescent dye for high-throughput thermal shift assays to estimate protein stability (Tm). |

| Biolayer Interferometry (BLI) Biosensors | For label-free, parallel measurement of binding kinetics (KD) of multiple protein variants. |

| Phosphate Sensor (e.g., PiColorLock) | Coupled enzyme assay system for high-throughput measurement of phosphatase/kinase activity. |

| Site-Directed Mutagenesis Kit (NEB Q5) | Enables rapid construction of variant libraries for validation of BO-predicted sequences. |

| Mammalian Transient Expression System | For producing properly folded, glycosylated proteins (e.g., antibodies) for functional assays. |

A Practical Workflow: Implementing Bayesian Optimization in Your Protein Engineering Pipeline

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My one-hot encoded protein sequence data is leading to poor Bayesian optimization performance. The acquisition function is not effectively exploring the sequence space. What could be wrong?

A: This is often due to the lack of meaningful topological relationships in one-hot encoding. Each variant is equidistant in encoding space, providing no useful gradient for the surrogate model. We recommend transitioning to a feature-based encoding.

Recommended Protocol:

- Generate Features: Use tools like `PROFET` or `esm-2` to generate per-residue or per-sequence embeddings. For a protein variant, extract the embedding vector for the mutated position(s) and its neighbors.

- Dimensionality Reduction: Apply UMAP or PCA to reduce the embedding dimensions to a manageable size (e.g., 10-50 features) for the BO loop.

- Encode: Concatenate these reduced embeddings with other scalar features (e.g., predicted stability ΔΔG, hydrophobicity index) to form your final parameterization vector `X_i`.

- Validate: Compute pairwise distances between encoded variants. Functionally similar variants should be closer in this new space.
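The equidistance problem with one-hot encoding is easy to check directly. The sketch below encodes several substitutions at one position of a hypothetical seven-residue sequence and shows that every pair of variants sits at the same Euclidean distance, whatever the chemistry of the substitution.

```python
from itertools import combinations

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seq):
    """Flatten a sequence into a 20 x L one-hot vector."""
    vec = []
    for aa in seq:
        vec.extend(1.0 if aa == ref else 0.0 for ref in AMINO_ACIDS)
    return vec


def euclidean(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v)) ** 0.5


wild_type = "MKTAYIA"  # hypothetical sequence
# Substitute position 4 (index 3) with chemically very different residues
variants = [wild_type[:3] + aa + wild_type[4:] for aa in "GVLDW"]
distances = {round(euclidean(one_hot(a), one_hot(b)), 6) for a, b in combinations(variants, 2)}
print(distances)  # a single distinct value: every pair is equally far apart
```

Running the same pairwise check on a feature-based encoding should give a spread of distances, which is what the validation step above looks for.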

Q2: When integrating predicted protein structure features (e.g., from AlphaFold2), how should I handle low pLDDT confidence regions in my feature vector?

A: Low confidence (pLDDT < 70) features can inject noise. Implement a confidence-weighted encoding scheme.

Recommended Protocol:

- Run AlphaFold2 or RoseTTAFold on your wild-type and variant sequences to obtain predicted structures and per-residue pLDDT scores.

- Extract Structural Features: Calculate features like dihedral angles, solvent accessible surface area (SASA), and distance maps for each variant.

- Apply Weights: For each residue's structural feature, multiply by a weight factor `w = pLDDT / 100`. This down-weights contributions from low-confidence regions.

- Pool Features: Create a fixed-length vector by calculating the mean and standard deviation of each weighted feature across all residues, or across a region of interest.
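The weighting and pooling steps can be sketched for a single per-residue feature. The SASA and pLDDT values are hypothetical, and the use of the population standard deviation is an assumption; the protocol above does not specify which estimator to use.

```python
import statistics


def confidence_weighted_pool(feature_values, plddt_scores):
    """Scale each residue's feature by pLDDT / 100, then pool to (mean, std)."""
    if len(feature_values) != len(plddt_scores):
        raise ValueError("one pLDDT score is required per residue")
    weighted = [f * (p / 100.0) for f, p in zip(feature_values, plddt_scores)]
    return statistics.mean(weighted), statistics.pstdev(weighted)


# Per-residue SASA (Å^2) and pLDDT for a four-residue region (hypothetical values)
sasa = [120.0, 80.0, 40.0, 10.0]
plddt = [95.0, 90.0, 60.0, 40.0]
pooled_mean, pooled_sd = confidence_weighted_pool(sasa, plddt)
print(round(pooled_mean, 2), round(pooled_sd, 2))
```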

Q3: I am combining sequence embeddings with experimental physicochemical data. How do I normalize these heterogeneous features for a Gaussian Process model?

A: Standard scaling (Z-score normalization) per feature across your dataset is critical for stable kernel computation.

Recommended Protocol:

- Compile Raw Data: Assemble your `N x M` matrix, where N is the number of variants, and M is the total number of features (e.g., 1280 from ESM-2 + 5 experimental metrics).

- Fit StandardScaler: Calculate the mean (μ) and standard deviation (σ) for each of the M feature columns using only the training/observed data.

- Transform: For each feature column `j`, apply `X_norm = (X_raw - μ_j) / σ_j`.

- Important: Store the μ and σ for each feature. When a new, unobserved variant is encoded for prediction by the GP, it must be normalized using the original training μ and σ.
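The fit-then-reuse pattern can be sketched without external libraries. The helper names and the two-feature data are hypothetical; the population standard deviation is used to match the usual standard-scaling convention, and the guard for constant columns is an added assumption.

```python
import statistics


def fit_scaler(rows):
    """Compute per-column mean and population std from the training rows only."""
    columns = list(zip(*rows))
    means = [statistics.mean(c) for c in columns]
    # A constant column has zero spread; fall back to 1.0 to avoid division by zero.
    stds = [statistics.pstdev(c) or 1.0 for c in columns]
    return means, stds


def transform(rows, means, stds):
    """Apply X_norm = (X_raw - mu_j) / sigma_j with the stored training statistics."""
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


# Two features per variant: an embedding component and a Tm in °C (hypothetical)
train = [[0.2, 55.0], [0.4, 60.0], [0.6, 65.0]]
means, stds = fit_scaler(train)
train_norm = transform(train, means, stds)

# A new, untested variant is scaled with the stored training mu and sigma
new_norm = transform([[0.5, 70.0]], means, stds)
print(new_norm)
```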

Table 1: Comparison of Protein Variant Encoding Strategies

| Encoding Method | Dimensionality | Pros | Cons | Best Use Case |

|---|---|---|---|---|

| One-Hot (Amino Acid) | 20 x L | Simple, interpretable. | Extremely high-dim, no relatedness info. | Small, discrete mutation sets. |

| BLOSUM62 Substitution Matrix | 20 x L | Encodes biochemical similarity. | Still high-dim, linear. | Saturation mutagenesis studies. |

| Learned Sequence Embeddings (e.g., ESM-2) | 1280 - 5120 | Captures deep sequence context; dense. | Computationally intensive; "black box". | Large-scale variant screening. |

| Predicted Structural Features | Variable (~10-100) | Directly related to function. | Dependent on prediction accuracy. | Enzyme or binder engineering. |

| UniRep / TAPE Embeddings | 1900 - 4800 | Protein-level representation; transferable. | May miss local mutation effects. | Protein fitness prediction tasks. |

Experimental Protocols

Protocol 1: Generating a Feature-Based Encoding from ESM-2 and Rosetta

Objective: Create a 50-dimensional feature vector for a set of single-point protein variants.

- Sequence Preparation: Prepare a FASTA file for each variant (e.g., `>Variant_A123G`).

- ESM-2 Embedding Extraction:

  - Use the `esm-extract` tool or HuggingFace `transformers` library.

  - Load the `esm2_t33_650M_UR50D` model.

  - Pass each sequence to obtain the last hidden layer representation (1280 dimensions per residue).

  - Pooling: For the region around the mutation site (e.g., positions ± 15), take the mean of the embedding vectors. This yields a 1280-dim local context vector.

- Rosetta ΔΔG Calculation:

  - Use the `RosettaScripts` protocol with the `ddg_monomer` application.

  - Input the wild-type and variant PDB files (can be AlphaFold2 models).

  - Run the flexibility protocol (e.g., `backrub`) with at least 35,000 trajectories.

  - Extract the calculated ΔΔG (kcal/mol) from the output.

- Feature Concatenation & Reduction:

  - Concatenate the 1280-dim ESM-2 vector with the scalar ΔΔG value (1281 total).

  - Using a pre-fitted PCA model (trained on a large corpus of natural sequences), reduce the 1281-dim vector to 50 principal components. This is your final encoded variant `X_i`.

Protocol 2: Setting Up a Bayesian Optimization Loop with Protein Encodings

Objective: Iteratively select protein variants to test based on previous experimental results.

- Initial Data (`D_n`): Start with 10-20 experimentally characterized variants. Encode each into feature vectors `X_1:n`. Assay results are targets `y_1:n`.

- Surrogate Model Training: Fit a Gaussian Process (GP) model to `{X_1:n, y_1:n}`. Use a Matérn 5/2 kernel. Optimize hyperparameters (length scale, noise) via marginal likelihood maximization.

- Acquisition Function Maximization: Apply the Expected Improvement (EI) function to the GP posterior over the search space (1000s of in silico encoded, but untested, variants).

- Select Next Variant: Identify the variant `X_n+1` with the highest EI score.

- Experiment & Iterate: Synthesize and test variant `X_n+1` to obtain `y_n+1`. Append `{X_n+1, y_n+1}` to `D_n`. Retrain the GP and repeat from step 2.
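The selection step can be sketched with the closed-form EI for a Gaussian posterior. The posterior means and standard deviations below are hypothetical stand-ins for the output of a fitted GP, and the variant names are illustrative; fitting the GP itself is outside this sketch.

```python
import math


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Closed-form EI for maximization under a Gaussian posterior."""
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(improvement, 0.0)
    z = improvement / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + std * pdf


# Hypothetical GP posterior (mean, std) for three untested variants
posterior = {"A123G": (1.10, 0.05), "L45F": (0.90, 0.40), "T77S": (1.00, 0.01)}
best_y = 1.05  # best fitness measured so far

scores = {name: expected_improvement(m, s, best_y) for name, (m, s) in posterior.items()}
next_variant = max(scores, key=scores.get)
print(next_variant, {k: round(v, 4) for k, v in scores.items()})
```

In this example the variant with the lower predicted mean but the larger uncertainty wins, which is the exploration behavior EI is meant to provide.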

Diagrams

Protein Variant Parameterization Workflow

Bayesian Optimization Loop for Protein Engineering

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents & Tools for Parameterization Experiments

| Item | Function in Encoding | Example Product / Software |

|---|---|---|

| Multiple Sequence Alignment (MSA) Generator | Provides evolutionary context for sequences, used by many embedding methods. | HH-suite3, JackHMMER (Pfam database) |

| Protein Language Model (Pre-trained) | Generates dense, context-aware sequence embeddings without requiring an MSA. | ESM-2 (Meta), ProtT5 (Rostlab) |

| Structure Prediction Engine | Predicts 3D structure from sequence to enable structural feature extraction. | AlphaFold2 (ColabFold), RoseTTAFold |

| Structure Analysis Suite | Calculates quantitative structural features (angles, distances, energies). | Rosetta (ddg_monomer), Biopython PDB, MDTraj |

| Physicochemical Calculator | Computes scalar biochemical properties from sequence. | PROPKA (pI), Bio.SeqUtils (instability index, aromaticity) |

| Dimensionality Reduction Library | Reduces high-dimensional embeddings for efficient BO. | scikit-learn (PCA), umap-learn (UMAP) |

| Bayesian Optimization Framework | Implements the surrogate model (GP) and acquisition function logic. | BoTorch, GPyOpt, scikit-optimize |

| High-Throughput Cloning Kit | Rapidly constructs encoded variant libraries for experimental validation. | Gibson Assembly kits, Golden Gate Assembly kits (e.g., NEB) |

Welcome to the Technical Support Center for Bayesian Optimization in Protein Engineering. This guide provides troubleshooting and FAQs for selecting and implementing surrogate models in your research pipeline.

Troubleshooting Guides & FAQs

Q1: My Gaussian Process (GP) regression is failing due to memory errors when my protein sequence or fitness dataset exceeds ~5000 data points. What are my options? A: This is a common scalability issue. GP memory complexity scales O(n²). Consider these actions:

- Use Sparse Gaussian Processes. Implement inducing point methods (e.g., SGPR, SVGP) to approximate the full dataset.

- Switch to a Tree-Based or Neural Surrogate. For very large datasets (>10k points), Bayesian optimization with a Tree-structured Parzen Estimator (TPE) or Bayesian Neural Networks may be more memory-efficient.

- Optimize Kernels. Use a combination of `Linear` and `Matern` kernels instead of the `RBF` kernel if appropriate for your fitness landscape. Kernel choice mainly affects model fit; the O(n²) memory cost of an exact GP is unchanged, so pair this with one of the options above for large datasets.

Q2: How do I handle categorical variables, like amino acid types at a specific position, in my surrogate model? A: GPs require careful kernel encoding for categorical inputs.

- Solution 1 (GPs): Use a kernel that computes similarity between categorical values, such as the Hamming kernel integrated into libraries like `BoTorch` or `GPyTorch`.

- Solution 2 (BNNs/Trees): Use embedding layers in a BNN to transform categories into continuous vectors, or rely on tree-based methods (e.g., SMAC, TPE) which natively handle categorical parameters.

- Protocol: For a GP with 20 amino acid choices per position, define a kernel: `K = Matern(nu=2.5) + Hamming`. Normalize your continuous parameters separately.
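To make the categorical part concrete, here is a minimal sketch of a Hamming-distance kernel over equal-length sequences. The exponential form and the `length_scale` parameter are assumptions chosen for illustration; library implementations may differ in detail, and the Matérn term for continuous features is omitted.

```python
import math


def hamming_kernel(seq_a, seq_b, length_scale=1.0):
    """k(a, b) = exp(-(fraction of mismatched positions) / length_scale)."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must have equal length")
    mismatches = sum(a != b for a, b in zip(seq_a, seq_b))
    return math.exp(-mismatches / (len(seq_a) * length_scale))


# Residues at three engineered positions (hypothetical variants)
variants = ["AKG", "AKV", "DEV"]
gram = [[hamming_kernel(a, b) for b in variants] for a in variants]
for row in gram:
    print([round(k, 3) for k in row])
```

Variants that share more residues receive a higher covariance, so an observation on one informs predictions for its near neighbors.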

Q3: My Bayesian Neural Network (BNN) surrogate provides poor uncertainty quantification (UQ), leading to uninformative acquisition function scores. How can I improve this? A: Poor UQ often stems from inappropriate inference or architecture.

- Checklist:

- Inference Method: Are you using a true Bayesian method (e.g., Stochastic Gradient Hamiltonian Monte Carlo, Deep Ensembles) versus a simple dropout approximation? For protein engineering, Deep Ensembles often provide robust UQ.

- Network Calibration: Regularly assess calibration metrics (e.g., Expected Calibration Error) on a held-out validation set of protein variants.

- Architecture Size: Increase model capacity (width/depth) if underfitting; consider ensembling if computationally feasible.

Q4: For tree-based methods like SMAC or TPE, how should I set the initial design of experiments (DoE) for screening protein variants? A: The initial DoE is critical for model bootstrapping.

- Protocol: For a library with `d` tunable parameters (e.g., 10 residue positions), generate `2d` to `5d` initial random samples. Use a space-filling design like Sobol sequences if your sequence space allows. Ensure the initial set includes diverse variants (e.g., wild-type, known active mutants, and random combinations) to seed the model effectively.
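A simple version of this initial design can be sketched with uniform random sampling. The sequence, the positions, and the multiplier of 3 are hypothetical; the sketch always includes the wild type and does not implement a Sobol design or add known active mutants.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def initial_design(wild_type, positions, multiplier=3, seed=0):
    """Return multiplier * d unique sequences: the wild type plus random combinations."""
    rng = random.Random(seed)
    n_samples = multiplier * len(positions)
    design = {wild_type}
    while len(design) < n_samples:
        seq = list(wild_type)
        for pos in positions:
            seq[pos] = rng.choice(AMINO_ACIDS)
        design.add("".join(seq))
    return sorted(design)


wild_type = "MKTAYIAK"   # hypothetical sequence
positions = [1, 3, 4, 6]  # d = 4 tunable positions (0-based indices)
design = initial_design(wild_type, positions)
print(len(design), design[:3])
```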

Q5: How do I choose between a model that excels at interpolation (GP) vs. one good at handling complex, discontinuous landscapes (BNN/Trees)? A: This depends on your prior knowledge of the protein fitness landscape.

- Guidance: Start with a GP if you expect a smooth, continuous relationship between sequence and function (e.g., stability metrics). Use BNNs or Tree-based methods (TPE) if you anticipate sharp peaks, discontinuities, or very high-dimensional interactions (e.g., antigen-binding affinity with epistatic effects). You can run a quick benchmark using a held-out set of known variants to compare model prediction error.

Surrogate Model Comparison & Benchmark Data

Table 1: Quantitative Comparison of Surrogate Models for Protein Engineering

| Feature | Gaussian Process (GP) | Bayesian Neural Network (BNN) | Tree-Based (e.g., TPE/SMAC) |

|---|---|---|---|

| Native Handling of Categorical Data | Poor (requires special kernels) | Good (via embeddings) | Excellent (native split) |

| Scalability (Data Points) | Poor (<10k) | Good (>10k) | Excellent (>50k) |

| Uncertainty Quantification | Excellent (analytic) | Good (via ensembles/MCMC) | Fair (distribution-based) |

| Extrapolation Ability | Good | Fair | Poor |

| Typical Optimization Loop Speed | Slow | Moderate | Fast |

| Best for Landscape Type | Smooth, Continuous | Complex, High-Dim | Discontinuous, Mixed Variables |

Table 2: Recent Benchmark Results on Protein Fitness Prediction (Normalized RMSE)

| Model | GB1 Dataset (4 sites) | AAV Dataset (capsid) | Recommended Use Case |

|---|---|---|---|

| Sparse GP (500 inducing) | 0.15 | 0.32 | Medium datasets (<15k), need robust UQ |

| Deep Ensemble BNN | 0.18 | 0.28 | Large datasets, complex epistasis |

| Bayesian Random Forest | 0.22 | 0.31 | Fast iteration, many categorical choices |

| TPE (Tree-structured) | 0.25 | 0.30 | Very large initial random screens |

Experimental Protocol: Benchmarking Surrogate Models

Objective: To empirically select the best surrogate model for a given protein engineering dataset. Protocol:

- Data Partitioning: Split your dataset of `N` protein variant sequences and measured fitness into training (70%), validation (15%), and hold-out test (15%) sets. Ensure splits are random but stratified across fitness ranges if possible.

- Model Training: Train each candidate surrogate model (GP, BNN, TPE) on the training set. For GP, optimize kernel hyperparameters via marginal likelihood maximization. For BNN, train an ensemble of 5 networks with different random seeds.

- Validation & Calibration: On the validation set, evaluate: a) Root Mean Square Error (RMSE), b) Mean Absolute Error (MAE), c) Spearman's Rank Correlation, and d) Expected Calibration Error (for UQ assessment).

- Acquisition Simulation: Simulate one step of Bayesian Optimization. For each model, calculate the Expected Improvement (EI) over the validation set. Select the top 5 proposed variants. Calculate the average true fitness of these proposed variants from the held-out validation labels. The model whose proposals yield the highest average fitness is most effective for optimization.

- Final Test: Retrain the best model on training+validation data and report final metrics on the hold-out test set.

Visualization: Surrogate Model Selection Workflow

Diagram Title: Workflow for Selecting a Bayesian Optimization Surrogate Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Surrogate Modeling

| Item (Software/Package) | Function in Protein Engineering | Key Consideration |

|---|---|---|

| GPyTorch / BoTorch | Implements scalable Gaussian Processes with GPU acceleration and built-in kernels for categorical data. | Use Linear + Matern kernels for stability screens. |

| TensorFlow Probability / Pyro | Provides probabilistic layers and trainers for building and inferring Bayesian Neural Networks. | Essential for implementing Deep Ensembles. |

| Optuna (TPE) | A hyperparameter optimization framework that uses the Tree-structured Parzen Estimator as its default surrogate. | Excellent for rapid, high-dimensional sequence space exploration. |

| SMAC3 | Sequential Model-based Algorithm Configuration; uses random forests as surrogates. | Handles mixed parameter spaces (continuous, categorical, conditional) natively. |

| scikit-learn | Provides baseline models (Random Forests) and essential metrics for benchmarking. | Use for initial data exploration and simple baselines. |

| Custom Embedding Layers | Neural network layers to convert amino acid sequences into continuous numerical vectors. | Critical for BNNs to process raw sequence data effectively. |

Troubleshooting Guides and FAQs

Q1: During my Bayesian optimization (BO) run for protein fitness, the Expected Improvement (EI) acquisition function consistently selects the same point for evaluation. My optimization has stalled. What could be the cause and how do I fix it?

A: This is a common issue known as "over-exploitation" where the model's uncertainty is too low, causing EI to see no potential for improvement elsewhere. To troubleshoot:

- Check your GP kernel hyperparameters. An excessively large length scale can oversmooth the model. Re-optimize hyperparameters by maximizing the marginal likelihood.

- Add or increase "jitter". Most BO libraries (like BoTorch or GPyOpt) have a `jitter` parameter that adds a small noise term to the diagonal of the covariance matrix. This artificially inflates uncertainty at observed points, encouraging exploration. Start with a value like 1e-6.

- Consider switching to a more exploratory acquisition function, such as Upper Confidence Bound (UCB) with a higher `beta` parameter, for a few iterations to gather new, diverse data.

Q2: When using Upper Confidence Bound (UCB), how do I choose the correct beta (κ) parameter to balance exploration and exploitation for my protein sequence screen?

A: The beta parameter controls the confidence level. There is no universal setting, but standard strategies exist:

- Theoretically: For linear kernels, `beta = 0.2 * d * log(2t)` (where d is dimension, t is iteration) provides theoretical guarantees, but is often too conservative for practical GP models.

- Empirically: A common heuristic is to set `beta` between 0.5 and 5. Start with `beta=2.0`. Monitor the optimization:

  - If the process seems too random (poor exploitation), gradually decrease `beta`.

  - If it gets stuck in local optima (poor exploration), increase `beta`.

- Adaptive Schemes: Apply a `beta` schedule (e.g., decaying over time) on top of libraries like BoTorch, or use algorithms like GP-UCB that compute `beta` automatically based on iteration count.
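The effect of `beta` is easiest to see on a toy example. The sketch below uses the form mean + beta * std and two hypothetical candidates; note that some libraries define the bonus with the square root of beta instead, so check the convention before copying values across tools.

```python
def ucb(mean, std, beta):
    """Upper Confidence Bound: optimistic estimate of a candidate's fitness."""
    return mean + beta * std


# Hypothetical GP posterior (mean, std): a safe candidate and an uncertain one
candidates = {"exploit": (1.00, 0.05), "explore": (0.80, 0.30)}


def pick(beta):
    return max(candidates, key=lambda name: ucb(*candidates[name], beta))


for beta in (0.5, 2.0):
    print(beta, pick(beta))
```

A small `beta` selects the well-characterized candidate, while a larger one switches the choice to the uncertain candidate.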

Q3: I want to use Knowledge Gradient (KG) for my expensive, batch-based protein expression assay, but the computation is extremely slow. Is this expected?

A: Yes, this is a known limitation. KG's value requires solving a nested optimization problem, which is computationally expensive, especially in high-dimensional spaces (like protein sequence space). Solutions:

- Use a one-shot or multi-fidelity approximation. Modern libraries like BoTorch provide `qKnowledgeGradient` and `qMultiFidelityKnowledgeGradient` which use stochastic optimization to approximate KG efficiently for batch (parallel) settings.

- Reduce candidate set size. Instead of optimizing over the full continuous space, optimize KG over a discrete, randomly sampled set of candidate points (e.g., 500-1000 points).

- For very high dimensions, consider pairing KG with a dimensionality reduction technique (like PCA on sequence embeddings) before running BO.

Q4: My acquisition function (EI, UCB, KG) suggests a protein sequence that is physically invalid or cannot be synthesized. How should I handle this constraint?

A: You must incorporate constraints into the optimization loop.

- Feasibility Modeling: Train a separate classifier (e.g., GP classifier or random forest) on known feasible/infeasible sequences. Use its predictive probability of feasibility to penalize the acquisition function.

- Constrained Acquisition Functions: Use `ConstrainedExpectedImprovement` (available in BoTorch) or an analogous constrained form of UCB. These functions multiply the standard acquisition value by the probability of satisfying the constraint.

- Direct Penalization: Manually set the acquisition value of invalid regions to a very low number (e.g., -1e10) during the optimization of the acquisition function.
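The feasibility-weighting idea can be sketched independently of any library. The acquisition values and feasibility probabilities below are hypothetical stand-ins for the outputs of an EI computation and a feasibility classifier. The multiplication assumes a non-negative acquisition such as EI; a UCB value that can be negative would need shifting first.

```python
def constrained_acquisition(acq_value, p_feasible):
    """Weight a non-negative acquisition value by the probability of feasibility."""
    if not 0.0 <= p_feasible <= 1.0:
        raise ValueError("p_feasible must be a probability")
    return acq_value * p_feasible


# (EI, predicted probability that the sequence can be synthesized and expressed)
candidates = {"V1": (0.30, 0.10), "V2": (0.20, 0.95), "V3": (0.05, 1.00)}
weighted = {name: constrained_acquisition(ei, p) for name, (ei, p) in candidates.items()}
best = max(weighted, key=weighted.get)
print(best, {k: round(v, 3) for k, v in weighted.items()})
```

The candidate with the highest raw EI loses here because it is unlikely to be feasible.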

Quantitative Comparison of Acquisition Functions

The table below summarizes the core characteristics of the three acquisition functions in the context of protein engineering.

Table 1: Comparison of Acquisition Functions for Protein Engineering BO

| Feature | Expected Improvement (EI) | Upper Confidence Bound (UCB) | Knowledge Gradient (KG) |

|---|---|---|---|

| Core Principle | Expected value of improvement over current best. | Optimistic estimate: mean + β * uncertainty. | Value of information: incorporates post-decision optimization. |

| Exploration/Exploitation | Adaptive balance. | Explicit control via β parameter. | Information-theoretic, inherently global. |

| Computational Cost | Low (analytic). | Low (analytic). | Very High (requires nested optimization). |

| Best For | General-purpose, efficient global optimization. | When explicit control over exploration is needed. | Very expensive, batch, or multi-fidelity experiments. |

| Key Hyperparameter | Jitter (ξ) to prevent stalling. | Beta (β) or Kappa (κ). | Number of fantasy samples (for approximations). |

| Constraint Handling | Requires modified version (e.g., CEI). | Requires modified version (e.g., CUCB). | Complex, but possible with approximations. |

Experimental Protocol: Benchmarking Acquisition Functions for a Protein Fitness Landscape

Objective: To empirically compare the performance of EI, UCB, and KG on a simulated or empirically derived protein fitness landscape.

Materials: See Research Reagent Solutions below. Workflow:

- Landscape Preparation: Use a publicly available dataset (e.g., from the `FLIP` benchmark or a deep mutational scanning study of GB1 or PABP). Split data into a sparse initial training set (5-10 points) and a held-out test set representing the full landscape.

- Model Initialization: Fit a Gaussian Process (GP) model with a Matérn 5/2 kernel to the initial training set.

- BO Iteration Loop: For a fixed number of iterations (e.g., 50):

- Optimize each acquisition function (EI, UCB with β=2.0, one-shot KG) to select the next point (protein variant) to "evaluate."

- "Evaluate" the point by retrieving its true fitness value from the held-out test set.

- Augment the training data with this new point.

- Refit the GP model hyperparameters.

- Metrics & Analysis: Track and plot the best observed fitness vs. iteration number for each method. Repeat the entire process with multiple random initial training sets to generate performance statistics.
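The tracking step can be sketched as a running maximum averaged over repeated runs. The fitness traces are hypothetical; in a real benchmark each inner list would hold the values retrieved from the held-out landscape, in the order one acquisition function selected them.

```python
from itertools import accumulate
from statistics import mean


def best_so_far(observed):
    """Running maximum of the fitness values observed at each iteration."""
    return list(accumulate(observed, max))


def mean_curve(runs):
    """Average the best-so-far curves of several runs, iteration by iteration."""
    curves = [best_so_far(run) for run in runs]
    return [mean(step) for step in zip(*curves)]


# Two repeats of one acquisition function with different initial sets (hypothetical)
runs = [[0.2, 0.5, 0.4, 0.9], [0.3, 0.3, 0.7, 0.6]]
curve = mean_curve(runs)
print([round(v, 3) for v in curve])
```

Plotting one such curve per acquisition function gives the comparison described in the final step.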

Research Reagent Solutions

Table 2: Key Computational Tools for Acquisition Function Research

| Item / Software | Function in Experiment |

|---|---|

| BoTorch / GPyTorch | Primary Python libraries for defining GP models and implementing state-of-the-art acquisition functions (including qEI, qUCB, qKG). |

| Ax Platform | Adaptive experimentation platform from Meta that provides user-friendly APIs for BO, ideal for benchmarking. |

| FLIP (Fitness Landscape Inference for Proteins) | Provides benchmark protein fitness landscapes for standardized testing of optimization algorithms. |

| PyMOL / BioPython | For visualizing protein structures and handling sequence data, especially when enforcing physical constraints. |

| Jupyter Notebook | Interactive environment for prototyping BO loops, visualizing convergence, and analyzing results. |


Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

General Workflow & System Integration

- Q1: Our robotic liquid handler consistently fails to pick up tips during the protein variant plate preparation step. What could be the issue?

- A: This is often a calibration or labware definition issue. First, verify the tip box location in the deck layout definition matches the physical deck slot. Check for tip box misalignment. Re-run the tip pick-up calibration routine for the specific head and tip type. Ensure the tip box barcode (if used) is correctly registered in the scheduler software.

Q2: The Bayesian optimization loop seems to have stalled; it's no longer suggesting new protein variants after several cycles. How can we diagnose this?

- A: This typically indicates convergence or an issue with the acquisition function. Check the convergence criteria (e.g., expected improvement below threshold). Inspect the model's surrogate landscape; it may be overly confident. Try adjusting the acquisition function's exploration/exploitation parameter (e.g., kappa for UCB, xi for EI). Verify that the measured experimental data (e.g., fluorescence, absorbance) from the robot is being correctly parsed and fed back to the optimizer without formatting errors.

Q3: We are observing high variance in the assay readouts (e.g., ELISA) from our robotic platform, even for technical replicates. What steps should we take?

- A: High inter-plate or inter-replicate variance undermines the BO loop. Follow this protocol:

- Liquid Handler Check: Perform gravimetric or dye-based dispense verification for all critical reagents (enzyme, substrate, buffer).

- Assay Protocol: Ensure consistent incubation times and temperatures. Use a calibrated plate reader.

- Data Normalization: Implement robust plate normalization using positive and negative controls on every plate.

- Control Chart: Maintain a statistical process control (SPC) chart for your control samples to track system performance over time.

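The plate normalization step can be sketched as a rescaling between the on-plate controls. The well names and readings are hypothetical, and using the control means (rather than medians or a more robust estimator) is an assumption.

```python
import statistics


def normalize_plate(raw, positive_wells, negative_wells):
    """Rescale readings so the negative control maps to 0 and the positive control to 1."""
    pos = statistics.mean(raw[w] for w in positive_wells)
    neg = statistics.mean(raw[w] for w in negative_wells)
    if pos == neg:
        raise ValueError("controls are indistinguishable; the plate cannot be normalized")
    return {well: (value - neg) / (pos - neg) for well, value in raw.items()}


# Raw absorbance readings for one plate (hypothetical values)
raw = {"A1": 2.0, "A2": 0.2, "B1": 1.1, "B2": 1.55}
normalized = normalize_plate(raw, positive_wells=["A1"], negative_wells=["A2"])
print({k: round(v, 3) for k, v in normalized.items()})
```

Feeding normalized values to the optimizer keeps plate-to-plate drift from being learned as a property of the variants.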

Software & Data Pipeline

- Q4: The data pipeline fails when translating raw plate reader OD values into the fitness score for the Bayesian model. Error logs point to a "type mismatch."

- A: This is a common data parsing error. The script expects a numeric value but receives text (e.g., "OVRFLW" for overflows, or a comma as a decimal separator). Implement a pre-processing filter to: 1) Replace any non-numeric flags with `NaN`. 2) Handle locale-specific number formats. 3) Apply a defined fitness function (e.g., normalized signal/background) only to valid numeric data, imputing or flagging `NaN` values for review.
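A pre-processing filter along these lines might look like the sketch below. The function names are hypothetical, and the locale handling is deliberately narrow: it only treats a lone comma as a decimal separator and returns NaN for anything it cannot parse, including values with thousands separators.

```python
import math


def parse_reading(raw):
    """Convert a plate-reader cell to float, returning NaN for flags such as 'OVRFLW'."""
    text = str(raw).strip()
    # Treat a lone comma as a decimal separator (e.g. '0,512').
    if "," in text and "." not in text:
        text = text.replace(",", ".")
    try:
        value = float(text)
    except ValueError:
        return math.nan
    return value if math.isfinite(value) else math.nan


def fitness(signal, background):
    """Signal-to-background ratio, or NaN when either input is unusable."""
    s, b = parse_reading(signal), parse_reading(background)
    if math.isnan(s) or math.isnan(b) or b == 0.0:
        return math.nan
    return s / b


print(parse_reading("0,512"), parse_reading("OVRFLW"), fitness("1.20", "0,40"))
```

Returning NaN instead of raising keeps the pipeline running while leaving a clear marker for the review step.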

- Q5: How do we integrate a custom, proprietary assay readout from a specialized instrument into the automated optimization loop?

- A: The key is creating a standardized connector. Develop a Python wrapper that:

- Polls a designated network folder for the instrument's output file.

- Extracts the relevant metric using a known file format (CSV, JSON) or a regular expression.

- Maps the well ID from the instrument file to the variant ID from the robotic run log.

- Writes the final (variant ID, fitness) pair to the central database queue for the BO model.


Experimental Protocol: Key Methodologies

Protocol 1: Microplate-Based High-Throughput Protein Expression & Screening

- Cloning & Transformation: Use robotic liquid handling to assemble variant genes via Golden Gate or Gibson Assembly into an expression vector. Transform into high-efficiency E. coli or yeast competent cells.

- Expression Culturing: Pick colonies into deep-well 96-well plates containing auto-induction media. Incubate with shaking (800 rpm) at optimal temperature for 24 hours.

- Cell Lysis & Clarification: Add lysis buffer (e.g., B-PER with lysozyme) via reagent dispenser. Shake, then centrifuge plates to pellet debris.

- Activity Assay: Transfer clarified lysate to assay plates pre-loaded with substrate. For an enzymatic assay, monitor product formation kinetically using a plate reader.

- Data Reduction: Calculate initial velocity for each well. Normalize to total protein concentration (via Bradford assay on parallel plate) to determine specific activity.
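The data reduction step can be sketched as a least-squares slope over the early, linear part of the progress curve, divided by the protein amount in the well. The time points, signal values, and protein amount are hypothetical, and choosing which points count as the linear phase is left to the user.

```python
def initial_velocity(times_s, signal):
    """Least-squares slope of signal vs. time over the supplied (linear-phase) points."""
    n = len(times_s)
    if n < 2 or n != len(signal):
        raise ValueError("need at least two matched time/signal points")
    mean_t = sum(times_s) / n
    mean_s = sum(signal) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    sxy = sum((t - mean_t) * (s - mean_s) for t, s in zip(times_s, signal))
    return sxy / sxx


def specific_activity(times_s, signal, protein_ug):
    """Initial velocity normalized to total protein in the well."""
    return initial_velocity(times_s, signal) / protein_ug


# First 30 s of a kinetic read for one well (hypothetical values)
times_s = [0.0, 10.0, 20.0, 30.0]
signal = [0.05, 0.15, 0.25, 0.35]
v0 = initial_velocity(times_s, signal)
activity = specific_activity(times_s, signal, protein_ug=2.0)
print(round(v0, 4), round(activity, 4))
```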

Protocol 2: Automated Bayesian Optimization Cycle Execution

- Iteration Zero (Initial Design): Manually or robotically prepare an initial diverse set of 24-96 protein variants (e.g., covering sequence space). Measure fitness.

- Model Update: Input (variant sequence/structure features, fitness) into the Gaussian Process (GP) model. Train model hyperparameters.

- Acquisition & Selection: Use the acquisition function (Expected Improvement) on the GP posterior to select the next batch (e.g., 8-24) of predicted-high-performing variants.

- Robotic Validation: Automated scheduler converts the variant list into physical labware instructions (which well contains which DNA). The robotic platform executes Protocol 1 for the new batch.

- Loop Closure: New fitness data is automatically uploaded, and the cycle returns to Step 2. Continue for predetermined cycles or until convergence.

Table 1: Comparison of Bayesian Optimization Acquisition Functions for Protein Engineering

| Acquisition Function | Key Parameter(s) | Best For | Convergence Speed | Risk of Stagnation |

|---|---|---|---|---|

| Expected Improvement (EI) | ξ (Exploration) | General-purpose, balanced search | High | Moderate |

| Upper Confidence Bound (UCB) | κ (Balance) | Explicit exploration control | Very High | Low |

| Probability of Improvement (PI) | ξ (Trade-off) | Pure exploitation, local search | Moderate (local) | Very High |

| Thompson Sampling | N/A | Parallel/batch selection, natural trade-off | High | Low |

Table 2: Common Robotic Liquid Handler Performance Metrics

| Metric | Target Specification | Typical Impact of Deviation |

|---|---|---|

| Dispense Precision (CV%) | <5% for 1-50µL | High assay variance, poor replicates. |

| Tip Pick-Up Success Rate | >99.5% | Run failures, incomplete data. |

| Well-to-Well Carryover | <0.1% | Cross-contamination, false positives. |

| Deck Temperature Uniformity | ±1.0°C from setpoint | Variable reaction kinetics. |

Research Reagent Solutions Toolkit

Table 3: Essential Materials for Robotic Protein Engineering Pipeline

| Item | Function | Example Product/Catalog |

|---|---|---|

| Low-Bind, Round-Bottom 96-Well Plates | Minimizes protein loss during expression and assay steps. | Greiner 96-well PP, V-bottom |

| Automation-Compatible Tip Boxes | Ensures reliable robotic tip pick-up. | Beckman Coulter Biomek FX Tips |

| Lyophilized Substrate Plates | Enables rapid, consistent assay initiation by adding lysate. | Custom-spotted fluorogenic substrate plates |

| Lysis Buffer with Robust Protease Inhibitors | Ensures consistent, active protein extraction across variants. | Commercial bacterial lysis reagent + EDTA/PMSF |

| Master Mix for qPCR/Colony PCR | Quality control of DNA constructs pre-expression. | ThermoFisher Platinum SuperFi II |

| Barcoded Tube Racks & Plates | Critical for sample tracking and preventing pipeline errors. | ThermoScientific Matrix 2D-barcoded tubes |

Visualizations

Bayesian Optimization Cycle for Protein Engineering

Pipeline: Robotic Execution to Data Processing

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our high-throughput screening for an engineered lipase shows inconsistent activity readings between replicates. What could be the cause? A: Inconsistent activity in enzyme screens is often due to substrate preparation variability or microplate edge effects. For lipid substrates, ensure uniform emulsion by sonicating immediately before dispensing. Use a plate seal during incubation to prevent evaporation gradients. Always include internal control columns on every plate. Center the plate in the plate reader and pre-equilibrate to the assay temperature.

Q2: Post-transfection, our HEK293 cell viability drops significantly during AAV vector production, reducing titers. How can we mitigate this? A: This indicates cellular stress from transfection reagents or transgene toxicity. First, optimize the DNA:PEI ratio (typically 1:2 to 1:3) in a small-scale test. Consider using a ternary transfection system with a transfection enhancer. Implement a temperature shift from 37°C to 32°C at 24 hours post-transfection to slow metabolism and improve yield. Supplement media with 1mM valproic acid to boost ITR-driven expression without increasing cell death.

Q3: Our Bayesian optimization model for antibody affinity maturation is converging on a local optimum with poor off-rate. How do we escape this? A: Your acquisition function may be too exploitative. Increase the exploration parameter (κ for UCB, ξ for EI or PI). Incorporate a "random mutation" batch (10-15% of each library cycle) to explore sequence space outside the model's predictions. Also, ensure your training data includes negative (poor binding) clones to better define the fitness landscape. Re-evaluate your feature representation; if you are using sequence features only, add structural descriptors such as charge patches.
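One way to implement the "random mutation" share is to reserve part of every batch for uniformly sampled variants. The sketch below is an assumption-laden illustration: `compose_batch`, its arguments, and the rule of always keeping at least one random pick are hypothetical choices, and the ranking is assumed to come from whatever acquisition function is in use.

```python
import random

def compose_batch(ranked_ids, pool_ids, batch_size, random_fraction=0.15, seed=None):
    """Split a batch into acquisition-ranked picks and random exploration picks.

    ranked_ids: candidate IDs sorted best-first by the acquisition function.
    pool_ids:   all untested candidate IDs available this cycle.
    """
    rng = random.Random(seed)
    n_random = max(1, round(batch_size * random_fraction))  # keep at least one
    n_model = batch_size - n_random
    model_picks = list(ranked_ids[:n_model])
    chosen = set(model_picks)
    remaining = [v for v in pool_ids if v not in chosen]
    random_picks = rng.sample(remaining, n_random)
    return model_picks, random_picks

# Hypothetical example: 200 untested variants, the first 50 ranked by the model.
model_picks, random_picks = compose_batch(list(range(50)), list(range(200)),
                                          batch_size=24, random_fraction=0.15, seed=1)
```

Tracking which picks were random also lets you check later whether the model-guided picks actually outperform chance.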

Q4: Purification yields of our His-tagged engineered enzyme are low despite high expression. What steps should we take? A: This suggests insolubility or tag inaccessibility. First, run a solubility check via fractionation. If insoluble, redesign the construct: add a solubility tag (MBP, SUMO) N-terminal to the His-tag, or co-express with chaperones. If soluble, the tag may be buried. Optimize binding conditions: titrate imidazole in the binding buffer (10-40mM suppresses weak non-specific interactions, but a poorly exposed tag may only bind at the low end of that range or below it), test different buffers (Tris vs. Phosphate, pH 7.4-8.0), and ensure the buffers contain no chelators (e.g., EDTA) or strong reducing agents (e.g., DTT) that strip or reduce the Ni²⁺ on the resin.

Q5: Our adenoviral vector loses infectivity after CsCl gradient purification. How can we stabilize it? A: CsCl can be destabilizing. Switch to a non-ionic iodixanol gradient, which is gentler and improves recovery. After purification, promptly desalt into a stabilizing formulation buffer: 20mM Tris, 2mM MgCl2, 25mM NaCl, 5% sucrose (w/v), pH 8.0. Always use low-protein-binding tubes and pipette tips. Determine the optimal storage temperature; for many adenoviruses, -80°C in single-use aliquots is better than 4°C.

Q6: During yeast surface display for antibody fragments, the antigen-binding signal is weak despite known affinity. Why? A: This is commonly an expression/folding issue in the yeast secretory pathway. Codon-optimize the scFv gene for S. cerevisiae. Ensure your induction conditions are optimal: maintain OD600 < 2.0 at induction, use SC -Trp -Ura medium with 2% galactose, and induce at 20-30°C for 18-24 hours. Keep reducing agents such as DTT out of the staining buffer: the Aga2p fusion is anchored to the cell wall through disulfide bonds to Aga1p, so reduction strips the displayed scFv from the surface. To limit non-specific binding, stain and wash in a BSA-containing buffer instead.

Table 1: Bayesian Optimization Performance in Protein Engineering Case Studies

| Protein Class | Library Size | Baseline (Best Initial Hit) | BO Cycles | Final Improvement | Key Metric |

|---|---|---|---|---|---|

| PETase Enzyme | 5x10^5 | 0.12 U/mg | 6 | 4.8x | Activity (kcat/Km) |

| Anti-IL17 Antibody | 2x10^7 | 3.2 nM (KD) | 8 | 78x (41 pM) | Binding Affinity (KD) |

| AAV9 Capsid | 1x10^6 | 12% TR | 5 | 3.1x (37% TR) | Tropism Ratio (CNS/Liver) |

| CAR-T scFv Domain | 3x10^6 | EC50: 45nM | 7 | 22x (EC50: 2nM) | Cytotoxicity (EC50) |

Table 2: Critical Reagent Formulations for Viral Vector Production

| Reagent | Composition / Specification | Purpose & Critical Notes |

|---|---|---|

| Polyethylenimine (PEI) | Linear, 40kDa, pH 7.0, 1mg/mL in water, filter sterilized | Transfection; batch variability is high, test each new lot. |

| Iodixanol Gradient | 15%, 25%, 40%, 60% (w/v) in DPBS + 1mM MgCl2 + 2.5mM KCl | AAV/AdV purification; osmolality should be ~270 mOsm/kg. |

| Cell Culture Media | FreeStyle 293 or similar, + 1% GlutaMAX, + 0.1% Pluronic F-68 | Suspension HEK293 culture; reduces shear stress. |

| Lysis Buffer | 50mM Tris, 150mM NaCl, 1mM MgCl2, 0.5% Triton X-100, pH 8.0 | Harvesting intracellular vectors; include Benzonase (50U/mL). |

Detailed Experimental Protocols

Protocol 1: Bayesian-Optimized Site-Saturation Library Construction for Enzymes

- Input: Identify 5-8 mutable positions from structural analysis.

- Oligo Design: Design degenerate primers using NNK codons (32 codons, all 20 AAs) for each position.

- PCR Assembly: Perform overlap extension PCR (OE-PCR) to incorporate degenerate primers into the gene template.

- Purification: Gel-purify the full-length product using a column-based kit (e.g., Zymoclean).

- Cloning: Digest both insert and vector (e.g., pET-28a+) with appropriate restriction enzymes (2h, 37°C). Ligate at a 3:1 insert:vector molar ratio using T4 DNA ligase (1h, 22°C).

- Transformation: Desalt the ligation mixture and electroporate into electrocompetent E. coli DH10B cells (2.5kV, 5ms). Recover in 1mL SOC for 1h.

- Library Quality Control: Plate serial dilutions to calculate transformation efficiency. Sequence 10-20 random colonies to assess diversity and mutation rate.

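- Expression: Transform the pooled library plasmid into E. coli BL21(DE3), because pET-type vectors need the T7 RNA polymerase supplied by the DE3 lysogen, and induce with 0.5mM IPTG at 18°C for 16h.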
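The NNK figures quoted in the Oligo Design step can be checked by enumeration, and the same arithmetic shows how quickly the library grows with the number of mutable positions. The sketch below uses the standard genetic code; the helper `library_size` is an illustrative name, not part of the protocol.

```python
from itertools import product

# Standard genetic code, indexed in TCAG order for each codon position.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}

# NNK: N = any base at positions 1 and 2, K = G or T at position 3.
nnk_codons = ["".join(c) for c in product("ACGT", "ACGT", "GT")]
encoded = {CODON_TABLE[c] for c in nnk_codons}
amino_acids = encoded - {"*"}
stop_codons = [c for c in nnk_codons if CODON_TABLE[c] == "*"]

def library_size(n_positions):
    """Theoretical diversity for n fully randomized NNK positions."""
    return {
        "dna_variants": 32 ** n_positions,
        "protein_variants": 20 ** n_positions,
        "fraction_without_stop": (31 / 32) ** n_positions,
    }

five_sites = library_size(5)
eight_sites = library_size(8)
```

For 5 positions the DNA-level diversity is 32^5 = 33,554,432, and for 8 positions it is 32^8 ≈ 1.1 x 10^12, so exhaustive screening is out of reach at the upper end of the 5-8 position range.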

Protocol 2: High-Throughput SPR Screening for Antibody Affinity Maturation

- Sensor Chip Preparation: Prime a Series S CM5 chip with HBS-EP+ buffer (10mM HEPES, 150mM NaCl, 3mM EDTA, 0.05% P20, pH 7.4).

- Antigen Immobilization: Dilute antigen to 20μg/mL in 10mM sodium acetate pH 4.5. Inject for 300s at 10μL/min to achieve ~5000 RU response using amine coupling chemistry (EDC/NHS activation).

- Library Binding: Clarify crude periplasmic extracts by centrifugation (15,000xg, 10min). Dilute 1:5 in HBS-EP+.

- High-Throughput Injection: Using an autosampler, inject each sample for 120s at 30μL/min, followed by 300s dissociation. Regenerate with two 30s pulses of 10mM Glycine pH 2.0.

- Data Processing: Double-reference all sensorgrams (reference flow cell & buffer blank). Fit the equilibrium response (Req) vs. concentration to a 1:1 Langmuir model to derive an apparent KD.

- Model Update: Feed Req values for the screened subset (e.g., 200 clones) into the Gaussian Process model. The acquisition function (e.g., Expected Improvement) selects the next batch for screening.
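The steady-state fit in the Data Processing step uses the 1:1 Langmuir isotherm, Req = Rmax * C / (KD + C). The sketch below is one dependency-free way to fit it, assuming a dilution series with known analyte concentrations is available for the clone (which is not a given for crude extracts). It scans KD on a log-spaced grid and solves Rmax in closed form at each grid point; the function names, grid bounds, and synthetic data are illustrative assumptions.

```python
def req_model(conc, kd, rmax):
    """Equilibrium response of a 1:1 Langmuir interaction."""
    return rmax * conc / (kd + conc)

def fit_steady_state(concs, responses, kd_min=1e-3, kd_max=1e4, n_grid=400):
    """Least-squares fit of (KD, Rmax); KD is returned in the units of concs."""
    best = None
    for g in range(n_grid):
        # Log-spaced KD candidates between kd_min and kd_max.
        kd = kd_min * (kd_max / kd_min) ** (g / (n_grid - 1))
        occupancy = [c / (kd + c) for c in concs]
        # For a fixed KD the model is linear in Rmax, so Rmax has a closed form.
        rmax = (sum(o * r for o, r in zip(occupancy, responses))
                / sum(o * o for o in occupancy))
        sse = sum((r - rmax * o) ** 2 for o, r in zip(occupancy, responses))
        if best is None or sse < best[0]:
            best = (sse, kd, rmax)
    return best[1], best[2]

# Synthetic, noise-free example in nM (hypothetical values).
concs_nm = [1, 3, 10, 30, 100, 300]
responses_ru = [req_model(c, kd=10.0, rmax=120.0) for c in concs_nm]
kd_fit, rmax_fit = fit_steady_state(concs_nm, responses_ru)
```

The fit is only meaningful if the injections reach a plateau within the association phase and the concentration series brackets KD; a nonlinear least-squares routine from a numerical library would normally replace the grid search.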

Visualizations

Bayesian Optimization Cycle for Protein Engineering

Viral Vector Production Workflow & Troubleshooting

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Protein Engineering & Optimization

| Item | Function & Application |

|---|---|

| NNK Degenerate Oligos | Encode all 20 amino acids plus the TAG stop codon; used for comprehensive site-saturation mutagenesis library design. |

| Linear Polyethylenimine (PEI Max) | High-efficiency, low-cost transfection reagent for transient viral vector production in HEK293 cells. |

| Protein A/G Magnetic Beads | Rapid, small-scale purification of antibodies/Fc-fusions from crude lysates for quick screening assays. |

| Benzonase Nuclease | Digests host cell nucleic acids post-lysis to reduce viscosity and improve vector purity. |

| Iodixanol (OptiPrep) | Non-ionic, iso-osmotic density gradient medium for high-recovery purification of AAV and other vectors. |

| HBS-EP+ Buffer (10X) | Gold-standard running buffer for surface plasmon resonance (SPR) to minimize non-specific binding. |

| Tris(2-carboxyethyl)phosphine (TCEP) | Stable, odorless reducing agent for disulfide bonds in protein storage and assay buffers. |

| Pluronic F-68 (10% Solution) | Non-ionic surfactant added to suspension culture to protect cells from shear stress. |

Overcoming Challenges: Troubleshooting and Advanced Optimization Strategies for Robust Performance

Troubleshooting Guides & FAQs

Q1: Why does my assay show high technical variability (CV > 20%) between replicates, even with the same protein variant? A1: High technical variability often stems from inconsistent reagent handling or environmental drift. Implement these steps:

- Pre-wet Pipette Tips: For viscous solutions (e.g., glycerol stocks, concentrated protein), pre-wet tips by aspirating and dispensing the liquid 3 times before taking the final transfer volume.

- Single-Use Aliquots: Thaw master stocks of critical reagents (e.g., enzymes, co-factors) once and create single-use aliquots to avoid freeze-thaw degradation.

- Plate Reader Calibration: Perform a full-wavelength scan and path length check monthly using a neutral density filter and water absorbance test, respectively.

Q2: How can I distinguish true protein function signal from background noise in a high-throughput screen? A2: Utilize Z'-factor analysis for each assay plate to statistically validate screen quality.

- Protocol: Include 16 positive control wells (known active variant) and 16 negative control wells (wild-type or knockout) on every 384-well plate. Calculate using:

Z' = 1 - [ (3σ_positive + 3σ_negative) / |μ_positive - μ_negative| ]

A Z' > 0.5 indicates a robust assay suitable for Bayesian optimization input. Discard plates with Z' < 0.
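A per-plate check of this formula might look like the sketch below. It assumes sample standard deviations and labels plates between the two stated thresholds as "marginal"; that middle category and the function names are assumptions, since the text only specifies the > 0.5 and < 0 cut-offs.

```python
from statistics import mean, stdev

def z_prime(positive_wells, negative_wells):
    """Z'-factor from control-well readings on a single plate."""
    spread = 3 * stdev(positive_wells) + 3 * stdev(negative_wells)
    window = abs(mean(positive_wells) - mean(negative_wells))
    if window == 0:
        return float("-inf")  # controls are indistinguishable
    return 1 - spread / window

def classify_plate(zp):
    """Apply the thresholds from the text; 'marginal' is an assumed middle tier."""
    if zp > 0.5:
        return "robust"
    if zp < 0:
        return "discard"
    return "marginal"

# Hypothetical control readings (arbitrary fluorescence units).
good_plate = z_prime([98, 100, 102], [8, 10, 12])
bad_plate = z_prime([10, 20, 30], [5, 15, 25])
```

In practice each list would hold the 16 control wells per plate described in the protocol.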

Q3: My expression titers (mg/L) and activity (U/mg) data from the same clone are negatively correlated. What's wrong? A3: This indicates a sample processing timing issue. High titers can lead to rapid resource depletion and proteolytic degradation if harvest is delayed.

- Fix: Standardize harvest time by optical density (OD600) rather than fixed hours post-induction. For E. coli, harvest at OD600 = 0.8 ± 0.05. For yeast/Pichia, harvest at mid-log phase (OD600 5-10) before carbon source depletion.

Q4: How should I preprocess inconsistent data before feeding it into a Bayesian optimization (BO) model? A4: Apply a tiered normalization and fusion strategy. Do not use raw heterogeneous readings.

Table 1: Data Preprocessing Protocol for BO in Protein Engineering

| Data Type | Primary Issue | Normalization Method | Weight in Multi-Fidelity BO |

|---|---|---|---|

| HTS Activity (96/384-well) | High noise, false positives | Robust Z-score: (x – median)/(MAD*1.4826) | Low (0.3-0.5) |

| Purified Protein Activity | Low throughput, consistent | Min-Max to [0,1] scale relative to wild-type | High (1.0) |

| Expression Titer (mg/L) | Scale mismatch with activity | Log10 transformation, then Z-score | Medium (0.7) |

| Thermostability (Tm, °C) | Instrument-specific bias | Plate-based correction using control Tm | High (0.8) |

MAD = Median Absolute Deviation
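Two of the normalizations in the table are easy to get subtly wrong, so a short sketch may help. It implements the robust Z-score exactly as written, (x - median)/(MAD*1.4826), and the log10-then-Z-score transform for titers. The function names and example values are illustrative assumptions, and the Z-score uses the sample standard deviation.

```python
import math
from statistics import mean, median, stdev

def robust_z(values):
    """Robust Z-score: (x - median) / (1.4826 * MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; more than half the readings are identical")
    scale = 1.4826 * mad
    return [(v - med) / scale for v in values]

def log10_z(values):
    """Log10-transform strictly positive values, then apply a standard Z-score."""
    logs = [math.log10(v) for v in values]
    mu, sd = mean(logs), stdev(logs)
    return [(x - mu) / sd for x in logs]

# Hypothetical plate readings with one outlier, and hypothetical titers in mg/L.
hts_scores = robust_z([1, 2, 3, 4, 100])
titer_scores = log10_z([1, 10, 100])
```

Because the median and MAD ignore the magnitude of the outlier, the four typical wells keep scores near zero while the outlier stands out clearly, which is the behavior wanted for noisy HTS data.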

Q5: What's the best way to handle missing or "failed" data points in my sequence-function dataset? A5: Do not simply impute with the mean. Use a Bayesian hierarchical model for informed imputation during the BO loop.

- Flag data as "missing" if the assay control's Z' < 0 or if the purification yield was below a detectable threshold (e.g., < 0.1 mg/L).

- The BO algorithm's surrogate model (e.g., Gaussian Process) treats these as latent variables, inferring their distribution based on correlations with other measured features (e.g., expression level correlates with solubility).

- This explicitly models uncertainty, preventing the optimizer from being overly confident in sparse regions of the sequence space.
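The flagging rule in the first bullet can be applied before any modeling. The sketch below covers only that rule, not the hierarchical imputation itself: it separates usable measurements from flagged ones so that failed points are recorded as missing rather than entered as zero fitness. The record fields (`variant`, `fitness`, `plate_z_prime`, `yield_mg_per_l`) are hypothetical names.

```python
def split_records(records, min_yield_mg_per_l=0.1):
    """Return (usable, flagged) records according to the missing-data rule."""
    usable, flagged = [], []
    for rec in records:
        reasons = []
        if rec["plate_z_prime"] < 0:
            reasons.append("assay control Z' < 0")
        if rec["yield_mg_per_l"] < min_yield_mg_per_l:
            reasons.append("yield below detection threshold")
        if reasons:
            # Keep the variant, but mark its fitness as missing instead of zero.
            flagged.append({**rec, "fitness": None, "reasons": reasons})
        else:
            usable.append(rec)
    return usable, flagged

# Hypothetical measurements.
records = [
    {"variant": "A12V", "fitness": 1.8, "plate_z_prime": 0.71, "yield_mg_per_l": 4.2},
    {"variant": "G45D", "fitness": 0.0, "plate_z_prime": 0.68, "yield_mg_per_l": 0.02},
    {"variant": "L88F", "fitness": 2.4, "plate_z_prime": -0.3, "yield_mg_per_l": 3.1},
]
usable, flagged = split_records(records)
```

Only the `usable` records would be passed to the surrogate model as observations; how the flagged variants inform the model depends on the imputation approach chosen.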

The Scientist's Toolkit: Research Reagent Solutions