Beyond Linearity: A Comprehensive Guide to Traditional and Nonlinear Methods in Modern Drug Development

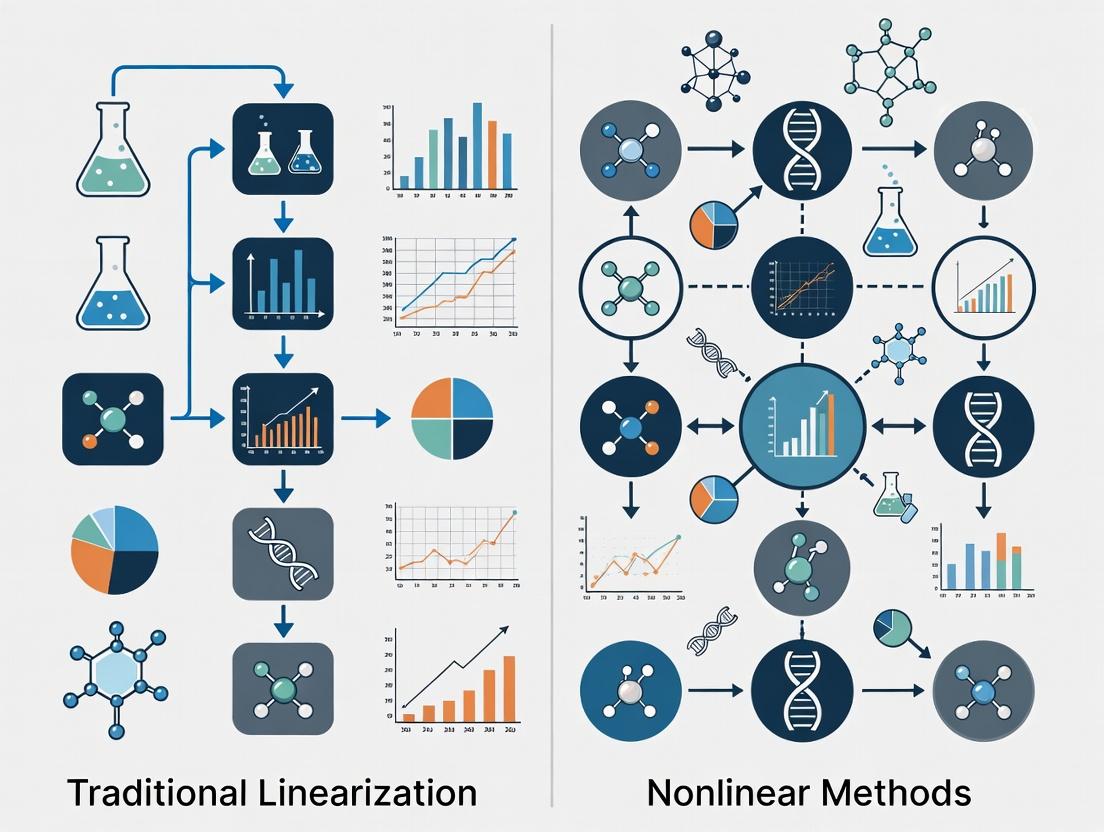



Abstract

This article provides a definitive comparison of traditional linearization and modern nonlinear methods tailored for biomedical research and drug development. We first establish the core principles and limitations of linear approaches. We then explore advanced nonlinear methodologies, including entropy measures and AI-driven platforms, detailing their specific applications from target discovery to clinical trial optimization. Practical guidance is offered for overcoming common implementation challenges, such as noise sensitivity and parameter selection. Finally, we present a rigorous, evidence-based framework for selecting and validating the appropriate method based on specific research questions and data characteristics. This guide empowers researchers to leverage the full potential of both traditional and nonlinear techniques to enhance model accuracy and accelerate therapeutic discovery.

From Linear Assumptions to Complex Reality: Core Principles and When They Fall Short

As part of a broader comparison of traditional linearization and nonlinear methods, this guide compares the performance of three methodological paradigms. The analysis is aimed at researchers, scientists, and drug development professionals who must select appropriate tools for data analysis, predictive modeling, and complex system simulation. The spectrum ranges from linearization methods, which simplify inherently nonlinear problems into tractable linear forms, through traditional nonlinear methods, which directly model curvilinear relationships, to modern complex methods, which leverage advanced architectures like Graph Neural Networks (GNNs) to learn from intricate, relational data structures [1] [2] [3]. The progression from linear to nonlinear to complex methods mirrors a shift from simplicity and interpretability towards flexibility and power, often at the cost of increased computational demand and reduced model transparency [2].

The following table provides a high-level comparison of the three core methodological paradigms discussed in this guide.

Table 1: Core Characteristics of Methodological Paradigms

| Aspect | Linearization Methods | Traditional Nonlinear Methods | Modern Complex Methods (e.g., GNNs) |

|---|---|---|---|

| Core Principle | Approximate nonlinear systems with linear models for simplified analysis [4] [5]. | Directly model curvilinear relationships using nonlinear functions [6] [2]. | Learn from graph-structured data, capturing dependencies in interconnected systems [1]. |

| Interpretability | High. Relationships are explicit and coefficients are easily explainable [2]. | Moderate to Low. Model behavior can be complex and less transparent [2]. | Very Low. Operate as "black boxes"; internal representations are difficult to decipher [1]. |

| Computational Demand | Generally low. Efficient algorithms exist for solving linear systems. | Higher. Often requires iterative optimization and can be prone to overfitting [2]. | Very High. Requires significant data and specialized hardware (e.g., GPUs/TPUs) for training [1]. |

| Data Structure Assumption | Euclidean, tabular data. Assumes independent observations. | Euclidean, tabular data. | Non-Euclidean, graph data. Explicitly models entities (nodes) and relationships (edges) [1]. |

| Primary Risk | Underfitting and inaccurate predictions if linearity assumption is violated [6]. | Overfitting to noise in the training data, leading to poor generalization [2]. | Poor generalization if graph structure is not representative or data is insufficient. |

Linearization Methods: Performance and Protocols

Linearization techniques reformulate complex, nonlinear problems into linear approximations to leverage efficient linear solvers. Their performance is highly context-dependent, excelling in systems where nonlinearities are mild or can be effectively bounded.

Experimental Performance Data

Recent research in compositional reservoir simulation provides a direct comparison of advanced linearization techniques. The study implemented four methods within a parallel framework and tested them on hydrocarbon reservoir models of varying complexity [4].

Table 2: Performance of Linearization Methods in Reservoir Simulation [4]

| Test Case | Method | Nonlinear Iterations | Key Performance Insight |
|---|---|---|---|
| 5-Component Gas Field | Operator-Based Linearization (OBL) | 770 | Most efficient for simpler systems; fastest convergence. |
| 5-Component Gas Field | Finite Backward Difference (FDB) | 841 | Reliable but less efficient than OBL in this case. |
| 5-Component Gas Field | Finite Central Difference (FDC) | 843 | Comparable to FDB. |
| 5-Component Gas Field | Residual Accelerated Jacobian (RAJ) | 842 | Comparable to FDB. |
| 10-Component Gas Field (with injection) | Finite Backward Difference (FDB) | 706 | Most robust for complex systems; only method to converge reliably. |
| 10-Component Gas Field (with injection) | Residual Accelerated Jacobian (RAJ) | 723 | Converged but with more iterations than FDB. |
| 10-Component Gas Field (with injection) | Operator-Based Linearization (OBL) | Failed to converge | Unsuitable for high-complexity scenarios in this test. |
| 10-Component (no injection) | Residual Accelerated Jacobian (RAJ) | Comparable to others | Effective at capturing dynamics with lower computational expense. |

Detailed Experimental Protocol: Advanced Linearization in Simulation

The quantitative findings in Table 2 were generated using the following rigorous methodology [4]:

- Problem Formulation: The study focused on fully implicit compositional simulation of multiphase (water, oil, gas) fluid flow in heterogeneous porous media, governed by highly nonlinear partial differential equations for mass conservation and algebraic constraints for thermodynamic phase equilibrium.

- Method Implementation: Four linearization schemes—Finite Backward Difference (FDB), Finite Central Difference (FDC), Operator-Based Linearization (OBL), and Residual Accelerated Jacobian (RAJ)—were implemented within a unified, MPI-based parallel simulation framework.

- Benchmarking Design: Performance was benchmarked against a legacy commercial simulator using three structured test cases: a simplified five-component hydrocarbon model with CO₂ injection, a complex ten-component model with CO₂ injection, and a ten-component model without injection.

- Primary Metrics: The key metric for efficiency was the total number of nonlinear iterations required for convergence at each time step. Secondary metrics included the percentage of total simulation time spent computing linearized operators.

- Validation: The physical accuracy of the simulation outputs (e.g., pressure fields, component saturation) was validated against the legacy simulator to ensure the linearization methods did not compromise solution correctness.
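The FDB and FDC schemes named in the protocol differ in how the derivative (Jacobian) used by each nonlinear iteration is approximated. The following is a minimal scalar sketch of that idea, not the simulator from [4]: the residual function, step size `h`, and tolerance are hypothetical choices made only for illustration.

```python
import math

def backward_difference(f, x, h=1e-6):
    """One-sided (backward) approximation of f'(x); truncation error is O(h)."""
    return (f(x) - f(x - h)) / h

def central_difference(f, x, h=1e-6):
    """Two-sided (central) approximation of f'(x); truncation error is O(h^2)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def newton_solve(f, x0, derivative, tol=1e-10, max_iter=50):
    """Newton iteration with a numerically approximated derivative.

    Returns (root, number_of_nonlinear_iterations).
    """
    x = x0
    for iteration in range(1, max_iter + 1):
        step = f(x) / derivative(f, x)
        x -= step
        if abs(step) < tol:
            return x, iteration
    raise RuntimeError("Newton iteration did not converge")

def residual(x):
    """Hypothetical nonlinear residual with a known root at x = ln(3)."""
    return math.exp(x) - 3.0

root_fdb, iters_fdb = newton_solve(residual, 0.0, backward_difference)
root_fdc, iters_fdc = newton_solve(residual, 0.0, central_difference)
print(f"FDB: root={root_fdb:.8f}, iterations={iters_fdb}")
print(f"FDC: root={root_fdc:.8f}, iterations={iters_fdc}")
```

Counting the iterations returned by `newton_solve` is the scalar analogue of the "nonlinear iterations" metric reported in Table 2; in a real simulator the derivative is a large sparse Jacobian and the iteration counts depend heavily on the physics.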

Traditional Nonlinear Methods: Performance and Protocols

Traditional nonlinear methods directly model relationships without relying on a linearity assumption. They are essential when variables interact in complex, curvilinear ways, which is common in biological and survey data [6].

Experimental Performance Data

A comprehensive 2019 study compared feature selection performance between linear and nonlinear methods using large-scale aging-related survey datasets (e.g., Health and Retirement Study) and synthetic data where the true relationships were known [6].

Table 3: Performance of Linear vs. Nonlinear Feature Selection Methods [6]

| Performance Aspect | Linear Methods | Nonlinear Methods | Implication |

|---|---|---|---|

| Overall Feature Selection Accuracy | Lower | Better overall performance | Nonlinear methods more correctly identify relevant variables when relationships are not straight-line. |

| Stability to Variable Inclusion/Exclusion | Affected | More stable performance | Results from nonlinear methods are less sensitive to changes in the initial set of variables analyzed. |

| Ability to Identify Non-linear Dependencies | Poor | Effectively identifies | Linear methods often fail to detect curvilinear or interactive relationships, leading to misleading conclusions. |

| Common Use in Gerontology (at time of study) | >50% of papers | Less common | Highlights a potential gap between common practice and optimal methodological choice. |
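To illustrate why a linear measure can miss a curvilinear dependency, the sketch below compares the Pearson correlation with a discrete mutual information score on a purely quadratic relationship. The data are synthetic and the functions are hypothetical helpers written for this example; they are not the specific filter methods evaluated in [6].

```python
import math
from collections import Counter

def pearson_r(xs, ys):
    """Pearson correlation coefficient: measures linear association only."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def mutual_information(xs, ys):
    """Mutual information (in nats) between two discrete-valued sequences."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    count_x = Counter(xs)
    count_y = Counter(ys)
    mi = 0.0
    for (x, y), count in joint.items():
        # p(x, y) / (p(x) * p(y)) simplifies to count * n / (count_x * count_y)
        mi += (count / n) * math.log(count * n / (count_x[x] * count_y[y]))
    return mi

# Synthetic example: y depends on x exactly, but not linearly.
xs = list(range(-10, 11))
ys = [x * x for x in xs]

print(f"Pearson r          = {pearson_r(xs, ys):.3f}")
print(f"Mutual information = {mutual_information(xs, ys):.3f} nats")
```

On this symmetric parabola the Pearson correlation is essentially zero even though `ys` is fully determined by `xs`, while the mutual information is clearly positive. Continuous real-world features would need binning or a dedicated estimator before a score like this could be applied.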

Detailed Experimental Protocol: Feature Selection Comparison

The comparative results in Table 3 were derived from the following experimental design [6]:

- Data Sources:

- Real-world Data: Two major longitudinal aging surveys: the Wisconsin Longitudinal Study (WLS) and the Health and Retirement Study (HRS).

- Synthetic Data: Artificially generated datasets with pre-defined linear and nonlinear relationships between features and target outcomes, serving as a ground-truth benchmark.

- Method Categories: The study evaluated:

- Linear Methods: Common linear regression-based feature selection techniques frequently used in gerontology.

- Nonlinear Methods: Three specific nonlinear feature selection methods (implemented as filter methods) capable of detecting non-linear dependencies.

- Evaluation Procedure: Algorithms were tasked with identifying which features in the dataset were relevant to a specified dependent variable (e.g., a health outcome). Performance was measured by comparing the algorithm's selected features against the known relevant features (in synthetic data) or through robust cross-validation metrics (in real-world data).

- Key Metrics: The primary metrics were precision (proportion of selected features that are truly relevant) and recall (proportion of all relevant features that are selected), combined into an overall F1-score. Stability was measured by varying the initial feature pool and observing changes in output.
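The precision, recall, and F1 definitions above can be sketched as follows for a synthetic-data setting where the truly relevant features are known. The feature names are hypothetical and serve only to make the arithmetic concrete.

```python
def selection_scores(selected, relevant):
    """Return (precision, recall, F1) for a set of selected features."""
    selected, relevant = set(selected), set(relevant)
    true_positives = len(selected & relevant)
    precision = true_positives / len(selected) if selected else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical ground truth and algorithm output.
relevant_features = {"age", "income", "grip_strength", "sleep_hours"}
selected_features = {"age", "income", "grip_strength", "zip_code", "shoe_size"}

precision, recall, f1 = selection_scores(selected_features, relevant_features)
print(f"precision={precision:.2f} recall={recall:.2f} F1={f1:.2f}")
```

Here three of the five selected features are truly relevant (precision 0.60) and three of the four relevant features were found (recall 0.75), giving an F1-score of about 0.67.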

Modern Complex Methods: Performance and Protocols

Modern complex methods, such as Graph Neural Networks (GNNs), represent a paradigm shift by learning directly from graph-structured data. This makes them uniquely powerful for problems involving interrelated entities, such as molecular structures in drug discovery or recommendation systems [1].

Experimental Performance Data

GNNs have demonstrated substantial performance improvements over previous state-of-the-art methods across diverse industrial and scientific applications [1].

Table 4: Documented Performance Gains from Graph Neural Network Applications [1]

| Application Domain | Use Case | Baseline Model | GNN Model & Improvement |

|---|---|---|---|

| Recommender Systems | Pinterest (PinSage) | Visual/Annotation Embedding Models | 150% improvement in hit-rate; 60% improvement in Mean Reciprocal Rank (MRR). |

| Recommender Systems | Uber Eats | Previous Production Model | 20%+ performance boost on key metrics; GNN-based feature became the most influential in the final model. |

| Traffic Prediction | Google Maps ETA | Prior Production Approach | Up to 50% accuracy improvement, reducing negative user outcomes. |

| Scientific Discovery | Materials Discovery (GNoME) | Traditional Screening | Discovered 2.2 million new stable crystals; powers external synthesis labs. |

Detailed Experimental Protocol: GNN for Recommendation Systems

The protocol for implementing and evaluating a GNN, as exemplified by Pinterest's PinSage system, involves the following steps [1]:

- Graph Construction: The entire Pinterest platform is represented as a bipartite graph. One set of nodes represents pins (image bookmarks), and the other represents boards (user-created collections). Edges connect pins to the boards they are saved in, capturing user curation behavior.

- Model Architecture (PinSage): A Graph Convolutional Network (GCN) variant is used. The core operation is localized convolution: each pin's representation is iteratively updated by aggregating information from its neighboring pins (those that co-occur on the same boards). This generates dense "embedding" vectors for each pin.

- Training Objective: The model is trained using a max-margin ranking loss. The goal is to learn embeddings such that pins that are frequently co-saved (positive pairs) have similar embeddings, while randomly selected pins (negative pairs) have dissimilar embeddings.

- Evaluation:

- Offline Metrics: Performance is evaluated on a held-out set of user interactions using ranking metrics:

- Hit-rate: Measures if the relevant item appears in the top-K recommendations.

- Mean Reciprocal Rank (MRR): Measures the rank position of the first relevant recommendation.

- Online A/B Testing: The final model is deployed in a live environment to a fraction of users to measure its impact on real user engagement metrics compared to the old model.

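The two offline ranking metrics described above can be sketched with their plain textbook definitions. The exact variants used in the PinSage evaluation may differ (for example in scaling), and the pin identifiers below are hypothetical.

```python
def hit_rate_at_k(ranked_lists, held_out_items, k):
    """Fraction of queries whose held-out item appears in the top-k list."""
    hits = sum(
        1 for ranked, target in zip(ranked_lists, held_out_items)
        if target in ranked[:k]
    )
    return hits / len(ranked_lists)

def mean_reciprocal_rank(ranked_lists, held_out_items):
    """Average of 1/rank of the held-out item (0 if it is not recommended)."""
    total = 0.0
    for ranked, target in zip(ranked_lists, held_out_items):
        if target in ranked:
            total += 1.0 / (ranked.index(target) + 1)
    return total / len(ranked_lists)

# Hypothetical recommendations for four queries and their held-out items.
recommendations = [
    ["pin_a", "pin_b", "pin_c"],  # held-out item at rank 1
    ["pin_d", "pin_e", "pin_f"],  # held-out item at rank 2
    ["pin_g", "pin_h", "pin_i"],  # held-out item at rank 3
    ["pin_j", "pin_k", "pin_l"],  # held-out item missing
]
held_out = ["pin_a", "pin_e", "pin_i", "pin_z"]

hit_rate = hit_rate_at_k(recommendations, held_out, k=2)
mrr = mean_reciprocal_rank(recommendations, held_out)
print(f"hit-rate@2 = {hit_rate:.2f}, MRR = {mrr:.3f}")
```

Hit-rate only asks whether the relevant item made the cut, while MRR also rewards placing it near the top, which is why the two metrics can improve by different amounts for the same model change.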

The Scientist's Toolkit: Research Reagent Solutions

Selecting the correct methodological "reagent" is as critical as choosing a chemical reagent for an experiment. The following table maps essential computational tools and techniques to the three methodological paradigms.

Table 5: Essential Research Toolkit by Methodological Paradigm

| Tool/Reagent | Primary Paradigm | Function & Purpose | Considerations |

|---|---|---|---|

| Standard Linearization (SL) [5] | Linearization | Reformulates polynomial terms in binary optimization into linear constraints with auxiliary variables. Provides a baseline MILP formulation. | Can produce weak continuous relaxation bounds. Extended reformulations (MaxBound, ND-MinVar) offer tighter bounds [5]. |

| Operator-Based Linearization (OBL) [4] | Linearization | Pre-computes and tabulates complex physical operators (e.g., fugacity) as functions of state parameters. Drastically reduces online computation. | Highly efficient for problems with smooth parameter spaces but may fail in highly complex, discontinuous regimes [4]. |

| Nonlinear Feature Selection Filters [6] | Traditional Nonlinear | Scores and ranks individual features based on nonlinear statistical associations (e.g., mutual information) with the target variable. | Low computational cost and model-agnostic. May ignore feature interactions; often used as a preprocessing step. |

| Wrapper Methods (e.g., Forward Selection) [6] | Traditional Nonlinear | Iteratively selects feature subsets by training and evaluating a predictive model's performance at each step. | Can find high-performing subsets but is computationally expensive and prone to overfitting without careful validation [6]. |

| Graph Neural Network Framework (e.g., PyTorch Geometric, DGL) | Modern Complex | Provides the software architecture to define, train, and deploy GNN models on graph-structured data. | Requires significant expertise and computational resources. Essential for implementing architectures like GraphSAGE [1]. |

| Node & Graph Embedding | Modern Complex | The vector representation of nodes or entire graphs learned by a GNN. Encodes structural and feature information for downstream tasks. | The quality of the embedding is the direct determinant of performance on tasks like link prediction or classification [1]. |

| Cross-Validation (K-Fold) [2] | All Paradigms | Robust technique to estimate model generalizability by partitioning data into training and validation sets multiple times. | Crucial for preventing overfitting, especially in nonlinear and complex methods with many parameters [2]. |

| Regularization (L1/Lasso, L2/Ridge) [2] | Linearization & Trad. Nonlinear | Adds a penalty term to the model's loss function to shrink coefficients, reducing model complexity and overfitting. | L1 regularization can drive coefficients to zero, performing automatic feature selection. A key tool for managing the bias-variance trade-off [2]. |
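The difference between L1 and L2 shrinkage noted in the last row of Table 5 can be seen in the special case of an orthonormal design matrix, where both penalized solutions have closed forms in terms of the ordinary least-squares coefficient. This sketch assumes a loss of ½‖y − Xβ‖² with penalties (λ/2)‖β‖² for ridge and λ‖β‖₁ for lasso; the coefficient values are hypothetical.

```python
def ridge_shrink(beta_ols, lam):
    """Ridge solution for an orthonormal design: proportional shrinkage."""
    return beta_ols / (1.0 + lam)

def lasso_shrink(beta_ols, lam):
    """Lasso solution for an orthonormal design: soft-thresholding."""
    magnitude = max(abs(beta_ols) - lam, 0.0)
    if magnitude == 0.0:
        return 0.0
    return magnitude if beta_ols > 0 else -magnitude

# Hypothetical least-squares coefficients and penalty strength.
ols_coefficients = [3.0, -1.5, 0.4, -0.2]
penalty = 0.5

ridge_coefficients = [ridge_shrink(b, penalty) for b in ols_coefficients]
lasso_coefficients = [lasso_shrink(b, penalty) for b in ols_coefficients]
print("ridge:", [round(b, 3) for b in ridge_coefficients])
print("lasso:", lasso_coefficients)
```

Ridge scales every coefficient toward zero but never reaches it, whereas lasso sets the two small coefficients exactly to zero, which is the automatic feature selection behavior described in the table. With correlated features the closed forms no longer apply and an iterative solver is needed.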

This guide provides a comparative analysis of linear and nonlinear analytical methods, anchored by the foundational mathematical principles of superposition and proportionality. Framed within ongoing research to linearize complex nonlinear systems, we assess the performance, applicability, and experimental validation of these approaches, with a focus on applications in biomedical and drug development research.

| Analytical Pillar | Core Principle | Ideal Application Domain | Key Advantage | Primary Limitation |

|---|---|---|---|---|

| Linear Methods | Superposition: Response to combined inputs equals the sum of individual responses [7] [8]. Proportionality: Output scaling is directly proportional to input scaling [7] [9]. | Systems with linear, time-invariant components; circuits with resistors, capacitors, inductors [7]; initial data modeling [10]. | High interpretability, mathematical tractability, efficient computation, establishes a clear baseline [7] [10]. | Cannot model inherent nonlinearities (e.g., saturation, hysteresis); fails for complex, interactive systems [7] [10]. |

| Nonlinear Methods | The system's output does not satisfy superposition or homogeneity; relationships are described by curves, thresholds, or complex interactions [7] [10]. | Systems with dynamic feedback, biological pathways, protein-ligand interactions, and real-world observational data [11] [12] [10]. | Captures complex, real-world phenomena and interactions; essential for modeling biology and advanced machine learning [11] [10]. | Computationally intensive; risk of overfitting; parameters can be difficult to estimate and interpret [11] [10]. |

| Linearization Techniques | Approximating a nonlinear system's behavior around a specific operating point using a linear model [7]. | Small-signal analysis of amplifiers; initial stability analysis of complex systems; simplifying models for local prediction [7]. | Enables use of powerful linear analysis tools on nonlinear systems; simplifies initial design and understanding [7]. | Approximation is only valid locally; fails to capture global system behavior or large disturbances [7]. |
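The local validity of linearization described in the last table row can be made concrete with a first-order Taylor expansion of a saturating dose-response curve. The Emax model form is standard, but the parameter values and the operating point below are hypothetical.

```python
E_MAX = 100.0  # hypothetical maximal effect
EC50 = 10.0    # hypothetical dose giving half-maximal effect

def emax_response(dose):
    """Saturating (nonlinear) dose-response: E = Emax * d / (EC50 + d)."""
    return E_MAX * dose / (EC50 + dose)

def emax_slope(dose):
    """Analytical derivative dE/dd = Emax * EC50 / (EC50 + d)^2."""
    return E_MAX * EC50 / (EC50 + dose) ** 2

def linearized_response(dose, operating_point):
    """First-order Taylor approximation around the operating point."""
    return emax_response(operating_point) + emax_slope(operating_point) * (
        dose - operating_point
    )

operating_point = 10.0
for dose in (9.0, 11.0, 20.0, 50.0):
    exact = emax_response(dose)
    approx = linearized_response(dose, operating_point)
    print(f"dose={dose:5.1f} exact={exact:7.2f} linear={approx:7.2f} "
          f"error={approx - exact:6.2f}")
```

Near the operating point the linear model tracks the curve closely, but at a dose of 50 it predicts an effect of 150, above the maximal effect the nonlinear model allows. This is the "valid only locally" limitation in a single example.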

Foundational Concepts: The Mathematical Bedrock

The distinction between linear and nonlinear systems is not merely operational but is rooted in fundamental mathematical properties.

1.1 Principle of Superposition

For a linear system \( L \), the response to a weighted sum of inputs is the identically weighted sum of the individual responses [8]:

\[ L(a_1 x_1(t) + a_2 x_2(t)) = a_1 L(x_1(t)) + a_2 L(x_2(t)) \]

This principle is ubiquitous, from analyzing circuits with multiple independent sources [13] [9] to solving linear differential equations governing wave propagation [8]. It allows the deconstruction of complex problems into simpler, solvable components.

1.2 Principle of Proportionality (Homogeneity)

A direct corollary of superposition, proportionality states that scaling the input to a linear system by a factor \( k \) scales the output by the same factor [7] [9]:

\[ L(k \cdot x(t)) = k \cdot L(x(t)) \]

This property is exemplified by Ohm's Law (\( V = IR \)), where doubling the voltage across a resistor doubles the current [7].

1.3 The Emergence of Nonlinearity

Nonlinear systems violate these principles. Their behavior is characterized by outputs that are not additive or directly proportional. Examples include diodes (exponential I-V relationship), saturating amplifiers, and most biological systems where feedback loops and thresholds are present [7]. The relationship between drug dose and therapeutic effect often follows a nonlinear, saturating curve rather than a straight line, a concept recognized in pharmacology for over a century [14].
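The superposition property can be checked numerically for a candidate system. The sketch below uses two hypothetical memoryless systems: a pure gain, which is linear, and a saturating response, which is not. A single test point can refute linearity but cannot prove it, so a passing check should be read as "no violation found at these inputs".

```python
def linear_system(x):
    """Pure gain: output is proportional to input."""
    return 2.5 * x

def saturating_system(x):
    """Hypothetical saturating response that levels off for large inputs."""
    return x / (1.0 + abs(x))

def satisfies_superposition(system, x1, x2, a1, a2, tol=1e-9):
    """Compare L(a1*x1 + a2*x2) with a1*L(x1) + a2*L(x2) at one test point."""
    combined = system(a1 * x1 + a2 * x2)
    summed = a1 * system(x1) + a2 * system(x2)
    return abs(combined - summed) <= tol

test_point = dict(x1=1.0, x2=3.0, a1=2.0, a2=-0.5)
print("linear system:    ", satisfies_superposition(linear_system, **test_point))
print("saturating system:", satisfies_superposition(saturating_system, **test_point))
```

Setting `a2 = 0` reduces the same check to the proportionality (homogeneity) test from section 1.2.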

Performance Comparison Guides

Comparative Analysis of Methodological Performance

The choice between linear and nonlinear models has quantifiable impacts on predictive accuracy, computational burden, and interpretability, as evidenced across engineering and biomedical fields.

Table 2.1: Performance Metrics for Linear vs. Nonlinear Methods

| Performance Metric | Linear Methods | Nonlinear Methods (e.g., ML Models) | Experimental Context & Citation |

|---|---|---|---|

| Predictive Accuracy | Lower for complex, curved relationships. Serves as a key baseline [10]. | Higher for systems with interactions and thresholds. Can boost hit rates in virtual screening by >50-fold [12]. | Drug discovery: AI integrating pharmacophoric features [12]. Data Science: Capturing non-constant change [10]. |

| Computational Efficiency | High. Solutions often analytical or requiring minimal computation (e.g., least squares) [7] [10]. | Variable to Low. Can be resource-intensive, requiring iterative optimization (e.g., gradient descent) [10]. | Circuit Analysis: Superposition provides a simpler alternative to solving simultaneous equations [13] [9]. |

| Interpretability & Transparency | High. Parameters (e.g., coefficients) have clear, direct meaning [10]. | Low to Moderate. Often act as "black boxes"; challenging to trace causality [11] [10]. | Drug Development: A barrier for regulatory acceptance of complex Causal ML models [11]. |

| Handling of High-Dimensional Data | Poor. Prone to overfitting without regularization; cannot model complex interactions well. | Good. Designed to identify complex patterns and interactions in large datasets (e.g., genomics) [11] [15]. | Biomarker Discovery: Analyzing omics data to find novel targets [15]. |

| Robustness to Assumptions | Low. Highly sensitive to violations of linearity, independence, and homoscedasticity [10]. | Higher. Flexible function forms can adapt to various data structures. | Statistical Modeling: Linear models fail if the true relationship is curved [10]. |

Experimental Protocol: Decoding Neural Signals with Linear vs. Nonlinear Classifiers

Objective: To compare the efficacy of linear and nonlinear machine learning models in decoding selective attention to speech from ear-EEG recordings [16].

Background: This experiment mirrors a core challenge in biosignal processing and drug development biomarker analysis: extracting a meaningful signal (cognitive attention) from complex, noisy physiological data.

Materials:

- Ear-EEG recording device.

- Auditory stimuli (competing speech streams).

- Preprocessing pipeline (filtering, artifact removal).

- Computing platform for model training.

Procedure:

- Data Acquisition: Record ear-EEG signals from subjects instructed to attend to one of two competing spoken messages.

- Feature Extraction: Segment EEG data and extract relevant features (e.g., time-frequency representations, amplitude features).

- Model Training & Comparison:

- Linear Model: Train a linear classifier (e.g., Linear Discriminant Analysis or Logistic Regression) on the features to predict the attended speaker.

- Nonlinear Model: Train a nonlinear classifier (e.g., a neural network, support vector machine with nonlinear kernel, or gradient boosting machine) on the same dataset.

- Validation: Evaluate both models using cross-validation, comparing key metrics: decoding accuracy, computational training time, and model interpretability (e.g., analyzing classifier weights for the linear model).

Expected Outcome: The nonlinear model is anticipated to achieve higher decoding accuracy by capturing complex neural interactions [16], but at the cost of longer training time and reduced interpretability compared to the linear baseline. This trade-off directly informs the selection of analytical methods for clinical biomarker studies.

Experimental Protocol: Validating Target Engagement via a Cellular Thermal Shift Assay (CETSA)

Objective: To provide quantitative, system-level validation of drug-target engagement in intact cells, a nonlinear pharmacological process, using CETSA [12].

Background: Confirming that a drug molecule physically engages its intended protein target in a physiological environment is a critical, nonlinear step in development, as engagement does not scale linearly with dose and is influenced by complex cellular factors.

Materials:

- Intact cells or tissue samples.

- Drug compound of interest and vehicle control.

- Thermal cycler or heating block.

- Cell lysis reagents.

- Centrifuge and protein analysis equipment (e.g., mass spectrometer, western blot).

Procedure:

- Dosing & Heating: Treat parallel cell samples with a range of drug concentrations or a vehicle control. Heat each sample to a series of precise temperatures.

- Stabilization & Lysis: Allow samples to cool, promoting aggregation of unfolded, un-stabilized proteins. Lyse cells to release soluble proteins.

- Separation & Quantification: Separate soluble proteins from aggregates by centrifugation. Quantify the remaining soluble target protein in each sample using a specific detection method (e.g., high-resolution mass spectrometry) [12].

- Data Analysis: Plot the fraction of remaining soluble protein versus temperature for each drug dose. A rightward shift in the protein's melting curve indicates thermal stabilization due to drug binding, a direct proof of target engagement.

Significance: CETSA moves beyond simplistic, linear biochemical binding assays by quantifying engagement within the nonlinear complexity of the cellular environment. It provides critical data to derisk drug programs and supports more confident go/no-go decisions [12].
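The data-analysis step of the protocol can be sketched as a comparison of apparent melting temperatures, taken here as the temperature at which half of the target protein remains soluble. The readings below are hypothetical, and the midpoint is found by simple linear interpolation; real analyses typically fit a sigmoidal curve to replicate measurements instead.

```python
def melting_temperature(temperatures, soluble_fraction):
    """Temperature where the soluble fraction crosses 0.5 (linear interpolation)."""
    points = list(zip(temperatures, soluble_fraction))
    for (t_lo, f_lo), (t_hi, f_hi) in zip(points, points[1:]):
        if f_lo >= 0.5 > f_hi:
            return t_lo + (f_lo - 0.5) * (t_hi - t_lo) / (f_lo - f_hi)
    raise ValueError("soluble fraction never crosses 0.5")

# Hypothetical soluble-fraction readings (normalized to the lowest temperature).
temperatures_c = [40, 44, 48, 52, 56, 60, 64]
vehicle_fraction = [1.00, 0.95, 0.70, 0.30, 0.10, 0.03, 0.01]
treated_fraction = [1.00, 0.98, 0.90, 0.65, 0.35, 0.12, 0.03]

tm_vehicle = melting_temperature(temperatures_c, vehicle_fraction)
tm_treated = melting_temperature(temperatures_c, treated_fraction)
print(f"Tm vehicle = {tm_vehicle:.1f} C, Tm treated = {tm_treated:.1f} C, "
      f"shift = {tm_treated - tm_vehicle:+.1f} C")
```

A positive shift corresponds to the rightward movement of the melting curve described above; repeating the calculation across drug concentrations gives the dose dependence of stabilization.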

Visualizing Pathways and Workflows

Comparative Model Selection Pathway

This diagram outlines the decision logic for researchers choosing between linear and nonlinear analytical models based on data structure and research goals [10].

Integrated Drug Discovery with AI Feedback Loops

This workflow visualizes the modern, non-linear, end-to-end drug discovery pipeline where AI-driven insights create continuous feedback loops, accelerating the entire process [12] [14].

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key materials and platforms essential for conducting research that bridges linear and nonlinear analytical methods in biomedical science.

Table 4.1: Key Research Reagents and Platforms

| Item / Solution | Function / Description | Relevance to Linearity/Nonlinearity |

|---|---|---|

| Cellular Thermal Shift Assay (CETSA) | Quantitatively measures drug-target engagement in intact cells and tissues by detecting ligand-induced thermal stabilization of proteins [12]. | Validates nonlinear pharmacology: Proves binding within the complex, nonlinear cellular environment, closing the gap between linear biochemical potency and cellular efficacy. |

| AI/ML Platforms (e.g., AlphaFold, DeepTox) | AI systems for protein structure prediction, toxicity forecasting, and generative molecular design [15]. | Embrace nonlinearity: Use deep learning (nonlinear models) to predict complex structure-activity relationships and generate novel chemical entities beyond linear intuition. |

| Real-World Data (RWD) Sources | Includes electronic health records (EHRs), insurance claims, patient registries, and wearable device data [11]. | Source of nonlinear complexity: Provides high-dimensional, observational data with inherent confounders, requiring advanced nonlinear/Causal ML methods for analysis [11]. |

| Causal Machine Learning (CML) Frameworks | Integrates ML with causal inference principles (e.g., propensity scoring, doubly robust estimation) to estimate treatment effects from RWD [11]. | Seeks linear causal estimates from nonlinear data: Applies sophisticated methods to mitigate bias in nonlinear observational systems to derive more reliable, linear-effect estimates for decision-making. |

| Electronic Lab Notebook (ELN) & LIMS (e.g., Genemod) | Digital platforms for managing experimental data, workflows, and collaboration, ensuring data integrity and traceability [15]. | Foundational for both: Enables rigorous data collection and sharing, which is essential for building, training, and validating both simple linear and complex nonlinear models. |

Application in Drug Discovery & Development

The principles of linearity and nonlinearity are not abstract concepts but are actively negotiated in modern pharmaceutical research.

5.1 Linearization in a Nonlinear World: The Role of Causal ML

A prime example of seeking linear insights from nonlinear complexity is the use of Causal Machine Learning (CML) on Real-World Data (RWD). RWD from EHRs is inherently nonlinear, filled with confounding variables and complex interactions [11]. Traditional linear regression often fails here. Advanced CML methods (such as propensity score modeling with ML, doubly robust estimation, and instrumental variable analysis) attempt to "linearize" the problem. They aim to isolate and estimate the average treatment effect, a linear causal parameter, from the messy nonlinear observational dataset. This supports tasks like creating external control arms or identifying patient subgroups, thereby enhancing trial efficiency and generalizability [11].

5.2 The Nonlinear Reality of Biology and AI-Driven Discovery

Conversely, the core of biology and modern discovery is acknowledged as fundamentally nonlinear. This is addressed not by simplification, but by employing more powerful nonlinear tools. Generative AI models (like GANs and VAEs) design novel molecules in a vast, unexplored chemical space, a process guided by nonlinear optimization [14]. Integrated discovery platforms use these AI tools not as siloed steps but in a continuous, nonlinear feedback loop, where clinical findings inform new molecule design in an iterative cycle [14]. The goal is to manage, rather than avoid, complexity to develop better drugs faster, aiming to reverse the trend of "Eroom's Law", the declining productivity of pharmaceutical R&D [14].
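To make the idea of recovering an average treatment effect from confounded data concrete, the sketch below applies inverse propensity weighting to a small, fully deterministic toy cohort. Everything here is an assumption for illustration: the cohort is synthetic, there is a single binary confounder, and the propensity score is estimated from simple stratum frequencies rather than with an ML model as in the CML methods described above.

```python
TRUE_EFFECT = 2.0  # hypothetical treatment effect built into the toy data

# (stratum, n_treated, n_control, baseline_outcome): stratum 1 is treated
# more often and also has a higher baseline outcome, which confounds the
# naive treated-vs-control comparison.
COHORT = [(0, 20, 80, 1.0), (1, 80, 20, 5.0)]

records = []  # each record: (stratum, treated_flag, outcome)
for stratum, n_treated, n_control, baseline in COHORT:
    records += [(stratum, 1, baseline + TRUE_EFFECT)] * n_treated
    records += [(stratum, 0, baseline)] * n_control

def naive_difference(records):
    """Unadjusted difference in mean outcome between treated and control."""
    treated = [y for _, t, y in records if t == 1]
    control = [y for _, t, y in records if t == 0]
    return sum(treated) / len(treated) - sum(control) / len(control)

def propensity_by_stratum(records):
    """Estimate P(treated | stratum) from observed frequencies."""
    counts = {}
    for stratum, t, _ in records:
        n, n_t = counts.get(stratum, (0, 0))
        counts[stratum] = (n + 1, n_t + t)
    return {s: n_t / n for s, (n, n_t) in counts.items()}

def ipw_ate(records):
    """Inverse-propensity-weighted estimate of the average treatment effect."""
    propensity = propensity_by_stratum(records)
    total = 0.0
    for stratum, t, y in records:
        e = propensity[stratum]
        total += t * y / e - (1 - t) * y / (1 - e)
    return total / len(records)

naive_estimate = naive_difference(records)
ipw_estimate = ipw_ate(records)
print(f"true effect = {TRUE_EFFECT}, naive = {naive_estimate:.2f}, "
      f"IPW = {ipw_estimate:.2f}")
```

The naive comparison overstates the effect (4.4 versus a true value of 2.0) because treated patients come disproportionately from the high-baseline stratum, while reweighting by the propensity score recovers the built-in effect. With real-world data the confounders are many and partly unobserved, so no such exact recovery should be expected.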

The attempt to linearize inherently nonlinear biological processes represents a persistent, yet often inadequate, tradition in research. From predicting the binding of a drug molecule to forecasting population growth, biological systems are governed by complex interactions, feedback loops, and emergent behaviors that defy simple linear approximation. This comparison guide objectively evaluates the performance of traditional linearization approaches against advanced nonlinear methods across two foundational biological scales: molecular interactions and population dynamics. Framed within a broader thesis that argues for the necessity of physics-aware and mathematically robust nonlinear models, we present experimental data demonstrating that nonlinear methodologies consistently provide superior accuracy, generalizability, and biological insight. This is critical for researchers, scientists, and drug development professionals whose work depends on predictive precision, from in silico drug discovery to ecological and agricultural forecasting [17] [18].

Comparative Analysis: Protein-Ligand Co-Folding Prediction

The prediction of how a small molecule (ligand) binds to a protein target is a cornerstone of rational drug design. Traditional methods often rely on linear approximations or simplified physical scoring functions. The advent of deep learning-based "co-folding" models, which predict protein and ligand structure simultaneously, promises a paradigm shift [17].

Performance Benchmark: Nonlinear AI Models vs. Traditional Docking

Experimental data from adversarial testing reveals a significant performance gap. When the binding site is known, traditional physics-based docking methods like AutoDock Vina achieve approximately 60% accuracy in placing the ligand in its native pose. In contrast, the nonlinear, diffusion-based architecture of AlphaFold3 (AF3) achieves over 93% accuracy under the same conditions, approaching experimental-level precision [17].

Table 1: Performance Comparison of Protein-Ligand Structure Prediction Methods

| Method Category | Example Model | Key Principle | Accuracy (Known Binding Site) | Generalization Robustness | Physical Principle Adherence |

|---|---|---|---|---|---|

| Traditional Docking | AutoDock Vina | Linear scoring functions, rigid-body/soft docking | ~60% [17] | Moderate (sensitive to scoring function) | High (explicit physics-based) |

| Deep Learning Docking | DiffDock | Nonlinear deep learning on poses | ~38% [17] | Low (data-driven) | Low (potential for steric clashes) [17] |

| Co-folding AI | AlphaFold3 (AF3) | Nonlinear diffusion, unified atomic modeling | >93% [17] | Variable (see adversarial tests) | Questionable (memorization bias) [17] |

| Co-folding AI | RoseTTAFold All-Atom | Nonlinear attention-based networks | ~Benchmark Level [17] | Variable | Questionable [17] |

The Robustness Challenge: Exposing Nonlinear Model Vulnerabilities

Despite high benchmark accuracy, nonlinear co-folding models exhibit critical vulnerabilities when tested against fundamental physical principles. In a key experiment, all residues in the ATP-binding site of Cyclin-dependent kinase 2 (CDK2) were mutated to glycine, removing side-chain interactions essential for binding. Basic physical reasoning predicts that the ligand should be displaced. However, models like AF3 and RoseTTAFold All-Atom continued to predict ATP binding in the original pose, indicating overfitting and a lack of genuine physical understanding [17]. In a more extreme test, mutating binding site residues to bulky phenylalanines caused severe steric clashes in model predictions, as the diffusion process failed to resolve atomic overlaps within its iterative steps [17].

Comparative Analysis: Modeling Biological Growth and Population Dynamics

Modeling the growth of organisms or populations is a classic problem where nonlinear functions are essential to capture phases of acceleration, inflection, and saturation.

Evaluating Growth Curve Models

A 2025 study on Pekin duck growth compared ten nonlinear mathematical functions (e.g., Brody, Logistic, Gompertz, von Bertalanffy). The Gompertz model was identified as the most accurate for describing growth trajectories, based on metrics like the adjusted coefficient of determination and Akaike's information criterion [19]. Its first derivative correctly identified the peak absolute growth rate at 23-24 days before decline. The Brody model showed the least favorable fit [19]. This demonstrates that selecting the appropriate nonlinear model is context-dependent and requires empirical comparison.

Table 2: Comparison of Nonlinear Growth Models for Pekin Ducks [19]

| Model Name | Model Form | Key Characteristics | Goodness-of-Fit Ranking | Identified Peak Growth (Days) |

|---|---|---|---|---|

| Gompertz | $W(t) = A \cdot \exp(-\exp(-k(t-t_i)))$ | Sigmoidal, asymmetric inflection | Best | 23 (Danish), 24 (French) |

| Logistic | $W(t) = A / (1 + \exp(-k(t-t_i)))$ | Sigmoidal, symmetric inflection | High | Not Specified |

| von Bertalanffy | $W(t) = A (1 - \exp(-k(t-t_i)))^3$ | Derived from metabolic rates | Moderate | Not Specified |

| Brody | $W(t) = A (1 - b \cdot \exp(-k t))$ | Monotonic approach to asymptote | Poorest | Not Applicable |

The Limitation of Linear Population Models

Theoretical research highlights the fundamental incompatibility of standard linear, one-sex models (e.g., Lotka-Leslie) for population projection. Projections based solely on male parameters diverge from those based solely on female parameters over finite time, except in unrealistic stationary conditions [20]. Nonlinear two-sex models are required to capture the interactive dynamics that determine a population's true intrinsic growth rate, which may or may not be bracketed by the one-sex linear rates depending on initial conditions [20].
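The divergence between one-sex projections can be illustrated with a small numerical sketch. The vital rates below are hypothetical, chosen only for illustration and not taken from [20]. The `leslie` helper is likewise an assumption of this example. It builds two linear Leslie matrices, one from "female" and one from "male" parameters, and projects the same starting population under each.

```python
import numpy as np

def leslie(fertility, survival):
    """Build a Leslie matrix: fertilities on the first row, survival on the subdiagonal."""
    n = len(fertility)
    L = np.zeros((n, n))
    L[0, :] = fertility
    L[np.arange(1, n), np.arange(0, n - 1)] = survival
    return L

# Hypothetical vital rates for three age classes (illustrative only).
L_female = leslie([0.0, 1.2, 1.0], [0.6, 0.8])
L_male = leslie([0.0, 1.0, 0.9], [0.6, 0.8])

# The dominant eigenvalue is the asymptotic growth factor per time step.
lam_female = np.max(np.abs(np.linalg.eigvals(L_female)))
lam_male = np.max(np.abs(np.linalg.eigvals(L_male)))

# Project the same starting population under each one-sex model.
n0 = np.array([100.0, 50.0, 25.0])
steps = 50
total_female = (np.linalg.matrix_power(L_female, steps) @ n0).sum()
total_male = (np.linalg.matrix_power(L_male, steps) @ n0).sum()
ratio = total_female / total_male

print(f"growth factor (female-based): {lam_female:.3f}")
print(f"growth factor (male-based):   {lam_male:.3f}")
print(f"projected size ratio after {steps} steps: {ratio:.1f}")
```

Because each one-sex model has its own dominant eigenvalue, the two projections drift apart geometrically unless the rates happen to coincide. A two-sex model would replace the fixed fertility row with a mating function that depends on both sexes, which makes the projection nonlinear.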

Cross-Disciplinary Validation of Nonlinear Methodologies

The superiority of nonlinear methods is corroborated in diverse analytical fields. In Laser-Induced Breakdown Spectroscopy (LIBS) for lithium quantification in geological samples, nonlinear models (e.g., Artificial Neural Networks) significantly outperformed linear methods (e.g., univariate calibration). Linear models were heavily affected by saturation and matrix effects, while nonlinear methods achieved errors compatible with semi-quantitative analysis [21]. Similarly, in predicting complex traits like soybean branching from genomic data, nonlinear models (Support Vector Regression, Deep Belief Networks) consistently outperformed linear counterparts in linking genotype to phenotype, enabling data-driven breeding decisions [18].

Experimental Protocols for Key Cited Studies

Protocol 1: Adversarial Testing of Protein-Ligand Co-folding Models [17]

- System Selection: Choose a high-resolution protein-ligand crystal structure (e.g., CDK2 with ATP).

- Wild-Type Prediction: Input the wild-type protein sequence and ligand SMILES string into the co-folding model (AF3, RFAA, etc.). Generate a predicted structure and calculate RMSD against the crystal structure.

- Binding Site Mutagenesis:

  - Glycine Scan: Mutate all binding site residue identities to glycine. Submit the mutated sequence and unchanged ligand for prediction.

  - Phenylalanine Packing: Mutate all binding site residues to phenylalanine. Submit for prediction.

  - Dissimilar Residue Replacement: Mutate each binding site residue to a chemically dissimilar amino acid (e.g., charged to hydrophobic). Submit for prediction.

- Analysis: Visually and quantitatively (RMSD) assess whether the predicted ligand pose is displaced. Check for steric clashes and for the preservation of physicochemically implausible interactions.
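The quantitative part of the analysis step can be sketched as a plain ligand RMSD calculation. This is a minimal example under several assumptions: the predicted and reference complexes are already superposed on the protein, ligand atoms are listed in the same order, and no symmetry correction is applied. The coordinates and the 2 Å cutoff (a commonly used convention) are illustrative and are not taken from [17].

```python
import numpy as np

def ligand_rmsd(pred, ref):
    """Root-mean-square deviation between two (n_atoms, 3) coordinate arrays."""
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    if pred.shape != ref.shape:
        raise ValueError("coordinate arrays must have the same shape")
    return float(np.sqrt(((pred - ref) ** 2).sum(axis=1).mean()))

# Hypothetical three-atom ligand, coordinates in angstroms.
ref_pose = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.5, 0.0]])
in_place_pose = ref_pose + 0.3                          # small shift on every axis
displaced_pose = ref_pose + np.array([6.0, 0.0, 0.0])   # ligand pushed out of the site

CUTOFF = 2.0  # assumed threshold for calling a pose "unchanged"
rmsd_in_place = ligand_rmsd(in_place_pose, ref_pose)
rmsd_displaced = ligand_rmsd(displaced_pose, ref_pose)

for label, value in [("in place", rmsd_in_place), ("displaced", rmsd_displaced)]:
    status = "pose retained" if value <= CUTOFF else "pose displaced"
    print(f"{label}: RMSD = {value:.2f} A ({status})")
```

In the adversarial setting, a mutated binding site that still yields a "pose retained" result is the warning sign: the model has reproduced the wild-type pose despite the loss of the interactions that should hold the ligand there.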

Protocol 2: Comparative Fitting of Biological Growth Curves [19]

- Data Collection: Obtain longitudinal body weight data for the organism across its developmental timeline.

- Model Selection: Choose a set of candidate nonlinear growth functions (e.g., Gompertz, Logistic, Brody, von Bertalanffy).

- Parameter Estimation: Use nonlinear regression algorithms (e.g., Levenberg-Marquardt) to fit each model's parameters to the weight-time data.

- Goodness-of-Fit Evaluation: Calculate and compare statistical criteria for each fitted model:

  - Adjusted Coefficient of Determination (R²adj)

  - Root Mean Square Error (RMSE)

  - Akaike's Information Criterion (AIC)

  - Bayesian Information Criterion (BIC)

- Biological Interpretation: Select the best-fitting model. Calculate its first derivative to identify the time and magnitude of peak growth rate.
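The protocol above can be sketched with SciPy's `curve_fit`, which uses a Levenberg-Marquardt solver by default when no bounds are given. The weights here are synthetic (generated from a Gompertz curve with added noise), and the parameter values, noise level, and the reduced set of two candidate models are assumptions made for illustration. They are not data from [19].

```python
import numpy as np
from scipy.optimize import curve_fit

def gompertz(t, A, k, ti):
    return A * np.exp(-np.exp(-k * (t - ti)))

def logistic(t, A, k, ti):
    return A / (1.0 + np.exp(-k * (t - ti)))

def aic(y, y_hat, n_params):
    """Least-squares AIC up to an additive constant: n*ln(RSS/n) + 2p."""
    n = len(y)
    rss = np.sum((y - y_hat) ** 2)
    return n * np.log(rss / n) + 2 * n_params

# Synthetic body weights (g) every two days; hypothetical parameters.
rng = np.random.default_rng(0)
t = np.arange(0.0, 50.0, 2.0)
y = gompertz(t, 3500.0, 0.08, 23.0) + rng.normal(0.0, 20.0, t.size)

p0 = [y.max(), 0.1, t.mean()]  # rough starting values
fits = {}
for name, model in {"Gompertz": gompertz, "Logistic": logistic}.items():
    params, _ = curve_fit(model, t, y, p0=p0, maxfev=10000)
    fits[name] = (params, aic(y, model(t, *params), len(params)))

best = min(fits, key=lambda name: fits[name][1])

# For the Gompertz form used here, dW/dt peaks at t = ti with value A*k/e.
A_hat, k_hat, ti_hat = fits["Gompertz"][0]
peak_rate = A_hat * k_hat / np.e

print(f"lowest AIC: {best}")
print(f"peak growth at day {ti_hat:.1f}, about {peak_rate:.0f} g/day")
```

The closed-form peak follows from setting the second derivative of the Gompertz curve to zero, which places the inflection at $t = t_i$ where $W = A/e$. For models without a convenient closed form, the first derivative can be evaluated numerically on a fine time grid instead.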

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Computational Tools for Nonlinear Biological Analysis

| Item/Tool Name | Category | Primary Function in Nonlinear Analysis | Example Use Case |

|---|---|---|---|

| AlphaFold3 | Software/AI Model | Predicts 3D structure of protein-ligand complexes via diffusion-based nonlinear architecture. | In silico drug screening and binding mode prediction [17]. |

| Gompertz Model Package | Software/Algorithm | Fits sigmoidal growth curves to data. Parameters describe growth rate and asymptotic mass. | Modeling animal growth kinetics in agriculture [19]. |

| AutoDock Vina | Software | Performs molecular docking using linear combination of scoring functions. | Traditional baseline for protein-ligand binding studies [17]. |

| SHAP (SHapley Additive exPlanations) | Software/Algorithm | Explains output of nonlinear machine learning models by attributing feature importance. | Interpreting genome-wide association studies in plant breeding [18]. |

| PyTorch/TensorFlow | Software Framework | Provides libraries for building and training custom deep neural networks. | Developing novel nonlinear models for phenotypic prediction [18]. |

| LIBS Spectrometer & ANN Software | Instrument/Analysis | Captures optical emission spectra; Artificial Neural Networks quantify elements despite nonlinear matrix effects. | Quantitative geochemical analysis in mining [21]. |

Visualizing Nonlinear Analysis Workflows

Workflow for Testing AI Model Robustness in Protein-Ligand Folding

Multi-Model Comparison Workflow for Growth Curve Analysis

The central paradigm of molecular biology—the linear flow of information from DNA to RNA to protein—has long provided a foundational framework for biomedical research and biomarker development [22]. This inherently linear, reductionist logic has been translated into statistical modeling, where linear regression and related methods assume simple, direct, and proportional relationships between variables [23]. While offering simplicity and interpretability, this approach is increasingly recognized as insufficient for capturing the complex, dynamic, and interconnected nature of biological systems [22] [24]. In clinical practice, the limitations are stark: biomarkers built on linear, single-analyte logic—such as the Prostate-Specific Antigen (PSA) test for prostate cancer or PD-L1 expression tests for immunotherapy response—are plagued by high rates of false positives, false negatives, and poor predictive accuracy [22]. This guide objectively compares the performance of traditional linear modeling approaches with emerging nonlinear and dynamic methodologies, framing the discussion within a broader thesis on the essential evolution from reductionist to systems-oriented research in biomedicine.

The Oversimplification Problem: Static Models in a Dynamic World

Linear models impose a static, one-way relationship between predictors and outcomes, an assumption that rarely holds in physiology and disease.

Clinical Consequences of Linear Simplification

The reliance on linear, single-factor biomarkers leads to significant clinical shortcomings. For example, a PSA level above 3 ng/mL results in a false positive for prostate cancer in approximately three out of four cases [22]. In immuno-oncology, the standard PD-L1 protein expression test predicts patient response to powerful therapies with an average accuracy of only 40% [22]. These failures stem from modeling complex, multi-factorial diseases as if they were governed by single, linearly proportional causes.

Table 1: Performance Limitations of Linear Biomarker Models in Clinical Practice

| Biomarker/Test | Clinical Use | Reported Performance Limitation | Primary Reason for Failure |

|---|---|---|---|

| PSA Level | Prostate Cancer Screening | ~75% of positive results are false positives [22] | Fails to account for non-cancerous prostate conditions; single-analyte linear threshold. |

| PD-L1 IHC Test | Predicting Immunotherapy Response | ~40% Average Accuracy [22] | Linear protein level misses dynamic tumor-immune system interactions and spatial context. |

| Genetic Risk Variants | Complex Disease Prediction | Low prevalence, small effect sizes, incomplete penetrance [22] | Assumes additive, independent effects, ignoring epistatic and network interactions. |

Statistical Flaws: Multicollinearity and Misleading Significance

Beyond biology, linear models suffer from critical statistical vulnerabilities. A large-scale simulation study demonstrated that multicollinearity—correlation among predictor variables—severely distorts parameter estimates and their significance, even at low correlation levels [25]. Conventional reliance on t-statistics and p-values was found to be misleading, as "significant" coefficients often bore little relation to their true simulated values [25]. This is particularly dangerous in omics research, where many measured features (e.g., genes in a pathway) are highly interdependent, violating the linear assumption of independent predictors.

Diagram 1: Central Dogma vs. Biological Reality

Missed Dynamics: The Failure to Capture Time and Interaction

Biological systems are defined by feedback loops, adaptive changes, and time-dependent processes. Static linear models are "inherently short-sighted," ignoring how present states and interventions shape future outcomes [26].

The Need for Temporal and Spatial Dynamics

In cardiology, for instance, the progression of arrhythmias involves complex, nonlinear interactions across ion channels, cell networks, and tissue structure over time [24]. A linear model relating a single genetic variant to arrhythmia risk cannot capture this multi-scale, dynamic pathophysiology. Similarly, the long-range three-dimensional (3D) architecture of the genome acts as a dynamic, heritable imprint of cellular state that regulates gene expression in ways completely missed by linear DNA-to-RNA assays [22]. Biomarkers based on this 3D architecture have shown superior diagnostic performance by capturing this higher-order information [22].

From Population Averages to Individual Trajectories

Personalized medicine requires models that account for intra-individual variability over time. Nonlinear methods, including those powered by AI, can analyze longitudinal data to identify dynamic risk trajectories for chronic diseases, offering a more powerful prediction than a single static measurement [27]. This shift from a "snapshot" to a "movie" view of biology is critical for proactive health management.

Comparison Guide: Nonlinear Methodologies for Complex Biomedicine

This section compares the core capabilities of linear models against a suite of advanced nonlinear alternatives, supported by experimental data.

Table 2: Comparison of Linear and Nonlinear Modeling Approaches in Biomedicine

| Feature | Traditional Linear Models (e.g., Logistic/Cox Regression) | Nonlinear & Dynamic Alternatives | Comparative Experimental Insight |

|---|---|---|---|

| Core Assumption | Linear, additive relationship between inputs and output [23]. | Can capture complex, nonlinear interactions and feedback loops [23] [24]. | A bootstrapping correlation network method for variable selection outperformed PCA and Elastic Net in clustering precision on high-dimensional leukocyte imaging data [28]. |

| Temporal Dynamics | Static; treats time as a fixed covariate at best. | Explicitly models system evolution, state changes, and time-dependent risks [26] [24]. | Dynamic models in economics and ecology reveal long-term trade-offs (e.g., soil degradation) that static models completely miss [26]. |

| Handling High-Dimensional Data | Prone to overfitting with many predictors; requires variable selection/shrinkage. | Designed for high-dimensional spaces (e.g., deep learning, kernel methods) [29] [30]. | Deeply-learned GLMs (dlglm) handle complex nonlinearities and high-dimensional data while explicitly accounting for problematic missing data patterns [30]. |

| Interpretability | High; coefficients directly indicate effect size and direction [23]. | Often lower ("black box"); though methods like SHAP values aim to improve interpretability. | Mechanistic computational models (e.g., cardiac electrophysiology) offer high interpretability by being based on biophysical first principles [24]. |

| Validation & Reporting | Established guidelines (TRIPOD) exist, but adherence is poor; external validation rare [31]. | ML/AI guidelines emerging; validation remains a major challenge [31] [29]. | A 2025 review found no sign of an increase in ML use in biomedical prediction models, and poor reporting practices remain common across all model types [31]. |

Diagram 2: Nonlinear Model Selection Workflow

Experimental Protocols & Data

Protocol: Demonstrating Multicollinearity Impact in Linear Regression

This simulation protocol, based on [25], quantifies how multicollinearity corrupts linear model estimates.

- Data Generation: Simulate a dataset with 5,000 observations and 10 predictors (x1 to x10). Create specified correlation structures: variables x1-x4 are mutually correlated, x5-x7 are correlated, and x8-x10 are independent. Use correlations (ρ) from 0.05 to 0.95.

- True Model Definition: Generate the outcome variable y as a linear combination of predictors with pre-defined coefficients (e.g., 1, 10, 24 for different variables), plus random normal error at low (σ=20), medium (σ=100), and high (σ=200) levels.

- Model Fitting & Analysis: Fit an ordinary least squares linear regression to estimate coefficients. Compare the estimated coefficients and their t-statistics to the known true values across 120 random samples for each correlation-error scenario.

- Key Outcome: The study found that even low correlation (ρ=0.30) caused significant bias and inflation of coefficients, and t-statistics became unreliable indicators of a predictor's true importance [25].
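A scaled-down version of this simulation can be sketched with NumPy alone. The sketch uses four mutually correlated predictors, 500 observations, and 200 replicates per setting. These sizes and the coefficient values are assumptions chosen to keep the example fast and are smaller than the design described in [25]. It tracks only one symptom: how much the estimate of a single coefficient varies from sample to sample as ρ increases.

```python
import numpy as np

def coef_spread(rho, n_obs=500, n_reps=200, sigma=20.0, seed=0):
    """Std. dev. of the OLS estimate of the first slope across replicate samples."""
    rng = np.random.default_rng(seed)
    beta = np.array([1.0, 10.0, 24.0, 5.0])  # hypothetical true coefficients
    p = beta.size
    cov = np.full((p, p), rho)               # equal pairwise correlation rho
    np.fill_diagonal(cov, 1.0)
    estimates = []
    for _ in range(n_reps):
        X = rng.multivariate_normal(np.zeros(p), cov, size=n_obs)
        y = X @ beta + rng.normal(0.0, sigma, n_obs)
        design = np.column_stack([np.ones(n_obs), X])  # add intercept column
        b, *_ = np.linalg.lstsq(design, y, rcond=None)
        estimates.append(b[1])                         # slope of the first predictor
    return float(np.std(estimates))

spread = {rho: coef_spread(rho) for rho in (0.05, 0.30, 0.95)}
for rho, sd in spread.items():
    print(f"rho = {rho:.2f}: spread of estimated coefficient = {sd:.2f}")
```

With a true coefficient of 1 and an error standard deviation of 20, a single fitted value can land far from the truth when the predictors are strongly correlated, which is why an individual "significant" coefficient deserves caution in that regime.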

Protocol: Bootstrapping Correlation Networks for Variable Selection

This protocol for nonlinear dimensionality reduction is detailed in [28].

- Network Construction: From high-dimensional data (e.g., hundreds of morpho-kinetic variables from live imaging of leukocytes), calculate the correlation matrix between all variables to form a weighted network.

- Bootstrapping: Re-sample the dataset with replacement many times (e.g., 1000 iterations), recalculating the correlation network each time.

- Edge Stability Assessment: For each edge (variable pair), compute the proportion of bootstrap networks in which it appears. Edges with high stability are considered robust.

- Variable Selection: Rank variables by their connectivity strength in the stable network. Select the top-ranked variables as the most informative features for downstream analysis (e.g., UMAP for visualization).

- Key Outcome: This method outperformed PCA and Elastic Net in clustering precision on biological datasets, preserving interpretability by selecting original variables [28].
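The bootstrapping and edge-stability steps can be sketched as follows. The correlation threshold (0.5), the stability cutoff (0.9), the number of resamples, and the synthetic data are all assumptions of this example. The exact criteria used in [28] may differ, and `stable_connectivity` is a hypothetical helper name.

```python
import numpy as np

def stable_connectivity(X, n_boot=200, threshold=0.5, stability=0.9, seed=0):
    """Return edge frequencies and per-variable strength in the stable network."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    edge_hits = np.zeros((p, p))
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)            # resample rows with replacement
        corr = np.corrcoef(X[idx], rowvar=False)
        edge_hits += np.abs(corr) > threshold       # edge present in this resample
    freq = edge_hits / n_boot
    np.fill_diagonal(freq, 0.0)                     # ignore self-correlation
    stable = freq >= stability
    full_corr = np.abs(np.corrcoef(X, rowvar=False))
    strength = (full_corr * stable).sum(axis=1)     # summed weight of stable edges
    return freq, strength

# Synthetic data: variables 0-2 share a latent signal, variables 3-5 are noise.
rng = np.random.default_rng(1)
latent = rng.normal(size=300)
X = rng.normal(size=(300, 6))
X[:, :3] += 2.0 * latent[:, None]

freq, strength = stable_connectivity(X)
ranking = np.argsort(strength)[::-1]
print("variables ranked by stable connectivity:", ranking.tolist())
```

Because the output is a ranking of the original variables rather than a set of derived components, the selected features keep their biological meaning, which is the interpretability advantage noted in the key outcome.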

Protocol: Multi-Scale Cardiac Electrophysiology Modeling

This mechanistic modeling workflow is described in [24].

- Ion Channel Level (ODE): Model the kinetics of single ion channels using ordinary differential equations (ODEs), parameterized by voltage and ion concentrations. Incorporate effects of drugs or mutations.

- Cellular Level (ODE System): Integrate multiple ion current models into a cardiomyocyte model to simulate action potentials. Adjust parameters for disease states.

- Tissue Level (PDE): Use partial differential equations (PDEs) in a reaction-diffusion system to model the propagation of electrical waves across cardiac tissue, incorporating cellular geometry and fibrosis.

- Simulation & Validation: Run simulations to investigate arrhythmia mechanisms. Validate predictions against optical mapping or clinical electrophysiology data.

- Key Outcome: Provides a highly controlled, causal understanding of system dynamics, enabling hypothesis testing and revealing emergent phenomena not obvious from linear components [24].
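As a much-reduced illustration of the cellular-level ODE step, the sketch below integrates the FitzHugh-Nagumo equations, a generic two-variable excitable-cell model. This is a swapped-in simplification and not the ion-channel-based cardiomyocyte models described in [24]. The parameter values are the classic textbook ones, and the variables are dimensionless.

```python
import numpy as np
from scipy.integrate import solve_ivp

def fitzhugh_nagumo(t, state, stimulus, a=0.7, b=0.8, tau=12.5):
    """v: fast membrane-like variable; w: slow recovery variable."""
    v, w = state
    dv = v - v**3 / 3.0 - w + stimulus
    dw = (v + a - b * w) / tau
    return [dv, dw]

t_eval = np.linspace(0.0, 200.0, 2001)

def simulate(stimulus):
    sol = solve_ivp(fitzhugh_nagumo, (0.0, 200.0), [-1.0, -0.5],
                    args=(stimulus,), t_eval=t_eval, rtol=1e-8, atol=1e-10)
    return sol.y[0]

v_rest = simulate(0.0)    # no stimulus: the cell settles to a resting state
v_paced = simulate(0.5)   # constant stimulus: sustained oscillation

late = t_eval > 100.0     # discard the initial transient
swing_rest = v_rest[late].max() - v_rest[late].min()
swing_paced = v_paced[late].max() - v_paced[late].min()

print(f"voltage swing without stimulus: {swing_rest:.3f}")
print(f"voltage swing with stimulus:    {swing_paced:.3f}")
```

The response is not proportional to the input: a modest constant stimulus switches the system from a stable resting state to sustained oscillation. Qualitative changes of this kind are what a linear model cannot represent.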

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Advanced Biomedical Modeling

| Item / Solution | Primary Function in Research | Relevance to Nonlinear Dynamics |

|---|---|---|

| 3C/Hi-C Assay Kits | To capture the 3D architecture and long-range interactions of chromatin in the nucleus [22]. | Provides the spatial interaction data necessary to move beyond linear genome annotation, enabling biomarkers based on structural dynamics. |

| Live-Cell Imaging Systems with High-Content Analysis | To longitudinally track morpho-kinetic variables (e.g., cell shape, movement) in response to stimuli [28]. | Generates high-dimensional temporal data essential for training and validating dynamic, nonlinear models of cell behavior. |

| Uniform Manifold Approximation and Projection (UMAP) | A nonlinear dimensionality reduction technique for visualization and exploration of high-dimensional data [28]. | Reveals complex clusters and relationships in data that linear methods like PCA often obscure. |

| dlglm Software Architecture | A deeply-learned generalized linear model framework that handles non-linearities and complex missing data patterns [30]. | Enables flexible, robust supervised learning on messy real-world biomedical datasets where linear models and simple imputation fail. |

| Cardiac Electrophysiology Simulation Platforms (e.g., Chaste, OpenCARP) | Software to implement multi-scale mechanistic models of heart electrical activity [24]. | Allows in silico experimentation of nonlinear dynamics across scales, from ion channel to whole organ, for basic research and drug safety testing. |

| Structured Missing Data Test Datasets | Curated datasets with known missingness mechanisms (MCAR, MAR, MNAR) [30]. | Critical for benchmarking and developing models like dlglm that must perform reliably under realistic, non-ideal data conditions. |

Diagram 3: Multi-Scale Cardiac Model Workflow

This comparison guide evaluates the performance and application of foundational nonlinear concepts—chaos, sensitivity to initial conditions, and fractals—against traditional linearization methods within scientific research. Framed within a broader thesis on comparative methodology, we objectively assess these paradigms through experimental data, detailing protocols from fields including pharmacodynamics, physiological modeling, and materials science. The analysis reveals that nonlinear methods provide superior accuracy for modeling complex, heterogeneous systems but at increased computational cost, whereas linear methods offer speed and simplicity suitable for first approximations or systems with small perturbations [32] [6]. This guide is structured for researchers and drug development professionals, providing quantitative comparisons, detailed experimental methodologies, essential research toolkits, and visualizations of key concepts.

Core Conceptual Definitions and Comparative Thesis

The study of dynamical systems is fundamentally divided into linear and nonlinear approaches. Linear methods assume proportionality and superposition, where system output is directly proportional to input and the net response to multiple stimuli is the sum of individual responses. These methods are analytically tractable, computationally fast, and form the basis of traditional modeling in many engineering and biological applications [32]. However, they often fail to capture the complex behaviors inherent in physiological, pharmacological, and natural systems.

In contrast, nonlinear dynamics acknowledge that relationships between variables are not proportional and that systems can exhibit emergent, complex behaviors. This guide focuses on three pivotal nonlinear concepts:

- Chaos: A behavior in deterministic systems characterized by aperiodic, seemingly random output that is exquisitely sensitive to initial conditions [33] [34].

- Sensitivity to Initial Conditions (The Butterfly Effect): A hallmark of chaotic systems where infinitesimally small differences in starting points lead to radically divergent future states, making long-term prediction practically impossible even though the system is deterministic [35]. This was classically demonstrated by Edward Lorenz in weather modeling [36].

- Fractals: Geometric shapes or temporal patterns that exhibit self-similarity across scales, meaning their structure appears similar regardless of magnification level [37]. They are characterized by a non-integer fractal dimension (D), which quantifies complexity and space-filling capacity [33] [38].
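Sensitivity to initial conditions is easy to demonstrate numerically. The sketch below uses the logistic map with r = 4, a standard textbook chaotic system chosen here for brevity (it is not one of the models from the cited studies). Two trajectories start 10⁻¹⁰ apart and are iterated with the same deterministic rule.

```python
import numpy as np

def logistic_map(x0, r=4.0, n_steps=100):
    """Iterate x -> r * x * (1 - x) and return the whole trajectory."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    for i in range(n_steps):
        x[i + 1] = r * x[i] * (1.0 - x[i])
    return x

a = logistic_map(0.2)
b = logistic_map(0.2 + 1e-10)   # almost identical starting point
gap = np.abs(a - b)

print(f"gap after 5 steps:   {gap[5]:.2e}")
print(f"largest gap, steps 40-100: {gap[40:].max():.2f}")
```

The gap roughly doubles at each step on average, so a difference far below any realistic measurement precision grows to the full size of the state space within a few dozen iterations.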

The central thesis explored herein is that while linearization provides critical simplifying power, nonlinear methods employing chaos and fractal theory are essential for realistic modeling of complex systems. This is particularly true in drug development, where physiological processes are inherently nonlinear, heterogeneous, and multiscale [34] [36].

Methodological Comparison: Experimental & Analytical Protocols

This section details key experimental and computational protocols used to generate data comparing linear and nonlinear approaches.

2.1 Protocol A: Biomechanical Simulation of Soft Tissues

This protocol compares linear elastostatic analysis versus geometrically nonlinear analysis for simulating a biological organ [32].

- Sample Preparation: Obtain CT scan images (e.g., from the Visible Human Project). Segment the images for the target organ (e.g., kidney) using software like ITK-SNAP. Reconstruct a 3D surface model using MeshLab.

- Mesh Generation & Material Assignment: Import the 3D geometry into finite element analysis (FEA) software (e.g., ANSYS). Generate a tetrahedral mesh. Assign material properties: for linear analysis, use a constant Young's modulus and Poisson's ratio; for nonlinear analysis, define a hyperelastic material model (e.g., Mooney-Rivlin).

- Loading & Boundary Conditions: Apply a fixed constraint to one end of the organ. Apply a known displacement or pressure load to a specified surface area to simulate surgical palpation.

- Simulation & Analysis:

  - Perform a linear static analysis.

  - Perform a nonlinear static analysis accounting for large deformations.

- Output Metrics: Extract maximum principal stress, strain energy, and reaction force. Compute the percentage error of linear results relative to the nonlinear benchmark.

2.2 Protocol B: Fractal Dimension Analysis of Physical Surfaces

This protocol quantifies surface complexity using fractal dimension, a nonlinear metric [37] [39].

- Imaging: Obtain a 3D topographic profile of the sample surface. For pharmaceutical particles, use Atomic Force Microscopy (AFM). For anatomical structures (e.g., vascular beds), analyze segmented micro-CT scans.

- Data Preparation: Isolate the height map or binary volume data. For the box-counting method, superimpose a series of grids with varying box sizes ε.

- Fractal Calculation: For each box size ε, count the number of boxes, N(ε), that contain part of the surface or pattern. Plot log(N(ε)) versus log(1/ε).

- Dimension Estimation: Perform a linear regression on the linear portion of the log-log plot. The fractal dimension D is estimated as the slope of the best-fit line. Surface complexity is directly related to D, where a higher D indicates a more complex, space-filling structure.
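The box-counting steps can be sketched for a 2D binary image as follows. The test pattern is a discrete Sierpinski triangle, chosen because its dimension is known exactly (log 3 / log 2 ≈ 1.585). The function assumes a square image whose side length is divisible by every box size. Real AFM or micro-CT data would also need thresholding and a check that the log-log plot is actually linear over the fitted range.

```python
import numpy as np

def box_counting_dimension(image, box_sizes):
    """Estimate the fractal dimension of a square binary image by box counting."""
    image = np.asarray(image, dtype=bool)
    n = image.shape[0]
    counts = []
    for s in box_sizes:
        # Split the image into s-by-s boxes and count boxes containing any pixel.
        blocks = image.reshape(n // s, s, n // s, s)
        counts.append(blocks.any(axis=(1, 3)).sum())
    # Slope of log N(eps) versus log(1/eps) is the dimension estimate.
    slope, _ = np.polyfit(np.log(1.0 / np.asarray(box_sizes)), np.log(counts), 1)
    return float(slope)

n = 256
i, j = np.indices((n, n))
sierpinski = (i & j) == 0            # discrete Sierpinski triangle
sizes = [1, 2, 4, 8, 16, 32, 64]

d_sierpinski = box_counting_dimension(sierpinski, sizes)
d_filled = box_counting_dimension(np.ones((n, n), dtype=bool), sizes)

print(f"Sierpinski triangle: D = {d_sierpinski:.3f}")
print(f"filled square:       D = {d_filled:.3f}")
```

The filled square serves as a sanity check: a pattern that covers the plane should return a dimension of 2, while the self-similar pattern returns a non-integer value between 1 and 2.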

2.3 Protocol C: Detecting Nonlinear Dependence in High-Dimensional Datasets

This protocol compares linear and nonlinear feature selection methods to identify relevant variables in large datasets [6].

- Dataset: Use a large survey database (e.g., health and aging study) with numerous features and a target outcome variable.

- Ground Truth Establishment: Use synthetic data where the functional relationship (linear or nonlinear) between features and target is known.

- Method Application:

  - Linear Methods: Apply filter methods (e.g., Pearson correlation), wrapper methods (e.g., forward selection with linear regression), or embedded methods (e.g., Lasso regression).

  - Nonlinear Methods: Apply mutual information (a nonlinear dependency measure), or wrapper methods using nonlinear classifiers (e.g., Random Forest).

- Performance Evaluation: Rank features by relevance scores from each method. Compare rankings to the ground truth using precision-recall curves and area under the curve (AUC). Stability is tested by evaluating method performance on random subsets of the data.
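The contrast between a linear filter and a nonlinear dependency measure can be sketched on synthetic data with a known ground truth. The mutual information estimate below is a simple histogram (plug-in) estimator written for this example. It is positively biased and sensitive to the number of bins, so a dedicated estimator would be preferable in practice. The feature names and the functional form of the target are assumptions.

```python
import numpy as np

def mutual_information(x, y, bins=16):
    """Histogram (plug-in) estimate of mutual information in nats."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)
    py = pxy.sum(axis=0, keepdims=True)
    nz = pxy > 0                                   # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))

rng = np.random.default_rng(0)
n = 5000
features = {
    "linear": rng.uniform(-1, 1, n),      # enters the target linearly
    "quadratic": rng.uniform(-1, 1, n),   # enters the target as a square
    "irrelevant": rng.uniform(-1, 1, n),  # unrelated to the target
}
y = features["linear"] + 3.0 * features["quadratic"] ** 2 + rng.normal(0, 0.1, n)

pearson = {k: float(np.corrcoef(v, y)[0, 1]) for k, v in features.items()}
mi = {k: mutual_information(v, y) for k, v in features.items()}

for name in features:
    print(f"{name:>10}: Pearson r = {pearson[name]:+.2f}, MI = {mi[name]:.2f} nats")
```

The quadratic feature is strongly related to the target yet has a Pearson correlation near zero, because its effect is symmetric around zero. Mutual information ranks it alongside the linear feature and well above the irrelevant one.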

Performance Data & Comparative Analysis

The following tables summarize quantitative findings from executed experimental protocols, comparing the efficacy of linear versus nonlinear methodologies.

Table 1: Comparison of Linear vs. Nonlinear Finite Element Analysis for Kidney Simulation [32]

| Performance Metric | Linear Elastic Analysis | Geometrically Nonlinear Analysis | Percentage Error (Linear vs. Nonlinear) |

|---|---|---|---|

| Maximum Principal Stress (Pa) | 1.82 x 10⁵ | 2.41 x 10⁵ | -24.5% |

| Total Strain Energy (J) | 5.71 x 10⁻⁴ | 8.90 x 10⁻⁴ | -35.8% |

| Reaction Force (N) | 0.85 | 1.12 | -24.1% |

| Computation Time (Relative) | 1.0 (Baseline) | 6.8 - 8.5 | N/A |

| Primary Advantage | Computational speed, simplicity | Accuracy under large deformation | N/A |

| Best Use Case | Preliminary design, small-strain scenarios | Final validation, surgical simulation | N/A |

Table 2: Performance of Linear vs. Nonlinear Feature Selection Methods [6]

| Evaluation Metric | Linear Methods (e.g., Correlation, Lasso) | Nonlinear Methods (e.g., Mutual Information, Random Forest) | Inference |

|---|---|---|---|

| AUC for Linear Features | 0.89 - 0.94 | 0.91 - 0.95 | Comparable performance |

| AUC for Nonlinear Features | 0.52 - 0.61 | 0.83 - 0.91 | Nonlinear methods superior |

| Stability (Score Variance) | High (sensitive to feature subset) | Low (robust to feature subset) | Nonlinear methods more reliable |

| Computational Cost | Low | Moderate to High | Linear methods are faster |

Table 3: Fractal Dimensions in Physiological and Pharmaceutical Systems

| System / Material | Fractal Dimension (D) | Measurement Technique | Interpretation & Relevance |

|---|---|---|---|

| Koch Curve (Theoretical) | 1.262 [37] | Mathematical generation | Benchmark for self-similar patterns. |

| Pharmaceutical Particles | 2.1 - 2.2 [39] | Atomic Force Microscopy (AFM) | Quantifies surface roughness, influences dissolution rate & flowability. |

| Pulmonary Bronchial Tree | ~2.7 - 3.0 (theoretical) [37] | Morphometric analysis | Optimizes gas exchange; deviation from ideal may indicate disease. |

| Solutions to BLP Equation | Non-integer, scale-dependent [38] | Voxel-based box-counting | Confirms self-affine, multiscale structure in nonlinear wave dynamics. |

| Oceanic Plastic Debris | Multifractal spectrum [33] | Multifractal Detrended Fluctuation Analysis | Characterizes complex distribution impacting climate dynamics. |

Visualizations of Nonlinear Concepts and Workflows

Diagram 1: Chaos and Sensitivity in a Pharmacodynamic Pathway

Diagram 2: Comparative Workflow: Linear vs. Nonlinear/Fractal Methods

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Tools for Nonlinear Dynamics and Fractal Research

| Tool / Reagent | Category | Primary Function | Example Application in Research |

|---|---|---|---|

| Finite Element Analysis (FEA) Software (ANSYS, Abaqus) | Computational | Solves partial differential equations for stress/strain in complex geometries. | Comparing linear vs. nonlinear material models for organ simulation [32]. |

| Atomic Force Microscope (AFM) | Instrumentation | Provides 3D topographic surface profiles at nanoscale resolution. | Measuring fractal dimension of pharmaceutical particle surfaces for QC [39]. |

| ImageJ / ITK-SNAP / MeshLab | Software | Open-source tools for image segmentation, stack processing, and 3D model reconstruction. | Creating 3D organ geometries from CT/MRI scans for simulation [32]. |

| Box-Counting Algorithm Software | Algorithm | Computes fractal dimension from 2D or 3D digital data by analyzing scaling of pattern with measurement scale. | Quantifying complexity of vascular networks or surface roughness [37] [38]. |

| Mutual Information Calculator | Statistical Tool | Measures general (linear and nonlinear) dependence between variables. | Non-linear feature selection in high-dimensional biological datasets [6]. |

| Nonlinear Solver Libraries (SUNDIALS, SciPy) | Computational | Provides numerical methods (e.g., for ODEs/PDEs) capable of handling stiff, chaotic systems. | Simulating chaotic pharmacodynamic models or reaction-diffusion systems [33] [34]. |

| Fractional Calculus Toolbox | Mathematical | Operates with fractional derivatives/integrals, essential for modeling fractal-order dynamics. | Analyzing systems with memory effects and power-law responses [33] [40]. |

The evolution from linear pharmacokinetics (PK) to systems pharmacology represents a fundamental paradigm shift in biomedical research and drug development. Classical linear PK, grounded in the law of mass action and in receptor theory that dates back more than a century, relies on compartmental models that assume direct proportionality between dose and systemic exposure [41] [42]. While effective for many small molecules, these models often fail to capture the complex, nonlinear behaviors inherent in biological systems, such as saturable processes, feedback loops, and network interactions [43] [44].

The limitation of reductionist approaches has spurred the rise of more integrative disciplines. Quantitative Systems Pharmacology (QSP) has emerged as a holistic framework that uses computational modeling to bridge systems biology and pharmacology [45] [46]. It examines interactions between drugs, biological networks, and disease processes to generate mechanistic, predictive insights [44]. This evolution is driven by the need to address the high attrition rates in drug development and to tackle complex 21st-century diseases, moving from a focus on single targets to understanding polypharmacology and network dynamics [42]. The adoption of Model-Informed Drug Development (MIDD), championed by regulatory agencies, underscores this transition, where QSP and related modeling approaches are becoming the new standard for improving efficiency and decision-making [45] [46].

Core Methodological Comparison: Linear, Nonlinear, and Systems Approaches

This section provides a foundational comparison of the defining characteristics, underlying mathematics, and primary applications of linear pharmacokinetics, nonlinear methods, and systems pharmacology.

Table 1: Foundational Comparison of Pharmacokinetic Modeling Paradigms

| Aspect | Linear (Classical) Pharmacokinetics | Nonlinear Pharmacokinetic Methods | Systems Pharmacology (QSP) |
|---|---|---|---|
| Core Principle | Direct proportionality between dose and exposure (AUC, Cmax); superposition applies [41]. | Dose/exposure relationships are not proportional due to saturable processes (e.g., metabolism, transport) [47] [48]. | Integrative, network-based modeling of drug effects within biological systems [44]. |
| Mathematical Foundation | Linear ordinary differential equations (ODEs); first-order kinetics [49]. | Nonlinear ODEs (e.g., Michaelis-Menten, Target-Mediated Drug Disposition) [47]. | Systems of (non)linear ODEs modeling pathways, feedback, and homeostatic control [44]. |
| Typical Model Structure | Compartmental models (1-, 2-, or 3-compartment) [49]. | Compartmental models integrated with saturable functions [47] [48]. | Mechanistic, physiology-based networks linking PK, biological pathways, and disease modules [46] [44]. |
| Primary Goal | Describe plasma concentration-time profiles to calculate standard PK parameters (CL, Vd, t½) [41] [49]. | Characterize and quantify sources of non-proportionality in PK [43] [48]. | Predict clinical outcomes, optimize therapeutic strategies, and generate testable biological hypotheses [45] [46]. |
| Treatment of Biology | Empirical; the body is a "black box" with abstract compartments [43]. | Semi-mechanistic; incorporates specific saturable biological processes [47] [48]. | Explicitly mechanistic; aims to represent key biological structures and interactions [44] [42]. |
| Major Application Era | Mid-20th century to present, foundational to clinical PK [50] [42]. | Late 20th century to present, crucial for biologics and drugs with saturable clearance [47]. | 21st century to present, for complex diseases and novel therapeutic modalities [45] [46]. |
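
The "Core Principle" row can be made concrete with a small calculation. The sketch below is illustrative only: it assumes a one-compartment model with an intravenous bolus dose, and every parameter value (`V`, `CL`, `KM`, `VMAX`) is hypothetical rather than taken from the cited studies. For first-order elimination, AUC equals Dose/CL, so dose-normalized AUC is constant. For Michaelis-Menten elimination, integrating dC/dt = -Vmax·C/(Km + C) from C0 = Dose/V gives AUC = C0·(Km + C0/2)/Vmax, so dose-normalized AUC rises with dose.

```python
def auc_linear(dose, cl):
    """AUC(0-inf) after an IV bolus with first-order elimination: Dose / CL."""
    return dose / cl


def auc_michaelis_menten(dose, v, vmax, km):
    """AUC(0-inf) after an IV bolus with saturable (Michaelis-Menten) elimination.

    From dC/dt = -vmax * C / (km + C) with C(0) = dose / v:
        AUC = integral of C dt = integral_0^C0 (km + C) / vmax dC
            = C0 * (km + C0 / 2) / vmax
    """
    c0 = dose / v
    return c0 * (km + c0 / 2.0) / vmax


# Hypothetical parameters, chosen only for illustration.
V = 10.0              # volume of distribution (L)
CL = 2.0              # linear clearance (L/h)
KM = 5.0              # Michaelis constant (mg/L)
VMAX = CL / V * KM    # mg/L/h; matches the linear model at low concentrations

if __name__ == "__main__":
    print("dose  AUC/dose (linear)  AUC/dose (Michaelis-Menten)")
    for dose in (10.0, 100.0, 1000.0):
        lin = auc_linear(dose, CL) / dose
        mm = auc_michaelis_menten(dose, V, VMAX, KM) / dose
        print(f"{dose:6.0f}  {lin:10.3f}  {mm:10.3f}")
```

A dose-normalized AUC that stays flat across dose levels is consistent with linear PK; a systematic rise points to a saturable elimination process.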

Detailed Experimental Protocols and Data

Protocol: Investigating Nonlinear PK via Receptor-Mediated Endocytosis (RME)

This protocol, based on the study of therapeutic proteins like epidermal growth factor (EGF) receptor ligands, details how to build a mechanistic model for nonlinear clearance [47].

1. System Definition:

- Ligand: A therapeutic protein (e.g., monoclonal antibody, growth factor).

- Biological System: Cells expressing the target receptor (e.g., EGFR).

- Process: Receptor-Mediated Endocytosis (RME) – the primary source of nonlinear, saturable elimination for many biologics [47].

2. Detailed Mechanistic Model (Model A) Construction:

- Develop a system of ordinary differential equations (ODEs) based on mass action kinetics to describe the following species and transitions [47]:

- Extracellular Ligand (Lex): Binds to free membrane receptor (Rm) with association rate constant kon.

- Membrane Complex (RLm): Formed from binding; can dissociate (koff) or be internalized (kinterRL).

- Intracellular Complex (RLi): Can be recycled to membrane (krecyRL), degraded (kdegRL), or dissociate (kbreak).

- Free Intracellular Receptor (Ri): Generated from complex dissociation; can be recycled (krecyR) or degraded (kdegR).

- Receptor Synthesis: Constant zero-order synthesis rate (ksynth).

3. Model Reduction and Analysis:

- Apply quasi-steady-state assumptions for receptor species to derive a simplified, identifiable model (e.g., an extended Michaelis-Menten equation) [47].

- Use in vitro data (binding, internalization, degradation rates) to inform model parameters.

- Simulate concentration-time profiles at several dose levels to visualize the nonlinearity: at low doses, clearance is high and roughly constant (first-order elimination); at high doses, clearance falls as the RME pathway saturates and elimination approaches a constant, zero-order rate [47].

4. Key Experimental Insight: The model demonstrates that a receptor system can efficiently eliminate drug even with a low total receptor number, and nonlinearity is more pronounced for systems with high receptor availability and fast internalization [47].
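
The protocol above can be sketched as a small mass-action simulation. This is a minimal illustration, not the published model: all rate-constant values are hypothetical, receptor synthesis is assumed to enter the intracellular pool, and a free-receptor internalization rate (`kinterR`) is added as an assumption so that a ligand-free steady state exists. A fixed-step Runge-Kutta integrator keeps the example dependency-free; a real analysis would more likely use a stiff ODE solver from a library such as SciPy or SUNDIALS.

```python
# Hypothetical rate constants (per minute; kon in 1/(nM*min), ksynth in nM/min).
PARAMS = {
    "kon": 0.1, "koff": 0.1,          # binding and dissociation at the membrane
    "kinterRL": 0.1,                  # internalization of the membrane complex
    "krecyRL": 0.01, "kdegRL": 0.05,  # recycling and degradation of RLi
    "kbreak": 0.05,                   # intracellular dissociation of RLi
    "kinterR": 0.02,                  # ASSUMPTION: free-receptor internalization
    "krecyR": 0.05, "kdegR": 0.01,    # recycling and degradation of Ri
    "ksynth": 0.01,                   # zero-order synthesis (assumed into Ri)
}


def rme_rhs(y, p):
    """Mass-action right-hand side for [Lex, Rm, RLm, RLi, Ri] (all in nM)."""
    lex, rm, rlm, rli, ri = y
    net_binding = p["kon"] * lex * rm - p["koff"] * rlm
    d_lex = -net_binding
    d_rm = -net_binding + p["krecyR"] * ri - p["kinterR"] * rm
    d_rlm = net_binding - p["kinterRL"] * rlm + p["krecyRL"] * rli
    d_rli = p["kinterRL"] * rlm - (p["krecyRL"] + p["kdegRL"] + p["kbreak"]) * rli
    d_ri = (p["ksynth"] + p["kbreak"] * rli + p["kinterR"] * rm
            - (p["krecyR"] + p["kdegR"]) * ri)
    return [d_lex, d_rm, d_rlm, d_rli, d_ri]


def baseline_receptors(p):
    """Ligand-free steady state of membrane (Rm) and intracellular (Ri) receptor."""
    ri0 = p["ksynth"] / p["kdegR"]
    rm0 = p["krecyR"] * ri0 / p["kinterR"]
    return rm0, ri0


def rk4(rhs, y, p, t_end, dt):
    """Integrate dy/dt = rhs(y, p) from 0 to t_end with classical RK4 steps."""
    for _ in range(int(round(t_end / dt))):
        k1 = rhs(y, p)
        k2 = rhs([a + 0.5 * dt * b for a, b in zip(y, k1)], p)
        k3 = rhs([a + 0.5 * dt * b for a, b in zip(y, k2)], p)
        k4 = rhs([a + dt * b for a, b in zip(y, k3)], p)
        y = [a + dt / 6.0 * (b + 2 * c + 2 * d + e)
             for a, b, c, d, e in zip(y, k1, k2, k3, k4)]
    return y


def fraction_remaining(dose_nM, p=PARAMS, t_end=120.0, dt=0.01):
    """Fraction of the initial extracellular ligand still free at t_end (min)."""
    rm0, ri0 = baseline_receptors(p)
    y_end = rk4(rme_rhs, [dose_nM, rm0, 0.0, 0.0, ri0], p, t_end, dt)
    return y_end[0] / dose_nM


if __name__ == "__main__":
    for dose in (0.1, 100.0):
        print(f"dose {dose:6.1f} nM -> fraction remaining {fraction_remaining(dose):.4f}")
```

With these illustrative values, the low dose is cleared almost completely within the simulated window, while most of the high dose remains because the receptor pool is saturated. This is the dose-dependent clearance described in step 3.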

Case Study: Ciclosporin Population PK with Nonlinear Kinetics

This clinical study compared linear and nonlinear modeling strategies for the immunosuppressant ciclosporin in renal transplant patients [48].

1. Experimental Design:

- Data: 2,969 whole-blood concentration measurements (pre-dose and 2-hour post-dose) from 173 adult patients.

- Models Developed: Four population PK (PopPK) models were constructed [48]:

- Linear 1-Compartment Model: Standard linear approach.

- Linear 2-Compartment Model: Standard linear approach with peripheral compartment.

- Empirical Nonlinear Model: Incorporated nonlinearity via empirical covariate relationships.

- Theory-Based Nonlinear Model: Incorporated mechanistic nonlinear sources (e.g., saturable binding to erythrocytes, auto-inhibition of CYP3A4/P-gp).

2. Results and Comparative Performance: The predictive performance was evaluated using prediction-corrected visual predictive checks (pcVPC). The theory-based nonlinear model demonstrated superior predictive performance compared to the linear and empirical nonlinear models. It effectively captured the saturation of erythrocyte binding and the complex drug-drug interaction with prednisolone, which induces metabolic enzymes [48].

Table 2: Performance Summary of Ciclosporin PopPK Models [48]

| Model Type | Structural Basis | Treatment of Nonlinearity | Predictive Performance | Identified Sources of Nonlinearity |
|---|---|---|---|---|
| Linear 1-Compartment | Empirical compartment | None (assumed linearity) | Poorest | Not applicable |
| Linear 2-Compartment | Empirical compartment | None (assumed linearity) | Poor | Not applicable |
| Empirical Nonlinear | Empirical compartment + covariates | Statistically identified covariate effects | Improved, but less consistent | Demographics (e.g., body weight) |
| Theory-Based Nonlinear | Mechanism-informed compartment | Explicit saturable binding & enzyme inhibition | Best | Saturable erythrocyte binding, CYP3A4/P-gp auto-inhibition, drug interaction with prednisolone |

3. Conclusion: Incorporating mechanistically justified nonlinearity significantly improved model predictability and provided a more robust tool for dose individualization, highlighting the limitation of assuming linear PK for drugs like ciclosporin [48].
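
One of the nonlinearity sources named above, saturable binding to erythrocytes, can be illustrated with a simple binding-capacity expression. The sketch below is not the model from the cited study: it assumes a single saturable binding site in red blood cells, and the hematocrit, `bmax`, and `kd` values are hypothetical placeholders. It shows why whole-blood concentrations rise less than proportionally with plasma concentrations once the binding sites fill.

```python
def blood_concentration(c_plasma, hct=0.40, bmax=3000.0, kd=500.0):
    """Whole-blood concentration (ng/mL) for a given plasma concentration.

    Assumes one saturable binding site in erythrocytes (all values hypothetical):
        C_rbc   = bmax * C_plasma / (kd + C_plasma)
        C_blood = (1 - hct) * C_plasma + hct * C_rbc
    """
    c_rbc = bmax * c_plasma / (kd + c_plasma)
    return (1.0 - hct) * c_plasma + hct * c_rbc


def blood_to_plasma_ratio(c_plasma, **kwargs):
    """Blood:plasma ratio; it falls as erythrocyte binding saturates."""
    return blood_concentration(c_plasma, **kwargs) / c_plasma


if __name__ == "__main__":
    for c_p in (50.0, 200.0, 1000.0, 2000.0):
        ratio = blood_to_plasma_ratio(c_p)
        print(f"plasma {c_p:7.1f} ng/mL -> blood:plasma ratio {ratio:.2f}")
```

A linear model fitted to whole-blood data implicitly treats this ratio as constant, which is one plausible reason a model with explicit saturable binding predicts better.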

Visualization of Methodological Evolution

Visualization 1: The Pharmacological Modeling Evolution

Detailed RME Pathway for Therapeutic Proteins

Visualization 2: Receptor-Mediated Endocytosis (RME) Mechanism

Impact and Future Outlook: QSP as a New Standard

The transition to systems pharmacology is marked by tangible impacts on drug development efficiency and decision-making. Industry analyses, such as one from Pfizer presented at the QSP Summit 2025, estimate that MIDD approaches (enabled by QSP, PBPK, and other models) save approximately $5 million and 10 months per development program [45]. Beyond cost and time savings, QSP's power lies in its ability to generate and test biological hypotheses in silico, identify knowledge gaps, and simulate clinical trials for scenarios impractical to test experimentally, such as in rare diseases or pediatric populations [45] [46].

The future of the field is oriented towards greater integration and personalization. Key trends include [45] [46] [44]:

- Virtual Patient Populations and Digital Twins: Creating simulated cohorts to explore personalized therapies and optimize trial design, especially for hard-to-study populations.

- AI and Machine Learning Integration: Enhancing model development, data integration, and pattern recognition from large, complex datasets.

- Regulatory Acceptance: Growing endorsement by agencies like the FDA and EMA as part of the MIDD framework, with QSP evidence increasingly included in submissions.

- Reduction of Animal Testing: Providing predictive, mechanistic alternatives that align with the "3Rs" (Replace, Reduce, Refine) principle.

Table 3: Key Research Reagent Solutions and Tools

| Tool/Reagent Category | Specific Example/Representation | Primary Function in Research |
|---|---|---|
| Mechanistic Biological System | Epidermal Growth Factor Receptor (EGFR) Pathway [47] | A well-characterized model system for studying Receptor-Mediated Endocytosis (RME), nonlinear PK, and signal transduction dynamics. |
| Computational Modeling Software | PBPK/QSP Platforms (e.g., Certara's Suite, MATLAB/SimBiology) [45] [46] | Software environments for building, simulating, and validating mechanistic, multi-scale models that integrate physiology, pharmacology, and disease biology. |
| Modeling Framework | Target-Mediated Drug Disposition (TMDD) Model [47] | A semi-mechanistic structural model framework specifically designed to characterize PK driven by high-affinity binding to a pharmacological target. |
| In Vitro Binding & Trafficking Assays | Surface Plasmon Resonance (SPR), Internalization Flow Cytometry [47] | Experimental methods to quantify critical rate constants (kon, koff, kinter) needed to parameterize mechanistic RME and TMDD models. |
| Clinical PopPK/PD Software | NONMEM, Monolix, R/Phoenix [48] | Industry-standard tools for population analysis of clinical trial data, enabling the identification of nonlinear kinetics and covariate effects in patient populations. |
| Therapeutic Modality | Monoclonal Antibodies (mAbs) & Therapeutic Proteins [47] | A major class of drugs whose disposition is frequently dominated by nonlinear, saturable clearance pathways (e.g., RME), making them prime subjects for advanced PK/PD modeling. |

Toolkit for Complexity: Implementing Nonlinear Methods in the Drug Development Pipeline

The analysis of physiological signals—such as electroencephalogram (EEG), electrocardiogram (ECG), and speech—presents a fundamental challenge in biomedical research and drug development: accurately quantifying the complexity of underlying biological systems. Traditional linear methods of signal analysis, including spectral analysis and linear time-invariant modeling, often fail to capture the inherent nonlinearity, non-stationarity, and multiscale dynamics of living systems [51]. This limitation has driven a paradigm shift toward nonlinear time series analysis, where entropy measures serve as critical tools for assessing system complexity and disorder [52] [53].

This comparison guide is framed within a broader thesis investigating the comparative efficacy of traditional linearization approaches versus contemporary nonlinear methods. The core argument posits that nonlinear complexity measures, particularly those based on permutation entropy and its derivatives, provide a more robust, information-rich, and physiologically relevant characterization of signals than linear statistics alone [51] [54]. These measures are instrumental in distinguishing pathological states, such as epileptic seizures from normal brain activity or shockable from non-shockable cardiac arrhythmias, and in monitoring dynamic states like anesthesia depth [55] [56]. For researchers and drug development professionals, selecting the optimal complexity metric is crucial for developing sensitive diagnostic biomarkers and evaluating therapeutic interventions.
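
To make the central measure concrete, here is a minimal sketch of normalized permutation entropy in the ordinal-pattern formulation. It is an illustration, not the implementation used in the cited studies. The embedding `order` and `delay` defaults are assumptions, and ties are broken by sample position.

```python
import math
import random
from collections import Counter


def permutation_entropy(signal, order=3, delay=1, normalize=True):
    """Shannon entropy (bits) of the ordinal patterns found in `signal`.

    Each window of `order` samples spaced `delay` apart is mapped to the
    permutation that sorts it; the entropy of the pattern frequencies is
    returned, divided by log2(order!) when `normalize` is True.
    """
    n_windows = len(signal) - (order - 1) * delay
    if n_windows <= 0:
        raise ValueError("signal is too short for the chosen order and delay")
    counts = Counter()
    for i in range(n_windows):
        window = [signal[i + j * delay] for j in range(order)]
        # Ordinal pattern: indices of the window sorted by value (stable on ties).
        pattern = tuple(sorted(range(order), key=window.__getitem__))
        counts[pattern] += 1
    entropy = -sum((c / n_windows) * math.log2(c / n_windows)
                   for c in counts.values())
    if normalize:
        entropy /= math.log2(math.factorial(order))
    return entropy


if __name__ == "__main__":
    rng = random.Random(0)
    noise = [rng.random() for _ in range(5000)]           # irregular signal
    sine = [math.sin(0.05 * k) for k in range(5000)]      # regular signal
    print("white noise:", round(permutation_entropy(noise), 3))
    print("sine wave:  ", round(permutation_entropy(sine), 3))
```

A regular signal such as the sine wave uses only a few ordinal patterns and scores low, while an irregular signal uses all patterns almost equally and scores near 1. This contrast is what permutation entropy relies on to separate physiological states.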

Comparative Framework: A Spectrum of Entropy Measures

Nonlinear entropy measures for physiological signal analysis exist on a spectrum, from those closely related to classical linear statistics to novel, fully nonlinear approaches. The following diagram illustrates the logical relationships and evolution of key entropy measures discussed in this guide, positioning them within the broader research context of linear versus nonlinear methodology.

Methodological Evolution of Entropy Measures

Empirical Performance Comparison Across Physiological Signals

The following tables summarize quantitative data from key studies, comparing the performance of permutation entropy (PE) and its modern variants against traditional linear methods and other nonlinear entropy measures across different physiological signals and classification tasks.

Performance in Differentiating Physiological and Pathological States

Table 1: Comparison of entropy measure performance in classifying clinical states from EEG and ECG signals. SE: Sample Entropy, PE: Permutation Entropy, SSCE: State Space Correlation Entropy. Values are mean ± standard deviation where available. [55]

| Signal Type | Clinical States (Class 0 vs. Class 1) | Sample Entropy (SE) | Permutation Entropy (PE) | State Space Correlation Entropy (SSCE) | Best Performing Measure |

|---|---|---|---|---|---|

| EEG | Non-Seizure vs. Seizure | 0.98 ± 0.25 vs. 0.34 ± 0.13 | 0.84 ± 0.05 vs. 0.67 ± 0.07 | 1.60 ± 0.36 vs. 1.72 ± 0.46 | SSCE (Higher mean for seizure) [55] |