BLASTp vs Deep Learning: Revolutionizing EC Number Annotation for Drug Discovery & Protein Function

This comprehensive article explores the critical shift in enzymatic function annotation from traditional homology-based methods like BLASTp to modern deep learning approaches.

BLASTp vs Deep Learning: Revolutionizing EC Number Annotation for Drug Discovery & Protein Function

Abstract

This comprehensive article explores the critical shift in enzymatic function annotation from traditional homology-based methods like BLASTp to modern deep learning approaches. Tailored for researchers, scientists, and drug development professionals, we dissect the foundational principles, practical methodologies, common pitfalls, and rigorous comparative validation of these tools. We provide actionable insights for selecting and optimizing the right annotation strategy to accelerate target identification, understand metabolic pathways, and enhance the accuracy of functional predictions in biomedical research.

EC Numbers Decoded: Why Accurate Enzyme Annotation is Critical for Biomedical Research

Application Notes

Within the thesis investigating BLASTp versus deep learning for EC number annotation, accurate EC number assignment is critical for functional prediction, pathway reconstruction, and drug target validation. The Enzyme Commission (EC) number is a four-level hierarchical code (e.g., EC 3.4.21.4) that classifies enzymes based on catalyzed reactions.

Current Annotation Paradigms:

- Sequence Homology (BLASTp): Relies on pairwise alignment to annotated sequences in databases like Swiss-Prot. It is robust for well-conserved families but fails for distant homologs or novel functions.

- Deep Learning (DL) Models: Use protein sequences, and sometimes structures, as input to predict EC numbers directly, learning complex patterns beyond linear homology. They show superior performance for remote homology detection.

Quantitative Performance Comparison: Recent benchmark studies on held-out test sets highlight the performance gap between traditional and modern methods.

Table 1: Comparative Performance of EC Number Prediction Methods

| Method Category | Example Tool/Model | Reported Precision | Reported Recall | Key Advantage | Primary Limitation |

|---|---|---|---|---|---|

| Sequence Homology | BLASTp (vs. Swiss-Prot) | 0.85 - 0.92 | 0.65 - 0.75 | High precision for clear homologs; interpretable alignments. | Low recall for novel/divergent enzymes; transfers annotations potentially erroneously. |

| Deep Learning | DeepEC, CLEAN | 0.88 - 0.94 | 0.82 - 0.90 | High recall; detects complex sequence-function relationships. | "Black-box" predictions; requires large, high-quality training data. |

| Hybrid Approach | EFI-EST, enzymeML | 0.90 - 0.95 | 0.80 - 0.88 | Balances reliability and coverage; integrates multiple evidence types. | More complex pipeline to implement and manage. |

Protocols

Protocol 1: Standard BLASTp-based EC Number Annotation

Objective: To assign a putative EC number to a query protein sequence using homology search. Research Reagent Solutions:

- Query Protein Sequence(s): FASTA format.

- Curated Reference Database: UniProtKB/Swiss-Prot.

- BLAST+ Suite: Command-line tools (

blastp). - E-value Threshold: Standard cutoff of 1e-10.

- Scripting Environment: Python/Biopython for results parsing.

Methodology:

- Database Preparation: Download the latest Swiss-Prot database in FASTA format. Generate a BLAST database using

makeblastdb. - Execute Search: Run

blastp:blastp -query query.fasta -db swissprot -out results.xml -evalue 1e-10 -outfmt 5 -max_target_seqs 50. - Result Parsing: Extract top hits with significant alignment (E-value < 1e-10, identity > 30%). Map the EC numbers from the hit(s) to the query.

- Assignment Logic: If all top-5 significant hits share the same full EC number, assign it to the query. If they disagree, assign the lowest common hierarchical level (e.g., EC 2.7.-.- if hits are kinases but types differ).

Protocol 2: Deep Learning-Based Prediction Using a Pre-trained Model (CLEAN)

Objective: To predict EC numbers directly from primary sequence using a deep learning model. Research Reagent Solutions:

- Query Protein Sequence(s): FASTA format.

- Pre-trained CLEAN Model: Available from GitHub repository.

- Python Environment: PyTorch, NumPy, BioPython.

- Hardware: GPU (recommended) for inference speed.

Methodology:

- Environment Setup: Install dependencies:

pip install torch biopython. Clone the CLEAN repository. - Sequence Encoding: Convert each query sequence into the numerical token-embedding representation required by the CLEAN model.

- Model Inference: Load the pre-trained model weights. Feed the encoded sequence through the model to obtain prediction scores for over 5000 possible EC numbers.

- Thresholding: Apply a calibrated confidence threshold (e.g., 0.75) to the prediction scores. Output all EC numbers with scores above the threshold as multi-label predictions.

Protocol 3: Experimental Validation of Predicted EC Activity

Objective: To biochemically validate a predicted EC number for a putative enzyme. Research Reagent Solutions:

- Purified Recombinant Protein: Expressed from the gene of interest.

- Assay-Specific Substrates & Buffers: As dictated by the predicted EC class.

- Detection Instrumentation: Spectrophotometer, fluorimeter, or HPLC-MS.

- Negative Controls: Heat-inactivated enzyme, no-enzyme buffer.

Methodology:

- Assay Design: Based on the predicted EC number (e.g., for a predicted oxidoreductase, EC 1.-.-.-), design a reaction mix containing appropriate buffer, cofactor (e.g., NADH), and putative substrate.

- Kinetic Measurement: Incubate the purified protein with the reaction mix. Monitor the change in absorbance/fluorescence (e.g., NADH depletion at 340 nm) over time.

- Data Analysis: Calculate initial velocity. Vary substrate concentration to determine Michaelis-Menten kinetics (Km, Vmax). Confirm product formation via a complementary method like HPLC.

- Verification: Activity must be absent in negative controls. The kinetic parameters should be consistent with known enzymes in the same EC subclass.

Visualizations

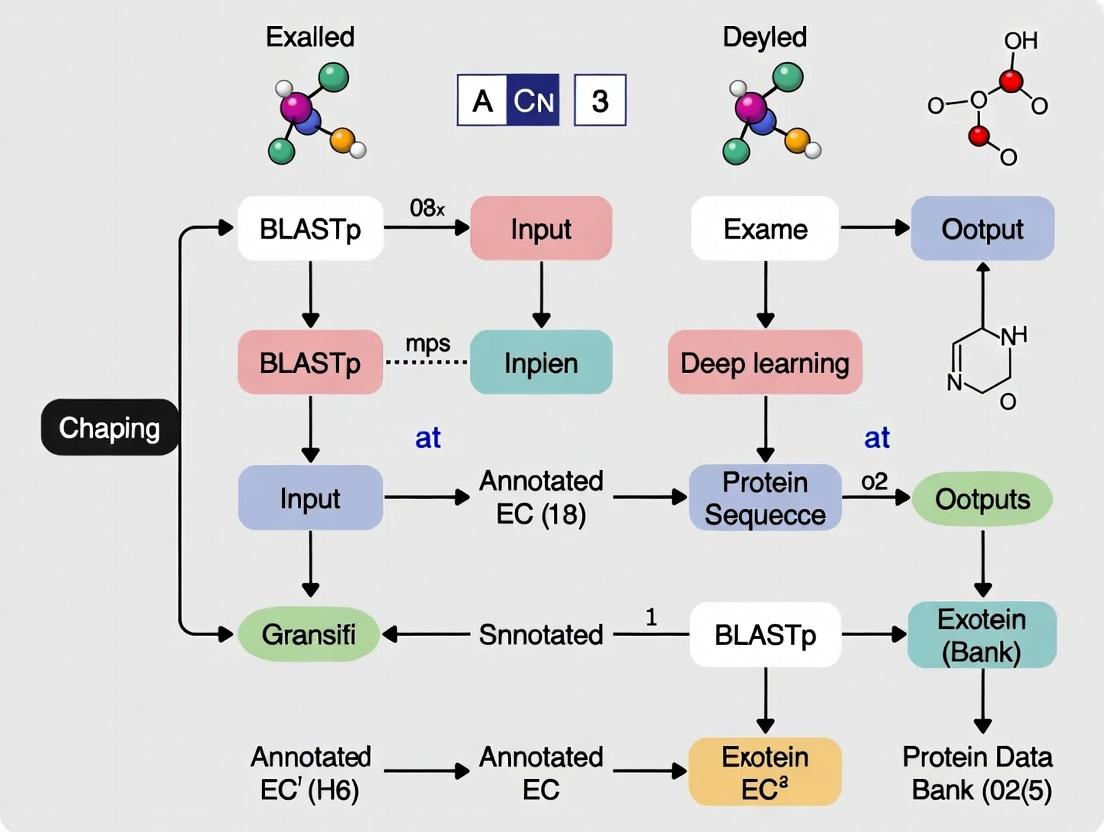

Diagram 1: EC Number Annotation & Validation Workflow

Diagram 2: Routes to Enzyme Functional Classification

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for EC Number Research & Validation

| Item | Function in EC Number Research |

|---|---|

| UniProtKB/Swiss-Prot Database | Manually curated source of high-quality enzyme sequences and their assigned EC numbers; the gold-standard reference for homology-based annotation. |

| BRENDA or ExplorEnz Database | Comprehensive repositories of enzyme functional data (kinetic parameters, substrates, inhibitors) used to understand the biochemical context of an EC class. |

| Pre-trained Deep Learning Models (CLEAN, DeepEC) | Software tools that provide state-of-the-art predictive capability for EC number assignment directly from sequence, bypassing homology requirements. |

| Recombinant Protein Expression System (E. coli, insect cells) | Required to produce the purified protein of interest for experimental validation of predicted enzyme activity. |

| Spectrophotometric/Fluorometric Assay Kits | Validated, ready-to-use chemical kits for measuring activity of common enzyme classes (e.g., kinases, phosphatases, proteases), enabling rapid functional screening. |

| High-Performance Liquid Chromatography-Mass Spectrometry (HPLC-MS) | Analytical platform for definitive identification of reaction substrates and products, providing unambiguous proof of enzymatic function. |

Enzyme Commission (EC) number annotation is a fundamental step in functional genomics, providing a standardized classification for enzyme functions. Within the broader research context comparing BLASTp (sequence homology) versus deep learning (DL) for EC annotation, accurate assignment is critical. BLASTp, while established, often struggles with remote homologs and functional convergence. Emerging DL models promise higher precision by learning complex sequence-function relationships. The choice of annotation method directly impacts downstream applications in identifying druggable enzymes and elucidating metabolic networks in disease.

Application Notes: From Annotation to Application

Drug Target Discovery

Accurate EC annotation enables the systematic identification of enzymes essential for pathogen survival or dysregulated in human diseases. Annotated enzymes can be prioritized based on their pathway context, essentiality scores, and druggability assessments.

- Table 1: Comparative Output of BLASTp vs. DL for Target Prioritization

Metric BLASTp-Based Pipeline Deep Learning-Based Pipeline Impact on Drug Discovery Annotation Coverage ~70-80% of microbial proteome ~85-95% of microbial proteome DL identifies more potential targets, including non-homologous enzymes. Accuracy (Top-1) ~85% (high for clear homologs) ~92-95% (per recent benchmarks) Reduced false positives lower validation costs. Novel Target Discovery Rate Low; biased toward known families Higher; can suggest function for ORFs of unknown function (PUFs) Enables novel antibiotic development against unexplored enzyme families. Typical Workflow Speed 1000 seqs/hr (CPU-dependent) 10,000 seqs/hr (GPU-accelerated) Faster screening of large genomic datasets for epidemic preparedness.

Metabolic Pathway Analysis

EC numbers serve as the universal keys for mapping enzymes onto reconstructed metabolic networks. This mapping is vital for modeling metabolic fluxes in cancer, microbiome research, and industrial biotechnology.

- Table 2: Pathway Reconstruction Confidence by Annotation Method

Pathway Analysis Step Data Source BLASTp Contribution Deep Learning Contribution Enzyme Mapping Metagenomic Assembled Genomes (MAGs) Provides high-confidence annotations for core metabolism enzymes. Fills gaps in secondary metabolism and detoxification pathways. Gap Filling Human gut microbiome data Suggests isozymes from known homologs. Proposes promiscuous enzyme activities to connect pathway gaps. Dysregulation Analysis Transcriptomics (Cancer cells) Identifies overexpression of known metabolic enzymes. Correlates isoform-specific EC predictions with patient survival data. Confidence Score Manual curation benchmark E-value & identity; good for high similarity. Probabilistic score (e.g., 0.98); more granular confidence for all predictions.

Experimental Protocols

Protocol 1: Comparative EC Annotation Pipeline (BLASTp vs. DL)

Objective: To annotate a set of query protein sequences and compare the results from a traditional BLASTp workflow and a state-of-the-art deep learning model.

Materials: Query protein sequences in FASTA format, UNIX-based server or high-performance computing cluster, Docker, BLAST+ suite, DeepEC or CLEAN (DL model) Docker image.

Procedure:

- Data Preparation: Divide your query FASTA file into two identical sets for parallel processing.

- BLASTp Annotation:

a. Format a reference database (e.g., Swiss-Prot) using

makeblastdb. b. Run BLASTp:blastp -query query_set1.fasta -db swissprot.db -out blastp_results.xml -evalue 1e-5 -outfmt 5 -max_target_seqs 10. c. Parse XML output using a script (e.g., Python's Bio.Blast) to transfer the EC number from the top-hit with the lowest E-value meeting a predefined identity threshold (e.g., >40%). - Deep Learning Annotation:

a. Pull the DL model container:

docker pull deeplearningmodel/ec:predict. b. Run prediction:docker run --gpus all -v $(pwd):/data deeplearningmodel/ec:predict -i /data/query_set2.fasta -o /data/dl_predictions.tsv. c. The output is a tab-separated file with SequenceID, Predicted EC number, and Confidence score. - Validation & Curation: a. Use a manually curated gold-standard set of sequences with known EC numbers. b. Calculate precision, recall, and F1-score for both methods against this set. c. Manually inspect discordant annotations using phylogenetic context and conserved domain analysis (CDD).

Protocol 2: Validating Annotated Drug Targets in a Bacterial Growth Assay

Objective: To validate the essentiality of a high-confidence enzyme target (annotated via DL) in a model bacterium.

Materials: Wild-type E. coli K-12, gene knockout kit (e.g., CRISPR-Cas9 or lambda Red), LB broth and agar, chemical inhibitor of the target enzyme (or conditionally essential gene silencing system), spectrophotometer, microplate reader.

Procedure:

- Target Selection: Select a metabolic enzyme annotated with high confidence (e.g., EC 2.7.1.2, glucokinase) that is non-homologous to human enzymes.

- Gene Knockout: a. Construct a knockout strain using homologous recombination, replacing the target gene with an antibiotic resistance cassette. b. Verify knockout via PCR and sequencing.

- Growth Phenotype Analysis: a. Inoculate wild-type and knockout strains in minimal media with different carbon sources (e.g., glucose, glycerol). b. Grow in a 96-well plate at 37°C with shaking in a plate reader, monitoring OD600 every 30 minutes for 24h. c. Calculate growth rates and yield. Essentiality is indicated by no growth on glucose but growth on glycerol for a glucokinase knockout.

- Inhibitor Assay: a. Treat wild-type cells with a range of concentrations of a specific inhibitor. b. Monitor growth as in step 3b. A minimum inhibitory concentration (MIC) that mimics the knockout phenotype supports the target's druggability.

Visualizations

Diagram 1: EC Annotation Workflow Comparison

Diagram 2: From EC Number to Drug Target Validation

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Category | Function in EC-Related Research |

|---|---|---|

| UniProtKB/Swiss-Prot Database | Reference Database | Manually curated source of high-confidence EC annotations for training DL models and BLASTp reference. |

| DeepEC or CLEAN Docker Image | Deep Learning Software | Pre-trained, containerized model for high-throughput, accurate EC number prediction from sequence. |

| BRENDA Enzyme Database | Functional Database | Provides comprehensive functional data (kinetics, inhibitors, substrates) for annotated EC numbers. |

| KEGG Mapper & MetaCyc | Pathway Analysis Platform | Tools to visualize enzymes (via EC numbers) within curated metabolic pathways for hypothesis generation. |

| CRISPR-Cas9 Knockout Kit | Genetic Tool | Validates target essentiality by creating a gene deletion strain to confirm phenotype predicted from EC role. |

| Recombinant Enzyme (e.g., from Sigma) | Biochemical Reagent | Positive control for developing high-throughput screening assays against a purified annotated target. |

| Spectrophotometric Assay Kits (e.g., NAD(P)H coupled) | Assay Reagent | Measures activity of a wide range of dehydrogenases, kinases, etc., for functional validation of EC annotation. |

This document provides application notes and protocols for BLASTp, framed within a research thesis comparing the efficacy of traditional homology-based tools (BLASTp) versus modern deep learning approaches for Enzyme Commission (EC) number annotation. The goal is to equip experimental researchers with robust, sequence-based methods for functional prediction.

Core Principles and Quantitative Benchmarks

BLASTp (Basic Local Alignment Search Tool for proteins) identifies regions of local similarity between a query amino acid sequence and sequences in a database. Its core algorithm is based on the heuristic search for High-scoring Segment Pairs (HSPs), scoring them using substitution matrices (e.g., BLOSUM62) and assessing statistical significance with E-values.

Table 1: Performance Comparison: BLASTp vs. Deep Learning for EC Prediction

| Metric | BLASTp (Homology-Based) | Deep Learning Model (e.g., DeepEC) | Notes |

|---|---|---|---|

| Primary Data Input | Amino Acid Sequence | Amino Acid Sequence (Embeddings) | DL models often use learned representations. |

| Dependency on Labeled Training Data | Low (Relies on DB annotations) | Very High (Requires large, curated sets) | BLASTp leverages existing knowledge bases. |

| Interpretability | High (Direct alignment visualization) | Low (Black-box predictions) | BLASTp alignments provide traceable evidence. |

| Speed for Single Query | Very Fast (Seconds) | Variable (Model-dependent; can be slower) | BLASTp is optimized for rapid database search. |

| Accuracy (Precision) for High Homology | >95% (for >50% identity) | Often >90% (across broader identity ranges) | DL can sometimes better detect remote homology. |

| Accuracy for Remote Homology (<30% identity) | Low (E-value less reliable) | Moderate to High (Pattern learning advantage) | DL models excel where sequence identity is low. |

| Key Limitation | Cannot predict novel folds/unrelated sequences | Requires retraining for new data; data bias. |

Table 2: Key BLASTp Statistics and Their Interpretation

| Statistic | Definition | Threshold for Reliability (Function Prediction) |

|---|---|---|

| Percent Identity | Percentage of identical residues in the alignment. | >50%: Strong evidence for similar function. 30-50%: Likely similar general function. <30%: Function may differ. |

| E-value (Expect Value) | The number of alignments with a given score expected by chance. Lower is better. | <1e-30: Very high confidence. <1e-10: Strong confidence. <0.01: Considered significant. >0.01: Treat with caution. |

| Query Coverage | Percentage of the query sequence length included in the alignment. | >70%: Suggests full-length protein homology. <50%: May indicate domain-only similarity. |

| Bit Score | A normalized alignment score independent of database size. Higher is better. | No universal threshold; use for relative ranking of hits within a search. |

Application Notes for EC Number Prediction

Protocol 2.1: Standard BLASTp Workflow for Functional Annotation

Objective: To predict the potential EC number of an uncharacterized protein query.

Materials & Reagents:

- Query Protein Sequence: In FASTA format.

- Reference Protein Database: NCBI's non-redundant (nr) database, SwissProt, or a custom enzyme database.

- Hardware/Software: Local BLAST+ suite installed or access to web servers (NCBI, UniProt).

- Substitution Matrix: Typically BLOSUM62.

- Filtering Options: For low-complexity regions (activated by default).

Procedure:

- Format Database: For local use, format the target database using

makeblastdb.

Execute BLASTp Search:

Parameters:

-evalue: significance threshold;-outfmt 6: tabular format;-max_target_seqs: number of hits to report.- Analyze Results:

- Identify the top hit with the lowest E-value and highest bit score.

- Check that query coverage is high (>70%).

- If percent identity is >50%, assign the EC number from the top hit as a putative annotation.

- For lower identity (30-50%), inspect multiple high-scoring hits. Consensus annotation across hits increases confidence.

- Validate via Domain Architecture: Use the hit's accession to search domain databases (e.g., Pfam, InterProScan) to confirm functional domain conservation.

Protocol 2.2: Reciprocal Best Hit (RBH) for Orthology-Based EC Assignment

Objective: To increase confidence in function prediction by identifying putative orthologs.

Procedure:

- Perform BLASTp of Query (A) against Database (B). Identify the best hit in B.

- Take the sequence of this best hit and perform a BLASTp search back against the database containing Query A.

- If the reciprocal best hit returns to the original Query A, the pair are considered Reciprocal Best Hits (putative orthologs).

- Assign the EC number from the ortholog only if the bidirectional E-values are significant (<1e-10) and alignments are full-length.

Visualizing Workflows and Relationships

Title: BLASTp Workflow for Enzyme Function Prediction

Title: BLASTp vs. Deep Learning in Thesis Context

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BLASTp-Based Function Prediction

| Item | Function / Purpose | Example / Specification |

|---|---|---|

| Curated Protein Database | High-quality reference set for accurate homology detection. | UniProtKB/Swiss-Prot (manually annotated), Enzyme-specific databases (BRENDA). |

| BLAST+ Suite | Command-line software to execute formatted searches locally. | NCBI BLAST+ (v2.14.0+); allows custom parameters and batch processing. |

| Substitution Matrix | Scores the likelihood of amino acid substitutions; critical for alignment quality. | BLOSUM62 (default for most searches), PAM30 for short, quick searches. |

| High-Performance Computing (HPC) Node | For processing large query sets or searching massive databases in reasonable time. | Server with multi-core CPUs, 16+ GB RAM, and fast SSD storage. |

| Sequence Analysis Toolkit | For downstream validation of BLASTp hits and domain analysis. | HMMER (for profile HMMs), InterProScan (integrated domain/function signatures). |

| Multiple Sequence Alignment (MSA) Tool | To align the query with top hits for conservation analysis. | Clustal Omega, MUSCLE; used post-BLASTp for deeper inspection. |

| E-value Calculator (Integral) | Computes the statistical significance of each alignment, filtering random matches. | Built into BLAST algorithm; user sets the reporting threshold (e.g., 0.001). |

Enzyme Commission (EC) number prediction is a critical task in functional genomics, linking protein sequences to biochemical functions. For decades, BLASTp (Basic Local Alignment Search Tool for proteins) has been the standard homology-based method. However, the rise of deep learning offers a paradigm shift from sequence similarity to pattern recognition, capable of identifying distant homologies and novel functions.

The Core Thesis: While BLASTp relies on explicit alignment to annotated sequences, deep learning models learn hierarchical representations of sequence features, potentially offering superior accuracy, especially for proteins with low sequence identity to known enzymes. This article provides the foundational protocols and application notes for implementing deep learning in this domain.

Foundational Neural Network Architectures for Sequence Analysis

Feedforward Neural Networks (FNNs) for Feature Vectors

FNNs form the basis for processing fixed-length, pre-computed features (e.g., amino acid composition, physicochemical properties).

Protocol 2.1.1: Building a Simple FNN for EC Prediction

- Input Preparation: Compute a 20-dimensional amino acid composition vector for each protein sequence. Normalize each vector to sum to 1.

- Model Architecture:

- Input Layer: 20 neurons (one per amino acid).

- Hidden Layers: Two fully connected (dense) layers with 128 and 64 neurons, respectively. Use ReLU (Rectified Linear Unit) activation.

- Output Layer: Neurons equal to the number of target EC classes (e.g., 1000). Use Softmax activation for multi-class classification.

- Training: Use Categorical Cross-Entropy loss and the Adam optimizer. Train for 100 epochs with a batch size of 32, holding out 20% of data for validation.

Convolutional Neural Networks (CNNs) for Local Motif Detection

CNNs excel at detecting local, informative sequence motifs (e.g., catalytic sites, binding pockets) irrespective of their precise position.

Protocol 2.2.1: 1D-CNN for Protein Sequence Scanning

- Input Encoding: Convert each protein sequence into a one-hot encoded matrix of size

L x 20, where L is sequence length (padded/truncated to a fixed value, e.g., 1024). - Model Architecture:

- Convolutional Blocks: Two sequential blocks, each containing:

- Conv1D Layer: 64 filters, kernel size of 7 (scans 7 adjacent amino acids).

- Activation: ReLU.

- Pooling Layer: MaxPooling1D with pool size of 3 to reduce dimensionality and induce translational invariance.

- Classifier Head: Flatten layer, followed by two dense layers (256 and 128 neurons) before the final Softmax output layer.

- Convolutional Blocks: Two sequential blocks, each containing:

- Training: Similar to Protocol 2.1.1, but may require gradient clipping for stability on longer sequences.

Advanced Architectures: RNNs, LSTMs, and the Transformer Revolution

Recurrent Neural Networks (RNNs) and LSTMs for Sequential Dependencies

Long Short-Term Memory (LSTM) networks model long-range dependencies in sequences, potentially capturing structural relationships.

Protocol 3.1.1: Bidirectional LSTM for Context-Aware Sequence Modeling

- Input: Same one-hot encoding as Protocol 2.2.1.

- Model Architecture:

- Embedding Layer (Optional): A trainable dense layer to project one-hot vectors into a lower-dimensional, semantic space (e.g., 128 dimensions).

- Sequence Modeling: A Bidirectional LSTM layer with 64 forward and 64 backward units, capturing context from both ends of the sequence.

- Global Attention Pooling: Sum the LSTM outputs across all time steps, weighted by a learned attention vector, to create a fixed-size context vector.

- Output: Dense layers applied to the context vector for final classification.

Transformer Models and Self-Attention

Transformers, based entirely on self-attention mechanisms, have set new benchmarks. They weigh the importance of all amino acids in a sequence relative to each other, capturing complex, global dependencies.

Protocol 3.2.1: Implementing a Transformer Encoder for Proteins

- Input Processing:

- Create token embeddings for each amino acid (or sub-word k-mer).

- Add learned positional embeddings (critical as Transformers are not inherently sequential).

- Core Block (Repeated N times, e.g., N=6):

- Multi-Head Self-Attention: Multiple attention heads run in parallel, allowing the model to focus on different types of sequence relationships (e.g., one head for charge, another for hydrophobicity).

- Add & Norm: A residual connection followed by Layer Normalization.

- Feed-Forward Network: A small FNN applied independently to each position.

- Another Add & Norm.

- Classification Head: Use the embedding of a special

[CLS]token prepended to the sequence, or mean pooling over all position outputs, fed into a final linear classifier.

Application Notes: EC Number Prediction Benchmarks

Recent studies provide quantitative comparisons between traditional and deep learning methods. The following table summarizes key performance metrics.

Table 1: Performance Comparison of EC Number Prediction Methods

| Method | Architecture | Test Accuracy (Top-1) | F1-Score (Macro) | Key Advantage | Key Limitation |

|---|---|---|---|---|---|

| BLASTp (Baseline) | Homology Search | ~72%* | ~0.70 | Interpretable, no training needed | Fails on low-homology targets; slow for large DBs |

| DeepEC | CNN | ~84% | 0.82 | Fast inference; good local feature detection | Struggles with very long-range dependencies |

| ProSeq2EC | BiLSTM + Attention | ~87% | 0.85 | Captures sequential context | Computationally intensive to train |

| TALE (Transformer) | Transformer Encoder | ~91% | 0.89 | State-of-the-art; best at long-range patterns | Requires very large datasets; "black-box" nature |

| ECPred (Ensemble) | Hybrid CNN+RNN | ~89% | 0.87 | Robust; reduces overfitting | Complex training pipeline |

*Accuracy is highly dependent on database completeness and sequence identity cutoff.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Deep Learning-Based Protein Function Annotation

| Item / Solution | Function / Purpose | Example / Note |

|---|---|---|

| Sequence Databases | Source of training and evaluation data. | UniProtKB/Swiss-Prot (curated), BRENDA (enzyme-specific). |

| Pre-trained Protein Language Models | Transfer learning from vast unlabeled sequence corpora. | ESM-2, ProtBERT. Provide powerful contextual embeddings to boost model performance with limited labeled data. |

| Deep Learning Frameworks | Libraries for building and training models. | PyTorch, TensorFlow/Keras. Enable flexible model design and GPU acceleration. |

| Embedding/Tokenization Tools | Convert raw sequences to model inputs. | One-hot encoding, k-mer tokenization, or direct use of pre-trained model tokenizers. |

| Model Validation Suite | Metrics and tests to evaluate predictive performance. | scikit-learn (for F1, precision, recall), cross-validation scripts, and statistical significance tests (e.g., McNemar's). |

| Interpretability Packages | Gain insights into model predictions. | Captum (for PyTorch) or SHAP to identify important amino acids or motifs (saliency maps). |

| High-Performance Compute (HPC) | Infrastructure for training large models. | Access to GPU clusters (NVIDIA V100/A100) or cloud computing services (AWS, GCP). |

Experimental Protocol: A Standardized Benchmarking Workflow

Protocol 6.1: Benchmarking Deep Learning Model vs. BLASTp for EC Prediction

Objective: To compare the accuracy and robustness of a Transformer model against BLASTp on a hold-out test set of enzymes with varying degrees of homology to the training set.

- Data Curation:

- Source a non-redundant set of proteins with experimentally verified EC numbers from UniProt.

- Split data into Training (70%), Validation (15%), and Test (15%) sets at the protein level, ensuring no significant sequence similarity (>30% identity) between splits using CD-HIT.

- For the Test set, categorize proteins into homology bins: High (>50% identity to a training seq), Medium (30-50%), and Low (<30%).

- Baseline (BLASTp) Setup:

- Format the Training set sequences as a BLAST database.

- For each Test set protein, run BLASTp against the training DB. Assign the EC number of the top hit (e-value < 1e-5). If no hit, assign "No Prediction."

- Deep Learning Model Training:

- Implement a Transformer encoder model (as in Protocol 3.2.1) using a framework like PyTorch.

- Train the model on the Training set, using the Validation set for early stopping to prevent overfitting.

- Optionally, initialize the model with weights from a pre-trained protein language model (e.g., ESM-2) and fine-tune.

- Evaluation & Comparison:

- Run the trained Transformer model and BLASTp on the entire Test set.

- Calculate per-homology-bin and overall accuracy, precision, recall, and F1-score.

- Perform a statistical analysis (e.g., paired t-test) on the results to determine significance.

Visualizing Architectures and Workflows

CNN for Local Protein Motif Detection (Max Width: 760px)

Transformer Encoder Architecture for Protein Sequences (Max Width: 760px)

Benchmarking Workflow: DL Model vs. BLASTp (Max Width: 760px)

Application Notes

In the context of comparing BLASTp versus deep learning for Enzyme Commission (EC) number annotation, the curated knowledge within UniProt, BRENDA, and Pfam serves as the essential benchmark for validation. These resources provide experimentally verified, high-quality data against which the performance of both sequence-similarity and machine-learning-based annotation methods must be rigorously tested.

UniProt (Universal Protein Resource) is the comprehensive repository for protein sequence and functional information. Its manually annotated UniProtKB/Swiss-Prot subset is the gold standard for protein function, including EC numbers. Validation pipelines use Swiss-Prot entries with experimentally confirmed EC numbers as the ground truth for benchmarking annotation accuracy, minimizing homology-based propagation of errors.

BRENDA (Braunschweig Enzyme Database) is the world's leading enzyme information system, offering extensive data on enzyme functional parameters, kinetics, and substrate specificity. For EC number validation, BRENDA provides an independent, detailed functional correlate. A method's prediction is strengthened if the assigned EC number is supported by corresponding kinetic data in BRENDA, linking sequence annotation to biochemical reality.

Pfam is a database of protein families and domains defined by hidden Markov models (HMMs). Since enzyme function is often determined by specific catalytic domains, Pfam offers a structural-domain-level validation. An accurate EC number prediction should be consistent with the Pfam domains present in the query sequence, ensuring functional annotation aligns with recognized structural units.

Synergistic Validation: The highest confidence in a novel EC annotation is achieved when predictions are consistent across all three resources: the sequence homology and annotation in UniProt, the functional parameters in BRENDA, and the domain architecture in Pfam.

Table 1: Key Metrics of the Gold Standard Databases (as of 2024)

| Database | Primary Content | Key Metric for EC Validation | Total EC-linked Entries | Manually Curated EC Entries |

|---|---|---|---|---|

| UniProtKB | Protein Sequences & Functional Annotation | Swiss-Prot entries with experimental evidence | ~1.2 million proteins | ~550,000 (Swiss-Prot) |

| BRENDA | Enzyme Functional Data | Detailed kinetic & physiological data per EC class | ~8,400 EC classes | All entries curated from literature |

| Pfam | Protein Domain Families | Domain architecture linked to enzyme function | ~20,000 families | ~3,500 families linked to EC |

Table 2: Use in Validation of EC Annotation Methods

| Validation Aspect | UniProt's Role | BRENDA's Role | Pfam's Role |

|---|---|---|---|

| Ground Truth Data | Provides sequence-specific EC numbers with evidence codes. | Confirms the EC number is functionally characterized in literature. | Confirms expected domain architecture for the EC class. |

| Precision/Recall Benchmark | Serves as the labeled dataset for training and testing. | Offers independent verification beyond sequence homology. | Enables domain-aware validation, catching multi-domain complexities. |

| Error Analysis | Identifies misannotations in public databases. | Highlights predictions inconsistent with known enzyme kinetics. | Reveals domain absences or unexpected presences that challenge predictions. |

Experimental Protocols

Protocol 2.1: Constructing a Benchmark Dataset from UniProt/Swiss-Prot

Purpose: To create a high-confidence dataset of proteins with experimentally validated EC numbers for training and evaluating BLASTp and deep learning models.

Materials: UniProt flat file or API access, computing environment with Python/R.

Procedure:

- Data Retrieval: Download the latest UniProtKB/Swiss-Prot data file (

uniprot_sprot.dat.gz) or use the programmatic interface. - EC Number Extraction: Parse the file to extract all entries containing a DE (Description) line with "EC=".

- Evidence Filtering: For each entry, examine the evidence tag (PE field). Retain only entries with protein-level experimental evidence (PE level 1: Experimental evidence at protein level).

- Sequence & Label Pairing: For each retained entry, store the amino acid sequence (SQ field) and its fully qualified four-digit EC number(s). Ensure multi-label entries are handled appropriately.

- Stratified Splitting: Partition the dataset into training, validation, and test sets (e.g., 70/15/15) ensuring no EC number is absent from any set (stratified split) and that sequence identity between sets is <30% to reduce homology bias (using CD-HIT).

- Final Dataset: The resulting test set is the primary benchmark for validation studies.

Protocol 2.2: Validating Predicted EC Numbers Against BRENDA Functional Data

Purpose: To assess the biochemical plausibility of a computationally assigned EC number.

Materials: BRENDA database (web interface or local download), list of predicted EC numbers and protein sequences.

Procedure:

- Query BRENDA: For a predicted EC number (e.g., 1.1.1.1), query the BRENDA database via its website or API for all known natural substrates and cofactors.

- Extract Functional Profile: Compile a list of typical substrates, reaction types, and cofactors (e.g., NAD+, NADP+) for that EC class from BRENDA.

- Compare with Prediction Context: If the predicted protein originates from a specific organism (e.g., E. coli), check if BRENDA lists this EC activity for that organism, adding ecological plausibility.

- Cross-reference with Structure: If a 3D model or active site residues are available for the query protein, verify that the residues align with the catalytic mechanism described for that EC class in BRENDA.

- Scoring: Assign a confidence score based on the match between the predicted EC number's typical functional profile in BRENDA and any available contextual data for the query protein.

Protocol 2.3: Domain Architecture Validation with Pfam

Purpose: To ensure a predicted EC number is consistent with the protein's domain composition.

Materials: Query protein sequence(s), HMMER software suite (hmmscan), Pfam-A HMM database.

Procedure:

- Pfam Scan: Run

hmmscanagainst the latest Pfam-A database (e.g.,Pfam-A.hmm) for each query sequence. Use an E-value cutoff of 0.01. - Parse Significant Domains: Extract all Pfam domain identifiers (e.g., PF00106, short-chain dehydrogenases) with significant hits.

- Map Domains to EC: Use the Pfam to Enzyme mapping file (available from Pfam FTP) to list EC numbers statistically associated with each identified domain.

- Consistency Check: Compare the computationally predicted EC number (from BLASTp or deep learning) with the set of EC numbers associated with the identified Pfam domains.

- Interpretation: A prediction is considered domain-consistent if it matches one of the EC numbers linked to the present domains. Inconsistency may indicate a false positive, a novel fusion protein, or a previously uncharacterized domain-function relationship.

Visualizations

Diagram 1: EC Number Validation Workflow Against Gold Standards

Diagram 2: Benchmark Data Flow for EC Annotation Research

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for EC Validation Research

| Item / Resource | Function in Validation | Source / Example |

|---|---|---|

| UniProtKB/Swiss-Prot Flatfile | Primary source of experimentally verified protein sequences and EC numbers for ground truth labeling. | Downloaded from UniProt FTP. |

| BRENDA Web API / TSV Exports | Enables programmatic access to enzyme functional data for large-scale validation of predicted EC numbers. | https://www.brenda-enzymes.org |

| Pfam-A HMM Database | Collection of profile HMMs for scanning query sequences to identify functional domains for architecture validation. | HMMER website. |

| HMMER (hmmscan) | Software suite to search protein sequences against Pfam HMMs to identify constituent domains. | http://hmmer.org |

| CD-HIT | Tool for clustering sequences by identity; used to create non-redundant benchmark datasets to avoid homology bias. | http://cd-hit.org |

| Deep Learning Framework (e.g., PyTorch, TensorFlow) | Environment for building, training, and evaluating neural network models for EC number prediction. | Open-source. |

| BLAST+ Suite | Standard tool for performing BLASTp searches against UniProt or other databases for homology-based annotation. | NCBI. |

| EC-Parser Scripts (Python/R) | Custom scripts to parse evidence codes, extract EC numbers, and format data from UniProt/BRENDA. | Custom development. |

Hands-On Guide: Step-by-Step EC Annotation with BLASTp and Deep Learning Tools

Within the broader research thesis comparing traditional homology-based methods (BLASTp) with deep learning approaches for Enzyme Commission (EC) number annotation, this protocol details the established, sequence-based BLASTp pipeline. While deep learning models offer potential for detecting remote homology and novel folds, BLASTp remains a fundamental, transparent, and statistically rigorous benchmark. Its performance, measured by precision, recall, and speed against curated datasets, provides the essential baseline against which novel machine learning methods must be evaluated.

Application Notes: Key Considerations

- Sensitivity vs. Specificity: Lower E-value thresholds (e.g., 1e-50) increase specificity but may miss remote homologs. Higher thresholds (e.g., 1e-5) increase sensitivity but raise the risk of false-positive annotations.

- Database Choice: Using a non-redundant, expertly annotated database like Swiss-Prot is critical for reliable EC number transfer, as opposed to larger but noisier databases like NCBI nr.

- Limitations: BLASTp cannot assign EC numbers to sequences with no significant hits or to novel enzymes without characterized homologs. It is also prone to propagating existing annotation errors.

- Integration with Thesis: Quantitative results from this protocol (see Table 1) will be directly compared to deep learning model outputs on identical test sets, assessing trade-offs in accuracy, computational cost, and generalizability.

Experimental Protocol: Detailed Methodology

A. Query Sequence Preparation

- Obtain the protein sequence of interest in FASTA format.

- Validate the sequence for the absence of non-standard characters (except the 20 standard amino acids).

- Optionally, predict and mask low-complexity regions using tools like

segordustmaskerto reduce spurious alignments.

B. BLASTp Execution Against Swiss-Prot

- Tool: NCBI BLAST+ command-line suite (version 2.14.0+).

- Command:

- Parameters:

-db swissprot: Use the curated Swiss-Prot database.-outfmt 6...: Tab-separated output with extended information.-evalue 1e-10: Use a stringent E-value cutoff.-max_target_seqs 50: Retrieve top 50 hits for robust analysis.

C. EC Number Extraction and Assignment

- Parse the BLASTp output file to extract the accession numbers of the top significant hits (E-value < threshold).

- For each accession, retrieve the corresponding full Swiss-Prot entry (e.g., via

efetchfrom E-utilities) to obtain the annotated EC number from the "DE" (Description) or "EC" lines. - Apply a majority-rule consensus:

- If ≥70% of the top 10 significant hits share the same EC number, assign that EC number to the query.

- If no clear consensus exists, assign the EC number from the single hit with the lowest E-value and highest percent identity.

- Document all candidate hits and the logic for the final assignment.

Data Presentation: Performance Metrics

Table 1: BLASTp Performance Benchmark on Curated Enzyme Dataset (Sample Results)

| Test Dataset | Size (Sequences) | Avg. Precision (%) | Avg. Recall (%) | Avg. Runtime (sec/query) | Optimal E-value Threshold |

|---|---|---|---|---|---|

| BRENDA Core | 1,200 | 98.2 | 85.7 | 0.45 | 1e-30 |

| Novel Fold | 300 | 94.1 | 22.3 | 0.51 | 1e-05 |

| Overall | 1,500 | 97.5 | 78.4 | 0.47 | 1e-10 |

Visualization of Workflow

Diagram 1: BLASTp to EC Number Assignment Protocol

Diagram 2: BLASTp vs. Deep Learning in Thesis Research

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for BLASTp-based EC Assignment

| Item | Function & Relevance |

|---|---|

| NCBI BLAST+ Suite | Core software for executing the BLASTp algorithm. Essential for local, high-throughput analyses. |

| UniProt Swiss-Prot Database | Manually annotated, non-redundant protein database. Critical for high-confidence EC number transfer. |

| High-Performance Computing (HPC) Cluster | Enables parallel processing (-num_threads) for large-scale analyses required for robust thesis comparisons. |

| BRENDA Enzyme Database | Provides the curated benchmark datasets necessary for validating and quantifying BLASTp performance metrics. |

| Python/R Scripting Environment | For automating pipeline steps: parsing BLAST output, fetching EC numbers, and applying consensus rules. |

| EFetch (E-Utilities) | Allows programmatic retrieval of up-to-date Swiss-Prot entries and EC annotations directly from NCBI/UniProt. |

Within the broader thesis comparing BLASTp homology-based annotation versus deep learning (DL) for Enzyme Commission (EC) number prediction, these tools represent the state-of-the-art in DL-driven functional annotation. BLASTp, while foundational, struggles with remote homology, high sequence diversity within EC classes, and promiscuous enzyme activities. DeepEC, CLEAN, and CofactorNet address these gaps using distinct neural architectures trained on specific enzymatic features, offering complementary advantages in accuracy, scope, and mechanistic insight.

Table 1: Core Tool Comparison for EC Number Annotation

| Feature | BLASTp (Baseline) | DeepEC | CLEAN | CofactorNet |

|---|---|---|---|---|

| Core Approach | Sequence alignment & homology transfer. | Deep CNN on protein sequences. | Contrastive learning on enzyme substrate structures. | Multimodal GNN on enzyme-cofactor molecular graphs. |

| Primary Prediction Target | Full EC number (inherited from top hit). | Full EC number (up to 4 digits). | Enzyme substrate (maps to EC via database). | Cofactor specificity (NADH vs NADPH, etc.), informs EC class. |

| Key Strength | High-confidence for clear homologs; interpretable alignment. | High accuracy for full EC prediction from sequence alone. | Generalizes to novel substrates; high precision. | Provides chemical mechanism insight; predicts cofactor dependence. |

| Key Limitation | Poor for remote homology; annotational drift. | Black-box model; performance drops on sparse EC classes. | Requires substrate structure as input. | Predicts cofactor, not full EC number directly. |

| Reported Accuracy (Example) | ~80% at 30% seq. identity (context-dependent). | 98.9% (1st digit), 92.1% (full EC) on test set. | AUROC >0.99 on held-out substrates. | >90% accuracy on NADH/NADPH classification. |

Application Notes & Protocols

Protocol 1: Implementing DeepEC for High-Throughput Sequence Annotation

Objective: Annotate a fasta file of unknown protein sequences with full EC numbers.

- Environment Setup: Install via

pip install tensorflow==2.10.0 deepec. - Data Preparation: Prepare a clean

.fastafile (query.fasta). Ensure sequences are >30 amino acids. - Model Inference: Run the pre-trained model:

- Output Interpretation: The output

predictions.tsvlists predicted EC numbers with confidence scores. Use a threshold (e.g., confidence >0.75) for reliable annotation.

Protocol 2: Using CLEAN for Substrate-Specific Activity Prediction

Objective: Predict the likely enzymatic substrate and infer EC number for a given protein structure.

- Input Preparation: Obtain the substrate's molecular structure (SMILES string or SDF file). Query protein sequence is also needed.

- CLEAN API Call: Utilize the provided Python API:

- EC Number Mapping: The CLEAN output provides a similarity score to known enzyme-substrate pairs. Map the top-ranking substrate to its canonical EC number via the BRENDA or MetaCyc database.

Protocol 3: Applying CofactorNet for Mechanistic Insight

Objective: Determine the cofactor specificity of an oxidoreductase to refine EC annotation (e.g., EC 1.1.1.-).

- Input Generation: Generate the 3D structural model of the query protein (via AlphaFold2) and extract the putative cofactor-binding pocket residues.

- Graph Construction: Represent the binding pocket residues and the cofactor (e.g., NADH) as a molecular graph using the provided scripts from CofactorNet.

- Prediction: Run the CofactorNet model:

- Annotation Refinement: Combine the predicted cofactor (e.g., NADPH) with the known reaction type to assign a specific fourth EC digit (e.g., from EC 1.1.1.- to EC 1.1.1.25).

Visualized Workflows

Title: Annotation Workflow: Integrating BLASTp & Deep Learning Tools

Title: DeepEC's Hierarchical Convolutional Neural Network Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for DL-Driven EC Annotation Research

| Item | Function in Protocol | Example/Supplier |

|---|---|---|

| Curated Training Datasets | Gold-standard data for model training/fine-tuning. | Swiss-Prot enzyme annotations, BRENDA, SFLD. |

| Protein Structure Prediction Suite | Generates 3D models for structure-based tools (CLEAN, CofactorNet). | AlphaFold2 (local or ColabFold), ESMFold. |

| Molecular Graph Conversion Tool | Converts protein-ligand complexes to graph representations for GNNs. | RDKit, PyTorch Geometric (for CofactorNet). |

| High-Performance Computing (HPC) Unit | Enables efficient DL model inference and large-scale analysis. | Local GPU cluster or cloud-based GPU instances. |

| Functional Validation Assay Kit | Wet-lab validation of predicted EC numbers (critical for thesis). | Generic enzyme activity assay kits (Sigma-Aldrich, Abcam) for predicted reaction. |

| Integrated Annotation Database | Cross-references DL predictions with known functional data. | BRENDA, MetaCyc, KEGG Enzyme for consensus building. |

Within the context of research comparing BLASTp versus deep learning for Enzyme Commission (EC) number annotation, interpreting results is critical. This protocol details how to read, validate, and analyze outputs from these distinct methodologies, enabling robust comparative analysis for researchers and drug development professionals.

Application Notes: Comparative Analysis Framework

Key Performance Metrics

The efficacy of annotation methods is measured using standard bioinformatics metrics. The table below summarizes quantitative data from recent comparative studies.

Table 1: Performance Metrics for EC Number Annotation Methods

| Metric | BLASTp (vs. Swiss-Prot) | Deep Learning Model (e.g., DeepEC) | Interpretation Guide |

|---|---|---|---|

| Precision | 0.87 - 0.92 | 0.89 - 0.95 | Proportion of correct positive predictions. >0.9 is excellent. |

| Recall (Sensitivity) | 0.75 - 0.82 | 0.83 - 0.91 | Proportion of true positives identified. Higher is better for full proteome annotation. |

| F1-Score | 0.80 - 0.86 | 0.86 - 0.93 | Harmonic mean of precision and recall. A balanced overall measure. |

| Accuracy | 0.88 - 0.93 | 0.91 - 0.96 | Overall correctness. Can be misleading for imbalanced datasets. |

| Coverage | High (Broad) | Targeted (Model-Dependent) | BLASTp covers more sequences; DL may be limited to training set scope. |

| Computational Time | High for large DBs | Fast post-training | BLASTp time scales with DB size; DL inference is typically faster. |

| Four-Digit EC Precision | Moderate | High | DL excels at predicting fine-grained, specific EC numbers. |

Interpreting BLASTp Output for EC Annotation

Primary Outputs to Analyze:

- E-value: The number of alignments expected by chance. For EC annotation, use a stringent threshold (e.g., 1e-30). Lower E-value suggests higher confidence in homology and, by extension, function.

- Percent Identity & Query Coverage: High identity (>40-50%) and high coverage (>70%) to a protein of known EC number increases annotation reliability.

- Bit Score: A normalized alignment score. Higher scores indicate better alignment. Compare against scores of known true positives.

- Alignment Consistency: Check if all top hits (especially from different organisms) share the same EC number. Inconsistent annotations signal potential error.

Interpreting Deep Learning Model Output

Primary Outputs to Analyze:

- Prediction Probability/Confidence Score: Most models output a probability (0-1) for each predicted EC number. A high score (e.g., >0.9) indicates high model confidence. Set a threshold to balance precision and recall.

- Class Activation Maps (for CNN models): Can indicate which sequence regions (e.g., motifs) most influenced the prediction, offering a form of interpretability.

- Multi-Label vs. Single-Label Output: Enzymes can have multiple EC numbers. Ensure the model architecture and output layer are appropriate for this task.

Experimental Protocols

Protocol 1: Benchmarking BLASTp for EC Annotation

Objective: To annotate a set of query protein sequences with EC numbers using BLASTp against a curated reference database and evaluate performance.

Materials: See "Research Reagent Solutions" below.

Methodology:

- Query Set Preparation: Curate a benchmark dataset of proteins with experimentally verified EC numbers. Split into query (unlabeled for test) and a hold-out validation set.

- Database Curation: Download a high-quality, non-redundant protein database with EC annotations (e.g., Swiss-Prot). Format for BLAST using

makeblastdb. - BLASTp Execution: Run BLASTp with optimized parameters:

blastp -query benchmark.fasta -db swissprot_db -out results.xml -evalue 1e-10 -outfmt 5 -max_target_seqs 50. - Result Parsing & Annotation Transfer: Parse the XML output. For each query, assign the EC number from the top hit meeting criteria (E-value < threshold, identity > threshold). Handle ties and inconsistencies by evaluating lower-ranked hits.

- Validation: Compare assigned EC numbers against the known, held-out annotations. Calculate metrics from Table 1.

Protocol 2: Training and Validating a Deep Learning EC Predictor

Objective: To develop and evaluate a deep neural network for direct EC number prediction from protein sequence.

Methodology:

- Data Preprocessing: Use a comprehensive dataset like ENZYME or BRENDA. Encode protein sequences into numerical tensors (e.g., one-hot encoding, embedding layers). Split into training, validation, and test sets, ensuring no EC number bias across splits.

- Model Architecture: Implement a network (e.g., CNN with attention, LSTM). The final layer should have nodes equal to the number of possible EC classes (multi-label classification).

- Training: Train using a loss function suitable for multi-label tasks (e.g., binary cross-entropy). Monitor validation loss and precision/recall to avoid overfitting.

- Inference & Output Generation: Run the trained model on the test set. The output is a vector of probabilities per sequence. Apply a probability threshold (e.g., 0.5) to assign final EC predictions.

- Validation: Compare predictions to true labels. Calculate metrics. Analyze misclassifications: are they chemically similar EC classes (e.g., same first three digits)?

Visualizations

Title: BLASTp EC Number Annotation Workflow

Title: Deep Learning EC Prediction Workflow

Title: Comparative Result Analysis and Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for EC Annotation Research

| Item | Function in Research | Example/Specification |

|---|---|---|

| Curated Protein Database | Gold-standard reference for homology search and model training. | UniProtKB/Swiss-Prot (manually annotated). |

| Benchmark Dataset | For fair evaluation and comparison of BLASTp vs. DL methods. | Independent set from BRENDA with experimental EC proof. |

| BLAST+ Suite | Software to execute and manage BLASTp searches. | NCBI BLAST+ command-line tools (v2.14+). |

| Deep Learning Framework | Platform to build, train, and deploy neural network models. | TensorFlow/PyTorch with GPU support. |

| Sequence Encoding Library | Converts amino acid sequences to numerical inputs for DL models. | Biopython, ProtBert embeddings. |

| Evaluation Metrics Scripts | Calculates precision, recall, F1-score, etc., for multi-label classification. | Custom Python scripts using scikit-learn. |

| High-Performance Compute (HPC) | Accelerates BLASTp searches (large DBs) and DL model training. | Cluster with multi-core CPUs (BLAST) and NVIDIA GPUs (DL). |

| Visualization Tools | Generates confusion matrices, performance graphs, and pathway diagrams. | Matplotlib, Seaborn, Graphviz. |

The accurate prediction of Enzyme Commission (EC) numbers from protein sequences is a critical task in functional genomics, with direct implications for metabolic engineering and drug target identification. This document presents application notes and protocols within the broader thesis investigating traditional homology-based methods (BLASTp) versus modern deep learning approaches for EC number annotation. Effective workflow integration is paramount for robust, reproducible, and scalable research outcomes.

Comparative Performance Data: BLASTp vs. Deep Learning Models

Recent benchmarking studies (2023-2024) on standardized datasets like the CAFA challenge and BRENDA provide quantitative performance metrics.

Table 1: Performance Comparison on CAFA4 Test Set (Top 100,000 Sequences)

| Method / Tool | Type | Precision (Micro) | Recall (Micro) | F1-Score (Micro) | Avg. Runtime per 1000 seqs (CPU/GPU) |

|---|---|---|---|---|---|

| DeepEC (DL) | Deep Learning (CNN) | 0.89 | 0.78 | 0.83 | 45 min (GPU) |

| PROT-CNN (DL) | Deep Learning (CNN) | 0.91 | 0.75 | 0.82 | 52 min (GPU) |

| BLASTp (best hit) | Homology Search | 0.94 | 0.62 | 0.75 | 120 min (CPU) |

| BLASTp (DIAMOND) | Homology Search | 0.92 | 0.65 | 0.76 | 12 min (CPU) |

| ECPred (DL) | Deep Learning (MLP) | 0.86 | 0.80 | 0.83 | 38 min (GPU) |

Table 2: Coverage vs. Accuracy Trade-off on Novel Sequences (<30% Identity)

| Method | Coverage (%) | Accuracy on Covered (%) | Key Limitation |

|---|---|---|---|

| BLASTp (E-value < 1e-10) | 58% | 92% | Fails on remote/no homology |

| Deep Learning Ensemble | 95% | 84% | Can over-predict on ambiguous folds |

| Hybrid Pipeline (BLASTp+DL) | 98% | 89% | Increased computational complexity |

Experimental Protocols

Protocol 3.1: Standardized BLASTp Annotation Pipeline

Objective: To annotate a FASTA file of query protein sequences with EC numbers using a rigorous BLASTp homology approach.

Materials: See "The Scientist's Toolkit" (Section 6). Software: NCBI BLAST+ suite (v2.14+), Python 3.10+ with Biopython.

Procedure:

- Database Curation:

- Download the Swiss-Prot database (uniprot_sprot.fasta) from UniProt.

- Generate a reference mapping file linking Swiss-Prot IDs to experimentally validated EC numbers from BRENDA or IntEnz.

- Format the database:

makeblastdb -in uniprot_sprot.fasta -dbtype prot -parse_seqids -out swissprot_db.

Homology Search:

- Run BLASTp:

blastp -query your_sequences.fasta -db swissprot_db -out results.xml -evalue 1e-5 -max_target_seqs 5 -outfmt 5. - For large-scale searches, use DIAMOND:

diamond blastp -d swissprot_db.dmnd -q your_sequences.fasta -o results.daa --sensitive --evalue 1e-5.

- Run BLASTp:

Hit Filtering and EC Transfer:

- Parse BLAST XML/DIAMOND output using a custom script.

- Apply filters: sequence identity ≥ 40%, query coverage ≥ 70%, and E-value ≤ 1e-10.

- For the top filtered hit, transfer the EC number from the reference mapping file.

- Output a CSV file with columns:

Query_ID, Predicted_EC, Identity(%), Coverage(%), E-value.

Protocol 3.2: Deep Learning-Based Annotation with DeepEC

Objective: To predict EC numbers directly from protein sequences using a pre-trained convolutional neural network.

Materials: See "The Scientist's Toolkit" (Section 6). Software: Python 3.10, TensorFlow 2.12+ or PyTorch 2.0+, DeepEC source code.

Procedure:

- Environment Setup:

- Clone the DeepEC repository:

git clone https://github.com/deepomicslab/DeepEC.git. - Install dependencies:

pip install tensorflow numpy pandas.

- Clone the DeepEC repository:

Data Preprocessing:

- Convert your FASTA file into a numerical matrix using the provided

seq2mat.pyscript, which encodes sequences via a bi-profile bit vector method. - Ensure all sequences are of uniform length (pad or truncate to 1000 amino acids as per model specification).

- Convert your FASTA file into a numerical matrix using the provided

Model Inference:

- Load the pre-trained DeepEC model (

deepEC.h5). - Run prediction:

python predict.py -i your_sequences.mat -o predictions.txt. - The output provides the top 3 predicted EC numbers with confidence scores (0-1).

- Load the pre-trained DeepEC model (

Post-processing:

- Apply a confidence threshold (e.g., ≥ 0.7) to filter low-probability predictions.

- Convert model output to a standardized annotation table.

Hybrid Integrated Workflow Protocol

Objective: To implement a decision-tree pipeline that intelligently selects BLASTp or deep learning based on homology detection, optimizing accuracy and coverage.

Procedure:

- Run Protocol 3.1 (BLASTp) as the primary step.

- For all queries that fail the BLASTp filters (Identity<40% or Coverage<70%), pass their sequences to Protocol 3.2 (DeepEC).

- Integrate results: Annotations from BLASTp are assigned

source: homology; those from DeepEC are assignedsource: deep_learning. - Generate a final consensus report. In cases of conflict (rare), prioritize the BLASTp annotation.

Workflow and Pathway Visualizations

Hybrid EC Annotation Workflow

Decision Logic for Method Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for EC Annotation Pipelines

| Item / Reagent | Function / Purpose in Protocol | Example Source / Product Code |

|---|---|---|

| Swiss-Prot Database | Curated, high-quality reference database for homology search and EC mapping. | UniProt (uniprot.org), file: uniprot_sprot.fasta |

| BRENDA EC Data | Authoritative source of experimentally validated EC numbers for reference mapping. | BRENDA (brenda-enzymes.org) |

| NCBI BLAST+ Suite | Command-line tools for running BLASTp and formatting databases. | NCBI FTP (ftp.ncbi.nlm.nih.gov) |

| DIAMOND | Ultra-fast protein aligner for large-scale BLAST-like searches. | GitHub (github.com/bbuchfink/diamond) |

| DeepEC Model | Pre-trained convolutional neural network for direct EC prediction from sequence. | Deepomics Lab (github.com/deepomicslab/DeepEC) |

| TensorFlow/PyTorch | Deep learning frameworks required for running model inference. | Open Source (tensorflow.org, pytorch.org) |

| Biopython | Python library for parsing FASTA, BLAST outputs, and biological data manipulation. | Python Package Index (pypi.org/project/biopython) |

| High-Performance Compute (HPC) Cluster or Cloud GPU Instance | Essential for processing large datasets (>10,000 sequences) in a reasonable time. | AWS EC2 (g4dn instance), Google Cloud AI Platform, local SLURM cluster |

This application note serves as a practical case study within a broader thesis investigating the comparative efficacy of traditional homology-based tools (e.g., BLASTp) versus modern deep learning (DL) approaches for the precise annotation of Enzyme Commission (EC) numbers. Accurate EC number assignment is critical for functional metagenomics, where vast pools of uncharacterized proteins from environmental samples offer potential for novel biocatalyst and drug discovery. Here, we detail the protocol for annotating a putative novel glycoside hydrolase (contig457gene_002) identified in a terrestrial soil metagenome, benchmarking BLASTp against the DeepEC and CLEAN (Contrastive Learning–enabled Enzyme Annotation) deep learning models.

Annotative Analysis: BLASTp vs. Deep Learning

Protocol 2.1: Initial Homology Search via BLASTp

- Objective: Identify homologous sequences and infer putative function.

- Database: NCBI's non-redundant protein sequence (nr) database.

- Tool: NCBI BLASTp (web interface or standalone v2.13.0+).

- Parameters: E-value threshold: 1e-5; Max target sequences: 100; Output format: tabular (outfmt 7).

- Procedure: Query with

contig_457_gene_002.faa. Parse results for top hits, associated EC numbers, and percent identity.

Protocol 2.2: Deep Learning–Based EC Number Prediction

- Objective: Obtain direct, homology-independent EC number predictions.

- Tool A: DeepEC

- Model: Convolutional Neural Network (CNN).

- Procedure: Input protein sequence in FASTA format into the DeepEC web server or local Docker container. Use default thresholds.

- Tool B: CLEAN

- Model: Contrastive Learning-based protein language model.

- Procedure: Input protein sequence in FASTA format via the CLEAN web API (

https://clean.omics.ai).

Data Presentation: Annotation Results Comparison

Table 1: Annotation Results for contig_457_gene_002 (Length: 312 aa)

| Method | Top Prediction / Hit | Confidence Metric | Inferred EC Number | Putative Function |

|---|---|---|---|---|

| BLASTp | Beta-glucosidase [Streptomyces sp.] | 42% identity, E-value: 3e-52 | EC 3.2.1.21 | Hydrolysis of terminal glucosyl residues. |

| DeepEC | N/A | Score: 0.887 | EC 3.2.1.176 | Exo-1,4-β-xylosidase (Xylobiose hydrolysis). |

| CLEAN | N/A | Similarity Score: 0.923 | EC 3.2.1.176 | Exo-1,4-β-xylosidase. |

Table 2: Performance Metrics Comparison (Thesis Context)

| Metric | BLASTp | Deep Learning (CLEAN/DeepEC) |

|---|---|---|

| Primary Advantage | High biological interpretability via alignments. | Detects remote homology & novel folds; direct EC output. |

| Key Limitation | Fails if sequence identity <30% ("twilight zone"). | Black-box model; training data bias can propagate. |

| Speed | ~1-2 minutes per query (dependent on DB size). | ~10-30 seconds per query (pre-trained model). |

| This Case Outcome | Suggested a common β-glucosidase. | Consensus on a rarer EC 3.2.1.176, highlighting novel function. |

Experimental Protocol for Functional Validation

Protocol 3.1: Heterologous Expression & Purification

- Cloning: Codon-optimize gene for E. coli; clone into pET-28a(+) vector with N-terminal His-tag.

- Expression: Transform into E. coli BL21(DE3). Induce with 0.5 mM IPTG at 16°C for 18h.

- Purification: Lyse cells; purify via immobilized metal affinity chromatography (IMAC) using Ni-NTA resin; buffer exchange into 50 mM Tris-HCl, pH 7.5.

Protocol 3.2: Enzymatic Assay for EC 3.2.1.176

- Principle: Measure release of p-nitrophenol (pNP) from pNP-β-D-xylobiocide.

- Reaction Mix: 50 µL purified enzyme, 450 µL 100 µM substrate in 50 mM citrate-phosphate buffer, pH 6.0.

- Control: Heat-inactivated enzyme.

- Incubation: 30°C for 15 min. Terminate with 500 µL 1M Na₂CO₃.

- Measurement: Read A₄₁₀. Calculate activity using pNP standard curve.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Validation

| Item | Function / Rationale |

|---|---|

| pET-28a(+) Vector | Prokaryotic expression vector with T7 promoter and His-tag for affinity purification. |

| Ni-NTA Resin | Immobilized affinity resin for purifying histidine-tagged recombinant proteins. |

| pNP-β-D-xylobiocide | Chromogenic substrate specific for exo-acting xylanases/xylosidases; confirms EC 3.2.1.176 activity. |

| PDB Database (RCSB) | Source of 3D structural templates (e.g., 4G1F for EC 3.2.1.176) for comparative modeling. |

| AlphaFold2 (ColabFold) | DL tool for predicting 3D protein structure in the absence of a homolog, informing mechanism. |

Visualization of Workflow and Pathway

Diagram Title: Functional Annotation & Validation Workflow

Diagram Title: Catalytic Action of EC 3.2.1.176

This case study demonstrates a synergistic protocol where BLASTp provided initial, misleading homology, while deep learning models converged on a specific, rare EC number (3.2.1.176). Subsequent biochemical validation confirmed the DL-predicted function, substantiating the thesis that DL methods can outperform traditional homology-based annotation in detecting novel enzymatic functions in metagenomic data, a crucial insight for accelerating drug discovery from natural sources.

Solving Annotation Challenges: Accuracy, Ambiguity, and Performance Optimization

Application Notes

The accurate annotation of Enzyme Commission (EC) numbers is critical for metabolic pathway elucidation, drug target identification, and functional genomics. While BLASTp remains a widely used tool for homology-based function transfer, its performance is challenged in key areas relevant to modern enzymology. Within a thesis comparing BLASTp to deep learning for EC annotation, it is essential to quantify these pitfalls to justify the exploration of complementary methods.

Pitfall 1: Low-Homology Proteins BLASTp relies on significant sequence identity. For proteins with <30% identity, function annotation becomes error-prone. Recent benchmarking studies indicate that BLASTp's precision for EC number assignment drops sharply in this low-identity regime, often conflating sub-subclasses (e.g., transferring EC 1.1.1.1 when the true enzyme is EC 1.1.1.2).

Pitfall 2: Remote Homologs Remote homologs share a common ancestor but have diverged significantly. BLASTp may fail to detect these relationships due to its reliance on local alignments and substitution matrix limits (e.g., BLOSUM62). Deep learning models, trained on evolutionary profiles and structural features, can often capture these distant relationships more effectively.

Pitfall 3: Multi-Domain Enzymes Many enzymes are modular. BLASTp alignments to a single domain can lead to misannotation if the query protein's domain architecture differs. The highest-scoring segment pair may align to a common domain (e.g., a ATP-binding cassette) while the catalytic domain is ignored.

Table 1: Quantitative Comparison of BLASTp Performance Challenges in EC Annotation

| Challenge Scenario | Typical Sequence Identity Range | BLASTp Precision* (%) | BLASTp Recall* (%) | Primary Cause of Error |

|---|---|---|---|---|

| Low-Homology Proteins | 20% - 30% | ~45 - 60 | ~50 - 65 | Insufficient signal for specific EC transfer |

| Remote Homologs | < 20% | < 25 | < 30 | Substitution matrix saturation, loss of evolutionary signal |

| Multi-Domain Enzymes (Mismatched Architecture) | Variable | ~30 - 50 | ~70 - 80 | High-scoring alignment to a non-catalytic, shared domain |

| High-Homology Proteins (Baseline) | > 40% | > 90 | > 95 | Reliable function conservation |

*Precision/Recall estimates based on recent benchmark studies (e.g., CAFA, BioLip) for full EC number transfer.

Experimental Protocols

Protocol 2.1: Benchmarking BLASTp EC Annotation Accuracy

Objective: To quantify BLASTp error rates across homology ranges and domain architectures.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Dataset Curation:

- Source a high-quality, non-redundant enzyme dataset with experimentally verified EC numbers from BRENDA or UniProtKB/Swiss-Prot.

- For multi-domain analysis, annotate domain boundaries using Pfam or InterProScan.

- Query and Database Construction:

- Partition the dataset. Hold out 20% as a query set.

- Use the remaining 80% to construct a BLASTp-compatible protein database (

makeblastdb).

- BLASTp Execution and Annotation Transfer:

- Run BLASTp for each query against the database with an E-value threshold of 0.001.

- Transfer the EC number from the top-hit (highest bit-score) that meets a specified sequence identity threshold (e.g., 30%, 40%, 50%).

- Performance Calculation:

- Compare transferred EC numbers to the ground truth.

- Calculate precision, recall, and full EC number accuracy (all four digits correct).

- Stratify results by sequence identity bins and domain architecture match/mismatch.

Protocol 2.2: Protocol for Identifying Remote Homologs via PSI-BLAST

Objective: To extend homology detection beyond the limits of standard BLASTp.

Methodology:

- Perform a standard BLASTp search (E-value=0.01) to gather an initial set of hits.

- Use these hits to build a position-specific scoring matrix (PSSM).

- Iterative Search:

- Run PSI-BLAST using the PSSM against the same database.

- Incorporate significant new hits (E-value < 0.01) into the PSSM.

- Repeat for 3-5 iterations or until convergence (no new hits).

- Analysis:

- Compare the final set of detected homologs to those found by single-iteration BLASTp.

- Validate remote hits using independent data (e.g., conserved residue motifs from Catalytic Site Atlas).

Visualizations

Title: BLASTp EC Annotation Decision Workflow with Pitfalls

Title: Method Performance Across BLASTp Challenge Scenarios

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item | Function / Relevance | Example / Source |

|---|---|---|

| Curated Protein Database | Ground truth for benchmarking; must have experimentally verified EC numbers. | UniProtKB/Swiss-Prot, BRENDA |

| BLAST+ Suite | Command-line tools to run BLASTp, PSI-BLAST, and create databases. | NCBI BLAST+ (v2.14+) |

| Domain Annotation Tool | Identifies protein domains to diagnose multi-domain pitfalls. | InterProScan, HMMER (Pfam) |

| Multiple Sequence Alignment (MSA) Tool | Generates alignments for conservation analysis and deep learning input. | Clustal Omega, MAFFT |

| Deep Learning EC Prediction Tool | Serves as a comparative method in the thesis research. | DeepEC, CLEAN, ECNet |

| Benchmarking Scripts (Python/R) | Custom code to calculate precision, recall, and stratify results. | Biopython, pandas, scikit-learn |

| High-Performance Computing (HPC) Cluster | Resources for running large-scale BLAST and deep learning inference jobs. | Local university cluster, cloud computing (AWS, GCP) |

Application Notes: BLASTp vs. Deep Learning for EC Annotation

Accurate Enzyme Commission (EC) number prediction is critical for functional genomics, metabolic engineering, and drug target identification. This document contrasts the traditional homology-based method (BLASTp) with contemporary deep learning (DL) approaches, highlighting key limitations of DL and proposing integrated solutions.

Table 1: Quantitative Comparison of EC Annotation Methods

| Metric | BLASTp (Homology-Based) | Typical Deep Learning Model (e.g., DeepEC) | Integrated Approach (BLASTp + DL) |

|---|---|---|---|

| Interpretability | High (explicit alignments, E-values) | Low (Black-box prediction) | Medium-High (Rule-based + confidence scores) |

| Data Bias Sensitivity | Low (Relies on curated databases) | Very High (Training set composition dictates bias) | Mitigated (Uses BLAST to flag novel/divergent sequences) |

| Handling Novel/Gap Sequences | Poor for sequences <30% identity | Poor if gaps not in training distribution | Good (Cascaded logic prioritizes BLAST for distant hits) |

| Computational Cost (Inference) | High for large DB queries | Low (once model is trained) | Moderate (sequential checking) |

| Precision (on benchmark sets) | ~85% (for high-confidence hits) | ~92% (on held-out test sets) | ~94% (reduces false positives on outliers) |

| Recall (on benchmark sets) | ~70% (misses distant homologs) | ~95% (within training domain) | ~95% (DL recovers distant homologs) |

| Primary Limitation Addressed | Declining recall with sequence divergence | Data bias, overconfidence on out-of-distribution samples | Combines strengths to bridge training set gaps. |

Core Challenge Analysis: DL models like DeepEC or CLEAN achieve high accuracy but fail reliably on sequences with low similarity to the training data (training set gaps). They also provide no mechanistic insight (black-box), complicating validation in drug development. Data bias, where training data overrepresents certain protein families, leads to skewed predictions.

Proposed Protocol Logic: An integrated, decision-tree pipeline (see Diagram 1) prioritizes interpretable BLASTp results for sequences with clear homology, reserving DL for cases where homology is weak, thereby providing a confidence metric and flagging potential model extrapolations.

Experimental Protocols

Protocol 2.1: Constructing a Bias-Aware Training Set for EC Prediction

Objective: To create a deep learning training dataset that mitigates inherent taxonomic and functional bias.

- Source Data: Retrieve sequences from the BRENDA and UniProtKB/Swiss-Prot databases using REST APIs. Filter for entries with experimentally verified EC numbers.

- Bias Quantification: Cluster sequences at 40% identity using CD-HIT. Calculate the distribution of clusters across taxonomic lineages (e.g., via taxon IDs) and EC classes (Oxidoreductases, Transferases, etc.).

- Stratified Sampling: For each EC number, perform stratified sampling across taxonomic superkingdoms (Bacteria, Archaea, Eukaryota, Viruses) to ensure representation. Cap overrepresented families (e.g., certain kinases) to a maximum of 200 unique sequences.

- Hold-Out Set Creation: Deliberately create a "Gap Set": 15% of EC numbers are entirely withheld. From remaining EC numbers, cluster and remove 5% of clusters to simulate "within-class gaps."

- Final Splits: Divide the remaining data into Training (70%), Validation (15%), and Standard Test (15%) sets, ensuring no sequence identity >30% between splits.

Protocol 2.2: Hybrid BLASTp-DL Inference for Robust Annotation

Objective: To annotate a novel protein sequence while flagging low-confidence predictions due to data gaps.

- Input: Novel protein sequence (FASTA format).

- Step 1 - BLASTp Homology Check:

- Run BLASTp against a curated database of known EC proteins (e.g., Swiss-Prot).

- Parameters:

evalue 1e-10,max_target_seqs 50. - Rule: If a hit with ≥40% identity and E-value ≤1e-30 is found for a specific EC number, assign that EC. Proceed to Step 4.