Enzyme Kinetic Parameter Identifiability Analysis: Bridging Theory, Experiment, and Computation for Reliable Models

This article provides a comprehensive analysis of the identifiability of enzyme kinetic parameters—a fundamental challenge in creating reliable biochemical models for research, drug development, and biocatalysis.


Abstract

This article provides a comprehensive analysis of the identifiability of enzyme kinetic parameters—a fundamental challenge in creating reliable biochemical models for research, drug development, and biocatalysis. We explore the core theoretical distinctions between structural and practical identifiability, highlighting common pitfalls in complex reaction schemes like substrate competition[citation:1]. The review covers modern methodological solutions, from advanced progress curve analysis[citation:4] and numerical identifiability procedures[citation:3] to innovative computational frameworks like UniKP for parameter prediction[citation:6][citation:9]. We detail troubleshooting strategies for ill-posed estimation problems, including experimental design and data preprocessing[citation:5][citation:8]. Finally, we examine validation paradigms and comparative assessments of tools and databases, such as EnzyExtract and SKiD, that are illuminating the 'dark matter' of enzyme data[citation:2][citation:5]. This synthesis aims to equip researchers and developers with a practical framework for obtaining robust, trustworthy kinetic parameters essential for predictive biology and engineering.

The Foundations of Identifiability in Enzyme Kinetics: Core Concepts and Inherent Challenges

In the development of reliable mathematical models for systems biology and enzyme kinetics, parameter identifiability is a foundational concept that determines whether unique and meaningful values can be inferred from data [1]. This analysis is typically divided into two sequential categories: structural identifiability and practical identifiability. While often conflated, they address distinct theoretical and empirical challenges [2].

Structural identifiability is a theoretical property of the model itself. It asks whether, given perfect, noise-free, and continuous data, the model's parameters can be uniquely determined from the observed outputs [3] [4]. It is a prerequisite for reliable parameter estimation, determined solely by the model's equations, the observation functions, and the known inputs [1]. If a model is structurally unidentifiable, no amount or quality of data will allow for unique parameter estimation [4].

Practical identifiability, in contrast, concerns the real-world application of the model. It assesses whether parameters can be accurately estimated given the limitations of actual experimental data, which are finite in time points, contaminated with noise, and may not be optimally informative [3] [2]. A model can be structurally identifiable yet practically unidentifiable if the available data are insufficient to constrain the parameters [1].

The following table provides a detailed comparison of these two critical concepts.

Table: Core Comparison of Structural and Practical Identifiability

| Aspect | Structural Identifiability | Practical Identifiability |

|---|---|---|

| Core Question | Can parameters be theoretically uniquely identified from perfect (noise-free, continuous) data? [3] [4] | Can parameters be reliably estimated from the available, real-world (noisy, limited) data? [3] [2] |

| Primary Dependency | Model structure (system dynamics, observation function) [1] [4]. | Quality, quantity, and information content of the experimental dataset [1] [2]. |

| Analysis Timing | A priori, before data collection (for experimental design) or immediately after model formulation [3]. | A posteriori, after data collection and during the parameter estimation process [3]. |

| Typical Causes of Failure | Over-parameterization, redundant mechanisms, insufficient or poorly chosen observed outputs [4]. | Insufficient data points, high measurement noise, poorly informative experimental conditions (e.g., sub-optimal stimuli) [1] [2]. |

| Consequences of Non-Identifiability | Unique parameter estimation is mathematically impossible. Model predictions may be non-unique [4]. | Parameter estimates have large, often ill-defined uncertainties. Predictions are unreliable [2]. |

| Common Remedial Actions | Model reformulation or reduction, reparameterization (using identifiable combinations), fixing some parameter values, changing observed outputs [3] [4]. | Design of new, more informative experiments, collection of more or higher-quality data, reduction of measurement noise [1] [2]. |

| Current Research Status | Well-defined with increasingly efficient computational tools (e.g., differential algebra, generating series) [1] [5]. | More challenging; active development of methods like profile likelihood to replace misleading Fisher Information Matrix approaches [2]. |

A Central Case Study: Identifiability in CD39 Enzyme Kinetics

Research on the enzyme CD39 (NTPDase1) provides a concrete example of identifiability challenges in enzyme kinetics [6]. CD39 sequentially hydrolyzes ATP to ADP and then ADP to AMP. This creates a system where ADP is both a product and a substrate, leading to substrate competition within a Michaelis-Menten modeling framework [6].

A study aimed to re-estimate the kinetic parameters (V_max and K_M) for both the ATPase and ADPase activities of CD39 using modern nonlinear least squares methods, as opposed to older, unreliable graphical linearization techniques [6]. When attempting to fit all four parameters simultaneously to time-course data, researchers encountered severe practical unidentifiability. Different combinations of parameters yielded nearly identical model fits to the data, preventing reliable, unique estimation [6].

The root cause lay in the model structure: when all four parameters are estimated from a single experiment that starts with ATP alone, they are highly correlated [6]. The solution was to restore identifiability through experimental design: first isolating the ADPase reaction (by spiking with ADP only) to estimate its parameters, and then fitting ATP-spiking experiments with the ADPase parameters fixed so that the ATPase parameters can be estimated uniquely [6].

The discrepancy between old and new methods highlights the practical impact of this analysis:

Table: Parameter Estimates for CD39 from Different Methods [6]

| Parameter | Nominal Value (Graphical Method) | Estimated Value (Naïve Nonlinear Fit) |

|---|---|---|

| V_max1 (ATPase) | 1.91 × 10³ µM/min | 855.38 µM/min |

| K_M1 (ATPase) | 5.83 × 10² µM | 841.87 µM |

| V_max2 (ADPase) | 1.89 × 10³ µM/min | 534.51 µM/min |

| K_M2 (ADPase) | 6.32 × 10² µM | 274.73 µM |

Workflow for Comprehensive Identifiability Analysis

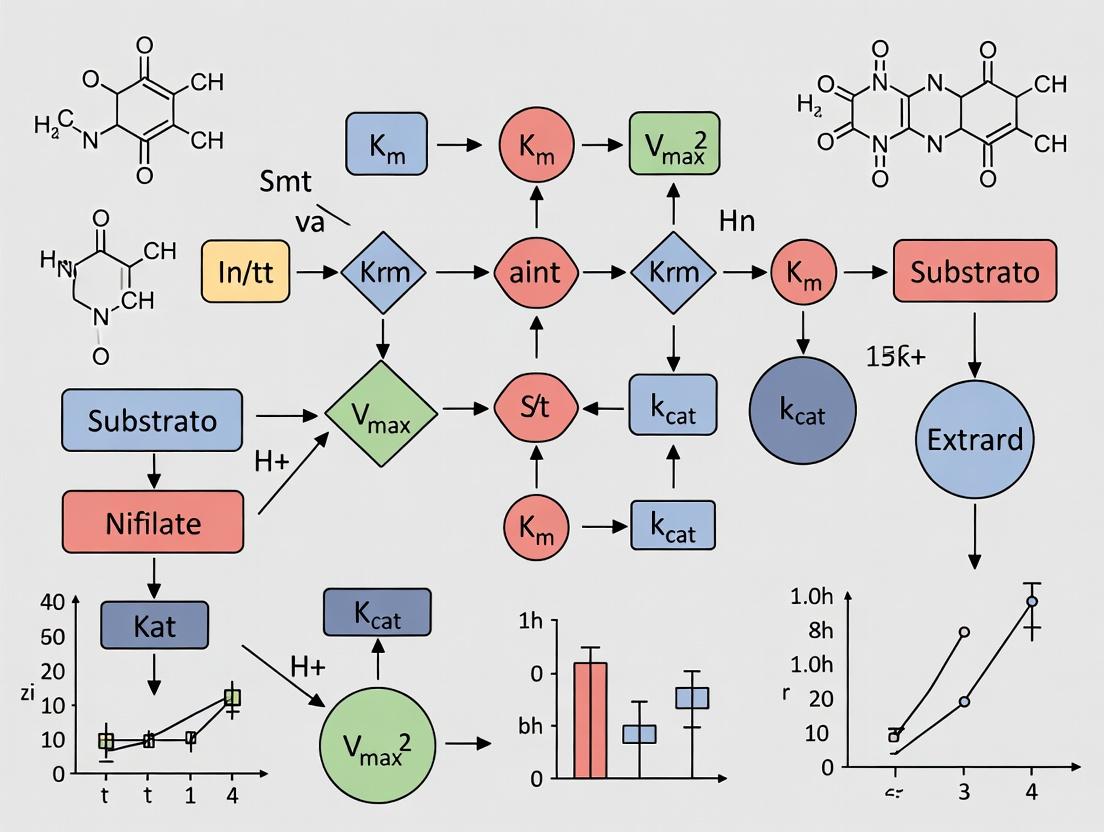

A robust modeling workflow must integrate both structural and practical identifiability analyses to ensure parameter reliability [3]. The following diagram outlines this essential process.

Flow of identifiability analysis in model development

Conducting a rigorous identifiability analysis requires both conceptual understanding and practical tools. The following table lists key software and methodological resources cited in recent literature.

Table: Research Toolkit for Identifiability Analysis

| Tool / Resource | Type | Primary Use & Function | Key Reference/Example |

|---|---|---|---|

| StrucID | Software Algorithm | A fast and efficient algorithm for performing structural identifiability analysis on ODE models [1] [5]. | [1] [5] |

| StructuralIdentifiability.jl | Software Package (Julia) | A differential algebra-based package for rigorous structural identifiability analysis, capable of handling non-integer exponents via model reformulation [7]. | [7] |

| Profile Likelihood | Methodological Approach | A powerful method to detect and resolve practical identifiability issues by exploring parameter space, superior to the often-misleading Fisher Information Matrix [2]. | [2] |

| GrowthPredict Toolbox (MATLAB) | Software Toolbox | Used for parameter estimation and forecasting with phenomenological models; applied in studies to validate identifiability results with real-world epidemiological data [7]. | [7] |

| Generating Series with Identifiability Tableaus | Methodological Approach | A method for structural identifiability analysis noted for offering a good compromise between applicability, complexity, and information provided [4]. | [4] |

| Nonlinear Least Squares (NLSQ) | Methodological Approach | The standard recommended method for parameter estimation in enzyme kinetics, replacing inaccurate graphical linearization methods [6]. | [6] |

| Independent Reaction Isolation | Experimental Strategy | A workflow to ensure identifiability by designing separate experiments (e.g., ATP-only, ADP-only spikes) to decouple correlated parameters [6]. | [6] |

The distinction between structural and practical identifiability is not merely academic but a critical, sequential checkpoint in robust scientific modeling [3]. As noted in recent literature, with advanced computational tools, determining structural identifiability is no longer a major bottleneck [2]. The frontier of challenge now lies in practical identifiability, which deals with the imperfections of real data and experiments [1] [2].

For researchers in enzyme kinetics and drug development, this means adopting a disciplined two-step workflow. First, use tools such as differential algebra or generating series to verify that the model structure is theoretically identifiable [7] [4]. Second, after data collection, use methods such as profile likelihood to assess how precisely the actual data constrain the parameter estimates [2]. As demonstrated in the CD39 case study, this process directly informs experimental design, guiding researchers to collect data that truly constrain the parameters of biological interest, leading to models that can be trusted for prediction and therapeutic insight [6].
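As a rough illustration of the profile-likelihood step, the sketch below profiles K_m for a simple Michaelis-Menten initial-rate model. Everything in it is an assumption made for illustration: the parameter values, the two substrate designs, the measurement SD, and the use of a noise-free synthetic curve so that the profile shape is deterministic. It is not taken from any of the cited tools.

```python
import numpy as np

def profile_km(s, v, km_grid):
    """Residual sum of squares along K_m, with V_max re-optimised at each grid point."""
    sse = np.empty(km_grid.size)
    for i, km in enumerate(km_grid):
        x = s / (km + s)                        # model is linear in V_max once K_m is fixed
        vmax_hat = np.dot(x, v) / np.dot(x, x)  # closed-form least-squares V_max
        sse[i] = np.sum((v - vmax_hat * x) ** 2)
    return sse

def profile_interval(s, v, km_grid, sigma):
    """Approximate 95% interval: grid values whose SSE is within 3.84*sigma^2 of the minimum."""
    sse = profile_km(s, v, km_grid)
    inside = km_grid[sse - sse.min() <= 3.84 * sigma**2]
    return inside.min(), inside.max()

# Hypothetical "true" parameters and assumed measurement SD (same units as v)
vmax_true, km_true, sigma = 100.0, 50.0, 0.2
km_grid = np.geomspace(1.0, 5000.0, 400)

# Poor design: all substrate levels far below K_m. Good design: levels spanning K_m.
s_poor = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
s_good = np.array([5.0, 10.0, 25.0, 50.0, 100.0, 250.0, 500.0])

lo_poor, hi_poor = profile_interval(s_poor, vmax_true * s_poor / (km_true + s_poor), km_grid, sigma)
lo_good, hi_good = profile_interval(s_good, vmax_true * s_good / (km_true + s_good), km_grid, sigma)

print(f"poor design K_m interval: {lo_poor:.1f} to {hi_poor:.1f}")
print(f"good design K_m interval: {lo_good:.1f} to {hi_good:.1f}")
```

In this toy setting the poor design leaves the profile flat towards large K_m, so the interval runs to the edge of the grid, while the design that spans K_m gives a bounded interval. That one-sided flatness is the typical signature of practical non-identifiability.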

The accurate determination of enzyme kinetic parameters—the Michaelis constant (K_m) and the maximum reaction rate (v_max or k_cat)—is foundational to understanding biological systems, predicting metabolic fluxes, and designing drugs that target enzymatic pathways [8]. However, a significant and often overlooked challenge in this field is parameter identifiability: the ability to uniquely and reliably estimate these parameters from experimental data. When parameters are unidentifiable, different combinations of values can produce identical model outputs, rendering the estimated values meaningless and compromising downstream applications [6].

This problem is acutely manifested in enzymes with complex reaction mechanisms, such as CD39 (NTPDase1). CD39 is a critical ectonucleotidase that sequentially hydrolyzes extracellular ATP to ADP and then ADP to AMP, playing a vital role in regulating purinergic signaling in vascular homeostasis, inflammation, and thrombosis [6] [9]. Its mechanism presents a classic identifiability trap: ADP is both the product of the first reaction and the substrate for the second. This creates a scenario of intrinsic substrate competition, where the two hydrolytic reactions are coupled and interdependent [6]. Traditional methods for estimating kinetic parameters, which often treat reactions in isolation, fail catastrophically in such systems. This guide compares established and emerging methodological solutions to this identifiability problem, providing a framework for researchers to obtain reliable kinetic parameters for complex enzyme mechanisms like that of CD39.

Comparative Analysis of Methodological Approaches

The following table summarizes and compares the core methodological strategies for tackling parameter identifiability in complex enzyme systems, highlighting their principles, applications, and inherent limitations.

Table 1: Comparison of Methodological Approaches to Identifiability in Enzyme Kinetics

| Methodological Approach | Core Principle | Application to CD39/Substrate Competition | Key Advantages | Major Limitations & Pitfalls |

|---|---|---|---|---|

| Classic Graphical/Linearization (e.g., Lineweaver-Burk) [6] | Transforms Michaelis-Menten equation into a linear form for easy parameter estimation from plots. | Historically used to report K_m and v_max for CD39’s ATPase and ADPase activities independently. | Simple to implement with minimal computational requirements. | Severely distorts error structure, leading to biased and inaccurate parameter estimates. Fails completely for coupled reactions, ignoring substrate competition. |

| Nonlinear Least Squares (NLS) Fitting - "Naïve" Approach [6] | Directly fits the non-linear Michaelis-Menten model to time-course data by minimizing the sum of squared residuals. | Attempts to fit all four parameters (v_max1, K_m1, v_max2, K_m2) simultaneously to a dataset where ATP is converted to AMP. | More statistically sound than linearization; accounts for non-linear data structure. | Leads to practical unidentifiability; parameters exhibit strong correlations and high uncertainty because multiple parameter combinations fit the data equally well [6]. |

| Isolated Reaction Estimation [6] | Decouples the system. Parameters for each reaction are estimated independently using tailored experiments (e.g., ATPase parameters from an ATP-spiking experiment where ADP→AMP is blocked). | K_m2 and v_max2 for the ADPase reaction are determined in experiments starting with ADP as the sole substrate, isolating it from the ATPase reaction. | Theoretically ensures identifiability by breaking parameter correlations. Provides a reliable foundation for building a full system model. | Requires carefully designed experiments that may be technically challenging (e.g., inhibiting one reaction). Does not account for potential allosteric or regulatory effects present in the full system. |

| Modern Computational & AI-Driven Workflows [10] [11] [12] | Uses machine learning to predict parameters from sequence/structure or advanced computational pipelines to robustly fit models while assessing uncertainty. | 1. UniKP [10]: Predicts k_cat and K_m from enzyme sequence and substrate structure. 2. MASSef [11]: A workflow for robust parameter estimation of detailed enzyme models, reconciling inconsistent data. | Can leverage large, diverse datasets. Frameworks like MASSef explicitly handle parameter uncertainty and data inconsistency. Useful for initial estimates or when data is sparse. | Predictive accuracy depends on training data quality and relevance. Cannot replace carefully controlled experiments for mechanistic validation. May not resolve identifiability issues inherent to the model structure itself. |

| Optimal Experimental Design (e.g., 50-BOA) [13] | Employs mathematical analysis of the error landscape to determine the minimal, most informative experimental conditions for precise parameter estimation. | While developed for inhibition constants (K_ic, K_iu), the principle is directly applicable. It would identify the optimal substrate and inhibitor concentrations to resolve CD39’s kinetic parameters. | Dramatically reduces experimental burden (>75%) while improving precision. Systematically eliminates uninformative data points that contribute noise or bias. | Requires initial pilot data (e.g., an IC₅₀ estimate) to design the optimal experiment. Novel approach not yet widely adopted for basic Michaelis-Menten parameter estimation. |
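The error distortion attributed to graphical linearization in the table above follows from the transformation itself. As a short worked derivation (standard algebra, not reproduced from [6]), taking reciprocals of the Michaelis-Menten equation gives the Lineweaver-Burk form:

$$\frac{1}{v} = \frac{K_m}{v_{\max}} \cdot \frac{1}{[S]} + \frac{1}{v_{\max}}$$

A small measurement error $\delta v$ in the velocity propagates to the transformed variable, to first order, as

$$\delta\!\left(\frac{1}{v}\right) \approx \frac{\delta v}{v^{2}}$$

so points measured at low substrate concentration, where $v$ is small, carry strongly inflated errors and also sit at the far end of the $1/[S]$ axis, where they have the most leverage on the fitted line. An unweighted straight-line fit therefore gives the least reliable measurements the greatest influence.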

Detailed Experimental Protocols for Reliable Parameter Estimation

Overcoming identifiability issues requires meticulously designed experiments. Below are detailed protocols derived from the analyzed literature for the two most robust approaches.

Protocol 1: Isolated Reaction Analysis for CD39 Kinetics

This protocol, based on the solution proposed in [6], involves physically or conceptually isolating the two hydrolytic steps of CD39.

Objective: To independently determine the Michaelis-Menten parameters (v_max1, K_m1) for the ATPase reaction and (v_max2, K_m2) for the ADPase reaction of CD39.

Materials:

- Purified, recombinant human CD39 (soluble or membrane-bound form).

- Substrates: ADP (for Part A), ATP (for Part B).

- Reaction buffer (e.g., containing required divalent cations like Ca²⁺/Mg²⁺).

- Enzyme stop solution.

- Phosphate detection assay kit (e.g., malachite green) or HPLC system for nucleotide quantification.

Procedure:

Part A: Determination of ADPase Parameters (K_m2, v_max2)

- Reaction Setup: Prepare a series of reactions containing a fixed concentration of CD39 enzyme and varying concentrations of ADP (e.g., 0, 10, 25, 50, 100, 250, 500, 1000 µM) in an appropriate buffer. Ensure ATP is absent.

- Initial Rate Measurement: Initiate the reaction by adding enzyme. Allow it to proceed at a constant temperature (e.g., 37°C) for a short, linear time period (e.g., 5-10 minutes).

- Reaction Termination & Quantification: Stop the reaction at the designated time. Quantify the amount of phosphate released or the depletion of ADP/product formation of AMP using a calibrated method.

- Data Analysis: Plot the initial velocity (V) against the ADP concentration ([S]). Fit the standard Michaelis-Menten equation (V = (v_max2 * [S]) / (K_m2 + [S])) to the data using non-linear least squares regression.
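The data-analysis step of Part A can be sketched with SciPy's `curve_fit`. This is a minimal example on synthetic data: the "true" parameter values, the 2% noise level, and the random seed are illustrative assumptions, and the concentrations are the example series from the reaction setup step.

```python
import numpy as np
from scipy.optimize import curve_fit

def michaelis_menten(s, vmax, km):
    """Initial velocity for substrate concentration s."""
    return vmax * s / (km + s)

# Example ADP series from the protocol (µM)
adp_um = np.array([0, 10, 25, 50, 100, 250, 500, 1000], dtype=float)

# Synthetic velocities: hypothetical parameters plus 2% relative noise
true_vmax, true_km = 100.0, 150.0
rng = np.random.default_rng(0)
v_obs = michaelis_menten(adp_um, true_vmax, true_km) * (1 + 0.02 * rng.standard_normal(adp_um.size))

# Nonlinear least squares on the untransformed data
popt, pcov = curve_fit(michaelis_menten, adp_um, v_obs, p0=[v_obs.max(), np.median(adp_um)])
perr = np.sqrt(np.diag(pcov))  # approximate standard errors

print(f"v_max2 = {popt[0]:.1f} +/- {perr[0]:.1f}")
print(f"K_m2   = {popt[1]:.1f} +/- {perr[1]:.1f}")
```

The standard errors derived from `pcov` are a first check on precision; very wide values would suggest that the chosen concentration range does not constrain the parameters.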

Part B: Determination of ATPase Parameters (K_m1, v_max1)

- Conceptual Isolation: Utilize the parameters obtained in Part A. Set up a reaction starting with ATP as the sole substrate (e.g., 500 µM).

- Time-Course Measurement: Take frequent time points and quantify the concentrations of ATP, ADP, and AMP simultaneously (e.g., via HPLC).

- System Modeling: Construct a coupled ordinary differential equation (ODE) model as defined in [6]:

$$\frac{d[\mathrm{ATP}]}{dt} = -V_{\mathrm{ATP}}, \qquad \frac{d[\mathrm{ADP}]}{dt} = V_{\mathrm{ATP}} - V_{\mathrm{ADP}}, \qquad \frac{d[\mathrm{AMP}]}{dt} = V_{\mathrm{ADP}}$$

where

$$V_{\mathrm{ATP}} = \frac{v_{\max 1}\,[\mathrm{ATP}]}{K_{m1}\left(1 + [\mathrm{ADP}]/K_{m2}\right) + [\mathrm{ATP}]}$$

and V_ADP uses the known v_max2 and K_m2 from Part A.

- Parameter Fitting: Fix the parameters v_max2 and K_m2 to the values from Part A. Fit the ODE model to the time-course data for all three nucleotides, optimizing only for the remaining ATPase parameters v_max1 and K_m1.
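The Part B fitting step might look like the following sketch, which simulates the coupled model and fits only the two ATPase parameters. Several things here are assumptions rather than details from [6]: the rate law used for V_ADP (a symmetric competitive form mirroring V_ATP), the use of the nominal table values as fixed ADPase parameters and as synthetic ground truth, the noise-free synthetic data, and the solver settings.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares

VMAX2, KM2 = 1890.0, 632.0  # ADPase parameters held fixed (µM/min, µM)

def rhs(t, y, vmax1, km1):
    atp, adp, amp = y
    v_atp = vmax1 * atp / (km1 * (1 + adp / KM2) + atp)
    # Assumption: ADPase rate uses the mirrored competitive form
    v_adp = VMAX2 * adp / (KM2 * (1 + atp / km1) + adp)
    return [-v_atp, v_atp - v_adp, v_adp]

def simulate(params, t_eval, y0=(500.0, 0.0, 0.0)):
    """Return concentrations with shape (3, n_times) for ATP, ADP, AMP."""
    sol = solve_ivp(rhs, (0.0, t_eval[-1]), y0, t_eval=t_eval,
                    args=tuple(params), rtol=1e-9, atol=1e-9)
    return sol.y

def residuals(params, t_eval, data):
    return (simulate(params, t_eval) - data).ravel()

t = np.linspace(0.0, 2.0, 21)   # minutes
true_params = (1910.0, 583.0)   # synthetic ground truth for v_max1, K_m1
data = simulate(true_params, t)  # noise-free synthetic time course

# diff_step is enlarged so finite differences are not swamped by ODE solver error
fit = least_squares(residuals, x0=(1000.0, 300.0), args=(t, data),
                    bounds=([1.0, 1.0], [1e5, 1e5]), diff_step=1e-4)
print("v_max1, K_m1 estimates:", fit.x)
```

With real measurements, `data` would hold the HPLC concentrations at the sampled times, and the fixed ADPase values would come from the Part A fit.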

Protocol 2: Characterizing Substrate Inhibition in CD39

Recent research reveals that CD39 exhibits substrate inhibition at high concentrations of ATP or ADP, a complication that further challenges parameter identifiability if unaccounted for [14].

Objective: To characterize substrate inhibition kinetics and determine the inhibition constant (K_i).

Materials: As in Protocol 1, with substrates including ATP, ADP, and analogs like 2-methylthio-ADP [14].

Procedure:

- Extended Substrate Range: Perform initial rate assays as described in Part A of Protocol 1, but extend the substrate concentration range to very high levels (e.g., up to 3-5 mM for ATP/ADP).

- Model Fitting: Plot the data. If the velocity decreases after reaching a maximum, fit a substrate inhibition model:

$$V = \frac{v_{\max}\,[S]}{K_m + [S] + [S]^{2}/K_i}$$

where K_i is the substrate inhibition constant.

- Specificity Control: Repeat with different substrates (e.g., UDP, 2-MeS-ADP) to confirm the phenomenon is specific to adenine nucleotides [14].

- Product Inhibition Control: Rule out apparent substrate inhibition caused by accumulating AMP by: a) using very short assay times, or b) adding a scavenging system for AMP, or c) directly testing AMP inhibition at concentrations generated in the assay [14].
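The model-fitting step of this protocol can be sketched as follows. The synthetic data are generated from the ADP values reported in Table 4 below; the concentration series, the absence of noise, and the starting guesses are illustrative assumptions.

```python
import numpy as np
from scipy.optimize import curve_fit

def substrate_inhibition(s, vmax, km, ki):
    """Rate law with an inhibitory second substrate-binding term."""
    return vmax * s / (km + s + s**2 / ki)

# Substrate series extended to high concentrations (µM), as the protocol suggests
s_um = np.array([5, 10, 25, 50, 100, 250, 500, 1000, 2000, 3000, 5000], dtype=float)

# Noise-free synthetic velocities from the reported ADP parameters
true_si = (0.0120, 24.0, 470.0)  # V_max (nmol/min/µg), K_m (µM), K_i (µM)
v_obs_si = substrate_inhibition(s_um, *true_si)

popt_si, _ = curve_fit(substrate_inhibition, s_um, v_obs_si,
                       p0=[v_obs_si.max(), 50.0, 500.0])

# The velocity peaks where dV/d[S] = 0, i.e. at [S] = sqrt(K_m * K_i)
s_peak = np.sqrt(popt_si[1] * popt_si[2])
print("fitted V_max, K_m, K_i:", popt_si)
print(f"velocity maximum near {s_peak:.0f} µM")
```

The peak location is a useful design check: if the highest concentration tested lies below sqrt(K_m * K_i), the data contain little information about K_i.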

Diagram 1: Workflow for Identifiable Parameter Estimation in CD39 Kinetics

Essential Research Reagent Solutions and Tools

Table 2: Research Toolkit for Enzyme Kinetics & Identifiability Analysis

| Tool/Reagent | Function & Description | Key Consideration for Identifiability |

|---|---|---|

| High-Purity Recombinant Enzyme | Provides a consistent, defined catalyst for kinetic assays. Soluble CD39 fragments are often used for in vitro studies [14]. | Enzyme preparation must be stable and homogeneous. Batch-to-batch variability is a major source of parameter inconsistency [8]. |

| Defined Nucleotide Substrates & Analogs | Natural substrates (ATP, ADP) and modified analogs (e.g., 2-methylthio-ADP, UDP) [14]. | Analog studies are crucial for dissecting mechanism-specific features like substrate inhibition, which impacts model selection and identifiability [14]. |

| Coupled Phosphate Detection Assay | A common, continuous method to monitor reaction velocity by measuring inorganic phosphate release. | Must ensure the coupling system is not rate-limiting and operates in the linear range. Assay conditions (pH, ions) must match physiological context where possible [8]. |

| HPLC or LC-MS Systems | For direct, simultaneous quantification of substrate and product concentrations in time-course experiments. | Essential for generating the multi-species time-series data required to fit coupled models and diagnose identifiability issues [6]. |

| Nonlinear Regression Software (e.g., Prism, MATLAB, Python SciPy) | Performs NLS fitting of models to data. | Software must provide confidence intervals and covariance matrices for parameters. A flat likelihood surface indicates unidentifiability [6]. |

| Computational Modeling Environment (e.g., MATLAB, COPASI, MASSef [11]) | Used to construct and simulate ODE models, perform parameter sweeps, and assess global identifiability. | Tools like MASSef are specifically designed to handle parameter uncertainty and reconcile conflicting data, directly addressing identifiability [11]. |

| Curated Kinetic Databases (e.g., BRENDA, SABIO-RK, EnzyExtractDB [12]) | Repositories of published kinetic parameters and conditions. | Critical for validation. New tools like EnzyExtractDB use AI to extract "dark data" from literature, expanding the reference set for comparison and machine learning [12]. |

The pitfalls of traditional methods and the success of the isolation strategy are quantitatively demonstrated in the CD39 case study [6].

Table 3: Comparison of CD39 Kinetic Parameters from Different Estimation Methods

| Parameter | Nominal Values (Graphical Method from literature) [6] | "Naïve" NLS Fit to Coupled System [6] | Proposed Isolated Reaction Method [6] | Notes on Identifiability |

|---|---|---|---|---|

| v_max1 (ATPase) | 1.91 × 10³ µM/min | 855.38 µM/min | ~1.91 × 10³ µM/min (retained) | Naïve fit deviates >50% from nominal, showing failure. |

| K_m1 (ATPase) | 5.83 × 10² µM | 841.87 µM | ~5.83 × 10² µM (retained) | Strong correlation with v_max1 in naïve fit causes drift. |

| v_max2 (ADPase) | 1.89 × 10³ µM/min | 534.51 µM/min | 1.89 × 10³ µM/min | Most sensitive to coupling. Naïve fit is highly inaccurate. |

| K_m2 (ADPase) | 6.32 × 10² µM | 274.73 µM | 6.32 × 10² µM | Isolated via direct ADP-spiking experiment, ensuring identifiability. |

Furthermore, the substrate-specific nature of CD39 kinetics is highlighted by the following data on substrate inhibition, which must be incorporated into models for physiological relevance [14].

Table 4: Substrate Inhibition Parameters for Human Soluble CD39 [14]

| Substrate | K_m (µM) | V_max (nmol/min/µg) | K_i (µM) | Inhibition Type |

|---|---|---|---|---|

| ADP | 24.0 ± 1.8 | 0.0120 ± 0.0003 | 470 ± 50 | Strong substrate inhibition |

| ATP | 29.6 ± 3.7 | 0.0119 ± 0.0005 | 990 ± 200 | Substrate inhibition |

| UDP | 33.7 ± 2.5 | 0.0061 ± 0.0001 | > 1000 | Very weak/no inhibition |

| 2-MeS-ADP | 10.7 ± 1.5 | 0.0105 ± 0.0003 | > 1000 | No substrate inhibition |

Diagram 2: CD39 Reaction Network with Identifiability Conflicts

Identifiability failure in complex enzyme mechanisms is not merely a mathematical curiosity; it is a fundamental experimental challenge that can invalidate reported kinetic parameters. The case of CD39, with its substrate competition and inhibition, serves as a paradigm for this issue.

Strategic Recommendations for Researchers:

- Diagnose Before Fitting: Always assess parameter identifiability. Use tools that provide confidence intervals and correlation matrices. If parameters are highly correlated or intervals are extremely wide, the model is likely unidentifiable with your data.

- Design Decoupling Experiments: When faced with coupled reactions, invest in experimental designs that isolate individual steps, as demonstrated for CD39. The upfront cost yields uniquely identifiable parameters.

- Account for All Mechanisms: Incorporate known complexities, such as substrate inhibition, into the kinetic model from the outset. Fitting a standard Michaelis-Menten model to data governed by substrate inhibition produces biased parameter estimates and can leave them poorly identified.

- Leverage Computational Tools Judiciously: Use AI predictors like UniKP [10] for initial estimates or to fill gaps, and robust fitting frameworks like MASSef [11] to handle uncertainty. However, anchor these tools in high-quality, mechanistically sound experimental data.

- Adopt Optimal Design Principles: Implement strategies like the 50-BOA [13] to design maximally informative experiments with minimal resources, moving beyond traditional "vary substrate and inhibitor" grids.
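The first recommendation, checking parameter correlations, can be illustrated with a small sketch. It uses the analytic sensitivities of the Michaelis-Menten rate law and the standard approximation that the covariance of the estimates is proportional to the inverse of JᵀJ. The parameter values and the two substrate designs are hypothetical; with a real fit, the covariance matrix returned by the regression software would be used instead.

```python
import numpy as np

def mm_sensitivities(s, vmax, km):
    """Jacobian columns dv/dV_max and dv/dK_m for v = V_max*s/(K_m + s)."""
    return np.column_stack([s / (km + s), -vmax * s / (km + s) ** 2])

def parameter_correlation(jac):
    """Correlation between the two estimates implied by cov proportional to inv(J^T J)."""
    cov = np.linalg.inv(jac.T @ jac)
    return cov[0, 1] / np.sqrt(cov[0, 0] * cov[1, 1])

vmax, km = 100.0, 50.0  # hypothetical values

s_low = np.array([1.0, 2.0, 3.0, 4.0, 5.0])                        # all far below K_m
s_span = np.array([5.0, 10.0, 25.0, 50.0, 100.0, 250.0, 500.0])    # spans K_m

corr_low = parameter_correlation(mm_sensitivities(s_low, vmax, km))
corr_span = parameter_correlation(mm_sensitivities(s_span, vmax, km))
print(f"correlation, low-substrate design: {corr_low:.4f}")
print(f"correlation, spanning design:      {corr_span:.4f}")
```

A correlation very close to 1 in magnitude means that only a combination of the two parameters (here roughly V_max/K_m) is constrained, which is the situation the decoupling and design recommendations are meant to avoid.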

Ultimately, reliable kinetic modeling for systems biology and drug discovery depends on recognizing and overcoming identifiability pitfalls. By applying the comparative methodologies and rigorous protocols outlined in this guide, researchers can move from generating potentially misleading parameters to establishing a robust, quantitative foundation for understanding enzyme function.

Within the broader thesis on identifiability analysis in enzyme kinetics research, this guide examines a central challenge: kinetic parameters that are unidentifiable—impossible to determine uniquely from available data—severely undermine the reliability of predictive models. This ambiguity directly compromises bioprocess design, leading to suboptimal scale-up, increased risk of batch failure, and inefficient quality-by-design (QbD) implementation. This publication compares state-of-the-art computational and experimental strategies designed to mitigate this issue. We objectively evaluate frameworks for parameter prediction, novel data curation pipelines, and advanced identifiability analysis toolkits, providing supporting experimental data on their accuracy and utility. The synthesis presented here aims to equip researchers and process engineers with the knowledge to build more robust, predictive models, thereby de-risking bioprocess development from enzyme engineering to manufacturing.

Comparative Analysis of Enzyme Kinetic Parameter Prediction Tools

The foundation of any reliable kinetic model is an accurate set of parameters. Traditional experimental measurement is a bottleneck, making computational prediction essential. This section compares three modern frameworks that address different facets of the prediction challenge, from unified deep learning to uncertainty-aware Bayesian estimation.

Table 1: Comparison of Modern Enzyme Kinetic Parameter Prediction Tools

| Tool / Framework | Core Methodology | Key Predictions | Reported Performance (Test Set) | Primary Advantage | Limitation / Consideration |

|---|---|---|---|---|---|

| UniKP [15] | Pretrained language models (ProtT5, SMILES) + ensemble machine learning (Extra Trees). | kcat, Km, kcat/Km from sequence and substrate. | R² = 0.68 for kcat (20% improvement over DLKcat). PCC = 0.85 [15]. | High accuracy & unified prediction of three core parameters; enables direct efficiency (kcat/Km) calculation. | Performance can be constrained by underlying dataset size and diversity. |

| EF-UniKP [15] | Two-layer framework extending UniKP to incorporate environmental factors. | kcat under specific pH and temperature conditions. | Validated on representative pH/temperature datasets [15]. | Integrates critical experimental context (pH, Temp) for more realistic in situ predictions. | Requires specialized datasets with environmental metadata. |

| ENKIE [16] | Bayesian Multilevel Models with categorical predictors (e.g., enzyme class, substrate type). | Km, kcat values with calibrated uncertainty estimates. | Performance comparable to deep learning approaches [16]. | Provides predictive uncertainty, crucial for identifiability analysis and model reliability assessment. | Less reliant on direct sequence/structure; uses higher-level categorical features. |

Comparative Analysis of Enhanced Kinetic Datasets

The performance of all predictive tools is intrinsically linked to the quality, scale, and structure of the underlying data. Addressing the "dark matter" of enzymology—data locked in literature—is critical. The following table compares two recent, significant contributions to structured kinetic data.

Table 2: Comparison of Enhanced Kinetics Datasets for Model Training

| Dataset | Source & Curation Method | Scale | Key Features & Integration | Impact on Model Performance | Utility for Identifiability |

|---|---|---|---|---|---|

| EnzyExtractDB [12] | LLM-powered (GPT-4o) extraction from 137,892 full-text publications. | 218,095 entries (kcat/Km); 92,286 high-confidence, sequence-mapped entries [12]. | Maps entries to UniProt & PubChem; preserves experimental context (pH, Temp, mutations). | Retraining models (MESI, DLKcat) with this data improved RMSE, MAE, and R² on held-out tests [12]. | Massive scale increases coverage, helping to constrain parameters for diverse enzyme-substrate pairs. |

| SKiD [17] | Curated from BRENDA, integrated with structural bioinformatics. | 13,653 unique enzyme-substrate complexes with 3D structural data [17]. | Provides 3D structural coordinates of enzyme-substrate complexes; includes protonation states at experimental pH. | Directly links kinetic parameters to structural features, enabling mechanistic insights into parameter values. | Structural context can help diagnose why parameters are unidentifiable (e.g., ambiguous binding modes). |

Experimental Protocols Supporting Tool Development and Validation

The advancement of tools like UniKP and databases like EnzyExtractDB relies on rigorous experimental and computational protocols. Below are detailed methodologies for key experiments cited in the comparison.

Protocol: High-Accuracy kcat Prediction with UniKP Framework

This protocol outlines the workflow for predicting enzyme turnover numbers as described for UniKP [15].

1. Representation Generation:

* Enzyme Sequence Encoding: Input the protein amino acid sequence. Use the ProtT5-XL-UniRef50 pretrained language model to generate a 1024-dimensional per-residue vector. Apply mean pooling across residues to obtain a single 1024-dimensional protein representation vector.

* Substrate Structure Encoding: Convert the substrate molecular structure to its SMILES string. Process the SMILES using a pretrained SMILES transformer to generate a 256-dimensional vector per symbol. Create a final 1024-dimensional molecular representation by concatenating the mean and max pooling of the last layer, and the first outputs of the last and penultimate layers [15].

2. Model Prediction:

* Concatenate the 1024D protein vector and the 1024D substrate vector to form a 2048D combined feature vector.

* Input the combined feature vector into a trained Extra Trees ensemble regression model. This model, selected after comparison of 18 algorithms, outputs predictions for kcat, Km, or the calculated kcat/Km [15].

3. Validation:

* Performance is evaluated via coefficient of determination (R²), Root Mean Square Error (RMSE), and Pearson Correlation Coefficient (PCC) on a held-out test set (e.g., 16,838 samples from DLKcat dataset). Robustness is assessed via multiple random splits of training/test data [15].
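The data flow of this protocol (pooling, concatenation, Extra Trees regression, and the three validation metrics) can be sketched with scikit-learn. This is not the UniKP code: the embeddings are random placeholders standing in for ProtT5 and SMILES-transformer outputs, the targets are random numbers, and the sample counts and hyperparameters are arbitrary, so the printed scores carry no meaning beyond showing the shapes and calls involved.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesRegressor

rng = np.random.default_rng(0)

def mean_pool(per_residue):
    """(sequence_length, 1024) per-residue embeddings -> (1024,) protein vector."""
    return per_residue.mean(axis=0)

def r2_score_np(y, p):
    return 1.0 - np.sum((y - p) ** 2) / np.sum((y - y.mean()) ** 2)

def rmse(y, p):
    return float(np.sqrt(np.mean((y - p) ** 2)))

def pcc(y, p):
    return float(np.corrcoef(y, p)[0, 1])

# Placeholder features: random arrays in place of real language-model embeddings
n_samples = 60
rows = []
for _ in range(n_samples):
    protein_vec = mean_pool(rng.normal(size=(rng.integers(50, 200), 1024)))
    substrate_vec = rng.normal(size=1024)
    rows.append(np.concatenate([protein_vec, substrate_vec]))  # 2048-D combined vector
X = np.stack(rows)
y = rng.normal(size=n_samples)  # placeholder for log-transformed kcat

model = ExtraTreesRegressor(n_estimators=50, random_state=0).fit(X[:50], y[:50])
pred = model.predict(X[50:])
print("R2:", r2_score_np(y[50:], pred), "RMSE:", rmse(y[50:], pred), "PCC:", pcc(y[50:], pred))
```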

Protocol: Construction of the Structure-Oriented Kinetic Dataset (SKiD)

This protocol details the creation of a kinetics dataset integrated with 3D structural information [17].

1. Data Curation from BRENDA:

* Extract raw kcat and Km values, EC numbers, UniProt IDs, substrate names, and experimental conditions (pH, temperature) from BRENDA using in-house scripts.

* Resolve redundancies by comparing annotations and computing geometric means for repeated measurements under identical conditions. Perform outlier removal based on statistical thresholds (e.g., beyond three standard deviations of log-transformed distributions).

2. Substrate and Enzyme Annotation:

* Substrate: Convert substrate IUPAC names to isomeric SMILES using OPSIN and PubChemPy. For unresolved names, perform manual annotation using PubChem, ChEBI, and commercial catalogues. Generate 3D coordinates from SMILES using RDKit and minimize energy with the MMFF94 force field.

* Enzyme: Map the UniProt ID to one or more PDB structures. Classify structures based on ligand content (substrate+cofactor, substrate-only, etc.).

3. Structure Processing and Complex Modeling:

* For enzymes with bound substrates/cofactors, extract the relevant ligand. For apo structures or mismatched ligands, use computational docking (e.g., AutoDock Vina) to generate a plausible enzyme-substrate complex.

* Adjust the protonation states of all enzyme residues to reflect the experimental pH recorded in BRENDA.

* The final output for each entry is a curated kinetic value paired with a ready-to-use 3D structural model of the enzyme-substrate complex [17].
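The redundancy and outlier handling in the data curation step can be illustrated with a short sketch. It assumes a plain array of repeated measurements and uses the three-standard-deviation threshold on log-transformed values mentioned above; the helper names and the example numbers are hypothetical and do not come from the SKiD scripts.

```python
import numpy as np

def geometric_mean(values):
    """Geometric mean of repeated measurements made under identical conditions."""
    values = np.asarray(values, dtype=float)
    return float(np.exp(np.mean(np.log(values))))

def remove_log_outliers(values, n_sd=3.0):
    """Drop values lying more than n_sd standard deviations from the mean in log10 space."""
    values = np.asarray(values, dtype=float)
    logs = np.log10(values)
    keep = np.abs(logs - logs.mean()) <= n_sd * logs.std()
    return values[keep]

# Hypothetical kcat values (s^-1): thirty consistent entries plus one implausible entry.
kcat_values = np.array([10.0] * 30 + [1.0e6])
cleaned = remove_log_outliers(kcat_values)
merged = geometric_mean(cleaned)
```

Working in log space reflects the fact that kinetic constants span orders of magnitude, so a threshold on raw values would be dominated by the largest entries.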

The Scientist's Toolkit: Essential Research Reagent Solutions

Building reliable kinetic models and conducting identifiability analysis requires specialized resources. This table lists key databases, software tools, and analytical frameworks.

Table 3: Essential Toolkit for Identifiability Analysis & Kinetic Modeling

| Tool / Resource | Type | Primary Function | Relevance to Identifiability & Bioprocess |

|---|---|---|---|

| VisId [18] | MATLAB Toolbox | Performs practical identifiability analysis for large-scale kinetic models. Uses collinearity indexes and optimization to find identifiable parameter subsets. | Directly addresses the core problem by diagnosing unidentifiable parameters and visualizing their correlations within the model network. |

| ENKIE Package [16] | Python Package | Predicts Km/kcat with calibrated uncertainty using Bayesian Multilevel Models. | Provides prior distributions and uncertainty estimates essential for Bayesian parameter estimation and quantifying prediction reliability. |

| EnzyExtract Pipeline [12] | LLM Data Pipeline | Automates extraction of kinetic parameters and experimental conditions from literature PDFs/XML. | Solves the data scarcity problem, generating large-scale, context-rich datasets necessary to constrain complex models. |

| SKiD Dataset [17] | Curated Structural-Kinetic Database | Provides 3D enzyme-substrate complexes linked to kinetic parameters. | Enables analysis of the structural determinants of kinetic parameters, informing model structure and plausible parameter ranges. |

| PAT Methodology [19] | Process Analytics Framework | Uses first-principles models & mass balances with off-gas (CO₂, O₂) data to estimate real-time specific growth & substrate uptake rates. | Generates high-quality, time-series data from bioreactors for dynamic model calibration, improving practical identifiability. |

Visualizing Workflows and Analytical Relationships

Impact and Solutions for Unidentifiable Parameters

UniKP Framework for Unified Parameter Prediction

Connecting Identifiability to Broader Goals in Metabolic Engineering and Drug Discovery

A central challenge in systems biology and bioengineering is the accurate determination of enzyme kinetic parameters, such as Km and kcat. These parameters are foundational for constructing predictive mathematical models of metabolism, which in turn drive rational strain engineering for bioproduction and the identification of novel drug targets in pathogens. However, the intrinsic issue of parameter identifiability—whether unique and reliable values can be inferred from experimental data—poses a significant bottleneck. Recent advances in computational workflows, machine learning, and experimental design are directly addressing this identifiability challenge, creating a crucial bridge to achieving broader goals in sustainable manufacturing and therapeutic development [6] [15] [20]. This guide compares key methodologies that connect robust identifiability analysis to applications in metabolic engineering and drug discovery.

Comparison Guide 1: Parameter Estimation Methods in Enzyme Kinetics

Objective: This guide compares established and novel methods for estimating identifiable enzyme kinetic parameters, a prerequisite for reliable metabolic models.

Performance and Data Comparison

The table below compares the performance, data requirements, and primary applications of different parameter estimation methodologies.

Table 1: Comparison of Parameter Estimation Methods for Enzyme Kinetic Modeling

| Method | Core Principle | Data Requirements | Identifiability Strength | Primary Application Context | Key Limitation |

|---|---|---|---|---|---|

| Graphical/Linearization (e.g., Lineweaver-Burk) [6] | Linear transformation of Michaelis-Menten equation for visual parameter estimation. | Steady-state velocity vs. substrate concentration. | Weak: Distorts error structure; leads to inaccurate parameter estimates. | Historical analysis; initial data exploration. | Poor accuracy, especially with complex mechanisms like substrate competition. |

| Nonlinear Least Squares (NLS) Estimation [6] | Direct numerical optimization to minimize difference between model and time-course data. | Time-series concentration data for substrates and products. | Context-Dependent: Can be unidentifiable with single time-course (e.g., for competing substrates). | Standard for in vitro enzyme characterization. | Susceptible to local minima; requires careful experimental design for identifiability. |

| Multiple Steady-State (MSS) Identification [20] | Solving polynomial systems from steady-state measurements under varying conditions (e.g., enzyme levels). | Metabolite concentrations at steady state across multiple perturbation experiments. | Strong: Algebraic approach can guarantee local/global identifiability for modular networks. | Large-scale metabolic network modeling. | Requires multiple, carefully designed steady-state experiments. |

| Independent Reaction Isolation [6] | Physically or computationally isolating linked reactions to estimate parameters independently. | Separate datasets for each catalytic step (e.g., ATPase-only and ADPase-only assays). | Very Strong: Breaks parameter interdependence, ensuring identifiability. | Enzymes with sequential or competing substrate reactions (e.g., CD39). | Not always experimentally feasible for complex in vivo systems. |

Supporting Experimental Data: A pivotal study on CD39 (NTPDase1) kinetics demonstrated the failure of traditional methods and the success of an identifiability-focused workflow. A model of ATP→ADP→AMP hydrolysis parameterized with nominal literature values (estimated via graphical methods) failed to fit experimental time-course data [6]. A naïve nonlinear least squares fit to a single dataset yielded parameters (Vmax1=855.38, Km1=841.87, Vmax2=534.51, Km2=274.73), but with high uncertainty due to unidentifiability. The proposed solution, treating the ATPase and ADPase reactions independently, theoretically ensures that all four kinetic parameters are identifiable, enabling reliable models of purinergic signaling for drug discovery [6].

Comparison Guide 2: Predictive Tools for Kinetic Parameter Prediction

Objective: This guide compares computational tools that predict kinetic parameters, accelerating model building where experimental data is scarce.

Performance and Data Comparison

The table below benchmarks the performance and features of leading predictive frameworks against conventional alternatives.

Table 2: Comparison of Computational Tools for Enzyme Kinetic Parameter Prediction

| Tool / Approach | Predictive Scope | Key Innovation | Reported Performance (Test Set) | Addresses Identifiability? | Best Use Case |

|---|---|---|---|---|---|

| Classic Machine Learning (ML) / Deep Learning (DL) [15] | Often single parameters (e.g., kcat or Km). | Varied architectures (CNN, RNN) applied to sequence/structure data. | Lower performance (e.g., CNN R²=0.10 for kcat) [15]. | Indirectly, by providing prior estimates. | Specialized, narrow-scope predictions. |

| UniKP Framework [15] | Unified prediction of kcat, Km, and kcat/Km. | Pretrained language models (ProtT5, SMILES) + ensemble ML (Extra Trees). | High Accuracy: R²=0.68 for kcat, 20% improvement over predecessor [15]. | Yes, via accurate kcat/Km prediction, a fundamental identifiable parameter. | High-throughput enzyme discovery and directed evolution. |

| EF-UniKP (Two-Layer Framework) [15] | Prediction under environmental factors (pH, temperature). | Ensemble model integrating predictions from multiple condition-specific models. | Validated on pH/temperature datasets; identifies high-activity enzymes under specified conditions [15]. | Yes, by providing context-specific parameters for identifiable models. | Metabolic engineering for industrial conditions (e.g., bioreactor pH). |

| Flux Balance Analysis (FBA) with KO Constraints [21] | Not direct parameter prediction; infers reaction essentiality. | Constraint-based modeling of genome-scale metabolic networks. | Qualitative growth/no-growth predictions for gene knockouts. | No; uses stoichiometry, not kinetics. | Prioritizing essential pathogen genes as drug targets [21]. |

Supporting Experimental Data: The UniKP framework was validated on a dataset of 16,838 samples. It achieved an average test set R² of 0.68 for kcat prediction, a 20% improvement over the previous DLKcat model [15]. Its strength lies in unified prediction, accurately computing the catalytic efficiency kcat/Km, which is often a more identifiable composite parameter than its individual components. In a practical application, UniKP guided the directed evolution of tyrosine ammonia lyase (TAL), leading to the identification of mutants with the highest reported kcat/Km values, directly impacting metabolic engineering for compound synthesis [15].

Detailed Experimental Protocols

This protocol outlines the steps to overcome parameter unidentifiability in an enzyme with sequential reactions.

- Model Derivation: Formulate the system using Michaelis-Menten equations accounting for substrate competition, where ADP is both a product of the ATPase reaction and a substrate for the ADPase reaction.

- Data Acquisition: Use time-course concentration data for ATP, ADP, and AMP from experiments where recombinant CD39 enzyme is spiked with an initial dose of ATP (e.g., 500 µM) [6].

- Naïve Parameter Estimation: Attempt to fit all four parameters (Vmax1, Km1, Vmax2, Km2) simultaneously to the full time-course data using nonlinear least squares minimization. This typically results in poor convergence and wide confidence intervals, revealing unidentifiability.

- Identifiability Solution (Independent Estimation):

  - ATPase Parameters: Design an experiment or use a computational strategy to isolate the ATP→ADP reaction. Fit Vmax1 and Km1 using data where ADP accumulation is minimized or treated differently.

  - ADPase Parameters: Similarly, isolate the ADP→AMP reaction (e.g., by directly spiking ADP as the initial substrate) to independently fit Vmax2 and Km2.

- Model Validation: Integrate the independently estimated parameters into the full model and validate against the original multi-substrate time-course data.
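The independent-estimation step can be sketched as an ordinary two-parameter Michaelis-Menten fit to data from an isolated reaction. The example below assumes initial-rate data from an ADP-only assay; the concentrations, the "true" parameter values, and the 2% noise level are hypothetical placeholders chosen only to make the sketch runnable.

```python
import numpy as np
from scipy.optimize import curve_fit

def michaelis_menten(s, vmax, km):
    """Single-substrate Michaelis-Menten rate law."""
    return vmax * s / (km + s)

def fit_isolated_reaction(substrate, rates):
    """Fit Vmax and Km for one isolated step; return estimates and standard errors."""
    p0 = [rates.max(), np.median(substrate)]  # crude but reasonable starting guess
    popt, pcov = curve_fit(michaelis_menten, substrate, rates,
                           p0=p0, bounds=(0, np.inf))
    return popt, np.sqrt(np.diag(pcov))

rng = np.random.default_rng(1)
adp = np.array([10.0, 25.0, 50.0, 100.0, 250.0, 500.0, 1000.0])  # µM, hypothetical
true_vmax2, true_km2 = 50.0, 120.0                               # hypothetical values
rates = michaelis_menten(adp, true_vmax2, true_km2)
rates = rates * (1.0 + rng.normal(scale=0.02, size=adp.size))    # 2% synthetic noise

(vmax2, km2), (se_vmax2, se_km2) = fit_isolated_reaction(adp, rates)
```

Because only two parameters are fitted to a dataset that spans concentrations below and above Km, the standard errors stay small. The same function would be applied separately to ATP-only data to obtain Vmax1 and Km1.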

This protocol uses steady-state perturbations for parameter identification in metabolic networks.

- Network and Model Definition: Define the metabolic network with standardized general rate laws (e.g., convenience kinetics) for each reaction.

- Steady-State Perturbation Experiments: Cultivate the organism (e.g., microbes) under a series of different conditions—such as varying enzyme expression levels (via induction or repression) or substrate availability—to achieve distinct metabolic steady-states.

- Measurement: For each steady-state condition, quantitatively measure the intracellular concentrations of relevant metabolites.

- Algebraic Parameter Estimation: For each reaction in the network, construct and solve a system of polynomial equations derived from the steady-state flux balance equations and the applied rate laws, using the concentration data from multiple steady-states.

- Modular Integration: Assemble the uniquely identified parameters from each reaction module to build a globally identified kinetic model of the full network.
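The algebraic estimation step can be illustrated for a single reaction module. This sketch makes strong simplifying assumptions that are not taken from the cited method: the rate law is an irreversible single-substrate Michaelis-Menten form rather than convenience kinetics, and the steady-state flux through the reaction is assumed to be known for each perturbation. Under those assumptions, v = kcat·E·S/(Km + S) rearranges to kcat·(E·S) - Km·v = v·S, which is linear in the unknowns and can be solved by least squares.

```python
import numpy as np

def solve_rate_law(enzyme, substrate, flux):
    """Solve kcat*(E*S) - Km*v = v*S for (kcat, Km) across several steady states."""
    enzyme = np.asarray(enzyme, dtype=float)
    substrate = np.asarray(substrate, dtype=float)
    flux = np.asarray(flux, dtype=float)
    design = np.column_stack([enzyme * substrate, -flux])
    target = flux * substrate
    (kcat, km), *_ = np.linalg.lstsq(design, target, rcond=None)
    return kcat, km

# Four hypothetical steady states with different enzyme levels and metabolite pools.
enzyme = np.array([1.0, 2.0, 4.0, 1.0])
substrate = np.array([5.0, 20.0, 50.0, 200.0])
true_kcat, true_km = 10.0, 30.0
flux = true_kcat * enzyme * substrate / (true_km + substrate)  # noise-free for clarity

kcat_est, km_est = solve_rate_law(enzyme, substrate, flux)
```

With noise-free data and at least two steady states that differ in substrate concentration, the system has a unique solution, which is the sense in which such algebraic schemes can certify identifiability.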

Visualization of Key Concepts and Workflows

Diagram 1: Identifiability Analysis Workflow for Enzyme Kinetics

Diagram 2: UniKP Framework for Predictive Parameter Identification

Diagram 3: Steady-State Metabolic Network Parameter Identification

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Tools for Identifiability-Focused Kinetic Research

| Item | Function / Description | Application Context |

|---|---|---|

| Recombinant CD39 Enzyme [6] | Membrane ectonucleotidase that hydrolyzes ATP to ADP and ADP to AMP. | A model system for studying identifiability challenges in enzymes with sequential/substrate-competition reactions. |

| ATP & ADP Substrates [6] | Purine nucleotides serving as specific substrates and products in the CD39 kinetic cascade. | Essential for in vitro assays to generate time-course data for parameter estimation. |

| General Rate Law Frameworks [20] | Standardized mathematical forms (e.g., convenience kinetics) to describe reaction fluxes. | Enables modular, systematic parameter identification across large metabolic networks using steady-state data. |

| Pretrained Language Models (ProtT5, SMILES) [15] | AI models that convert protein sequences and substrate structures into numerical feature vectors. | Core component of the UniKP framework for high-throughput, accurate prediction of kinetic parameters. |

| Ensemble Machine Learning Models (e.g., Extra Trees) [15] | A robust regression algorithm that combines predictions from multiple decision trees. | The machine learning module in UniKP chosen for its high accuracy in predicting kcat, Km, and kcat/Km from sequence/structure data. |

| Flux Balance Analysis (FBA) Software [21] | Constraint-based modeling approach using genome-scale metabolic reconstructions. | Identifies essential metabolic reactions in pathogens, generating high-priority drug target candidates, complementing kinetic modeling. |

Methodological Advances: From Progress Curves to AI-Driven Prediction Frameworks

The classical approach to enzyme kinetics has long relied on initial rate measurements, where the linear portion of product formation is used to estimate velocity. This method, while mathematically straightforward, discards the vast majority of data contained within a reaction's progress curve [22]. Progress curve analysis (PCA) represents a more powerful and data-rich alternative, utilizing the entire time-course of substrate depletion and product formation for parameter estimation [23]. This shift is fundamental within identifiability analysis for enzyme kinetic parameters, as it directly impacts whether unique, reliable estimates for constants like k~cat~ and K~M~ can be derived from experimental data [24] [6].

PCA operates on the principle of fitting the integrated form of rate equations to continuous data. For a simple Michaelis-Menten system (E + S ⇄ ES → E + P), the differential equation

dP/dt = k~2~E(S~0~ - P) / (K~M~ + S~0~ - P)

where E is the total enzyme concentration, can be integrated to

t = P / (k~2~E) + (K~M~ / (k~2~E)) ln(S~0~ / (S~0~ - P))

which describes the full progress curve [23]. The central challenge (and advantage) of PCA is that it requires sophisticated nonlinear regression to identify parameters from this implicit function, moving beyond simple linear approximations [23] [25].
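Because the integrated equation gives t as a function of P rather than the reverse, fitting usually requires either numerical root finding or an explicit inverse. One known reformulation, assumed here and not described in the source, expresses the remaining substrate through the Lambert W function: S(t) = K~M~ · W((S~0~/K~M~) · exp((S~0~ - k~2~E·t)/K~M~)). The sketch below implements both directions with hypothetical parameter values so that one can be checked against the other.

```python
import numpy as np
from scipy.special import lambertw

def product_progress(t, s0, km, k2, e0):
    """Explicit P(t) for irreversible Michaelis-Menten kinetics via Lambert W."""
    vmax = k2 * e0
    arg = (s0 / km) * np.exp((s0 - vmax * t) / km)
    substrate = km * np.real(lambertw(arg))
    return s0 - substrate

def time_from_product(p, s0, km, k2, e0):
    """Integrated rate equation: time needed to reach product concentration p."""
    vmax = k2 * e0
    return p / vmax + (km / vmax) * np.log(s0 / (s0 - p))

# Hypothetical values: S0 = 100 µM, KM = 50 µM, k2 = 10 s^-1, E = 0.1 µM.
t = np.linspace(0.0, 100.0, 11)
p = product_progress(t, s0=100.0, km=50.0, k2=10.0, e0=0.1)
t_back = time_from_product(p, s0=100.0, km=50.0, k2=10.0, e0=0.1)
```

The round trip from t to P and back to t confirms that the explicit form and the integrated equation describe the same curve. The explicit form is convenient inside a least-squares routine because it avoids solving an ODE at every iteration.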

This guide objectively compares the performance of PCA against traditional initial rate methods and evaluates contemporary software tools and modeling frameworks. It is framed within the critical thesis that parameter identifiability—whether a unique set of parameters can be determined from available data—is not a guaranteed outcome of kinetic analysis and must be actively assessed and engineered through careful experimental design and appropriate model selection [6] [26].

Performance Comparison: Progress Curve Analysis vs. Initial Rate Methods

The choice between progress curve analysis and initial rate methods involves fundamental trade-offs in data efficiency, experimental demand, parameter reliability, and applicability. The following table summarizes a direct performance comparison.

Table 1: Performance Comparison of Progress Curve Analysis vs. Initial Rate Methods

| Feature | Progress Curve Analysis | Initial Rate Analysis | Performance Implication |

|---|---|---|---|

| Data Utilization | Uses the entire time-course of reaction [23]. | Uses only the initial linear phase [22]. | PCA extracts significantly more information per experiment. |

| Experimental Throughput | Lower. Requires high-quality, continuous data collection for each condition. | Higher. Single-time-point measurements for multiple substrate concentrations are faster [22]. | Initial rates are preferable for high-throughput screening. |

| Parameter Identifiability | Can be challenging and non-unique without optimal design; prone to correlation between parameters [23] [27]. | Generally more straightforward, but linear transformations (e.g., Lineweaver-Burk) distort error structures [25] [6]. | Both require careful design, but PCA's identifiability issues are more mathematically complex. |

| Assumption Sensitivity | Highly sensitive to substrate depletion, product inhibition, and enzyme stability over long times [23]. | Assumes negligible substrate depletion and absence of early transients [22]. | PCA models must account for more reaction features to be accurate. |

| Optimal Design | Requires substrate concentration near the K~M~ value for identifiability, which is often unknown a priori [27]. | Requires a substrate concentration range spanning below and far above K~M~ for saturation [27]. | PCA design can be a "catch-22" without prior parameter knowledge. |

| Model Scope | Can be extended to complex mechanisms (e.g., reversible reactions, multi-step, competition) via numerical integration [23] [6]. | Best suited for simple initial velocity studies under fixed conditions. | PCA is inherently more flexible for mechanistic studies. |

Key Experimental Finding: A landmark analysis demonstrated the critical flaw of relying on a single progress curve. When a trypsin-catalyzed reaction was analyzed, optimization algorithms converged on two wildly different but statistically equivalent parameter sets: (K~M~ = 84.4 µM, k~2~ = 113.3 s⁻¹) and (K~M~ = 19.9 mM, k~2~ = 14020 s⁻¹) [23]. This starkly illustrates the practical unidentifiability that can arise from suboptimal experimental design, a risk not present in initial rate assays with varied substrate concentrations.

Comparison of Modern Analysis Tools & Methodologies

With the computational demands of PCA, researchers rely on specialized software and statistical methods. The landscape ranges from established regression packages to advanced Bayesian and hybrid computational frameworks.

Table 2: Comparison of Software Tools and Methodologies for Progress Curve Analysis

| Tool/Method | Core Approach | Key Advantages | Key Limitations/Demands | Best Suited For |

|---|---|---|---|---|

| GraphPad Prism | User-friendly nonlinear regression for explicit equations (e.g., integrated Michaelis-Menten) [28]. | Accessibility, robust GUI, excellent for standard models and initial rate analysis [28] [22]. | Cannot fit models defined by differential equations (true progress curves) [22]. | Routine initial rate analysis and teaching; not for advanced PCA. |

| FITSIM / DYNAFIT | Numerical integration of ODEs for user-defined mechanisms; iterative parameter fitting [23]. | Unmatched flexibility for arbitrary complex mechanisms [23]. | Requires expert knowledge; risk of unidentifiable parameters without proper experimental design [23]. | Mechanistic studies of complex enzymatic pathways. |

| Bayesian Inference (tQ Model) | Uses the Total Quasi-Steady-State Approximation (tQ) model within a Bayesian framework [27]. | Accurate for any [E] / [S] ratio; provides credible intervals; enables optimal experimental design [27]. | Computationally intensive; requires familiarity with probabilistic programming. | High-value kinetics where conditions violate standard assumptions (e.g., high [E]). |

| Hybrid Neural ODEs (HNODE) | Embeds a neural network within an ODE framework to model unknown system components [26]. | Robust when mechanistic knowledge is incomplete; can handle noisy, partial data [26]. | Extreme computational cost; risk of mechanistic parameter non-identifiability due to network flexibility [26]. | Exploratory systems biology with poorly characterized pathways. |

| Robust NLR (MDPD) | Nonlinear regression using Minimum Density Power Divergence estimators [29]. | Resistant to outliers and non-normal error distributions [29]. | A relatively new methodology; less integrated into standard workflows. | Analyzing data with significant noise or anomalies. |

Supporting Experimental Data: The superiority of the Bayesian tQ model was demonstrated in a comprehensive simulation study. While the standard Michaelis-Menten (sQ) model produced biased parameter estimates when enzyme concentration was not negligibly low, the tQ model yielded unbiased estimates across all tested combinations of enzyme and substrate concentrations, from catalytic to stoichiometric ratios [27]. Furthermore, a workflow employing independent estimation of parameters for sequential reactions (e.g., ATPase and ADPase activity of CD39) was shown to overcome the severe identifiability challenges posed by substrate competition, where a product (ADP) is also a substrate for the next reaction [6].
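The difference between the two approximations can be seen directly from their rate expressions. The sketch below uses the form in which the tQ rate is commonly written, dP/dt = kcat · (b - sqrt(b² - 4·E~T~·(S~T~ - P))) / 2 with b = E~T~ + K~M~ + S~T~ - P. That expression is assumed from the general literature rather than quoted from the study above, and all numerical values are hypothetical.

```python
import numpy as np

def rate_sq(p, s_total, e_total, km, kcat):
    """Standard quasi-steady-state (Michaelis-Menten) rate."""
    s = s_total - p
    return kcat * e_total * s / (km + s)

def rate_tq(p, s_total, e_total, km, kcat):
    """Total quasi-steady-state rate: kcat times the complex concentration
    obtained from the quadratic C^2 - (E_T + K_M + S)C + E_T*S = 0."""
    s = s_total - p
    b = e_total + km + s
    return kcat * (b - np.sqrt(b * b - 4.0 * e_total * s)) / 2.0

# Catalytic regime: enzyme far below substrate, where the two models agree.
low_sq = rate_sq(0.0, s_total=100.0, e_total=0.01, km=50.0, kcat=1.0)
low_tq = rate_tq(0.0, s_total=100.0, e_total=0.01, km=50.0, kcat=1.0)

# Enzyme in large excess: the sQ rate implies more complex than there is substrate.
high_sq = rate_sq(0.0, s_total=1.0, e_total=100.0, km=1.0, kcat=1.0)
high_tq = rate_tq(0.0, s_total=1.0, e_total=100.0, km=1.0, kcat=1.0)
```

In the second case the sQ expression gives a rate of 50 for only 1 unit of substrate, which is physically impossible for a single-turnover pool, while the tQ rate stays below kcat times the available substrate. Fitting the sQ model to such data forces the parameters to absorb this error, which is one way to understand the reported bias.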

The Central Challenge: Parameter Identifiability in Progress Curve Analysis

Parameter identifiability is the cornerstone of reliable kinetic modeling. It asks: can the parameters of a proposed model be uniquely determined from the available experimental data? Progress curve analysis is particularly susceptible to structural and practical non-identifiability.

Structural Non-Identifiability: This arises from the model structure itself. For the basic reaction scheme, different combinations of individual rate constants (k~1~, k~-1~, k~2~) can yield the same observed progress curve because the observable output (product) is only sensitive to certain aggregates, namely K~M~ = (k~-1~+k~2~)/k~1~ and k~cat~ = k~2~ [23]. A study fitting trypsin progress curves found that rate constant sets differing by six orders of magnitude in k~-1~ produced visually and statistically indistinguishable fits [23]. This means individual rate constants cannot be uniquely identified from a single progress curve of product formation.
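A small numerical example makes the aggregation explicit. The rate constants below are hypothetical and chosen only so that k~-1~ differs by six orders of magnitude between the two sets while K~M~ and k~cat~ are unchanged. The equivalence of the resulting progress curves holds to the extent that the quasi-steady-state approximation is valid.

```python
import math

def michaelis_aggregates(k1, k_minus1, k2):
    """Return the observable aggregates (K_M, k_cat) for E + S <-> ES -> E + P."""
    km = (k_minus1 + k2) / k1
    kcat = k2
    return km, kcat

# Set A: slow dissociation (hypothetical units: µM^-1 s^-1 for k1, s^-1 otherwise).
set_a = {"k1": 1.0, "k_minus1": 1.0, "k2": 100.0}
km_a, kcat_a = michaelis_aggregates(**set_a)

# Set B: k_minus1 is a million times larger; k1 is rescaled to keep K_M fixed.
k_minus1_b = 1.0e6
set_b = {"k1": (k_minus1_b + 100.0) / km_a, "k_minus1": k_minus1_b, "k2": 100.0}
km_b, kcat_b = michaelis_aggregates(**set_b)

same_observables = math.isclose(km_a, km_b) and kcat_a == kcat_b
```

Any fitting routine that sees only product formation under these conditions receives the same information from both sets, so no amount of data quality can separate k~1~ from k~-1~.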

Practical Non-Identifiability: This occurs when data quality or experimental design is insufficient to uniquely constrain parameters, even if they are structurally identifiable. The classic example is attempting to fit both K~M~ and V~max~ from a single progress curve at one substrate concentration. As shown in Figure 2 of [23], two parameter pairs with K~M~ values differing by more than 200-fold (84.4 µM vs. 19.9 mM) fit the data equally well. The design fails because the curve's shape is governed by the ratio S~0~/K~M~; without varying S~0~, this ratio (and thus the individual parameters) cannot be pinned down.
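The effect is easy to reproduce in simulation. The sketch below uses hypothetical parameter pairs (not the trypsin values from [23]) that share the same V~max~/K~M~ ratio but differ tenfold in K~M~, and evaluates the substrate curve through the Lambert W closed form of the integrated Michaelis-Menten equation. At a low S~0~ the two curves are nearly indistinguishable; at a high S~0~ they separate clearly.

```python
import numpy as np
from scipy.special import lambertw

def substrate_curve(t, s0, km, vmax):
    """Remaining substrate S(t) for irreversible Michaelis-Menten kinetics."""
    arg = (s0 / km) * np.exp((s0 - vmax * t) / km)
    return km * np.real(lambertw(arg))

t = np.linspace(0.0, 30000.0, 61)  # seconds

# Two hypothetical parameter pairs with identical Vmax/KM = 1e-4 s^-1.
pair_a = {"km": 1000.0, "vmax": 0.1}    # µM, µM/s
pair_b = {"km": 10000.0, "vmax": 1.0}

# Single low-substrate experiment: S0 is far below both KM values.
s0_low = 10.0
gap_low = np.max(np.abs(substrate_curve(t, s0_low, **pair_a)
                        - substrate_curve(t, s0_low, **pair_b)))

# Adding a high-substrate experiment breaks the degeneracy.
s0_high = 3000.0
gap_high = np.max(np.abs(substrate_curve(t, s0_high, **pair_a)
                         - substrate_curve(t, s0_high, **pair_b)))
```

With realistic measurement noise of a few percent, `gap_low` falls well inside the noise band, so the optimizer has no basis for preferring either pair, whereas `gap_high` is a large fraction of the starting concentration.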

The following diagram illustrates the logical decision process for diagnosing and addressing identifiability issues in progress curve analysis.

Diagram: A diagnostic workflow for parameter identifiability issues in enzyme kinetics. The process differentiates between structural flaws in the model and practical limitations of the experimental data or design [23] [24] [6].

Experimental Protocols for Robust Progress Curve Analysis

To overcome the pitfalls and leverage the power of PCA, rigorous experimental and computational protocols are essential.

Protocol for a Basic Progress Curve Experiment with Identifiability Checks

This protocol is designed for estimating K~M~ and k~cat~ for a simple Michaelis-Menten enzyme.

- Preliminary Assay: Run a quick initial rate assay with a broad substrate range to get an approximate K~M~ value. This informs the design of the main PCA experiment [27].

- Experimental Design: Prepare reaction mixtures with at least four different initial substrate concentrations (S~0~). Crucially, these should bracket the approximate K~M~, with values at roughly 0.3K~M~, 1K~M~, 3K~M~, and 10K~M~. This design ensures the curves have diverse shapes, providing the information needed for unique parameter identification [23] [27].

- Data Collection: Initiate reactions and record product concentration (via absorbance, fluorescence, etc.) continuously or with high frequency until substrate is at least 90% depleted. Run each condition in replicate.

- Data Analysis with Nonlinear Regression: a. Use software capable of fitting the integrated Michaelis-Menten equation or solving the corresponding ODE (e.g., DynaFit, a custom script in R/Python). b. Fit the ensemble of progress curves (all S~0~) simultaneously to a shared set of K~M~ and k~cat~ parameters.

- Identifiability Diagnostics: a. Check the correlation matrix of the fitted parameters. A correlation coefficient between K~M~ and k~cat~ with an absolute value above 0.95 indicates poor practical identifiability. b. Perform a Monte Carlo simulation [23]: add realistic random noise to the best-fit curves to generate 100-1000 synthetic datasets, then refit each one. The spread of the resulting parameter estimates approximates their confidence intervals. Tight, unimodal distributions indicate good identifiability; broad or multimodal distributions indicate failure.
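The fitting and diagnostic steps of this protocol can be sketched end to end on synthetic data. The example assumes the Lambert W closed form of the integrated Michaelis-Menten equation, a known enzyme concentration, and Gaussian noise of known magnitude; the parameter values, noise level, bounds, and function names are all hypothetical choices for illustration.

```python
import numpy as np
from scipy.optimize import least_squares
from scipy.special import lambertw

E0 = 0.01  # total enzyme concentration in µM (hypothetical, assumed known)

def product_curve(t, s0, km, kcat):
    """Closed-form Michaelis-Menten product curve P(t) via Lambert W."""
    arg = (s0 / km) * np.exp((s0 - kcat * E0 * t) / km)
    return s0 - km * np.real(lambertw(arg))

def residuals(theta, t, s0_list, data):
    """Stack residuals of all curves so KM and kcat are shared across S0 values."""
    km, kcat = theta
    return np.concatenate(
        [product_curve(t, s0, km, kcat) - y for s0, y in zip(s0_list, data)]
    )

def global_fit(t, s0_list, data, start):
    # Bounds keep the search in a numerically safe, physically plausible box.
    return least_squares(residuals, start, args=(t, s0_list, data),
                         bounds=([5.0, 1e-3], [1e5, 1e5]))

def correlation_matrix(fit):
    """Parameter correlations from the Jacobian at the optimum."""
    cov = np.linalg.inv(fit.jac.T @ fit.jac)  # noise variance cancels in the ratio
    sd = np.sqrt(np.diag(cov))
    return cov / np.outer(sd, sd)

rng = np.random.default_rng(42)
true_km, true_kcat, noise_sd = 100.0, 20.0, 1.0
s0_list = [0.3 * true_km, true_km, 3 * true_km, 10 * true_km]  # brackets KM
t = np.linspace(0.0, 6000.0, 120)
data = [product_curve(t, s0, true_km, true_kcat)
        + rng.normal(scale=noise_sd, size=t.size) for s0 in s0_list]

# Global fit of all four curves to one shared (KM, kcat) pair.
fit = global_fit(t, s0_list, data, start=(50.0, 50.0))
corr = correlation_matrix(fit)

# Monte Carlo: re-noise the best-fit curves, refit, and inspect the spread.
best_curves = [product_curve(t, s0, *fit.x) for s0 in s0_list]
mc = np.array([
    global_fit(t, s0_list,
               [c + rng.normal(scale=noise_sd, size=t.size) for c in best_curves],
               start=fit.x).x
    for _ in range(100)
])
ci_low, ci_high = np.percentile(mc, [2.5, 97.5], axis=0)
```

Repeating the exercise with only the lowest S~0~ curve in `s0_list` is a useful contrast: the off-diagonal entry of `corr` moves toward ±1 and the Monte Carlo intervals widen sharply, which is the signature of practical non-identifiability described above.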

Protocol for Analyzing Complex Mechanisms: The CD39 Case Study

The enzyme CD39 hydrolyzes ATP to ADP and then ADP to AMP, creating a system where ADP is both a product and a substrate. This introduces substrate competition, making standard fitting fail [6].

- Isolate the Individual Reactions: a. ATPase Reaction: Start with a high concentration of ATP as the sole substrate. Measure the initial rate of ADP production while ADP concentration is still negligible to avoid competition. Use these initial rates across different [ATP] to estimate K~M1~ and V~max1~ for the ATP→ADP step. b. ADPase Reaction: Start with a high concentration of ADP as the sole substrate. Measure the initial rate of AMP production to estimate K~M2~ and V~max2~ for the ADP→AMP step [6].

- Construct the Full Competitive Model: Build an ODE model using the rate laws for competing substrates [6]:

  d[ATP]/dt = -V~max1~[ATP] / (K~M1~(1 + [ADP]/K~M2~) + [ATP])

  d[ADP]/dt = + (... from ATP) - V~max2~[ADP] / (K~M2~(1 + [ATP]/K~M1~) + [ADP])

- Global Fitting & Validation: Use the independently estimated parameters from Step 1 as informed initial guesses. Fit the full ODE model simultaneously to time-course data for both ATP and ADP from an experiment started with ATP only. This final step refines the parameters based on the complete system behavior.
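The competitive model can be simulated with a standard ODE solver before any fitting is attempted. In the sketch below, the ADP production term is assumed to equal the ATP consumption rate, and an AMP equation is added by mass balance; both are assumptions made here for a closed system rather than details quoted from [6]. The parameter values are the naïve-fit numbers quoted earlier in this article, used purely as illustrative magnitudes because their units are not stated.

```python
import numpy as np
from scipy.integrate import solve_ivp

def cd39_rhs(t, y, vmax1, km1, vmax2, km2):
    """Competing-substrate rate laws for ATP -> ADP -> AMP."""
    atp, adp, amp = y
    v_atp = vmax1 * atp / (km1 * (1.0 + adp / km2) + atp)  # ATPase step
    v_adp = vmax2 * adp / (km2 * (1.0 + atp / km1) + adp)  # ADPase step
    # Assumption: every ATP consumed appears as ADP, every ADP consumed as AMP.
    return [-v_atp, v_atp - v_adp, v_adp]

params = (855.38, 841.87, 534.51, 274.73)  # Vmax1, Km1, Vmax2, Km2 (units not stated)
y0 = [500.0, 0.0, 0.0]                     # start with ATP only
t_eval = np.linspace(0.0, 5.0, 51)         # time in the (unstated) unit of Vmax

sol = solve_ivp(cd39_rhs, (0.0, 5.0), y0, args=params,
                t_eval=t_eval, rtol=1e-8, atol=1e-10)
atp, adp, amp = sol.y
total = atp + adp + amp                    # should stay at the initial 500
```

For global fitting, `cd39_rhs` would be wrapped in a residual function that compares `sol.y` with measured ATP and ADP time courses, starting the optimizer from the independently estimated parameters.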

Table 3: Key Research Reagent Solutions for Progress Curve Analysis

| Reagent / Resource | Function & Role in PCA | Critical Considerations for Identifiability |

|---|---|---|

| High-Purity, Well-Characterized Substrate | The reactant whose depletion is modeled. Impurities or unknown concentration directly bias parameter estimates. | Substrate contamination is a major source of error. Use nonlinear regression methods that can fit the contaminant concentration as an extra parameter alongside K~M~ and V~max~ [25]. |

| Stable, Homogeneous Enzyme Preparation | The catalyst. Activity must remain constant throughout the progress curve. | Enzyme inactivation during the assay distorts the curve shape, leading to unidentifiable "apparent" parameters. Include an enzyme stability term in the model if inactivation is suspected [23]. |

| Specific, Calibrated Detection System | Quantifies product formation or substrate depletion (e.g., spectrophotometer, fluorimeter, HPLC). | The signal must be linear with concentration over the full range. Non-linearity introduces systematic error that confounds the kinetic model fit. |

| Continuous Assay Buffer | Maintains pH, ionic strength, and cofactor concentrations. | Assay conditions should prevent product inhibition unless such inhibition is explicitly part of the model. Unaccounted-for product inhibition is a common cause of model mismatch. |

| Software for ODE Modeling & NLR | Tools like COPASI, MATLAB with Global Optimization Toolbox, or Python (SciPy, PyDDE). | Essential for fitting complex models. The software must provide parameter confidence intervals and correlation matrices, which are key diagnostics for identifiability [6] [26]. |

| Monte Carlo Simulation Script | A custom script (e.g., in Python or R) to perform parameter confidence analysis. | Not a physical reagent, but a crucial computational resource for diagnosing practical identifiability and reporting reliable error estimates for fitted parameters [23]. |

The following diagram synthesizes the modern, identifiability-aware workflow for progress curve analysis, integrating experimental design, data collection, and advanced computational checks.

Diagram: The modern progress curve analysis workflow. This pipeline emphasizes iterative design based on identifiability diagnostics and incorporates advanced methods like the tQ model and Monte Carlo simulation to ensure parameter reliability [23] [6] [27].

Progress curve analysis represents a more information-dense and mechanistically informative approach to enzyme kinetics than traditional initial rate methods. However, this power comes with the inherent risk of parameter non-identifiability, which can render results meaningless if not properly managed.

The key to successful PCA lies in recognizing it as an integrated problem of experimental design, model selection, and computational analysis. As evidenced by the comparative data, no single software or method is universally best. Researchers must choose tools based on their system's complexity—from GraphPad Prism for standard work to DynaFit or Bayesian tQ methods for challenging scenarios where enzyme concentration is high or parameters are correlated [27].

Ultimately, framing PCA within the context of identifiability analysis transforms it from a simple curve-fitting exercise into a rigorous discipline. By adopting protocols that include multiple substrate concentrations, using models appropriate for the enzyme-to-substrate ratio, and employing mandatory diagnostic checks like Monte Carlo simulations, researchers can extract the rich data contained in progress curves with confidence, advancing both basic enzymology and drug development.

Within the broader thesis on identifiability analysis for enzyme kinetic parameters, a fundamental challenge persists: determining whether unique, reliable parameter values can be inferred from experimental data [30]. This process, known as identifiability analysis, is a critical gatekeeper before model calibration. Reliable parameter estimation is impossible if the model structure or available data cannot support it, leading to ill-calibrated models with low predictive power and large uncertainty [30].

Identifiability problems manifest in two principal forms. Structural identifiability is a theoretical property of the model structure itself, independent of data quality. It asks whether, given perfect and noise-free data, parameters can be uniquely determined [30] [2]. Practical identifiability considers real-world limitations, such as noisy, sparse, or limited data, and assesses whether the available experimental observations are informative enough to identify the parameters uniquely [30] [2]. A parameter that is practically identifiable is, by definition, structurally identifiable, but the converse is not true [30]. For modern research aiming to use models for discovery and decision-making—such as predicting enzyme function, optimizing bioprocesses, or informing therapeutic strategies—addressing both identifiability types is essential to ensure mechanistic insight and reliable predictions [31].

This comparison guide objectively evaluates established and emerging numerical procedures for conducting local identifiability analysis. It provides a step-by-step workflow contextualized for enzyme kinetics, compares the performance and requirements of key methodologies, and presents supporting experimental data to guide researchers and drug development professionals in selecting and implementing the most appropriate tools for their work.

Comparative Analysis of Numerical Procedures

The table below summarizes the core characteristics of the primary numerical procedures used for local identifiability analysis, facilitating a direct comparison of their approaches, requirements, and outputs.

Table 1: Comparison of Numerical Procedures for Local Identifiability Analysis

| Procedure Name | Core Analytical Basis | Key Outputs | Identifiability Type Addressed | Computational Demand | Primary Software/Implementation |
|---|---|---|---|---|---|
| Numerical Local Approach [30] | Sensitivity & Optimization | Histograms of parameter estimates, correlation matrices, standard deviations | Structural & Practical | High (scales with model complexity & desired accuracy) | Custom MATLAB/Python scripts |
| Profile-Wise Analysis (PWA) [31] | Profile Likelihood | Profile likelihood curves, confidence intervals for parameters and predictions | Primarily Practical | Moderate to High | Custom Python workflow (GitHub available) |
| Fisher Information Matrix (FIM) [2] | Local Curvature of Likelihood | Parameter covariance matrix, coefficient of variation (CV) estimates | Primarily Practical (with caveats) | Low | Built into many fitting tools (e.g., KinTek Explorer [32]) |
| Deep Learning Prediction (CataPro) [33] | Deep Neural Networks | Predicted kcat and Km values, generalizability benchmarks | Provides prior estimates to inform design | Very High (training); Low (deployment) | Python-based CataPro framework |

The Numerical Local Approach (Walter & Pronzato)

This conceptually straightforward procedure is based on generating high-quality synthetic data and testing parameter recoverability [30].

Step-by-Step Workflow:

- Nominal Parameter Selection: Choose a plausible nominal parameter set (θ*).

- Synthetic Data Generation: Use the model with θ* to simulate ideal, noise-free output data (yf).

- Parameter Estimation from Synthetic Data: Attempt to re-estimate the parameters by starting an optimization routine (e.g., simplex) from θ* and fitting to yf.

- Repetition & Analysis: Repeat steps 1-3 for many different nominal parameter values across the plausible space.

- Assessment: Analyze the distributions of the resulting parameter estimates. A parameter is considered structurally locally identifiable if its estimates consistently converge to the nominal value from which the optimization started. For practical identifiability, the steps are repeated using realistic, noisy experimental data [30].
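The five steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the implementation used in [30]: the Michaelis-Menten model, the deliberately redundant variant `mm_redundant`, the substrate levels, and the parameter ranges are all hypothetical. Two deviations from the text are made on purpose: the optimizer starts from a small random perturbation of θ* rather than from θ* itself, so that it has room to move, and SciPy's trust-region `least_squares` solver is swapped in for the simplex routine mentioned above.

```python
import numpy as np
from scipy.optimize import least_squares

# Assumed substrate concentrations (arbitrary units), not from the source.
S = np.array([5.0, 10.0, 25.0, 50.0, 100.0, 250.0, 500.0])

def mm(theta, s):
    """Michaelis-Menten rate; theta = (Vmax, Km)."""
    vmax, km = theta
    return vmax * s / (km + s)

def mm_redundant(theta, s):
    """Hypothetical over-parameterized variant: only k1*k2 enters; theta = (k1, k2, Km)."""
    k1, k2, km = theta
    return k1 * k2 * s / (km + s)

def recover(model, nominals, s, rng, rel_perturb=0.2):
    """Steps 2-3 for each nominal set: simulate noise-free data, then re-estimate."""
    estimates = []
    for theta_star in nominals:
        y_f = model(theta_star, s)  # noise-free synthetic data
        jitter = rel_perturb * rng.uniform(-1.0, 1.0, theta_star.size)
        x0 = theta_star * (1.0 + jitter)  # start near theta* (assumption, see lead-in)
        fit = least_squares(lambda th: model(th, s) - y_f, x0,
                            bounds=(1e-9, np.inf),
                            xtol=1e-12, ftol=1e-12, gtol=1e-12)
        estimates.append(fit.x)
    return np.array(estimates)

rng = np.random.default_rng(0)

# Step 4: many nominal parameter sets across an assumed plausible range.
mm_nominals = rng.uniform([0.5, 10.0], [5.0, 200.0], size=(20, 2))
red_nominals = rng.uniform([0.5, 0.5, 10.0], [5.0, 5.0, 200.0], size=(20, 3))

# Step 5: compare the estimates with the nominal values they should return to.
mm_hat = recover(mm, mm_nominals, S, rng)
red_hat = recover(mm_redundant, red_nominals, S, rng)

mm_err = np.abs(mm_hat - mm_nominals) / mm_nominals
red_err = np.abs(red_hat - red_nominals) / red_nominals
prod_true = red_nominals[:, 0] * red_nominals[:, 1]
prod_err = np.abs(red_hat[:, 0] * red_hat[:, 1] - prod_true) / prod_true

print("MM, worst relative error per parameter:       ", mm_err.max(axis=0))
print("Redundant, worst relative error per parameter:", red_err.max(axis=0))
print("Redundant, worst relative error of k1*k2:     ", prod_err.max())
```

In this sketch the two-parameter model should return to its nominal values, while the redundant model should recover only the product k1·k2 and Km, which is the signature of a structurally unidentifiable pair. For a practical analysis, `y_f` would be replaced by noisy or measured data.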

Supporting Experimental Data: This method was applied to a ping-pong bi-bi kinetic model for an ω-transaminase [30]. The structural analysis confirmed local identifiability, but the practical analysis revealed that high values of the forward rate parameter Vf became unidentifiable, especially at higher substrate concentrations. This finding directly informed experimental design, highlighting the need for measurements at lower substrate ranges to ensure reliable calibration [30].

Profile-Wise Analysis (PWA) & Profile Likelihood

PWA is a unified, likelihood-based workflow that integrates identifiability analysis, parameter estimation, and prediction [31]. Its core is the profile likelihood method, which is strongly recommended for diagnosing practical identifiability [2].

Step-by-Step Workflow:

- Define Likelihood Function: Establish the likelihood p(y|θ) for the observed data y given parameters θ.

- Profile a Parameter: For a parameter of interest θi, fix it at a series of values across its range.

- Optimize Over Nuisance Parameters: At each fixed θi, optimize the likelihood over all other "nuisance" parameters.

- Construct Profile Curve: Plot the optimized likelihood value against the fixed θi value.

- Assessment: A flat profile indicates the parameter is practically unidentifiable, because a wide range of values is equally plausible given the data. A well-defined, peaked profile indicates identifiability. The confidence interval is derived from a threshold on the profile, commonly a chi-squared quantile applied to the likelihood ratio [31] [2].
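A minimal Python sketch of this profiling loop is given below. It is not the PWA code from [31]. It assumes a Michaelis-Menten initial-rate model with Gaussian noise of known standard deviation, and it profiles Km with Vmax as the only nuisance parameter. For this particular model the inner optimization over Vmax has a closed-form least-squares solution; a general model would need a numerical optimizer at that step. To keep the output deterministic, the "data" are the noise-free expected rates, so the intervals reflect the experimental design rather than one noise realization. All numerical values and names are illustrative assumptions.

```python
import numpy as np
from scipy.stats import chi2

def profile_km(s, y, sigma, km_grid):
    """Profile of -2*log-likelihood over Km (shifted so its minimum is 0)."""
    prof = np.empty(km_grid.size)
    for i, km in enumerate(km_grid):
        f = s / (km + s)                # model shape at this fixed Km
        vmax_hat = (y @ f) / (f @ f)    # closed-form optimum of the nuisance parameter
        prof[i] = np.sum((y - vmax_hat * f) ** 2) / sigma**2
    return prof - prof.min()

def confidence_interval(km_grid, prof, level=0.95):
    """Grid points whose profile lies under the chi-squared threshold (1 dof)."""
    inside = km_grid[prof <= chi2.ppf(level, df=1)]
    return inside.min(), inside.max()

# Assumed true values and noise level (illustrative only).
VMAX, KM, SIGMA = 1.0, 50.0, 0.02
km_grid = np.logspace(0, 4, 400)

# Two hypothetical designs: one spanning Km, one with all [S] far below Km.
designs = {
    "wide": np.array([5.0, 10.0, 25.0, 50.0, 100.0, 250.0, 500.0]),
    "low_only": np.array([1.0, 2.0, 3.0, 4.0, 5.0]),
}

intervals = {}
for name, s in designs.items():
    y = VMAX * s / (KM + s)             # noise-free expected rates
    prof = profile_km(s, y, SIGMA, km_grid)
    intervals[name] = confidence_interval(km_grid, prof)
    print(name, intervals[name])
```

With the wide design the interval for Km should close on both sides. With the low-only design the profile stays below the threshold all the way to the upper edge of the grid, which is how a flat, practically unidentifiable profile shows up numerically.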

Supporting Experimental Data: Profile likelihood is effective for complex models like those in systems biology. It is cited as a robust solution to the practical identifiability challenge, overcoming severe shortcomings associated with relying solely on the Fisher Information Matrix (FIM), which can provide misleading results in nonlinear models [2]. The PWA workflow has been demonstrated on ODE models, efficiently producing reliable confidence sets for predictions [31].

Fisher Information Matrix (FIM) Based Approximation

The FIM approximates the curvature of the likelihood function at the optimum and is computationally inexpensive.

Step-by-Step Workflow:

- Parameter Estimation: Find the maximum likelihood parameter estimates (θ̂).

- Calculate FIM: Compute the negative of the matrix of second-order partial derivatives (Hessian) of the log-likelihood at θ̂. Strictly, the FIM is the expectation of this quantity; evaluating it at θ̂ gives the observed information.

- Invert FIM: The inverse of the FIM provides an estimate of the parameter covariance matrix.

- Calculate Coefficients of Variation: The square roots of the diagonal elements of the covariance matrix give standard error estimates, which can be expressed as a percentage of the parameter value (Coefficient of Variation, CV).

- Assessment: A very high CV (e.g., >100%) suggests the parameter may be practically unidentifiable. However, this local linear approximation can be highly misleading for nonlinear models, especially when profiles are flat or non-elliptical [2].
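The FIM steps can be illustrated with a short Python sketch. It assumes a Michaelis-Menten initial-rate model with independent Gaussian noise of known standard deviation, in which case the FIM reduces to JᵀJ/σ², where J holds the sensitivities of the model output to each parameter. The parameter values, noise level, and the two substrate designs are hypothetical, and the estimate θ̂ is simply taken as given rather than fitted.

```python
import numpy as np

def mm_sensitivities(vmax, km, s):
    """Analytic sensitivities of v = Vmax*s/(Km+s) with respect to (Vmax, Km)."""
    d_vmax = s / (km + s)
    d_km = -vmax * s / (km + s) ** 2
    return np.column_stack([d_vmax, d_km])

def fim_cv_percent(theta_hat, s, sigma):
    """CV (%) of each parameter from the inverse FIM, for Gaussian noise."""
    J = mm_sensitivities(theta_hat[0], theta_hat[1], s)
    fim = J.T @ J / sigma**2            # Fisher information matrix
    cov = np.linalg.inv(fim)            # approximate parameter covariance
    return 100.0 * np.sqrt(np.diag(cov)) / np.asarray(theta_hat)

theta_hat = (1.0, 50.0)   # assumed estimate (Vmax, Km)
sigma = 0.02              # assumed measurement noise

s_wide = np.array([5.0, 10.0, 25.0, 50.0, 100.0, 250.0, 500.0])
s_low = np.array([1.0, 2.0, 3.0, 4.0, 5.0])   # all far below Km

cv_wide = fim_cv_percent(theta_hat, s_wide, sigma)
cv_low = fim_cv_percent(theta_hat, s_low, sigma)
print("CV % (Vmax, Km), wide design:", cv_wide)
print("CV % (Vmax, Km), low design: ", cv_low)
```

When all substrate concentrations sit far below Km, the two sensitivity columns become nearly collinear and the CV for Km rises far above 100%. The caveat stated above still applies: these CVs come from a local quadratic approximation and can misrepresent the true uncertainty in strongly nonlinear cases.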

Emerging Approach: Deep Learning for Parameter Prediction

While not an identifiability analysis tool per se, deep learning models like CataPro represent a paradigm shift in addressing the parameter determination challenge [33]. CataPro uses pre-trained protein language models and molecular fingerprints to directly predict kinetic parameters (kcat, Km) from enzyme sequences and substrate structures.

Role in the Workflow: Such tools can provide high-quality prior estimates for parameters, which can be used to inform nominal parameter selection in numerical identifiability procedures or to design more informative experiments by highlighting potentially unidentifiable regions of parameter space.

Supporting Experimental Data: In a benchmark using unbiased datasets clustered to prevent data leakage, CataPro demonstrated superior accuracy and generalization in predicting kcat and Km compared to baseline models [33]. It successfully assisted in discovering and engineering an enzyme with significantly increased activity, validating its practical utility [33].

Detailed Experimental Protocols from Key Studies

Protocol: Isolating Reactions to Cure Identifiability (CD39 Enzyme Kinetics)

Objective: To accurately determine the kinetic parameters (Vmax, Km) for CD39 (NTPDase1), which hydrolyzes ATP to ADP and ADP to AMP, where ADP is both a product and a substrate, leading to inherent identifiability issues [6].

Methodology:

- Problem: A combined model fitting ATP and ADP hydrolysis time-course data simultaneously led to unidentifiable parameters due to strong correlations [6].

- Solution - Experimental Isolation:

- ATPase Parameters: Fit the model (Eq. 3,4 from source [6]) to data from an experiment where the enzyme is spiked with ATP only. Initial conditions: [ATP]=500 µM, [ADP]=[AMP]=0. Only parameters Vmax1 and Km1 are estimated.

- ADPase Parameters: Fit the model to data from an independent experiment where the enzyme is spiked with ADP only. Initial conditions: [ADP]=500 µM, [ATP]=[AMP]=0. Only parameters Vmax2 and Km2 are estimated [6].

- Validation: The independently estimated parameter sets are combined in the full model. Simulations from this model show excellent agreement with time-course data from a separate experiment, confirming the parameters are now reliable and identifiable [6].

Key Finding: Naïve simultaneous fitting of the full model to a single dataset yielded inaccurate and unstable parameter estimates. The isolation strategy ensured all kinetic parameters were theoretically and practically identifiable [6].
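The isolation idea can be sketched in Python as two independent single-substrate fits. This is an illustration only. The rate law used here is a plain irreversible Michaelis-Menten term and ignores any competition or inhibition terms that Eq. 3 and 4 of [6] may contain. The "true" parameter values, the time grid, and the function names are hypothetical, and the traces are simulated rather than measured.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import least_squares

def substrate_curve(vmax, km, s0, t):
    """Integrate d[S]/dt = -Vmax*[S]/(Km+[S]) from [S](0) = s0 and sample at t."""
    sol = solve_ivp(lambda _t, s: -vmax * s / (km + s), (t[0], t[-1]), [s0],
                    t_eval=t, rtol=1e-9, atol=1e-9)
    return sol.y[0]

def fit_isolated(t, s_obs, s0, guess):
    """Estimate (Vmax, Km) from one substrate-depletion trace."""
    fit = least_squares(
        lambda th: substrate_curve(th[0], th[1], s0, t) - s_obs,
        guess, bounds=(1e-6, np.inf))
    return fit.x

t = np.linspace(0.0, 120.0, 41)   # assumed sampling times (min)
S0 = 500.0                        # µM, as in the protocol's initial conditions

# Hypothetical "true" values used only to generate synthetic traces.
TRUE_ATPASE = (20.0, 100.0)       # (Vmax1 in µM/min, Km1 in µM)
TRUE_ADPASE = (8.0, 150.0)        # (Vmax2 in µM/min, Km2 in µM)

atp_trace = substrate_curve(*TRUE_ATPASE, S0, t)   # ATP-only experiment
adp_trace = substrate_curve(*TRUE_ADPASE, S0, t)   # ADP-only experiment

# Each experiment constrains only its own pair of parameters.
vmax1, km1 = fit_isolated(t, atp_trace, S0, guess=(10.0, 50.0))
vmax2, km2 = fit_isolated(t, adp_trace, S0, guess=(10.0, 50.0))
print("ATPase:", vmax1, km1)
print("ADPase:", vmax2, km2)
```

Because each fit involves two parameters and one trace, the strong cross-correlations that arise when all four parameters are fitted to a single ATP time course do not enter. The validation step in the protocol would then combine both pairs in the full model and compare against an independent dataset.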

Protocol: A Comprehensive Workflow for Microbial Community Models

Objective: To identify dynamic ODE models of microbial communities while systematically addressing pitfalls of identifiability, blow-up, underfitting, and overfitting [34].

Methodology: This integrated workflow consists of sequential analysis phases [34]:

- Structural Identifiability Analysis: Apply a differential geometry (e.g., STRIKE-GOLDD), differential algebra, or generating series approach to check if parameters are uniquely determinable from perfect data.

- Practical Identifiability & Global Optimization: Use multi-start deterministic global optimization (e.g., scatter search) for model calibration. Assess practical identifiability via parameter histograms from multi-start runs and profile likelihood.

- Stability Check: Analyze the Jacobian of the calibrated model to ensure equilibrium points are stable and biologically plausible, avoiding "blow-up" solutions.

- Predictive Power Assessment: Validate the model on unseen experimental data not used for calibration to guard against overfitting.

Key Finding: This systematic workflow mitigates the risk of deriving unreliable models and is demonstrated on case studies of increasing complexity, such as Generalized Lotka-Volterra models [34].
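The stability check in the workflow above can be sketched for a two-species Generalized Lotka-Volterra model, dx_i/dt = x_i (r_i + Σ_j A_ij x_j). This is a minimal illustration and not the code from [34]. The growth rates and interaction matrices are hypothetical, and only the coexistence equilibrium is examined.

```python
import numpy as np

def glv_equilibrium(r, A):
    """Coexistence equilibrium x* solving r + A x* = 0."""
    return np.linalg.solve(A, -r)

def glv_jacobian(x, r, A):
    """Jacobian of f_i(x) = x_i * (r_i + sum_j A_ij x_j) evaluated at x."""
    return np.diag(r + A @ x) + np.diag(x) @ A

def is_stable(J):
    """Locally asymptotically stable if every eigenvalue has a negative real part."""
    return bool(np.all(np.linalg.eigvals(J).real < 0))

r = np.array([1.0, 0.8])

# Hypothetical calibrated interaction matrices.
A_weak = np.array([[-1.0, -0.3],
                   [-0.2, -1.0]])     # weak competition between species
A_strong = np.array([[-1.0, -1.5],
                     [-1.5, -1.0]])   # cross-competition exceeds self-limitation

x_weak = glv_equilibrium(r, A_weak)
stable_weak = is_stable(glv_jacobian(x_weak, r, A_weak))

r_sym = np.array([1.0, 1.0])
x_strong = glv_equilibrium(r_sym, A_strong)
stable_strong = is_stable(glv_jacobian(x_strong, r_sym, A_strong))

print("weak competition:  x* =", x_weak, "stable:", stable_weak)
print("strong competition: x* =", x_strong, "stable:", stable_strong)
```

A calibrated parameter set that yields a negative equilibrium abundance or an eigenvalue with a positive real part would be flagged at this stage, before the model is used for prediction.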

Visual Workflow and Procedural Diagrams

Diagram 1: Decision Workflow for Identifiability Analysis

Diagram 2: Step-by-Step Procedural Comparison of Core Methods

Table 2: Key Research Reagent Solutions for Identifiability Analysis

| Tool / Resource | Type | Primary Function in Identifiability Analysis | Key Features / Notes |
|---|---|---|---|
| KinTek Explorer [32] [35] | Commercial Software | Model simulation & fitting; provides error analysis (FIM-based). | Real-time simulation; domain-optimized fitting for kinetics; confidence contours. Useful for initial exploration. |
| ICEKAT [36] | Free Web Tool | Data preprocessing for reliable initial rate calculation. | Semi-automates initial rate determination from kinetic traces, reducing bias in the primary data fed to models. |
| Custom PWA Workflow [31] | Open-Source Code (GitHub) | Implements the Profile-Wise Analysis workflow. | Unifies profile likelihood-based identifiability, estimation, and prediction. Code is available for replication. |
| CataPro Deep Learning Model [33] | AI Prediction Framework | Provides prior parameter estimates to inform analysis and design. | Predicts kcat and Km from sequence/structure; helps set plausible parameter ranges and anticipate issues. |
| MATLAB / Python (Custom Scripts) | Programming Environment | Implement numerical local approach, profile likelihood, etc. | Maximum flexibility. Walter & Pronzato method [30] and CD39 protocol [6] were implemented in MATLAB. |

A vast repository of enzyme kinetic measurements—spanning parameters like kcat and Km—remains buried within decades of scientific literature, constituting what researchers have termed the "dark matter" of enzymology [37]. Manually curating this data is prohibitively slow, creating a bottleneck for fields that depend on high-quality, large-scale kinetic data. This gap directly impacts identifiability analysis, a critical step in building robust mathematical models of biological systems. Identifiability analysis determines whether unique, reliable values for kinetic parameters can be derived from experimental data, a prerequisite for predictive simulation and engineering [6].

The emergence of large language models (LLMs) offers a transformative solution. This guide objectively evaluates EnzyExtract, an LLM-powered pipeline designed to automate the extraction and structuring of enzyme kinetic data from full-text publications [37] [38]. We compare its performance against traditional alternatives and provide detailed experimental data, framing the discussion within the essential context of parameter identifiability in enzyme kinetics research.

The utility of a kinetic data source is measured by its scale, accuracy, and readiness for computational modeling. The following table summarizes a quantitative comparison based on reported benchmarks [37] [38].

Table 1: Comparative Performance of Enzyme Kinetic Data Sources

| Data Source | Scale (Entries) | Key Coverage Metric | Automation Level | Primary Use Case |
|---|---|---|---|---|
| EnzyExtract | >218,000 kcat/Km entries [37] | 94,576 entries absent from BRENDA [38] | Full automation (LLM pipeline) | Large-scale model training, dataset expansion |
| Manual Curation (e.g., BRENDA) | ~1.8 million entries (total) | Gold standard for known data | Manual expert curation | Reference database, targeted queries |
| Graphical/Linear Estimation [6] | Single studies | Parameter sets for specific enzymes | Manual digitization & fitting | Individual enzyme studies (potentially error-prone) |
| Focused Auto-Extraction Tools | Variable, typically smaller | High precision for defined fields | Semi-automated (rule-based) | Extracting data from specific journal formats |