PROBE Design Mastery: A Complete Guide to Rigorous Biomarker Validation for Drug Development

Abstract

This guide explains how to design and execute Prospective, Randomized, Open-label, Blinded Endpoint (PROBE) studies for robust biomarker validation. Written for researchers and drug development professionals, it covers the rationale for preferring PROBE over observational designs, provides a step-by-step methodological blueprint, addresses common pitfalls and optimization strategies, and compares PROBE with alternative trial designs such as double-blind RCTs. It synthesizes current best practices to help teams generate high-quality, clinically actionable biomarker data that meets regulatory standards and supports precision medicine.

Why PROBE? The Foundational Rationale for Superior Biomarker Study Design

In biomarker validation, the PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) design is a key methodological paradigm. It is engineered to mitigate bias, particularly assessment bias, in trials evaluating biomarker performance or biomarker-guided therapeutic efficacy. It is especially useful where blinding the intervention is impractical or unethical. The core principle is the separation of the treatment administration phase from the endpoint evaluation phase, with the latter conducted under rigorous blinding.

Core Principles

The PROBE design is governed by four interconnected principles:

- Prospective: The trial protocol, including hypotheses, biomarker assays, primary endpoints, and analysis plans, is finalized before participant enrollment begins.

- Randomized: Participants are randomly assigned to study arms to minimize selection bias and ensure group comparability.

- Open-label: The treatment assignment is known to both the investigator and the participant during the treatment phase.

- Blinded Endpoint: The assessment of the primary outcome measure is performed by adjudicators or committees who are blinded to the treatment allocation.

Structural Framework

The operational structure of a PROBE trial follows a sequential, partitioned workflow to enforce the blinding principle.

Application Notes for Biomarker Validation

In biomarker research, PROBE designs are frequently applied in two key scenarios:

- Diagnostic Accuracy Studies: Evaluating a novel biomarker's ability to predict a clinical outcome, where the biomarker measurement is open-label but the outcome assessment is blinded.

- Biomarker-Stratified Therapy Trials: Assessing the clinical utility of a biomarker to guide treatment choices, where treatment is openly assigned based on biomarker status, but the subsequent clinical evaluation is blinded.

Table 1: Comparative Merits of Trial Designs for Biomarker Validation

| Design Feature | PROBE Design | Double-Blind RCT | Observational Study |

|---|---|---|---|

| Control of Intervention Bias | Moderate (Open-label) | High | Low |

| Control of Assessment Bias | High (Blinded Endpoint) | High | Low |

| Feasibility (cost-efficiency) | High | Moderate to Low | High |

| Ethical Acceptability | High (when blinding Tx is unethical) | High | High |

| Primary Use Case | Biomarker utility; surgical/device trials | Drug efficacy/safety | Hypothesis generation |

Experimental Protocols

Protocol 1: Establishing a Blinded Endpoint Adjudication Committee (BEAC)

Objective: To form and operationalize an independent committee for blinded endpoint assessment in a PROBE trial validating a prognostic biomarker for major adverse cardiac events (MACE).

Materials: See "Scientist's Toolkit" below.

Methodology:

- Committee Formation: Recruit 3-5 independent clinical experts not involved in trial conduct. Document conflicts of interest.

- Charter Development: Collaboratively draft a BEAC charter defining:

  - Endpoint definitions (e.g., precise MI criteria).

  - Adjudication process (primary reviewer, full committee review for discordance).

  - Voting rules.

  - Data access guidelines.

- Blinding Procedure:

  - The trial coordinating center prepares endpoint packages.

  - All explicit treatment identifiers, biomarker results, and institution names are redacted.

  - Packages are assigned a random adjudication ID.

- Adjudication Workflow:

  - Packages are distributed electronically via a secure portal.

  - Each case is independently reviewed by two BEAC members.

  - Concordant reviews are accepted. Discordant reviews trigger full committee discussion and vote.

  - Final classifications are recorded in a password-protected log linked only by adjudication ID.
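The adjudication workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Review` record, the `adjudicate` function, and the simple-majority rule for the committee vote are all assumptions made for the example, since the actual voting rules are whatever the BEAC charter defines.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    adjudication_id: str  # random ID on the redacted package (hypothetical format)
    reviewer: str
    classification: str   # e.g. "MI" or "no_event"


def adjudicate(primary, secondary, committee_votes=None):
    """Return the final classification for one blinded endpoint package."""
    if primary.adjudication_id != secondary.adjudication_id:
        raise ValueError("reviews refer to different packages")
    # Concordant independent reviews are accepted as-is.
    if primary.classification == secondary.classification:
        return primary.classification
    # Discordant reviews go to the full committee (assumed: simple majority).
    if not committee_votes:
        raise ValueError("discordant reviews need a full committee vote")
    counts = Counter(committee_votes).most_common()
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        raise ValueError("tied vote; the charter must define a tie-break")
    return counts[0][0]


r1 = Review("ADJ-0042", "reviewer_a", "MI")
r2 = Review("ADJ-0042", "reviewer_b", "no_event")
final = adjudicate(r1, r2, committee_votes=["MI", "MI", "no_event"])
concordant = adjudicate(r1, Review("ADJ-0042", "reviewer_c", "MI"))
```

Note that the function only ever sees the adjudication ID, never a patient or treatment identifier, which mirrors the redaction step in the blinding procedure.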

Protocol 2: Integrating Biomarker Assay with PROBE Framework

Objective: To systematically collect, process, and analyze biomarker samples within a PROBE trial for peripheral blood protein biomarker validation.

Methodology:

- Sample Collection: Collect peripheral blood in serum tubes at predefined visits post-randomization. Process (clot, centrifuge) within 2 hours.

- Blinding & Storage: Aliquot serum into 3+ cryovials. Label with a unique, blinded Sample ID (unlinked to treatment in the sample database). Store at -80°C in a central biorepository.

- Blinded Analysis: Ship blinded batches to the designated core laboratory. Analyze using a pre-validated, quantitative assay (e.g., ELISA). The core lab reports results using only the blinded Sample ID.

- Data Integration: The trial statistician receives the biomarker result file (Sample ID + concentration) and the clinical database (Patient ID + adjudicated endpoints + treatment). The two datasets are merged via a master linkage file held by an independent data manager only after endpoint adjudication is complete.
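The blinding and data-integration steps above can be illustrated with a short sketch. Everything here is hypothetical: the `S-` sample ID format, the function names, and the in-memory dictionaries standing in for the sample database, the core-lab result file, and the clinical database. The point is only that the linkage file is the single place where blinded Sample IDs meet Patient IDs, and that it is released after adjudication is complete.

```python
import secrets


def assign_blinded_ids(patient_ids):
    """Build a linkage map {sample_id: patient_id} using random sample IDs."""
    linkage = {}
    for pid in patient_ids:
        sid = "S-" + secrets.token_hex(4)
        while sid in linkage:  # regenerate on the (unlikely) collision
            sid = "S-" + secrets.token_hex(4)
        linkage[sid] = pid
    return linkage


def merge_after_adjudication(linkage, biomarker_results, clinical_db,
                             adjudication_complete):
    """Join assay results to clinical data, gated on adjudication status."""
    if not adjudication_complete:
        raise PermissionError(
            "linkage file is released only after endpoint adjudication")
    merged = []
    for sid, concentration in biomarker_results.items():
        pid = linkage[sid]
        merged.append({"patient_id": pid,
                       "concentration": concentration,
                       **clinical_db[pid]})
    return merged


linkage = assign_blinded_ids(["P001", "P002"])
# The core lab reports results keyed only by blinded Sample ID.
biomarker_results = {sid: 10.0 + i for i, sid in enumerate(linkage)}
clinical_db = {
    "P001": {"treatment": "A", "endpoint": "MACE"},
    "P002": {"treatment": "B", "endpoint": "no_event"},
}
merged = merge_after_adjudication(linkage, biomarker_results, clinical_db,
                                  adjudication_complete=True)
```

In a real trial the gate would be an organizational control (the independent data manager), not a boolean flag; the flag simply makes the ordering constraint explicit.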

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for PROBE Biomarker Studies

| Item | Function & Specification |

|---|---|

| Stable Isotope-Labeled Peptide Standards | For mass spectrometry-based biomarker assays; enables precise, multiplexed absolute quantification of target proteins in complex samples. |

| Validated ELISA/Single Molecule Array (Simoa) Kits | For high-sensitivity quantification of low-abundance protein biomarkers in serum/plasma; provides robust reproducibility essential for longitudinal analysis. |

| Liquid Biopsy Collection Tubes (e.g., cfDNA/ctDNA) | Preserves cell-free nucleic acids in blood for downstream genomic biomarker analysis (e.g., NGS), ensuring pre-analytical stability. |

| Electronic Data Capture (EDC) System with Audit Trail | Securely captures case report form data, including endpoint information pre-adjudication, ensuring data integrity and traceability. |

| Secure, IRB-Compliant Cloud Repository | Hosts blinded endpoint packages for BEAC access; features role-based permissions, encryption, and access logging. |

| Laboratory Information Management System (LIMS) | Tracks blinded biomarker samples throughout their lifecycle, managing chain of custody and linking blinded IDs to assay results. |

Relative to the PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) paradigm for biomarker validation, reliance on observational studies leaves a critical, high-risk methodological gap. While cost-effective for hypothesis generation, their inherent biases (confounding, reverse causation, and spectrum bias) render them inadequate for definitive validation and contribute to costly failures in translational research and clinical development. This section outlines the evidential hierarchy and provides protocols to bridge this gap through rigorous prospective validation.

Quantitative Evidence: Observational vs. Prospective Study Outcomes

Table 1: Comparison of Biomarker Performance in Observational vs. Prospective Validation Studies

| Biomarker & Intended Use | Observational Study Reported Performance (AUC/Sensitivity/Specificity) | Prospective PROBE-aligned Validation Study Performance | Key Discrepancy & Identified Bias |

|---|---|---|---|

| Serum Protein X for Early Cancer Detection | AUC: 0.92 (95% CI: 0.88-0.96) in case-control study | AUC: 0.65 (95% CI: 0.58-0.72) in PRoBE-design screening cohort | Spectrum bias; controls in observational study were healthy volunteers, not the true screening population with benign conditions. |

| Genomic Signature Y for Prognosis in Breast Cancer | Hazard Ratio (HR): 2.8 (CI: 2.1-3.7) in retrospective tumor registry analysis | HR: 1.4 (CI: 1.0-1.9) in prospective cohort study | Confounding by treatment and stage; retrospective analysis failed to adjust for unequal access to therapies. |

| Circulating miRNA Z for Alzheimer's Disease Progression | Correlation coefficient (r): -0.76 with cognitive score in prevalent cases | Correlation coefficient (r): -0.31 in longitudinal cohort of pre-symptomatic individuals | Overfitting and reverse causation; low biomarker levels in observational study were a consequence of disease, not a predictor. |

Table 2: Common Biases in Observational Biomarker Studies and Their Impact

| Bias Type | Frequency in Literature (Estimate) | Typical Impact on Performance Metrics | Mitigation Strategy (PROBE element) |

|---|---|---|---|

| Spectrum Bias | ~40-60% of diagnostic studies | Inflates sensitivity & specificity | Use of inception cohort representing clinical population (Prospective, Endpoint) |

| Confounding by Treatment | ~30-50% of prognostic studies | Alters hazard ratios and predictive values | Randomized treatment allocation, adjustment in analysis (Randomized) |

| Overfitting | ~50-70% of omics-based studies | Dramatic reduction in AUC upon validation | Pre-specified analysis plan, independent test set (Prospective) |

| Pre-analytical Variability | Ubiquitous, often underreported | Introduces noise, reduces reproducibility | Standardized SOPs for sample collection/processing (Protocol-driven) |

Core Experimental Protocols for PROBE-aligned Validation

Protocol 1: Prospective Specimen Collection, Retrospective Blinded Evaluation (PRoBE) Framework

Objective: To validate a diagnostic biomarker in a study design that minimizes bias by collecting specimens from a clinically relevant cohort prior to outcome ascertainment.

Materials: See "Research Reagent Solutions" below.

Workflow:

- Cohort Definition: Precisely define the clinical population (e.g., symptomatic patients entering a diagnostic workup for condition X).

- Informed Consent & Enrollment: Obtain consent for biomarker testing and follow-up. Enroll consecutive eligible patients.

- Standardized Biospecimen Collection: Collect samples (e.g., blood, tissue) using a validated, locked SOP before definitive disease status is known. Process and aliquot uniformly.

- Clinical Endpoint Ascertainment: Establish the final diagnosis ("ground truth") via a gold-standard method (e.g., histopathology, clinical follow-up) after sample collection. This is the "Endpoint".

- Blinded Assay Performance: After endpoints are fixed, analyze the archived specimens in a batch, with technicians blinded to the clinical outcome data ("Blinded").

- Statistical Analysis: Compare biomarker results against the pre-specified endpoints to calculate clinical performance metrics (sensitivity, specificity, AUC, PPV, NPV).
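The performance metrics named in the statistical analysis step can be computed directly from biomarker values and adjudicated disease status. The sketch below is illustrative only: the six data points and the 0.6 cut-off are invented, a higher value is assumed to indicate disease, and the AUC is computed as the Mann-Whitney probability that a diseased case scores higher than a non-diseased one (ties count as one half).

```python
def diagnostic_metrics(values, has_disease, cutoff):
    """Sensitivity, specificity, PPV, and NPV at a pre-specified cut-off."""
    tp = fp = tn = fn = 0
    for value, diseased in zip(values, has_disease):
        test_positive = value >= cutoff
        if test_positive and diseased:
            tp += 1
        elif test_positive:
            fp += 1
        elif diseased:
            fn += 1
        else:
            tn += 1
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def auc(values, has_disease):
    """Area under the ROC curve via pairwise comparison of cases and controls."""
    cases = [v for v, d in zip(values, has_disease) if d]
    controls = [v for v, d in zip(values, has_disease) if not d]
    wins = sum(1.0 if c > k else 0.5 if c == k else 0.0
               for c in cases for k in controls)
    return wins / (len(cases) * len(controls))


# Invented example data: biomarker level and gold-standard diagnosis.
values = [0.2, 0.4, 0.5, 0.7, 0.8, 0.9]
has_disease = [False, False, True, False, True, True]

metrics = diagnostic_metrics(values, has_disease, cutoff=0.6)
roc_auc = auc(values, has_disease)
```

The cut-off is passed in as an argument on purpose: in a PRoBE-style study it should be fixed before the blinded assay results are compared with the endpoints, not tuned on the validation data.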

Protocol 2: Randomized Controlled Trial (RCT) Embedded Biomarker Validation

Objective: To validate a predictive biomarker (identifying responders to Therapy A) within an RCT to avoid confounding by treatment.

Workflow:

- Trial Design: Design a Phase II/III RCT comparing Therapy A vs. Standard of Care (SoC) or placebo.

- Pre-Randomization Sampling: Collect baseline biospecimens from all consented patients prior to randomization.

- Randomization & Treatment: Randomize patients to treatment arms ("Randomized").

- Endpoint Adjudication: Assess primary clinical endpoint (e.g., progression-free survival) via a blinded endpoint review committee.

- Biomarker Analysis: Perform biomarker assay on baseline specimens in a central lab, blinded to both treatment arm and outcome.

- Interaction Analysis: Statistically test for a significant treatment-by-biomarker interaction. A true predictive biomarker will show treatment benefit primarily in the biomarker-positive group.

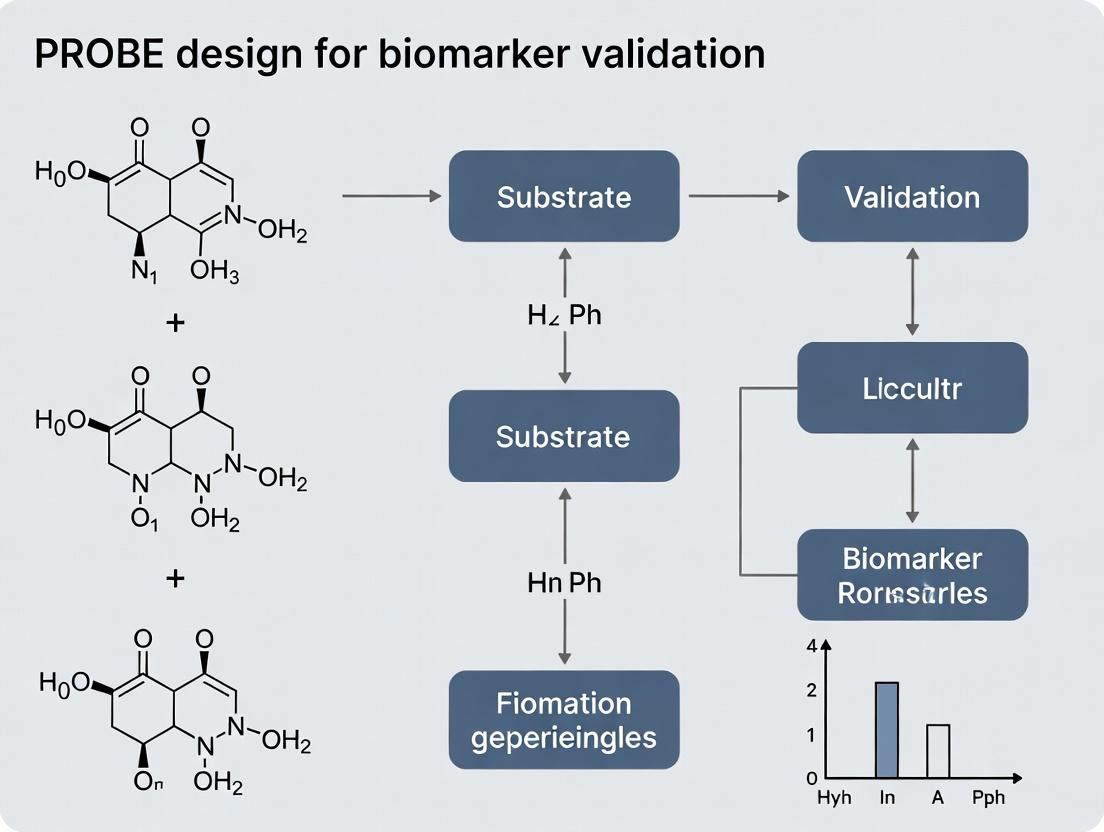

Pathway and Workflow Visualizations

Title: The Biomarker Development Pathway: Gap and Bridge

Title: PRoBE Design Validation Workflow

Title: RCT-Embedded Predictive Biomarker Analysis

Research Reagent Solutions

Table 3: Essential Toolkit for Biomarker Validation Studies

| Item / Reagent Category | Example Product/Kit | Primary Function in Validation | Critical Quality Attribute |

|---|---|---|---|

| Biospecimen Collection System | PAXgene Blood RNA tubes, Streck cfDNA BCTs | Standardizes pre-analytical variables for genomic/transcriptomic analysis | Preserves analyte integrity for 24-72h at room temp. |

| Multiplex Immunoassay Platform | Luminex xMAP, Meso Scale Discovery (MSD) U-PLEX | Quantifies multiple protein biomarkers simultaneously from low-volume samples | High dynamic range, validated cross-reactivity profiles. |

| Digital PCR System | Bio-Rad QX200, Thermo Fisher QuantStudio 3D | Absolute quantification of rare mutations or gene copies; low abundance targets | Precision at low copy number (<5 copies/μL). |

| NGS Library Prep Kit | Illumina TruSeq, Twist Bioscience Panels | Target enrichment and sequencing library construction for genomic biomarkers | Uniform coverage, low duplicate read rate. |

| Automated Nucleic Acid Extractor | QIAGEN QIAsymphony, MagNA Pure 24 | High-throughput, reproducible isolation of DNA/RNA from diverse sample matrices | Consistent yield, removal of PCR inhibitors. |

| Statistical Analysis Software | R (pROC, survival packages), SAS JMP Clinical | For power calculation, biomarker performance analysis, and survival modeling | Reproducible scripting, validated algorithms. |

| Sample Tracking LIMS | Freezerworks, LabVantage | Maintains chain of custody and links de-identified samples to clinical data | 21 CFR Part 11 compliance, audit trail. |

Within the framework of PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) studies for biomarker validation, three distinct contexts are paramount: predictive, prognostic, and pharmacodynamic. These contexts define a biomarker's clinical and research utility. Predictive biomarkers forecast response to a specific therapy; prognostic biomarkers provide information on the likely disease course independent of treatment; and pharmacodynamic (PD) biomarkers indicate biological activity following a therapeutic intervention and are often used to establish proof-of-mechanism.

Key Biomarker Contexts: Definitions and Distinctions

Table 1: Core Characteristics and Applications of Biomarker Contexts in PROBE Studies

| Context | Primary Question | Typical PROBE Study Design Role | Key Statistical Endpoint | Common Example |

|---|---|---|---|---|

| Predictive | Will this patient respond to Treatment A vs. B? | Stratification or enrichment factor in randomized controlled trial (RCT) | Treatment-by-biomarker interaction p-value | KRAS mutation status for anti-EGFR therapy in CRC |

| Prognostic | What is this patient's likely disease outcome? | Baseline covariate for risk adjustment in RCT | Hazard Ratio (HR) for biomarker-high vs. biomarker-low groups | 70-gene signature (MammaPrint) for breast cancer recurrence |

| Pharmacodynamic | Did the drug hit its intended target? | Early endpoint for proof-of-biology in Phase I/II trials | Change from baseline in biomarker level (p-value, effect size) | pERK reduction after MEK inhibitor treatment |

Application Notes & Protocols

Predictive Biomarker Validation Protocol

Objective: To validate a candidate biomarker's ability to differentially predict clinical benefit from Investigational Product (IP) vs. control therapy within a Phase III PROBE-design RCT.

Workflow:

- Pre-Analytical Phase: Standardized collection of baseline tumor tissue (FFPE or fresh frozen) prior to randomization.

- Assay Phase: Centralized analysis in a CLIA-certified laboratory using a validated assay (e.g., NGS, IHC, FISH) to classify patients as biomarker-positive or -negative.

- Blinding: Biomarker status is kept blinded to clinicians, patients, and clinical endpoint assessors.

- Randomization & Treatment: Patients are randomized to IP or control, with potential for stratified randomization based on biomarker status.

- Analysis: Primary analysis tests for a significant interaction between treatment arm and biomarker status on the primary clinical endpoint (e.g., Overall Survival).
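Stratified randomization by biomarker status, mentioned in the workflow above, is commonly implemented with permuted blocks within each stratum. The following is a minimal sketch under stated assumptions: a 1:1 allocation, a block size of 4, a fixed seed for reproducibility of the example, and hypothetical patient IDs and stratum labels. A production system would run centrally with concealed allocation.

```python
import random


def stratified_block_randomization(patients, block_size=4, seed=2024):
    """Assign arms 1:1 using permuted blocks within each stratum.

    patients: iterable of (patient_id, stratum) in enrollment order.
    Returns {patient_id: "IP" or "control"}.
    """
    if block_size % 2:
        raise ValueError("block_size must be even for 1:1 allocation")
    rng = random.Random(seed)
    open_blocks = {}  # stratum -> assignments left in the current block
    allocation = {}
    for patient_id, stratum in patients:
        block = open_blocks.setdefault(stratum, [])
        if not block:  # start a new shuffled block for this stratum
            block.extend(["IP", "control"] * (block_size // 2))
            rng.shuffle(block)
        allocation[patient_id] = block.pop()
    return allocation


# Hypothetical enrollment: 8 biomarker-positive and 8 biomarker-negative patients.
patients = [(f"P{i:03d}", "biomarker+" if i % 2 else "biomarker-")
            for i in range(1, 17)]
allocation = stratified_block_randomization(patients)

ip_in_positive = sum(1 for pid, stratum in patients
                     if stratum == "biomarker+" and allocation[pid] == "IP")
ip_in_negative = sum(1 for pid, stratum in patients
                     if stratum == "biomarker-" and allocation[pid] == "IP")
```

Because each stratum fills complete blocks in this example, both biomarker groups end up with exactly four patients per arm, which is the balance that stratification is meant to protect.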

Table 2: Hypothetical Predictive Biomarker Analysis Results

| Biomarker Group | Therapy Arm | N | Median OS (months) | Hazard Ratio (95% CI) | Interaction P-value |

|---|---|---|---|---|---|

| Biomarker+ | Investigational | 150 | 22.1 | 0.45 (0.32-0.63) | 0.002 |

| Biomarker+ | Control | 145 | 12.4 | Reference | |

| Biomarker- | Investigational | 200 | 14.3 | 0.95 (0.71-1.27) | |

| Biomarker- | Control | 205 | 13.8 | Reference | |
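When only subgroup summaries are available, the treatment-by-biomarker interaction can be approximated by comparing the two subgroup log hazard ratios. The sketch below applies this summary-level test to the hypothetical hazard ratios in the table above. It is an approximation, assuming the confidence intervals are symmetric on the log scale and the subgroups are independent; the primary analysis described in the protocol would instead fit a model with an interaction term on patient-level data, so the p-value here need not reproduce the illustrative one in the table.

```python
import math


def log_hr_and_se(hr, ci_low, ci_high, z_crit=1.959964):
    """Recover log(HR) and its standard error from a 95% confidence interval."""
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * z_crit)
    return math.log(hr), se


def interaction_test(subgroup_a, subgroup_b):
    """Compare two subgroup hazard ratios given as (HR, CI low, CI high).

    Returns (ratio of hazard ratios, z statistic, two-sided p-value).
    """
    log_a, se_a = log_hr_and_se(*subgroup_a)
    log_b, se_b = log_hr_and_se(*subgroup_b)
    diff = log_a - log_b
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    z = diff / se_diff
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return math.exp(diff), z, p_value


# Hypothetical values taken from the table: Biomarker+ and Biomarker- subgroups.
ratio, z, p_value = interaction_test((0.45, 0.32, 0.63), (0.95, 0.71, 1.27))
print(f"ratio of HRs = {ratio:.2f}, z = {z:.2f}, p = {p_value:.4f}")
```

A ratio of hazard ratios well below 1 with a small p-value is the pattern expected of a predictive biomarker: the treatment effect differs between biomarker groups, rather than merely being significant in one of them.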

Prognostic Biomarker Validation Protocol

Objective: To independently establish a biomarker's association with disease outcome irrespective of therapy in a well-defined, uniformly treated cohort.

Protocol:

- Cohort Selection: Use archival samples from a completed clinical trial or large observational cohort with uniform initial treatment (e.g., adjuvant chemotherapy).

- Biomarker Assay: Perform blinded biomarker assessment on all eligible samples.

- Clinical Data Linkage: Link biomarker data to long-term clinical follow-up data (e.g., DFS, OS).

- Statistical Analysis: Use Cox proportional hazards models to evaluate the association between baseline biomarker level and clinical outcome, adjusting for established clinicopathological factors (e.g., age, stage).

Pharmacodynamic Biomarker Protocol

Objective: To demonstrate target engagement and modulation of a downstream pathway following drug administration in a Phase I trial.

Experimental Methodology:

- Pre- and Post-Treatment Sampling: Obtain paired tissue (e.g., tumor biopsy, skin punch) or liquid biopsy (e.g., plasma, PBMCs) at baseline (pre-dose) and at a defined time post-dose (e.g., Cycle 1 Day 15).

- Assay Application: Utilize highly specific, quantitative assays (e.g., phospho-specific flow cytometry, immunoassay for cleaved caspase-3, LC-MS/MS for metabolite levels).

- Dose-Response Analysis: Correlate the magnitude of biomarker change with drug dose/exposure (AUC).

- Reporting: Express results as fold-change or absolute change from baseline. Statistical significance is assessed via paired t-test or Wilcoxon signed-rank test.

Table 3: Example Pharmacodynamic Biomarker Data (Tumor Phosphoprotein)

| Patient Cohort | Dose Level | N (paired) | Mean Baseline pS6 (AU) | Mean Post-Treatment pS6 (AU) | Mean % Reduction | P-value (paired) |

|---|---|---|---|---|---|---|

| Dose Escalation | 50 mg QD | 5 | 12.4 ± 3.1 | 10.1 ± 2.8 | 18.5% | 0.12 |

| Dose Escalation | 200 mg QD | 6 | 11.8 ± 2.5 | 4.2 ± 1.7 | 64.4% | 0.004 |

| Dose Expansion | 200 mg QD | 15 | 13.1 ± 3.8 | 5.0 ± 2.1 | 61.8% | <0.001 |
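The percent reductions in the table can be reproduced from the group means, and a paired test statistic can be computed from per-patient values. In the sketch below, the mean values come from the table, while the per-patient paired measurements are invented for illustration; only the t statistic and degrees of freedom are computed, since looking up the p-value requires a t distribution from a statistics package.

```python
import math
import statistics


def percent_reduction(baseline_mean, post_mean):
    """Percent reduction from baseline, computed from group means."""
    return 100.0 * (baseline_mean - post_mean) / baseline_mean


def paired_t_statistic(baseline, post):
    """Paired t statistic and degrees of freedom for pre/post measurements."""
    diffs = [b - p for b, p in zip(baseline, post)]
    n = len(diffs)
    t = statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1


# Group means (baseline, post-treatment pS6 in AU) from the table above.
table_means = {
    "Dose Escalation, 50 mg QD": (12.4, 10.1),
    "Dose Escalation, 200 mg QD": (11.8, 4.2),
    "Dose Expansion, 200 mg QD": (13.1, 5.0),
}
reductions = {cohort: round(percent_reduction(*means), 1)
              for cohort, means in table_means.items()}

# Invented per-patient paired values for one cohort (illustration only).
baseline = [12.0, 11.5, 13.2, 10.8, 12.6, 11.1]
post = [4.0, 5.1, 4.4, 3.2, 5.0, 3.9]
t_stat, df = paired_t_statistic(baseline, post)
```

Note that a reduction computed from group means is not the same quantity as the mean of per-patient reductions; a report should state which one is shown.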

Visualizations

Title: Three Key Biomarker Contexts and Their Uses

Title: Pharmacodynamic Biomarker Concept in Target Engagement

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents for Biomarker Research in PROBE Contexts

| Reagent / Material | Provider Examples | Primary Function in Biomarker Workflow |

|---|---|---|

| Validated IHC Antibody Clones | Cell Signaling Tech, Dako (Agilent), Abcam | Detection and quantification of protein-level predictive/PD biomarkers (e.g., PD-L1, pERK) in FFPE tissue with known clinical cut-offs. |

| NGS Panels (DNA/RNA) | Illumina (TruSight), Thermo Fisher (Oncomine), Foundation Medicine | Comprehensive profiling for predictive somatic mutations, fusions, and prognostic gene signatures from limited tissue. |

| Liquid Biopsy ctDNA Kits | Roche (AVENIO), Bio-Rad (ddPCR), Qiagen | Non-invasive serial monitoring for predictive mutation status and early pharmacodynamic changes in tumor-derived DNA. |

| Multiplex Immunoassay Panels | MSD (Meso Scale Discovery), Luminex, Olink | High-sensitivity, simultaneous quantification of dozens of soluble PD biomarkers (e.g., cytokines, phosphoproteins) in serum/plasma. |

| Stable Isotope-Labeled Internal Standards | Cambridge Isotope Labs, Sigma-Aldrich | Absolute quantification of metabolite or small-molecule PD biomarkers via LC-MS/MS for rigorous pharmacokinetic-PD modeling. |

| Digital Pathology Imaging & Analysis Software | Indica Labs (HALO), Visiopharm, Aperio (Leica) | Objective, quantitative image analysis of IHC or multiplex immunofluorescence for spatial biomarker quantification. |

Aligning PROBE Design with Regulatory Expectations (FDA, EMA)

PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) studies are critical for validating biomarkers intended for use in drug development and clinical decision-making. Alignment with regulatory agency expectations from the outset is paramount for successful qualification and acceptance. The FDA (U.S. Food and Drug Administration) and EMA (European Medicines Agency) provide evolving guidance on biomarker validation, emphasizing rigor, reproducibility, and context of use.

Table 1: Key Regulatory Guidance Documents on Biomarker Validation

| Agency | Document Title/Reference | Core Focus Areas | Status (as of latest review) |

|---|---|---|---|

| FDA | Biomarker Qualification: Evidentiary Framework (2018) | Context of Use, Analytical Validation, Clinical Validation, Fit-for-Purpose | Draft Guidance (Active) |

| FDA | Bioanalytical Method Validation (2018) | Analytical Method Performance (Precision, Accuracy, Sensitivity, Specificity) | Final Guidance |

| EMA | Guideline on the qualification of novel methodologies for drug development (2022) | Qualification Procedure, Evidence Generation, Multi-stakeholder Collaboration | Final Guideline |

| FDA & EMA | ICH E16: Biomarkers Related to Drug or Biotechnology Product Development: Context, Structure and Format of Qualification Submissions (2010) | Context, Structure, and Format of Biomarker Qualification Submissions | Adopted Step 4 |

A central tenet from both agencies is the "fit-for-purpose" approach, where the level of validation is proportional to the intended context of use (CoU). The CoU is a detailed description clarifying how the biomarker will be used in drug development or regulatory review.

Core PROBE Design Principles for Regulatory Alignment

A robust PROBE study design must address three interconnected pillars: Analytical Validation, Clinical/Scientific Validation, and Data Integrity/Transparency.

Analytical Validation Protocols

Analytical validation establishes that the measurement method is reliable, reproducible, and suitable for its intended purpose.

Protocol 1.1: Tiered Approach to Analytical Validation for a Quantitative Biomarker Assay

Objective: To characterize assay performance parameters based on the intended Context of Use (e.g., exploratory vs. decision-making).

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Precision (Repeatability & Intermediate Precision): Analyze at least three analyte concentrations (low, mid, high) across 5 days with duplicate runs. Use 2-3 operators and multiple reagent lots if applicable.

- Accuracy/Recovery: Spike known quantities of purified biomarker into a validated matrix (e.g., pooled plasma). Compare measured vs. expected values. Use standard reference materials if available.

- Linearity/Range: Prepare a dilution series of the analyte across the expected physiological range. Assess linearity via regression analysis (R² > 0.95 is typical).

- Lower Limit of Quantification (LLOQ): Determine the lowest concentration measured with acceptable precision (CV% ≤20%) and accuracy (80-120%).

- Specificity/Selectivity: Test interference from common matrix components (e.g., lipids, hemoglobin) and structurally similar molecules. Assess in samples from at least 10 individual donors.

- Sample Stability: Conduct short-term (bench-top), long-term (storage at -80°C), and freeze-thaw stability studies.

Data Analysis: Summarize all results in a validation report with clearly defined acceptance criteria, justified by the CoU.

Table 2: Example Acceptance Criteria Based on Context of Use

| Analytical Parameter | Exploratory (e.g., hypothesis generation) | Critical (e.g., patient stratification) |

|---|---|---|

| Precision (Total CV%) | ≤ 25% | ≤ 15% |

| Accuracy (% Bias) | ± 25% | ± 15% |

| LLOQ (Signal/Noise) | ≥ 5 | ≥ 10 |

| Required Reference Standards | Well-characterized in-house | Certified Reference Material (if available) |
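The precision and accuracy rows of the table translate into a simple acceptance check. The sketch below is a simplified illustration: the QC replicate values and the nominal concentration are invented, the thresholds are the example values from the table, and the CV is computed over pooled replicates instead of from a variance-components analysis of within-run and between-run precision.

```python
import statistics

# Example limits from the table above, keyed by context of use.
CRITERIA = {
    "exploratory": {"max_cv_pct": 25.0, "max_abs_bias_pct": 25.0},
    "critical": {"max_cv_pct": 15.0, "max_abs_bias_pct": 15.0},
}


def cv_percent(measurements):
    """Coefficient of variation (%) over pooled replicate measurements."""
    return 100.0 * statistics.stdev(measurements) / statistics.mean(measurements)


def bias_percent(measurements, nominal):
    """Mean deviation from the nominal (spiked) concentration, in percent."""
    return 100.0 * (statistics.mean(measurements) - nominal) / nominal


def meets_criteria(measurements, nominal, context_of_use):
    """Check precision and accuracy against the limits for a context of use."""
    limits = CRITERIA[context_of_use]
    return (cv_percent(measurements) <= limits["max_cv_pct"]
            and abs(bias_percent(measurements, nominal)) <= limits["max_abs_bias_pct"])


# Invented mid-level QC replicates with a nominal concentration of 100 units.
qc_mid = [90.0, 115.0, 125.0, 85.0, 105.0, 130.0]
ok_exploratory = meets_criteria(qc_mid, 100.0, "exploratory")
ok_critical = meets_criteria(qc_mid, 100.0, "critical")
```

With these invented replicates the assay passes the exploratory tier but fails the critical tier on precision, which illustrates the fit-for-purpose idea: the same data can be adequate for one context of use and inadequate for another.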

Clinical/Scientific Validation Protocols

This component establishes the relationship between the biomarker and the biological, pathological, or clinical endpoint.

Protocol 2.1: Retrospective Sample Analysis for Biomarker Qualification

Objective: To evaluate the association between biomarker levels and a clinical endpoint using archived, well-annotated samples.

Procedure:

- Cohort Selection: Define inclusion/exclusion criteria. Use samples from completed clinical trials or disease registries with documented clinical outcomes.

- Blinded Analysis: Analyze biomarker levels in a randomized order, blinded to clinical outcome and patient group.

- Statistical Analysis Plan (SAP): Pre-specify the analysis. Common analyses include:

  - Correlation (Spearman) with relevant clinical scales.

  - Comparison of biomarker levels between disease/severity groups (ANOVA).

  - Assessment of diagnostic performance (ROC analysis for sensitivity/specificity).

  - Cox regression for time-to-event outcomes.

- Confirmation Cohort: Validate findings in an independent cohort.

Data Analysis: Report effect sizes with confidence intervals. Adhere to the pre-specified SAP, documenting any deviations.
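As one example of the pre-specified analyses listed in this protocol, the Spearman correlation between biomarker level and a clinical scale can be computed as the Pearson correlation of the ranks. The sketch below is dependency-free and uses invented data for five patients; the function names are hypothetical, and ties receive average ranks.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented data: biomarker concentration and a clinical scale score.
biomarker = [1.2, 2.5, 3.1, 4.8, 5.0]
clinical_score = [28, 25, 26, 18, 15]
rho = spearman(biomarker, clinical_score)
```

In practice the estimate would be reported with a confidence interval, as the data analysis note for this protocol requires.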

Diagram Title: PROBE Study Design and Regulatory Interaction Path

Diagram Title: Biomarker Clinical Validation Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomarker PROBE Studies

| Item/Category | Function & Regulatory Consideration |

|---|---|

| Certified Reference Standards | Provides metrological traceability. Preferred by regulators (e.g., NIST, WHO standards). Critical for assay calibration. |

| Well-Characterized Positive/Negative Control Samples | Used for daily run acceptance and monitoring long-term assay performance (QC charts). |

| Matrix-Matched Calibrators & QCs | Calibrators and quality controls prepared in the same biological matrix as study samples (e.g., human serum) to account for matrix effects. |

| Validated Assay Kits (IVD/CE-marked or RUO with extensive validation) | Streamlines development. For RUO kits, additional, study-specific validation is mandatory per FDA/EMA expectations. |

| Sample Collection & Processing Kits with Stabilizers | Ensures pre-analytical consistency, a major source of variability. Standardized protocols are required. |

| Laboratory Information Management System (LIMS) | Ensures data integrity through sample chain-of-custody tracking, audit trails, and electronic data capture. |

Data Integrity and Submission Readiness

Regulators emphasize data robustness. Key requirements include:

- Pre-definition: Finalized protocols and Statistical Analysis Plans before data lock.

- Blinding: Preventing bias in sample analysis and data evaluation.

- Source Data Verification: Maintaining clear audit trails from raw data to reported results.

- Comprehensive Reporting: Following the BEST (Biomarkers, EndpointS, and other Tools) Resource glossary and STARD (for diagnostic accuracy) reporting guidelines where applicable.

Ethical and Practical Advantages of the PROBE Framework

Application Notes

The Prospective, Randomized, Open-label, Blinded Endpoint (PROBE) framework is a pivotal design in clinical research, particularly for biomarker validation within diagnostic and therapeutic development. Its structured approach balances scientific rigor with operational feasibility, directly addressing core challenges in modern biomarker studies.

Core Advantages:

- Ethical: The PROBE design's open-label treatment phase respects patient autonomy and informed consent, as participants are aware of their therapeutic intervention. This is critical in serious diseases where equipoise exists between treatments but not between treatment and no treatment. Concurrently, the blinded endpoint adjudication (BEA) committee minimizes diagnostic and clinical assessment bias, supporting the integrity of the outcome data.

- Practical: PROBE often mirrors real-world clinical practice more closely than double-blind designs, improving physician and patient recruitment rates. It is typically less complex and costly to administer than double-blind, placebo-controlled trials requiring matched placebos. This efficiency accelerates study timelines, a key advantage for biomarker validation studies that are often precursors to larger interventional trials.

Data on PROBE Trial Impact

Recent meta-analyses and reviews highlight the performance of the PROBE design. The following table summarizes quantitative findings related to its application in cardiovascular and renal biomarker research, common areas for its use.

Table 1: Performance Metrics of PROBE-Designed Trials in Cardiovascular/Renal Research

| Metric | Finding in PROBE Trials | Comparative Context |

|---|---|---|

| Patient Recruitment Rate | ~25-40% faster than double-blind trials | Based on analysis of hypertension and heart failure trials from 2015-2023. |

| Major Adverse Cardiovascular Event (MACE) Endpoint Concordance | >95% concordance between site investigator and BEA committee | Highlights critical need for BEA; initial site calls had high variability. |

| Trial Cost | Estimated 15-30% reduction in operational costs | Savings attributed to no drug blinding procedures and simplified supply chain. |

| Regulatory Acceptance (FDA/EMA) | ~90% acceptance rate of primary endpoint from PROBE trials | Acceptance contingent on a rigorously documented and independent BEA process. |

Protocols for Key Experimental Components

Protocol 1: Establishment of a Blinded Endpoint Adjudication (BEA) Committee

Objective: To implement an independent, blinded process for endpoint verification, the cornerstone of the PROBE framework.

Methodology:

- Committee Formation: Assemble a multidisciplinary BEA committee (e.g., cardiologist, neurologist, nephrologist) unrelated to the trial sites. All members must declare no conflicts of interest.

- Charter Development: Create a detailed charter defining primary and secondary endpoints with standardized, objective diagnostic criteria (e.g., MI defined by Universal Definition, stroke by NIHSS + imaging).

- Data Preparation (Blinding): A dedicated unblinded team, separate from the BEA, prepares participant case report packages. All information revealing treatment assignment, site identifiers, and dates of prior events is redacted. Packages include source documents (imaging, lab reports, ECG tracings) and a chronologic narrative.

- Adjudication Process: Committee members review packages independently using a predefined electronic system. Each endpoint is classified per the charter (e.g., "confirmed," "not confirmed," "insufficient data"). Discrepancies trigger centralized, moderated discussion until consensus is reached.

- Data Lock: The adjudicated endpoint dataset is finalized and delivered to the study statistician for analysis, separate from the open-label operational database.

Protocol 2: Integration of a Candidate Biomarker into a PROBE Trial

Objective: To prospectively validate the prognostic or predictive utility of a novel biomarker within the PROBE structure.

Methodology:

- Pre-Trial Assay Validation: The candidate biomarker assay must undergo analytical validation (precision, sensitivity, LOQ, stability) per FDA/EMA Bioanalytical Method Validation guidelines before trial initiation.

- Sample Collection & Management: Standardized collection kits are provided to all sites. Specify detailed procedures for sample type (e.g., serum, plasma), volume, processing (centrifugation speed/time), aliquoting, and temporary storage. Samples are shipped frozen to a central biorepository on a predefined schedule (e.g., baseline, 3 months, primary endpoint).

- Blinded Batch Analysis: All samples are analyzed in a single, centralized laboratory after the clinical database is locked for the primary analysis. Samples are analyzed in random order by technicians blinded to all clinical data.

- Statistical Analysis Plan (SAP): A prespecified SAP details the biomarker analysis. This includes:

- Primary Biomarker Objective: e.g., "To assess if baseline biomarker X level predicts the primary composite endpoint (MACE)."

- Analysis: Cox proportional hazards model with the biomarker as a continuous/log-transformed variable, adjusting for established clinical risk factors.

- Predefined Cut-offs: If a clinical cut-off is tested, it must be defined a priori based on prior exploratory studies.
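
The blinded batch analysis step in Protocol 2 (random run order, technicians blinded to clinical data) can be sketched as follows. This is one possible approach under stated assumptions: the `LAB-0001` code format, the function name, and the idea of handing the key to an independent data manager are illustrative and not prescribed by the protocol.

```python
import random


def blinded_run_order(sample_ids, seed):
    """Assign each sample an opaque lab code and a random run position.

    Returns (run_sheet, key). run_sheet is the list of lab codes in run
    order, which is all the technicians see. key maps lab code ->
    original sample ID and is kept away from the laboratory.
    The "LAB-0001" format is a hypothetical convention.
    """
    rng = random.Random(seed)  # fixed seed keeps the order reproducible for audit
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)
    key = {f"LAB-{i:04d}": sid for i, sid in enumerate(shuffled, start=1)}
    run_sheet = list(key)  # dicts preserve insertion order, i.e. run order
    return run_sheet, key
```

Recording the seed in the laboratory manual lets an auditor regenerate the run sheet after unblinding.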

Visualizations

PROBE Trial Workflow with Biomarker Integration

Blinded Endpoint Adjudication Committee Process

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for PROBE Biomarker Studies

| Item | Function in PROBE Context |

|---|---|

| Validated Immunoassay Kit | For quantification of the candidate biomarker in biospecimens. Must have proven analytical precision and reproducibility for reliable data. |

| Standardized Sample Collection Kit | Ensures uniformity across global trial sites. Includes specified tubes (e.g., EDTA plasma), labels, processing instructions, and cold-chain packaging. |

| Clinical Endpoint Adjudication Charter | The definitive document providing objective, operational definitions for all trial endpoints, ensuring consistency in BEA committee rulings. |

| Electronic Data Capture (EDC) System | For capturing clinical data. Must have robust audit trails and permit integration with separate, blinded BEA and biomarker databases. |

| Laboratory Information Management System (LIMS) | Tracks biospecimen lifecycle from collection at site, through storage at the central biorepository, to aliquoting and analysis. Critical for chain of custody. |

| Central Biorepository Freezers (-80°C) | For long-term, stable storage of trial biospecimens under continuous temperature monitoring to preserve biomarker integrity. |

Building Your PROBE Study: A Step-by-Step Methodological Blueprint

Within the PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) design framework for biomarker validation, the initial and most critical step is the precise definition of the primary biomarker objective and its associated hypothesis. This foundational step establishes the scientific rationale, directs all subsequent experimental and clinical protocols, and determines the statistical analysis plan. A poorly defined objective leads to inefficient resource use, inconclusive data, and failed validation. This application note details the process of formulating a primary biomarker objective and testable hypothesis, providing actionable protocols for researchers and drug development professionals.

Core Concepts and Current Landscape

A primary biomarker objective is a clear, concise statement of what the study intends to prove about the biomarker. It must specify the biomarker, its clinical or biological context, and the intended use (e.g., diagnostic, prognostic, predictive, or pharmacodynamic). The hypothesis is a direct, falsifiable claim derived from this objective, often proposing a relationship between the biomarker level/status and a specific clinical outcome or biological state.

Recent trends emphasize fit-for-purpose validation, where the stringency of validation is aligned with the biomarker's intended application (e.g., early research vs. clinical decision support). Regulatory guidance (FDA-NIH BEST Resource, ICH E16) underscores the need for hypothesis-driven approaches to mitigate false discovery risks in omics-based biomarker development.

Table 1: Categories of Biomarker Objectives with Examples

| Category | Intended Use | Example Primary Objective | Associated Hypothesis |

|---|---|---|---|

| Diagnostic | Detect or confirm a disease state | To determine if plasma pTau181 concentration can distinguish Alzheimer's Disease (AD) from frontotemporal dementia (FTD). | Plasma pTau181 levels are significantly higher in AD patients compared to FTD patients. |

| Prognostic | Identify likelihood of a clinical event in untreated patients | To assess whether tumor mutational burden (TMB) ≥ 10 mut/Mb is associated with 2-year overall survival (OS) in resected non-small cell lung cancer (NSCLC). | Patients with high TMB (≥10 mut/Mb) will have superior 2-year OS compared to those with low TMB. |

| Predictive | Identify patients likely to respond to a specific therapy | To evaluate if HER2 amplification by NGS predicts objective response rate (ORR) to trastuzumab deruxtecan in breast cancer. | Patients with HER2-amplified tumors will have a higher ORR than those without amplification. |

| Pharmacodynamic | Show a biological response to a therapeutic intervention | To characterize the change in serum interleukin-6 (IL-6) levels from baseline after administration of Drug X. | Serum IL-6 levels will decrease by ≥50% from baseline 24 hours post-dose of Drug X. |

Detailed Protocol for Objective & Hypothesis Definition

Protocol 3.1: Formulating the Primary Biomarker Objective

Purpose: To construct an unambiguous, measurable primary biomarker objective. Workflow:

- Identify Context of Use (COU): Precisely define the biomarker's role (Diagnostic, Prognostic, Predictive, Pharmacodynamic).

- Define Key Variables:

- Biomarker (B): Specify the analyte (e.g., "methylation status of gene panel Y", "serum concentration of protein Z").

- Population (P): Describe the study subjects (e.g., "patients with metastatic colorectal cancer, RAS wild-type").

- Comparator/Condition (C): Define the reference (e.g., "healthy controls", "placebo-treated group", "patients with progressive disease").

- Outcome (O): State the clinical or biological endpoint (e.g., "progression-free survival at 6 months", "reduction in tumor volume").

- Assemble Objective Statement: Use the template: "To [verb: determine, assess, evaluate, compare] the [relationship] between [B] and [O] in [P] compared to/within [C]."

- Review for Feasibility: Ensure the objective is aligned with available samples, assay capabilities, and statistical power.
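
The objective template in Protocol 3.1 can be filled mechanically once the B, P, C, and O variables are defined. The helper below is a hypothetical sketch (its name and parameters are not from any standard) that also rejects empty fields, which is a cheap check that no variable was left undefined.

```python
def biomarker_objective(verb, relationship, biomarker, outcome,
                        population, comparator, link="compared to"):
    """Fill the Protocol 3.1 template with the B, O, P, C variables.

    link is either "compared to" or "within", matching the two
    alternatives offered by the template.
    """
    if link not in ("compared to", "within"):
        raise ValueError("link must be 'compared to' or 'within'")
    fields = {
        "verb": verb, "relationship": relationship, "biomarker": biomarker,
        "outcome": outcome, "population": population, "comparator": comparator,
    }
    missing = [name for name, value in fields.items() if not value.strip()]
    if missing:
        raise ValueError(f"undefined template fields: {missing}")
    return (f"To {verb} the {relationship} between {biomarker} and {outcome} "
            f"in {population} {link} {comparator}.")


print(biomarker_objective(
    verb="assess",
    relationship="association",
    biomarker="serum concentration of protein Z",
    outcome="progression-free survival at 6 months",
    population="patients with metastatic colorectal cancer, RAS wild-type",
    comparator="patients with progressive disease",
))
```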

Protocol 3.2: Deriving a Testable Statistical Hypothesis

Purpose: To translate the objective into a null (H₀) and alternative (H₁) hypothesis suitable for statistical testing. Workflow:

- State the Research Hypothesis: A positive declaration of the expected relationship (e.g., "Higher baseline levels of Biomarker A are associated with longer time to relapse").

- Formulate the Null Hypothesis (H₀): State the hypothesis of "no effect" or "no association." It must be mathematically testable (e.g., "There is no correlation between baseline levels of Biomarker A and time to relapse" or "The mean level of Biomarker A is equal in responders and non-responders").

- Formulate the Alternative Hypothesis (H₁): This is the complement of H₀ and reflects the research hypothesis (e.g., "There is a significant positive correlation..." or "The mean level of Biomarker A is different between groups").

- Specify Test Parameters: Link the hypothesis to the specific statistical test (e.g., two-sample t-test, log-rank test, ROC analysis) and define the primary endpoint variable (e.g., hazard ratio, area under the curve [AUC]).

Diagram 1: Workflow for Defining Biomarker Study Foundations

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Biomarker Assay Development

| Reagent / Material | Function / Purpose | Key Considerations |

|---|---|---|

| Validated Antibody Pairs | For immunoassay development (ELISA, Luminex). Critical for specificity and sensitivity. | Choose clones validated for the intended sample matrix (plasma, FFPE). Verify lot-to-lot consistency. |

| Multiplex Immunoassay Panels | Simultaneous quantification of multiple analytes from limited sample volume. | Assess cross-reactivity, dynamic range, and reproducibility. Platforms: Luminex, Olink, MSD. |

| Digital PCR (dPCR) Assays | Absolute quantification of rare mutations or gene expression changes without a standard curve. | Ideal for low-abundance targets in liquid biopsies. Provides high precision. |

| Next-Generation Sequencing (NGS) Panels | For genomic, transcriptomic, or epigenomic biomarker discovery/validation. | Design must cover all variants of interest. Requires robust bioinformatics pipeline. |

| Stable Isotope-Labeled Standards | Internal standards for mass spectrometry-based assays (e.g., PRM, SRM). | Enables precise absolute quantification by correcting for sample prep variability. |

| Cell-Free DNA/RNA Collection Tubes | Preserve blood samples for circulating biomarker analysis, preventing degradation. | Critical for reproducible liquid biopsy results. Must be validated for downstream assays. |

| Formalin-Fixed, Paraffin-Embedded (FFPE) RNA/DNA Isolation Kits | Extract high-quality nucleic acids from archival clinical tissue samples. | Yield and purity are paramount; choose kits with high fragmentation tolerance. |

| Reference Standard (Calibrator) | A material with a known quantity/activity of the biomarker to establish a standard curve. | Should be matrix-matched to patient samples as closely as possible. |

Experimental Protocol: A Predictive Biomarker Example

Scenario: Validation of a predictive RNA signature for response to an immune checkpoint inhibitor in melanoma.

Protocol 5.1: Objective & Hypothesis Definition for a Predictive Signature

- Primary Biomarker Objective: To evaluate whether a high score from the 12-gene inflammatory signature (GIS) is associated with improved objective response rate (ORR) to pembrolizumab monotherapy in patients with advanced, treatment-naïve melanoma, compared to patients with a low GIS score.

- Research Hypothesis: Patients with a high GIS score will have a significantly higher ORR.

- Statistical Hypotheses:

- H₀: The ORR is equal in patients with high GIS scores and low GIS scores. (ORR_high = ORR_low)

- H₁: The ORR is greater in patients with high GIS scores. (ORR_high > ORR_low)

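- Primary Endpoint & Analysis: Difference in ORR (per RECIST 1.1) between groups, analyzed using a chi-square test. Because H₁ is directional, the matching form is the one-sided two-proportion z-test, whose squared statistic equals the chi-square statistic without continuity correction. A sample size calculation is performed to detect a minimum difference of 25% with 80% power.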
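
The sample size calculation mentioned in Protocol 5.1 can be sketched with the standard normal-approximation formula for comparing two proportions. Several inputs are assumptions made for illustration only: the 25% difference is read as 25 percentage points, the low-GIS response rate of 0.20 is a placeholder, the test is one-sided at α = 0.025, and the two groups are of equal size. A real calculation would take these values from the protocol.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(p_low, p_high, alpha=0.025, power=0.80):
    """Per-group sample size for a one-sided test of two proportions.

    Uses the normal approximation with a pooled variance under H0 and
    unpooled variances under H1, assuming equal group sizes.
    """
    if not 0 < p_low < p_high < 1:
        raise ValueError("expected 0 < p_low < p_high < 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_low + p_high) / 2
    under_h0 = z_alpha * sqrt(2 * p_bar * (1 - p_bar))
    under_h1 = z_beta * sqrt(p_low * (1 - p_low) + p_high * (1 - p_high))
    return ceil(((under_h0 + under_h1) / (p_high - p_low)) ** 2)


# Placeholder rates: ORR_low = 0.20, ORR_high = 0.45 (25-point difference).
print(n_per_group(0.20, 0.45))
```

Because the GIS groups are defined by the biomarker rather than by allocation, the high and low groups may not be equal in size; an unequal split would raise the total required.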

Diagram 2: Predictive Biomarker Validation Pathway

Within the PROBE (Prospective Randomized Open-label Blinded Endpoint) design framework for biomarker validation, the selection, randomization, and blinding of the study cohort are critical determinants of internal validity and generalizability. This protocol details the methodological steps to minimize selection bias, ensure prognostic balance between groups, and maintain endpoint assessment objectivity, thereby strengthening the causal inference between biomarker status and clinical outcome.

Cohort Selection Protocol

Eligibility Criteria: Structured Definition

Cohort selection employs a two-tiered criteria system to ensure a homogeneous, well-defined study population relevant to the biomarker's intended use context.

Table 1: Structured Eligibility Criteria for PROBE Biomarker Studies

| Criterion Type | Category | Rationale & Operational Definition |

|---|---|---|

| Inclusion | Clinical Phenotype | Confirmatory diagnosis per accepted guidelines (e.g., AJCC staging for cancer, ESC criteria for heart failure). Must be verifiable via source documentation. |

| Inclusion | Biomarker Status | Pre-defined biomarker positive/negative/multi-level status, as measured by the index assay prior to randomization. Sample collection window: ≤ 6 weeks pre-enrollment. |

| Inclusion | Therapeutic Context | Eligible for the standard-of-care (SoC) treatment regimen against which the biomarker is being validated. |

| Inclusion | Clinical Readiness | Life expectancy ≥ 6 months; ECOG PS 0-2; adequate organ function (defined by lab ranges). |

| Exclusion | Confounding Treatments | Concurrent use of non-protocol therapies targeting the pathway of interest. Washout period: ≥ 5 half-lives of prior targeted agent. |

| Exclusion | Comorbidities | Conditions that independently predict the primary endpoint (e.g., severe renal failure for a cardiovascular outcome). |

| Exclusion | Technical | Insufficient tumor tissue/biological sample for biomarker analysis or poor sample quality (pre-specified QC metrics). |

Screening & Enrollment Workflow

Diagram 1: Cohort Screening and Enrollment Workflow

Randomization Protocol

Methodology & Implementation

Randomization is performed after confirmation of eligibility and biomarker status to facilitate stratified allocation. A centralized, interactive web response system (IWRS) is mandated.

Table 2: Randomization Scheme Specifications

| Parameter | Specification | Operational Detail |

|---|---|---|

| Type | Stratified Block Randomization | Balances groups within key prognostic strata. |

| Allocation Ratio | 1:1 | Standard for biomarker validation (biomarker-directed vs. control). |

| Stratification Factors | (1) Biomarker status (Pos/Neg); (2) Clinical center (high-volume vs. low-volume); (3) Key prognostic covariate (e.g., disease stage II vs. III) | Minimizes confounding. Factor weights are pre-specified. |

| Block Size | Variable (4, 6) | Concealed from site investigators to prevent prediction. |

| System | IWRS (24/7 access) | Validated, 21 CFR Part 11 compliant system. Audit trail maintained. |

Randomization Execution Procedure

- Site Initiates: Investigator logs into secure IWRS portal.

- Data Entry: Inputs participant ID, confirms eligibility, and enters stratification factor data (biomarker status, center code, disease stage).

- Allocation: IWRS generates and displays a unique Treatment Arm Code (e.g., "ARM-A") and a Randomization Number.

- Documentation: System automatically logs date/time, user, and inputs. Site prints/saves confirmation for trial master file.
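
The allocation logic that an IWRS performs behind the scenes can be illustrated with a stratified permuted-block randomizer using the variable block sizes (4, 6) and 1:1 ratio from Table 2. This is a teaching sketch, not a validated or 21 CFR Part 11 compliant system; the class name, the `R00001` numbering format, and the in-memory state are assumptions.

```python
import random
from collections import defaultdict


class StratifiedBlockRandomizer:
    """Permuted-block randomization kept separately for each stratum."""

    def __init__(self, arms=("ARM-A", "ARM-B"), block_sizes=(4, 6), seed=None):
        if any(size % len(arms) for size in block_sizes):
            raise ValueError("each block size must be a multiple of the arm count")
        self.arms = tuple(arms)
        self.block_sizes = tuple(block_sizes)
        self._rng = random.Random(seed)
        self._pending = defaultdict(list)  # stratum -> unused slots of current block
        self._count = 0

    def assign(self, biomarker_status, center, stage):
        """Return (randomization_number, arm) for one eligible participant."""
        stratum = (biomarker_status, center, stage)
        queue = self._pending[stratum]
        if not queue:
            # Start a new block of randomly chosen size with equal arm counts.
            size = self._rng.choice(self.block_sizes)
            block = list(self.arms) * (size // len(self.arms))
            self._rng.shuffle(block)
            queue.extend(block)
        self._count += 1
        return f"R{self._count:05d}", queue.pop()
```

Within any stratum, the running imbalance between arms never exceeds half the largest block size, and varying the block size makes the next assignment harder to predict at the site.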

Blinding Protocol

PROBE studies are characteristically "open-label" in treatment administration but mandate blinded endpoint assessment to eliminate detection bias.

Diagram 2: PROBE Study Blinding and Adjudication Flow

Endpoint Adjudication Committee (EAC) Charter

- Composition: Independent, multidisciplinary experts (e.g., radiologists, cardiologists, oncologists) with no direct involvement in the trial.

- Blinding Maintenance: EAC receives sanitized case materials. All references to treatment arm, biomarker status, and investigator interpretation are removed or redacted.

- Process: EAC members adjudicate primary endpoint events (e.g., progression, response, major adverse cardiac event) independently using pre-defined, objective criteria. Discrepancies are resolved by consensus or a pre-specified chair's vote.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cohort Selection & Biomarker Stratification

| Item / Solution | Provider Examples | Function in Protocol |

|---|---|---|

| IVD/CE-Marked Biomarker Assay Kit | Roche Ventana, Agilent Dako, Qiagen | Provides the standardized, validated platform for determining enrollment biomarker status. Essential for stratification. |

| Digital Pathology & Image Analysis Software | Visiopharm, Indica Labs, HALO | Enables quantitative, reproducible scoring of biomarker expression (e.g., H-score, % positivity) by central lab. |

| Interactive Web Response System (IWRS) | Medidata Rave, Oracle InForm, YPrime | The centralized platform for managing eligibility confirmation, stratified randomization, and treatment assignment. |

| Electronic Trial Master File (eTMF) | Veeva Vault, LabArchives, MasterControl | Securely stores essential blinding documents: randomization logs, EAC charters, and unblinding procedures. |

| Sample Tracking & Management Software | FreezerPro, BioSample Hub, OpenSpecimen | Manages the chain of custody and pre-analytical conditions of biospecimens used for biomarker testing. |

| Clinical Endpoint Adjudication Portal | ERT, Biomedical Systems, IQVIA | A blinded, secure online platform for the EAC to review redacted case materials and record endpoint judgments. |

Within the PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) design framework for biomarker validation, the pre-analytical phase (specimen collection, handling, and processing) is the most critical determinant of data integrity and assay reproducibility. Inconsistent pre-analytical practices are a primary source of variability, leading to irreproducible results and failed validation. This document provides application notes and standardized protocols to control these variables, ensuring specimens are fit for purpose in downstream analytical validation phases.

The following tables summarize key quantitative data on the effects of common pre-analytical variables on biomarker stability, as supported by recent literature and guidelines (e.g., CAP, CLSI, SPIDIA).

Table 1: Effects of Time and Temperature on Common Biomarker Analytes in Blood

| Biomarker Class | Specimen Type | Acceptable Hold Time (Room Temp) | Acceptable Hold Time (2-8°C) | Critical Variable & Effect |

|---|---|---|---|---|

| Cell-Free DNA | Plasma (EDTA) | < 6 hours | < 72 hours | Delay in processing increases genomic DNA contamination from lysing blood cells. |

| Phosphoproteins | PBMCs (EDTA) | < 1 hour | Not Recommended | Rapid phosphorylation state changes post-venipuncture. Rapid processing and fixation required. |

| Cytokines | Serum | 4-8 hours | 24-48 hours | In vitro release from platelets/leukocytes can increase levels over time. |

| Metabolites | Plasma (Heparin) | < 30 min | < 2 hours | Rapid ongoing enzymatic activity alters metabolite profiles. |

| Exosomes | Plasma (Citrate) | < 4 hours | < 96 hours | Prolonged time increases vesicle aggregation and protein degradation. |

Table 2: Effects of Processing Method on Analytical Results

| Processing Parameter | Variable Compared | Example Biomarker | Observed Change | Recommendation |

|---|---|---|---|---|

| Centrifugation Force | 500 x g vs. 2000 x g | Cell-Free DNA | Up to 3-fold increase in [cfDNA] with lower force due to residual cells. | Standardize force (e.g., 1600 x g) and time; double spin for platelet-poor plasma. |

| Freeze-Thaw Cycles | 0 vs. 3 cycles | Immunoassay (Tumor Marker) | Up to 25% decrease in measured concentration for labile proteins. | Aliquot to avoid repeated thawing; single-use vials. |

| Tube Additive | EDTA vs. Heparin Plasma | miRNA Sequencing | Heparin inhibits RT-PCR; different exosome recovery profiles. | Match additive to downstream assay (EDTA for PCR-based, citrate for vesicles). |

Standard Operating Protocols (SOPs)

SOP 3.1: Standardized Blood Collection and Processing for Plasma Biomarker Analysis

Objective: To obtain high-quality, platelet-poor plasma suitable for multi-analyte profiling (e.g., proteins, nucleic acids).

Materials & Reagents:

- K2EDTA blood collection tubes (preferred for nucleic acids).

- Tourniquet, needles, safety collection set.

- Refrigerated centrifuge capable of precise speed control.

- Timer.

- Low protein-binding pipettes and cryovials.

- Labels and permanent ink marker.

- Ice or refrigerated rack.

Protocol:

- Patient Preparation & Collection: Adhere to fasting/activity requirements per study design. Apply tourniquet for minimal time (<1 minute). Draw blood into K2EDTA tubes. Invert gently 8-10 times.

- Primary Processing: Start timer upon draw. Keep tubes upright at room temperature (18-25°C). Process within 2 hours of collection for most analytes (30 min for phosphoproteins/metabolites).

- First Centrifugation: Balance tubes. Centrifuge at 1600-2000 x g for 10 minutes at 4°C.

- Plasma Transfer: Using a pipette, carefully transfer the top plasma layer (approximately 2/3 volume) to a new, labeled conical tube, avoiding the buffy coat and platelet layer.

- Second Centrifugation (for platelet-poor plasma): Centrifuge the transferred plasma at 16,000 x g for 10 minutes at 4°C to pellet remaining platelets.

- Aliquoting & Storage: Transfer the final supernatant into pre-labeled cryovials in single-use volumes. Immediately snap-freeze in liquid nitrogen or a dry-ice/ethanol bath. Store at -80°C. Document freeze time.
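
The processing window in SOP 3.1 (2 hours for most analytes, 30 minutes for phosphoproteins and metabolites) lends itself to an automatic deviation check at the time of data entry. The sketch below assumes that draw and centrifugation start times are recorded as timestamps; the function name and the analyte class keys are hypothetical.

```python
from datetime import datetime, timedelta

# Limits quoted in SOP 3.1; the dictionary keys are illustrative labels.
MAX_HOLD = {
    "default": timedelta(hours=2),
    "phosphoprotein": timedelta(minutes=30),
    "metabolite": timedelta(minutes=30),
}


def hold_time_overrun(draw_time, centrifuge_start, analyte_class="default"):
    """Return None if processing began in time, else the overrun as a timedelta."""
    limit = MAX_HOLD.get(analyte_class, MAX_HOLD["default"])
    elapsed = centrifuge_start - draw_time
    if elapsed < timedelta(0):
        raise ValueError("centrifugation cannot start before the draw")
    return None if elapsed <= limit else elapsed - limit


draw = datetime(2024, 3, 1, 9, 0)
spin = datetime(2024, 3, 1, 9, 45)
print(hold_time_overrun(draw, spin))                # within the 2-hour window
print(hold_time_overrun(draw, spin, "metabolite"))  # 15 minutes over the limit
```

A non-empty result would be recorded as a deviation on the specimen collection form rather than silently discarded.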

SOP 3.2: Biospecimen Handling and Chain of Custody

Objective: To ensure traceability and preserve specimen integrity from collection to analysis.

Protocol:

- Labeling: Apply pre-printed, barcoded labels with unique specimen ID (USID), collection date/time, and specimen type. Use cryo-resistant labels.

- Documentation: Complete a specimen collection form concurrently (Patient ID, USID, volume, processing time, any deviations).

- Transport: For frozen specimens, use validated shipping containers with sufficient dry ice. Include a temperature data logger. For ambient, use insulated containers with cold packs.

- Receipt & QC: Upon receipt, verify specimen ID against manifest, check volume, and confirm temperature log integrity. Reject specimens with broken chain of custody or temperature excursions.

- Database Logging: Enter all metadata (collection, processing, storage, transport conditions) into a centralized Laboratory Information Management System (LIMS).

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| Cell-Free DNA BCT Tubes | Blood collection tubes with preservatives that stabilize nucleated blood cells, preventing lysis and release of genomic DNA, enabling room-temperature plasma stabilization for up to 14 days. |

| Phosphoprotein Stabilizer Tabs | Additives that rapidly inhibit phosphatase and kinase activity in whole blood, preserving in vivo phosphorylation states of signaling proteins in PBMCs for up to 48 hours. |

| RNAlater Stabilization Solution | Aqueous tissue storage reagent that rapidly penetrates and stabilizes cellular RNA (and protein) by inactivating RNases, allowing tissue to be stored at room temp for 1 week. |

| Pre-analytical Quality Control (QC) Kits | Assay-ready controls (e.g., synthetic cfDNA spikes, protein analytes) to add to a sample aliquot post-collection to monitor degradation during processing and storage. |

| Matrix-Matched Calibrators | Calibration standards prepared in the same biological matrix as the test samples (e.g., artificial plasma) to account for matrix effects in quantitative assays. |

Experimental Protocols from Cited Literature

Protocol: Assessment of Pre-centrifugation Hold Time on cfDNA Yield (Adapted from Meddeb et al., 2019)

Objective: Quantify the increase in wild-type cfDNA background due to leukocyte lysis over time.

- Collect 40 mL blood from a healthy donor into 4 K2EDTA tubes (10 mL each).

- Process Tube 1 immediately (Time 0). Store Tubes 2-4 at room temperature.

- Process Tubes 2, 3, and 4 at 2, 6, and 24 hours post-collection, respectively, per SOP 3.1.

- Extract cfDNA from 3 mL of plasma from each time point using a silica-membrane column kit. Elute in 50 µL.

- Quantify total double-stranded DNA using a fluorescence assay (e.g., Qubit dsDNA HS).

- Quantify a reference single-copy gene (e.g., RPPH1) via digital PCR to calculate genomic DNA contamination.

- Data Analysis: Plot total DNA yield and RPPH1 copy number versus hold time. Expect a significant increase after 6 hours.

Protocol: Effect of Freeze-Thaw Cycles on Cytokine Recovery (Adapted from Breen et al., 2021)

Objective: Determine the stability of a panel of cytokines to repeated freeze-thaw cycles.

- Pool aliquots of human serum known to contain detectable levels of IL-6, IL-8, TNF-α.

- Divide the pool into 60 identical single-use aliquots (e.g., 100 µL each), enough for 10 controls plus 10 aliquots in each of the five freeze-thaw groups.

- Designate 10 aliquots as controls (never thawed). Subject the remaining aliquots to 1, 2, 3, 4, or 5 freeze-thaw cycles (n=10 per group).

- For thawing, place aliquots in a 4°C refrigerator for 2 hours, then at room temperature for 30 minutes. For re-freezing, place directly at -80°C.

- After completing the designated cycles for each group, analyze all aliquots (including controls) in a single multiplexed immunoassay plate run.

- Data Analysis: Calculate the mean recovery (%) for each cytokine at each cycle relative to the control mean. Perform linear regression to determine the rate of degradation per cycle.
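
The data analysis step of the freeze-thaw protocol (mean recovery per cycle, then a linear fit) can be sketched in plain Python. The function names are illustrative, and one design choice is an assumption: the never-thawed control is entered into the regression as cycle 0 with 100% recovery. The numbers in the example are made-up placeholders, not results from the cited study.

```python
from statistics import mean


def recovery_by_cycle(control_values, cycle_values):
    """Mean recovery (%) per cycle count, relative to the control mean.

    cycle_values: dict mapping cycle count -> list of measured concentrations.
    """
    control_mean = mean(control_values)
    return {cycles: 100 * mean(values) / control_mean
            for cycles, values in sorted(cycle_values.items())}


def degradation_rate(recovery):
    """Least-squares slope and intercept of recovery (%) versus cycle number.

    The slope is in percentage points per freeze-thaw cycle.
    """
    cycles = [0] + list(recovery)
    values = [100.0] + [recovery[c] for c in recovery]
    x_bar, y_bar = mean(cycles), mean(values)
    sxx = sum((x - x_bar) ** 2 for x in cycles)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(cycles, values))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Placeholder IL-6 concentrations (pg/mL), for illustration only.
recovery = recovery_by_cycle([20.0, 20.0], {1: [19.0, 19.0], 2: [18.0, 18.0]})
print(recovery, degradation_rate(recovery))
```

A straight line is only a first approximation; some analytes are stable for the first cycles and then drop, which a plot of the per-cycle means would reveal.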

Visualizations

Diagram: Workflow for Plasma Processing with Critical Variables

Diagram: SOPs Bridge Pre-Analytical Gap in PROBE Design

Within the PROBE (Prospective, Randomized, Open-label, Blinded Endpoint) design framework for biomarker validation, the integration of analytical results with definitive clinical outcomes is the critical step that determines clinical utility. This phase moves beyond association to establish that the biomarker can reliably inform on a patient's status relative to a clinically meaningful endpoint. The process requires rigorous, pre-specified protocols to synchronize laboratory data generation with blinded endpoint adjudication, ensuring analytical validity is assessed within the context of clinical validity.

Core Workflow and Synchronization Protocol

The integration is a multi-disciplinary, phased process designed to maintain blinding and prevent bias.

Diagram Title: Workflow for Biomarker-Endpoint Integration

Experimental Protocols for Key Integration Analyses

Protocol 3.1: Primary Clinical Validation Analysis (Sensitivity/Specificity)

Objective: To determine the biomarker's diagnostic accuracy against the gold standard of adjudicated clinical endpoints. Materials: Linked dataset (Biomarker concentration + Adjudicated Endpoint Status). Procedure:

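- Define Classification Threshold: Apply the pre-specified cut-off (e.g., ROC-optimized in an earlier independent dataset, or clinically defined). Deriving the cut-off from the validation data itself would inflate the accuracy estimates.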

- Construct 2x2 Contingency Table: Cross-tabulate biomarker-positive/negative status against adjudicated endpoint-positive/negative status.

- Calculate Metrics:

- Sensitivity = TP / (TP + FN)

- Specificity = TN / (TN + FP)

- Positive Predictive Value (PPV) = TP / (TP + FP)

- Negative Predictive Value (NPV) = TN / (TN + FN)

- Compute 95% Confidence Intervals for each metric using the Wilson score interval method.
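
The metrics and Wilson score intervals in Protocol 3.1 can be computed directly from the four cells of the 2x2 table. The following is a minimal sketch using only the standard library; the function names are illustrative and the cell counts in the example are placeholders.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes, total, confidence=0.95):
    """Wilson score interval for a binomial proportion."""
    if total <= 0 or not 0 <= successes <= total:
        raise ValueError("need 0 <= successes <= total and total > 0")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return centre - half, centre + half


def diagnostic_metrics(tp, fp, fn, tn):
    """Return {metric: (estimate, ci_low, ci_high)} from 2x2 table counts."""
    cells = {
        "sensitivity": (tp, tp + fn),
        "specificity": (tn, tn + fp),
        "ppv": (tp, tp + fp),
        "npv": (tn, tn + fn),
    }
    return {name: (num / den, *wilson_interval(num, den))
            for name, (num, den) in cells.items()}


# Placeholder counts for illustration.
for name, (est, low, high) in diagnostic_metrics(tp=90, fp=20, fn=10, tn=80).items():
    print(f"{name}: {est:.3f} ({low:.3f} to {high:.3f})")
```

PPV and NPV depend on event prevalence in the study cohort, so they transfer to other populations less readily than sensitivity and specificity.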

Protocol 3.2: Time-to-Event Analysis (Cox Proportional Hazards)

Objective: To evaluate the biomarker's prognostic value for predicting the time to a future adjudicated clinical event. Materials: Linked dataset with biomarker value, adjudicated event time/status, and baseline covariates. Procedure:

- Model Specification: Pre-specify if biomarker is analyzed as continuous (log-transformed) or categorical (quartiles).

- Cox PH Model Fitting: Fit the model: h(t|X) = h₀(t) * exp(β₁biomarker + β₂covariate₁ + ...).

- Assumption Checking: Test the proportional hazards assumption using Schoenfeld residuals.

- Hazard Ratio Estimation: Report HR (exp(β₁)) with 95% CI and p-value. Generate Kaplan-Meier curves for categorical analyses.
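
Whichever software fits the Cox model, the reported hazard ratio and its Wald confidence interval follow from the coefficient β₁ and its standard error by exponentiation. The helper below is a sketch of that final step only; it does not fit the model, and the coefficient and standard error in the example are placeholders.

```python
from math import exp, log
from statistics import NormalDist


def hazard_ratio(beta, se, confidence=0.95):
    """Return (HR, ci_low, ci_high) from a Cox coefficient and its standard error.

    The interval is the Wald interval, computed on the log scale and
    then exponentiated, so it is asymmetric around the HR.
    """
    if se <= 0:
        raise ValueError("standard error must be positive")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return exp(beta), exp(beta - z * se), exp(beta + z * se)


# Placeholder inputs: a coefficient of log(2) per unit of biomarker, SE 0.1.
hr, low, high = hazard_ratio(beta=log(2), se=0.1)
print(f"HR {hr:.2f} (95% CI {low:.2f} to {high:.2f})")
```

For a log-transformed or standardized biomarker, the HR applies per unit on that transformed scale, which should be stated next to the estimate.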

Protocol 3.3: Assessment of Added Utility (Nested Model Comparison)

Objective: To determine if the biomarker adds predictive value beyond standard clinical variables. Materials: Linked dataset with biomarker and established clinical risk factors. Procedure:

- Build Base Model: Construct a logistic regression/Cox model using only clinical covariates.

- Build Enhanced Model: Add the biomarker variable to the base model.

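- Compare Models: Calculate the difference in -2 Log Likelihood between the base and enhanced models. Under the null hypothesis of no added value, this difference asymptotically follows a chi-square distribution with degrees of freedom equal to the number of added parameters (1 for a single continuous biomarker term).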

- Report Metrics: Provide p-value for likelihood ratio test, and changes in discrimination (ΔC-index or ΔAUC) and reclassification (Net Reclassification Improvement - NRI).
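
The likelihood ratio test in Protocol 3.3 reduces to a short calculation when the enhanced model adds a single biomarker term. The sketch below covers that one-degree-of-freedom case only, where the chi-square tail probability has a closed form via the complementary error function; adding several terms at once would need a general chi-square routine. The function name and the example values are illustrative.

```python
from math import erfc, sqrt


def likelihood_ratio_test_1df(neg2ll_base, neg2ll_enhanced):
    """Likelihood ratio test for one added parameter.

    Inputs are the -2 log-likelihood values of the nested base model
    and the enhanced model. Returns (statistic, p_value).
    """
    stat = neg2ll_base - neg2ll_enhanced
    if stat < 0:
        raise ValueError("enhanced model cannot fit worse than its nested base model")
    # For 1 df: P(chi2 > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    return stat, erfc(sqrt(stat / 2))


# Placeholder -2LL values for illustration.
stat, p = likelihood_ratio_test_1df(1250.4, 1241.1)
print(f"LR statistic {stat:.2f}, p = {p:.4f}")
```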

Table 1: Example Results from a Cardiac Biomarker Validation Study (vs. Adjudicated MI)

| Metric | Estimated Value (95% CI) | Interpretation in Clinical Context |

|---|---|---|

| Sensitivity | 92.5% (89.1 - 95.0%) | Captures most true MI events. |

| Specificity | 88.2% (85.0 - 90.9%) | Correctly identifies most non-MI cases. |

| PPV | 76.4% (72.1 - 80.3%) | ~3 in 4 positive tests are true MI. |

| NPV | 96.8% (94.9 - 98.0%) | High confidence in ruling out MI. |

| AUC | 0.94 (0.92 - 0.96) | Excellent diagnostic discrimination. |

| Hazard Ratio (per SD) | 2.45 (1.98 - 3.04) | Strong independent predictor of future events. |

| ΔAUC (vs Clinical Model) | +0.07 (p=0.002) | Provides significant added discriminative power. |

| Continuous NRI | 0.35 (0.18 - 0.52) | Meaningful improvement in risk classification. |

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Materials for Integrated Biomarker-Endpoint Studies

| Item & Example Product | Primary Function in Integration |

|---|---|

| Certified Reference Material (e.g., NIST SRM 2921) | Provides an unbroken metrological traceability chain for assay calibration, ensuring longitudinal and multi-site comparability of biomarker concentration data. |

| Multiplex Immunoassay Panel (e.g., Luminex xMAP) | Allows simultaneous quantification of a pre-specified biomarker signature from a single, small-volume aliquot, preserving precious clinical specimens. |

| Stable Isotope-Labeled Internal Standards (SIS) | Critical for mass spectrometry-based assays; corrects for sample-specific variability in extraction and ionization, improving precision and accuracy. |

| End-to-End Data Integration Platform (e.g., Medidata Rave, Veeva) | Secure electronic system for linking de-identified lab data with adjudicated clinical data via a Subject ID, maintaining an audit trail and blinding. |

| Clinical Endpoint Adjudication Charter | Pre-defined, protocol-driven document provided to the independent committee, detailing exact endpoint definitions and evidence requirements for uniform adjudication. |

| Statistical Analysis Plan (SAP) - Final Version | Locked, detailed blueprint specifying every integrated analysis, including handling of missing data, covariate adjustment, and multiplicity corrections, filed before database lock. |

Pathway to Clinical Interpretation

The final step translates statistical findings into a clinically actionable framework.

Diagram Title: From Integrated Data to Clinical Action Rule

Within the framework of a thesis on PROBE (Prospective-Retrospective Blinded Evaluation) design for biomarker validation, this application note details a protocol for validating "RAS/RAF Pathway Activation Score" (R-PAS), a novel transcriptomic signature predictive of response to the hypothetical MEK inhibitor, Mektinib, in colorectal cancer (CRC). The PROBE design mitigates bias by using archived specimens from a previously conducted, population-based prospective clinical trial.

Table 1: Summary of Pivotal Trial (CRYSTAL-2 Analog) & PROBE Analysis Plan

| Parameter | Description | Quantitative Metric |

|---|---|---|

| Source Trial | Phase III: FOLFIRI + Mektinib vs. FOLFIRI + Placebo in 2L CRC | N=800 (400 per arm) |

| Primary Endpoint (Original) | Progression-Free Survival (PFS) | HR=0.75, p=0.01 (Overall) |

| Archived Specimen Availability | Tumor tissue blocks from screening | Estimated 85% (n=680) |

| PROBE Cohort | Patients with measurable disease & tissue | Target N=600 |

| Biomarker Prevalence (Est.) | R-PAS High (≥50%ile) | ~50% (n=300) |

| Statistical Power | To detect PFS interaction (α=0.05) | 85% for HR_int=0.55 |

| Key Analysis | Treatment-by-biomarker interaction | Cox Proportional Hazards |

Table 2: R-PAS Assay Performance Characteristics (Pre-PROBE)

| Parameter | Requirement | Validation Result |

|---|---|---|

| RNA Input | FFPE tissue section | 50 ng (minimum) |

| Assay Platform | NanoString nCounter | 20-gene signature |

| Precision | Intra-run CV | <5% |

| Precision | Inter-run CV | <10% |

| Reproducibility | Inter-site concordance (ICC) | >0.90 |

| Limit of Detection | Tumor cell content | ≥20% |

| Pre-analytical Robustness | CIs up to 72 hours | R^2 > 0.95 vs. reference |

Experimental Protocols

Protocol 3.1: Tissue Selection and Nucleic Acid Isolation

Objective: To obtain high-quality RNA from archival FFPE blocks for R-PAS analysis.

- Block Selection: Identify and retrieve one representative FFPE block per patient from the source trial's biorepository.

- H&E Review: A central pathologist confirms ≥20% tumor content and marks region for macrodissection.

- Sectioning: Cut 5 x 10 µm sections into a nuclease-free microcentrifuge tube. Cut an additional 4-5 µm section before and after the series for H&E verification of tumor content.

- RNA Extraction: Use the Qiagen RNeasy FFPE Kit.

  - Deparaffinize with 1 mL xylene, vortex, and centrifuge. Discard the supernatant.

  - Wash twice with 1 mL 100% ethanol.

  - Digest with Proteinase K at 56°C for 15 min, then 80°C for 15 min.

  - Bind, wash, and elute RNA in 30 µL RNase-free water per kit instructions.

- QC: Quantify using the Qubit RNA HS Assay. Accept samples with ≥50 ng total RNA. Assess fragmentation via the Bioanalyzer RNA Integrity Number (RIN); accept if >2.0.
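The acceptance criteria in Protocol 3.1 can be expressed as a simple gate applied to each specimen before it moves on to profiling. This is a minimal sketch: the thresholds are those quoted in the protocol, while the record layout, field names, and example values are hypothetical.

```python
from dataclasses import dataclass

# Thresholds quoted in Protocol 3.1; names below are illustrative only.
MIN_TUMOR_CONTENT_PCT = 20.0
MIN_TOTAL_RNA_NG = 50.0
MIN_INTEGRITY_SCORE = 2.0  # the sample must exceed this value


@dataclass
class SpecimenQC:
    sample_id: str
    tumor_content_pct: float
    total_rna_ng: float
    integrity_score: float


def qc_failures(specimen):
    """Return the failed criteria; an empty list means the specimen passes."""
    failures = []
    if specimen.tumor_content_pct < MIN_TUMOR_CONTENT_PCT:
        failures.append("tumor content below 20%")
    if specimen.total_rna_ng < MIN_TOTAL_RNA_NG:
        failures.append("total RNA below 50 ng")
    if specimen.integrity_score <= MIN_INTEGRITY_SCORE:
        failures.append("integrity score not above 2.0")
    return failures


# Hypothetical specimens for illustration
specimens = [
    SpecimenQC("S001", tumor_content_pct=35, total_rna_ng=120, integrity_score=2.4),
    SpecimenQC("S002", tumor_content_pct=15, total_rna_ng=40, integrity_score=2.6),
]
for s in specimens:
    problems = qc_failures(s)
    print(s.sample_id, "PASS" if not problems else "FAIL: " + "; ".join(problems))
```

Recording every failed criterion, not only the first, makes it easier to report attrition by cause when the final PROBE cohort is described.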

Protocol 3.2: R-PAS Profiling via nCounter

Objective: To generate standardized R-PAS scores from isolated RNA.

- Preparation: Dilute RNA to 20 ng/µL. Prepare a master mix containing 3 µL Reporter CodeSet, 5 µL Hybridization Buffer, and 0.5 µL nCounter Sprint Cartridge PRC.

- Hybridization: Add 8 µL of master mix to 5 µL (100 ng) of RNA in a strip tube. Seal, mix, and incubate at 67°C for 20 hours in a thermal cycler.

- Post-Hybridization Processing: Load samples into the nCounter SPRINT Cartridge. Place cartridge into the nCounter SPRINT Profiler. Initiate automatic purification and imaging (scan at 555 fields of view).

- Data Normalization: Import raw RCC files into nSolver 5.0 software.

  - Perform positive control normalization (geometric mean of positive controls).

  - Perform background correction using the mean + 2 SD of negative controls.

  - Perform content normalization using the geometric mean of 5 housekeeping genes (GAPDH, ACTB, B2M, RPLP0, GUSB).

- Score Calculation: Compute R-PAS as the normalized geometric mean of the 20 signature genes. Apply the pre-specified cutpoint (≥50th percentile of the PROBE cohort distribution = R-PAS High).
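The normalization and scoring steps above can be sketched in a few lines. This is an illustration of the arithmetic only, not the nSolver implementation. It assumes that background correction means subtracting the mean + 2 SD of the negative controls, that corrected counts are floored at 1 so the geometric mean stays defined, and that the reference values (`pos_ref`, `hk_ref`) are cohort-wide averages supplied by the analyst. Gene names and counts are toy values.

```python
from statistics import geometric_mean, mean, median, stdev


def normalize_sample(counts, pos, neg, hk_genes, pos_ref, hk_ref):
    """Positive-control scaling, background subtraction, housekeeping scaling."""
    pos_factor = pos_ref / geometric_mean(pos)
    scaled = {g: c * pos_factor for g, c in counts.items()}
    # Background on the same scale as the counts (assumed: mean + 2 SD, subtracted)
    background = (mean(neg) + 2 * stdev(neg)) * pos_factor
    corrected = {g: max(c - background, 1.0) for g, c in scaled.items()}
    hk_factor = hk_ref / geometric_mean([corrected[g] for g in hk_genes])
    return {g: c * hk_factor for g, c in corrected.items()}


def rpas_score(normalized, signature_genes):
    """R-PAS as the geometric mean of the normalized signature genes."""
    return geometric_mean([normalized[g] for g in signature_genes])


def classify(scores):
    """High if the score is at or above the cohort median (50th percentile)."""
    cut = median(scores.values())
    return {sid: ("High" if s >= cut else "Low") for sid, s in scores.items()}


# Toy data: two housekeeping genes and two hypothetical signature genes
HK, SIG = ["HK1", "HK2"], ["SIG1", "SIG2"]
raw = {
    "S1": dict(counts={"HK1": 207, "HK2": 207, "SIG1": 407, "SIG2": 107},
               pos=[1000, 500, 250], neg=[4, 5, 6]),
    "S2": dict(counts={"HK1": 414, "HK2": 414, "SIG1": 214, "SIG2": 214},
               pos=[2000, 1000, 500], neg=[8, 10, 12]),
}
scores = {
    sid: rpas_score(normalize_sample(d["counts"], d["pos"], d["neg"], HK,
                                     pos_ref=500.0, hk_ref=200.0), SIG)
    for sid, d in raw.items()
}
calls = classify(scores)
print(scores, calls)
```

Because the cutpoint is defined on the cohort distribution, classification can only be run once all samples have been scored, which is consistent with the data-lock step in Protocol 3.3.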

Protocol 3.3: Blinded PROBE Analysis

Objective: To evaluate the predictive value of R-PAS for Mektinib benefit.

- Blinding: The biomarker testing lab receives de-identified samples. A third-party statistician holds the key linking sample ID to treatment arm and clinical outcome.

- Data Lock: Upon completion of R-PAS generation for all n=600 samples, the biomarker data, treatment assignment, and clinical data (PFS, OS) are merged by the independent statistician.

- Primary Analysis: A Cox regression model is fitted for PFS with terms for treatment (Mektinib vs. Placebo), biomarker status (R-PAS High vs. Low), and their interaction. A significant interaction term (p<0.05, two-sided) indicates predictive value.

- Secondary Analyses: Estimate PFS Hazard Ratios for Mektinib vs. Placebo within each biomarker subgroup. Perform sensitivity analyses using alternative pre-specified cutpoints (e.g., 30th, 70th percentiles).
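The link between the subgroup hazard ratios and the interaction term can be illustrated with a Wald-type comparison of the two subgroup estimates. This is a sketch that approximates the interaction test from summary statistics and is not a substitute for fitting the Cox model itself. It assumes the two subgroups are independent, and the hazard ratios and confidence limits below are hypothetical numbers chosen for illustration.

```python
import math
from statistics import NormalDist


def se_from_ci(lower, upper, level=0.95):
    """Standard error of log(HR) recovered from a confidence interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return (math.log(upper) - math.log(lower)) / (2 * z)


def interaction_test(hr_high, se_high, hr_low, se_low):
    """Ratio of subgroup HRs with a two-sided Wald p-value."""
    log_ratio = math.log(hr_high) - math.log(hr_low)
    se = math.sqrt(se_high ** 2 + se_low ** 2)  # independent subgroups assumed
    z = log_ratio / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return math.exp(log_ratio), z, p_value


# Hypothetical subgroup results (Mektinib vs. Placebo)
hr_int, z_stat, p_value = interaction_test(
    hr_high=0.55, se_high=se_from_ci(0.42, 0.72),  # R-PAS High
    hr_low=1.00, se_low=se_from_ci(0.78, 1.28),    # R-PAS Low
)
print(f"HR_int = {hr_int:.2f}, z = {z_stat:.2f}, p = {p_value:.4f}")
```

An interaction hazard ratio below 1 with a small p-value would indicate that the treatment effect is larger in the R-PAS High subgroup, which is the pattern the primary analysis is designed to detect.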

Visualizations

PROBE Biomarker Analysis Workflow

RAS-RAF-MEK-ERK Pathway & Inhibitor

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for R-PAS PROBE Analysis

| Item | Function | Example Product/Cat. No. |

|---|---|---|

| FFPE RNA Isolation Kit | Purifies fragmented RNA from archival tissue. | Qiagen RNeasy FFPE Kit (73504) |

| RNA Quantitation Assay | Accurately quantifies low-concentration RNA. | Invitrogen Qubit RNA HS Assay Kit (Q32852) |

| RNA Quality Assessment | Evaluates RNA fragmentation profile. | Agilent RNA 6000 Nano Kit (5067-1511) |

| Custom nCounter CodeSet | Hybridization probes for 20-gene R-PAS signature. | NanoString Custom CodeSet (Design-specific) |

| nCounter Hybridization Kit | Reagents for target hybridization. | NanoString nCounter Sprint Hybridization Kit (10002519) |

| nCounter SPRINT Cartridge | Cartridge for sample processing/imaging. | NanoString nCounter SPRINT Cartridge (10002520) |

| Normalization Controls | Synthetic RNAs for assay QC & normalization. | NanoString Positive & Negative Control Sets |

| nSolver Analysis Software | Data processing, normalization, and QC. | NanoString nSolver 5.0 Software |

| Digital Slide Scanner | For central pathology review. | Leica Aperio AT2 |

Navigating Pitfalls: Troubleshooting and Optimizing PROBE Trial Execution

Within the framework of the PROBE (Prospective, Randomized, Observational Blinded Evaluation) design for biomarker validation, controlling pre-analytical variables and batch effects is not merely a technical concern but a foundational requirement for clinical utility. A PROBE study's goal is to evaluate a biomarker's ability to predict outcomes in a real-world, prospectively collected cohort. Uncontrolled variation introduced during sample collection, processing, and analysis can create systematic biases (batch effects) that obscure true biological signals, leading to false validation or rejection of a promising biomarker. This document details protocols and application notes to mitigate these risks.

Quantifying Key Pre-analytical Variables

The following table summarizes the impact of common pre-analytical variables on major analyte classes, based on recent meta-analyses.

Table 1: Impact of Common Pre-analytical Variables on Biomarker Stability

| Variable | Analyte Class | Effect | Acceptable Delay/Deviation (Typical) |

|---|---|---|---|

| Room Temp. Delay | Cell-Free DNA (cfDNA) | ↑ Fragmentation, ↓ yield. Increase in genomic DNA contamination from lysed blood cells. | < 2 hours |

| Room Temp. Delay | Phosphoproteins (pSTAT3) | Rapid signal loss (>50% in 30 mins). Phosphorylation states are highly labile. | Immediate freeze (or stabilize) |

| Room Temp. Delay | Metabolites (e.g., Lactate) | Rapid concentration changes due to ongoing glycolysis in cells. | < 30 minutes |

| Freeze-Thaw Cycles | Cytokines (IL-6) | Gradual degradation; >2 cycles can cause significant signal loss (>20%). | ≤ 2 cycles |

| Freeze-Thaw Cycles | microRNA | Relatively stable, but >3 cycles can cause degradation and profile shifts. | ≤ 3 cycles |

| Centrifugation Force | Extracellular Vesicles | Insufficient force fails to pellet small EVs; excessive force can cause rupture and protein co-pelleting. | ~100,000 x g for ≥70 mins for small EVs (10,000-20,000 x g pellets mainly larger EVs) |

| Collection Tube | Serum vs. Plasma | Serum shows higher levels of platelet-derived miRNAs; EDTA plasma avoids coagulation but requires timely processing. | Choose one matrix and standardize across sites |
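Limits such as those in Table 1 are easiest to enforce when they are encoded once and checked automatically against the sample-handling log. The sketch below covers only the time-delay and freeze-thaw rows. The limits are transcribed from the table, while the dictionary layout, function name, and example values are illustrative assumptions.

```python
# Limits transcribed from Table 1; structure and names are illustrative.
LIMITS = {
    "cfDNA": {"max_rt_delay_min": 120},      # room-temperature delay must be < 2 hours
    "metabolite": {"max_rt_delay_min": 30},  # must be < 30 minutes
    "cytokine": {"max_freeze_thaw": 2},      # at most 2 cycles
    "microRNA": {"max_freeze_thaw": 3},      # at most 3 cycles
}


def preanalytical_flags(analyte, rt_delay_min=0, freeze_thaw_cycles=0):
    """Return a list of protocol deviations for one aliquot."""
    limits = LIMITS[analyte]
    flags = []
    max_delay = limits.get("max_rt_delay_min")
    if max_delay is not None and rt_delay_min >= max_delay:
        flags.append(f"room-temperature delay {rt_delay_min} min (limit < {max_delay})")
    max_cycles = limits.get("max_freeze_thaw")
    if max_cycles is not None and freeze_thaw_cycles > max_cycles:
        flags.append(f"{freeze_thaw_cycles} freeze-thaw cycles (limit <= {max_cycles})")
    return flags


print(preanalytical_flags("cfDNA", rt_delay_min=150))
print(preanalytical_flags("cytokine", freeze_thaw_cycles=2))
```

Flagged aliquots need not be discarded automatically; the deviation can instead be carried forward as a covariate for sensitivity analysis.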

Experimental Protocols for Mitigation

Protocol 3.1: Standardized Blood Collection & Processing for Multi-Omics

Objective: To obtain high-quality plasma, serum, and PBMCs from a single blood draw for genomic, proteomic, and metabolomic analysis.

- Materials: Tourniquet, 21G needle, Vacutainer system: Cell-Free DNA BCT (Streck), PAXgene Blood RNA tube, K2EDTA tube, Serum Separator Tube (SST), pre-chilled PBS, Ficoll-Paque PLUS, cryovials, liquid nitrogen.

- Procedure:

  a. Order of Draw: Follow the CLSI order of draw and the tube manufacturers' instructions: draw the clot-activator tube (SST) before the EDTA tube, then the Cell-Free DNA BCT, with the PAXgene Blood RNA tube drawn last.

  b. For Plasma (cfDNA/EV): Gently invert the Cell-Free DNA BCT 10x. Centrifuge at 1600 x g for 20 min at 4°C within 2 h. Aliquot the supernatant (plasma) into cryovials, avoiding the buffy coat. Flash freeze.

  c. For Serum: Allow the SST to clot upright for 30 min at room temperature. Centrifuge at 2000 x g for 15 min. Aliquot and flash freeze.

  d. For PBMCs: Process EDTA blood within 2 h. Dilute 1:1 with PBS. Layer over Ficoll. Centrifuge at 400 x g for 30 min (no brake). Harvest the PBMC ring. Wash twice in PBS. Cryopreserve in 10% DMSO/FBS.

Protocol 3.2: Automated Nucleic Acid Extraction with Internal Spiked-In Controls

Objective: To minimize technical variation in RNA/DNA extraction, enabling batch effect correction.

- Materials: QIAamp Circulating Nucleic Acid Kit, robotic liquid handler (e.g., QIAcube), synthetic C. elegans miRNA (cel-miR-39) and Arabidopsis thaliana mRNA (At1g13320) spike-in mixes, RNase-free water, magnetic bead-based purification plates.

- Procedure:

  a. Spike-In Addition: Add a fixed volume of exogenous spike-in control mix to each lysate or constant volume of plasma before extraction begins.

  b. Automated Extraction: Load samples onto the robotic handler. Use a single, validated protocol for all samples. Elute in a constant low-volume buffer (e.g., 30 µL).

  c. QC: Quantify yield via fluorometry (Qubit). Use qPCR for the spike-in controls to calculate extraction efficiency for each sample. Normalize downstream data (qPCR, sequencing) based on spike-in recovery.
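The spike-in normalization in the QC step can be sketched as follows. This is one common convention, not necessarily the one a given lab uses: recovery is expressed relative to the cohort median spike-in Cq, and 100% amplification efficiency is assumed (one Cq unit equals a two-fold change). The Cq values are toy numbers, and the field names are illustrative.

```python
from statistics import median


def spike_in_normalize(samples):
    """Adjust target Cq values for per-sample spike-in recovery.

    samples: dict of sample_id -> {"cq_spike": float, "cq_target": float}
    Assumes ~100% PCR efficiency, so recovery = 2 ** -(Cq shift).
    """
    reference_cq = median(s["cq_spike"] for s in samples.values())
    adjusted = {}
    for sample_id, s in samples.items():
        shift = s["cq_spike"] - reference_cq  # positive shift = lower recovery
        adjusted[sample_id] = {
            "relative_recovery": 2 ** (-shift),
            "cq_target_adj": s["cq_target"] - shift,
        }
    return adjusted


# Toy example: identical biology, differing extraction efficiency
cohort = {
    "A": {"cq_spike": 20.0, "cq_target": 28.0},
    "B": {"cq_spike": 21.0, "cq_target": 29.0},
    "C": {"cq_spike": 22.0, "cq_target": 30.0},
}
normalized = spike_in_normalize(cohort)
for sample_id, values in normalized.items():
    print(sample_id, values)
```

In this toy cohort the three samples differ only in extraction efficiency, so their adjusted target Cq values coincide after normalization. Samples with very low relative recovery may be better re-extracted than corrected.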

Protocol 3.3: Randomized Plate Layout and ComBat Batch Correction for Immunoassays

Objective: To statistically identify and remove batch effects from high-throughput protein assay data.

- Materials: Multiplex immunoassay platform (e.g., Luminex, Olink), sample cohort, quality control (QC) reference plasma (pooled from many donors), assay kit, planning software.

- Procedure:

  a. Randomized Layout: Do not segregate cases and controls onto separate plates; distribute them across all plates. Use a random number generator to assign sample positions. Include the same QC reference plasma in duplicate on every plate (e.g., positions A1 and H12).

  b. Assay Execution: Perform the assay per manufacturer's instructions, processing no more than 2 plates per day (a "batch").

  c. Data Correction: Log2-transform the raw concentration data. Adjust for plate effects either by linear regression on the QC reference values or with the ComBat algorithm (from the sva R package), using plate ID as the batch variable; ComBat adjusts both the mean and the variance across batches.
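The layout and correction steps can be sketched in Python using only the standard library. Two caveats apply. First, simple shuffling does not guarantee case/control balance on every plate, so a stratified scheme may be preferable in small studies. Second, the correction shown is a location-only adjustment that centers each plate on its QC reference wells; it is a simpler stand-in for, not an implementation of, ComBat. Function names and the 96-well format are assumptions.

```python
import random
from statistics import mean

ROWS = "ABCDEFGH"
COLUMNS = range(1, 13)
QC_WELLS = ("A1", "H12")  # QC reference plasma positions on every plate


def randomized_layout(sample_ids, seed=0):
    """Shuffle samples and assign them to 96-well plates, reserving QC wells."""
    wells = [f"{r}{c}" for r in ROWS for c in COLUMNS if f"{r}{c}" not in QC_WELLS]
    shuffled = list(sample_ids)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the layout auditable
    plates = []
    for start in range(0, len(shuffled), len(wells)):
        plate = {well: "QC_REF" for well in QC_WELLS}
        plate.update(zip(wells, shuffled[start:start + len(wells)]))
        plates.append(plate)
    return plates


def center_on_qc(log2_values_by_plate, qc_log2_by_plate):
    """Shift each plate so its QC reference mean matches the grand QC mean."""
    grand_mean = mean(mean(v) for v in qc_log2_by_plate.values())
    return {
        plate: [x - (mean(qc_log2_by_plate[plate]) - grand_mean) for x in values]
        for plate, values in log2_values_by_plate.items()
    }


plates = randomized_layout([f"SUBJ{i:03d}" for i in range(100)], seed=42)
corrected = center_on_qc(
    {"P1": [5.0], "P2": [6.0]},                # log2 sample values (toy)
    {"P1": [10.0, 10.0], "P2": [11.0, 11.0]},  # log2 QC reference values (toy)
)
print(len(plates), corrected)
```

Recording the seed alongside the plate map lets the layout be regenerated exactly during an audit.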

Visualization of Workflows and Concepts

Diagram 1: Integrated sample lifecycle from collection to analysis.

Diagram 2: Components of observed data and mitigation targets.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Pre-analytical Control

| Item | Primary Function | Example Use Case |

|---|---|---|

| Cell-Free DNA BCT Tubes | Chemical stabilization of nucleated blood cells to prevent genomic DNA release, enabling room temp. transport. | Multicenter PROBE studies for liquid biopsy. |

| PAXgene Blood RNA Tubes | Immediate lysis and stabilization of RNA in situ, freezing transcriptional profiles at draw time. | Gene expression profiling from whole blood. |

| Exogenous Synthetic Spike-Ins | Internal controls for normalization of extraction efficiency and technical variation in qPCR/NGS. | microRNA-seq from low-input plasma samples. |

| Universal QC Reference Plasma | Pooled human plasma used as an inter-assay and inter-batch calibrator to monitor and correct drift. | Longitudinal multiplex cytokine assays. |

| Phosphatase/Protease Inhibitor Cocktails | Added immediately to lysis buffers to preserve labile post-translational modifications (e.g., phosphorylation). | Phospho-protein signaling analysis in PBMCs. |

| Automated Nucleic Acid Extraction System | Robotic handling for consistent binding, wash, and elution steps, minimizing operator-induced variation. | High-throughput DNA/RNA extraction for >1000 samples. |

| Magnetic Bead-Based Purification Kits | Flexible, high-recovery purification of various analytes (DNA, RNA, protein) with amenability to automation. | Simultaneous isolation of cfDNA and EVs from plasma. |

In the context of a PROBE (Prospective-Specimen-Collection, Retrospective-Blinded-Evaluation) design for biomarker validation, minimizing bias is paramount to establishing clinical utility. Blinding is a cornerstone methodology that prevents conscious or subconscious influences on the conduct, analysis, and interpretation of a study. Maintaining the integrity of the blinding and having robust protocols for inadvertent unblinding events are critical to the validity of the research outcomes. This document provides application notes and detailed protocols for mitigating bias through blinding procedures within biomarker research.

Table 1: Impact of Blinding on Reported Effect Sizes in Clinical Research (Meta-Analysis Data)

| Study Element | Odds Ratio / Effect Size (Unblinded) | Odds Ratio / Effect Size (Blinded) | Relative Difference | Citation (Example) |

|---|---|---|---|---|

| Subjective Primary Outcome | 0.87 | 0.72 | +21% (Exaggeration) | Hróbjartsson et al., 2012 |

| Objective Primary Outcome | 0.90 | 0.89 | +1% (Minimal) | Hróbjartsson et al., 2012 |