Revolutionizing Drug Discovery: How AI Tools Predict Enzyme-Substrate Matching with Unprecedented Accuracy

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the latest AI tools for enzyme-substrate matching. We cover the foundational principles of why traditional methods fall short and how AI bridges the gap, explore the core methodologies and practical applications of leading tools like AlphaFold, DeepFRI, and substrate-specific models, detail common challenges in implementation and strategies for optimizing predictions, and critically validate performance through comparative analysis with experimental data. The article concludes with a synthesis of the field's current state and its profound implications for accelerating targeted drug design and enzyme engineering.

The AI Revolution in Enzyme-Substrate Prediction: Why Traditional Methods Are No Longer Enough

In pharmaceutical research, a large share of small-molecule therapeutics act by modulating enzyme activity. This modulation (inhibition, activation, or allosteric regulation) hinges on precise molecular recognition between an enzyme and its endogenous substrate. Accurately predicting Enzyme-Substrate (ES) pairs is therefore a central problem in rational drug design, and a natural first target for AI tools in biochemical research. Mapping the enzyme-substrate interactome enables the identification of novel drug targets, the prediction of off-target effects, and the design of high-specificity inhibitors.

The Quantitative Scale of the Problem

The gap between known enzymes and their validated substrates presents a massive knowledge deficit.

Table 1: The Known vs. Unknown in Human Enzymology

| Metric | Approximate Count | Data Source & Year | Implication for Drug Discovery |

|---|---|---|---|

| Human Protein-Coding Genes | ~20,000 | Ensembl 2023 | Potential pool of all proteins. |

| Confirmed Enzymes (Human) | ~7,500 | BRENDA 2024 | Direct druggable targets. |

| Enzymes with ≥1 Validated Substrate | ~4,200 | BRENDA, UniProt 2024 | Basis for current rational design. |

| Experimentally Validated Unique ES Pairs | ~150,000 | STRING DB, MetaNetX 2023 | Limited ground truth for AI training. |

| In Silico Predicted Potential ES Pairs | Tens of Millions | Various Studies | Vast, untapped target & off-target space. |

Core Methodologies for Experimental Validation

AI predictions require validation through established experimental protocols. Below are detailed methodologies for key techniques.

Isothermal Titration Calorimetry (ITC) for Binding Affinity

Objective: To measure the binding thermodynamics (Kd, ΔH, ΔS) of a purified enzyme with a candidate substrate or inhibitor. Protocol:

- Sample Preparation: Purify the recombinant enzyme and synthesize/purify the substrate. Dialyze both into identical buffer (e.g., 20 mM HEPES, 150 mM NaCl, pH 7.4).

- Instrument Setup: Load the cell with enzyme solution (typically 10-100 µM). Fill the syringe with substrate solution at roughly 10-20x the enzyme concentration in the cell, choosing the cell concentration with the expected Kd in mind.

- Titration: Perform automated injections of substrate into the enzyme cell at constant temperature (e.g., 25°C). The instrument measures the heat released or absorbed after each injection.

- Data Analysis: Integrate heat peaks per injection. Fit binding isotherm to a one-site binding model using the instrument's software to derive Kd, stoichiometry (n), enthalpy (ΔH), and entropy (ΔS).
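The data-analysis step above reports Kd and ΔH from the fit; the free energy and entropy terms then follow from ΔG = RT ln(Kd) = ΔH - TΔS. The sketch below illustrates that arithmetic only. It assumes 1:1 binding and a 1 M standard state, and the function name and example values are illustrative rather than taken from any instrument software.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def itc_thermodynamics(kd_molar, delta_h_kcal, temp_k=298.15):
    """Derive dG, -T*dS and dS from a fitted Kd (M) and dH (kcal/mol)."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    delta_g = R_KCAL * temp_k * math.log(kd_molar)  # dG = RT ln(Kd)
    minus_t_delta_s = delta_g - delta_h_kcal        # from dG = dH - T*dS
    delta_s = -minus_t_delta_s / temp_k             # kcal/(mol*K)
    return {"dG": delta_g, "dH": delta_h_kcal,
            "-TdS": minus_t_delta_s, "dS": delta_s}

# Illustrative values: Kd = 1 µM, dH = -10 kcal/mol at 25 °C
result = itc_thermodynamics(kd_molar=1e-6, delta_h_kcal=-10.0)
print({key: round(value, 4) for key, value in result.items()})
```

A positive -TΔS term, as in this example, indicates that binding is enthalpy-driven and entropically penalized.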

Coupled Enzyme Activity Assay (Spectrophotometric)

Objective: To determine kinetic parameters (Km, kcat) for a predicted substrate. Protocol:

- Reaction Design: Choose a coupled system where the product of the primary enzyme (E1) reaction is a substrate for a second, indicator enzyme (E2) that generates a measurable signal (e.g., NADH oxidation/reduction at 340 nm).

- Assay Setup: In a 96-well plate, mix fixed [E1] with varying [Substrate] in reaction buffer. Include excess concentrations of coupling enzymes and their co-factors.

- Kinetic Measurement: Initiate reaction. Monitor absorbance (A340) continuously for 5-10 minutes using a plate reader.

- Michaelis-Menten Analysis: Calculate initial velocity (v0) from the linear slope of A340 vs. time. Plot v0 vs. [S]. Fit data to the Michaelis-Menten equation: v0 = (Vmax * [S]) / (Km + [S]) to extract Km and Vmax. Calculate kcat = Vmax / [E1].
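As a minimal sketch of the analysis step above, the code below converts an A340 slope to a rate with the Beer-Lambert law and estimates Km and Vmax by Hanes-Woolf linearization ([S]/v0 = [S]/Vmax + Km/Vmax). The linearization is a dependency-free stand-in chosen for illustration; nonlinear regression on the Michaelis-Menten equation is generally preferred for noisy data. The data are synthetic, and the 1 cm path length and enzyme concentration are assumptions (plate wells usually have shorter path lengths).

```python
NADH_EXTINCTION = 6220.0  # M^-1 cm^-1 at 340 nm

def rate_from_slope(slope_a340_per_s, path_cm=1.0):
    """Convert an A340 slope (absorbance/s) to a rate in µM/s."""
    return abs(slope_a340_per_s) / (NADH_EXTINCTION * path_cm) * 1e6

def fit_michaelis_menten(s_conc, v0):
    """Estimate (Km, Vmax) by least squares on the Hanes-Woolf form."""
    if len(s_conc) != len(v0) or len(s_conc) < 3:
        raise ValueError("need at least three paired measurements")
    x = list(s_conc)
    y = [s / v for s, v in zip(s_conc, v0)]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx                    # equals 1 / Vmax
    intercept = mean_y - slope * mean_x  # equals Km / Vmax
    vmax = 1.0 / slope
    return intercept * vmax, vmax

# Synthetic, noise-free data generated from Km = 50 µM, Vmax = 2 µM/s
substrate = [5, 10, 25, 50, 100, 250, 500]
rates = [2.0 * s / (50.0 + s) for s in substrate]
km, vmax = fit_michaelis_menten(substrate, rates)

enzyme_conc = 0.01          # µM of E1, assumed for illustration
kcat = vmax / enzyme_conc   # s^-1
example_rate = rate_from_slope(0.00622)  # µM/s for a 1 cm path
```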

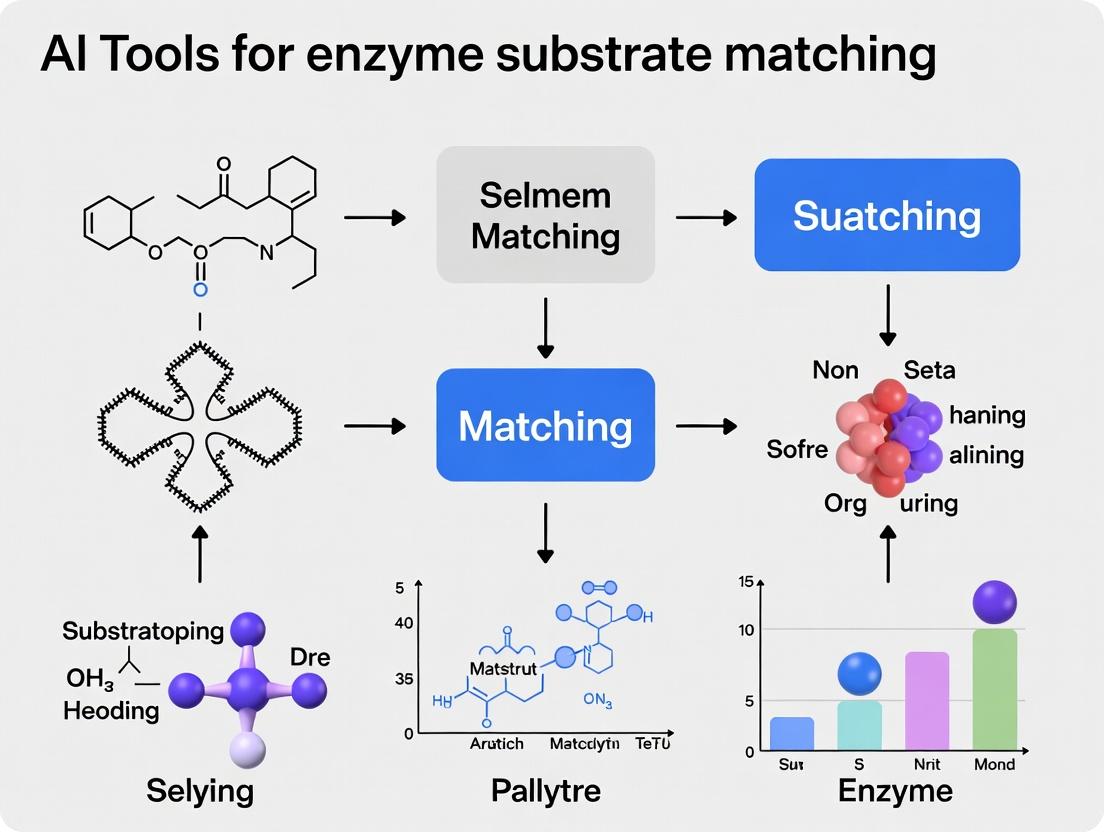

AI-Driven Workflow for ES Pair Prediction

The integration of AI tools creates a cyclical workflow of prediction, prioritization, and experimental validation.

Diagram Title: AI-Powered ES Pair Prediction and Validation Cycle

Key Research Reagent Solutions

Successful experimental validation relies on high-quality, specific reagents.

Table 2: Essential Research Toolkit for ES Validation

| Reagent / Material | Function & Importance in ES Research |

|---|---|

| Recombinant Purified Enzyme (Tagged) | Essential for binding/activity assays. Tags (e.g., His, GST) enable uniform purification and immobilization. |

| Synthetic Substrate Library | Defined chemical libraries allow high-throughput screening of AI-predicted substrates. |

| Fluorescent/Chromogenic Probe Substrates | Enable real-time, sensitive detection of enzymatic activity, especially for kinetic assays. |

| ITC Buffer Kit | Pre-formulated, degassed buffers ensure stable baselines and accurate thermodynamic measurements. |

| Coupled Enzyme System Kits | Pre-optimized mixtures of coupling enzymes (e.g., lactate dehydrogenase, pyruvate kinase) for reliable activity assays. |

| Inhibitor/Control Compounds | Known inhibitors (positive controls) and inactive analogs (negative controls) are critical for assay validation. |

| High-Affinity Ni-NTA/Streptavidin Plates | For immobilizing tagged enzymes or biotinylated substrates in surface-based binding assays (SPR, BLI). |

Signaling Pathway Context: ES Prediction in Kinase Drug Discovery

Kinases are a prime drug target class where ES prediction is critical. Mapping a kinase to its physiological substrates reveals its role in disease pathways.

Diagram Title: Kinase-Substrate Cascade in a Pro-Survival Pathway

The critical challenge of predicting enzyme-substrate pairs is not merely an academic exercise. It is the foundational step in de-risking drug discovery. By leveraging AI tools to expand the known enzymome with high-fidelity predictions, researchers can systematically identify novel targets within disease pathways, design inhibitors with exquisite specificity to minimize side effects, and repurpose existing drugs for new indications. The integration of computational prediction with rigorous experimental validation, as outlined in this guide, creates a powerful engine for generating the fundamental knowledge required to develop the next generation of therapeutics.

The accurate prediction of enzyme-substrate interactions and catalytic activity is a cornerstone of modern drug discovery and green chemistry. For decades, researchers have relied on two primary computational pillars: classical molecular docking for high-throughput screening of binding poses, and Quantum Mechanics/Molecular Mechanics (QM/MM) for detailed mechanistic studies. While indispensable, these methods are fundamentally limited by the trade-off between computational speed and physical accuracy. This trade-off is the bottleneck that motivates AI tools for scalable, predictive enzyme-substrate matching. This guide details these limitations and the quantitative case for next-generation solutions.

Core Limitations: A Quantitative Analysis

Limitations of Classical Molecular Docking

Classical docking employs empirical scoring functions to predict the binding pose and affinity of a ligand within a protein's active site. Its primary limitations stem from simplified physics.

Key Shortcomings:

- Static Receptor Model: Proteins are treated as rigid or semi-rigid bodies, ignoring essential dynamics like side-chain rearrangements and loop movements upon substrate binding.

- Inaccurate Scoring Functions: Force fields are parameterized for general protein-ligand interactions and fail to capture the unique electrostatic and polarization effects critical for enzyme catalysis.

- Neglect of Chemical Reactivity: Docking predicts binding, not reaction. It cannot model bond formation/breaking, transition states, or reaction energetics.

Table 1: Performance Metrics of Classical Docking in Enzyme Contexts

| Metric | Typical Range/Value | Implication for Enzyme Research |

|---|---|---|

| Pose Prediction Accuracy (RMSD < 2.0 Å) | 60-80% for rigid targets; <50% for flexible enzymes | High risk of missing catalytically relevant binding modes. |

| Docking Runtime per Ligand | 30 seconds to 5 minutes (single CPU core) | Enables virtual screening of 10⁵-10⁶ compounds. |

| Correlation (R²) of Score vs. Experimental Ki/Kd | 0.3 - 0.6 | Poor quantitative prediction of binding affinity, especially for transition-state analogs. |

| Treatment of Solvent | Implicit or static water molecules | Fails to model specific catalytic water molecules. |

| Treatment of Metal Ions | Often inaccurate charge/parameterization | Critical failure in metalloenzyme studies. |

Limitations of Quantum Mechanics/Molecular Mechanics (QM/MM)

QM/MM methods partition the system: a QM region (active site, substrate) is treated with quantum chemistry, while the MM region (protein bulk, solvent) uses molecular mechanics. This provides accuracy at extreme computational cost.

Key Shortcomings:

- Prohibitive Computational Cost: QM calculations scale poorly with system size (O(N³) to O(N⁷)), limiting QM region size and sampling.

- Limited Conformational Sampling: Due to cost, studies are often restricted to a single reaction pathway or a handful of snapshots from an MM simulation.

- Sensitivity to QM/MM Partitioning: Results can be highly dependent on the chosen boundary and the treatment of the link between regions.

- High Expertise Barrier: Requires careful parameterization and deep expertise to avoid artifacts.

Table 2: Computational Cost of QM/MM Methods for Enzyme Catalysis

| QM Method / MM Region Size | Typical QM Region Size (Atoms) | Estimated Wall Time for Energy+Forces | Estimated Wall Time for Reaction Pathway | Primary Use Case |

|---|---|---|---|---|

| Semiempirical (e.g., PM6)/~20k atoms | 50-100 | 1-10 minutes | 1-7 days | Preliminary scanning, large systems. |

| Density Functional Theory (DFT)/~10k atoms | 50-200 | 30 min - 4 hours | 2 weeks - 3 months | Standard mechanistic study. |

| High-Level Ab Initio (e.g., CCSD(T))/~5k atoms | 20-50 | 5 - 24 hours | 6 months - 2+ years (often infeasible) | Benchmarking, small critical regions. |

Experimental Protocols for Benchmarking

To objectively evaluate any new method (including AI), it must be benchmarked against classic docking and QM/MM on standardized tasks.

Protocol 1: Benchmarking Pose Prediction vs. Classical Docking

- Dataset Curation: Select a diverse set of 50-100 enzyme-ligand complexes from the PDB, ensuring high-resolution crystallographic data (<2.0 Å) and a variety of enzyme classes (hydrolases, oxidoreductases, etc.).

- Preparation: Prepare protein and ligand structures using a standard pipeline (e.g., protonation via the reduce program, assignment of AMBER/CHARMM force field parameters with tleap, or MCPB.py for metals).

- Classical Docking Control: Perform blind docking with 3 leading software packages (e.g., AutoDock Vina, Glide, GOLD). Use default scoring functions and 20-50 docking runs per ligand. Record top-scoring pose and its RMSD from the crystal structure.

- Evaluation Metric: Calculate the success rate (% of cases with RMSD < 2.0 Å) and the average RMSD for the top-scoring pose across the dataset.
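The evaluation metric above can be sketched as follows. This is a simplified illustration: it assumes the docked and crystal ligands share the same atom ordering and coordinate frame, and it applies no correction for symmetry-equivalent atoms. The coordinates and the extra RMSD values are made up for the example.

```python
import math

def pose_rmsd(pred, ref):
    """Heavy-atom RMSD (Å) between two poses with matching atom order."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("poses must be non-empty and the same length")
    squared = sum((p[i] - r[i]) ** 2
                  for p, r in zip(pred, ref) for i in range(3))
    return math.sqrt(squared / len(pred))

def benchmark_summary(rmsds, cutoff=2.0):
    """Return (success rate in %, mean RMSD) for top-scoring poses."""
    successes = sum(1 for r in rmsds if r < cutoff)
    return 100.0 * successes / len(rmsds), sum(rmsds) / len(rmsds)

crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (1.5, 1.5, 1.0)]  # shifted 1 Å in z
rmsd_value = pose_rmsd(docked, crystal)

# Combine with three further hypothetical top-pose RMSDs
success_rate, mean_rmsd = benchmark_summary([rmsd_value, 0.8, 3.4, 1.9])
```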

Protocol 2: Benchmarking Reaction Barrier Prediction vs. QM/MM

- System Selection: Choose a well-studied enzymatic reaction with reliable experimental kinetic data (e.g., kcat) and prior QM/MM characterization (e.g., chorismate mutase, HIV-1 protease).

- Model Construction: Build the solvated enzyme-substrate complex from a crystal structure. Define the QM region (substrate + key residues/cofactors).

- QM/MM Reference Calculation: Perform a minimum energy pathway (MEP) calculation using a validated DFT functional (e.g., B3LYP/6-31G(d)) and an MM force field (e.g., CHARMM36) via software like CP2K or TeraChem. Use the Nudged Elastic Band (NEB) or String method to locate the transition state. Calculate the activation free energy (ΔG‡) using umbrella sampling or thermodynamic integration over the reaction coordinate.

- New Method Test: Apply the novel method (e.g., a machine learning potential) to the same system, using identical initial structures and reaction coordinate definition.

- Evaluation Metric: Compare the predicted ΔG‡ and reaction energy (ΔG_rxn) to the QM/MM reference and the experimental value. Report mean absolute error (MAE) and required computational resources (CPU/GPU hours).
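To compare a computed barrier with experiment, kcat is commonly converted to an apparent activation free energy with the Eyring equation, ΔG‡ = -RT ln(kcat·h / (kB·T)). The sketch below shows that conversion together with the MAE metric. It assumes a transmission coefficient of 1 and that the chemical step is rate-limiting; the barrier values in the MAE example are invented for illustration.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J*s
R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)

def eyring_barrier(kcat_per_s, temp_k=298.15):
    """Apparent activation free energy (kcal/mol) from kcat (s^-1)."""
    if kcat_per_s <= 0:
        raise ValueError("kcat must be positive")
    return -R_KCAL * temp_k * math.log(kcat_per_s * H / (K_B * temp_k))

def mean_absolute_error(predicted, reference):
    """MAE between paired predicted and reference values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)

experimental_barrier = eyring_barrier(1.0)  # kcat = 1 s^-1 at 25 °C

# Hypothetical barriers (kcal/mol): new method vs. QM/MM reference
mae = mean_absolute_error([15.2, 18.1], [14.7, 18.9])
```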

Visualizing the Workflow and Limitations

Diagram 1: Classic vs. AI-Enhanced Enzyme Analysis Workflow

Diagram 2: The Accuracy vs. Speed Trade-Off

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Enzyme Docking & QM/MM Studies

| Tool/Reagent Name | Type/Category | Primary Function in Research |

|---|---|---|

| AutoDock Vina / GNINA | Docking Software | Open-source tools for high-throughput molecular docking and pose scoring. GNINA incorporates CNN scoring. |

| Schrödinger Suite (Glide) | Commercial Docking Software | Industry-standard for robust protein-ligand docking with advanced scoring functions. |

| CHARMM36 / AMBER ff19SB | Molecular Mechanics Force Field | Provides parameters for simulating protein and organic molecule dynamics and energetics. |

| GAFF2 | General Force Field | Parameterizes novel ligand molecules for use with AMBER/OpenMM. |

| CP2K | QM/MM Software | Performs ab initio and DFT-based QM/MM molecular dynamics, suitable for large enzyme systems. |

| ORCA | Quantum Chemistry Software | Computes high-level electronic structure for QM regions (DFT, coupled-cluster) for single-point energies. |

| OpenMM | MD Simulation Library | GPU-accelerated toolkit for running classical and mixed MM/MD simulations, enabling enhanced sampling. |

| PDB2PQR / PROPKA | Protein Preparation Tool | Assigns protonation states of amino acids at a given pH, critical for accurate electrostatics. |

| PyMOL / VMD | Visualization Software | For visualizing molecular structures, trajectories, docking poses, and active site interactions. |

| Rosetta | Protein Modeling Suite | Used for enzyme design and predicting protein-ligand interactions with sophisticated energy functions. |

The limitations of classic docking (lack of reactivity, poor scoring) and QM/MM (prohibitive cost, limited sampling) create a critical gap between high-throughput discovery and high-accuracy validation. This gap directly impedes the acceleration of enzyme design and drug discovery. The compelling need for speed and scale without sacrificing quantum-level accuracy forms the foundational thesis for integrating AI tools—such as machine-learned potentials, equivariant neural networks, and deep learning scoring functions—into enzyme substrate matching research. These next-generation methods promise to unify the workflow, offering QM/MM-fidelity predictions at docking-appropriate speeds, thereby enabling the exploration of chemical space at an unprecedented scale.

Within the critical research axis of AI tools for enzyme substrate matching, the accurate computational modeling of molecular interactions is paramount. This guide details the core AI methodologies—Machine Learning (ML), Deep Learning (DL), and Graph Neural Networks (GNNs)—that are revolutionizing our ability to predict binding affinities, reaction pathways, and substrate specificity, thereby accelerating rational enzyme design and drug discovery.

Foundational Concepts & Data Representation

Molecular Descriptors for Traditional ML

Traditional ML models require fixed-length feature vectors. Common descriptors include:

- Physicochemical Properties: Molecular weight, logP (lipophilicity), topological polar surface area (TPSA), count of hydrogen bond donors/acceptors.

- Fingerprints: Binary bit vectors representing the presence or absence of specific substructures (e.g., ECFP, MACCS keys).

- 3D Descriptors: Potential energy, dipole moment, spatial moments (from geometries optimized by tools like Gaussian or RDKit).
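To make the fingerprint idea concrete, the sketch below represents each fingerprint as the set of its "on" bit positions, expands it to a fixed-length vector for a traditional ML model, and compares two fingerprints with the Tanimoto coefficient. The bit positions are invented for illustration; in practice they would come from a cheminformatics toolkit.

```python
def to_bit_vector(on_bits, n_bits):
    """Expand a set of 'on' positions into a fixed-length 0/1 vector."""
    if any(b < 0 or b >= n_bits for b in on_bits):
        raise ValueError("bit index out of range")
    return [1 if i in on_bits else 0 for i in range(n_bits)]

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity: shared on-bits / total distinct on-bits."""
    union = bits_a | bits_b
    if not union:
        return 0.0
    return len(bits_a & bits_b) / len(union)

# Hypothetical on-bits for two substrates
fp_a = {1, 5, 9, 42}
fp_b = {5, 9, 42, 77, 101}

vector_a = to_bit_vector(fp_a, n_bits=128)
similarity = tanimoto(fp_a, fp_b)  # 3 shared bits out of 6 distinct bits
```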

Graph Representation for GNNs

Molecules are natively represented as graphs \( G = (V, E) \), where:

- Nodes (V): Atoms, with features like element type, hybridization, formal charge.

- Edges (E): Bonds, with features like bond type (single, double, aromatic), stereochemistry.

This representation preserves topological structure and is invariant to atom indexing, making it ideal for learning structure-activity relationships.
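A minimal sketch of this representation, using the heavy atoms of ethanol, is shown below. The class and method names are illustrative assumptions, not part of any library. The last lines check that an order-independent summary of the graph is unchanged when the atoms are renumbered.

```python
from dataclasses import dataclass

@dataclass
class MolGraph:
    atoms: list  # one feature dict per atom (node)
    bonds: list  # (atom_i, atom_j, bond_type) tuples (edges)

    def neighbors(self):
        """Adjacency list built from the undirected bonds."""
        adj = {i: [] for i in range(len(self.atoms))}
        for i, j, _ in self.bonds:
            adj[i].append(j)
            adj[j].append(i)
        return adj

    def degree_by_element(self):
        """Order-independent summary: sorted (element, degree) pairs."""
        adj = self.neighbors()
        return sorted((atom["element"], len(adj[i]))
                      for i, atom in enumerate(self.atoms))

carbon = {"element": "C", "formal_charge": 0}
oxygen = {"element": "O", "formal_charge": 0}

# Ethanol heavy atoms numbered C-C-O, then renumbered O-C-C
ethanol = MolGraph(atoms=[carbon, carbon, oxygen],
                   bonds=[(0, 1, "single"), (1, 2, "single")])
ethanol_reindexed = MolGraph(atoms=[oxygen, carbon, carbon],
                             bonds=[(0, 1, "single"), (1, 2, "single")])

same_summary = ethanol.degree_by_element() == ethanol_reindexed.degree_by_element()
```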

Methodological Deep Dive

Machine Learning Pipeline for QSAR

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone application.

Experimental Protocol: QSAR Modeling with Random Forest

- Dataset Curation: Assemble a dataset of molecules with experimentally measured binding affinities (e.g., pIC50, Ki). Public sources include ChEMBL and BindingDB.

- Descriptor Calculation: Use cheminformatics software (e.g., RDKit, Mordred) to compute a comprehensive set of 2D/3D molecular descriptors for each compound.

- Data Preprocessing: Handle missing values, apply feature scaling (standardization), and remove low-variance or highly correlated descriptors.

- Dataset Splitting: Split data into training (70%), validation (15%), and hold-out test (15%) sets using stratified sampling based on activity range.

- Model Training: Train a Random Forest regressor/classifier on the training set. Optimize hyperparameters (number of trees, max depth) via grid search on the validation set.

- Model Evaluation: Assess on the test set using metrics: Mean Absolute Error (MAE) for regression; ROC-AUC for classification.
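The splitting and evaluation steps of this protocol can be sketched without external libraries. The split below bins compounds by sorted activity and divides each bin 70/15/15, which is one simple way to stratify on activity range; ROC-AUC is computed as the fraction of positive/negative pairs ranked correctly. Function names, bin count, and data are illustrative assumptions.

```python
import random

def stratified_split(activities, n_bins=5, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split indices into train/val/test, stratified by activity bins."""
    rng = random.Random(seed)
    order = sorted(range(len(activities)), key=lambda i: activities[i])
    bin_size = -(-len(order) // n_bins)  # ceiling division
    train, val, test = [], [], []
    for start in range(0, len(order), bin_size):
        block = order[start:start + bin_size]
        rng.shuffle(block)
        n_train = round(fractions[0] * len(block))
        n_val = round(fractions[1] * len(block))
        train += block[:n_train]
        val += block[n_train:n_train + n_val]
        test += block[n_train + n_val:]
    return train, val, test

def roc_auc(labels, scores):
    """ROC-AUC as the probability a positive outranks a negative."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Synthetic pIC50 values for 100 compounds
pic50 = [4.0 + 0.05 * i for i in range(100)]
train, val, test = stratified_split(pic50)

auc = roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.4, 0.1])
```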

Deep Learning: Convolutional Neural Networks on Molecular Graphs

Graph Convolutional Networks (GCNs) operate directly on the graph structure.

Experimental Protocol: Training a GCN for Property Prediction

- Graph Construction: Convert SMILES strings to graph objects with node/edge feature matrices.

- Model Architecture: Stack multiple GCN layers. Each layer updates a node's representation by aggregating features from its neighbors: \( h_v^{(l+1)} = \sigma\left( \sum_{u \in \mathcal{N}(v) \cup \{v\}} \frac{1}{c_{vu}} W^{(l)} h_u^{(l)} \right) \), where \( \mathcal{N}(v) \) are the neighbors of node \( v \), \( c_{vu} \) is a normalization constant, \( W^{(l)} \) is a learnable weight matrix, and \( \sigma \) is a nonlinear activation.

- Graph Pooling: For graph-level prediction (e.g., a property of the entire molecule), a readout function (global mean/sum pooling) aggregates the final node embeddings.

- Training: Use Adam optimizer with weight decay (L2 regularization). Loss function is Mean Squared Error (MSE) for regression or Cross-Entropy for classification.
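The layer update and readout in this protocol can be illustrated with a small forward pass in plain Python. This is a sketch under stated assumptions: the normalization constant is taken as the neighborhood size (mean aggregation), the activation is ReLU, and the weight matrix is fixed to the identity instead of being learned. A real model would use a GNN library and trainable parameters.

```python
def gcn_layer(h, neighbors, weight):
    """One GCN-style update: mean over N(v) ∪ {v}, linear map, ReLU.

    h: list of node feature vectors; neighbors: dict node -> list of nodes;
    weight: matrix indexed as weight[input_dim][output_dim].
    """
    updated = []
    for v in range(len(h)):
        hood = neighbors[v] + [v]  # N(v) ∪ {v}
        c = len(hood)              # normalization constant (assumed: mean)
        agg = [sum(h[u][k] for u in hood) / c for k in range(len(h[v]))]
        z = [sum(agg[k] * weight[k][j] for k in range(len(agg)))
             for j in range(len(weight[0]))]
        updated.append([max(0.0, x) for x in z])  # ReLU
    return updated

def mean_readout(h):
    """Global mean pooling over node embeddings."""
    return [sum(column) / len(h) for column in zip(*h)]

# Three-atom path graph 0-1-2 with 2-dimensional node features
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
adjacency = {0: [1], 1: [0, 2], 2: [1]}
identity = [[1.0, 0.0], [0.0, 1.0]]

hidden = gcn_layer(features, adjacency, identity)
graph_embedding = mean_readout(hidden)
```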

Advanced GNNs: Message Passing Neural Networks

Message Passing Neural Networks (MPNNs) provide a general framework unifying many GNNs.

Key Steps in a Message Passing Phase:

- Message Function: For each edge, a message is created from the sender node, receiver node, and edge features.

- Aggregation Function: For each node, incoming messages are aggregated (sum, mean, max).

- Update Function: The node's representation is updated based on its previous state and the aggregated message.
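The three steps above map onto a short generic routine. In this sketch the message and update functions are deliberately trivial and hand-written (a real MPNN would use learned neural networks for both), aggregation is a sum, and messages are assumed to have the same dimension as node states. All names are illustrative.

```python
def message_passing_step(h, edges, message_fn, update_fn):
    """One message passing round over directed edges (sender, receiver, feature)."""
    aggregated = [[0.0] * len(h[0]) for _ in h]  # assumes message dim == node dim
    for sender, receiver, edge_feature in edges:
        message = message_fn(h[sender], h[receiver], edge_feature)
        aggregated[receiver] = [a + m for a, m in zip(aggregated[receiver], message)]
    return [update_fn(h[v], aggregated[v]) for v in range(len(h))]

def bond_scaled_message(h_sender, h_receiver, bond_order):
    """Toy message: sender features scaled by the bond order."""
    return [bond_order * x for x in h_sender]

def additive_update(h_node, aggregated_message):
    """Toy update: previous state plus aggregated message."""
    return [a + b for a, b in zip(h_node, aggregated_message)]

# Path graph 0-1-2; each undirected bond is listed in both directions
node_states = [[1.0], [2.0], [3.0]]
edges = [(0, 1, 1.0), (1, 0, 1.0), (1, 2, 2.0), (2, 1, 2.0)]

new_states = message_passing_step(node_states, edges,
                                  bond_scaled_message, additive_update)
```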

Quantitative Performance Comparison

Table 1: Model Performance on Key Biochemical Datasets (2023-2024 Benchmarks)

| Model Class | Dataset (Task) | Key Metric | Reported Performance | Key Advantage |

|---|---|---|---|---|

| Random Forest | PDBbind (Binding Affinity) | RMSE (pK) | 1.45 - 1.60 | Interpretable, robust to small data |

| GCN | MoleculeNet (ESOL - Solubility) | RMSE (log mol/L) | 0.58 - 0.82 | Learns structural features automatically |

| Attentive FP | Tox21 (Toxicity) | ROC-AUC | 0.855 | Uses attention for relevant substructures |

| 3D GNN (SchNet) | QM9 (Atomization Energy) | MAE (meV) | < 10 | Incorporates 3D spatial distance information |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for AI-Driven Molecular Interaction Research

| Item | Function/Description |

|---|---|

| RDKit | Open-source cheminformatics library for descriptor calculation, fingerprint generation, and molecule I/O. |

| PyTorch Geometric (PyG) | A library built on PyTorch for easy implementation and training of GNNs. |

| DGL-LifeSci | A toolkit for applying GNNs to various life science tasks, with pre-built models and pipelines. |

| AlphaFold DB | Database of predicted protein structures, providing essential 3D targets for interaction modeling. |

| OpenMM | High-performance toolkit for molecular simulations, used to generate training data or validate predictions. |

| Streamlit | Framework for rapidly building interactive web apps to deploy trained models for research team use. |

Visualized Workflows & Architectures

Title: AI Model Development Pipeline for Molecular Property Prediction

Title: Message Passing Neural Network (MPNN) Architecture

The progression from descriptor-based ML to geometric DL and expressive GNNs provides researchers in enzyme informatics with a powerful, multi-faceted toolkit. By directly learning from molecular graphs, modern GNNs capture intricate electronic and steric interactions critical for predicting enzyme-substrate compatibility. Integrating these models into scalable pipelines represents the forefront of in silico biocatalyst design and rational drug development.

Within the broader thesis on AI tools for enzyme-substrate matching research, the curation of high-quality, multimodal biological data is paramount. This technical guide details the systematic integration of three cornerstone public repositories—BRENDA (The Comprehensive Enzyme Information System), the Protein Data Bank (PDB), and UniProt (The Universal Protein Resource)—for the training and validation of robust AI models. These models aim to predict novel enzyme functions, catalytic efficiency, and substrate specificity, accelerating discovery in biocatalysis and drug development.

Core Data Source Specifications

The following table summarizes the key quantitative attributes and primary utility of each database for AI model development.

Table 1: Core Database Specifications for AI-Driven Enzyme Research

| Database | Primary Content | Key Quantitative Metrics (as of 2024) | AI Model Utility |

|---|---|---|---|

| BRENDA | Enzyme functional data: EC classification, kinetic parameters (Km, kcat, Ki), substrate specificity, organism source, pH/temp optima, inhibitors. | > 90,000 enzymes; > 150,000 documented substrates; > 2.5 million kinetic parameter entries. | Training Labels: Provides ground-truth functional annotations and quantitative kinetic parameters (kcat/Km) for supervised learning. |

| Protein Data Bank (PDB) | 3D macromolecular structures (proteins, nucleic acids, complexes) from X-ray, NMR, Cryo-EM. | > 220,000 structures; ~170,000 are proteins; ~60% are enzymes. | Structural Features: Source for spatial graphs (atom/residue-level), active site coordinates, and binding pocket descriptors for graph neural networks (GNNs). |

| UniProt | Comprehensive protein sequence and functional annotation. Swiss-Prot (reviewed) and TrEMBL (unreviewed). | > 200 million sequences; ~ 570,000 in Swiss-Prot; Extensive cross-references. | Sequence Features: Source for amino acid sequences, domains, families (Pfam), and post-translational modifications for sequence-based models (LSTMs, Transformers). |

Integrated Data Pipeline: From Curation to Model Input

A critical step is the creation of a unified dataset where each enzyme entry is linked across all three resources.

Data Retrieval & Curation Protocol

Objective: Create a non-redundant, high-quality dataset of enzymes with associated sequences, structures, and kinetic parameters.

Protocol:

- Seed from BRENDA: Query BRENDA via its SOAP web service or downloaded flat files for all enzymes with at least one reported kinetic parameter (kcat or Km) for a natural substrate.

- Map to UniProt: Use the provided EC number and organism source to map to canonical UniProt IDs via the UniProt mapping service. Prioritize Swiss-Prot entries for high-confidence annotations.

- Map to PDB: For each UniProt ID, query the SIFTS (Structure Integration with Function, Taxonomy and Sequence) resource to obtain all corresponding PDB IDs. Resolve multiple structures by selecting the one with the highest resolution and completeness in the active site region.

- Data Integration: Create a master table with columns: EC_Number, UniProt_ID, PDB_ID (best), Organism, Substrate_List, kcat_Value, Km_Value, kcat/Km_Value, pH, Temperature.

- Quality Filtering: Remove entries where critical data (sequence, structure, or a kinetic value) is missing. Apply thresholds for structural resolution (e.g., ≤ 3.0 Å).
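The mapping, integration, and filtering steps can be sketched with plain dictionaries. Everything in the example is a placeholder: the identifiers (UNIPROT_A, pdb1, and so on), the kinetic values, and the in-memory lookup tables stand in for real BRENDA records, the UniProt mapping service, and SIFTS. Units are assumed to be s⁻¹ for kcat and mM for Km.

```python
def build_master_table(brenda_rows, uniprot_map, sifts_map, max_resolution=3.0):
    """Join BRENDA-like rows to UniProt and PDB IDs, then quality-filter."""
    table = []
    for row in brenda_rows:
        uniprot_id = uniprot_map.get((row["EC_Number"], row["Organism"]))
        structures = [s for s in sifts_map.get(uniprot_id, [])
                      if s[1] <= max_resolution]
        if uniprot_id is None or not structures:
            continue  # no sequence mapping or no acceptable structure
        kcat, km = row["kcat"], row["Km"]
        if kcat is None and km is None:
            continue  # no kinetic value at all
        pdb_id, resolution = min(structures, key=lambda s: s[1])  # best resolution
        table.append({
            "EC_Number": row["EC_Number"], "UniProt_ID": uniprot_id,
            "PDB_ID": pdb_id, "Organism": row["Organism"],
            "Substrate_List": [row["Substrate"]],
            "kcat_Value": kcat, "Km_Value": km,
            "kcat/Km_Value": kcat / km if kcat is not None and km else None,
        })
    return table

# Placeholder records, not real database entries
brenda_rows = [
    {"EC_Number": "1.1.1.1", "Organism": "Homo sapiens",
     "Substrate": "ethanol", "kcat": 2.5, "Km": 0.5},
    {"EC_Number": "3.4.21.4", "Organism": "Bos taurus",
     "Substrate": "peptide", "kcat": None, "Km": 0.05},
    {"EC_Number": "2.7.1.1", "Organism": "Mus musculus",
     "Substrate": "glucose", "kcat": 10.0, "Km": 0.1},
]
uniprot_map = {("1.1.1.1", "Homo sapiens"): "UNIPROT_A",
               ("3.4.21.4", "Bos taurus"): "UNIPROT_B"}
sifts_map = {"UNIPROT_A": [("pdb1", 2.4), ("pdb2", 1.8)],
             "UNIPROT_B": [("pdb3", 3.5)]}

master = build_master_table(brenda_rows, uniprot_map, sifts_map)
```

In this toy input, the second row is dropped because its only structure is worse than 3.0 Å, and the third because it has no UniProt mapping.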

Feature Engineering for AI Models

Table 2: Engineered Features from Integrated Data

| Feature Type | Source Database | Extraction Method | AI Model Input Format |

|---|---|---|---|

| Sequential | UniProt | Amino acid sequence (canonical). | One-hot encoding, k-mer tokenization, or pre-trained language model embeddings (e.g., from ProtBERT). |

| Structural | PDB | 3D coordinates of atoms/residues. Atomic interaction graphs, dihedral angles, solvent accessibility. | Graph representation (nodes: residues/atoms; edges: distances/interactions). Voxelized 3D grid. |

| Functional | BRENDA | Numerical kinetic parameters (kcat, Km). Categorical substrate names. | Scalar normalization for kinetic values. Substrate fingerprinting via molecular descriptors (from PubChem). |

| Contextual | All | Enzyme Commission (EC) number hierarchy, organism taxonomy. | Hierarchical encoding, taxonomic one-hot vectors. |
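Two of the input formats in the table, one-hot encoding and k-mer tokenization, are simple enough to sketch directly, along with a log transform as one possible scalar normalization for kinetic values. The short peptide and the kcat/Km value are invented; the choice of log10 scaling is an assumption, since the table does not specify the normalization.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # 20 standard residues
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def one_hot(sequence):
    """Encode a sequence as a list of 20-dimensional one-hot rows."""
    rows = []
    for residue in sequence:
        row = [0] * len(AMINO_ACIDS)
        if residue in AA_INDEX:  # non-standard residues stay all-zero
            row[AA_INDEX[residue]] = 1
        rows.append(row)
    return rows

def kmer_tokens(sequence, k=3):
    """Overlapping k-mers, e.g. for a vocabulary-based sequence model."""
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]

def log_scale(value):
    """log10 transform for kinetic values spanning orders of magnitude."""
    if value <= 0:
        raise ValueError("kinetic values must be positive")
    return math.log10(value)

encoded = one_hot("MKTAY")
tokens = kmer_tokens("MKTAY", k=3)
scaled_efficiency = log_scale(1e5)  # hypothetical kcat/Km in M^-1 s^-1
```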

Experimental Validation Protocol for AI Predictions

Predictions from trained models (e.g., novel substrate predictions or engineered enzyme kinetics) require in silico and in vitro validation.

Protocol for In Silico Docking Validation:

- Input: AI-predicted novel substrate for a target enzyme (PDB: 1XYZ).

- Ligand Preparation: Generate the 3D conformation of the predicted substrate using RDKit or Open Babel. Assign partial charges and optimize geometry.

- Protein Preparation: Using the PDB file 1XYZ, remove water molecules and non-essential heteroatoms (retaining catalytic cofactors and metal ions), add hydrogen atoms, and assign protonation states (e.g., using UCSF Chimera or Schrödinger's Protein Preparation Wizard).

- Binding Site Definition: Define the active site coordinates from the catalytic residues annotated in the Catalytic Site Atlas (CSA) or from the original BRENDA/PDB annotation.

- Molecular Docking: Perform flexible-ligand docking into the defined binding site using AutoDock Vina or similar. Generate 20 docking poses.

- Analysis: Rank poses by binding affinity (kcal/mol). A pose with favorable affinity and correct geometry relative to the catalytic machinery supports the AI prediction.

Protocol for In Vitro Kinetic Assay Validation (Example: Spectrophotometric Assay):

- Cloning & Expression: Clone the gene for the target enzyme (UniProt ID as reference) into an expression vector (e.g., pET-28a). Transform into E. coli BL21(DE3) cells.

- Protein Purification: Induce expression with IPTG. Purify the His-tagged enzyme via Ni-NTA affinity chromatography. Confirm purity via SDS-PAGE.

- Assay Setup: Prepare a reaction buffer at the optimal pH from BRENDA. Create a concentration series of the AI-predicted novel substrate (e.g., 0.1x to 10x the predicted Km).

- Activity Measurement: In a 96-well plate, mix enzyme with substrate and initiate reaction. Monitor the change in absorbance (e.g., at 340 nm for NADH/NADPH coupling) over 5 minutes using a plate reader.

- Kinetic Analysis: Fit the initial velocity data versus substrate concentration to the Michaelis-Menten equation (using GraphPad Prism or similar) to derive experimental Km and kcat values.

- Validation Criterion: Compare the AI-predicted catalytic efficiency (kcat/Km) category (low, medium, high) with the experimentally measured value. Activity clearly above the no-enzyme control confirms substrate acceptance.

Visualizing the Integrated Workflow

Data Integration and AI Model Development Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Experimental Validation

| Reagent/Material | Supplier Examples | Function in Protocol |

|---|---|---|

| Expression Vector (pET-28a) | Novagen (MilliporeSigma), Addgene | Provides T7 promoter for high-level, inducible expression of the cloned enzyme gene with an N-terminal His-tag for purification. |

| E. coli BL21(DE3) Competent Cells | New England Biolabs (NEB), Thermo Fisher | Optimized bacterial host strain for T7 RNA polymerase-driven protein expression upon IPTG induction. |

| Ni-NTA Agarose Resin | Qiagen, Cytiva | Immobilized metal affinity chromatography (IMAC) resin for purifying His-tagged recombinant enzymes. |

| IPTG (Isopropyl β-D-1-thiogalactopyranoside) | GoldBio, Thermo Fisher | Inducer molecule that binds lac repressor to initiate transcription of the T7 RNA polymerase gene, leading to target enzyme expression. |

| Spectrophotometric Assay Kit (e.g., NAD(P)H-coupled) | Sigma-Aldrich, Cayman Chemical | Provides optimized buffer, cofactors, and reference standards for convenient, high-throughput measurement of enzyme activity. |

| 96-Well Clear Flat-Bottom Assay Plates | Corning, Greiner Bio-One | Microplate format for running parallel spectrophotometric kinetic assays in a plate reader. |

| Molecular Docking Software (AutoDock Vina) | The Scripps Research Institute | Open-source program for predicting the binding pose and affinity of a small molecule substrate within a protein's active site. |

| Protein Preparation Suite (UCSF Chimera) | RBVI, UCSF | Software for preparing PDB files for docking: adding hydrogens, assigning charges, and removing clashes. |

Within the broader thesis on AI tools for enzyme substrate matching research, a critical frontier has emerged: the transition from computational de novo enzyme design to the predictive modeling of off-target activity. This progression represents the maturation of the field from pure creation to comprehensive safety and efficacy analysis, which is paramount for applications in drug development and synthetic biology.

The De Novo Design Pipeline: Principles and Protocols

Core Computational Methodology

De novo enzyme design constructs novel protein scaffolds to catalyze a target reaction for which no natural enzyme exists. The contemporary pipeline is AI-driven.

Key Experimental Protocol (In Silico Design Cycle):

- Reactive Motif Placement (Theozyme Construction): Quantum mechanics calculations (e.g., DFT at the B3LYP/6-31G* level) define the optimal geometry and energetics of transition-state analogs and catalytic residues.

- Scaffold Search & Matching: A matching algorithm (e.g., RosettaMatch) scans structural databases (PDB, SCOPe) to identify protein backbones capable of hosting the designed active site; generative backbone models (e.g., RFdiffusion) can propose scaffolds instead. Metrics include RMSD (<1.0 Å for catalytic atoms) and burial depth.

- Sequence Design: A second network (e.g., ProteinMPNN), conditioned on the scaffold, generates amino acid sequences that fold into the target structure. Rosetta sequence design protocols are often used in tandem for energy minimization.

- In Silico Filtration: Designed sequences undergo molecular dynamics simulations (≥100 ns) in explicit solvent (e.g., TIP3P water, 150 mM NaCl) to assess stability (backbone RMSF < 1.5 Å) and binding pocket integrity. Docking of the substrate and transition-state analog is performed (AutoDock Vina, Schrödinger Glide) to verify productive binding modes.

- Ranking & Selection: Designs are ranked by a composite score: ΔG_bind (MM/GBSA calculation, target < -10 kcal/mol), predicted ΔΔG_fold (Rosetta ddg_monomer, target < 2.0 kcal/mol), and structural confidence (pLDDT > 80 from AlphaFold2).
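The ranking step above can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline's actual scoring function: the three thresholds come from the text, while the `Design` record, the equal-weight `composite_score`, and its scaling constants are assumptions made here for the example.

```python
from dataclasses import dataclass


@dataclass
class Design:
    name: str
    dg_bind: float   # MM/GBSA binding free energy, kcal/mol (more negative is better)
    ddg_fold: float  # predicted folding ddG, kcal/mol (lower is better)
    plddt: float     # mean AlphaFold2 pLDDT, 0-100 (higher is better)


# Thresholds quoted in the ranking step.
DG_BIND_MAX = -10.0
DDG_FOLD_MAX = 2.0
PLDDT_MIN = 80.0


def passes_filters(d: Design) -> bool:
    """Keep only designs that meet all three targets."""
    return d.dg_bind < DG_BIND_MAX and d.ddg_fold < DDG_FOLD_MAX and d.plddt > PLDDT_MIN


def composite_score(d: Design) -> float:
    """Hypothetical score: sum of the margins by which each target is beaten.

    The divisors (5, 2, 10) are arbitrary scaling choices for this sketch.
    """
    return ((DG_BIND_MAX - d.dg_bind) / 5.0
            + (DDG_FOLD_MAX - d.ddg_fold) / 2.0
            + (d.plddt - PLDDT_MIN) / 10.0)


def rank_designs(designs):
    """Filter first, then sort the survivors from best to worst."""
    kept = [d for d in designs if passes_filters(d)]
    return sorted(kept, key=composite_score, reverse=True)


designs = [
    Design("design_A", -12.5, 1.0, 91.0),
    Design("design_B", -9.0, 0.5, 95.0),   # fails the binding-energy target
    Design("design_C", -11.0, 1.8, 84.0),
    Design("design_D", -14.0, 2.5, 88.0),  # fails the folding-stability target
]
ranked = rank_designs(designs)
print([d.name for d in ranked])
```

Hard filtering before scoring keeps a single strong term from masking a failed criterion; in practice the weights would need calibrating against experimental outcomes.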

Table 1: Quantitative Benchmarks for Successful De Novo Enzyme Designs

| Metric | Target Value | Typical Range in Literature | Measurement Tool |

|---|---|---|---|

| Catalytic Efficiency (kcat/KM) | > 1 M⁻¹s⁻¹ | 0.1 - 10⁴ M⁻¹s⁻¹ | Michaelis-Menten kinetics |

| Thermal Stability (Tm) | > 50°C | 45 - 85°C | Differential Scanning Fluorimetry |

| Active Site RMSD | < 1.0 Å | 0.5 - 1.5 Å | X-ray Crystallography |

| pLDDT (Confidence) | > 80 | 70 - 95 | AlphaFold2 output |

| ΔG_bind (Substrate) | < -10 kcal/mol | -8 to -15 kcal/mol | MM/GBSA Calculation |

(Diagram: AI-Driven De Novo Enzyme Design Workflow.)

The Off-Target Prediction Challenge

A designed enzyme, particularly for therapeutic use (e.g., prodrug activation, metabolite clearance), must not catalyze undesired reactions with native substrates. Off-target prediction involves modeling enzyme promiscuity against a physiological substrate library.

Methodology for Off-Target Profiling

Experimental Protocol (Computational Substrate Screening):

- Library Curation: Compile a structurally diverse library of potential off-target substrates (e.g., from HMDB, ChEMBL). Typical size: 10,000 - 100,000 compounds.

- Ensemble Docking: Using the designed enzyme structure (or an ensemble from MD), dock all library compounds. Use flexible docking protocols (e.g., Glide SP/XP, AutoDock Vina with side-chain flexibility). Generate 20-50 poses per compound.

- Binding Pose Filtering: Filter poses based on geometric alignment to the catalytic machinery (distance to catalytic residue < 3.5 Å, angle tolerance < 30°).

- Reactivity Prediction: Apply machine learning or QM/MM methods:

  - ML Approach: Use a graph neural network (e.g., Directed Message Passing Neural Network) trained on reaction databases (e.g., USPTO) to predict the likelihood of the enzyme catalyzing a transformation on the docked pose. Output: a probability score (p_react).

  - QM/MM Approach: For high-risk hits, perform constrained QM/MM optimization (e.g., using Gaussian/ORCA coupled with AMBER) on the reaction coordinate to calculate activation energy (ΔG‡). A ΔG‡ < 15-20 kcal/mol suggests plausible off-target activity.

- Validation: Top predicted off-target hits (p_react > 0.7 or ΔG‡ < 18 kcal/mol) are tested in vitro using LC-MS/MS activity-based protein profiling (ABPP) or targeted metabolite detection.
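The geometric pose filter in the protocol above (distance below 3.5 Å, angle within 30° of an ideal value) could be implemented roughly as follows. The atom roles (`nucleophile`, `electrophile`, `leaving_group`) and the `ideal_angle` argument are hypothetical; the text does not specify which atoms or which reference angle are used, so they must be chosen per reaction mechanism.

```python
import math


def angle_deg(a, b, c):
    """Angle at vertex b formed by points a-b-c, in degrees."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    norm = math.sqrt(sum(x * x for x in v1)) * math.sqrt(sum(x * x for x in v2))
    cos_theta = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos_theta))


def pose_is_productive(nucleophile, electrophile, leaving_group,
                       ideal_angle, max_dist=3.5, angle_tol=30.0):
    """Apply the distance and angle cutoffs to one docked pose."""
    dist = math.dist(nucleophile, electrophile)
    attack = angle_deg(nucleophile, electrophile, leaving_group)
    return dist < max_dist and abs(attack - ideal_angle) < angle_tol


# Toy coordinates in angstroms; in-line attack (180 degrees) assumed as the ideal.
catalytic_atom = (0.0, 0.0, 0.0)
good_pose = pose_is_productive(catalytic_atom, (3.0, 0.0, 0.0), (4.5, 0.0, 0.0), ideal_angle=180.0)
too_far = pose_is_productive(catalytic_atom, (5.0, 0.0, 0.0), (6.5, 0.0, 0.0), ideal_angle=180.0)
bad_angle = pose_is_productive(catalytic_atom, (3.0, 0.0, 0.0), (3.0, 1.5, 0.0), ideal_angle=180.0)
print(good_pose, too_far, bad_angle)
```

Running this check over the 20-50 poses generated per compound keeps only those poses that place a reactive atom in a catalytically plausible geometry before the more expensive reactivity prediction.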

Table 2: Key Metrics for Off-Target Risk Assessment

| Risk Level | Predicted p_react | Predicted ΔG‡ | Experimental kcat/KM (Off-target) | Required Action |

|---|---|---|---|---|

| High | > 0.85 | < 15 kcal/mol | > 0.1% of target activity | Redesign enzyme |

| Medium | 0.70 - 0.85 | 15 - 20 kcal/mol | Detectable but < 0.1% | Iterative optimization |

| Low | < 0.70 | > 20 kcal/mol | Not detectable | Proceed to further development |
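As an illustration, the computational columns of Table 2 can be turned into a small triage function. The thresholds are the table's own; combining the two predictors by taking the more severe level is an assumption of this sketch, since the table does not say how to resolve disagreements between p_react and ΔG‡.

```python
SEVERITY = {"Low": 0, "Medium": 1, "High": 2}


def risk_from_p_react(p_react):
    """Map the ML reactivity probability onto the Table 2 bands."""
    if p_react > 0.85:
        return "High"
    if p_react >= 0.70:
        return "Medium"
    return "Low"


def risk_from_barrier(dg_activation):
    """Map a QM/MM activation energy (kcal/mol) onto the Table 2 bands."""
    if dg_activation < 15.0:
        return "High"
    if dg_activation <= 20.0:
        return "Medium"
    return "Low"


def overall_risk(p_react, dg_activation=None):
    """Conservative combination (assumed here): report the worse of the two levels."""
    levels = [risk_from_p_react(p_react)]
    if dg_activation is not None:  # QM/MM is only run for selected hits
        levels.append(risk_from_barrier(dg_activation))
    return max(levels, key=SEVERITY.__getitem__)


print(overall_risk(0.90))        # ML score alone
print(overall_risk(0.75, 22.0))  # predictors disagree
print(overall_risk(0.50, 14.0))  # low ML score, low barrier
```

The experimental kcat/KM column of the table is deliberately left out: it can only be filled in after the in vitro validation step.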

(Diagram: Computational Off-Target Effect Prediction Pipeline.)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Design & Validation

| Item | Supplier Examples | Function in Research |

|---|---|---|

| PyRosetta License | Rosetta Commons | Provides the core software suite for energy-based protein design and structural refinement. |

| AlphaFold2/ProteinMPNN | DeepMind, GitHub | Neural networks for protein structure prediction and sequence design, respectively. |

| GPU Compute Cluster | AWS (p3/p4 instances), NVIDIA DGX | Essential for running large-scale neural network inferences (design) and MD simulations. |

| GROMACS/AMBER | Open Source, UCSF | Molecular dynamics simulation packages for in silico stability and dynamics assessment. |

| Schrödinger Suite | Schrödinger Inc. | Integrated platform for high-throughput molecular docking, MM/GBSA, and QM/MM calculations. |

| Activity-Based Probes (ABPs) | Thermo Fisher, Cayman Chemical | Chemical tools containing a reactive group and a reporter tag to experimentally profile off-target enzyme activity in complex lysates. |

| LC-MS/MS System | Agilent, Sciex, Waters | High-sensitivity analytical platform for detecting and quantifying products from off-target reactions. |

| HisTrap HP Column | Cytiva | For rapid immobilized metal affinity chromatography (IMAC) purification of His-tagged designed enzymes. |

A Practical Guide to Leading AI Tools for Enzyme-Substrate Matching: From AlphaFold to Specialized Platforms

This whitepaper, situated within a broader thesis on AI tools for enzyme-substrate matching research, provides an in-depth technical guide to three transformative structure prediction tools: AlphaFold 3, RoseTTAFold All-Atom, and ESMFold. The accurate prediction of protein-ligand, protein-nucleic acid, and protein-protein complexes is fundamental to understanding enzyme function and identifying potential substrates or inhibitors. This document details their methodologies, comparative performance, and provides explicit protocols for employing these tools in binding site analysis for drug and enzyme research.

Tool Architectures & Core Methodologies

AlphaFold 3 (DeepMind/Isomorphic Labs)

AlphaFold 3 is a diffusion-based model that generates joint structures of biomolecular complexes. It integrates multiple sequence alignments (MSAs) and pairwise features into a single representation, processed by a Pairformer trunk that replaces AlphaFold 2's Evoformer, and it generates atomic coordinates with a diffusion module in place of AlphaFold 2's structure module.

RoseTTAFold All-Atom (Baker Lab)

This tool extends the original RoseTTAFold by employing a three-track neural network (1D sequences, 2D distances, 3D coordinates) that simultaneously reasons over proteins, nucleic acids, small molecules, and post-translational modifications.

ESMFold (Meta AI)

ESMFold is an end-to-end single-sequence prediction model. It uses a large language model (ESM-2) trained on evolutionary-scale protein sequences to generate per-residue embeddings, which are directly fed into a folding trunk to produce 3D coordinates without MSAs or homology.

Comparative Performance Data

Table 1: Benchmark Performance on Protein-Ligand Complex Prediction (PDBbind Test Set)

| Metric | AlphaFold 3 | RoseTTAFold All-Atom | ESMFold | Notes |

|---|---|---|---|---|

| Ligand RMSD (Å) | 1.47 | 2.85 | N/A | Lower is better. ESMFold not designed for ligand prediction. |

| Top-1 Accuracy (%) | 65.2 | 41.7 | N/A | Percentage of predictions with RMSD < 2.0 Å. |

| Inference Time | Moderate | Fast | Very Fast | Hardware-dependent; ESMFold is fastest due to single-sequence input. |

| Input Requirements | Complex (MSA) | Complex (MSA) | Simple (Sequence Only) | |

Table 2: General Protein Structure Prediction (CASP15 Targets)

| Metric | AlphaFold 3 | RoseTTAFold All-Atom | ESMFold |

|---|---|---|---|

| TM-Score (Avg) | 0.92 | 0.88 | 0.83 |

| GDT_TS (Avg) | 88.5 | 82.1 | 78.3 |

Experimental Protocol for Binding Site Analysis

Protocol 1: Comparative Binding Site Analysis Using Multiple Tools

Objective: To predict and analyze the binding site of a target enzyme with a novel small molecule substrate.

Materials & Software:

- Target: Amino acid sequence or structure of the enzyme of interest.

- Ligand: SMILES string or 3D conformation of the putative substrate/inhibitor.

- Hardware: GPU-equipped workstation or access to cloud computing (e.g., Google Cloud, AWS).

- Software/Platforms:

- AlphaFold 3: Available via the AlphaFold Server (web interface).

- RoseTTAFold All-Atom: Local installation from GitHub or web server.

- ESMFold: API via ESM Metagenomic Atlas or local inference.

- Analysis Tools: PyMOL, UCSF ChimeraX, OpenBabel (for ligand format conversion).

Procedure:

Step 1: Input Preparation

- For AlphaFold 3 and RoseTTAFold All-Atom: Prepare the protein sequence in FASTA format. Convert the ligand SMILES to a 3D SDF or PDB file using OpenBabel (`obabel -ismi ligand.smi -osdf -O ligand.sdf -h --gen3d`).

- For ESMFold: Input is the protein FASTA sequence alone. Ligand docking requires a separate step using the predicted structure.

Step 2: Structure Prediction Run

- AlphaFold 3 Job:

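  - Navigate to the AlphaFold Server.

  - Enter the protein sequence and add the ligand as a separate entity. The public server offers only a fixed list of common ligands and ions; an arbitrary SDF file cannot be uploaded there, so a custom ligand requires a local AlphaFold 3 installation.

  - Select the molecule type for each entity (e.g., "Protein" for the enzyme, "Ligand" or "Ion" for the small molecule).

  - Initiate prediction. Download the resulting structure files (mmCIF) and confidence metrics (pLDDT, PAE).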

- RoseTTAFold All-Atom Job (Local Example):

- ESMFold Job (Local Example):

Step 3: Binding Site Analysis

- Visual Inspection: Load predicted complex (AF3, RFAA) or apo structure (ESMFold) into PyMOL/ChimeraX.

- Site Identification: Locate the predicted ligand position. For ESMFold's apo structure, run a complementary docking tool (e.g., AutoDock Vina) using the predicted structure as the receptor.

- Metric Calculation:

  - Measure intermolecular distances (H-bonds, hydrophobic contacts).

  - Calculate buried surface area of the interface using PyMOL or ChimeraX.

  - Analyze per-residue confidence scores (pLDDT for AF3, ESMFold; estimated confidence for RFAA) at the binding site.
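The per-residue confidence check at the binding site can be scripted without a structure library. The sketch below assumes the model is available as PDB-format text with pLDDT stored in the B-factor column (the convention used by AlphaFold2 and ESMFold PDB output; mmCIF files would need converting first). The function names, the 5 Å cutoff, and the `LIG` residue name are illustrative choices, not part of any tool's API.

```python
import math
from collections import defaultdict


def parse_atoms(pdb_text):
    """Yield (record, resname, chain, resseq, xyz, bfactor) from fixed-width ATOM/HETATM lines."""
    for line in pdb_text.splitlines():
        record = line[0:6].strip()
        if record not in ("ATOM", "HETATM"):
            continue
        xyz = (float(line[30:38]), float(line[38:46]), float(line[46:54]))
        yield record, line[17:20].strip(), line[21], int(line[22:26]), xyz, float(line[60:66])


def binding_site_plddt(pdb_text, ligand_resname, cutoff=5.0):
    """Mean pLDDT of every protein residue with an atom within `cutoff` angstroms of the ligand."""
    atoms = list(parse_atoms(pdb_text))
    ligand_xyz = [a[4] for a in atoms if a[0] == "HETATM" and a[1] == ligand_resname]
    per_residue = defaultdict(list)
    near = set()
    for record, resname, chain, resseq, xyz, bfac in atoms:
        if record != "ATOM":
            continue
        key = (chain, resseq, resname)
        per_residue[key].append(bfac)
        if any(math.dist(xyz, lig) <= cutoff for lig in ligand_xyz):
            near.add(key)
    return {key: sum(per_residue[key]) / len(per_residue[key]) for key in sorted(near)}


def pdb_line(record, serial, name, resname, chain, resseq, x, y, z, bfac):
    """Format one fixed-width PDB coordinate line (helper for the toy example only)."""
    return (f"{record:<6}{serial:>5} {name:<4} {resname:>3} {chain}{resseq:>4}    "
            f"{x:>8.3f}{y:>8.3f}{z:>8.3f}{1.00:>6.2f}{bfac:>6.2f}")


toy_pdb = "\n".join([
    pdb_line("ATOM", 1, "CA", "SER", "A", 10, 0.0, 0.0, 0.0, 92.0),
    pdb_line("ATOM", 2, "CA", "GLY", "A", 50, 20.0, 0.0, 0.0, 55.0),
    pdb_line("HETATM", 3, "C1", "LIG", "A", 101, 3.0, 0.0, 0.0, 80.0),
])
site = binding_site_plddt(toy_pdb, "LIG")
print(site)
```

Residues that line the pocket but carry low pLDDT flag a binding-site prediction that deserves less trust, whatever the ligand pose looks like.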

Step 4: Validation & Comparison

- Superimpose the three predicted protein structures to assess backbone consensus.

- Compare the predicted ligand pose (from AF3 and RFAA) and the docked pose (from ESMFold+Vina). Calculate RMSD between them.

- Correlate high-confidence regions with known catalytic or binding residues from literature.
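The pose comparison in Step 4 reduces to an RMSD over matched ligand atoms. The sketch below makes three assumptions that the protocol leaves open: the protein frames have already been superimposed, both poses list the same heavy atoms in the same order, and no correction is made for symmetry-equivalent atoms. The 2.0 Å success threshold mirrors the one used in the benchmark table earlier in this guide.

```python
import math


def pose_rmsd(coords_a, coords_b):
    """In-place RMSD (angstroms) between two equally ordered lists of (x, y, z) tuples."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("poses must contain the same, non-zero number of atoms")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


# Toy example: the second pose is the first shifted by 1 angstrom along z.
af3_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
vina_pose = [(x, y, z + 1.0) for x, y, z in af3_pose]

rmsd = pose_rmsd(af3_pose, vina_pose)
agree = rmsd < 2.0  # same cutoff as the "Top-1 Accuracy" definition
print(round(rmsd, 3), agree)
```

For ligands with symmetric groups (e.g., carboxylates or phenyl rings), a symmetry-aware RMSD from a cheminformatics toolkit would be the safer choice.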

Visualization of Workflows

(Diagram: Comparative Binding Site Analysis Workflow.)

(Diagram: AlphaFold 3 Simplified Architecture.)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for AI-Driven Binding Site Analysis

| Item | Function / Description | Key Provider / Source |

|---|---|---|

| AlphaFold Server | Web platform for running AlphaFold 3 predictions on proteins, nucleic acids, and ligands. No local installation required. | DeepMind / Isomorphic Labs |

| RoseTTAFold All-Atom GitHub Repo | Source code and weights for local installation and custom pipeline integration. | Baker Lab (UW) |

| ESMFold API & Weights | Enables high-throughput, single-sequence structure prediction via API or local inference. | Meta AI (ESM) |

| PDBbind Database | Curated benchmark dataset of protein-ligand complexes with binding affinity data for validation. | PDBbind-CN |

| OpenBabel | Open-source chemical toolbox for converting ligand file formats (e.g., SMILES to SDF/PDB). | Open Babel Project |

| UCSF ChimeraX | Advanced visualization and analysis software for measuring interfaces, buried surface area, and clashes. | RBVI, UCSF |

| AutoDock Vina | Widely-used molecular docking program for predicting ligand poses against a protein binding site. | The Scripps Research Institute |

| GPUs (e.g., NVIDIA A100) | High-performance computing hardware essential for rapid local inference of large models. | Cloud Providers (AWS, GCP, Azure) |

Within the broader thesis on AI tools for enzyme function annotation and substrate matching, a critical challenge is predicting specific molecular interactions. This guide details the application of three advanced deep learning models—DeepFRI, D-SCRIPT, and CLEAN—for predicting enzyme-substrate specificity, a cornerstone for drug discovery and metabolic engineering.

Model Architectures & Core Methodologies

DeepFRI (Functional Residue Identification)

DeepFRI predicts Molecular Function (MF) and Enzyme Commission (EC) numbers by integrating sequence and protein structure via Graph Convolutional Networks (GCNs).

Experimental Protocol (Inference):

- Input Preparation: Provide protein sequence or PDB file.

- Contact Map Generation: Use built-in functions to compute a multi-scale contact map from structure or predicted structure via AlphaFold2.

- Graph Construction: Represent protein as a graph where nodes are residues and edges are defined by spatial proximity.

- Model Inference: Load pre-trained GCN model (available on GitHub). Run forward pass to obtain predictions for Gene Ontology (GO) terms and EC numbers.

- Interpretation: The model outputs residue-level saliency scores (gradient-weighted class activation maps, grad-CAM) highlighting functionally important residues potentially involved in substrate binding.
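The graph-construction step can be illustrated with a plain distance cutoff on Cα coordinates. This is a toy sketch, not DeepFRI's own preprocessing code; the 10 Å cutoff is a commonly used value for Cα-Cα contact maps and is treated here as an assumption, as are the function names.

```python
import math


def contact_edges(ca_coords, cutoff=10.0):
    """Return residue-index pairs (i, j), i < j, whose C-alpha atoms lie within `cutoff` angstroms."""
    edges = []
    for i in range(len(ca_coords)):
        for j in range(i + 1, len(ca_coords)):
            if math.dist(ca_coords[i], ca_coords[j]) < cutoff:
                edges.append((i, j))
    return edges


def adjacency_list(n_residues, edges):
    """Undirected adjacency list: the graph a GCN layer would pass messages over."""
    neighbours = {i: [] for i in range(n_residues)}
    for i, j in edges:
        neighbours[i].append(j)
        neighbours[j].append(i)
    return neighbours


# Four residues placed along the x axis (angstroms).
ca = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (15.0, 0.0, 0.0)]
edges = contact_edges(ca)
graph = adjacency_list(len(ca), edges)
print(edges)
print(graph)
```

The double loop is quadratic in chain length, which is acceptable for single proteins; a spatial index would be preferable for very large assemblies.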

D-SCRIPT (Deep Sequence Contact Residue Interaction Prediction Transfer)

D-SCRIPT predicts physical protein-protein interaction interfaces from sequence alone, adaptable for enzyme-substrate docking.

Experimental Protocol:

- Embedding Generation: Convert enzyme and putative substrate (protein) sequences into ESM-1b language model embeddings.

- Contact Map Prediction: Process embeddings through a residual neural network to predict intra-protein (enzyme and substrate) and inter-protein contact maps.

- Docking Decoy Generation: Use the predicted inter-protein contact map as a distance restraint to guide rigid-body docking with tools like HDOCK or RosettaDock.

- Evaluation: Assess docking poses against known complexes or mutagenesis data.

CLEAN (Contrastive Learning–Enabled Enzyme Annotation)

CLEAN uses contrastive learning to measure functional similarity between enzymes, enabling precise EC number prediction and substrate analog inference.

Experimental Protocol (Similarity Search):

- Database Construction: Download the pre-computed CLEAN vector embeddings for the Universe of Natural and Artificial Enzymes database.

- Query Enzyme Embedding: Compute the enzyme sequence's embedding using the provided CLEAN model (Esm2+MLP).

- Nearest Neighbor Search: Calculate cosine similarity between the query embedding and all database embeddings.

- Functional Inference: Retrieve top-k most similar enzymes. Their known substrates/EC numbers serve as high-confidence predictions for the query enzyme.
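The nearest-neighbor search described above amounts to ranking database embeddings by cosine similarity to the query. The following sketch uses three-dimensional toy vectors and made-up enzyme identifiers and EC labels; real embeddings would come from the CLEAN model and have far higher dimensionality.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / norm


def top_k_neighbours(query, database, k=3):
    """Return the k database entries most similar to the query, best first."""
    scored = [(name, cosine_similarity(query, emb)) for name, (emb, _ec) in database.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical database: identifier -> (embedding, annotated EC number).
database = {
    "enzA": ([0.9, 0.1, 0.0], "3.1.1.1"),
    "enzB": ([0.0, 1.0, 0.0], "2.7.11.1"),
    "enzC": ([0.7, 0.7, 0.0], "3.1.1.3"),
}
query_embedding = [1.0, 0.0, 0.0]

hits = top_k_neighbours(query_embedding, database, k=2)
predicted_ecs = [database[name][1] for name, _sim in hits]
print(hits)
print(predicted_ecs)
```

For a database of millions of enzymes, the exhaustive loop would normally be replaced by a vectorized or approximate nearest-neighbor search.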

Quantitative Performance Comparison

Table 1: Benchmark Performance on Enzyme Function Prediction Tasks

| Model | Input Type | Primary Task | Key Metric | Reported Performance (Example) |

|---|---|---|---|---|

| DeepFRI | Sequence/Structure | EC Number Prediction | Fmax (MF) | 0.57 (on test set PDB chains) |

| D-SCRIPT | Sequence | Protein-Protein Interaction & Interface Prediction | AUPR (Interface) | 0.38 (on D-SCRIPT benchmark set) |

| CLEAN | Sequence | EC Number Prediction & Functional Similarity | Top-1 Accuracy (EC) | 76.2% (on third-digit EC prediction) |

| DeepFRI | Sequence/Structure | Gene Ontology Prediction | AUPR (BP) | 0.47 (on CAFA3 test set) |

| CLEAN | Sequence | Novel Enzyme Discovery (vs. BLASTp) | Enrichment Ratio | >4.0 (for discovering non-homologous enzymes) |

Table 2: Practical Implementation Requirements

| Model | Availability | Compute Demand (Typical) | Key Dependencies |

|---|---|---|---|

| DeepFRI | GitHub, Web Server | Medium (GPU beneficial) | TensorFlow, Biopython, PDB files |

| D-SCRIPT | GitHub | High (GPU required) | PyTorch, ESM, Docking software |

| CLEAN | GitHub, Web Tool | Low (CPU sufficient for inference) | PyTorch, NumPy, Esm |

Integrated Workflow for Substrate Specificity Prediction

(Diagram 1: AI workflow for substrate specificity prediction.)

Table 3: Essential Computational Resources & Databases

| Item | Function & Relevance |

|---|---|

| AlphaFold2 (Colab/DB) | Predicts high-accuracy protein structures from sequence, required for structure-based tools like DeepFRI. |

| PDB (Protein Data Bank) | Source of experimental structures for training, validation, and comparative analysis. |

| UniProt Knowledgebase | Comprehensive source of protein sequences and annotated functional data (EC, GO) for ground truth. |

| BRENDA/ExplorEnz | Curated databases of enzyme functional data, including substrate specificity, for validation. |

| CLEAN Universe Database | Pre-computed embeddings for millions of enzymes, enabling rapid similarity searches. |

| ESM-1b/ESM2 Models | State-of-the-art protein language models used as input encoders for D-SCRIPT and CLEAN. |

| HDOCK/RosettaDock | Rigid-body docking servers used in conjunction with D-SCRIPT's predicted contact maps. |

| PyMOL/ChimeraX | Visualization software to analyze predicted structures, interfaces, and residue importance. |

Case Study: Predicting Kinase Substrate Specificity

(Diagram 2: Kinase substrate prediction using a combined approach.)

Protocol:

- Run the novel kinase sequence through CLEAN to identify its closest functional neighbors in kinase space.

- Retrieve known substrates for the top homologs from a curated database like PhosphoSitePlus as candidate substrates.

- Predict the kinase's structure with AlphaFold2 and analyze with DeepFRI to confirm kinase-like fold and identify catalytic loop residues.

- For each candidate substrate protein, use D-SCRIPT to predict the interaction interface and generate a docking pose, focusing on the proximity of the substrate's serine/threonine/tyrosine to the kinase's catalytic site.

- Rank candidates by docking score, CLEAN similarity score, and evolutionary evidence.

DeepFRI, D-SCRIPT, and CLEAN represent complementary pillars of a modern AI toolkit for deciphering enzyme substrate specificity. DeepFRI offers interpretable, structure-aware function prediction. D-SCRIPT models the physical interaction interface. CLEAN provides a powerful, rapid similarity-based search engine. Their integration, as outlined, provides a robust, multi-evidence framework for accelerating enzyme characterization and drug discovery.

Within the broader thesis on AI tools for enzyme-substrate matching research, the advent of de novo generative protein design platforms represents a paradigm shift. Moving beyond the prediction of existing structures, tools like RFdiffusion and Chroma enable the computational generation of entirely novel protein folds and enzyme active sites tailored for specific substrates or catalytic functions. This technical guide delves into the operational principles, experimental validation, and practical application of these platforms for designing novel enzymes.

Core Platform Architectures & Mechanisms

RFdiffusion

Developed by the Baker Lab, RFdiffusion is a generative model built upon RoseTTAFold. It uses a diffusion model that learns to denoise random 3D protein backbones into coherent, novel structures conditioned on user-defined specifications.

Key Mechanism: The process begins with a cloud of Cα atoms. Over a series of steps, the model iteratively refines this noise into a plausible protein structure. Conditioning can be applied via "inpainting" (fixing specific regions) or "motif scaffolding" (designing a structure around a predefined functional motif, like an enzyme active site).

Chroma

Created by Generate Biomedicines, Chroma is a multimodal generative model that combines diffusion on coordinates with conditioning via "grammars"—a programmable language for specifying symmetries, substructures, shape, and even natural language descriptions of function.

Key Mechanism: Chroma's diffusion process operates on a latent representation of structure. Its power lies in its composition of multiple conditioners, allowing a scientist to simultaneously enforce a binding site topology, a global shape, and a functional text prompt (e.g., "hydrolase for cellulose").

Quantitative Performance Comparison

Table 1: Performance Metrics of RFdiffusion and Chroma

| Metric | RFdiffusion | Chroma | Notes |

|---|---|---|---|

| Design Success Rate | ~ 20-40% (experimental validation) | Published metrics pending | RFdiffusion success varies by task (e.g., motif scaffolding > unconditional generation). |

| Designable Length | Up to ~500 residues | Up to ~2000+ residues | Chroma claims capability for large, complex assemblies. |

| Conditioning Flexibility | Structural motifs, symmetry, inpainting. | Structural grammars, text, shape, symmetry. | Chroma offers a more diverse, programmable conditioning suite. |

| Computational Scale | Can run on high-end GPUs (e.g., A100); single designs in minutes. | Large-scale model; typically accessed via API/cloud. | Accessibility differs; RFdiffusion is open-source. |

| Experimental Validation | Multiple papers show designed proteins express, fold, and function. | Initial preprints demonstrate in vitro and in vivo activity. | Both platforms have moved into the experimental phase. |

Table 2: Key Experimental Results from Published Studies (2023-2024)

| Study (Tool) | Design Target | Experimental Result | Quantitative Outcome |

|---|---|---|---|

| Watson et al., 2023 (RFdiffusion) | De novo protein binders | High-affinity binding to target surfaces. | Success rate: 18% of designs showed nM-μM binding. |

| Ingraham et al., 2023 (Chroma) | Symmetric enzymes, vaccines | Structured, stable assemblies expressed in vivo. | Cryo-EM structures matched designs with <2Å RMSD. |

| Salveson et al., 2024 (RFdiffusion) | Custom endonuclease | Novel enzymes with designed specificity. | 10 out of 12 designs showed measurable cleavage activity. |

Detailed Experimental Protocol for De Novo Enzyme Design & Validation

This protocol outlines the end-to-end process for generating and testing a novel hydrolase.

Phase 1: Computational Design (Using RFdiffusion as an example)

- Specification: Define the catalytic triad/binding pocket geometry (motif) from a known enzyme or idealized coordinates.

- Conditional Generation: Use the motif scaffolding mode of RFdiffusion, providing the fixed motif atoms and a desired overall length (~300 residues). Generate 500-1000 candidate backbone structures.

- Sequence Design: For each candidate backbone, use a protein sequence design tool like ProteinMPNN to generate optimal amino acid sequences that stabilize the fold.

- Filtering: Filter designs using:

  - Rosetta/pLDDT: For predicted stability and confidence.

  - AlphaFold2/3: Predict the structure of the designed sequence. Retain designs where the predicted structure matches the generative model's output (<2 Å RMSD).

  - Functional Site Check: Use SCWRL4 or docking to ensure the catalytic site geometry is preserved.

Phase 2: In Vitro Expression and Biophysical Characterization

- Gene Synthesis & Cloning: 10-50 top designs are codon-optimized for E. coli and synthesized. Genes are cloned into a pET vector with a His-tag.

- Expression Test: Transform into BL21(DE3) cells. Induce with 0.5 mM IPTG at 18°C for 18 hours.

- Purification: Lyse cells, purify via Ni-NTA affinity chromatography, and further by size-exclusion chromatography (SEC).

- Biophysical Assays:

  - SEC-MALS: Confirm monodispersity and expected molecular weight.

  - Circular Dichroism (CD): Verify secondary structure matches design.

  - Differential Scanning Calorimetry (DSC): Measure melting temperature (Tm). Successful designs typically have Tm > 55°C.

Phase 3: Functional Enzymatic Assay

- Substrate Incubation: Incubate purified enzyme (1 µM) with target substrate (e.g., a custom fluorogenic ester, 200 µM) in suitable buffer at 25°C.

- Kinetic Measurement: Monitor fluorescence (ex/em: 360/460 nm) every 30 seconds for 10 minutes using a plate reader.

- Analysis: Calculate initial velocity (V0). Determine kcat/KM by fitting to the Michaelis-Menten equation or as a first-order rate constant under substrate-limited conditions.
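When the substrate concentration is well below KM, the Michaelis-Menten equation simplifies to V0 ≈ (kcat/KM)[E][S], so kcat/KM can be read directly from the initial slope. The sketch below shows that arithmetic for the concentrations quoted in this phase (1 µM enzyme, 200 µM substrate). The fluorescence trace and the calibration factor `rfu_per_uM` are invented for the example; a real factor would come from a product standard curve.

```python
def linear_slope(xs, ys):
    """Ordinary least-squares slope of y against x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def kcat_over_km(times_s, rfu, rfu_per_uM, enzyme_uM, substrate_uM):
    """Estimate kcat/KM (M^-1 s^-1), valid only when [S] << KM."""
    v0_uM_per_s = linear_slope(times_s, rfu) / rfu_per_uM  # initial velocity in uM/s
    v0_M_per_s = v0_uM_per_s * 1e-6
    return v0_M_per_s / ((enzyme_uM * 1e-6) * (substrate_uM * 1e-6))


# Synthetic linear trace sampled every 30 s: 2 RFU/s on top of a 100 RFU background.
times = [0.0, 30.0, 60.0, 90.0, 120.0]
signal = [100.0 + 2.0 * t for t in times]

efficiency = kcat_over_km(times, signal, rfu_per_uM=1000.0, enzyme_uM=1.0, substrate_uM=200.0)
print(f"kcat/KM = {efficiency:.1f} M^-1 s^-1")
```

Only the early, linear part of the time course should enter the slope; once more than a few percent of the substrate is consumed, the approximation starts to underestimate the true efficiency.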

Visualization of Workflows

(Diagram: De Novo Enzyme Design & Validation Workflow.)

(Diagram: Generative Model Core Mechanism.)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for De Novo Enzyme Experiments

| Item | Function in Protocol | Example Product/Kit |

|---|---|---|

| Codon-Optimized Gene Fragments | Source of DNA for designed protein sequences. | Twist Bioscience Gene Fragments, IDT gBlocks. |

| High-Efficiency Cloning Kit | For rapid and reliable insertion of gene into expression vector. | NEB HiFi DNA Assembly Kit, Gibson Assembly Master Mix. |

| Expression Host Cells | Robust protein expression system. | E. coli BL21(DE3) Gold, NEB Turbo Competent Cells. |

| Affinity Purification Resin | One-step purification via engineered tag. | Ni-NTA Superflow (Qiagen), HisPur Cobalt Resin (Thermo). |

| Size-Exclusion Column | Polishing step for monodisperse sample. | Cytiva HiLoad 16/600 Superdex 75 pg. |

| Fluorogenic Enzyme Substrate | Sensitive detection of designed enzyme activity. | Custom synthesis from Sigma-Aldrich or Enzo Life Sciences (e.g., 4-methylumbelliferyl esters). |

| Stability Assay Dye | Rapid thermal stability assessment. | Prometheus nanoDSF Grade Capillaries (NanoTemper). |

| Precision Mass Spec Standard | Confirm exact molecular weight of purified design. | Waters ESI Tuning Mix, positive ion mode. |

This technical guide details a systematic framework for integrating artificial intelligence (AI) prediction tools into the experimental pipeline for enzyme substrate matching, a critical domain in enzymology and drug discovery. Framed within a broader thesis on AI applications in biochemical research, this document provides researchers with actionable methodologies to enhance predictive accuracy and experimental throughput.

The traditional process of identifying enzyme substrates is resource-intensive. AI models, particularly those based on deep learning and graph neural networks (GNNs), have emerged to predict binding affinities, reaction products, and novel substrate-enzyme pairs with increasing accuracy. This integration accelerates the hypothesis generation and validation cycle.

Core AI Model Architectures and Performance Data

Recent (2023-2024) benchmark studies report the following performance metrics for prominent AI architectures in enzyme-substrate prediction.

Table 1: Comparative Performance of AI Models for Enzyme-Substrate Matching

| Model Architecture | Primary Dataset (e.g., BRENDA) | Prediction Task | Reported Accuracy | Key Advantage |

|---|---|---|---|---|

| Transformer (Product-Based) | MetaCyc / RHEA | Reaction Outcome | 88.7% | Captures long-range molecular dependencies |

| Graph Neural Network (GNN) | BindingDB / PDB | Binding Affinity (ΔG) | RMSE: 1.2 kcal/mol | Encodes 3D molecular structure |

| Ensemble (CNN+GNN) | CASF Benchmark | Dock Score Prediction | Pearson's R: 0.81 | Combines spatial and sequential features |

| Pre-trained Language Model (e.g., ESM-2) | UniProt | Active Site Matching | 85.3% | Leverages evolutionary sequence data |

Step-by-Step Integration Workflow

This workflow is designed as an iterative, closed-loop pipeline.

Step 1: Problem Framing & Data Curation

- Objective: Define the specific prediction goal (e.g., binary classification of binding, regression of Ki/IC50).

- Protocol: Assemble a high-quality, curated dataset. For a novel enzyme family study:

  - Source Data: Extract known substrates and non-substrates from specialized databases (BRENDA, PubChem).

  - Standardization: Use RDKit to standardize molecule representations (SMILES), remove duplicates, and correct stereochemistry.

  - Split: Perform a scaffold split based on Bemis-Murcko frameworks to ensure model generalizability to novel chemotypes. Use a 70/15/15 ratio for training/validation/test sets.
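A scaffold split assigns whole scaffold groups to one partition, so that no Bemis-Murcko framework appears in both training and test data. The sketch below assumes the scaffold key for each molecule has already been computed (for instance with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the greedy largest-group-first assignment and the toy scaffold names are illustrative choices rather than a prescribed procedure.

```python
from collections import defaultdict


def scaffold_split(records, train_pct=70, valid_pct=15):
    """Split (molecule_id, scaffold_key) records so that each scaffold lands in one set only."""
    groups = defaultdict(list)
    for mol_id, scaffold in records:
        groups[scaffold].append(mol_id)
    # Largest scaffold groups first; ties broken alphabetically for reproducibility.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    n = len(records)
    train, valid, test = [], [], []
    for _scaffold, members in ordered:
        # Integer arithmetic avoids floating-point edge cases at the capacity limits.
        if (len(train) + len(members)) * 100 <= train_pct * n:
            train.extend(members)
        elif (len(valid) + len(members)) * 100 <= valid_pct * n:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test


# Toy dataset: 20 molecules spread over five hypothetical scaffolds.
counts = {"benzene": 8, "pyridine": 6, "indole": 3, "furan": 2, "acyclic": 1}
records = [(f"{scaf}_{i}", scaf) for scaf, n_mols in counts.items() for i in range(n_mols)]

train_ids, valid_ids, test_ids = scaffold_split(records)
print(len(train_ids), len(valid_ids), len(test_ids))
```

With real data the achieved ratio only approximates 70/15/15, because scaffold groups cannot be divided; rare scaffolds naturally end up in the validation and test sets, which is what makes the evaluation a test of generalization to new chemotypes.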

Step 2: Model Selection & In Silico Validation

- Objective: Choose an appropriate pre-trained model or architecture and validate computationally.

- Protocol:

  - Select a model from Table 1 aligned with your data type (sequence, graph, etc.).

  - Fine-tuning: If using a pre-trained model (e.g., ESM-2 for enzymes), fine-tune the final layers on your curated dataset. Use a cross-entropy loss for classification or MSE for regression.

  - Validation: Employ k-fold cross-validation (k=5). Key metrics: Area Under the Precision-Recall Curve (AUPRC) for imbalanced data, RMSE for affinity predictions.

  - Interpretability: Apply SHAP (SHapley Additive exPlanations) or attention visualization to identify which enzyme residues or substrate atoms drive the prediction.
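For imbalanced substrate/non-substrate data, AUPRC is commonly estimated as average precision: the mean of the precision values at each rank where a true substrate is retrieved. A minimal standard-library sketch follows; the labels and scores are invented, and tied scores are not given any special treatment.

```python
def average_precision(labels, scores):
    """Average precision, a step-wise estimate of the area under the precision-recall curve.

    labels: 1 for substrate, 0 for non-substrate; scores: model outputs, higher = more likely.
    """
    n_positive = sum(labels)
    if n_positive == 0:
        raise ValueError("average precision needs at least one positive label")
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    total = 0.0
    for rank, idx in enumerate(order, start=1):
        if labels[idx] == 1:
            hits += 1
            total += hits / rank  # precision at this recall step
    return total / n_positive


# Ten candidate compounds, three true substrates (30% positives).
y_true = [1, 0, 1, 0, 0, 1, 0, 0, 0, 0]
y_score = [0.95, 0.90, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]

ap = average_precision(y_true, y_score)
baseline = sum(y_true) / len(y_true)  # expected AUPRC of a random ranking
print(round(ap, 4), baseline)
```

Comparing the score against the positive-class fraction is what makes AUPRC informative here: a model is only useful to the extent that it beats that baseline.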

Step 3: Design of Validation Experiments

- Objective: Translate top AI predictions into testable in vitro experiments.

- Protocol for a Fluorescence-Based Activity Assay:

  - Reaction Setup: Prepare 100 µL reaction mixtures in a 96-well plate: 50 nM purified target enzyme, 50 mM appropriate buffer (pH optima from BRENDA), varying concentrations of AI-predicted substrates (1 µM – 10 mM).

  - Kinetic Measurement: Use a coupled assay that follows NADH/NADPH depletion (absorbance at 340 nm, or fluorescence at roughly 340 nm excitation / 460 nm emission) or a direct fluorogenic substrate derivative. Initiate reactions with enzyme addition.

  - Control: Include a known positive substrate, a known non-substrate as a negative control, and a vehicle-only (DMSO) control.

  - Data Acquisition: Monitor the signal every 30 seconds for 30 minutes using a plate reader (e.g., Tecan Spark). Calculate initial velocities (V0).

  - Analysis: Fit V0 vs. [Substrate] to the Michaelis-Menten equation using non-linear regression (GraphPad Prism) to derive Km and Vmax.
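For a quick check of the kinetic analysis without a fitting package, Km and Vmax can be estimated from the Hanes-Woolf linearization, [S]/V0 = [S]/Vmax + Km/Vmax. Note that this is a swapped-in technique: it is not the non-linear regression recommended above, weights experimental error differently, and is best used for sanity checks or as a starting guess for a proper non-linear fit. The data below are synthetic and noise-free.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hanes_woolf(substrate, velocity):
    """Estimate (Km, Vmax) from the line [S]/v = [S]/Vmax + Km/Vmax."""
    ratios = [s / v for s, v in zip(substrate, velocity)]
    slope, intercept = fit_line(substrate, ratios)
    vmax = 1.0 / slope
    km = intercept * vmax
    return km, vmax


# Synthetic initial velocities generated from Km = 50 uM and Vmax = 2 uM/s.
true_km, true_vmax = 50.0, 2.0
conc_uM = [5.0, 10.0, 25.0, 50.0, 100.0, 250.0]
v0 = [true_vmax * s / (true_km + s) for s in conc_uM]

km_est, vmax_est = hanes_woolf(conc_uM, v0)
print(round(km_est, 3), round(vmax_est, 3))
```

With real, noisy plate-reader data the estimates from the linearized and non-linear fits will differ; a large discrepancy is itself a useful warning that the concentration range does not bracket Km well.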

Step 4: Feedback Loop & Model Retraining

- Objective: Use experimental results to improve the AI model iteratively.

- Protocol: For each tested compound, add a new data point: (Substrate SMILES, Enzyme Sequence, Experimental Label/Km). Retrain the model on this expanded dataset. This active learning loop prioritizes the prediction and testing of compounds where model confidence is low but potential impact is high.
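One simple way to realize the prioritization described above is to score each untested compound by the product of model uncertainty and an impact estimate, then test the top of the list. Both the scoring formula and the `impact` field are assumptions made for this sketch; the text does not define how impact is quantified.

```python
def acquisition_score(p_substrate, impact):
    """Uncertainty (1 at p = 0.5, 0 at p = 0 or 1) weighted by a user-supplied impact in [0, 1]."""
    uncertainty = 1.0 - abs(2.0 * p_substrate - 1.0)
    return uncertainty * impact


def select_batch(candidates, batch_size):
    """Return the ids of the compounds to assay next, highest acquisition score first."""
    ranked = sorted(
        candidates,
        key=lambda c: acquisition_score(c["p_substrate"], c["impact"]),
        reverse=True,
    )
    return [c["id"] for c in ranked[:batch_size]]


# Hypothetical model outputs for four untested compounds.
candidates = [
    {"id": "cpd_1", "p_substrate": 0.95, "impact": 0.9},  # confident prediction
    {"id": "cpd_2", "p_substrate": 0.50, "impact": 0.8},  # maximally uncertain
    {"id": "cpd_3", "p_substrate": 0.40, "impact": 0.5},
    {"id": "cpd_4", "p_substrate": 0.55, "impact": 0.2},  # uncertain but low impact
]

next_round = select_batch(candidates, batch_size=2)
print(next_round)
```

After each assay round, the measured labels are appended to the training set and the model is retrained before the next batch is selected.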

Visualizing the Integrated Pipeline

(Diagram 1: AI-Integrated Research Pipeline for Enzyme Substrate Matching)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Experimental Validation of AI Predictions

| Item / Reagent | Function & Rationale | Example Product / Specification |

|---|---|---|

| Recombinant Enzyme | Target protein for kinetic assays. Purity is critical for accurate kinetics. | Purified enzyme >95% (SDS-PAGE), activity-verified. |

| Fluorogenic/Chromogenic Probe | Enables high-throughput, quantitative measurement of enzyme activity. | Methylumbelliferyl (MUF)-conjugated substrate analog. |

| NADH/NADPH Cofactor | Essential for coupled assays measuring oxidoreductase activity; absorbance at 340 nm. | β-NADH, disodium salt, >97% (HPLC). |

| HTS Microplate Reader | For parallel kinetic readouts of multiple AI-predicted substrates. | Multi-mode reader with temperature control (e.g., 25-37°C). |

| Liquid Handling Robot | Ensures precision and reproducibility in assay setup for large compound sets. | Automated pipetting system (e.g., Beckman Coulter Biomek). |

| Chemical Library | Source of novel compounds for AI model training and experimental testing. | Commercially available diverse library (e.g., Enamine REAL). |

| Data Analysis Software | For curve fitting, statistical analysis, and visualization of kinetic data. | GraphPad Prism, Python (SciPy, scikit-learn). |

The seamless integration of AI predictions into the enzyme research pipeline represents a paradigm shift. By following this structured process—curating robust data, selecting and validating models in silico, designing rigorous validation experiments, and closing the feedback loop—researchers can significantly accelerate the discovery of novel enzyme functions and inhibitors, directly contributing to advances in biotechnology and drug development.

The integration of Artificial Intelligence (AI) into enzyme research represents a pivotal advancement in the broader thesis of AI-driven enzyme substrate matching. This whitepaper provides a technical guide for applying predictive computational methods to characterize novel kinases and Cytochrome P450 (CYP) enzymes, crucial for drug discovery and toxicology.

Core Predictive Methodologies

Predicting substrates for novel enzymes employs a multi-strategy approach.

Homology & Sequence-Based Modeling

For kinases, catalytic domain sequence similarity to known kinases (from databases like UniProt or PhosphoSitePlus) is a primary predictor. For CYPs, similarity in the heme-binding region and substrate recognition sites (SRSs) is analyzed.

Structure-Based Docking

If a 3D model (experimental or homology-modeled) is available, molecular docking screens virtual compound libraries to predict favorable binding poses and interaction energies.

Machine Learning (ML) & Deep Learning (DL) Models

Supervised models trained on known enzyme-substrate pairs learn complex, non-linear relationships. Features include molecular fingerprints (ECFP, MACCS), physicochemical descriptors, and sequence-derived features.
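To make the fingerprint features more concrete, the sketch below compares two molecules by the Tanimoto coefficient of their fingerprint "on" bits, a similarity measure commonly used with ECFP- or MACCS-style fingerprints. The bit indices are invented for illustration; in a real pipeline they would be produced by a cheminformatics toolkit, which is not assumed here.

```python
from typing import AbstractSet


def tanimoto(bits_a: AbstractSet[int], bits_b: AbstractSet[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    union = len(bits_a | bits_b)
    if union == 0:
        return 0.0  # two empty fingerprints: similarity defined as 0 here
    return len(bits_a & bits_b) / union


# Hypothetical on-bit indices for a candidate and a known substrate.
candidate_bits = {1, 5, 9, 42, 77}
known_substrate_bits = {5, 9, 42, 100}
similarity = tanimoto(candidate_bits, known_substrate_bits)  # 3 shared / 6 total
```

A similarity score like this can serve as a simple baseline feature alongside the physicochemical and sequence-derived descriptors a supervised model is trained on.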

Table 1: Comparison of Key Predictive Approaches

| Method | Typical Accuracy Range | Data Requirements | Computational Cost | Best For |

|---|---|---|---|---|

| Sequence Homology | 60-75% | High-quality multiple sequence alignment (MSA) | Low | Novel kinases with close homologs |

| Molecular Docking | 70-85% (pose); lower for affinity | 3D enzyme structure, compound library | High | Prioritizing candidates from a library |

| Random Forest ML | 80-88% (AUC) | Large, labeled substrate/non-substrate dataset | Medium | High-throughput virtual screening |

| Graph Neural Network | 85-92% (AUC) | Large, labeled dataset with structural info | Very High | Capturing complex molecular patterns |
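Several rows in Table 1 report AUC rather than plain accuracy. As a hedged illustration of what that number means, the sketch below computes ROC AUC directly from its rank interpretation: the probability that a randomly chosen true substrate is scored above a randomly chosen non-substrate (ties count as one half). The labels and scores are made up for the example.

```python
def roc_auc(labels, scores):
    """ROC AUC via pairwise comparison of positive and negative scores."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties contribute half a win
    return wins / (len(positives) * len(negatives))


# Hypothetical model scores: 1 = validated substrate, 0 = non-substrate.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]
auc = roc_auc(labels, scores)  # 8 of 9 positive/negative pairs ranked correctly
```

The pairwise loop is quadratic and intended only for clarity; library implementations use a sort-based formulation for large datasets.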

Experimental Protocol for Validation

Predictions require biochemical validation. Below is a generalized protocol for novel kinase substrate validation.

Protocol: In Vitro Kinase Activity Assay for Predicted Substrates

Objective: To validate computational predictions of peptide/protein substrates for a novel kinase.

Materials:

- Purified novel kinase protein.

- Predicted substrate peptides/proteins.

- Control peptides (known substrate for a related kinase, scrambled sequence).

- [γ-³²P]ATP or ATP analog for detection.

- Kinase assay buffer (e.g., 25 mM Tris-HCl pH 7.5, 10 mM MgCl₂, 5 mM β-glycerophosphate, 2 mM DTT, 0.1 mM Na₃VO₄).

- Phosphocellulose paper (P81) or SDS-PAGE equipment.

- Autoradiography film/phosphorimager, or a phospho-specific antibody for Western blot.

Procedure:

- Reaction Setup: In a 30 µL reaction volume, combine:

  - 1-10 µg of substrate peptide/protein.

  - 10-100 ng of purified kinase.

  - Kinase assay buffer.

  - 100 µM ATP + 5 µCi [γ-³²P]ATP (or 200 µM non-radioactive ATP for phospho-specific antibody detection).

- Incubation: Incubate at 30°C for 30 minutes.

- Termination: Stop the reaction by adding EDTA to 25 mM final concentration or by adding SDS-PAGE loading buffer and boiling.

- Detection:

  - Radioactive: Spot reaction mix on P81 paper, wash extensively in 0.75% phosphoric acid, dry, and quantify by scintillation counting or autoradiography.

  - Non-Radioactive: Resolve proteins by SDS-PAGE, transfer to membrane, and probe with relevant phospho-specific primary antibody and secondary HRP-conjugated antibody for chemiluminescent detection.

- Analysis: Compare phosphorylation signals of predicted substrates to positive and negative controls. Perform kinetic assays (Km, Vmax) on confirmed hits.
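For the kinetic follow-up on confirmed hits, the sketch below estimates Km and Vmax from initial rates using the Hanes-Woolf linearization, [S]/v = [S]/Vmax + Km/Vmax. It uses only the standard library and synthetic, noise-free data generated from assumed values (Km = 50 µM, Vmax = 10 arbitrary units); the function names are illustrative. For real, noisy data, direct nonlinear fitting of the Michaelis-Menten equation (for example with SciPy's `curve_fit`) is generally preferred over any linearization.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hanes_woolf_fit(substrate_conc, rates):
    """Return (Km, Vmax) from the linearization [S]/v = [S]/Vmax + Km/Vmax."""
    s_over_v = [s / v for s, v in zip(substrate_conc, rates)]
    slope, intercept = fit_line(substrate_conc, s_over_v)
    vmax = 1.0 / slope        # slope = 1 / Vmax
    km = intercept * vmax     # intercept = Km / Vmax
    return km, vmax


# Synthetic data from assumed parameters (Km = 50 µM, Vmax = 10 a.u.).
concs = [5.0, 10.0, 25.0, 50.0, 100.0, 200.0]
rates = [10.0 * s / (50.0 + s) for s in concs]
km_est, vmax_est = hanes_woolf_fit(concs, rates)
```

Substrate concentrations should bracket the expected Km; if all tested concentrations lie far below or far above it, neither parameter is well determined.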

Research Reagent Solutions Toolkit

Table 2: Essential Reagents for Validation Experiments

| Reagent/Category | Example Product/Kit | Function in Experiment |

|---|---|---|

| Kinase/CYP Enzyme | Recombinant purified protein (e.g., from Sigma, Thermo Fisher, custom expression) | The catalytic entity being studied. |

| Activity Assay Kit | ADP-Glo Kinase Assay (Promega), P450-Glo Assay (Promega) | Provides a luminescent, homogeneous readout of enzyme activity. |

| Phosphorylation Detection | [γ-³²P]ATP (PerkinElmer), Anti-phospho-Ser/Thr/Tyr Antibodies (Cell Signaling Tech) | Directly labels or detects the phosphate group transferred. |

| Substrate Library | Peptide library (e.g., from JPT Peptide Technologies), Drug metabolite library (e.g., from Cayman Chemical) | Provides a set of candidate molecules for empirical testing. |

| Chromatography-Mass Spec | UPLC-MS/MS System (e.g., Waters, Agilent) | Gold standard for identifying and quantifying novel metabolites (for CYPs) or phosphorylated peptides. |

Integrated AI-Driven Workflow

(Diagram 1: AI-Driven Substrate Prediction Workflow)

Signaling Pathway Context for Kinases

Understanding the biological context of a novel kinase's predicted substrates is critical.

(Diagram 2: Novel Kinase in a Signaling Cascade)

Cytochrome P450 Metabolism Prediction Workflow

For novel CYPs, the prediction focus shifts to metabolic site (regioselectivity) and metabolite formation.

(Diagram 3: CYP Metabolism Prediction & ID)

Table 3: Key Public Data Sources for Model Training

| Database | Primary Use | Key Metrics (Approximate) |

|---|---|---|

| UniProtKB | Enzyme sequence/function annotation | ~200 million entries; > 500,000 manually reviewed. |

| PDB | 3D structural templates for modeling | ~210,000 structures; ~12,000 are human proteins. |

| ChEMBL | Bioactivity data (Ki, IC50) for molecules | ~2.3 million compounds; ~17 million bioactivities. |

| PubChem | Compound library for virtual screening | ~111 million unique chemical structures. |

| BRENDA | Comprehensive enzyme functional data | ~90,000 enzymes; ~150,000 annotated EC numbers. |

| DrugBank | Drug & drug metabolism information | ~16,000 drug entries; ~5,500 experimental drugs. |

Table 4: Performance Benchmarks of Recent Predictive Models

| Model (Year) | Enzyme Class | Core Algorithm | Reported Performance |

|---|---|---|---|

| DeepKinZero (2023) | Kinase | Deep Metric Learning | Top-1 Accuracy: 68% on orphan kinase substrate prediction. |

| CYPstrate (2022) | Cytochrome P450 | Ensemble (RF, XGBoost) | AUC: 0.91 for major site of metabolism prediction. |

| KINATEST-ID (2024) | Kinase | Graph Neural Network (GNN) | AUC-PR: 0.85 on held-out novel kinase families. |

| MetaboliticNN (2023) | CYP | Attention-based Neural Network | Accuracy: 88% for classifying metabolizing CYP isoform. |

This case study demonstrates that predicting substrates for novel kinases and CYPs is a tractable problem for AI-driven enzyme-substrate matching. Success relies on integrating sequence, structural, and chemical data into robust ML models, followed by rigorous experimental validation using standardized biochemical protocols. The iterative feedback loop between prediction and validation is essential for refining models and accelerating discovery in enzymology and drug development.

Overcoming Pitfalls: How to Troubleshoot and Optimize Your AI-Driven Enzyme-Substrate Predictions

In AI-driven enzyme-substrate matching, predictive model failures are frequently traced to three persistent challenges: reliance on poor-quality structural data, low sequence homology to known templates, and overlooked cofactor dependencies. This guide provides a technical framework for diagnosing and mitigating these failure modes to enhance the reliability of computational predictions in drug development and enzyme engineering.

Handling Poor-Quality Structural Data

AI models trained on the Protein Data Bank (PDB) inherit its noise. Common issues include missing residues, incorrect side-chain rotamers, and crystal packing artifacts.

Key Indicators & Quantitative Impact

The following table summarizes the correlation between structural quality metrics and AI model prediction error for substrate binding affinity.

Table 1: Impact of Structural Quality Metrics on Prediction Error

| Quality Metric | Threshold (High vs. Degraded Quality) | Avg. RMSE Increase in ΔG Prediction | Primary AI Model Affected |

|---|---|---|---|

| Resolution (Å) | ≤ 2.0 Å | Baseline (0.15 kcal/mol) | All Structure-Based Models |

| Resolution (Å) | > 2.5 Å | +0.35 kcal/mol | AlphaFold2, EquiBind |

| Ramachandran Outliers (%) | < 1% | Baseline | RosettaFold, Docking Networks |

| Ramachandran Outliers (%) | > 5% | +0.42 kcal/mol | RosettaFold, Docking Networks |

| Clashscore | < 10 | Baseline | Molecular Dynamics (MD) Surrogates |

| Clashscore | > 20 | +0.28 kcal/mol | Molecular Dynamics (MD) Surrogates |

| Missing Residues in Active Site | 0 | Baseline | Active Site-Specific GNNs |

| Missing Residues in Active Site | ≥ 1 | +0.85 kcal/mol | Active Site-Specific GNNs |

Experimental Protocol: Structure Refinement and Validation

Protocol 1: Iterative Refinement Loop for Poor-Quality Structures

- Initial Assessment: Upload PDB file to the PDB-REDO server (https://pdb-redo.eu/) for automated correction of crystallographic biases.

- Side-Chain Optimization: Use SCWRL4 or Rosetta fixbb protocol to correct rotameric states, particularly for active site residues.

- Loop Modeling: For missing loops, especially near the binding pocket, employ MODELLER or Rosetta loopmodel with kinematic closure.

- Energy Minimization: Perform restrained minimization using AMBER or CHARMM force fields within NAMD or GROMACS to relieve steric clashes while preserving overall fold.

- Final Validation: Run the refined model through MolProbity. Accept only structures with a Clashscore < 10, Ramachandran outliers < 2%, and no missing heavy atoms in the catalytic site.
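The acceptance criteria in the final validation step can be encoded as a small gate so that every refined model is judged the same way. This is a minimal sketch: the `ValidationReport` class and its field names are hypothetical, and the values are assumed to be copied by hand from a MolProbity report rather than read through any MolProbity API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ValidationReport:
    """Hypothetical container for values transcribed from a validation report."""
    clashscore: float
    ramachandran_outliers_pct: float
    missing_catalytic_heavy_atoms: int


def failed_criteria(report: ValidationReport) -> List[str]:
    """Return the acceptance criteria from Protocol 1 that the model fails."""
    failures = []
    if report.clashscore >= 10:
        failures.append("clashscore must be < 10")
    if report.ramachandran_outliers_pct >= 2.0:
        failures.append("Ramachandran outliers must be < 2%")
    if report.missing_catalytic_heavy_atoms > 0:
        failures.append("catalytic site must have no missing heavy atoms")
    return failures


# Illustrative values only.
refined = ValidationReport(6.4, 0.8, 0)
rejected = ValidationReport(23.1, 4.5, 2)
accept_refined = not failed_criteria(refined)
```

Returning the list of failed criteria, rather than a bare boolean, indicates which refinement step (side chains, loops, or minimization) is worth repeating.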

Overcoming Low Sequence Homology

When target enzyme sequence identity to training set templates falls below 20-30%, homology-based and many deep learning methods struggle.

Quantitative Performance Degradation

Table 2: AI Tool Performance vs. Sequence Identity to Nearest Training Homolog

| Sequence Identity Range | AlphaFold2 (pLDDT) | trRosetta (TM-score) | Traditional Homology Modeling (TM-score) | Suggested Remedial Strategy |

|---|---|---|---|---|

| > 50% | ≥ 90 | ≥ 0.90 | ≥ 0.85 | Standard workflows reliable. |

| 30% - 50% | 80 - 90 | 0.75 - 0.90 | 0.60 - 0.85 | Use modeling servers or meta-servers (e.g., SWISS-MODEL). |

| 20% - 30% | 70 - 80 | 0.60 - 0.75 | 0.40 - 0.60 | Ab initio folding or coevolution. |

| < 20% ("Twilight Zone") | < 70 | < 0.60 | < 0.40 | Require experimental constraints (e.g., SAXS). |
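As a minimal sketch, the triage in Table 2 can be written as a lookup on sequence identity to the nearest training homolog. The function name and the short strategy labels are illustrative, and the handling of the exact boundaries (30% and 20% are assigned to the higher band, 50% to the 30-50% band) is an assumption, since the table's ranges share endpoints.

```python
def remedial_strategy(identity_pct: float) -> str:
    """Map sequence identity (%) to the remedial strategy band from Table 2."""
    if not 0.0 <= identity_pct <= 100.0:
        raise ValueError("identity must be a percentage between 0 and 100")
    if identity_pct > 50.0:
        return "standard workflows"
    if identity_pct >= 30.0:
        return "modeling servers"
    if identity_pct >= 20.0:
        return "ab initio folding or coevolution"
    return "experimental constraints (e.g., SAXS)"


# Hypothetical targets with their identity to the nearest template.
targets = {"kinase_A": 62.0, "cyp_B": 27.5, "orphan_C": 14.0}
plan = {name: remedial_strategy(pct) for name, pct in targets.items()}
```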

Experimental Protocol: Integrating Sparse Experimental Data

Protocol 2: Incorporating SAXS Data for Ab Initio Folding

- Data Collection: Perform Small-Angle X-ray Scattering (SAXS) on the purified target enzyme at multiple concentrations (e.g., 1, 2, 5 mg/mL) in relevant buffer. Use beamline instrumentation (e.g., BioSAXS at APS).

- Data Processing: Subtract buffer scattering, check for aggregation via the Guinier plot (the fit should be linear for q*Rg < 1.3), and compute the pair distance distribution function P(r) with the ATSAS suite (e.g., GNOM, accessible through PRIMUS).

- Constraint-Driven Modeling: Input the sequence and the experimental scattering curve I(q) into modeling suites like:

  - BUNCH (ATSAS): For multi-domain proteins, generates ensembles satisfying SAXS data.

  - Rosetta with SAXS constraints: Use the rosetta_scripts application with the SAXSEnergy term to bias ab initio folding trajectories toward shapes matching the P(r) profile.

- Validation: The final ensemble's computed scattering profile must fit the experimental data with a χ² value < 2.0.
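The Guinier check and the χ² criterion in Protocol 2 can be sketched in a few lines. The code below fits ln I(q) = ln I(0) - (Rg²/3)·q², trimming high-q points until the last point satisfies q*Rg ≤ 1.3, and computes a reduced χ² after least-squares scaling of a model curve. It is a standard-library illustration on synthetic, noise-free data (assumed Rg = 20 Å, I(0) = 100), not a replacement for ATSAS; the function names and the N - 1 normalization of χ² are assumptions.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def guinier_fit(q, intensity, qrg_limit=1.3, min_points=5):
    """Estimate (Rg, I0, points used); q must be ascending, intensities positive."""
    n = len(q)
    while n >= min_points:
        x = [qi ** 2 for qi in q[:n]]
        y = [math.log(ii) for ii in intensity[:n]]
        slope, intercept = fit_line(x, y)
        if slope >= 0:
            raise ValueError("no decaying Guinier region found")
        rg = math.sqrt(-3.0 * slope)
        if q[n - 1] * rg <= qrg_limit:
            return rg, math.exp(intercept), n
        n -= 1  # drop the highest-q point and refit
    raise ValueError("too few points satisfy the q*Rg limit")


def reduced_chi2(i_exp, i_calc, sigma):
    """Reduced chi-square after least-squares scaling of the model curve."""
    scale = (sum(e * c / s ** 2 for e, c, s in zip(i_exp, i_calc, sigma))
             / sum(c ** 2 / s ** 2 for c, s in zip(i_calc, sigma)))
    total = sum(((e - scale * c) / s) ** 2
                for e, c, s in zip(i_exp, i_calc, sigma))
    return total / (len(i_exp) - 1)


# Synthetic, noise-free curve (hypothetical Rg = 20 Å, I(0) = 100).
q_values = [0.010 + 0.005 * i for i in range(19)]
curve = [100.0 * math.exp(-(qi * 20.0) ** 2 / 3.0) for qi in q_values]
rg_est, i0_est, n_used = guinier_fit(q_values, curve)
```

On real data, an upturn at low q or an Rg that changes with concentration suggests aggregation or interparticle effects, and modeling should not proceed until that is resolved.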

Accounting for Cofactor Dependencies

Failure to account for essential metal ions, cosubstrates (e.g., NADH, ATP), or post-translational modifications is a major source of false-negative predictions in substrate matching.

Prevalence and Impact

Table 3: Prevalence of Cofactors in Enzyme Catalysis and Computational Omission Penalty

| Cofactor Type | Approx. % of Enzymes | Example | Avg. ΔΔG Prediction Error if Omitted |

|---|---|---|---|

| Divalent Metal Ions | ~40% | Mg²⁺, Zn²⁺ | +3.2 kcal/mol |

| Nucleotides (ATP/NAD) | ~30% | ATP, NADPH | +4.1 kcal/mol |

| Prosthetic Groups | ~15% | Heme, FAD | +5.5 kcal/mol |

| Activating Ions (Monovalent) | ~10% | K⁺, Na⁺ | +1.8 kcal/mol |
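To put these penalties in perspective, a free-energy error can be translated into a fold-error in the predicted dissociation constant. The worked example below assumes 25°C (298.15 K) and treats the tabulated value as an error in the binding free energy; both are assumptions made for illustration.

$$
\Delta G = RT \ln K_d
\quad\Longrightarrow\quad
\frac{K_d^{\text{pred}}}{K_d^{\text{true}}} = \exp\!\left(\frac{\Delta\Delta G_{\text{err}}}{RT}\right),
\qquad RT \approx 0.592\ \text{kcal/mol}
$$

$$
\Delta\Delta G_{\text{err}} = 3.2\ \text{kcal/mol}
\;\Rightarrow\;
\exp\!\left(\frac{3.2}{0.592}\right) \approx 2.2 \times 10^{2},
\qquad
\Delta\Delta G_{\text{err}} = 1.8\ \text{kcal/mol}
\;\Rightarrow\;
\exp\!\left(\frac{1.8}{0.592}\right) \approx 21
$$

Under these assumptions, omitting a divalent metal ion would shift the predicted affinity by roughly two orders of magnitude, which is enough to turn a true substrate into an apparent non-binder.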

Experimental Protocol: Identifying and Modeling Cofactors

Protocol 3: Cofactor Identification via Isothermal Titration Calorimetry (ITC) and Subsequent Modeling

- Binding Assay: Perform ITC using a MicroCal PEAQ-ITC instrument.

  - Fill the cell with 20 µM enzyme in assay buffer.

  - Load the syringe with 200 µM suspected cofactor (e.g., MgCl₂, ATP).

  - Run 19 injections of 2 µL each at 25°C.

  - Fit the integrated heat data to a single-site binding model to obtain stoichiometry (N), dissociation constant (Kd), and enthalpy (ΔH).

- Structural Modeling:

  - If a homologous structure with cofactor exists: Perform alignment and direct placement of the cofactor, followed by restrained minimization of the coordination shell.

  - If no template exists: Use a geometry-based metal ion placement tool (e.g., CHED or MIB). For organic cofactors (e.g., FAD), use template-based docking with AutoDock Vina, applying restraints based on known binding motifs (e.g., Rossmann fold for NAD).
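Once the ITC fit returns N, Kd, and ΔH, the remaining thermodynamic quantities follow from ΔG = RT ln Kd and ΔG = ΔH - TΔS. The sketch below also computes the dimensionless c-value, c = N·[enzyme]/Kd, which is often used to judge whether an isotherm is well suited to fitting (a commonly cited rule of thumb is c between roughly 1 and 1000). The Kd and ΔH values are hypothetical, and the function names are illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_thermodynamics(kd_molar, delta_h_kcal, temp_c=25.0):
    """Return (ΔG, -TΔS) in kcal/mol from Kd (M) and ΔH (kcal/mol)."""
    temp_k = temp_c + 273.15
    delta_g = R_KCAL * temp_k * math.log(kd_molar)  # ΔG = RT ln Kd (1 M standard state)
    minus_t_delta_s = delta_g - delta_h_kcal        # from ΔG = ΔH - TΔS
    return delta_g, minus_t_delta_s


def c_value(n_sites, cell_conc_molar, kd_molar):
    """Dimensionless c = N * [macromolecule in cell] / Kd."""
    return n_sites * cell_conc_molar / kd_molar


# Hypothetical fit results for a cofactor titrated into 20 µM enzyme.
kd = 1e-6        # 1 µM
delta_h = -5.0   # kcal/mol
delta_g, minus_tds = binding_thermodynamics(kd, delta_h)
c = c_value(1.0, 20e-6, kd)
```

With these illustrative numbers, ΔG is about -8.2 kcal/mol and c is 20. A cofactor that binds much more weakly than the cell concentration allows (c well below 1) would call for a higher enzyme concentration or a different assay.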